Expertise Indices: Variants, Modifications, Advancements, and Computational Tools in R

Pith reviewed 2026-05-10 13:35 UTC · model grok-4.3

The pith

New x-index variants and the xxdi R package measure institutions' thematic research expertise, which the h- and g-indices do not capture.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The x-index, the x_d-index and their bias-adjusted variants quantify institutional research expertise by incorporating thematic content and correcting for resolvable biases. The variants comprise the recently introduced field-normalised and fractional x_d-indices together with the newly proposed category-adjusted x-index and inverse variance weighted x_d-index; a novel x_o-index summarises overall expertise. The xxdi R package implements simple functions for all of them so that the wider community can apply the measures in practice.

What carries the argument

The family of x-index and x_d-index expertise indicators that quantify thematic research strength and apply explicit bias adjustments.
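As a rough illustration of how an h-type rule could be applied to thematic strengths rather than to individual papers, the Python sketch below sums citations per theme and then counts how many themes clear the rank threshold. This is an assumption made for illustration only: the page does not state the formulas for the x-index or x_d-index, and the function names, the (themes, citations) record layout, and the sample data are hypothetical and are not taken from the xxdi package.

```python
from collections import defaultdict


def thematic_strengths(papers):
    """Sum citations per theme; a paper counts toward every theme it carries.

    `papers` is a hypothetical list of (themes, citations) pairs.
    """
    totals = defaultdict(int)
    for themes, citations in papers:
        for theme in themes:
            totals[theme] += citations
    return dict(totals)


def h_type_index(strengths):
    """Largest k such that at least k entries have a value of at least k."""
    ranked = sorted(strengths, reverse=True)
    # The condition is true for a prefix of the ranking and false afterwards,
    # so counting the hits gives the largest qualifying rank.
    return sum(1 for rank, s in enumerate(ranked, start=1) if s >= rank)


# Hypothetical institution with four papers spread over four themes.
papers = [
    (["graph theory", "networks"], 12),
    (["networks"], 3),
    (["bibliometrics"], 2),
    (["topology"], 0),
]

strengths = thematic_strengths(papers)
x_like = h_type_index(strengths.values())
print(strengths)
print(x_like)  # 2: only two themes have a strength of at least their rank
```

The same two steps would apply at a coarser granularity (for example, subject categories instead of keywords) by changing what is passed as a theme; any bias adjustment would have to follow the definitions in the paper itself.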

If this is right

- Institutions gain metrics that reflect depth in specific research themes rather than raw volume alone.

- Bias adjustments support fairer cross-field comparisons for performance-based funding.

- The xxdi R package makes the full set of indices immediately computable without custom coding.

- The x_o-index supplies a single summary statistic for overall institutional expertise.

- These measures can sit alongside h- and g-indices for more complete evaluations.

Where Pith is reading between the lines

- Funding panels could weight thematic-expertise scores when allocating resources to priority areas.

- Integration with publication databases might allow automated expertise profiles for teams or departments.

- Testing the indices against real grant outcomes or collaboration patterns would show whether the bias adjustments improve decisions.

- The same logic could extend from institutions to individual researchers, using the package that is already available.

Load-bearing premise

The new indices and their adjustments actually capture thematic expertise in a way that is meaningfully better than h- and g-indices.

What would settle it

A direct comparison in which the x_o-index or category-adjusted x-index predicts institutional funding success or thematic output better than the h-index across multiple fields.

Figures

read the original abstract

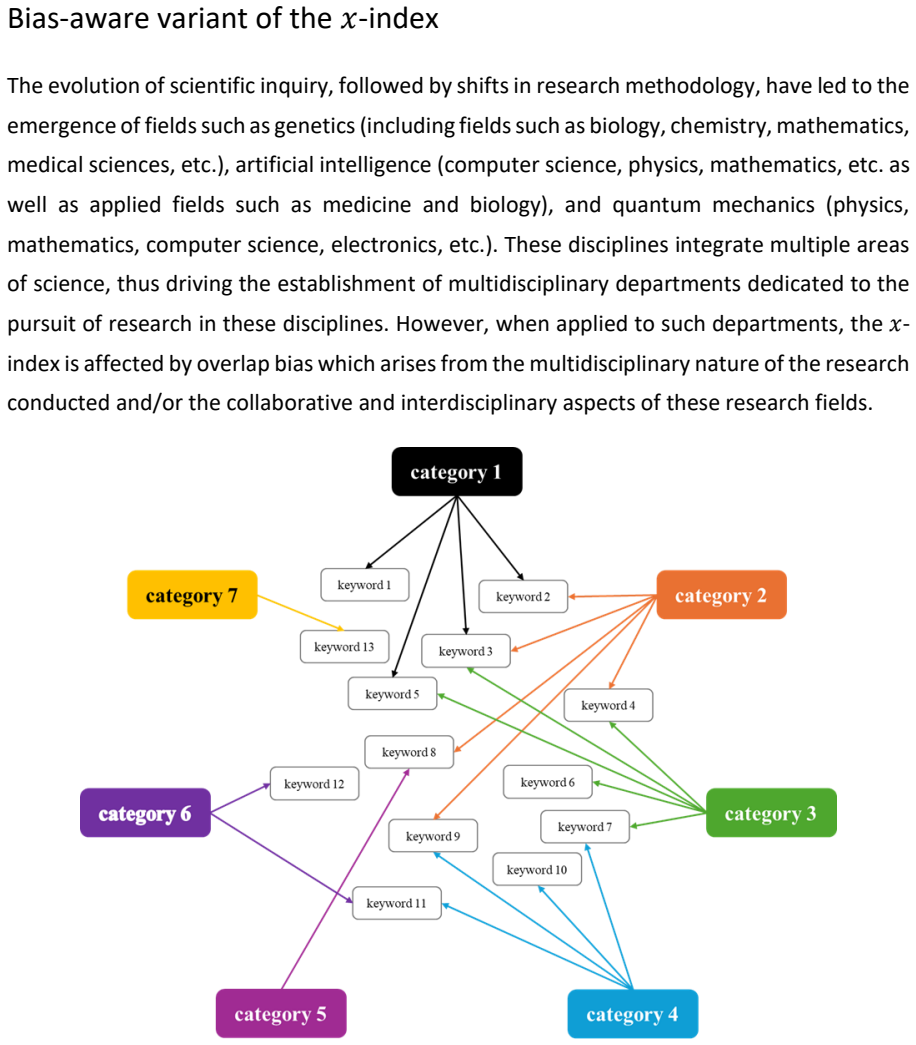

In the academic landscape, scientific research is conducted primarily through research institutions, which require a massive influx of funds from various sources. Funding bodies are currently moving from trust-based funding to performance-based evaluation systems for granting funds to research bodies. This has led to a rise in the popularity of indices or statistics that measure institutional research strength or expertise. Assessments of institutional research expertise usually focus on publication volume and its impact, measured using the widely used h- and g-indices. However, these indices fail to capture the thematic expertise of institutions. To address this gap, two new expertise indicators, the x-index and the x_d-index, together with two bias-adjusted variants, the field-normalised x_d-index and the fractional x_d-index, were introduced recently. Additionally, we propose two new variants, the category-adjusted x-index and the inverse variance weighted x_d-index, which further account for resolvable bias, and a novel statistic, the x_o-index, which acts as a measure of overall research expertise. While several packages calculate the traditional h- and g-indices, these novel expertise indices have yet to be included in them. The 'xxdi' R package provides simple functions that implement these expertise indices and their variants, enabling their utilisation by the wider research community. A stable version of the package is available on CRAN (https://doi.org/10.32614/CRAN.package.xxdi) and an in-development version on GitHub (https://github.com/nilabhrardas/xxdi).
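For reference, the h- and g-indices that the abstract uses as its baseline can be computed as in the minimal Python sketch below. It follows the standard definitions (the g-index here is capped at the number of papers) and is not code from the xxdi package; the function names and the sample citation counts are illustrative assumptions.

```python
def h_index(citations):
    """Largest h such that at least h papers have h or more citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, c in enumerate(ranked, start=1):
        if c >= rank:
            h = rank
        else:
            break
    return h


def g_index(citations):
    """Largest g such that the top g papers together have at least g**2 citations."""
    ranked = sorted(citations, reverse=True)
    total = 0
    g = 0
    for rank, c in enumerate(ranked, start=1):
        total += c
        if total >= rank * rank:
            g = rank
    return g


# Hypothetical citation counts for five papers.
counts = [10, 8, 5, 4, 3]
print(h_index(counts))  # 4
print(g_index(counts))  # 5
```

Both functions look only at citation counts per paper, which is why they carry no information about the themes in which those citations were earned.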

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents recently introduced bibliometric indices (the x-index, the x_d-index and bias-adjusted variants, including the field-normalised and fractional x_d-indices) that capture the thematic research expertise of institutions, which the authors argue the h- and g-indices do not address. It proposes two additional variants (the category-adjusted x-index and the inverse variance weighted x_d-index) and a novel overall measure (the x_o-index), and supplies the 'xxdi' R package (available on CRAN and GitHub) with functions to compute them.

Significance. If the indices prove robust in practice, the work supplies a practical extension to existing bibliometric toolkits for performance-based research evaluation, particularly where thematic focus and bias adjustment matter. The open R package is a concrete deliverable that lowers the barrier to adoption and reproducibility.

minor comments (2)

- Abstract: while the full text supplies the mathematical definitions and package functions, the abstract itself contains no formulas, worked examples, or validation metrics; adding one concise definition or small illustrative calculation would improve immediate accessibility without lengthening the abstract substantially.

- Section on package implementation: the manuscript states that the package provides 'simple functions' but does not list the exported function names or their argument signatures; including a short table or code snippet of the main user-facing functions would aid readers who wish to apply the indices immediately.

Simulated Author's Rebuttal

We thank the referee for their positive summary of the manuscript, recognition of its potential significance for bibliometric toolkits, and recommendation of minor revision. No specific major comments were provided in the report.

Circularity Check

Minor self-citation for base indices; new variants and package are definitional

full rationale

The paper defines new indices (x-index, x_d-index variants, x_o-index) and supplies an R package as direct mathematical and computational proposals to fill a stated gap left by h- and g-indices. Base indices are referenced as 'introduced recently,' indicating self-citation, but this is not load-bearing for the new variants or package functions. No equation reduces a claimed prediction to a fitted parameter or prior result by construction; the derivations are explicit definitions. The work is self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: h- and g-indices fail to capture the thematic expertise of research for institutions
