Unification of Signal Transform Theory

Each transform is the eigenbasis of the covariances invariant under a specific group, so the matching group, and with it the transform, can be discovered automatically from observed data.

Abstract

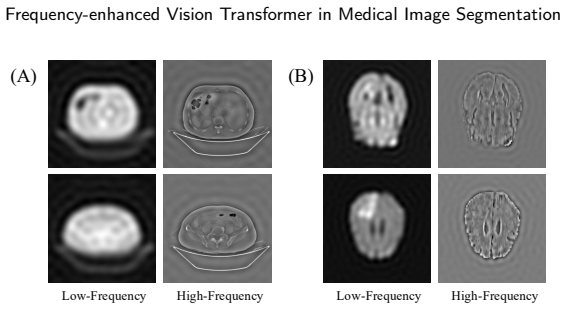

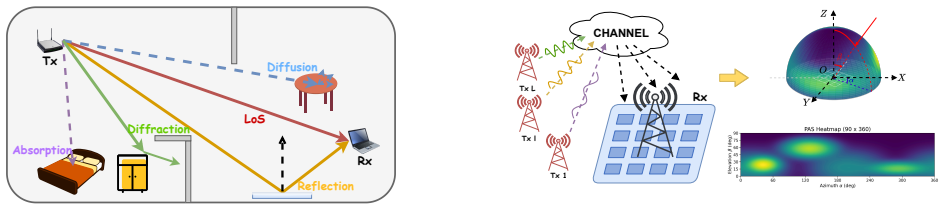

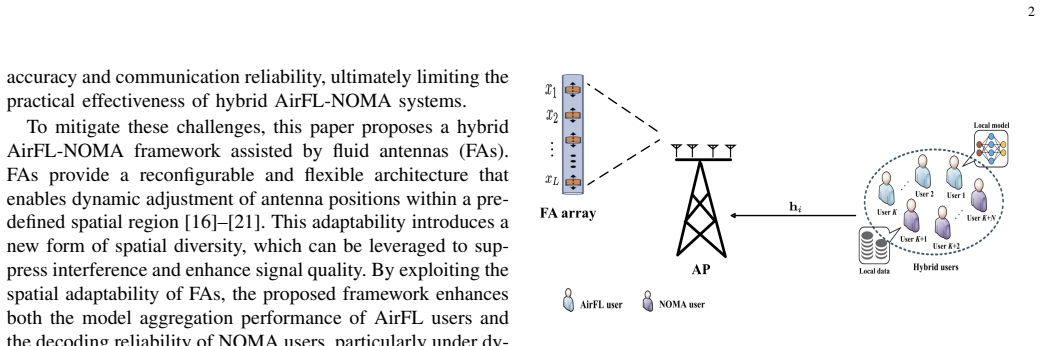

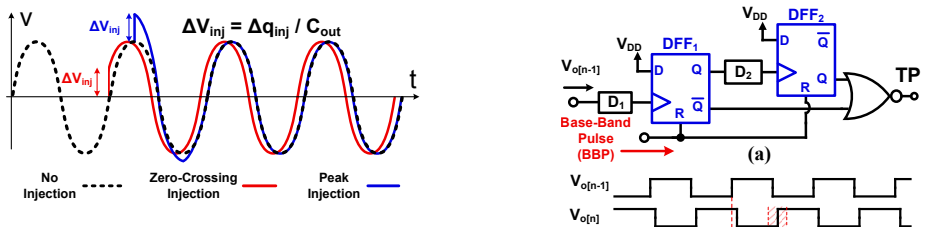

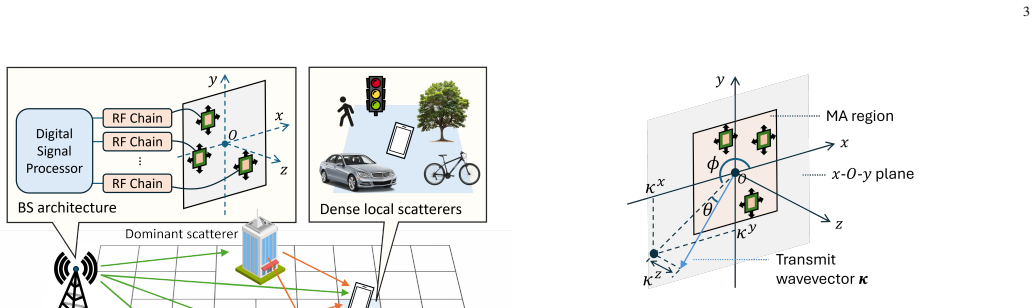

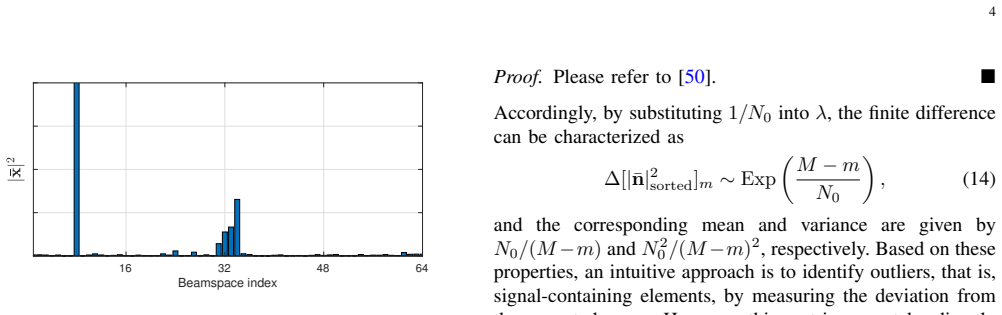

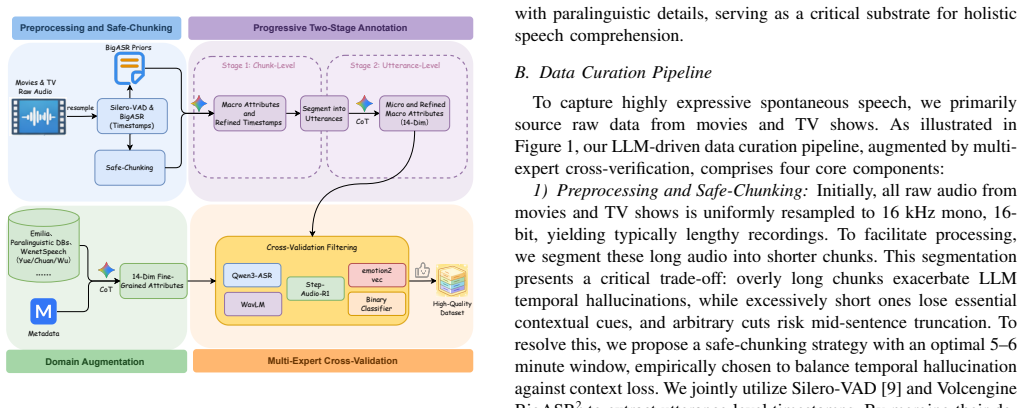

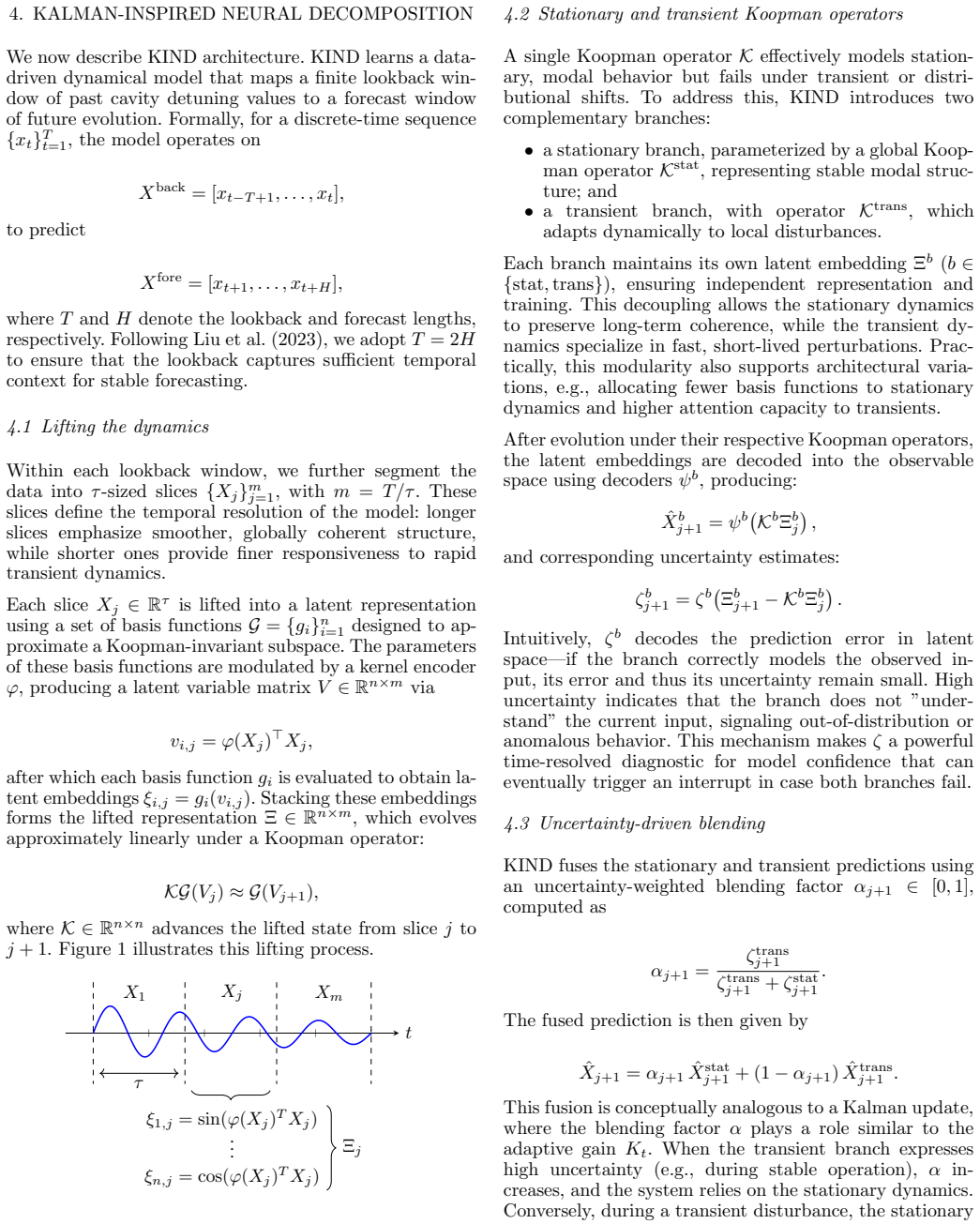

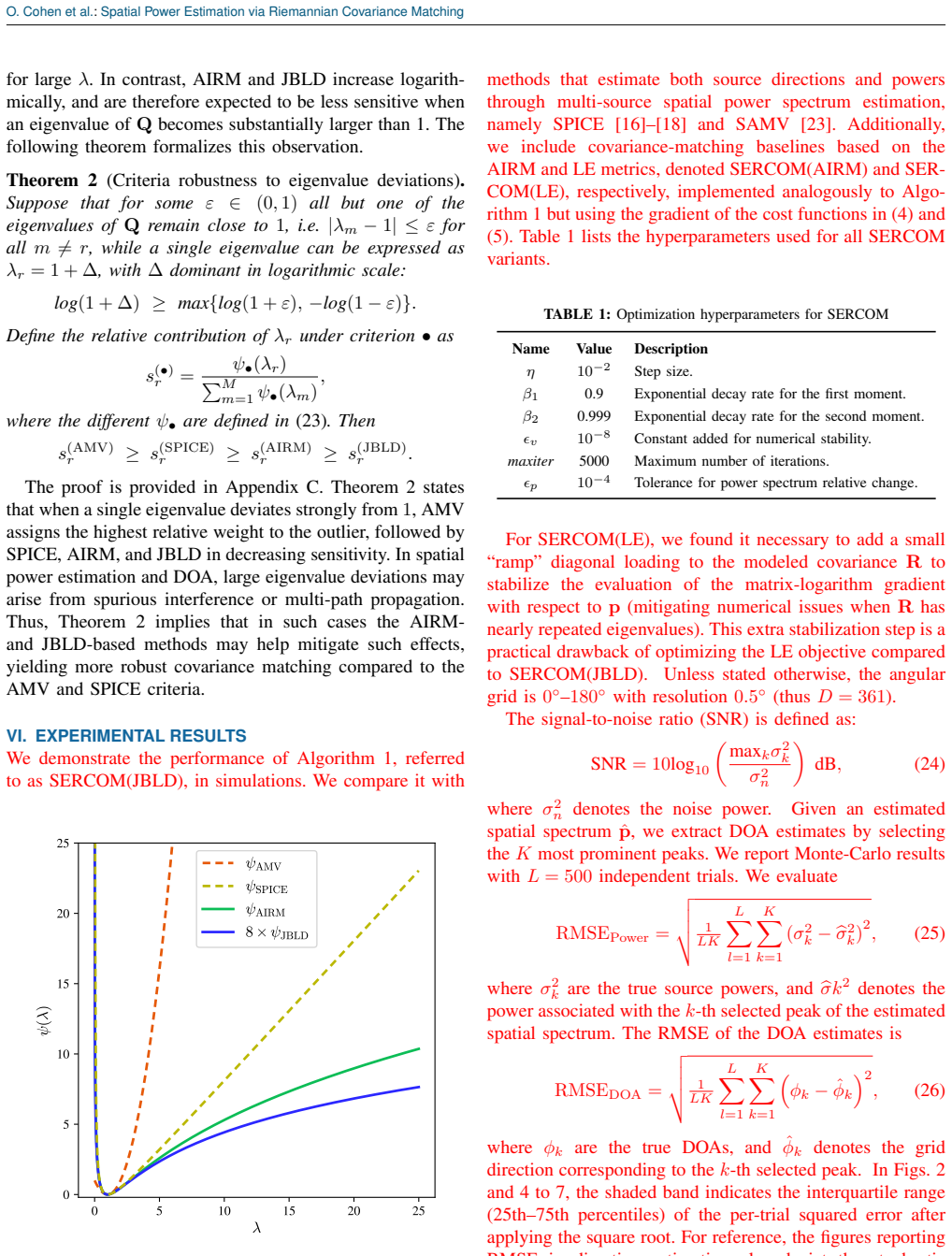

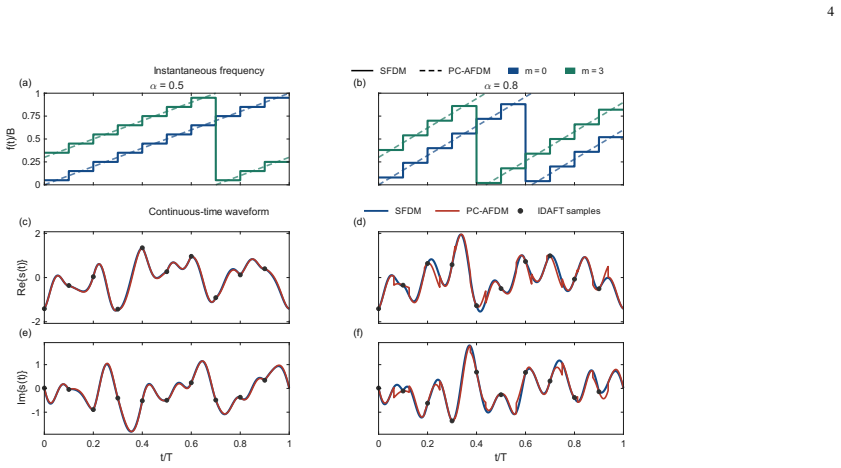

We unify the discrete Fourier transform (DFT), discrete cosine transform (DCT), Walsh-Hadamard, Haar wavelet, Karhunen-Loève transform (KLT), and several others along with their continuous counterparts (Fourier transform, Fourier series, spherical harmonics, fractional Fourier transform) under one representation-theoretic principle: each is the eigenbasis of every covariance invariant under a specific finite or compact group, with columns constructed from the irreducible matrix elements of the group via the Peter-Weyl theorem. The unification rests on the Algebraic Diversity (AD) framework, which identifies the matched group of a covariance as the foundational object of second-order signal processing. The data-dependent KLT emerges as the trivial-matched-group limit; classical transforms emerge as the cyclic, dihedral, elementary abelian, iterated wreath, and hybrid wreath cases. Composition rules cover direct, wreath, and semidirect products. The Reed-Muller and arithmetic transforms appear as related change-of-basis transforms on the matched group of Walsh-Hadamard. A polynomial-time algorithm for matched-group discovery, the DAD-CAD relaxation cast as a generalized eigenvalue problem in double-commutator form, closes the operational loop: the matched group of any empirical covariance is discovered without expert judgment, with noise-aware variants via the commutativity residual $\delta$ and algebraic coloring index $\alpha$ for finite-SNR settings. The fractional Fourier transform is treated as the metaplectic $SO(2)$ case with Hermite-Gauss matched basis, and a structural principle relates matched group size inversely to transform resolution. Modern applications (massive-MIMO, graph neural networks, transformer attention, point cloud and 3D vision, brain connectivity, single-cell genomics, quantum informatics) are sketched with their matched groups.
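
The cyclic case of this principle can be checked numerically. The sketch below is an illustration and not code from the paper: it builds a small covariance that is invariant under cyclic shifts (a symmetric circulant matrix) and verifies that the unitary DFT matrix diagonalizes it. The helper names (`circulant`, `dft_matrix`, `shift_commutator_norm`) and the example values are assumptions made for this illustration. In particular, the commutator norm used here is a plain Frobenius norm and is not claimed to match the paper's definition of the residual $\delta$.

```python
import cmath

def circulant(first_col):
    """Circulant matrix with C[i][j] = first_col[(i - j) % n]."""
    n = len(first_col)
    return [[first_col[(i - j) % n] for j in range(n)] for i in range(n)]

def dft_matrix(n):
    """Unitary DFT matrix with F[j][k] = exp(-2*pi*i*j*k/n) / sqrt(n)."""
    scale = n ** -0.5
    return [[scale * cmath.exp(-2j * cmath.pi * j * k / n) for k in range(n)]
            for j in range(n)]

def matmul(a, b):
    """Plain matrix product of two list-of-lists matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def conj_transpose(a):
    """Conjugate transpose of a complex matrix."""
    return [[a[j][i].conjugate() for j in range(len(a))]
            for i in range(len(a[0]))]

def max_offdiag(a):
    """Largest absolute value among the off-diagonal entries."""
    n = len(a)
    return max(abs(a[i][j]) for i in range(n) for j in range(n) if i != j)

def shift_commutator_norm(c_mat):
    """Frobenius norm of P*C - C*P, where P is the cyclic shift by one.

    Illustrative invariance check only (assumption: not the paper's delta).
    """
    n = len(c_mat)
    p = [[1.0 if i == (j + 1) % n else 0.0 for j in range(n)] for i in range(n)]
    pc, cp = matmul(p, c_mat), matmul(c_mat, p)
    return sum(abs(pc[i][j] - cp[i][j]) ** 2
               for i in range(n) for j in range(n)) ** 0.5

# Symmetric first column (c[m] == c[n - m]) so C is a real symmetric matrix.
c = [4.0, 1.0, 0.5, 0.25, 0.5, 1.0]
C = circulant(c)
F = dft_matrix(len(c))

# F^H C F should be diagonal because C is invariant under the cyclic group.
D = matmul(conj_transpose(F), matmul(C, F))
offdiag = max_offdiag(D)
residual = shift_commutator_norm(C)

# Breaking the shift invariance in one symmetric pair of entries makes both
# the off-diagonal mass and the commutator norm nonzero.
C_broken = [row[:] for row in C]
C_broken[0][1] += 0.5
C_broken[1][0] += 0.5
D_broken = matmul(conj_transpose(F), matmul(C_broken, F))
offdiag_broken = max_offdiag(D_broken)
residual_broken = shift_commutator_norm(C_broken)
```

For the circulant matrix, `offdiag` and `residual` are zero up to rounding, while the perturbed matrix gives clearly nonzero values for both. This is the intuition behind using a commutativity measure to judge how well a candidate group matches an empirical covariance.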
