Recognition: no theorem link

Mixture of Predefined Experts: Maximizing Data Usage on Vertical Federated Learning

Pith reviewed 2026-05-16 01:48 UTC · model grok-4.3

The pith

Split-MoPE routes partially aligned samples through fixed experts and pretrained encoders to finish vertical federated training in one round.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

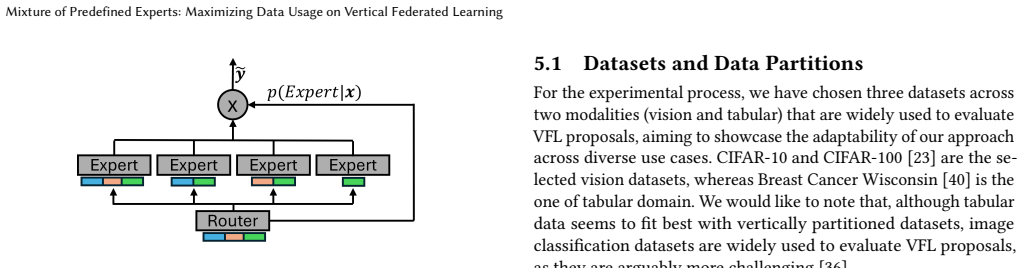

Split-MoPE integrates split learning with a Mixture of Predefined Experts architecture in which experts are statically assigned to specific patterns of data availability across parties. Pretrained encoders handle local feature extraction for each domain, allowing the system to use every sample that has at least one contributing party. This produces state-of-the-art accuracy on CIFAR-10/100 and Breast Cancer Wisconsin after one communication round, while adding robustness to noisy or malicious participants and per-sample interpretability through contribution quantification.

What carries the argument

Mixture of Predefined Experts (MoPE) that assigns fixed experts to concrete data-alignment patterns to maximize usable samples per batch.

If this is right

- Training and inference require only one round of communication between parties.

- Performance exceeds LASER and Vertical SplitNN on vision and tabular tasks with high rates of missing data.

- Each prediction yields an explicit score for every participant's contribution.

- The architecture tolerates malicious or noisy parties without extra defenses.

Where Pith is reading between the lines

- The same fixed-expert pattern could be tried in cross-silo settings where domain-specific encoders are already deployed.

- A hybrid version that occasionally learns new expert assignments might handle evolving data distributions.

- The per-sample contribution scores may simplify audits required for regulated collaborative training.

Load-bearing premise

Effective pretrained encoders must already exist for every data domain held by the participants.

What would settle it

Replace the pretrained encoders with randomly initialized ones on the same CIFAR and tabular benchmarks and check whether the single-round accuracy advantage over LASER and Vertical SplitNN vanishes.

Figures

read the original abstract

Vertical Federated Learning (VFL) has emerged as a critical paradigm for collaborative model training in privacy-sensitive domains such as finance and healthcare. However, most existing VFL frameworks rely on the idealized assumption of full sample alignment across participants, a premise that rarely holds in real-world scenarios. To bridge this gap, this work introduces Split-MoPE, a novel framework that integrates Split Learning with a specialized Mixture of Predefined Experts (MoPE) architecture. Unlike standard Mixture of Experts (MoE), where routing is learned dynamically, MoPE uses predefined experts to process specific data alignments, effectively maximizing data usage during both training and inference without requiring full sample overlap. By leveraging pretrained encoders for target data domains, Split-MoPE achieves state-of-the-art performance in a single communication round, significantly reducing the communication footprint compared to multi-round end-to-end training. Furthermore, unlike existing proposals that address sample misalignment, this novel architecture provides inherent robustness against malicious or noisy participants and offers per-sample interpretability by quantifying each collaborator's contribution to each prediction. Extensive evaluations on vision (CIFAR-10/100) and tabular (Breast Cancer Wisconsin) datasets demonstrate that Split-MoPE consistently outperforms state-of-the-art systems such as LASER and Vertical SplitNN, particularly in challenging scenarios with high data missingness.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces Split-MoPE, a framework that combines Split Learning with a Mixture of Predefined Experts (MoPE) architecture for Vertical Federated Learning. It addresses sample misalignment by using predefined experts for specific data alignments, claims single-round state-of-the-art performance on CIFAR-10/100 and Breast Cancer Wisconsin datasets via pretrained encoders, and asserts reduced communication, inherent robustness to malicious/noisy participants, and per-sample interpretability through contribution quantification.

Significance. If the single-round performance, robustness, and interpretability claims hold after proper ablation, the work could meaningfully advance practical VFL in privacy-sensitive settings by reducing communication rounds and handling real-world misalignment. The use of predefined experts to maximize data usage without full overlap is a potentially useful idea, though its novelty relative to existing MoE variants in federated settings requires clearer positioning.

major comments (3)

- [Abstract] Abstract: The claim of 'consistent outperformance' and 'state-of-the-art performance in a single communication round' is presented without any quantitative results, error bars, specific accuracy numbers, or ablation details, making it impossible to assess whether the gains are attributable to the MoPE architecture or to the pretrained encoders.

- [Abstract] Abstract and evaluation description: The performance, robustness, and interpretability claims are explicitly conditioned on 'leveraging pretrained encoders for target data domains,' yet no ablation is described that replaces these encoders with randomly initialized or jointly trained alternatives to isolate the contribution of the MoPE routing and single-round protocol.

- [Abstract] The manuscript provides no equations, derivations, or formal description of the MoPE routing mechanism, the predefined expert assignment, or how per-sample contribution quantification is computed, leaving the central algorithmic claims without mathematical grounding.

Simulated Author's Rebuttal

We thank the referee for the constructive comments on our manuscript. We address each of the major comments point by point below. Where revisions are needed, we have indicated that changes will be made in the next version of the paper.

read point-by-point responses

-

Referee: [Abstract] Abstract: The claim of 'consistent outperformance' and 'state-of-the-art performance in a single communication round' is presented without any quantitative results, error bars, specific accuracy numbers, or ablation details, making it impossible to assess whether the gains are attributable to the MoPE architecture or to the pretrained encoders.

Authors: The abstract serves as a concise summary of the key claims. Detailed quantitative results, including specific accuracy numbers with error bars from multiple runs, are provided in the experimental section of the manuscript (e.g., Tables 1-3). These results demonstrate the outperformance on the mentioned datasets. To improve the abstract's informativeness and allow readers to better assess the claims, we will revise it to include representative quantitative results. revision: yes

-

Referee: [Abstract] Abstract and evaluation description: The performance, robustness, and interpretability claims are explicitly conditioned on 'leveraging pretrained encoders for target data domains,' yet no ablation is described that replaces these encoders with randomly initialized or jointly trained alternatives to isolate the contribution of the MoPE routing and single-round protocol.

Authors: We recognize the value of isolating the MoPE contribution from the pretrained encoders. The current evaluation focuses on the practical setting with pretrained encoders, as this aligns with real-world VFL applications where domain-specific pretrained models are available. However, to address this concern, we will add new ablation experiments in the revised manuscript that include comparisons with randomly initialized encoders and end-to-end trained alternatives. This will help quantify the specific benefits of the MoPE routing and single-round protocol. revision: yes

-

Referee: [Abstract] The manuscript provides no equations, derivations, or formal description of the MoPE routing mechanism, the predefined expert assignment, or how per-sample contribution quantification is computed, leaving the central algorithmic claims without mathematical grounding.

Authors: We appreciate this feedback on the presentation. The manuscript does describe the MoPE architecture and routing in prose in Section 3, along with the contribution quantification method. To provide stronger mathematical grounding, we will include formal equations and a derivation for the routing mechanism, expert assignment, and per-sample contribution scores in the revised manuscript. This will make the algorithmic contributions more precise and easier to follow. revision: yes

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Pretrained encoders for target data domains are available and effective for the downstream task.

invented entities (1)

-

Mixture of Predefined Experts (MoPE) routing

no independent evidence

Reference graph

Works this paper leans on

- [1]

-

[2]

Weilin Cai, Juyong Jiang, Fan Wang, Jing Tang, Sunghun Kim, and Jiayi Huang

-

[3]

A survey on mixture of experts in large language models.IEEE Transactions on Knowledge and Data Engineering(2025)

work page 2025

- [4]

-

[5]

Zewen Chi, Li Dong, Shaohan Huang, Damai Dai, Shuming Ma, Barun Patra, Saksham Singhal, Payal Bajaj, Xia Song, Xian-Ling Mao, et al. 2022. On the repre- sentation collapse of sparse mixture of experts.Advances in Neural Information Processing Systems35 (2022), 34600–34613

work page 2022

-

[6]

Róbert Csordás, Kazuki Irie, and Jürgen Schmidhuber. 2023. Approximating Two-Layer Feedforward Networks for Efficient Transformers. InFindings of the Association for Computational Linguistics: EMNLP 2023. Association for Compu- tational Linguistics

work page 2023

- [7]

-

[8]

Damai Dai, Chengqi Deng, Chenggang Zhao, RX Xu, Huazuo Gao, Deli Chen, Jiashi Li, Wangding Zeng, Xingkai Yu, Yu Wu, et al. 2024. Deepseekmoe: Towards ultimate expert specialization in mixture-of-experts language models.arXiv preprint arXiv:2401.06066(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[9]

Nishanth Dikkala, Nikhil Ghosh, Raghu Meka, Rina Panigrahy, Nikhil Vyas, and Xin Wang. 2023. On the benefits of learning to route in mixture-of-experts models. InProceedings of the 2023 Conference on Empirical Methods in Natural Language Processing. 9376–9396

work page 2023

-

[10]

William Fedus, Barret Zoph, and Noam Shazeer. 2022. Switch transformers: Scaling to trillion parameter models with simple and efficient sparsity.Journal of Machine Learning Research23, 120 (2022), 1–39

work page 2022

-

[11]

Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. 2016. Deep residual learning for image recognition. InProceedings of the IEEE conference on computer vision and pattern recognition. 770–778

work page 2016

-

[12]

Yuanqin He, Yan Kang, Xinyuan Zhao, Jiahuan Luo, Lixin Fan, Yuxing Han, and Qiang Yang. 2024. A hybrid self-supervised learning framework for vertical federated learning.IEEE Transactions on Big Data(2024)

work page 2024

-

[13]

Chung-ju Huang, Leye Wang, and Xiao Han. 2023. Vertical federated knowledge transfer via representation distillation for healthcare collaboration networks. In Proceedings of the ACM Web Conference 2023. 4188–4199

work page 2023

- [14]

- [15]

-

[16]

Robert A Jacobs, Michael I Jordan, Steven J Nowlan, and Geoffrey E Hinton. 1991. Adaptive mixtures of local experts.Neural computation3, 1 (1991), 79–87

work page 1991

-

[17]

Albert Q Jiang, Alexandre Sablayrolles, Antoine Roux, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, et al . 2024. Mixtral of experts.arXiv preprint arXiv:2401.04088(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

- [18]

-

[19]

Michael I Jordan and Robert A Jacobs. 1994. Hierarchical mixtures of experts and the EM algorithm.Neural computation6, 2 (1994), 181–214

work page 1994

-

[20]

Peter Kairouz, H Brendan McMahan, Brendan Avent, Aurélien Bellet, Mehdi Bennis, Arjun Nitin Bhagoji, Kallista Bonawitz, Zachary Charles, Graham Cor- mode, Rachel Cummings, et al. 2021. Advances and open problems in federated learning.Foundations and trends®in machine learning14, 1–2 (2021), 1–210

work page 2021

-

[21]

Yan Kang, Yang Liu, and Xinle Liang. 2022. Fedcvt: Semi-supervised vertical federated learning with cross-view training.ACM Transactions on Intelligent Systems and Technology (TIST)13, 4 (2022), 1–16

work page 2022

-

[22]

Afsana Khan, Marijn Ten Thij, Guangzhi Tang, and Anna Wilbik. 2025. VFL-RPS: Relevant Participant Selection in Vertical Federated Learning. In2025 Interna- tional Joint Conference on Neural Networks (IJCNN). IEEE, 1–8

work page 2025

-

[23]

Afsana Khan, Marijn ten Thij, and Anna Wilbik. 2025. Vertical federated learning: A structured literature review.Knowledge and Information Systems(2025), 1–39

work page 2025

-

[24]

Alex Krizhevsky, Geoffrey Hinton, et al. 2009. Learning multiple layers of features from tiny images.(2009)

work page 2009

-

[25]

Dmitry Lepikhin, HyoukJoong Lee, Yuanzhong Xu, Dehao Chen, Orhan Firat, Yanping Huang, Maxim Krikun, Noam Shazeer, and Zhifeng Chen. 2020. Gshard: Scaling giant models with conditional computation and automatic sharding. arXiv preprint arXiv:2006.16668(2020)

work page internal anchor Pith review Pith/arXiv arXiv 2020

-

[26]

Songze Li, Duanyi Yao, and Jin Liu. 2023. FedVS: Straggler-resilient and privacy- preserving vertical federated learning for split models. InInternational conference on machine learning. PMLR, 20296–20311

work page 2023

-

[27]

Tian Li, Anit Kumar Sahu, Manzil Zaheer, Maziar Sanjabi, Ameet Talwalkar, and Virginia Smith. 2020. Federated optimization in heterogeneous networks. Proceedings of Machine learning and systems2 (2020), 429–450

work page 2020

-

[28]

Yang Liu, Yan Kang, Tianyuan Zou, Yanhong Pu, Yuanqin He, Xiaozhou Ye, Ye Ouyang, Ya-Qin Zhang, and Qiang Yang. 2024. Vertical federated learning: Concepts, advances, and challenges.IEEE transactions on knowledge and data engineering36, 7 (2024), 3615–3634

work page 2024

- [29]

-

[30]

Brendan McMahan, Eider Moore, Daniel Ramage, Seth Hampson, and Blaise Aguera y Arcas. 2017. Communication-efficient learning of deep net- works from decentralized data. InArtificial intelligence and statistics. PMLR, 1273–1282

work page 2017

-

[31]

Daniel Morales, Isaac Agudo, and Javier Lopez. 2023. Private set intersection: A systematic literature review.Computer Science Review49 (2023), 100567

work page 2023

-

[32]

Xiaonan Nie, Shijie Cao, Xupeng Miao, Lingxiao Ma, Jilong Xue, Youshan Miao, Zichao Yang, Zhi Yang, and Bin Cui. 2021. Dense-to-sparse gate for mixture-of- experts. (2021)

work page 2021

-

[33]

Maxime Oquab, Timothée Darcet, Théo Moutakanni, Huy Vo, Marc Szafraniec, Vasil Khalidov, Pierre Fernandez, Daniel Haziza, Francisco Massa, Alaaeldin El- Nouby, et al. 2023. Dinov2: Learning robust visual features without supervision. arXiv preprint arXiv:2304.07193(2023)

work page internal anchor Pith review Pith/arXiv arXiv 2023

- [34]

-

[35]

Carlos Riquelme, Joan Puigcerver, Basil Mustafa, Maxim Neumann, Rodolphe Jenatton, André Susano Pinto, Daniel Keysers, and Neil Houlsby. 2021. Scaling vision with sparse mixture of experts.Advances in Neural Information Processing Systems34 (2021), 8583–8595

work page 2021

-

[36]

Noam Shazeer, Azalia Mirhoseini, Krzysztof Maziarz, Andy Davis, Quoc Le, Geoffrey Hinton, and Jeff Dean. 2017. Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer. arXiv:1701.06538 [cs.LG] https: //arxiv.org/abs/1701.06538

work page internal anchor Pith review Pith/arXiv arXiv 2017

-

[37]

Jingwei Sun, Ziyue Xu, Dong Yang, Vishwesh Nath, Wenqi Li, Can Zhao, Daguang Xu, Yiran Chen, and Holger R Roth. 2023. Communication-efficient vertical fed- erated learning with limited overlapping samples. InProceedings of the IEEE/CVF International Conference on Computer Vision. 5203–5212

work page 2023

- [38]

-

[39]

Praneeth Vepakomma, Otkrist Gupta, Tristan Swedish, and Ramesh Raskar. 2018. Split learning for health: Distributed deep learning without sharing raw patient data.arXiv preprint arXiv:1812.00564(2018)

work page internal anchor Pith review Pith/arXiv arXiv 2018

-

[40]

Jie Wen, Zhixia Zhang, Yang Lan, Zhihua Cui, Jianghui Cai, and Wensheng Zhang

-

[41]

A survey on federated learning: challenges and applications.International journal of machine learning and cybernetics14, 2 (2023), 513–535

work page 2023

-

[42]

William Wolberg, Olvi Mangasarian, Nick Street, and W. Street. 1993. Breast Cancer Wisconsin (Diagnostic). UCI Machine Learning Repository. DOI: https://doi.org/10.24432/C5DW2B

-

[43]

Yanzhao Zhang, Mingxin Li, Dingkun Long, Xin Zhang, Huan Lin, Baosong Yang, Pengjun Xie, An Yang, Dayiheng Liu, Junyang Lin, et al . 2025. Qwen3 Embedding: Advancing Text Embedding and Reranking Through Foundation Models.arXiv preprint arXiv:2506.05176(2025). A Models, Hyperparameters and Datasets Architectural details:In Table 6, we summarize the main ch...

work page internal anchor Pith review Pith/arXiv arXiv 2025

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.