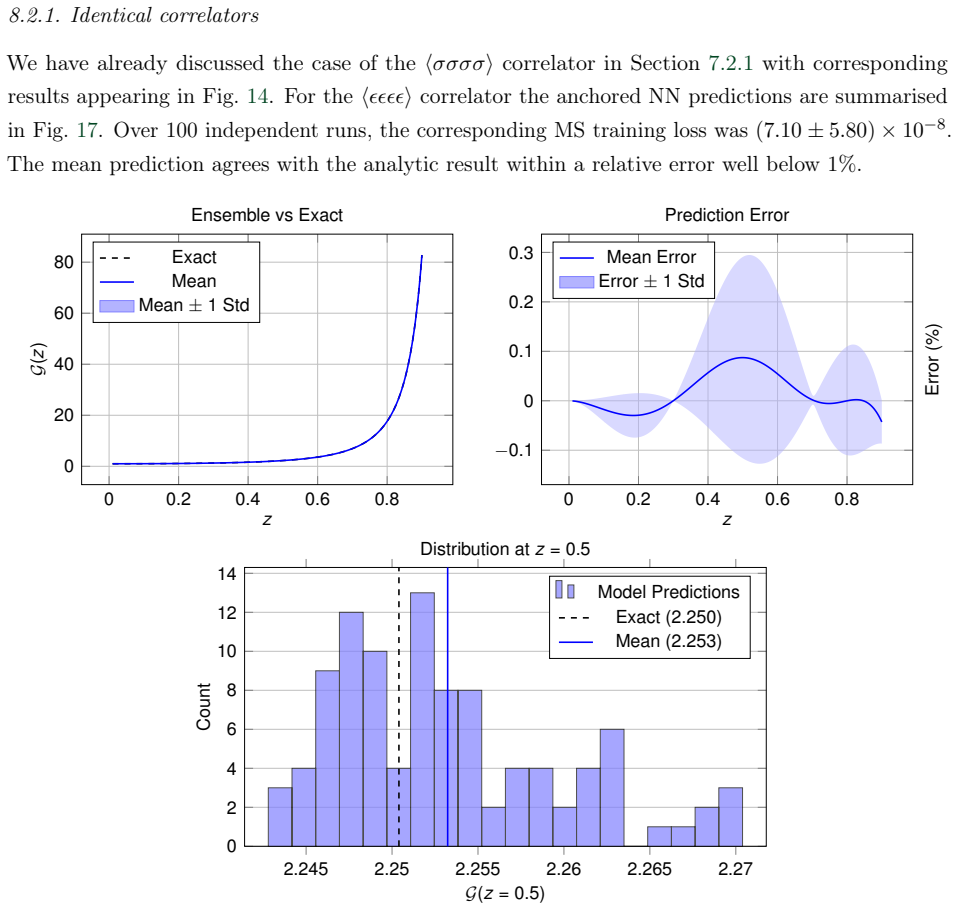

Neural Spectral Bias and Conformal Correlators I: Introduction and Applications

Pith reviewed 2026-05-10 04:00 UTC · model grok-4.3

The pith

Neural networks can reconstruct physical conformal correlators to within a few percent by optimizing only on crossing symmetry plus two scalar inputs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

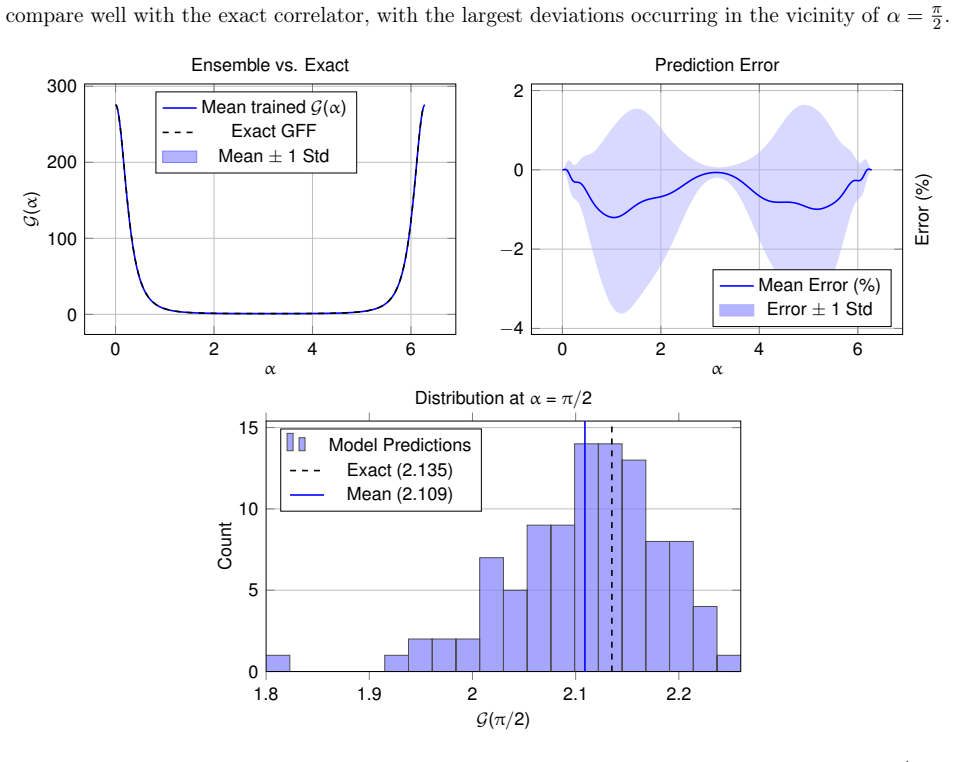

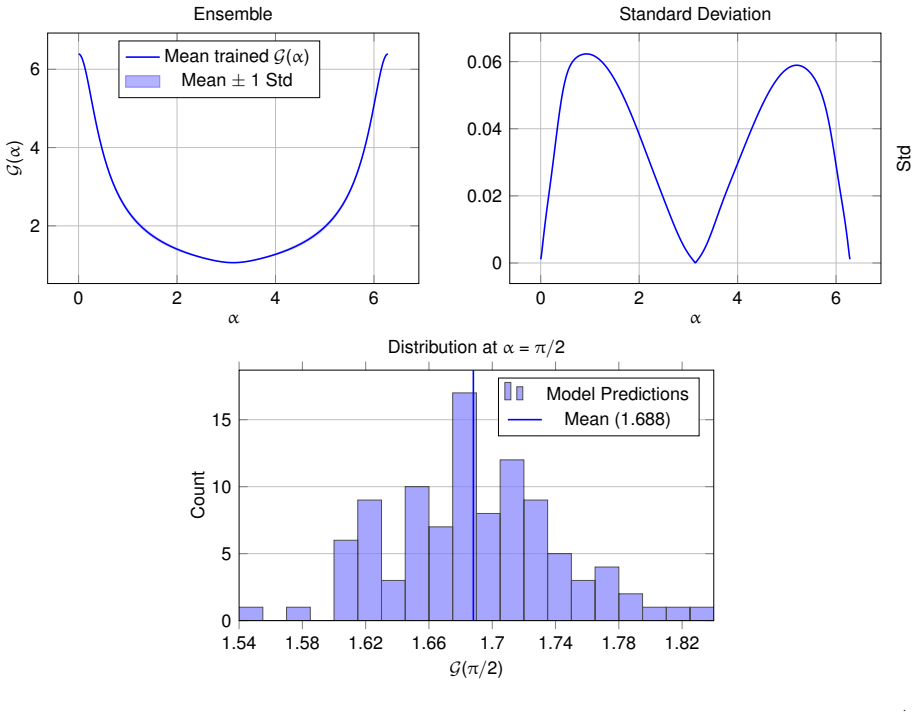

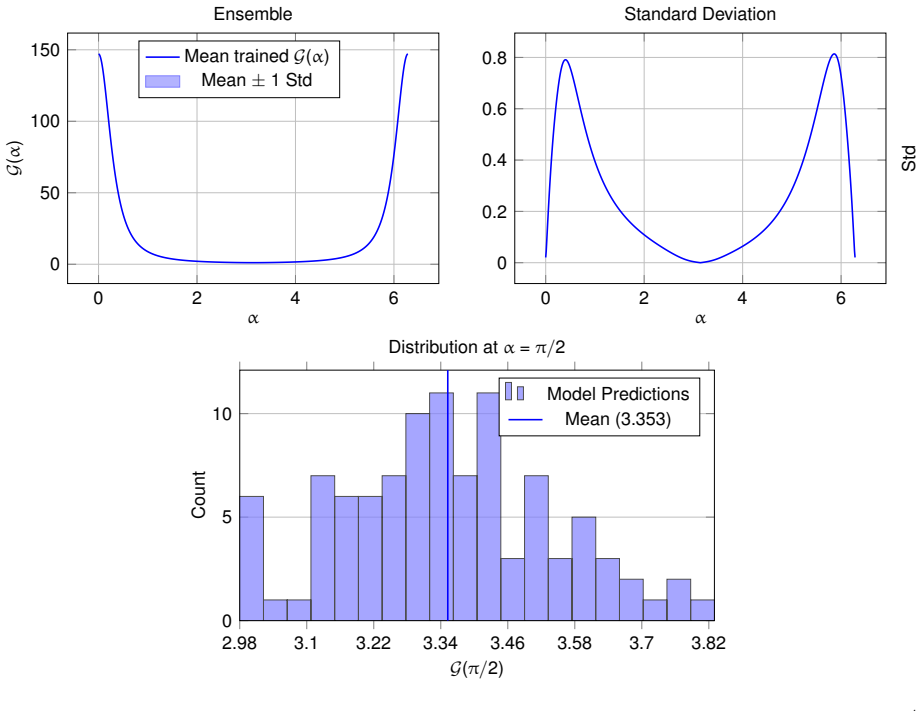

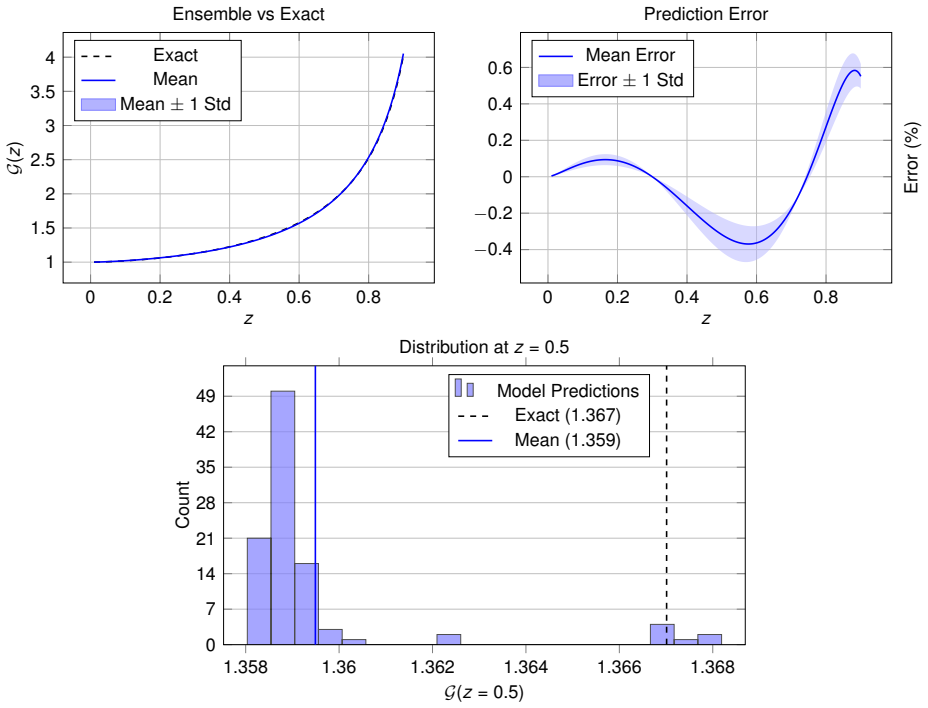

By optimizing a simple feed-forward neural network solely on the crossing symmetry condition, and supplying it with only the scaling dimension of the leading non-trivial operator and the correlator's value at a single anchor point, the network reconstructs target physical conformal correlators to within a few percent accuracy across a broad class of theories and dimensions.
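As a rough illustration of the objective described above, the sketch below evaluates a crossing-plus-anchor loss for a candidate correlator on the line. It is not the paper's implementation. The crossing equation is assumed to take the standard 1d form $(1-z)^{2\Delta_\phi} G(z) = z^{2\Delta_\phi} G(1-z)$ for identical external operators of dimension $\Delta_\phi$, the generalized-free-boson correlator serves as a known target, and the names `mlp`, `crossing_residual`, `loss`, the network widths, the sampling grid, and the anchor at $z = 1/2$ are all hypothetical choices. How the leading operator dimension enters the paper's setup is not specified here, so the sketch does not model it, and no training loop is included.

```python
import numpy as np

def gff_correlator(z, delta_phi):
    """Generalized-free-boson four-point function on the line (assumed test target)."""
    return 1.0 + z**(2 * delta_phi) + (z / (1.0 - z))**(2 * delta_phi)

def init_mlp(rng, widths=(1, 16, 16, 1)):
    """Random weights for a small feed-forward network (hypothetical architecture)."""
    return [(rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n)), np.zeros(n))
            for m, n in zip(widths[:-1], widths[1:])]

def mlp(params, z):
    """Forward pass: tanh hidden layers, linear output, one value per input point."""
    h = np.atleast_1d(z)[:, None]
    for W, b in params[:-1]:
        h = np.tanh(h @ W + b)
    W, b = params[-1]
    return (h @ W + b)[:, 0]

def crossing_residual(G, z, delta_phi):
    """(1-z)^{2 delta} G(z) - z^{2 delta} G(1-z); vanishes for a crossing-symmetric G."""
    return (1.0 - z)**(2 * delta_phi) * G(z) - z**(2 * delta_phi) * G(1.0 - z)

def loss(G, z, delta_phi, anchor_z, anchor_value, anchor_weight=1.0):
    """Mean squared crossing residual plus a penalty on the anchor mismatch."""
    crossing = np.mean(crossing_residual(G, z, delta_phi)**2)
    anchor = np.sum((np.atleast_1d(G(anchor_z)) - anchor_value)**2)
    return float(crossing + anchor_weight * anchor)

delta_phi = 1.0                       # assumed external dimension
z = np.linspace(0.1, 0.9, 81)         # assumed sampling grid in the cross-ratio
anchor_z = 0.5
anchor_value = gff_correlator(anchor_z, delta_phi)

# The known target satisfies both constraints, so its loss is zero up to round-off.
loss_target = loss(lambda t: gff_correlator(t, delta_phi), z, delta_phi,
                   anchor_z, anchor_value)

# An untrained random network violates them, so its loss is positive.
params = init_mlp(np.random.default_rng(0))
loss_net = loss(lambda t: mlp(params, t), z, delta_phi, anchor_z, anchor_value)
print(loss_target, loss_net)
```

A gradient-based optimizer would then adjust `params` to reduce `loss`; which of the many crossing-symmetric functions it reaches is exactly the question the rest of this review examines.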

What carries the argument

The spectral bias of gradient-based neural-network training, which preferentially learns smooth functions that match the smoothness properties of conformal correlators.
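For orientation, the standard linearized picture of spectral bias can be written in a few lines. This is a sketch for squared-error regression under gradient flow with a fixed kernel, not the crossing-symmetry loss used in the paper, and the symbols $r_t$, $K$, $\lambda_i$, $v_i$ are introduced here as assumptions (the time variable absorbs the learning rate and loss normalization).

```latex
\begin{align}
  r_t &= f_t - f^\ast
      && \text{residual between network output and target on the training points} \\
  \frac{d r_t}{dt} &= -K\, r_t
      && \text{gradient flow with a fixed (tangent) kernel matrix } K \\
  r_t &= \sum_i e^{-\lambda_i t}\, \langle v_i, r_0 \rangle\, v_i ,
      \qquad K v_i = \lambda_i v_i
      && \text{each eigen-direction decays at its own rate}
\end{align}
```

Directions with large $\lambda_i$ are fitted first. For commonly studied architectures these tend to be the low-frequency modes, which is the sense in which training is biased toward smooth functions.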

If this is right

- The same minimal-input procedure works for contact and one-loop Witten diagrams in AdS2, unitary and non-unitary 2d minimal models, half-BPS correlators in 4d N=4 SYM, and thermal two-point functions.

- The method extends beyond diagonal kinematics on the line.

- No additional data or assumptions beyond crossing symmetry and the two scalars are required for the reported accuracy.

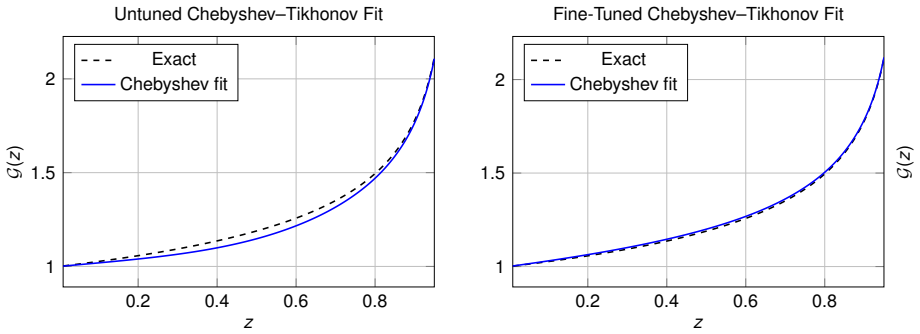

- Smoothness analysis via fractional Sobolev semi-norms and Chebyshev decompositions underpins why the networks succeed.
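One way to see what a Chebyshev-based smoothness check looks like is to expand a known correlator and inspect how fast its coefficients decay. The sketch below does this for the generalized-free-boson correlator with $\Delta_\phi = 1$ on the interval $z \in [0.2, 0.8]$. The interval, the degree, and the helper name `chebyshev_spectrum` are assumptions made for illustration and are not taken from the paper.

```python
import numpy as np
from numpy.polynomial import chebyshev as C

def gff_correlator(z, delta_phi=1.0):
    """Generalized-free-boson four-point function on the line (assumed example)."""
    return 1.0 + z**(2 * delta_phi) + (z / (1.0 - z))**(2 * delta_phi)

def chebyshev_spectrum(f, z_min, z_max, degree):
    """Chebyshev coefficients of f on [z_min, z_max].

    The interval is mapped affinely to [-1, 1], where chebinterpolate samples
    the function at Chebyshev points and returns degree + 1 coefficients.
    """
    mid, half = 0.5 * (z_max + z_min), 0.5 * (z_max - z_min)
    return C.chebinterpolate(lambda x: f(mid + half * x), degree)

coeffs = chebyshev_spectrum(gff_correlator, 0.2, 0.8, 40)

head = abs(coeffs[0])                  # size of the constant mode
tail = np.max(np.abs(coeffs[25:]))     # largest high-order coefficient
print(f"|c_0| = {head:.3e}, max |c_n| for n >= 25: {tail:.3e}")
```

Rapid decay of the high-order coefficients is the signature of a smooth function on the chosen interval. The decay slows as the interval approaches the singularity at $z = 1$.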

Where Pith is reading between the lines

- If spectral bias is the operative mechanism, analogous networks could be applied to other symmetry-constrained problems whose solutions are known to be smooth.

- Hybrid schemes that combine the network optimization with a small number of traditional bootstrap equations might further improve accuracy or reach higher-point functions.

- Testing the same protocol on correlators in higher dimensions or with multiple exchanged operators would clarify the boundary of its applicability.

Load-bearing premise

That the combination of crossing symmetry plus the two supplied scalars selects the unique physical correlator among all smooth functions that satisfy the same constraints.
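A small worked example shows why this premise carries weight. Assuming the standard 1d crossing equation for identical external operators of dimension $\Delta_\phi$ (the paper's exact conventions are not given here), there is an explicit one-parameter family of crossing-symmetric functions, labeled by a real number $a$ introduced purely for illustration.

```latex
\begin{align}
  % assumed crossing equation
  (1-z)^{2\Delta_\phi}\, G(z) &= z^{2\Delta_\phi}\, G(1-z) \\
  % one-parameter family
  G_a(z) &= 1 + a\, z^{2\Delta_\phi} + \left(\frac{z}{1-z}\right)^{2\Delta_\phi} \\
  % crossing check: the right-hand side is symmetric under z -> 1-z for every a
  z^{-2\Delta_\phi}\, G_a(z) &= z^{-2\Delta_\phi} + a + (1-z)^{-2\Delta_\phi} \\
  % value at the anchor point z = 1/2
  G_a\!\left(\tfrac{1}{2}\right) &= 2 + a\, 2^{-2\Delta_\phi}
\end{align}
```

Here $a = 1$ is the generalized free boson and $a = -1$ the generalized free fermion. Every member of the family satisfies crossing, and a single anchor value at $z = 1/2$ fixes $a$ within it. The premise is that the supplied data, together with the training bias, does the same job across the much larger space of smooth crossing-symmetric functions.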

What would settle it

For the 3d Ising model, where an independent bootstrap result is known, run the neural-network optimization with the leading-operator dimension and one anchor value; a reconstruction error substantially larger than a few percent would falsify the claim.

Original abstract

We demonstrate that simple feed-forward neural networks (NNs) can accurately compute correlation functions of conformal field theories (CFTs) on a line. Strikingly, by optimising a NN solely on crossing symmetry and providing only the scaling dimension of the leading non-trivial operator and the correlator's value at a single "anchor point", we can reconstruct target physical correlators to within a few percent. We establish the robustness of this minimal-data approach across a broad class of theories and dimensions, including generalised free fields, contact and one-loop Witten diagrams in AdS$_2$, unitary and non-unitary 2d minimal models, the 3d Ising model, and half-BPS correlators in 4d $\mathcal{N}=4$ super-Yang-Mills theory, together with several thermal two-point functions, notably including those of the 3d Ising model. We argue that this remarkable alignment between NNs and CFTs stems from the spectral bias of gradient-based training, which heavily favours smooth functions. To ground this connection, we analyse the smoothness of conformal correlators using fractional Sobolev semi-norms, Chebyshev spectral decompositions, and a measure based on curvature. Finally, we establish the broader reconstructive power of this technique by extending it beyond the diagonal kinematics of the line.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript demonstrates that simple feed-forward neural networks can reconstruct conformal correlators by optimizing solely against crossing symmetry, using as input only the scaling dimension of the leading non-trivial operator and the correlator value at a single anchor point. It reports few-percent accuracy on generalized free fields, contact and one-loop Witten diagrams in AdS2, 2d minimal models, the 3d Ising model, half-BPS correlators in 4d N=4 SYM, and several thermal two-point functions, and extends the method beyond diagonal kinematics. The authors attribute the performance to the spectral bias of gradient descent toward smooth functions and support this with analyses based on fractional Sobolev semi-norms, Chebyshev decompositions, and curvature measures.

Significance. If the reconstruction is shown to systematically select the physical solution, the approach would provide a low-data, architecture-agnostic tool for computing CFT correlators that could complement bootstrap methods and AdS/CFT calculations. The breadth of examples tested and the independent mathematical characterization of correlator smoothness are genuine strengths that ground the connection to spectral bias.

major comments (3)

- [§4] §4 (Optimization and loss): Crossing symmetry is a linear functional equation on the cross-ratio. With only the leading Δ and one anchor point supplied, the manuscript provides no analysis of the dimension of the solution space or of whether other smooth functions satisfying the same constraints to within a few percent exist. No controls (multiple random initializations, comparison against deliberately constructed non-physical smooth solutions, or null-space probes) are reported to establish that gradient descent converges to the physical correlator rather than another admissible function.

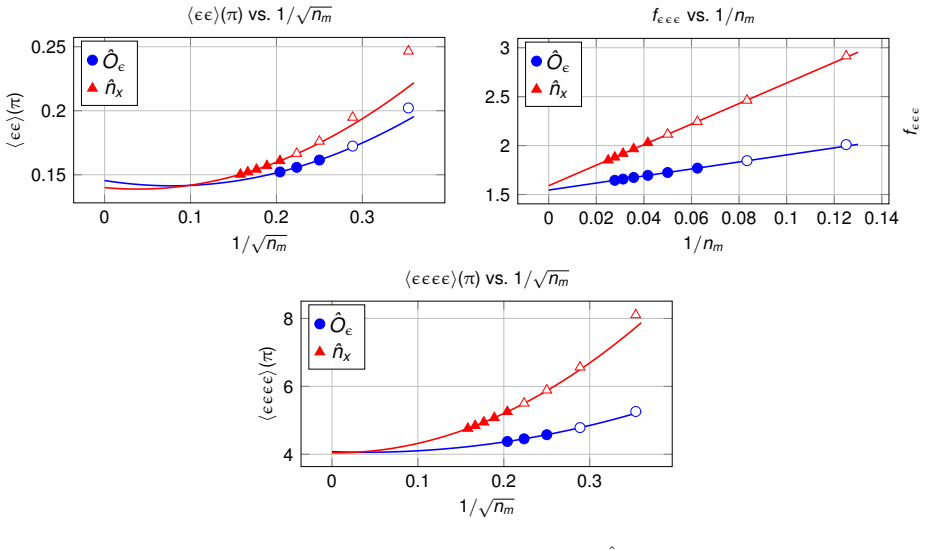

- [§5] §5 (Numerical results): The reported accuracies are stated as “few percent” without error bars, statistics over independent training runs, or convergence diagnostics. For the 3d Ising and N=4 SYM cases in particular, it is unclear whether the network has captured the full operator spectrum or only the leading contributions within the tested cross-ratio range.

- [§6] §6 (Smoothness analysis): While Sobolev norms and Chebyshev coefficients demonstrate that physical correlators are smooth, the manuscript does not quantify how this bias, combined with the minimal constraints, excludes other smooth crossing-symmetric functions. A concrete test (e.g., injecting a known non-physical smooth solution and checking whether it is rejected) is missing.
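The null-space probe suggested in the first major comment can be illustrated in a toy truncation. Assuming the 1d crossing equation $(1-z)^{2\Delta_\phi} G(z) = z^{2\Delta_\phi} G(1-z)$, the rescaled function $F(z) = z^{-2\Delta_\phi} G(z)$ must satisfy $F(z) = F(1-z)$, so $F$ is even in $x = z - 1/2$. The sketch below expands $F$ in monomials of $x$ up to a given degree and counts how many coefficient directions crossing leaves unconstrained. The polynomial basis, the grid, and the function names are hypothetical. The anchor value would add a single further linear condition, and the effect of the leading-$\Delta$ input is not modeled.

```python
import numpy as np

def crossing_matrix(x, degree):
    """Linear map from monomial coefficients c_k to F(x) - F(-x) on the grid x.

    F(x) = sum_k c_k x^k with x = z - 1/2, so crossing reads F(x) - F(-x) = 0.
    """
    plus = np.vander(x, degree + 1, increasing=True)
    minus = np.vander(-x, degree + 1, increasing=True)
    return plus - minus

def null_space_dimension(degree, n_points=50, half_width=0.4):
    """Number of coefficient directions left unconstrained by crossing."""
    x = np.linspace(-half_width, half_width, n_points)
    A = crossing_matrix(x, degree)
    return (degree + 1) - np.linalg.matrix_rank(A)

dims = {d: null_space_dimension(d) for d in (2, 4, 8)}
print(dims)
```

In this truncation only the odd coefficients are constrained, so the number of free directions grows with the degree. That growth is the under-determination the referee asks the authors to quantify for the actual loss.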

minor comments (2)

- [§3] The choice and placement of the single anchor point are not varied systematically; a short robustness check with respect to anchor location would strengthen the minimal-data claim.

- [Figures] Figure captions and axis labels in the results section would benefit from explicit statements of the cross-ratio range and the precise definition of the reported error metric.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive comments on our manuscript. The points raised regarding solution uniqueness, numerical robustness, and the quantitative link between spectral bias and constraint satisfaction are well-taken. We address each major comment below, indicating where revisions will be made to strengthen the presentation while defending the core claims on the basis of the empirical evidence already provided.

Point-by-point responses

- Referee: [§4] §4 (Optimization and loss): Crossing symmetry is a linear functional equation on the cross-ratio. With only the leading Δ and one anchor point supplied, the manuscript provides no analysis of the dimension of the solution space or of whether other smooth functions satisfying the same constraints to within a few percent exist. No controls (multiple random initializations, comparison against deliberately constructed non-physical smooth solutions, or null-space probes) are reported to establish that gradient descent converges to the physical correlator rather than another admissible function.

  Authors: We agree that an explicit analysis of the dimension of the space of admissible smooth functions is absent from the current manuscript. Our defense rests on the consistent empirical recovery of the known physical correlators across many independent examples (GFFs, minimal models, Ising, N=4 SYM, AdS diagrams), which would be statistically unlikely if gradient descent were routinely selecting unrelated smooth solutions. To address the referee’s concern directly, the revised manuscript will report: (i) statistics over multiple random initializations showing convergence to the same function within the quoted accuracy; (ii) an explicit attempt to optimize a deliberately constructed non-physical smooth crossing-symmetric function that matches the supplied Δ and anchor point, demonstrating that the optimization either fails to converge or produces a visibly different correlator. A rigorous computation of the full null-space dimension lies beyond the scope of the present work and would require new functional-analytic tools.

  Revision: partial

- Referee: [§5] §5 (Numerical results): The reported accuracies are stated as “few percent” without error bars, statistics over independent training runs, or convergence diagnostics. For the 3d Ising and N=4 SYM cases in particular, it is unclear whether the network has captured the full operator spectrum or only the leading contributions within the tested cross-ratio range.

  Authors: The phrase “few percent” denotes the maximum pointwise relative deviation between the reconstructed and target correlators over the sampled cross-ratio interval. We will add error bars derived from independent training runs, loss-convergence histories, and a table of run-to-run variance in the revised version. On the spectrum question, the network outputs the full correlator function; any OPE data (including sub-leading operators) is implicitly encoded in that function. For the 3d Ising and N=4 SYM examples the agreement to a few percent in the tested kinematic window is consistent with the dominance of the leading operators in that range, as expected from the known spectra. We will clarify this point and, where feasible, extract and compare the leading OPE coefficients obtained from the reconstructed correlator.

  Revision: yes

- Referee: [§6] §6 (Smoothness analysis): While Sobolev norms and Chebyshev coefficients demonstrate that physical correlators are smooth, the manuscript does not quantify how this bias, combined with the minimal constraints, excludes other smooth crossing-symmetric functions. A concrete test (e.g., injecting a known non-physical smooth solution and checking whether it is rejected) is missing.

  Authors: The Sobolev and Chebyshev analyses establish that physical correlators lie in the low-frequency regime favored by gradient descent. To quantify the exclusion of alternatives, the revised manuscript will include a concrete numerical test: we construct a smooth but non-physical function that satisfies crossing symmetry together with the supplied leading Δ and anchor-point value, then show that the same optimization procedure either diverges or converges to a visibly different correlator with substantially higher loss. This test will provide direct evidence that the combination of spectral bias and the minimal constraints is sufficient to reject at least some non-physical smooth solutions.

  Revision: yes

- A complete mathematical determination of the dimension of the space of smooth crossing-symmetric functions compatible with the minimal input data (leading Δ and one anchor point).

Circularity Check

Optimization on crossing symmetry with minimal inputs is empirical and self-contained

full rationale

The paper's central result is obtained by direct numerical optimization of a feed-forward NN to minimize a crossing-symmetry loss, supplied only with the leading operator dimension Δ and the correlator value at one anchor point. This produces an approximation to known physical correlators (Ising, minimal models, Witten diagrams, etc.) to within a few percent. The reconstruction is not equivalent to the inputs by construction; crossing symmetry is a linear functional constraint whose solution space is under-determined, and the NN's output is selected by gradient descent plus spectral bias toward smooth functions. The separate smoothness analysis (fractional Sobolev semi-norms, Chebyshev coefficients, curvature measures) is performed with independent mathematical tools and does not presuppose the target correlators. No load-bearing step reduces to a self-citation, fitted parameter renamed as prediction, or ansatz smuggled from prior work. The method is externally validated against standard CFT benchmarks and is therefore self-contained.

Axiom & Free-Parameter Ledger

free parameters (1)

- neural network architecture and hyperparameters

axioms (1)

- Domain assumption: Conformal correlators on the line are smooth functions that satisfy crossing symmetry and can be uniquely determined by the leading operator dimension plus one anchor value.

Forward citations

Cited by 1 Pith paper

- Descending into the Modular Bootstrap

  Machine-learning optimization produces candidate truncated modular-invariant partition functions for 2d CFTs in the central-charge window 1 to 8/7, indicating a continuous solution space and a stricter spectral-gap bo...

