Recognition: unknown

Evaluating Structured Strategy Backtests: Peer Benchmarks, Regime Timing, and Live Performance

Pith reviewed 2026-05-10 02:27 UTC · model grok-4.3

The pith

Marketed strategy backtests reflect pre-launch factor regimes more than skill and show limited live portability.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

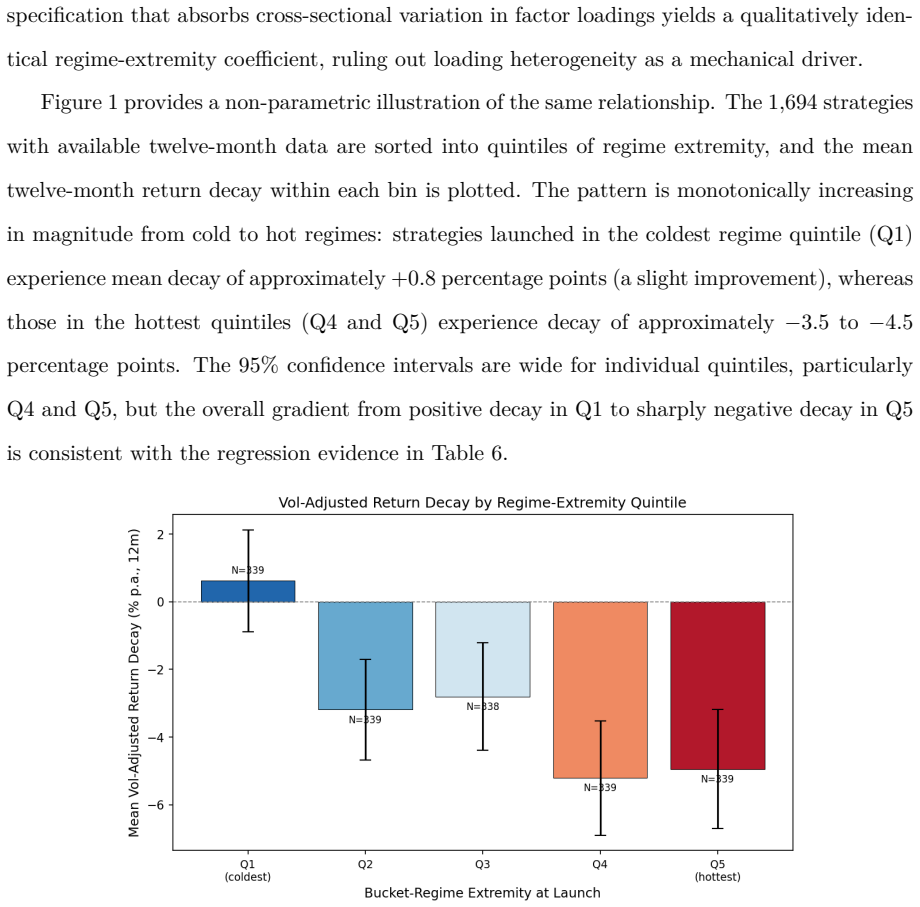

Using 1,726 commercially distributed structured strategies from ten global institutions, this paper shows that raw pro-forma performance has only limited portability into the live period and weakens sharply once live outcomes are measured relative to peer and external benchmarks. The evidence indicates that marketed backtests predominantly reflect the common factor regime present before launch rather than strategy-specific skill. Strategies launched after unusually strong bucket-factor conditions experience materially worse subsequent deterioration.

What carries the argument

Peer-benchmarked comparison of pro-forma versus live returns, conditioned on bucket-factor regime timing at launch.

If this is right

- Backtests should be judged relative to appropriate peer benchmarks rather than in isolation.

- A larger discount should be applied to backtests produced after extreme factor runs.

- Raw pro-forma metrics alone provide insufficient signal for evaluating strategy skill.

- Allocators should incorporate regime timing when assessing historical track records.

Where Pith is reading between the lines

- Providers may have an incentive to time launches during favorable factor regimes to improve apparent backtest appeal.

- The same regime-adjustment lens could be applied to evaluate other quantitative and systematic strategies.

- Persistent over-reliance on unadjusted backtests may systematically contribute to disappointing live portfolio outcomes.

Load-bearing premise

The 1,726 strategies form an unbiased sample of commercially distributed products and peer benchmarks can be built without material selection or survivorship bias.

What would settle it

Finding that live-performance decay is statistically identical for strategies launched after strong versus neutral factor conditions would falsify the regime-timing claim.

Figures

read the original abstract

Institutional allocators often evaluate structured strategies on the basis of marketed backtests -- hypothetical track records constructed by applying a strategy's rules to historical data prior to any live trading, also referred to as pro-forma performance. It is unclear how much of that signal survives once the strategy is actually traded. Using 1,726 commercially distributed structured strategies from ten global institutions, this paper shows that raw pro-forma performance has only limited portability into the live period and weakens sharply once live outcomes are measured relative to peer and external benchmarks. The evidence indicates that marketed backtests predominantly reflect the common factor regime present before launch rather than strategy-specific skill. Strategies launched after unusually strong bucket-factor conditions experience materially worse subsequent deterioration. For allocators, the implication is practical: backtests should be judged relative to appropriate peer benchmarks, and the discount applied to them should increase when launch occurs after an extreme factor run.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper analyzes 1,726 commercially distributed structured strategies from ten global institutions and concludes that raw pro-forma backtest performance exhibits only limited portability into live trading. Performance deteriorates sharply when measured against peer and external benchmarks, indicating that marketed backtests primarily capture pre-launch common factor regimes rather than strategy-specific skill. Strategies launched after unusually strong bucket-factor conditions show materially worse subsequent deterioration, with practical implications for allocators to apply larger discounts and use peer-relative evaluation.

Significance. If the central empirical findings survive scrutiny on sample construction and benchmark definitions, the results would carry substantial practical significance for institutional portfolio management. They provide large-scale evidence on the disconnect between hypothetical and realized strategy performance and offer actionable guidance on regime-aware backtest evaluation. The scale of the dataset (1,726 strategies) is a notable strength, though the commercial-distribution filter limits generalizability.

major comments (2)

- [Abstract and implied Data/Methodology sections] The core interpretation—that deterioration reflects factor regimes rather than skill—is load-bearing on the assumption that the sample of commercially distributed strategies is not conditioned on strong pro-forma outcomes. Because institutions market only strategies with attractive backtests, the observed portability failure and relative weakening versus peers can arise mechanically from selection on the dependent variable, without requiring a factor-regime explanation. This concern is not addressed by the abstract's reference to launch timing after strong conditions, as launch decisions are themselves endogenous to backtest results.

- [Abstract and implied Data/Methodology sections] No information is supplied on data selection rules, exact benchmark construction methodology, statistical tests for deterioration, or robustness checks (e.g., alternative peer definitions or survivorship adjustments). These omissions leave open the possibility that post-hoc choices drive the reported results and prevent evaluation of whether the peer/external benchmark comparisons isolate skill from common factors.

Simulated Author's Rebuttal

We thank the referee for the constructive report and the emphasis on methodological transparency and potential selection effects. We agree that the original submission omitted key details on data construction and benchmarks; these will be added in full. On the core interpretation, we believe the regime-timing variation provides identification beyond mechanical selection on backtest strength, but we will expand the discussion and add controls to address endogeneity explicitly.

read point-by-point responses

-

Referee: [Abstract and implied Data/Methodology sections] The core interpretation—that deterioration reflects factor regimes rather than skill—is load-bearing on the assumption that the sample of commercially distributed strategies is not conditioned on strong pro-forma outcomes. Because institutions market only strategies with attractive backtests, the observed portability failure and relative weakening versus peers can arise mechanically from selection on the dependent variable, without requiring a factor-regime explanation. This concern is not addressed by the abstract's reference to launch timing after strong conditions, as launch decisions are themselves endogenous to backtest results.

Authors: We acknowledge that commercial distribution inherently selects on attractive pro-forma results, creating a mechanical component to observed deterioration. However, the paper exploits cross-sectional variation in pre-launch bucket-factor conditions among marketed strategies. Strategies launched after extreme positive factor regimes exhibit significantly larger post-launch underperformance relative to peers, even after matching on backtest strength. We will add a dedicated subsection on selection and endogeneity, including regressions of deterioration on regime strength that control for the strategy's own pro-forma Sharpe ratio and other backtest metrics. This isolates the incremental role of the factor regime beyond selection on the dependent variable. revision: partial

-

Referee: [Abstract and implied Data/Methodology sections] No information is supplied on data selection rules, exact benchmark construction methodology, statistical tests for deterioration, or robustness checks (e.g., alternative peer definitions or survivorship adjustments). These omissions leave open the possibility that post-hoc choices drive the reported results and prevent evaluation of whether the peer/external benchmark comparisons isolate skill from common factors.

Authors: We agree that the initial version lacked sufficient methodological detail. The revised manuscript will contain a new Data and Sample Construction section specifying inclusion criteria for the 1,726 strategies (minimum live trading history, institutional source, and commercial distribution filter), exact peer benchmark construction (cohort-matched by launch date, asset class, and strategy category), external benchmark definitions (factor-mimicking portfolios and relevant indices), the statistical framework (tests for portability via paired differences and relative performance via benchmark-adjusted alphas), and a full robustness appendix with alternative peer groupings, survivorship adjustments, and placebo tests. revision: yes

Circularity Check

No circularity in empirical analysis

full rationale

The paper conducts a purely empirical study comparing pro-forma backtest performance to live outcomes for 1,726 commercially distributed strategies, using peer and external benchmarks to assess portability and factor regime effects. No mathematical derivations, equations, fitted parameters, or self-citations appear in the provided text that would reduce any claim to its inputs by construction. The central findings rest on direct observational comparisons of returns rather than tautological loops, self-definitional constructs, or renamed known results. This is a standard non-circular empirical paper.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption The 1,726 strategies constitute a representative sample of commercially distributed structured products without material survivorship or selection bias.

Reference graph

Works this paper leans on

-

[1]

Amenc, N., Martellini, L., and Vaissi\'e, M. (2003). Benefits and risks of alternative investment strategies. Journal of Asset Management, 4(2), 96--118

2003

-

[2]

D., Beck, N., Kalesnik, V., and West, J

Arnott, R. D., Beck, N., Kalesnik, V., and West, J. (2016). How can `smart beta' go horribly wrong? Research Affiliates Fundamentals, February

2016

-

[3]

J., and Pedersen, L

Asness, C., Moskowitz, T. J., and Pedersen, L. H. (2013). Value and momentum everywhere. The Journal of Finance, 68(3), 929--985

2013

-

[4]

H., Borwein, J., L\'opez de Prado, M., and Zhu, Q

Bailey, D. H., Borwein, J., L\'opez de Prado, M., and Zhu, Q. J. (2014). Pseudo-mathematics and financial charlatanism: The effects of backtest overfitting on out-of-sample performance. Notices of the AMS, 61(5), 458--471

2014

-

[5]

Baquero, G., ter Horst, J., and Verbeek, M. (2005). Survival, look-ahead bias, and persistence in hedge fund performance. Journal of Financial and Quantitative Analysis, 40(3), 493--517

2005

-

[6]

Berk, J. B. and Green, R. C. (2004). Mutual fund flows and performance in rational markets. Journal of Political Economy, 112(6), 1269--1295

2004

-

[7]

Blin, O., Ielpo, F., Lee, J., and Teiletche, J. (2021). Alternative risk premia timing: A point-in-time macro, sentiment, valuation analysis. Journal of Systematic Investing, 1(1), 52--72

2021

-

[8]

C., and Miller, D

Cameron, A. C., and Miller, D. L. (2015). A practitioner's guide to cluster-robust inference. Journal of Human Resources, 50(2), 317--372

2015

-

[9]

Carhart, M. M. (1997). On persistence in mutual fund performance. The Journal of Finance, 52(1), 57--82

1997

-

[10]

and Vall\'ee, B

C\'el\'erier, C. and Vall\'ee, B. (2017). Catering to investors through security design: Headline rate and complexity. The Quarterly Journal of Economics, 132(3), 1469--1508

2017

-

[11]

and Wagalath, L

Cont, R. and Wagalath, L. (2013). Running for the exit: Distressed selling and endogenous correlation in financial markets. Mathematical Finance, 23(4), 718--741

2013

-

[12]

Cremers, M., Petajisto, A., and Zitzewitz, E. (2012). Should benchmark indices have alpha? Revisiting performance evaluation. Critical Finance Review, 2(1), 1--48

2012

-

[13]

Cuthbertson, K., Nitzsche, D., and O'Sullivan, N. (2023). UK mutual fund performance persistence: Optimal portfolios with positive alpha. Journal of Asset Management, 24(5), 356--368

2023

-

[14]

Daniel, K., Grinblatt, M., Titman, S., and Wermers, R. (1997). Measuring mutual fund performance with characteristic-based benchmarks. The Journal of Finance, 52(3), 1035--1058

1997

-

[15]

Evans, R. B. (2010). Mutual fund incubation. The Journal of Finance, 65(4), 1581--1611

2010

-

[16]

Fama, E. F. and French, K. R. (2010). Luck versus skill in the cross-section of mutual fund returns. The Journal of Finance, 65(5), 1915--1947

2010

-

[17]

Falck, A., Rej, A., and Thesmar, D. (2022). When do systematic strategies decay? Quantitative Finance, 22(11), 1955--1969

2022

-

[18]

Fieberg, C., Varmaz, A., and Poddig, T. (2019). Risk models vs characteristic models from an investor's perspective: Make use of the best of both worlds. Journal of Risk Finance, 20(2), 201--222

2019

-

[19]

and Hsieh, D

Fung, W. and Hsieh, D. A. (2004). Hedge fund benchmarks: A risk-based approach. Financial Analysts Journal, 60(5), 65--80

2004

-

[20]

and Shleifer, A

Greenwood, R. and Shleifer, A. (2014). Expectations of returns and expected returns. The Review of Financial Studies, 27(3), 714--746

2014

-

[21]

Hamdan, R., Pavlowsky, F., Roncalli, T., and Zheng, B. (2016). A primer on alternative risk premia. SSRN Working Paper, No.\ 2766850

2016

-

[22]

R., Liu, Y., and Zhu, H

Harvey, C. R., Liu, Y., and Zhu, H. (2016). and the cross-section of expected returns. The Review of Financial Studies, 29(1), 5--68

2016

-

[23]

Hunter, D., Kandel, E., Kandel, S., and Wermers, R. (2014). Mutual fund performance evaluation with active peer benchmarks. Journal of Financial Economics, 112(1), 1--29

2014

-

[24]

Ilmanen, A. (2012). Do financial markets reward buying or selling insurance and lottery tickets? Financial Analysts Journal, 68(5), 26--36

2012

-

[25]

Jagannathan, R., Malakhov, A., and Novikov, D. (2010). Do hot hands exist among hedge fund managers? An empirical evaluation. The Journal of Finance, 65(1), 217--255

2010

-

[26]

I., Kelly, B

Jensen, T. I., Kelly, B. T., and Pedersen, L. H. (2023). Is there a replication crisis in finance? The Journal of Finance, 78(5), 2465--2518

2023

-

[27]

Kosowski, R., Timmermann, A., Wermers, R., and White, H. (2006). Can mutual fund ``stars'' really pick stocks? New evidence from a bootstrap analysis. The Journal of Finance, 61(6), 2551--2595

2006

-

[28]

Lhabitant, F.-S. (2001). Assessing market risk for hedge funds and hedge fund portfolios. Journal of Risk Finance, 2(4), 16--32

2001

-

[29]

B., and Todorovic, N

Mateus, C., Mateus, I. B., and Todorovic, N. (2019). Benchmark-adjusted performance of US equity mutual funds and the issue of benchmark selection. Journal of Asset Management, 20(1), 15--30

2019

-

[30]

McLean, R. D. and Pontiff, J. (2016). Does academic research destroy stock return predictability? The Journal of Finance, 71(1), 5--32

2016

-

[31]

H., and Pulvino, T

Mitchell, M., Pedersen, L. H., and Pulvino, T. (2007). Slow moving capital. American Economic Review, 97(2), 215--220

2007

-

[32]

Pedersen, L. H. (2009). When everyone runs for the exit. International Journal of Central Banking, 5(4), 177--199

2009

-

[33]

P\'enasse, J. (2022). Understanding alpha decay. Management Science, 68(5), 3966--3973

2022

-

[34]

Rockafellar, R. T. and Uryasev, S. (2002). Conditional value-at-risk for general loss distributions. Journal of Banking & Finance, 26(7), 1443--1471

2002

-

[35]

Roncalli, T. (2017). Alternative risk premia: What do we know? In E. Jurczenko (Ed.), Factor Investing: From Traditional to Alternative Risk Premia. ISTE Press--Elsevier

2017

-

[36]

Wagner, N. (2002). On a model of portfolio selection with benchmark. Journal of Asset Management, 3(1), 55--65

2002

-

[37]

Sortino, F. A. and van der Meer, R. (1991). Downside risk. The Journal of Portfolio Management, 17(4), 27--31

1991

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.