
StabilizerBench: A Benchmark for AI-Assisted Quantum Error Correction Circuit Synthesis

Pith reviewed 2026-05-09 22:23 UTC · model grok-4.3

The pith

StabilizerBench supplies 192 stabilizer codes to benchmark AI agents on synthesizing quantum error correction circuits.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors present StabilizerBench, a collection of 192 stabilizer codes drawn from 12 families and spanning 4 to 196 qubits and distances 2 to 21. The codes are organized into three tasks: state preparation circuit generation, constrained circuit optimization, and full fault-tolerant circuit synthesis. Results are scored with a generator-weighted method plus continuous metrics for fault tolerance and optimization quality.
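This summary does not define the continuous optimization metric. The sketch below assumes one possible form. It rewards fractional reductions in two-qubit gate count and depth relative to a baseline circuit, and it awards nothing when the semantic check fails. `CircuitStats`, `optimization_score`, and the default `w_gates=0.5` weighting are hypothetical and are not the paper's definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CircuitStats:
    two_qubit_gates: int
    depth: int


def optimization_score(baseline: CircuitStats, candidate: CircuitStats,
                       preserved: bool, w_gates: float = 0.5) -> float:
    """Hypothetical continuous optimization metric in [0, 1].

    Returns 0 if the candidate fails the semantic-preservation check;
    otherwise a weighted mix of fractional gate-count and depth reductions,
    each clipped at 0 so a regression earns no credit.
    """
    if not preserved:
        return 0.0

    def reduction(before: int, after: int) -> float:
        if before == 0:
            return 0.0
        return max(0.0, (before - after) / before)

    gate_gain = reduction(baseline.two_qubit_gates, candidate.two_qubit_gates)
    depth_gain = reduction(baseline.depth, candidate.depth)
    return w_gates * gate_gain + (1.0 - w_gates) * depth_gain


print(optimization_score(CircuitStats(40, 20), CircuitStats(30, 20), preserved=True))  # 0.125
```

Clipping at zero means that a semantically valid but larger circuit scores the same as an unchanged one. A real metric might instead penalize regressions.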

What carries the argument

Generator-weighted scoring system that computes a capability score for breadth of success across codes and a quality score for circuit merit, supported by continuous fault-tolerance and optimization metrics that go beyond simple pass/fail grading.
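The generator weights are listed below as a free parameter, and their values are not reported here. The sketch below makes two assumptions:

- Each code is weighted by its number of stabilizer generators, n - k.
- A code contributes to the quality score only when it passes verification.

All names are hypothetical, and the per-code quality values in the usage lines are toy inputs.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CodeResult:
    name: str
    num_generators: int  # assumed weight: number of stabilizer generators, n - k
    solved: bool         # passed the verification oracle
    quality: float       # hypothetical per-code circuit merit in [0, 1]


def capability_score(results: Sequence[CodeResult]) -> float:
    """Generator-weighted fraction of codes the agent solved (breadth)."""
    total = sum(r.num_generators for r in results)
    solved = sum(r.num_generators for r in results if r.solved)
    return solved / total if total else 0.0


def quality_score(results: Sequence[CodeResult]) -> float:
    """Generator-weighted circuit merit; unsolved codes contribute zero."""
    total = sum(r.num_generators for r in results)
    merit = sum(r.num_generators * r.quality for r in results if r.solved)
    return merit / total if total else 0.0


results = [
    CodeResult("steane_7_1_3", 6, True, 0.8),
    CodeResult("rotated_surface_d3", 8, False, 0.0),
    CodeResult("shor_9_1_3", 8, True, 0.5),
]
print(capability_score(results))  # (6 + 8) / 22
print(quality_score(results))     # (6 * 0.8 + 8 * 0.5) / 22, i.e. 0.4 up to rounding
```

Weighting by generator count makes larger codes count for more, which is one plausible reading of "generator-weighted".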

If this is right

- AI agents can be ranked by how broadly and how well they handle the three synthesis tasks.

- High scores indicate an agent can perform gate decomposition, qubit routing, and semantics-preserving changes required for QEC.

- The open design allows any prompting or strategy to be tested against the same inputs and oracles.

- Scalable verification makes it practical to assess performance on codes up to 196 qubits.
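Scalable verification rests on the Gottesman-Knill theorem. The sketch below illustrates the idea in the Heisenberg picture, assuming a circuit of H, S, and CX gates applied to |0...0⟩ with no measurements:

- A Pauli P stabilizes U|0...0⟩ exactly when U†PU is a Z-type string with a + sign.
- U†PU can be tracked with a few bit updates per gate using the Aaronson-Gottesman conjugation rules.

Simulators such as Stim, which the paper cites, do this at scale. `Pauli`, `pull_back`, and `prepares` are hypothetical names, not the benchmark's oracle.

```python
from typing import List

Gate = tuple  # ("H", q), ("S", q) or ("CX", control, target)


class Pauli:
    """Signed Hermitian Pauli string as x/z bit lists; x = z = 1 encodes Y."""

    def __init__(self, label: str):
        self.r = 1 if label.startswith("-") else 0  # 1 means an overall minus sign
        body = label.lstrip("+-")
        self.x = [int(c in "XY") for c in body]
        self.z = [int(c in "ZY") for c in body]

    # Aaronson-Gottesman rules for P -> G P G^dagger, including the sign bit r.
    def h(self, q: int) -> None:
        self.r ^= self.x[q] & self.z[q]
        self.x[q], self.z[q] = self.z[q], self.x[q]

    def s(self, q: int) -> None:
        self.r ^= self.x[q] & self.z[q]
        self.z[q] ^= self.x[q]

    def cx(self, a: int, b: int) -> None:
        self.r ^= self.x[a] & self.z[b] & (self.x[b] ^ self.z[a] ^ 1)
        self.x[b] ^= self.x[a]
        self.z[a] ^= self.z[b]


def pull_back(p: Pauli, circuit: List[Gate]) -> Pauli:
    """Return U^dagger P U, where the circuit's first gate is applied first."""
    for name, *qs in reversed(circuit):
        if name == "H":        # H is self-inverse
            p.h(qs[0])
        elif name == "S":      # conjugating by S^dagger = conjugating by S three times
            for _ in range(3):
                p.s(qs[0])
        elif name == "CX":     # CX is self-inverse
            p.cx(qs[0], qs[1])
        else:
            raise ValueError(f"unsupported gate {name}")
    return p


def prepares(circuit: List[Gate], stabilizers: List[str]) -> bool:
    """True if U|0...0> is a +1 eigenstate of every listed Pauli."""
    for label in stabilizers:
        p = pull_back(Pauli(label), circuit)
        # |0...0> is stabilized only by +Z-type strings: no X/Y part, positive sign.
        if any(p.x) or p.r:
            return False
    return True


bell = [("H", 0), ("CX", 0, 1)]
print(prepares(bell, ["XX", "ZZ"]))  # True
print(prepares(bell, ["YY"]))        # False: YY acts as -1 on this Bell state
```

Checking one generator costs time linear in the number of qubits plus the number of gates. There is no 2^n state vector, which is what lets verification reach codes with hundreds of qubits.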

Where Pith is reading between the lines

- Success here may indicate readiness for AI to assist in designing circuits for non-stabilizer codes as well.

- Automated synthesis could lower the barrier to experimenting with larger fault-tolerant quantum algorithms.

- Similar benchmark structures might be adapted for other quantum software tasks like compiling arbitrary circuits.

- Tracking progress over time on this benchmark could show whether AI capabilities are advancing in this domain.

Load-bearing premise

That the chosen tasks and scoring rules capture the main difficulties and quality measures needed for practical AI-assisted quantum error correction circuit design.

What would settle it

Finding that agents scoring high on the benchmark still produce circuits with undetected errors when run on quantum simulators with realistic noise models or when applied to codes outside the stabilizer family.

Figures

read the original abstract

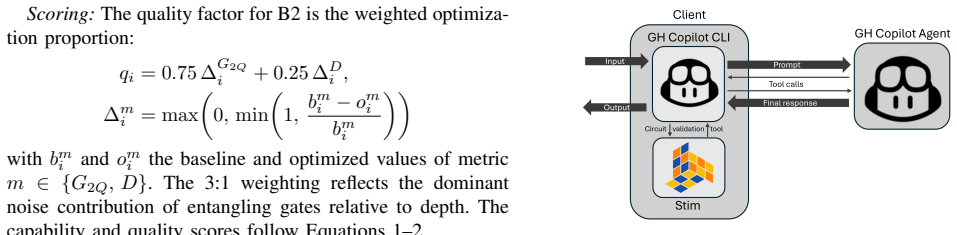

As quantum hardware scales toward fault-tolerant operation, the demand for correct quantum error correction (QEC) circuits far outpaces manual design capacity. AI agents offer a promising path to automating this synthesis, yet no benchmark exists to measure their progress on the specialized task of generating QEC circuits. We introduce StabilizerBench, a benchmark suite of 192 stabilizer codes spanning 12 families, 4-196 qubits, and distances 2-21. The suite is organized into three tasks of increasing difficulty: state preparation circuit generation, circuit optimization under semantic constraints, and fault-tolerant circuit synthesis. Although motivated by QEC, stabilizer circuits exercise core competencies required for general quantum programming, including gate decomposition, qubit routing, and semantics-preserving transformations. They also admit efficient verification via the Gottesman-Knill theorem, which lets the benchmark scale to large codes without the exponential cost of full unitary comparison. We define a unified generator-weighted scoring system with two tiers: a capability score measuring breadth of success and a quality score capturing circuit merit. We also introduce continuous fault-tolerance and optimization metrics that grade error resilience and circuit improvements beyond binary pass/fail. Following the design of classical benchmarks such as SWE-bench, StabilizerBench specifies inputs, verification oracles, and scoring but leaves prompts and agent strategies open. We evaluate three frontier AI agents and find that the benchmark discriminates across models and tasks, with substantial headroom for improvement.
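The abstract mentions a continuous fault-tolerance metric but does not define it here. One simple graded measure, shown below as a hypothetical illustration only, works as follows:

- Inject every single-qubit Pauli fault (X, Y, Z) after each gate.
- Propagate each fault to the end of the circuit.
- Report the fraction of faults whose final weight stays at most t.

This sketch ignores stabilizer equivalence, so it is conservative. `propagate` and `ft_score` are hypothetical names.

```python
from typing import List

Gate = tuple  # ("H", q), ("S", q) or ("CX", control, target)


def propagate(x: List[int], z: List[int], gates: List[Gate]):
    """Push a Pauli error (x/z bit lists; sign ignored) forward through gates."""
    x, z = list(x), list(z)
    for name, *qs in gates:
        if name == "H":
            q = qs[0]
            x[q], z[q] = z[q], x[q]
        elif name == "S":
            z[qs[0]] ^= x[qs[0]]
        elif name == "CX":
            a, b = qs
            x[b] ^= x[a]  # X on the control spreads to the target
            z[a] ^= z[b]  # Z on the target spreads to the control
        else:
            raise ValueError(f"unsupported gate {name}")
    return x, z


def ft_score(circuit: List[Gate], n: int, t: int = 1) -> float:
    """Fraction of single-qubit faults whose propagated weight is at most t."""
    good = total = 0
    for i, (name, *qs) in enumerate(circuit):
        rest = circuit[i + 1:]
        for q in qs:
            for ex, ez in ((1, 0), (1, 1), (0, 1)):  # X, Y, Z fault after gate i
                x, z = [0] * n, [0] * n
                x[q], z[q] = ex, ez
                fx, fz = propagate(x, z, rest)
                weight = sum(1 for a, b in zip(fx, fz) if a or b)
                total += 1
                good += weight <= t
    return good / total if total else 1.0


ghz = [("H", 0), ("CX", 0, 1), ("CX", 1, 2)]
print(ft_score(ghz, 3))  # 11 of 15 faults stay at weight <= 1
```

In the GHZ example, an X fault after the H spreads to XXX. The metric counts that as a failure even though XXX is itself a stabilizer of the GHZ state, which shows where a stricter metric would reduce errors modulo the stabilizer group.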

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces StabilizerBench, a benchmark suite of 192 stabilizer codes spanning 12 families, 4-196 qubits, and distances 2-21. It organizes these into three tasks of increasing difficulty: state preparation circuit generation, circuit optimization under semantic constraints, and fault-tolerant circuit synthesis. The benchmark defines a unified generator-weighted scoring system with capability and quality tiers, plus continuous metrics for fault tolerance and optimization. Verification uses the Gottesman-Knill theorem for scalability. Evaluation of three frontier AI agents shows that the benchmark discriminates across models and tasks and leaves substantial headroom for improvement. Like SWE-bench, the benchmark specifies inputs, verification oracles, and scoring but leaves prompts and agent strategies open.

Significance. If the scoring and metrics hold as faithful proxies, this provides a much-needed standardized framework to measure and drive progress in AI-assisted QEC synthesis, an area where manual design cannot scale with hardware. The efficient Gottesman-Knill verification and open task specification (inputs, oracles, scoring) are strengths that enable reproducible agent comparisons without prescribing prompts or strategies. This could accelerate automation of core quantum programming competencies like gate decomposition and semantic-preserving transformations.

major comments (2)

- [Benchmark Design and Scoring] The generator-weighted scoring system (capability and quality scores) and continuous fault-tolerance metric are load-bearing for the discrimination claim, yet the manuscript provides no explicit mapping or validation of these proxies against physical quantities such as logical error rates under depolarizing or leakage noise models, nor against known optimal circuits. This leaves open whether the observed discrimination and headroom reflect genuine QEC synthesis progress or metric artifacts.

- [Evaluation] The evaluation section reports that the benchmark discriminates across three frontier AI agents and tasks, but lacks details on exact scoring weights (listed as free parameters), full numerical results, or sensitivity analysis. Without these, the central claim that the benchmark 'discriminates ... with substantial headroom' cannot be independently verified.

minor comments (2)

- [Benchmark Construction] The manuscript would benefit from a summary table listing the 12 code families with their qubit ranges, distances, and task assignments to improve readability and allow quick assessment of coverage.

- [Conclusion] Although inspired by SWE-bench, the paper does not state whether the benchmark code, dataset, or verification oracles will be publicly released, which is a standard expectation for reproducibility in benchmark papers.

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which highlight important aspects of verifiability and grounding for StabilizerBench. We address each major comment point by point below and indicate the revisions we will make.


- Referee: [Benchmark Design and Scoring] The generator-weighted scoring system (capability and quality scores) and continuous fault-tolerance metric are load-bearing for the discrimination claim, yet the manuscript provides no explicit mapping or validation of these proxies against physical quantities such as logical error rates under depolarizing or leakage noise models, nor against known optimal circuits. This leaves open whether the observed discrimination and headroom reflect genuine QEC synthesis progress or metric artifacts.

Authors: We designed the generator-weighted scoring and continuous metrics as scalable proxies that use the Gottesman-Knill theorem for efficient verification across large codes, avoiding the exponential cost of full quantum simulation. Direct mapping to physical error rates under specific noise models lies outside the benchmark's scope. It would require noisy sampling and decoding for every submitted circuit, and leakage models go beyond the Pauli noise that stabilizer simulation handles efficiently. The added cost would undermine the benchmark's purpose. In the revised manuscript we will add a dedicated subsection justifying the metric choices with references to standard QEC practices. We will also include explicit comparisons against known optimal circuits for a subset of small codes (distances 2-3) where enumeration is possible. This will provide evidence that the observed discrimination tracks genuine circuit quality rather than artifacts. Revision: partial.
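Exhaustive search for known optimal circuits is feasible only for tiny instances. As a minimal sketch, assume the comparison is restricted to CNOT-only (linear reversible) circuits, such as the CNOT network of a CSS encoder. A breadth-first search over invertible GF(2) matrices then returns the minimum CNOT count for a target map. `min_cnot_count` is a hypothetical helper, not the paper's procedure. The state space grows with the number of invertible n x n binary matrices, so this scales only to a handful of qubits.

```python
from collections import deque
from typing import Tuple

Matrix = Tuple[int, ...]  # row i is a bitmask: which inputs wire i currently carries (XOR)


def min_cnot_count(target: Matrix) -> int:
    """Minimum number of CNOTs mapping the identity to `target` (BFS)."""
    n = len(target)
    start = tuple(1 << i for i in range(n))
    dist = {start: 0}
    queue = deque([start])
    while queue:
        m = queue.popleft()
        if m == target:
            return dist[m]
        for c in range(n):
            for t in range(n):
                if c == t:
                    continue
                rows = list(m)
                rows[t] ^= rows[c]  # CNOT(c, t): target wire picks up the control's parity
                nxt = tuple(rows)
                if nxt not in dist:
                    dist[nxt] = dist[m] + 1
                    queue.append(nxt)
    raise ValueError("target is not an invertible GF(2) matrix")


print(min_cnot_count((0b10, 0b01)))  # 3: a SWAP needs three CNOTs
```

Because BFS visits states in order of increasing gate count, the first time it reaches the target gives a provably minimal CNOT count. That is the kind of ground truth an agent's circuit could be compared against.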

- Referee: [Evaluation] The evaluation section reports that the benchmark discriminates across three frontier AI agents and tasks, but lacks details on exact scoring weights (listed as free parameters), full numerical results, or sensitivity analysis. Without these, the central claim that the benchmark 'discriminates ... with substantial headroom' cannot be independently verified.

Authors: We agree that the current presentation lacks sufficient detail for independent verification. The scoring weights are free parameters, and we will explicitly document the exact values used. In revision we will add an appendix with the complete numerical results for all three agents across all tasks and metrics. The appendix will also include a sensitivity analysis that varies the weights to demonstrate the robustness of the discrimination and headroom findings. Revision: yes.
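One way to run the promised sensitivity analysis is to perturb the weights at random and record how often the agent ranking survives. The sketch below makes two assumptions:

- Each code has a pass/fail outcome per agent.
- Each weight receives an independent multiplicative perturbation drawn uniformly from [1 - spread, 1 + spread].

The perturbation model and all names are hypothetical. The inputs in the usage lines are toy values, not results from the paper.

```python
import random
from typing import Dict, List, Sequence


def weighted_capability(solved: Sequence[bool], weights: Sequence[float]) -> float:
    """Weighted fraction of codes solved."""
    total = sum(weights)
    return sum(w for s, w in zip(solved, weights) if s) / total


def ranking(agents: Dict[str, List[bool]], weights: Sequence[float]) -> List[str]:
    """Agent names sorted from highest to lowest weighted capability."""
    return sorted(agents, key=lambda a: weighted_capability(agents[a], weights),
                  reverse=True)


def rank_stability(agents: Dict[str, List[bool]], base_weights: Sequence[float],
                   trials: int = 1000, spread: float = 0.5, seed: int = 0) -> float:
    """Fraction of random weight perturbations that leave the ranking unchanged."""
    rng = random.Random(seed)
    reference = ranking(agents, base_weights)
    unchanged = 0
    for _ in range(trials):
        weights = [w * rng.uniform(1 - spread, 1 + spread) for w in base_weights]
        unchanged += ranking(agents, weights) == reference
    return unchanged / trials


# Toy inputs (not from the paper): A solves a superset of B, which solves a superset of C.
toy = {"A": [True, True, True], "B": [True, True, False], "C": [True, False, False]}
print(rank_stability(toy, [6.0, 8.0, 8.0]))  # 1.0: strict supersets always rank higher
```

Rankings between agents whose solved sets are nested cannot flip under positive weights. The analysis is therefore informative mainly when agents succeed on different subsets of codes.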

Circularity Check

No circularity; benchmark and evaluation are externally grounded


The paper defines StabilizerBench by enumerating 192 explicit stabilizer codes, three tasks, generator-weighted capability/quality scores, and continuous fault-tolerance metrics. All of these are specified independently of any AI model outputs or fitted parameters. Verification is delegated to the external Gottesman-Knill theorem, which supplies an efficient oracle without reference to the benchmark's scoring rules. The reported discrimination across three frontier agents is an empirical observation obtained by running those agents on the fixed benchmark; it does not reduce to a self-referential fit or to any self-citation chain. No uniqueness theorems, ansatzes, or renamings of prior results are invoked. The construction is therefore self-contained, with SWE-bench serving only as a design precedent rather than as a source of the reported results.

Axiom & Free-Parameter Ledger

free parameters (1)

- Generator weights in the scoring system

axioms (1)

- Gottesman-Knill theorem (standard math): enables efficient classical simulation and verification of stabilizer circuits

Reference graph

Works this paper leans on

- [1] P. W. Shor, “Polynomial-time algorithms for prime factorization and discrete logarithms on a quantum computer,” vol. 26, no. 5, 1997, pp. 1484–1509.
- [2] A. Aspuru-Guzik, A. D. Dutoi, P. J. Love, and M. Head-Gordon, “Simulated quantum computation of molecular energies,” Science, vol. 309, no. 5741, pp. 1704–1707, 2005.
- [3] Y. Huang and M. Martonosi, “Statistical assertions for validating patterns and finding bugs in quantum programs,” in Proceedings of the 46th International Symposium on Computer Architecture (ISCA), 2019.
- [4] N. Ma, J. Zhao, F. Khomh, S. Ali, and H. Li, “QMon: Monitoring the execution of quantum circuits with mid-circuit measurement and reset,” arXiv preprint arXiv:2512.13422, 2025.
- [5] J. Luo, P. Zhao, Z. Miao, S. Lan, and J. Zhao, “A comprehensive study of bug fixes in quantum programs,” arXiv preprint arXiv:2201.08662, 2022.
- [6] N. C. L. Ramalho, H. A. de Souza, and M. L. Chaim, “Testing and debugging quantum programs: The road to 2030,” arXiv preprint arXiv:2405.09178, 2024.
- [7] A. W. Cross, A. Javadi-Abhari, T. Alexander, N. de Beaudrap, L. S. Bishop, S. Heidel, C. A. Ryan, P. Sivarajah, J. Smolin, J. M. Gambetta, and B. R. Johnson, “OpenQASM 3: A broader and deeper quantum assembly language,” ACM Transactions on Quantum Computing, vol. 3, no. 3, pp. 1–50, 2022.
- [8] K. M. Svore, A. Geller, M. Troyer, J. Azariah, C. Granade, B. Heim, V. Kliuchnikov, M. Mykhailova, A. Paz, and M. Roetteler, “Q#: Enabling scalable quantum computing and development with a high-level DSL,” Proceedings of the Real World Domain Specific Languages Workshop (RWDSL), 2018.
- [9] Quantinuum, “Guppy: A pythonic quantum programming language,” https://github.com/CQCL/guppylang, 2024, accessed: 2026-03-01.
- [10] F. Voichick, L. Li, R. Rand, and M. Hicks, “Qunity: A unified language for quantum and classical computing,” in Proceedings of the ACM on Programming Languages (POPL), vol. 7, 2023.
- [11] C. Yuan and M. Carbin, “Tower: Data representations in a quantum programming language,” Proceedings of the ACM on Programming Languages (POPL), vol. 8, 2024.
- [12] C. Gidney, “Stim: A fast stabilizer circuit simulator,” Quantum, vol. 5, p. 497, 2021.
Footnote 1: https://github.com/uw-math-ai/quantum-ai
- [13] C. E. Jimenez, J. Yang, A. Wettig, S. Yao, K. Pei, O. Press, and K. Narasimhan, “SWE-bench: Can language models resolve real-world GitHub issues?” arXiv preprint arXiv:2310.06770, 2024.
- [14] M. Chen, J. Tworek, H. Jun, Q. Yuan, H. P. de Oliveira Pinto, J. Kaplan, H. Edwards, Y. Burda, N. Joseph, G. Brockman et al., “Evaluating large language models trained on code,” arXiv preprint arXiv:2107.03374, 2021 (introduces the HumanEval benchmark).
- [15] S. Vishwakarma, F. Harkins, S. Golecha, V. S. Bajpe, N. Dupuis, L. Buratti, D. Kremer, A. Mezzacapo, and F. Tacchino, “Qiskit HumanEval: An evaluation benchmark for quantum code generative models,” arXiv preprint arXiv:2406.14712, 2024.
- [16] X. Guo, M. Wang, and J. Zhao, “QuanBench: Benchmarking quantum code generation with large language models,” arXiv preprint arXiv:2510.16779, 2025, accepted at ASE 2025.
- [17] A. Basit, M. Shao, M. H. Asif, N. Innan, M. Kashif, A. Marchisio, and M. Shafique, “QHackBench: Benchmarking large language models for quantum code generation using PennyLane hackathon challenges,” arXiv preprint arXiv:2506.20008, 2025, to appear at IEEE QAI 2025.
- [18] T. Mikuriya, T. Ishigaki, M. Kawarada, S. Minami, T. Kadowaki, Y. Suzuki, S. Naito, S. Takata, T. Kato, T. Basseda, R. Yamada, and H. Takamura, “QCoder benchmark: Bridging language generation and quantum hardware through simulator-based feedback,” arXiv preprint arXiv:2510.26101, 2025, accepted at INLG 2025.
- [19] E. Knill and R. Laflamme, “Theory of quantum error-correcting codes,” Physical Review A, vol. 55, no. 2, p. 900, 1997.
- [20] B. M. Terhal, “Quantum error correction for quantum memories,” Reviews of Modern Physics, vol. 87, no. 2, p. 307, 2015.
- [21] D. Gottesman, “The Heisenberg representation of quantum computers,” arXiv preprint quant-ph/9807006, 1998.
- [22] S. Aaronson and D. Gottesman, “Improved simulation of stabilizer circuits,” Physical Review A, vol. 70, no. 5, p. 052328, 2004.
- [23] R. Cleve and D. Gottesman, “Efficient computations of encodings for quantum error correction,” Physical Review A, vol. 56, no. 1, p. 76, 1997.
- [24] T. Peham, L. Schmid, L. Berent, M. Müller, and R. Wille, “Automated synthesis of fault-tolerant state preparation circuits for quantum error-correction codes,” PRX Quantum, vol. 6, no. 2, May 2025. [Online]. Available: http://dx.doi.org/10.1103/PRXQuantum.6.020330
- [25] M. A. Nielsen and I. L. Chuang, Quantum Computation and Quantum Information: 10th Anniversary Edition. Cambridge University Press, 2010.
- [26] D. Gottesman, “Stabilizer codes and quantum error correction,” Ph.D. dissertation, California Institute of Technology, Pasadena, California, 1997.
- [27] R. Chao and B. W. Reichardt, “Quantum error correction with only two extra qubits,” Phys. Rev. Lett., vol. 121, p. 050502, Aug 2018. [Online]. Available: https://link.aps.org/doi/10.1103/PhysRevLett.121.050502
- [28] C. Chamberland and M. E. Beverland, “Flag fault-tolerant error correction with arbitrary distance codes,” Quantum, vol. 2, p. 53, Feb. 2018. [Online]. Available: https://doi.org/10.22331/q-2018-02-08-53
- [29] P. Prabhu and B. W. Reichardt, “Fault-tolerant syndrome extraction and cat state preparation with fewer qubits,” Quantum, vol. 7, p. 1154, Oct. 2023. [Online]. Available: http://dx.doi.org/10.22331/q-2023-10-24-1154
- [30] J. Austin, A. Odena, M. Nye, M. Bosma, H. Michalewski, D. Dohan, E. Jiang, C. Cai, M. Terry, Q. Le, and C. Sutton, “Program synthesis with large language models,” arXiv preprint arXiv:2108.07732, 2021.
- [31] T. Rohe, F. Harjes Ruiloba, S. Egger, S. von Beck, J. Stein, and C. Linnhoff-Popien, “Quantum computer benchmarking: An explorative systematic literature review,” arXiv preprint arXiv:2509.03078, 2025.
- [32] Y. Tomita and K. M. Svore, “Low-distance surface codes under realistic quantum noise,” Physical Review A, vol. 90, no. 6, p. 062320, 2014.
- [33] H. Bombin and M. A. Martin-Delgado, “Topological quantum distillation,” Physical Review Letters, vol. 97, no. 18, p. 180501, 2006.
- [34] A. J. Landahl, J. T. Anderson, and P. R. Rice, “Fault-tolerant quantum computing with color codes,” arXiv preprint arXiv:1108.5738, 2011.
- [35] C. N. Self, M. Benedetti, and D. Amaro, “Protecting expressive circuits with a quantum error detection code,” Nature Physics, vol. 20, no. 2, pp. 219–224, Jan. 2024. [Online]. Available: http://dx.doi.org/10.1038/s41567-023-02282-2
- [36] H. Goto, “High-performance fault-tolerant quantum computing with many-hypercube codes,” Science Advances, vol. 10, p. eadp6388, 2024.
- [37] S. Bravyi, A. W. Cross, J. M. Gambetta, D. Maslov, P. Rall, and T. J. Yoder, “High-threshold and low-overhead fault-tolerant quantum memory,” Nature, vol. 627, pp. 778–782, 2024.
- [38] R. Laflamme, C. Miquel, J. P. Paz, and W. H. Zurek, “Perfect quantum error correcting code,” Physical Review Letters, vol. 77, no. 1, p. 198, 1996.
- [39] A. M. Steane, “Multiple-particle interference and quantum error correction,” Proceedings of the Royal Society A, vol. 452, no. 1954, pp. 2551–2577, 1996.
- [40] P. W. Shor, “Scheme for reducing decoherence in quantum computer memory,” Physical Review A, vol. 52, no. 4, p. R2493, 1995.
- [41] A. M. Steane, “Quantum Reed-Muller codes,” IEEE Transactions on Information Theory, vol. 45, no. 5, pp. 1701–1703, 1999.
- [42] E. Knill, “Quantum computing with realistically noisy devices,” Nature, vol. 434, pp. 39–44, 2005.
- [43] A. Paetznick, M. P. da Silva, C. Ryan-Anderson, J. M. Bello-Rivas, C. H. Camara, J. Craft, A. M. Dalzell, A. Eickbusch, C. Gidney, M. Graydon et al., “Demonstration of logical qubits and repeated error correction with better-than-physical error rates,” arXiv preprint arXiv:2404.02280, 2024.
- [44] J.-P. Tillich and G. Zémor, “Quantum LDPC codes with positive rate and minimum distance proportional to the square root of the blocklength,” IEEE Transactions on Information Theory, vol. 60, no. 2, pp. 1193–1202, 2014.
- [45] M. B. Hastings, J. Haah, and R. O’Donnell, “Fiber bundle codes: Breaking the n^{1/2} polylog(n) barrier for quantum LDPC codes,” in Proceedings of the 53rd Annual ACM Symposium on Theory of Computing (STOC), 2021, pp. 1276–1288.
- [46] P. Panteleev and G. Kalachev, “Asymptotically good quantum and locally testable classical LDPC codes,” in Proceedings of the 54th Annual ACM Symposium on Theory of Computing (STOC), 2022, pp. 375–388.
