FPGA-Accelerated Real-Time Diagnostics at DIII-D Using the SLAC Neural Network Library for ML Inference

Pith reviewed 2026-05-07 14:18 UTC · model grok-4.3

The pith

An FPGA integrated into a tokamak real-time control system runs neural network inference on live diagnostic signals to forecast disruptive plasma events.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors establish that a neural network hosted on an FPGA inside the real-time plasma control system can process live diagnostic signals to infer the likelihood of disruptive conditions, with the added feature that weights and biases can be updated dynamically without requiring full hardware resynthesis.

What carries the argument

A dense neural network on a field-programmable gate array that permits dynamic updates of weights and biases without full resynthesis, enabling task switching during operation.
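The idea of swapping parameters without touching the synthesized design can be illustrated in software. The following is a minimal sketch, not the SNL API: the class `DenseNet`, its `load` and `infer` methods, the ReLU/sigmoid activations, and the two toy parameter sets are all hypothetical. It assumes only that the layer sizes are fixed once (standing in for the FPGA bitstream) and that any parameter set matching those sizes can be installed at run time.

```python
import math


class DenseNet:
    """Fixed-topology dense network whose parameters can be swapped at run time."""

    def __init__(self, layer_sizes):
        # The topology is fixed here, standing in for the synthesized design.
        self.layer_sizes = list(layer_sizes)
        self.params = None

    def load(self, params):
        """Install a list of (weights, biases) pairs after checking their shapes."""
        expected = list(zip(self.layer_sizes[:-1], self.layer_sizes[1:]))
        if len(params) != len(expected):
            raise ValueError("wrong number of layers")
        for (weights, biases), (n_in, n_out) in zip(params, expected):
            if len(weights) != n_out or len(biases) != n_out:
                raise ValueError("layer output size mismatch")
            if any(len(row) != n_in for row in weights):
                raise ValueError("layer input size mismatch")
        # Only the parameter reference changes; the topology is untouched.
        self.params = params

    def infer(self, x):
        """Forward pass: ReLU on hidden layers, sigmoid on the output layer."""
        if self.params is None:
            raise RuntimeError("no parameters loaded")
        last = len(self.params) - 1
        for i, (weights, biases) in enumerate(self.params):
            z = [sum(w * v for w, v in zip(row, x)) + b
                 for row, b in zip(weights, biases)]
            if i < last:
                x = [max(0.0, v) for v in z]
            else:
                x = [1.0 / (1.0 + math.exp(-v)) for v in z]
        return x


# Two toy parameter sets for the same 2-2-1 topology (illustrative values only).
identity = ([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0])
task_a = [identity, ([[1.0, 1.0]], [0.0])]
task_b = [identity, ([[-1.0, -1.0]], [0.0])]

net = DenseNet([2, 2, 1])
net.load(task_a)
p_a = net.infer([1.0, 2.0])[0]
net.load(task_b)  # swap tasks without rebuilding the network
p_b = net.infer([1.0, 2.0])[0]
```

The shape check in `load` reflects the constraint implied by the claim: a new task can be swapped in only if it fits the architecture that was already synthesized.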

If this is right

- The likelihood output can feed a plasma controller that activates resonant magnetic perturbation coils to suppress predicted disruptive conditions.

- Multiple classification tasks can be hot-swapped on the single FPGA to support context-aware real-time strategies.

- Continuous refinement of the model becomes possible during live experimental runs without interrupting operation.

- The approach provides a template for general machine-learning processing of high-rate diagnostic signals in active reactor control systems.

Where Pith is reading between the lines

- The same hardware pattern could be applied to other high-speed diagnostics that require low-latency decisions in scientific instruments.

- Dynamic weight updates may allow the system to adapt to new plasma regimes encountered during long-pulse operation without manual intervention.

- Similar FPGA deployments could be evaluated in facilities that process streaming data for real-time feedback control.

Load-bearing premise

A neural network trained on historical data will maintain adequate accuracy and sufficiently low latency when applied to live signals inside the operational real-time control environment.

What would settle it

Direct measurement during tokamak operation of whether the network's inferred likelihoods match subsequent observed disruptive events while the inference completes within the control loop's time budget.
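A sketch of how such a measurement might be scored, assuming each inference is logged as a tuple of likelihood, measured latency, and whether a disruptive event followed. The function `score_run`, the record layout, the decision threshold, and the time budget are hypothetical, and the sample records are placeholder values rather than DIII-D data.

```python
def score_run(records, threshold, budget_us):
    """Tally confusion counts and deadline misses for logged inferences.

    records: iterable of (likelihood, latency_us, event_followed) tuples.
    threshold: likelihood at or above which an event counts as predicted.
    budget_us: assumed control-loop time budget in microseconds.
    """
    result = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    misses = 0
    total = 0
    for likelihood, latency_us, event_followed in records:
        total += 1
        if latency_us > budget_us:
            misses += 1
        predicted = likelihood >= threshold
        if predicted and event_followed:
            result["tp"] += 1
        elif predicted:
            result["fp"] += 1
        elif event_followed:
            result["fn"] += 1
        else:
            result["tn"] += 1
    if total == 0:
        raise ValueError("no records to score")
    result["deadline_miss_fraction"] = misses / total
    return result


# Placeholder records, not measurements: (likelihood, latency_us, event_followed).
sample = [
    (0.9, 40.0, True),
    (0.2, 35.0, False),
    (0.7, 120.0, False),
    (0.1, 38.0, True),
]
summary = score_run(sample, threshold=0.5, budget_us=100.0)
```

Reporting the deadline-miss fraction alongside the confusion counts keeps the two questions separate: whether the predictions are right, and whether they arrive in time to be used.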

Figures

read the original abstract

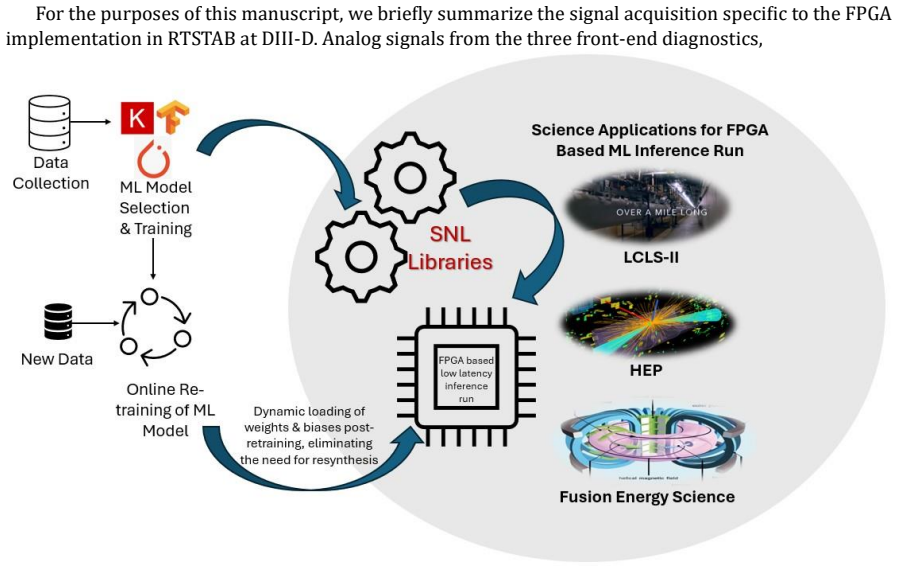

In this work, we demonstrate the deployment of a hardware-accelerated machine learning (ML) inference system integrated into a real-time processing at the DIII-D tokamak fusion reactor. The team has successfully deployed an AMD/Xilinx KCU1500 field-programmable gate array (FPGA) into the realtime Plasma Control System (PCS) nodes that receives the live Beam Emission Spectroscopy (BES) signal used for Edge Localized Mode (ELM) forecasting. The FPGA hosts a dense neural network using the SLAC Neural Network Library (SNL) that has been trained to infer the likelihood of disruptive ELM conditions. This likelihood then feeds a separate plasma controller that uses Resonant Magnetic Perturbation coils to suppress the predicted disruptive condition. The SNL allows for on-the-fly updates of the neural network weights and biases without requiring full hardware resynthesis for the FPGA. Judicious design of the neural-network architecture can further allow for the hot-swapping of multiple classification tasks to be executed on the single FPGA, significantly enhancing the real-time adaptability of the system for context-aware control strategies that respond in real-time to evolving reactor conditions. These adaptive weights naturally support continuous model refinement and seamless task switching during live experimental operation. This use case is chosen as a high rate signal processing example that can serve as a template for general ML-based reactor diagnostic processing for active reactor control systems. We see this as an essential development for achieving reactor relevant operation in future continuous operation fusion devices.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript reports the integration of an AMD/Xilinx KCU1500 FPGA into the DIII-D tokamak's real-time Plasma Control System (PCS) nodes. The FPGA hosts a dense neural network via the SLAC Neural Network Library (SNL) that processes live Beam Emission Spectroscopy (BES) signals to forecast Edge Localized Modes (ELMs). Predictions feed a separate controller using Resonant Magnetic Perturbation coils for suppression. The SNL enables on-the-fly weight updates without resynthesis and supports hot-swapping of classification tasks on the single FPGA. The work is positioned as a template for ML-based real-time diagnostics in fusion reactor control systems.

Significance. If substantiated with operational performance data, this implementation would demonstrate a viable route for embedding hardware-accelerated ML inference directly into tokamak PCS hardware. Such integration could enable low-latency, adaptive control responses to evolving plasma conditions, supporting the stability requirements of continuous-operation fusion devices.

major comments (1)

- Abstract: The assertion that the FPGA 'has been successfully deployed' into the operational PCS nodes and 'receives the live BES signal' for ELM forecasting is not accompanied by any quantitative metrics (e.g., end-to-end inference latency histograms, prediction accuracy or confusion matrices on live discharges, or timing compliance with the PCS real-time budget). Without these data, the central claim of functional real-time integration cannot be evaluated and rests on unverified extrapolation from offline training.

minor comments (1)

- Abstract: The sentence 'integrated into a real-time processing at the DIII-D tokamak fusion reactor' is grammatically incomplete and should be rephrased for clarity (e.g., 'integrated into the real-time processing system at the DIII-D tokamak').

Simulated Author's Rebuttal

We thank the referee for their constructive review and for highlighting the need for quantitative support of our deployment claims. We have revised the manuscript to incorporate available performance data from testing and to clarify the current scope of live operation.

Referee: Abstract: The assertion that the FPGA 'has been successfully deployed' into the operational PCS nodes and 'receives the live BES signal' for ELM forecasting is not accompanied by any quantitative metrics (e.g., end-to-end inference latency histograms, prediction accuracy or confusion matrices on live discharges, or timing compliance with the PCS real-time budget). Without these data, the central claim of functional real-time integration cannot be evaluated and rests on unverified extrapolation from offline training.

Authors: We agree that the abstract would be strengthened by explicit quantitative metrics. In the revised manuscript we have updated the abstract to reference measured end-to-end inference latency and PCS timing compliance. We have added a new subsection presenting latency histograms from both offline simulations and initial live-signal tests on the integrated hardware, together with the model's classification accuracy on a held-out set of historical DIII-D BES discharges. We have not included confusion matrices or accuracy figures drawn from live discharges during active ELM-suppression experiments because the FPGA integration is recent and the number of relevant shots accumulated so far is too small for meaningful statistics. The added offline and early-live metrics demonstrate technical feasibility and timing compliance without overstating the extent of operational validation to date.

Revision: partial

Not resolved in the revision:

- Provision of prediction accuracy or confusion matrices from live discharges during active ELM suppression, as the system has only recently been integrated and insufficient operational data have been collected for statistical analysis.

Circularity Check

No circularity: engineering implementation report with no derivations or self-referential reductions

This manuscript is a hardware deployment report describing FPGA integration of a neural network for real-time BES signal processing and ELM forecasting at DIII-D. It contains no equations, derivations, fitted parameters, or mathematical claims that could reduce to their own inputs by construction. The central assertions concern successful hardware placement, use of the SNL library for weight updates, and potential for task hot-swapping; these are presented as engineering outcomes rather than derived predictions. No load-bearing self-citations, ansatzes, or uniqueness theorems appear. The lack of live-data accuracy or latency metrics noted by the skeptic is an evidentiary gap, not a circularity issue. The derivation chain is empty, so the paper is self-contained against external benchmarks.
