Recognition: unknown

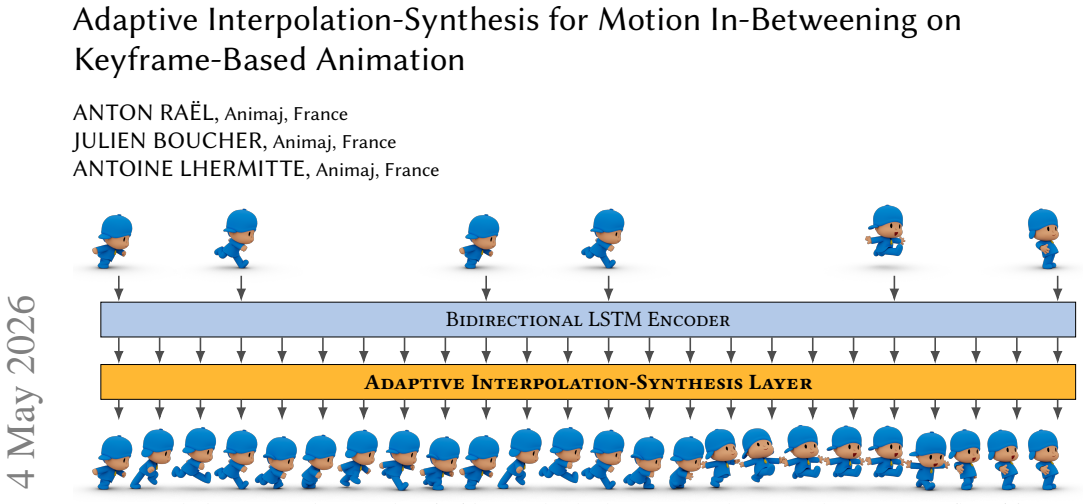

Adaptive Interpolation-Synthesis for Motion In-Betweening on Keyframe-Based Animation

Pith reviewed 2026-05-08 02:03 UTC · model grok-4.3

The pith

The Adaptive Interpolation-Synthesis layer dynamically balances interpolation and pose synthesis to align with professional keyframe workflows.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central discovery is that an Adaptive Interpolation-Synthesis layer, which dynamically balances learned interpolation against direct pose synthesis, combined with a domain-based input keypose schedule, produces in-between frames that respect the stylistic and temporal characteristics of keyframe-based production data. This alignment removes the mismatch between training distributions and actual animator practice, yielding measurable gains in both accuracy and speed.

What carries the argument

The Adaptive Interpolation-Synthesis (AIS) layer, which dynamically balances learned interpolation and direct pose synthesis according to the input keyposes.

Load-bearing premise

The method will maintain stylistic consistency and performance when applied to keyframe data from studios other than the authors' without further tuning.

What would settle it

Running the trained model on an independent set of production keyframe sequences from a different studio, without retraining or schedule adjustment, and measuring whether both motion quality and task-time reduction remain comparable to the reported results.

Figures

read the original abstract

Motion in-betweening is one of the most artistically demanding and time consuming stages of 3D animation, where the expressivity and rhythm of motion are defined. The level of creative control it requires makes it a major production bottleneck, underscoring the need for intelligent tools that assist animators in this process. Although recent deep learning approaches have achieved strong results in motion synthesis and in-betweening, they assume data characteristics, motion styles, and problem formulations that diverge from professional animation workflows. To bridge this gap, we propose a method explicitly aligned with the constraints of motion in-betweening for keyframe-based animation in production environments. At its core, the Adaptive Interpolation-Synthesis (AIS) layer mirrors the animator's creative process by dynamically balancing learned interpolation and direct pose synthesis. In addition, a domain-based input keypose schedule reflects the distribution of production data, improving stylistic consistency and alignment between training and real-world usage. Our method achieves state-of-the-art performance on production data; when integrated into Autodesk Maya, it enables animators to complete in-betweening tasks with a 3.5x speedup.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes an Adaptive Interpolation-Synthesis (AIS) layer for motion in-betweening that dynamically balances learned interpolation with direct pose synthesis to better align with keyframe-based professional animation workflows, together with a domain-based input keypose schedule that reflects production data distributions. It claims state-of-the-art performance on the authors' production data and a 3.5x speedup when integrated into Autodesk Maya.

Significance. If the empirical claims hold under rigorous evaluation, the approach could address a practical gap between academic motion synthesis methods and production constraints, offering animators more controllable tools that preserve stylistic consistency and reduce in-betweening time.

major comments (2)

- [Abstract] Abstract: the central claims of 'state-of-the-art performance on production data' and '3.5x speedup' are presented without any supporting quantitative metrics (pose error, foot-skate, stylistic distance), baselines, ablation studies, user-study protocol, or timing methodology, rendering the headline result unverifiable from the manuscript.

- [Methods (implied by abstract description)] The description of the AIS layer and domain-based keypose schedule provides no equations, pseudocode, or implementation details sufficient to reproduce the adaptive balancing mechanism or the schedule construction, which are load-bearing for the claimed alignment with production workflows.

minor comments (1)

- [Abstract] Abstract: 'production data' is referenced repeatedly without characterizing its size, diversity, motion styles, or how it differs from public motion-capture datasets.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive review. The comments highlight important areas for improving verifiability and reproducibility. We address each major comment below and will incorporate revisions to strengthen the manuscript.

read point-by-point responses

-

Referee: [Abstract] Abstract: the central claims of 'state-of-the-art performance on production data' and '3.5x speedup' are presented without any supporting quantitative metrics (pose error, foot-skate, stylistic distance), baselines, ablation studies, user-study protocol, or timing methodology, rendering the headline result unverifiable from the manuscript.

Authors: We agree that the abstract, as currently written, presents the headline claims without inline quantitative support, which limits immediate verifiability. The full manuscript contains these details in Section 4 (quantitative comparisons with pose error, foot-skate, and stylistic distance metrics against baselines), Section 4.3 (user-study protocol), and Section 5 (Maya integration timing methodology with explicit measurement protocol). To resolve the concern, we will revise the abstract to include the key numerical results (e.g., specific error reductions and the 3.5x factor with a brief methodology note) while preserving its length constraints. revision: yes

-

Referee: [Methods (implied by abstract description)] The description of the AIS layer and domain-based keypose schedule provides no equations, pseudocode, or implementation details sufficient to reproduce the adaptive balancing mechanism or the schedule construction, which are load-bearing for the claimed alignment with production workflows.

Authors: We acknowledge that the current Section 3 description of the AIS layer and domain-based keypose schedule is primarily textual and lacks the mathematical and algorithmic specificity needed for full reproducibility. We will add the governing equations for the adaptive interpolation-synthesis balancing (including the learned weight computation and conditioning on keypose context), along with pseudocode for constructing the domain-aligned keypose schedule from production data statistics. These additions will be placed in the main text with an expanded methods subsection. revision: yes

Circularity Check

No circularity in derivation chain

full rationale

The provided abstract and context contain no equations, no claimed first-principles derivations, and no self-referential definitions or fitted inputs presented as predictions. The core claims are empirical performance statements on production data and a Maya integration speedup, framed as outcomes rather than tautological redefinitions. No load-bearing steps reduce to self-citation chains, ansatzes smuggled via prior work, or renaming of known results. The method description (AIS layer balancing interpolation and synthesis, domain-based keypose schedule) is presented as a design choice aligned with workflows, without evidence that any result is forced by construction from its own inputs. This is the expected non-finding for a methods paper whose central assertions are empirical rather than deductive.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Recurrent transition networks for character locomotion , year =

Harvey, F\'. Recurrent transition networks for character locomotion , year =. SIGGRAPH Asia 2018 Technical Briefs , articleno =. doi:10.1145/3283254.3283277 , abstract =

-

[2]

Robust motion in-betweening , year =

Harvey, F\'. Robust motion in-betweening , year =. doi:10.1145/3386569.3392480 , journal =

-

[3]

Tang, Xiangjun and Wang, He and Hu, Bo and Gong, Xu and Yi, Ruifan and Kou, Qilong and Jin, Xiaogang , title =. 2022 , issue_date =. doi:10.1145/3528223.3530090 , journal =

-

[4]

and Valkanas, Antonios and Harvey, Félix G

Oreshkin, Boris N. and Valkanas, Antonios and Harvey, Félix G. and Ménard, Louis-Simon and Bocquelet, Florent and Coates, Mark J. , journal =. Motion In-Betweening via Deep -Interpolator , year =

-

[5]

and Aggarwal, Madhav and Velusamy, R

Sridhar, Pavithra and Aananth, V. and Aggarwal, Madhav and Velusamy, R. Leela , title =. 2022 , isbn =. doi:10.1007/978-3-031-27066-6_21 , booktitle =

-

[6]

Qin, Jia and Zheng, Youyi and Zhou, Kun , title =. 2022 , issue_date =. doi:10.1145/3550454.3555454 , journal =

-

[7]

Cohan, Setareh and Tevet, Guy and Reda, Daniele and Peng, Xue Bin and van de Panne, Michiel , title =. 2024 , isbn =. doi:10.1145/3641519.3657414 , booktitle =

-

[8]

and Guay, Martin and Buhmann, Jakob , title =

Studer, Justin and Agrawal, Dhruv and Borer, Dominik and Sadat, Seyedmorteza and Sumner, Robert W. and Guay, Martin and Buhmann, Jakob , title =. 2024 , isbn =. doi:10.1145/3677388.3696338 , booktitle =

-

[9]

AnyMoLe: Any Character Motion In-betweening Leveraging Video Diffusion Models , year=

Yun, Kwan and Hong, Seokhyeon and Kim, Chaelin and Noh, Junyong , booktitle=. AnyMoLe: Any Character Motion In-betweening Leveraging Video Diffusion Models , year=

-

[10]

Akhoundi, Elly and Ling, Hung Yu and Deshmukh, Anup and Butepage, Judith , year =. Silk: smooth interpolation framework for motion in-betweening a simplified computational approach , rights =. doi:10.1109/CVPRW67362.2025.00273 , booktitle =

-

[11]

Computer Graphics Forum , author =

Generative Motion Infilling from Imprecisely Timed Keyframes , volume =. Computer Graphics Forum , author =. 2025 , month = may, pages =. doi:10.1111/cgf.70060 , abstractnote =

-

[12]

Motion in- betweening with phase manifolds,

Starke, Paul and Starke, Sebastian and Komura, Taku and Steinicke, Frank , title =. 2023 , issue_date =. doi:10.1145/3606921 , journal =

-

[13]

MMM: Generative Masked Motion Model , year =

Pinyoanuntapong, Ekkasit and Wang, Pu and Lee, Minwoo and Chen, Chen , booktitle =. MMM: Generative Masked Motion Model , year =

-

[14]

Proceedings of the ACM SIGGRAPH/Eurographics Symposium on Computer Animation , pages =

Hong, Seokhyeon and Kim, Haemin and Cho, Kyungmin and Noh, Junyong , title =. Proceedings of the ACM SIGGRAPH/Eurographics Symposium on Computer Animation , pages =. 2024 , publisher =. doi:10.1111/cgf.15171 , abstract =

-

[15]

Computer Animation and Virtual Worlds , url =

Peng, Jiawen and Liu, Zhuoran and Lin, Jingzhong and He, Gaoqi , title =. Computer Animation and Virtual Worlds , url =. 2025 , month = may, volume =. doi:10.1002/cav.70040 , issn =

-

[16]

In: 2023 IEEE/CVF Conference on Com- puter Vision and Pattern Recognition (CVPR), pp

Mo, Clinton A. and Hu, Kun and Long, Chengjiang and Wang, Zhiyong , title =. 2023 , isbn =. doi:10.1109/CVPR52729.2023.01335 , booktitle =

-

[17]

Conditional motion in-betweening , volume =

Kim, Jihoon and Byun, Taehyun and Shin, Seungyoun and Won, Jungdam and Choi, Sungjoon , year =. Conditional motion in-betweening , volume =. doi:10.1016/j.patcog.2022.108894 , journal =

-

[18]

Martinez, Julieta and Black, Michael J. and Romero, Javier , title =. 2017 , isbn =. doi:10.1109/CVPR.2017.497 , booktitle =

-

[19]

Spherical Linear Interpolation and Bézier Curves , volume =

Jafari, Mehdi and Molaei, Habib , year =. Spherical Linear Interpolation and Bézier Curves , volume =

-

[20]

Rose, Charles and Guenter, Brian and Bodenheimer, Bobby and Cohen, Michael F. , title =. 1996 , isbn =. doi:10.1145/237170.237229 , booktitle =

-

[21]

Mukai, Tomohiko and Kuriyama, Shigeru , title =. 2005 , issue_date =. doi:10.1145/1073204.1073313 , journal =

-

[22]

Yi Zhou and Jingwan Lu and Connelly Barnes and Jimei Yang and Sitao Xiang and Hao Li , title =. CoRR , volume =. 2020 , url =. 2005.08891 , timestamp =

-

[23]

Single-shot motion completion with transformer

Yinglin Duan and Tianyang Shi and Zhengxia Zou and Yenan Lin and Zhehui Qian and Bohan Zhang and Yi Yuan , title =. CoRR , volume =. 2021 , url =. 2103.00776 , timestamp =

-

[24]

Inpainting-Driven Mask Optimization for Object Removal , year=

Shimosato, Kodai and Ukita, Norimichi , booktitle=. Inpainting-Driven Mask Optimization for Object Removal , year=

-

[25]

Journal of Information Processing , volume =

Takeshi Miura and Takaaki Kaiga and Hiroaki Katsura and Katsubumi Tajima and Takeshi Shibata and Hideo Tamamoto , title =. Journal of Information Processing , volume =. 2014 , url =

2014

-

[26]

Gopalakrishnan, Anand and Mali, Ankur and Kifer, Dan and Giles, Lee and Ororbia, Alexander G. , title =. 2019 , isbn =. doi:10.1109/CVPR.2019.01239 , booktitle =

-

[27]

1981 , publisher =

The Illusion of Life: Disney Animation , author =. 1981 , publisher =

1981

-

[28]

ACM Transactions on Graphics (TOG) , volume =

Globally and Locally Consistent Image Completion , author =. ACM Transactions on Graphics (TOG) , volume =

-

[29]

Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) , pages =

Generative Image Inpainting with Contextual Attention , author =. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) , pages =

-

[30]

ECCV , year =

Image inpainting for irregular holes using partial convolutions , author =. ECCV , year =

-

[31]

ICCV , year =

Free-form image inpainting with gated convolution , author =. ICCV , year =

-

[32]

Inpainting-Driven Mask Optimization for Object Removal , year =

Shimosato, Kodai and Ukita, Norimichi , booktitle =. Inpainting-Driven Mask Optimization for Object Removal , year =

-

[33]

Image Inpainting with Cascaded Modulation GAN and Object-Aware Training , volume =

Zheng, Haitian and Lin, Zhe and Lu, Jingwan and Cohen, Scott and Shechtman, Eli and Barnes, Connelly and Zhang, Jianming and Xu, Ning and Amirghodsi, Sohrab and Luo, Jiebo , year =. Image Inpainting with Cascaded Modulation GAN and Object-Aware Training , volume =. doi:10.1007/978-3-031-19787-1_16 , booktitle =

-

[34]

Shape-Aware Masking for Inpainting in Medical Imaging

Yeganeh, Yousef and Farshad, Azade and Navab, Nassir , biburl =. Shape-Aware Masking for Inpainting in Medical Imaging. , url =. CoRR , keywords =

-

[35]

CVPR , year =

Deep video inpainting , author =. CVPR , year =

-

[36]

ICCV , year =

FuseFormer: Fusing fine-grained information in transformers for video inpainting , author =. ICCV , year =

-

[37]

2025 , eprint =

Beyond Random Missingness: Clinically Rethinking for Healthcare Time Series Imputation , author =. 2025 , eprint =

2025

-

[38]

Unveiling the Secrets: How Masking Strategies Shape Time Series Imputation , journal =

Qian, Linglong and Ibrahim, Zina and Du, Wenjie and Yang, Yiyuan , year =. Unveiling the Secrets: How Masking Strategies Shape Time Series Imputation , journal =

-

[39]

Nature Machine Intelligence , volume =

X-ray scattering image inpainting with physics-informed deep learning , author =. Nature Machine Intelligence , volume =

-

[40]

AAAI , year =

Face completion with identity-guided generative adversarial networks , author =. AAAI , year =

-

[41]

ICCV , year =

Eyeglass-removal facial inpainting with adversarial attention , author =. ICCV , year =

-

[42]

IC3D , year =

A spatio-temporal transformer for human motion prediction , author =. IC3D , year =

-

[43]

Auxiliary Tasks in Multi-task Learning , journal =

Lukas Liebel and Marco K. Auxiliary Tasks in Multi-task Learning , journal =. 2018 , url =. 1805.06334 , timestamp =

-

[44]

Bidirectional recurrent neural networks , volume =

Schuster, Mike and Paliwal, Kuldip , year =. Bidirectional recurrent neural networks , volume =. Signal Processing, IEEE Transactions on , doi =

-

[45]

Neural Computation 9, 1735–1780

Hochreiter, Sepp and Schmidhuber, Jürgen , title =. Neural Computation , volume =. 1997 , month =. doi:10.1162/neco.1997.9.8.1735 , url =

-

[46]

Framewise phoneme classification with bidirectional LSTM and other neural network architectures , journal =. 2005 , note =. doi:https://doi.org/10.1016/j.neunet.2005.06.042 , url =

-

[47]

Proceedings of the 25th

Optuna: A Next-generation Hyperparameter Optimization Framework , author =. Proceedings of the 25th

-

[48]

On the Continuity of Rotation Representations in Neural Networks , year =

Zhou, Yi and Barnes, Connelly and Lu, Jingwan and Yang, Jimei and Li, Hao , booktitle =. On the Continuity of Rotation Representations in Neural Networks , year =

-

[49]

Advances in Neural Information Processing Systems 32 , pages =

PyTorch: An Imperative Style, High-Performance Deep Learning Library , author =. Advances in Neural Information Processing Systems 32 , pages =. 2019 , publisher =

2019

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.