Recognition: 2 theorem links · Lean Theorem

Geometry-Aware Neural Optimizer for Shape Optimization and Inversion

Pith reviewed 2026-05-13 07:09 UTC · model grok-4.3

The pith

A single latent-space loop unifies shape encoding, field prediction, and optimization for PDE-governed design.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

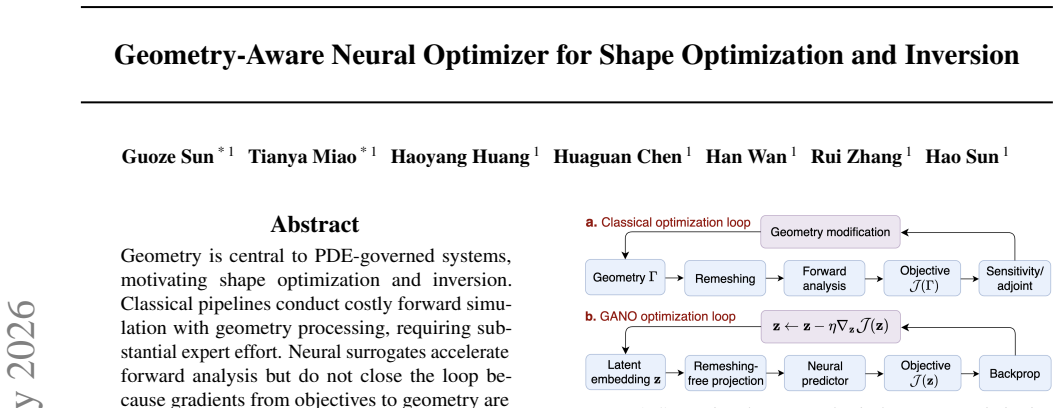

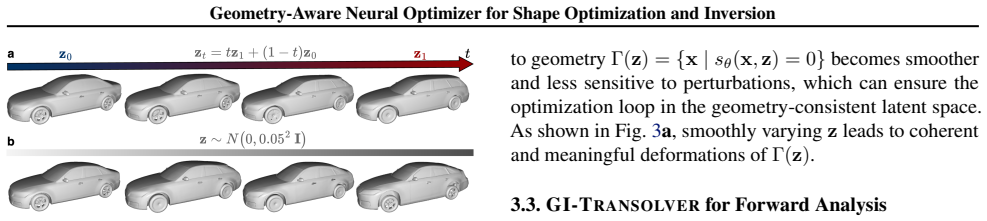

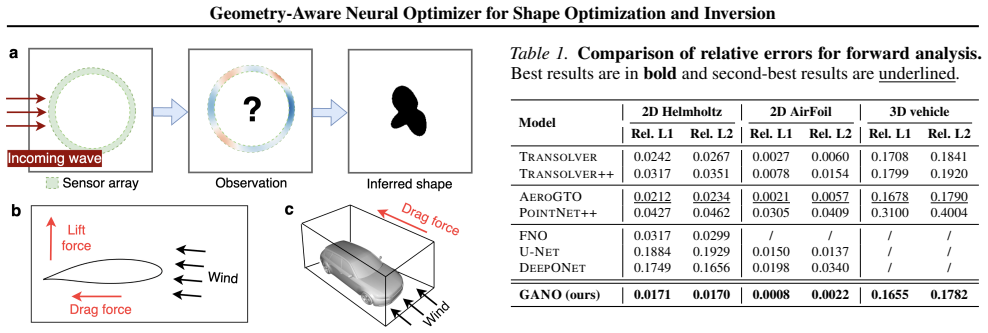

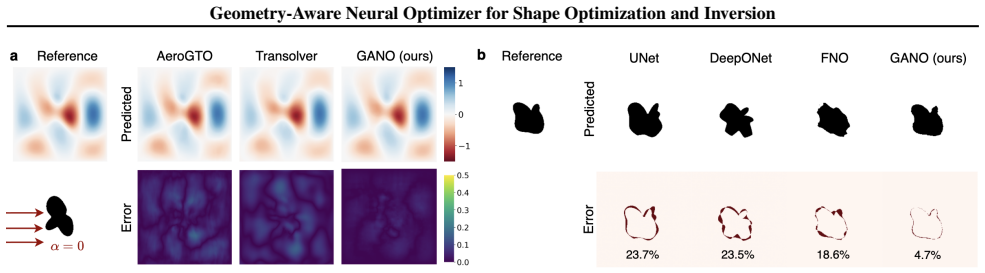

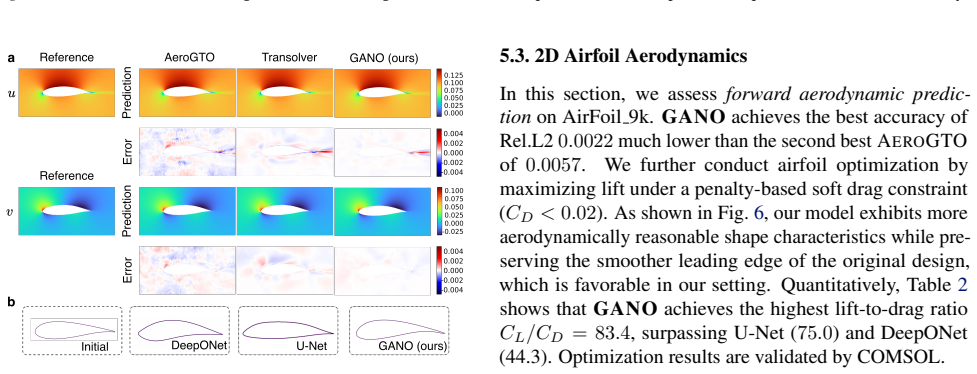

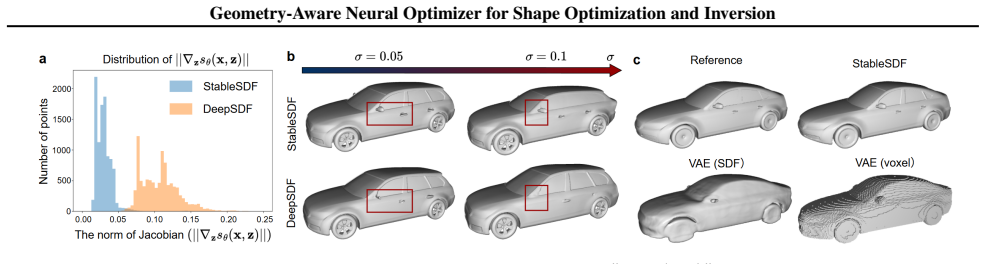

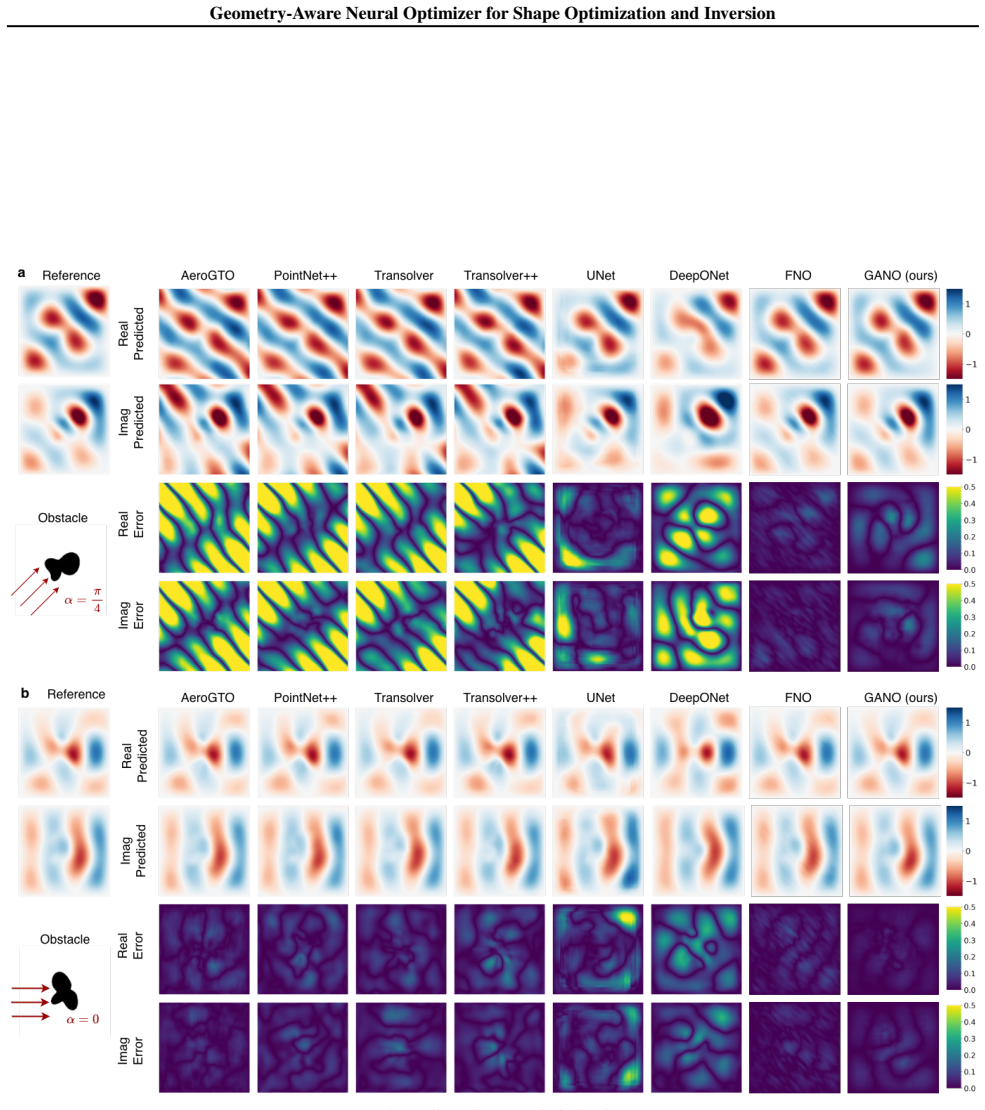

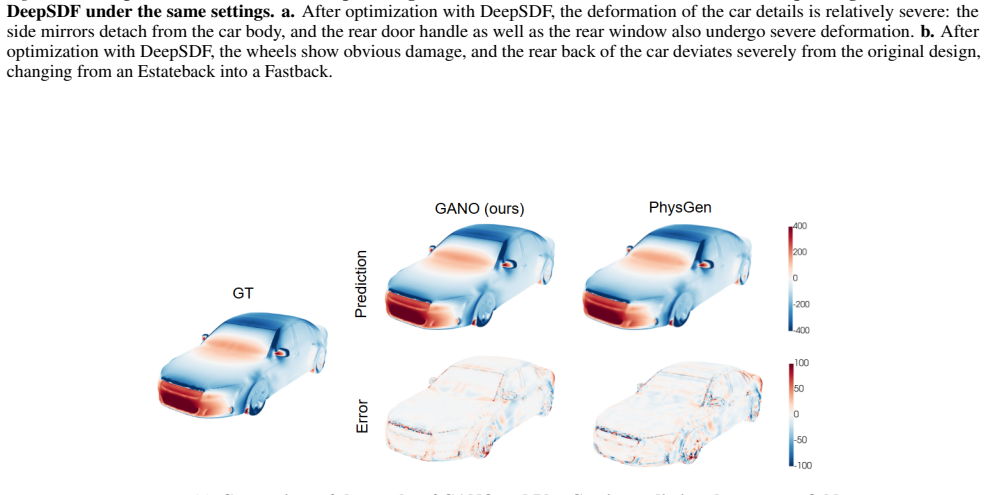

GANO is an end-to-end differentiable framework that encodes shapes with an auto-decoder, stabilizes latent updates via a denoising mechanism, and uses a geometry-injected surrogate to provide reliable gradient pathways for geometry updates, thereby unifying representation, prediction, and optimization in a single latent-space loop. The denoising induces an implicit Jacobian regularization that reduces decoder sensitivity and yields controlled deformations. On benchmarks including 2D Helmholtz, 2D airfoil, and 3D vehicles, it demonstrates state-of-the-art accuracy with stable updates, improving lift-to-drag by up to 55.9 percent for airfoils and reducing drag by about 7 percent for vehicles.

What carries the argument

The Geometry-Aware Neural Optimizer (GANO) relies on an auto-decoder for shape representation and a denoising mechanism in latent space. Together these enable stable, controllable latent updates guided by a geometry-injected field predictor.
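The latent-space loop can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the paper's implementation: `decode` and `surrogate_objective` are hypothetical stand-ins for the auto-decoder and the geometry-injected surrogate, the target values are arbitrary, and central finite differences stand in for automatic differentiation. The denoising used to stabilize the decoder is omitted.

```python
import math

def decode(z):
    """Hypothetical stand-in for the auto-decoder: latent code -> shape parameters."""
    width = 1.0 + 0.5 * math.tanh(z[0])
    height = 1.0 + 0.5 * math.tanh(z[1])
    return width, height

def surrogate_objective(shape):
    """Hypothetical stand-in for the surrogate: shape -> scalar objective (lower is better)."""
    width, height = shape
    return (width - 1.3) ** 2 + (height - 0.8) ** 2

def objective(z):
    """Compose decoder and surrogate so the objective depends only on the latent code."""
    return surrogate_objective(decode(z))

def grad(f, z, h=1e-5):
    """Central finite differences, standing in for automatic differentiation."""
    g = []
    for i in range(len(z)):
        z_plus = list(z)
        z_minus = list(z)
        z_plus[i] += h
        z_minus[i] -= h
        g.append((f(z_plus) - f(z_minus)) / (2 * h))
    return g

def optimize_latent(z0, lr=0.5, steps=200):
    """Plain gradient descent on the latent code; returns final code and objective history."""
    z = list(z0)
    history = [objective(z)]
    for _ in range(steps):
        g = grad(objective, z)
        z = [zi - lr * gi for zi, gi in zip(z, g)]
        history.append(objective(z))
    return z, history

z_opt, history = optimize_latent([0.0, 0.0])
```

The point of the sketch is that the shape is never edited directly: every update acts on the latent code, and the geometry changes only through the decoder.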

If this is right

- Supports part-wise control of shape changes through null-space projection.

- Accelerates geometry processing with remeshing-free projection.

- Achieves state-of-the-art accuracy on PDE-governed optimization tasks.

- Delivers up to 55.9% improvement in lift-to-drag ratio for airfoils.

- Reduces drag by approximately 7% for 3D vehicle shapes.
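The part-wise control in the first item can be illustrated generically. This page does not give the paper's exact null-space projection operator, so the following is only a sketch of one common construction: given the rows of a Jacobian that maps latent changes to changes of a protected part (here a made-up matrix `J_protected`), remove from the latent update every component that would move that part. All names are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def orthonormalize(rows, tol=1e-12):
    """Gram-Schmidt on the rows of the protected-part Jacobian."""
    basis = []
    for r in rows:
        v = list(r)
        for q in basis:
            c = dot(v, q)
            v = [vi - c * qi for vi, qi in zip(v, q)]
        norm = dot(v, v) ** 0.5
        if norm > tol:  # skip rows that are linearly dependent on earlier ones
            basis.append([vi / norm for vi in v])
    return basis

def project_to_null_space(update, jacobian_rows):
    """Subtract the components of `update` that lie in the row space of the Jacobian."""
    out = list(update)
    for q in orthonormalize(jacobian_rows):
        c = dot(out, q)
        out = [oi - c * qi for oi, qi in zip(out, q)]
    return out

# Made-up Jacobian of the protected part with respect to a 3-dimensional latent code.
J_protected = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]
raw_update = [0.3, -0.2, 0.5]
safe_update = project_to_null_space(raw_update, J_protected)
```

To first order, applying `safe_update` instead of `raw_update` leaves the protected part unchanged, because `J_protected` maps it to zero.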

Where Pith is reading between the lines

- This latent optimization strategy could be adapted to inverse problems in other scientific domains like medical imaging or materials design.

- Combining it with physics-informed constraints might further improve physical consistency of the optimized shapes.

- Exploring its behavior on highly deformable or topology-changing geometries would test the limits of the current auto-decoder approach.

Load-bearing premise

The auto-decoder must capture the full range of relevant shape variations and the geometry-injected surrogate must accurately approximate the underlying physical equations for the latent gradients to guide meaningful and artifact-free optimizations.

What would settle it

If applying the method to a held-out shape category produces divergent or non-physical results despite training convergence, or if the reported performance gains disappear under stricter mesh resolution checks, that would indicate the gradients are not reliably tied to the true geometry changes.

Original abstract

Geometry is central to PDE-governed systems, motivating shape optimization and inversion. Classical pipelines conduct costly forward simulation with geometry processing, requiring substantial expert effort. Neural surrogates accelerate forward analysis but do not close the loop because gradients from objectives to geometry are often unavailable. Existing differentiable methods either rely on restrictive parameterizations or unstable latent optimization driven by scalar objectives, limiting interpretability and part-wise control. To address these challenges, we propose Geometry-Aware Neural Optimizer (GANO), an end-to-end differentiable framework that unifies geometry representation, field-level prediction, and automated optimization/inversion in a single latent-space loop. GANO encodes shapes with an auto-decoder and stabilizes latent updates via a denoising mechanism, and a geometry-injected surrogate provides a reliable gradient pathway for geometry updates. Moreover, GANO supports part-wise control through null-space projection and uses remeshing-free projection to accelerate geometry processing. We further prove that denoising induces an implicit Jacobian regularization that reduces decoder sensitivity, yielding controlled deformations. Experiments on three benchmarks spanning 2D Helmholtz, 2D airfoil, and 3D vehicles show state-of-the-art accuracy and stable, controllable updates, achieving up to +55.9% lift-to-drag improvement for airfoils and ~7% drag reduction for vehicles.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces Geometry-Aware Neural Optimizer (GANO), an end-to-end differentiable framework that encodes shapes via an auto-decoder, stabilizes latent updates with a denoising mechanism, and employs a geometry-injected surrogate to supply gradients for PDE-governed shape optimization and inversion. It proves that denoising induces implicit Jacobian regularization for controlled deformations, supports part-wise control via null-space projection, and reports state-of-the-art results on 2D Helmholtz, 2D airfoil, and 3D vehicle benchmarks, including up to +55.9% lift-to-drag gains and ~7% drag reduction.

Significance. If verified, the work would advance differentiable shape optimization by unifying geometry representation, field prediction, and optimization in latent space without remeshing or restrictive parameterizations. The theoretical result on denoising-induced regularization and the empirical gains on engineering benchmarks indicate potential utility for PDE-constrained design tasks.

Major comments (2)

- [Abstract and Experiments] The central claim that the geometry-injected surrogate provides a reliable gradient pathway for optimization (Abstract) is load-bearing for all reported performance gains, yet the manuscript supplies no quantitative bound on surrogate-to-PDE discrepancy along the optimized latent trajectories; without this, it remains possible that surrogate error correlates with the update direction and inflates the lift-to-drag and drag-reduction figures.

- [Experiments] §4 (or equivalent experimental section): the SOTA accuracy claims on the three benchmarks are presented without error bars, ablation studies isolating the surrogate's contribution, or independent comparisons against ground-truth PDE solvers at the final optimized shapes, preventing verification that the latent gradients remain faithful to the underlying physics.

Minor comments (2)

- [Methods] Clarify the precise form of the null-space projection operator and its interaction with the denoising step in the methods section to improve reproducibility of the part-wise control results.

- [§3] Add a short discussion of the auto-decoder's training data coverage relative to the optimization trajectories to address potential extrapolation concerns.

Simulated Author's Rebuttal

We thank the referee for the constructive comments. We address each major concern point-by-point below. We will revise the manuscript to incorporate the requested quantitative analyses, error bars, ablations, and ground-truth verifications.


- Referee: [Abstract and Experiments] The central claim that the geometry-injected surrogate provides a reliable gradient pathway for optimization (Abstract) is load-bearing for all reported performance gains, yet the manuscript supplies no quantitative bound on surrogate-to-PDE discrepancy along the optimized latent trajectories; without this, it remains possible that surrogate error correlates with the update direction and inflates the lift-to-drag and drag-reduction figures.

  Authors: We agree that a quantitative bound on surrogate-to-PDE discrepancy along the latent trajectories is needed to fully substantiate the gradient reliability claim. The manuscript reports surrogate validation errors below 2% relative L2 on held-out shapes but does not track discrepancy during optimization. In the revision we will add trajectory-specific measurements at multiple intermediate latent points for each benchmark, together with a Lipschitz-derived bound on the composed decoder-surrogate map, to show that errors remain bounded and do not systematically align with the objective gradient direction.

  Revision: yes

- Referee: [Experiments] §4 (or equivalent experimental section): the SOTA accuracy claims on the three benchmarks are presented without error bars, ablation studies isolating the surrogate's contribution, or independent comparisons against ground-truth PDE solvers at the final optimized shapes, preventing verification that the latent gradients remain faithful to the underlying physics.

  Authors: We acknowledge that the experimental section lacks error bars, surrogate-specific ablations, and final ground-truth PDE checks; only baseline comparisons are included. We will revise the experimental section to report error bars over multiple random seeds, add an ablation that replaces the geometry-injected surrogate with a standard MLP to isolate its contribution, and include independent high-fidelity PDE evaluations at the final decoded shapes to confirm that surrogate-predicted objectives (lift-to-drag, drag) align with ground-truth physics.

  Revision: yes
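The trajectory-specific discrepancy measurement promised in the first response could look roughly like the sketch below. It is an illustration only: `reference_field` and `surrogate_field` are toy, hypothetical functions of a scalar latent value, chosen so that the surrogate error grows as the latent moves away from zero. In practice the reference would be a high-fidelity PDE solve at each intermediate latent point.

```python
import math

def relative_l2(pred, ref):
    """Relative L2 error between a predicted and a reference field sampled on the same points."""
    num = math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)))
    den = math.sqrt(sum(r ** 2 for r in ref))
    if den == 0.0:
        raise ValueError("reference field has zero norm")
    return num / den

def trajectory_discrepancy(latents, surrogate_field, reference_field):
    """Evaluate the surrogate-to-reference error at each latent point on a trajectory."""
    return [relative_l2(surrogate_field(z), reference_field(z)) for z in latents]

GRID = [0.1 * i for i in range(1, 11)]  # toy sample points

def reference_field(z):
    """Toy stand-in for a ground-truth PDE solution at latent value z."""
    return [math.sin(z * x) + 2.0 for x in GRID]

def surrogate_field(z):
    """Toy surrogate whose relative error is 1% per unit of latent distance from zero."""
    return [v * (1.0 + 0.01 * z) for v in reference_field(z)]

errors = trajectory_discrepancy([0.5, 1.0, 1.5, 2.0], surrogate_field, reference_field)
```

In this toy setup the error rises steadily along the trajectory, which is the pattern such a check is meant to expose: a surrogate that validates well on held-out shapes can still drift as optimization moves the latent code.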

Circularity Check

No significant circularity; framework validated on independent benchmarks


The paper introduces a novel end-to-end framework (GANO) combining an auto-decoder for geometry encoding, a denoising mechanism for latent stabilization, and a geometry-injected surrogate for gradient pathways, along with a claimed proof that denoising induces implicit Jacobian regularization. These elements are presented as new constructions and are evaluated through experiments on external benchmarks (2D Helmholtz, 2D airfoil, 3D vehicles) that are independent of the model's fitted parameters. No derivation step reduces by construction to its own inputs, no load-bearing self-citations are invoked for uniqueness or ansatz, and no predictions are statistically forced from fitted subsets. The central claims rest on external validation rather than internal redefinition.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: Denoising induces an implicit Jacobian regularization that reduces decoder sensitivity.

invented entities (1)

- Geometry-Aware Neural Optimizer (GANO): no independent evidence

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  Matched passage: We propose STABLESDF... adding small Gaussian perturbations to the latent code... induces an implicit latent-Jacobian regularizer... Theorem A.1... $\mathbb{E}\left[\,|f(z+\varepsilon)-s|\,\right] \le |f(z)-s| + \sigma\sqrt{2/\pi}\,\|\nabla_z f(z)\|_2 + O(\sigma^2)$

- IndisputableMonolith/Foundation/DimensionForcing.lean · alexander_duality_circle_linking · unclear

  Matched passage: denoising mechanism... reduces decoder sensitivity... controlled surface displacement... $d_H(\Gamma(z),\Gamma(z+\delta z)) \le (L_z/m)\,\|\delta z\|_2 + O(\|\delta z\|_2^2)$
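The first quoted bound (Theorem A.1) can be sanity-checked numerically in the simplest case. The sketch below assumes a linear decoder output f(z) = w·z + b, which is an assumption made here for illustration and not the paper's model. For linear f and ε ~ N(0, σ²I), the perturbation w·ε is Gaussian with standard deviation σ‖w‖₂, so the triangle inequality gives the bound exactly, with no O(σ²) remainder. The values of `w`, `b`, `z`, `s`, and `sigma` are arbitrary.

```python
import math
import random

def f(z, w, b):
    """Linear stand-in for the decoder output at one query point (an assumption)."""
    return sum(wi * zi for wi, zi in zip(w, z)) + b

def noisy_abs_error(z, w, b, s, sigma, n_samples, rng):
    """Monte Carlo estimate of E|f(z + eps) - s| with eps ~ N(0, sigma^2 I)."""
    total = 0.0
    for _ in range(n_samples):
        z_noisy = [zi + rng.gauss(0.0, sigma) for zi in z]
        total += abs(f(z_noisy, w, b) - s)
    return total / n_samples

rng = random.Random(0)  # fixed seed for reproducibility
w, b = [0.8, -0.6], 0.1
z, s, sigma = [0.5, 0.2], 0.0, 0.05

# Left-hand side: expected absolute error under latent noise.
lhs = noisy_abs_error(z, w, b, s, sigma, 20000, rng)

# Right-hand side: clean error plus sigma * sqrt(2/pi) * ||grad_z f||_2.
grad_norm = math.sqrt(sum(wi * wi for wi in w))
rhs = abs(f(z, w, b) - s) + sigma * math.sqrt(2.0 / math.pi) * grad_norm
```

The gradient-norm term on the right is what links noise injection to Jacobian regularization: minimizing the noisy error implicitly penalizes decoders whose output is highly sensitive to the latent code.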

