Diffusion-Based Posterior Sampling: A Feynman-Kac Analysis of Bias and Stability

Pith reviewed 2026-05-08 12:29 UTC · model grok-4.3

The pith

A Feynman-Kac path expectation quantifies the exact bias in diffusion posterior samplers even when prior scores are exact.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

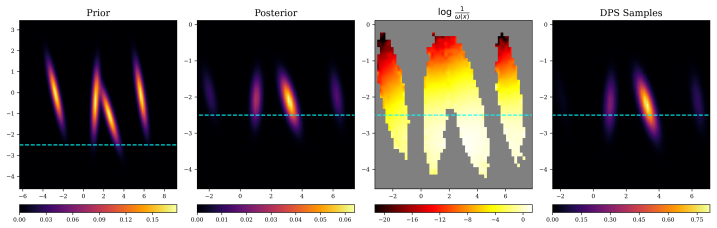

The paper compares the sampler's trajectory with a tractable surrogate path that connects the true posterior to a standard Gaussian. The density ratio between the two paths satisfies a parabolic PDE whose reaction term measures the accumulated bias. A Feynman-Kac representation then writes the Radon-Nikodym correction as an explicit path expectation. For DPS, this is an Ornstein-Uhlenbeck path expectation that couples the data-conditional covariance with the reward curvature; STSL is reinterpreted as an auxiliary drift that flattens the spatially varying part of the DPS reaction term.
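
For reference, the classical Feynman-Kac formula can be written as follows. This is a sketch in generic notation: the symbols b, σ, V, and φ are placeholders introduced here, not the paper's notation, and the paper's specific drift and reaction term are not reproduced.

```latex
\begin{aligned}
&\partial_t u + b\cdot\nabla u
  + \tfrac12 \operatorname{Tr}\!\big(\sigma\sigma^{\top}\nabla^2 u\big) + V u = 0,
  \qquad u(T,x)=\varphi(x),\\[4pt]
&u(t,x) = \mathbb{E}\!\left[\exp\!\Big(\int_t^T V(s,X_s)\,ds\Big)\,\varphi(X_T)
  \,\Big|\, X_t = x\right],
  \qquad dX_s = b(s,X_s)\,ds + \sigma(s,X_s)\,dW_s .
\end{aligned}
```

In the summary's terms, V plays the role of the reaction term, and the exponential weight is the correction accumulated along a single path.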

What carries the argument

The surrogate path from posterior to standard Gaussian together with the Feynman-Kac representation of the solution to the governing parabolic PDE for the density ratio.

If this is right

- For DPS the bias correction reduces to an Ornstein-Uhlenbeck path expectation that couples conditional covariance with reward curvature.

- STSL corresponds to an auxiliary drift that removes the spatially varying component of the reaction term.

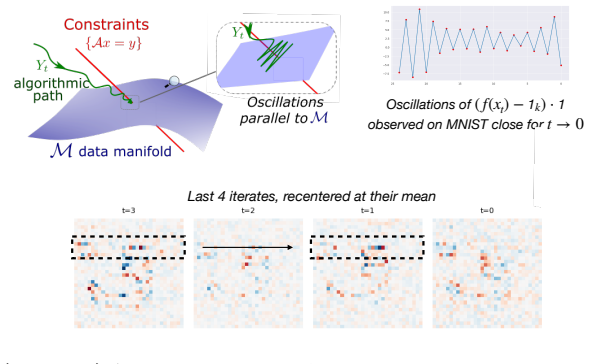

- Early stopping of guidance mitigates the forward-Euler instabilities that arise in low-temperature regimes.

- The same framework supplies a systematic way to design new variants whose reaction term is closer to zero.
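
The instability behind the early-stopping bullet can be illustrated on a toy problem. The following is a minimal sketch, assuming the guidance term behaves locally like a linear restoring force whose stiffness grows as the temperature drops. That scaling, the function name, and all numbers are illustrative assumptions, not the paper's vector field. Forward Euler applied to dx/dt = -k x is stable only when step * k < 2.

```python
def forward_euler_decay(stiffness: float, step: float, n_steps: int, x0: float = 1.0) -> float:
    """Integrate dx/dt = -stiffness * x with forward Euler and return |x| at the end."""
    x = x0
    for _ in range(n_steps):
        # Explicit update: x_{n+1} = (1 - step * stiffness) * x_n
        x = x + step * (-stiffness * x)
    return abs(x)


# Hypothetical numbers: one step size, two stiffness levels.
step = 0.05
mild = forward_euler_decay(stiffness=10.0, step=step, n_steps=200)    # step * stiffness = 0.5
stiff = forward_euler_decay(stiffness=100.0, step=step, n_steps=200)  # step * stiffness = 5.0
print(f"mild: {mild:.3e}, stiff: {stiff:.3e}")
```

The true solution decays in both cases, but the discrete iterate blows up once step * stiffness exceeds 2. In this toy picture, switching the stiff term off early is one way to stay out of that regime without shrinking the step.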

Where Pith is reading between the lines

- The path-expectation formula could be used to derive a low-cost importance-weighting correction that is applied after sampling.

- Similar surrogate-path constructions may apply to score-based samplers outside the diffusion setting.

- The stability analysis suggests that higher-order integrators or implicit schemes would reduce the need for early stopping.
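
The remark about implicit schemes can be checked on the same kind of linear test equation. This is a minimal sketch under the assumption that the stiff part of the dynamics is locally linear; the function name and numbers are hypothetical. Backward Euler multiplies the state by 1 / (1 + step * k) at every step, which has magnitude below one for any positive step and stiffness.

```python
def backward_euler_decay(stiffness: float, step: float, n_steps: int, x0: float = 1.0) -> float:
    """Integrate dx/dt = -stiffness * x with backward (implicit) Euler and return |x|."""
    x = x0
    for _ in range(n_steps):
        # Implicit update x_{n+1} = x_n - step * stiffness * x_{n+1}, solved in closed form.
        x = x / (1.0 + step * stiffness)
    return abs(x)


# Same hypothetical stiff setting where an explicit step would need step * stiffness < 2.
implicit = backward_euler_decay(stiffness=100.0, step=0.05, n_steps=200)
print(f"implicit: {implicit:.3e}")
```

For nonlinear vector fields the implicit update requires solving an equation at each step, so the stability gain comes at extra cost per step.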

Load-bearing premise

The chosen surrogate path stays tractable and the associated parabolic PDE admits a well-behaved Feynman-Kac representation when the exact prior score is used.

What would settle it

Compute the explicit path expectation for a low-dimensional Gaussian posterior with known reward, run the DPS sampler on the same instance, and check whether the empirical frequency of samples in each region matches the predicted correction factor.
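
A first step toward such a check is verifying that a path expectation can be estimated reliably at all. The sketch below estimates E[exp(c ∫ X_s ds)] for an Ornstein-Uhlenbeck path by Monte Carlo and compares it with a closed form. The linear potential V(x) = c x, the function names, and all parameter values are hypothetical stand-ins chosen because they admit an exact answer; the paper's DPS reaction term is not reproduced here.

```python
import math
import random


def ou_path_expectation(c, x0, horizon, n_steps, n_paths, seed=0):
    """Monte Carlo estimate of E[exp(c * int_0^T X_s ds)] for dX = -X dt + sqrt(2) dW."""
    rng = random.Random(seed)
    dt = horizon / n_steps
    noise_scale = math.sqrt(2.0 * dt)
    total = 0.0
    for _ in range(n_paths):
        x, integral = x0, 0.0
        for _ in range(n_steps):
            integral += x * dt  # left Riemann sum of the path integral
            x += -x * dt + noise_scale * rng.gauss(0.0, 1.0)  # Euler-Maruyama step
        total += math.exp(c * integral)
    return total / n_paths


def ou_path_expectation_exact(c, x0, horizon):
    """Closed form: the time integral of an OU path started at x0 is Gaussian."""
    mean = x0 * (1.0 - math.exp(-horizon))
    var = 2.0 * (horizon - 2.0 * (1.0 - math.exp(-horizon))
                 + 0.5 * (1.0 - math.exp(-2.0 * horizon)))
    return math.exp(c * mean + 0.5 * c * c * var)


estimate = ou_path_expectation(c=0.5, x0=1.0, horizon=1.0, n_steps=100, n_paths=5000)
exact = ou_path_expectation_exact(c=0.5, x0=1.0, horizon=1.0)
print(f"Monte Carlo: {estimate:.4f}, closed form: {exact:.4f}")
```

Agreement between the two numbers validates only the estimator. The proposed experiment would additionally need the paper's actual reaction term and a DPS run on the same instance.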

Original abstract

Diffusion-based posterior samplers use pretrained diffusion priors to sample from measurement- or reward-conditioned posteriors, and are widely used for inverse problems. Yet their theoretical behavior remains poorly understood: even with exact prior scores, their outputs are biased, and in low-temperature regimes their discretizations can become unstable. We characterize this bias by introducing a tractable surrogate path connecting the true posterior to a standard Gaussian and comparing it to the sampler's path. Their density ratio satisfies a parabolic PDE whose reaction term measures the accumulated bias. A Feynman-Kac representation then expresses the Radon-Nikodym correction as an explicit path expectation, identifying which posterior regions are over- or under-sampled. We apply this framework to DPS and STSL, a related sampler. For DPS, the correction is an Ornstein-Uhlenbeck path expectation coupling the data conditional covariance with the reward curvature, revealing where DPS over- or under-samples. Next, we reinterpret STSL as an auxiliary drift that steers trajectories toward low-uncertainty regions, flattening the spatially varying part of the DPS reaction term. Finally, we characterize early guidance-stopping, a common mitigation for low-temperature instabilities caused by forward-Euler integration of the vector field. Together, these results clarify sampler bias, explain existing correctives, and guide stable variant designs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces a surrogate path connecting the true posterior to a standard Gaussian to analyze bias in diffusion-based posterior samplers (e.g., DPS and STSL). It derives a parabolic PDE for the density ratio along this path, with a reaction term capturing accumulated bias, and applies a Feynman-Kac representation to express the Radon-Nikodym correction as an explicit path expectation. This is used to characterize over-/under-sampling in DPS via an Ornstein-Uhlenbeck expectation, reinterpret STSL as an auxiliary drift, and analyze early guidance-stopping for stability in low-temperature regimes.

Significance. If the central derivation holds with the required regularity, the framework offers a principled way to quantify and mitigate bias and instability in widely used diffusion posterior samplers for inverse problems, potentially informing more stable variants and correctives. The explicit path-expectation form for the correction and the reinterpretation of existing methods are notable strengths.

major comments (1)

- [§3 and §4] §3 (surrogate path construction) and §4 (PDE derivation): The central claim requires that the surrogate path remains tractable and that the reaction term (accumulated bias) satisfies growth conditions ensuring the Feynman-Kac path expectation is finite and the Radon-Nikodym correction is well-defined pointwise. The manuscript asserts tractability under the exact prior score but does not explicitly verify these conditions for the low-temperature or high-curvature regimes highlighted in the abstract and applications to DPS/STSL; without this, the representation may fail to hold globally.

minor comments (2)

- [Introduction] Notation for the surrogate path and reaction term could be clarified with an explicit definition or diagram early in the manuscript to aid readability.

- [Applications section] The abstract mentions applications to DPS and STSL but the main text would benefit from a brief comparison table of the resulting bias expressions.

Simulated Author's Rebuttal

We thank the referee for their constructive and insightful comments, which help clarify the scope and assumptions of our analysis. We address the major comment point by point below.

- Referee: [§3 and §4] §3 (surrogate path construction) and §4 (PDE derivation): The central claim requires that the surrogate path remains tractable and that the reaction term (accumulated bias) satisfies growth conditions ensuring the Feynman-Kac path expectation is finite and the Radon-Nikodym correction is well-defined pointwise. The manuscript asserts tractability under the exact prior score but does not explicitly verify these conditions for the low-temperature or high-curvature regimes highlighted in the abstract and applications to DPS/STSL; without this, the representation may fail to hold globally.

Authors: We agree that an explicit discussion of the requisite growth conditions is valuable for rigor. The derivation in §§3–4 proceeds under the standing assumption that the prior score is exact and Lipschitz continuous (standard in the diffusion literature) and that the measurement model induces a reaction term with at most linear growth along the surrogate path. In the revised manuscript we will insert a short paragraph in §3 that invokes standard Feynman-Kac theory: when the potential (accumulated bias) satisfies |V(t,x)| ≤ C(1 + |x|) uniformly in t, the path expectation remains finite by Gronwall-type bounds. For the low-temperature and high-curvature regimes highlighted in the abstract, we note that the DPS correction reduces to an Ornstein-Uhlenbeck expectation whose moments are explicitly finite (Gaussian integrals), while STSL’s auxiliary drift only flattens the spatially varying component without introducing super-linear growth. These observations are already implicit in the explicit forms derived in §5, but we will state the growth condition and its verification explicitly. We therefore plan to revise the manuscript to include this clarification.

revision: yes
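
One way to see why linear growth is enough when the paths are Gaussian is sketched below. The argument uses generic notation and is not taken from the manuscript: X denotes an Ornstein-Uhlenbeck path on [0,T], and C is the constant from the growth bound |V(t,x)| ≤ C(1 + |x|).

```latex
\mathbb{E}\exp\!\Big(\int_0^T |V(s,X_s)|\,ds\Big)
\;\le\; e^{CT}\,\mathbb{E}\exp\!\Big(C\int_0^T |X_s|\,ds\Big)
\;\le\; e^{CT}\,\mathbb{E}\exp\!\Big(CT\sup_{0\le s\le T}|X_s|\Big)
\;<\;\infty .
```

The last expectation is finite because the supremum of a continuous Gaussian process over a bounded interval has Gaussian tails (Fernique's theorem), so all of its exponential moments exist.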

Circularity Check

No circularity: standard Feynman-Kac application to derived PDE

The paper constructs a surrogate path from posterior to Gaussian, derives that the density ratio obeys a parabolic PDE whose reaction term encodes accumulated bias, and then applies the classical Feynman-Kac theorem to represent the Radon-Nikodym correction as an explicit path expectation. This chain relies on the external Feynman-Kac theorem rather than redefining the bias in terms of itself or fitting parameters to the target quantity. No load-bearing self-citation, ansatz smuggling, or uniqueness theorem imported from the authors' prior work appears in the derivation; the framework is applied to DPS and STSL by direct substitution into the general representation. The tractability assumption is stated explicitly rather than smuggled in as a tautology, leaving the central bias expression independent of the inputs by construction.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: The density ratio between the sampler path and the surrogate path satisfies a parabolic PDE whose reaction term measures accumulated bias

- standard math: Feynman-Kac representation converts the PDE solution into an explicit path expectation

invented entities (1)

- surrogate path connecting true posterior to standard Gaussian (no independent evidence)
