
Physics Aware Representation Learning on Electronic Charge Density for Materials Property Prediction

Pith reviewed 2026-05-11 02:02 UTC · model grok-4.3

The pith

A convolutional autoencoder compresses three-dimensional electronic charge density into a compact latent space that accurately predicts mechanical and thermodynamic properties of inorganic crystals.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that an unsupervised three-dimensional convolutional autoencoder can compress DFT-derived electronic charge-density grids from 128×128×128 to 16×16×16×16 while preserving the physically meaningful features needed for downstream regression. When the latent vectors are supplied to LightGBM or attention-based 3D CNN models, the reported R² scores are 0.94 for bulk modulus K, 0.88 for Young's modulus E, 0.87 for shear modulus G, 0.96 for formation energy Eform, and 0.89 for Debye temperature Θ, with further gains when composition-based MAGPIE descriptors are added.

What carries the argument

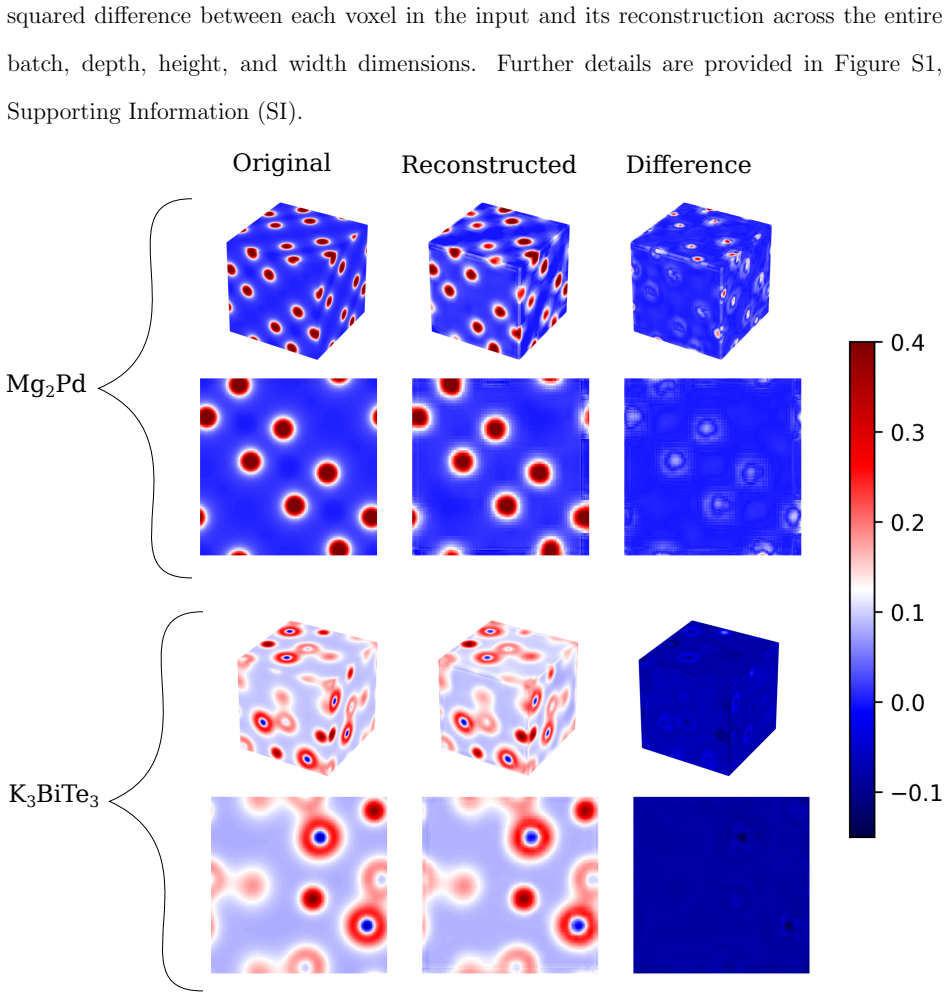

A three-dimensional convolutional autoencoder that learns a 16x16x16x16 latent representation from 128x128x128 charge-density grids while maintaining negligible reconstruction error across diverse crystal symmetries.
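The size of this compression can be checked with simple shape arithmetic. The sketch below assumes, purely for illustration, that the 16×16×16×16 latent is a 16-voxel cube with 16 channels reached through three stride-2 convolutions (kernel 3, padding 1). The summary does not state the layer configuration, and `conv_out_size` and `encoder_shape` are hypothetical helper names.

```python
from math import prod


def conv_out_size(size: int, kernel: int = 3, stride: int = 2, padding: int = 1) -> int:
    """Output edge length of one convolution along a single axis."""
    return (size + 2 * padding - kernel) // stride + 1


def encoder_shape(edge: int, n_stages: int, channels: int) -> tuple:
    """Latent shape after n_stages stride-2 convolutions (assumed layout)."""
    for _ in range(n_stages):
        edge = conv_out_size(edge)
    return (edge, edge, edge, channels)


input_shape = (128, 128, 128)  # charge-density grid from the paper
latent_shape = encoder_shape(128, n_stages=3, channels=16)  # assumed architecture

n_in = prod(input_shape)        # number of grid values going in
n_latent = prod(latent_shape)   # number of latent values coming out
compression = n_in / n_latent   # reduction factor in stored values

print(latent_shape, n_in, n_latent, compression)
```

Under these assumptions each grid shrinks from about 2.1 million values to 65,536, a 32-fold reduction in the number of stored values.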

If this is right

- Latent charge-density vectors alone suffice for high-accuracy prediction of bulk, shear, and Young's moduli as well as formation energy and Debye temperature.

- Augmenting the latent vectors with composition descriptors raises predictive accuracy still further.

- The workflow requires approximately one-twenty-fifth the computational cost of a complete DFT calculation for each new material.

- The same compression step succeeds uniformly across multiple crystal symmetries in a large inorganic dataset.

Where Pith is reading between the lines

- The same autoencoder architecture could be applied to other spatially resolved quantum fields, such as electrostatic potentials, to predict additional properties without new training from scratch.

- Because the latent space is continuous and low-dimensional, it might support gradient-based optimization to generate hypothetical charge densities that optimize a target property.

- If the learned features align with interpretable quantities such as bond lengths or valence electron counts, the model could guide physical intuition rather than function only as a black-box predictor.

- Extending the approach to defective or disordered structures would test whether the compression remains robust when perfect periodicity is broken.

Load-bearing premise

The compressed latent representation retains every physically relevant aspect of the full charge-density grid that determines the five target properties across different crystal structures.

What would settle it

Repeat the training and evaluation on an independent set of several thousand compounds drawn from crystal systems or chemical families absent from the original 6059-compound collection. R² values consistently below 0.70 for the same properties would falsify the claim that the latent features are sufficient and transferable.
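The test described here reduces to computing R² on held-out predictions and comparing it with the 0.70 threshold. A minimal sketch follows; the numbers are made up for illustration and `claim_survives` is a hypothetical helper, not part of the paper.

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def claim_survives(y_true, y_pred, threshold=0.70):
    """True if held-out R² stays at or above the falsification threshold."""
    return r2_score(y_true, y_pred) >= threshold


# Toy values standing in for a property such as bulk modulus (made-up data).
y_true = [100.0, 150.0, 200.0, 250.0]
y_good = [110.0, 140.0, 210.0, 240.0]   # predictions that track the targets
y_bad = [200.0, 100.0, 250.0, 150.0]    # predictions that do not

print(claim_survives(y_true, y_good), claim_survives(y_true, y_bad))
```

A single property on a single split would not be decisive; the criterion above asks for values that fall below the threshold consistently.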

Original abstract

The fundamental quantity governing the mechanical and thermodynamic properties of a crystalline solid is its electronic charge density. Yet, its direct use for the rapid prediction of materials properties remains challenging due to its high dimensionality. Here, we present a physics-informed deep learning framework that directly predicts mechanical and thermodynamic properties from the three-dimensional electronic charge density derived from density functional theory (DFT). The proposed approach first utilizes a three-dimensional convolutional autoencoder for unsupervised dimensionality reduction, compressing a high-resolution charge-density grid (128 × 128 × 128) into a compact latent representation (16 × 16 × 16 × 16) while preserving physically meaningful features, as confirmed by negligible reconstruction errors across diverse crystal systems. The compressed latent-space representation of charge density is then used by two different regression models for property prediction: Light Gradient Boosting Machine (LightGBM) and Attention-based 3D Convolutional Neural Networks (Att CNN), and their performance is compared. Combining composition-based descriptors (Material Agnostic Platform for Informatics and Exploration or MAGPIE) with electronic charge density data further improves the model accuracy. Using a dataset of about 6059 inorganic compounds spanning multiple crystal symmetries, the models achieve strong predictive performance for bulk modulus K (R² = 0.94), Young's modulus E (R² = 0.88), shear modulus G (R² = 0.87), formation energy Eform (R² = 0.96), and Debye temperature Θ (R² = 0.89). This work establishes electronic charge density as a transferable, physics-grounded descriptor for materials property prediction, requiring ~1/25 the computational resources of full-fledged DFT calculations.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents a physics-informed deep learning framework that compresses 128³ DFT-derived electronic charge-density grids into a 16×16×16×16 latent representation via a 3D convolutional autoencoder, then uses this compressed representation (alone or combined with MAGPIE composition descriptors) as input to LightGBM and attention-based 3D CNN regressors to predict bulk modulus K, Young's modulus E, shear modulus G, formation energy Eform, and Debye temperature Θ. On a dataset of ~6059 inorganic compounds spanning multiple crystal systems, the models report R² values of 0.94 (K), 0.88 (E), 0.87 (G), 0.96 (Eform), and 0.89 (Θ), with the claim that the approach requires ~1/25 the computational cost of full DFT while establishing charge density as a transferable descriptor.

Significance. If the reported performance is shown to generalize without data leakage and if the latent space demonstrably retains property-relevant charge-density features, the work would provide a practical route to property prediction that bypasses full electronic-structure calculations for screening. The use of a sizable, symmetry-diverse dataset, the direct comparison of two regression architectures, and the optional fusion with composition-based features are positive elements. The central limitation is that reconstruction error alone does not establish retention of the specific density variations that govern elastic and vibrational properties.

major comments (2)

- [Results (paragraphs presenting R² values and dataset description)] The results section reporting R² = 0.94 for K (and the corresponding values for E, G, Eform, Θ) provides no information on train/test splits, cross-validation strategy, or error bars. Because the autoencoder and downstream regressors are trained on the same ~6059-compound dataset, the absence of these details leaves open the possibility of leakage or overfitting, directly undermining confidence in the quoted predictive performance.

- [Methods (autoencoder architecture) and Results (latent-space usage)] The claim that the 16×16×16×16 latent representation 'preserves physically meaningful features' rests solely on negligible reconstruction error (Abstract and Methods). Reconstruction fidelity does not guarantee that density gradients, curvatures, or bonding signatures relevant to elastic moduli and Debye temperature are retained; an ablation study, latent-dimension importance analysis, or comparison against property-specific descriptors is required to support the central assertion that the compressed space is sufficient for the reported regressions.
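One way to probe the second major comment is a permutation test on individual latent dimensions: shuffle one feature across samples and measure how much R² drops. The sketch below uses a toy stand-in predictor and made-up data; `permutation_importance` and `toy_predict` are hypothetical names, and this is a generic technique rather than an analysis reported in the manuscript.

```python
import random


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def permutation_importance(predict, X, y, column, rng):
    """Drop in R² when one feature column is shuffled across samples."""
    baseline = r2_score(y, [predict(row) for row in X])
    shuffled_col = [row[column] for row in X]
    rng.shuffle(shuffled_col)
    X_perm = [row[:column] + [v] + row[column + 1:] for row, v in zip(X, shuffled_col)]
    permuted = r2_score(y, [predict(row) for row in X_perm])
    return baseline - permuted


def toy_predict(row):
    """Stand-in regressor: uses feature 0 only and ignores feature 1."""
    return 3.0 * row[0]


rng = random.Random(0)
X = [[float(i), rng.random()] for i in range(20)]  # two toy "latent" features
y = [3.0 * row[0] for row in X]                     # target depends on feature 0

imp_used = permutation_importance(toy_predict, X, y, column=0, rng=rng)
imp_unused = permutation_importance(toy_predict, X, y, column=1, rng=rng)
print(imp_used, imp_unused)
```

A dimension the regressor relies on shows a positive drop, while an ignored dimension shows none. Applied to the real latent vectors, a flat importance profile would suggest the regressor is not drawing on the compressed density at all.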

minor comments (2)

- [Abstract] The abstract states the framework is 'physics-informed,' yet the autoencoder training is purely unsupervised with no explicit physics-based loss terms or constraints; this terminology should be clarified or qualified.

- [Figure captions and Methods] Figure captions and text should explicitly state the number of compounds used for autoencoder training versus regression training to allow readers to assess potential overlap.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments, which have helped us identify areas for improvement in clarity and validation. We address each major comment point by point below and have revised the manuscript accordingly.

Point-by-point responses

-

Referee: [Results (paragraphs presenting R² values and dataset description)] The results section reporting R² = 0.94 for K (and the corresponding values for E, G, Eform, Θ) provides no information on train/test splits, cross-validation strategy, or error bars. Because the autoencoder and downstream regressors are trained on the same ~6059-compound dataset, the absence of these details leaves open the possibility of leakage or overfitting, directly undermining confidence in the quoted predictive performance.

Authors: We agree that the original manuscript did not provide sufficient detail on the data partitioning and validation procedures, which is a valid concern for assessing potential leakage or overfitting. In the revised manuscript, we have expanded the Methods section to explicitly describe our protocol: the dataset was randomly partitioned into an 80/20 train/test split (with stratification by crystal system to maintain diversity), the autoencoder was trained solely on the training portion for unsupervised compression, and the downstream regressors (LightGBM and Att CNN) were trained and evaluated using 5-fold cross-validation on the training set with the test set held completely out. We now report mean R² values along with standard deviations across the folds as error bars in the updated results tables and figures. These changes eliminate ambiguity regarding data leakage and strengthen confidence in the reported performance.

Revision: yes

-

Referee: [Methods (autoencoder architecture) and Results (latent-space usage)] The claim that the 16×16×16×16 latent representation 'preserves physically meaningful features' rests solely on negligible reconstruction error (Abstract and Methods). Reconstruction fidelity does not guarantee that density gradients, curvatures, or bonding signatures relevant to elastic moduli and Debye temperature are retained; an ablation study, latent-dimension importance analysis, or comparison against property-specific descriptors is required to support the central assertion that the compressed space is sufficient for the reported regressions.

Authors: We acknowledge that reconstruction error alone does not fully establish retention of the specific charge-density features (e.g., gradients and bonding signatures) that govern the target properties, and the original manuscript's reliance on this metric for the central claim was insufficient. While the manuscript already shows that the latent representation yields strong predictive performance and that fusing it with MAGPIE descriptors further improves accuracy (indicating complementary information beyond composition), this indirect evidence is not conclusive. In the revised manuscript, we have added an ablation study comparing regression performance using the learned latent vectors versus randomized latent vectors of the same dimensionality, as well as a latent-dimension sensitivity analysis that perturbs individual dimensions and quantifies the resulting change in predicted properties. These additions provide direct support that the compressed representation retains property-relevant physical information.

Revision: yes
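The partitioning protocol described in the first response (an 80/20 split stratified by crystal system, then 5-fold cross-validation inside the training portion) can be sketched with the standard library alone. The labels are made up, `stratified_split` and `k_fold` are hypothetical helpers, and the authors' actual tooling is not stated.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with each label split in the same ratio."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)
    train_idx, test_idx = [], []
    for indices in by_label.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


def k_fold(indices, k=5, seed=0):
    """Yield (fit_idx, val_idx) pairs covering `indices` in k disjoint folds."""
    rng = random.Random(seed)
    shuffled = list(indices)
    rng.shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    for i in range(k):
        fit = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield fit, folds[i]


# Made-up crystal-system labels for 100 hypothetical compounds.
labels = ["cubic"] * 50 + ["hexagonal"] * 30 + ["tetragonal"] * 20
train_idx, test_idx = stratified_split(labels)

# The autoencoder and the regressors would be fitted on train_idx only;
# test_idx is never touched until the final evaluation.
folds = list(k_fold(train_idx))
print(len(train_idx), len(test_idx), [len(val) for _, val in folds])
```

Keeping the held-out indices fixed before any model is fitted, including the unsupervised autoencoder, is what rules out the leakage path the referee raised.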

Circularity Check

No circularity: unsupervised compression followed by independent supervised regression


The derivation chain consists of (1) unsupervised training of a 3D convolutional autoencoder solely on 128³ DFT charge-density grids to produce a 16×16×16×16 latent vector, justified by reconstruction error, and (2) separate supervised regression (LightGBM or Att CNN) trained on the latent vectors plus optional MAGPIE descriptors to predict the five target properties. The reported R² values are test-set performance metrics of the regression step; they are not obtained by fitting any parameter to the targets and then relabeling the fit as a prediction. No equation equates a derived quantity to its own input by construction, no self-citation supplies a load-bearing uniqueness theorem, and the latent-space features are learned from the density input alone rather than from the property labels. The workflow is therefore self-contained against external benchmarks and receives the default non-circularity finding.

Axiom & Free-Parameter Ledger

free parameters (2)

- latent grid size 16x16x16x16

- autoencoder depth, filter counts, and training hyperparameters

axioms (1)

- Domain assumption: DFT-computed charge density is a sufficient descriptor for mechanical and thermodynamic properties.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear · "three-dimensional convolutional autoencoder for unsupervised dimensionality reduction, compressing a high-resolution charge-density grid (128×128×128) into a compact latent representation (16×16×16×16) while preserving physically meaningful features"

- IndisputableMonolith/Foundation/RealityFromDistinction.lean · reality_from_one_distinction · unclear · "models achieve R² = 0.94 for bulk modulus K, 0.88 for Young's modulus E, ..."

