Hierarchical Multi-Fidelity Learning for Predicting Three-Dimensional Flame Wrinkling and Turbulent Burning Velocity

Pith reviewed 2026-05-12 00:45 UTC · model grok-4.3

The pith

Hierarchical multi-fidelity neural networks predict three-dimensional flame wrinkling and turbulent burning velocity by combining low-fidelity physical trend models with sparse high-fidelity experimental measurements.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

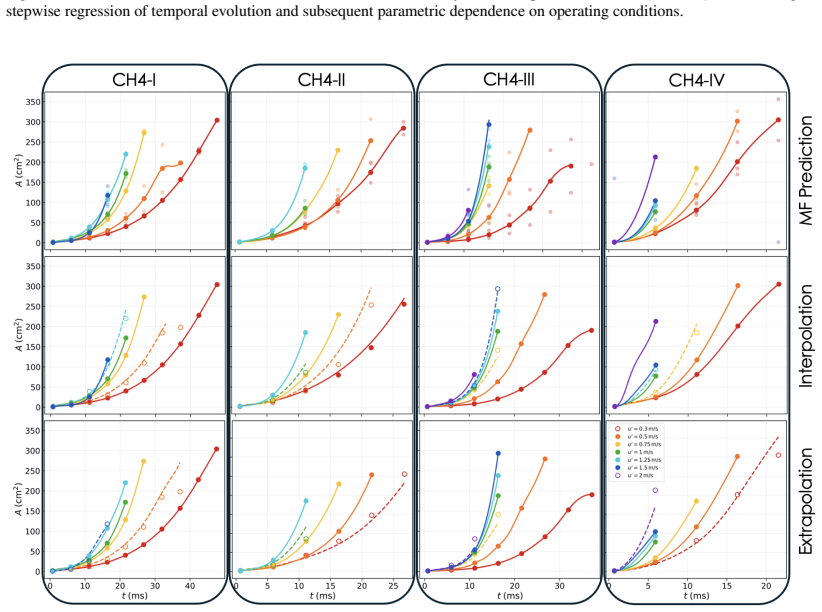

The central claim is that a hierarchical multi-fidelity neural network framework integrates structured low-fidelity representations of dominant physical trends with nonlinear multi-fidelity corrections trained on sparse high-fidelity experimental data. According to the paper, this combination accurately predicts three-dimensional flame wrinkling dynamics and turbulent mass burning velocity for expanding premixed flames across varying fuels, pressures, and turbulence intensities, and it supports both interpolation within observed conditions and robust extrapolation beyond the training domain.

What carries the argument

MuFiNNs, a hierarchical multi-fidelity neural network, carries the argument. It first builds low-fidelity representations hierarchically, then applies a nonlinear multi-fidelity correction that recovers the discrepancies between the simplified trend models and the observations.
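The two-stage idea can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the paper's architecture: the functions `low_fidelity` and `high_fidelity`, the synthetic data, and the feature set are all hypothetical, and a polynomial least-squares fit stands in for the neural network. The correction takes both the input and the low-fidelity prediction as features, following the composite form y_H ≈ F(x, y_L(x)) that is common in multi-fidelity learning.

```python
import numpy as np

def low_fidelity(x):
    """Hypothetical cheap trend model (stand-in for a physics-based trend)."""
    return np.sin(2.0 * np.pi * x)

def high_fidelity(x):
    """Hypothetical 'truth': a nonlinear function of the trend plus a drift."""
    return low_fidelity(x) ** 2 + 0.5 * x

def correction_features(x, y_low):
    """Features of the input and low-fidelity output: [1, x, y_L, y_L^2, x*y_L]."""
    return np.column_stack([np.ones_like(x), x, y_low, y_low**2, x * y_low])

def fit_correction(x_hf, y_hf):
    """Least-squares fit of y_H ~ F(x, y_L(x)) on sparse high-fidelity points."""
    A = correction_features(x_hf, low_fidelity(x_hf))
    coeffs, *_ = np.linalg.lstsq(A, y_hf, rcond=None)
    return coeffs

def predict(x, coeffs):
    """Evaluate the corrected (multi-fidelity) prediction at new inputs."""
    return correction_features(x, low_fidelity(x)) @ coeffs

# Only eight 'expensive' high-fidelity samples are used for the fit.
x_hf = np.linspace(0.0, 1.0, 8)
coeffs = fit_correction(x_hf, high_fidelity(x_hf))

# Compare the trend alone against the corrected prediction on a dense grid.
x_test = np.linspace(0.0, 1.0, 200)
err_low = np.max(np.abs(low_fidelity(x_test) - high_fidelity(x_test)))
err_multi = np.max(np.abs(predict(x_test, coeffs) - high_fidelity(x_test)))
```

The synthetic truth lies in the span of the chosen features by construction, so the corrected fit is essentially exact here. Real measurements would be noisy and would not be this tidy.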

If this is right

- MuFiNNs accurately reconstructs observed three-dimensional flame wrinkling and turbulent burning velocity from sparse high-fidelity measurements.

- The trained models enable interpolation across unseen combinations of fuel, pressure, and turbulence intensity.

- The framework demonstrates robust extrapolation to conditions beyond the training domain.

- MuFiNNs remains effective in noisy, weakly structured, or experimentally inaccessible regimes where conventional data-driven approaches fail.

Where Pith is reading between the lines

- The same hierarchical correction strategy could be tested on other turbulence-sensitive flows such as non-premixed flames or reacting jets where high-fidelity diagnostics are equally costly.

- If the low-fidelity trend models can be replaced by inexpensive simulations rather than analytic approximations, the framework might scale to full three-dimensional time-dependent predictions.

- The method points toward a general pattern for scientific machine learning: embed the dominant physics once in a cheap trend model and let data-driven corrections handle only the residuals.

Load-bearing premise

The low-fidelity trend models must already encode the main physical trends so that the learned nonlinear corrections can fill in the remaining gaps.

What would settle it

Collect new high-fidelity measurements at operating conditions far outside the training range where the low-fidelity models are known to deviate strongly from reality; if MuFiNNs predictions then show large systematic errors that do not improve with additional sparse data, the framework is falsified.

Original abstract

High-fidelity experimental characterization of turbulent premixed flames remains limited by the cost and complexity of advanced diagnostics, particularly under elevated pressures and intense turbulence where measurements of coupled flame morphology and burning dynamics are sparse. Here, we develop a hierarchical multi-fidelity neural network framework (MuFiNNs) to address this challenge by integrating sparse high-fidelity experimental data with structured low-fidelity representations encoding dominant physical trends. The framework combines hierarchical low-fidelity construction with nonlinear multi-fidelity correction to learn coupled geometric and reactive flame behavior while recovering discrepancies that simplified models alone cannot capture. The methodology is applied to expanding turbulent premixed flames to predict three-dimensional flame wrinkling dynamics and turbulent mass burning velocity across varying fuels, pressures, and turbulence intensities. Using experimentally informed low-fidelity trend models with sparse high-fidelity measurements, MuFiNNs accurately reconstruct observed flame behavior, enable interpolation across unseen operating conditions, and demonstrate robust extrapolation beyond the training domain. Importantly, the framework remains effective in noisy, weakly structured, or experimentally inaccessible regimes where conventional data-driven approaches often fail. These results show that hierarchical multi-fidelity learning provides a scalable and physically grounded strategy for predictive combustion modeling in data-limited regimes. More broadly, this work establishes multi-fidelity scientific machine learning as a practical framework for extracting physically meaningful predictive models from sparse experiments, particularly for instability-dominated and turbulence-sensitive reactive flows where high-fidelity data acquisition is demanding.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces a hierarchical multi-fidelity neural network framework (MuFiNNs) that combines structured low-fidelity trend models (informed by experimental physics) with sparse high-fidelity data to predict three-dimensional flame wrinkling dynamics and turbulent mass burning velocity for expanding turbulent premixed flames across fuels, pressures, and turbulence intensities. It claims the approach enables accurate reconstruction of observed behavior, interpolation to unseen operating conditions, and robust extrapolation beyond the training domain, remaining effective in noisy or data-limited regimes where conventional methods fail.

Significance. If the quantitative validation holds, the work would be significant for combustion modeling and scientific machine learning: it offers a scalable, physically grounded strategy for predictive modeling in data-scarce, turbulence-sensitive reactive flows by leveraging hierarchical low-fidelity encodings to recover discrepancies that simplified models miss. This could reduce reliance on costly high-fidelity diagnostics while producing falsifiable, extrapolative predictions.

major comments (2)

- [Abstract and Results] Abstract and Results (implied): The central claims of 'accurate reconstruction', 'enable interpolation', and 'robust extrapolation' are asserted without any quantitative metrics, error bars, validation protocols, cross-validation details, or data-exclusion criteria. This is load-bearing for the performance assertions and must be supported with specific measures (e.g., relative L2 errors, extrapolation error vs. distance from training domain) before the claims can be evaluated.

- [Methods] Methods (hierarchical construction): The weakest assumption—that low-fidelity trend models sufficiently encode dominant physical trends so the nonlinear correction recovers the rest—requires explicit validation. Without reported comparisons (e.g., low-fidelity-only predictions vs. MuFiNNs vs. high-fidelity data) or sensitivity tests on the low-fidelity model fidelity, it is unclear whether the multi-fidelity correction is genuinely learning physics or compensating for model deficiencies.
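The metrics requested in the first major comment can be made concrete with a short sketch. It shows a relative L2 error and one possible definition of "distance from the training domain", namely the Euclidean distance to the nearest training condition. That definition, the function names, and the example operating conditions are assumptions made for illustration; the report does not specify them.

```python
import numpy as np

def relative_l2_error(y_pred, y_true):
    """Relative L2 error: ||y_pred - y_true||_2 / ||y_true||_2."""
    y_pred = np.asarray(y_pred, dtype=float)
    y_true = np.asarray(y_true, dtype=float)
    return np.linalg.norm(y_pred - y_true) / np.linalg.norm(y_true)

def distance_to_training_domain(x_query, x_train):
    """Distance from each query condition to its nearest training condition.

    Both inputs are (n_points, n_features) arrays, assumed to be scaled
    to comparable units beforehand.
    """
    diffs = x_query[:, None, :] - x_train[None, :, :]
    return np.sqrt((diffs ** 2).sum(axis=-1)).min(axis=1)

# Hypothetical operating conditions:
# columns = (normalised pressure, normalised turbulence intensity).
x_train = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
x_query = np.array([[0.5, 0.5], [2.0, 1.0]])  # one interior, one outside
dist = distance_to_training_domain(x_query, x_train)

# Hypothetical predictions and measurements.
err = relative_l2_error([1.1, 1.9, 3.0], [1.0, 2.0, 3.0])
```

Plotting `relative_l2_error` for each held-out condition against its `dist` value would give the kind of error-versus-distance curve the referee asks for.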

minor comments (2)

- Notation for the hierarchical low-fidelity construction and the nonlinear correction term should be defined more explicitly, ideally with a schematic diagram or pseudocode, to improve reproducibility.

- The manuscript would benefit from a dedicated limitations section discussing the range of applicability (e.g., flame regimes where low-fidelity trends break down) and any observed failure modes during extrapolation.

Simulated Author's Rebuttal

We thank the referee for their constructive and insightful review of our manuscript. We have carefully considered each major comment and provide point-by-point responses below. Revisions have been made to address the concerns and strengthen the quantitative validation and methodological transparency of the work.

Referee: [Abstract and Results] Abstract and Results (implied): The central claims of 'accurate reconstruction', 'enable interpolation', and 'robust extrapolation' are asserted without any quantitative metrics, error bars, validation protocols, cross-validation details, or data-exclusion criteria. This is load-bearing for the performance assertions and must be supported with specific measures (e.g., relative L2 errors, extrapolation error vs. distance from training domain) before the claims can be evaluated.

Authors: We thank the referee for highlighting the importance of explicit quantitative support for these claims. We agree that the original presentation would benefit from additional metrics and details. In the revised manuscript, we have added a new subsection in the Results section reporting relative L2 errors for reconstruction, interpolation, and extrapolation tasks, along with error bars obtained from ensemble training runs. We have also included a description of the cross-validation protocol (k-fold with held-out test sets) and data-exclusion criteria for extrapolation experiments, including quantitative plots of prediction error versus distance from the training domain in operating-condition space.

Revision: yes

Referee: [Methods] Methods (hierarchical construction): The weakest assumption—that low-fidelity trend models sufficiently encode dominant physical trends so the nonlinear correction recovers the rest—requires explicit validation. Without reported comparisons (e.g., low-fidelity-only predictions vs. MuFiNNs vs. high-fidelity data) or sensitivity tests on the low-fidelity model fidelity, it is unclear whether the multi-fidelity correction is genuinely learning physics or compensating for model deficiencies.

Authors: We appreciate this comment on the need to validate the hierarchical construction. We agree that direct comparisons and sensitivity analyses strengthen the interpretation. In the revised manuscript, we have added new figures and tables that explicitly compare low-fidelity-only predictions, full MuFiNNs predictions, and high-fidelity experimental data across the range of fuels, pressures, and turbulence intensities. We have also performed and reported sensitivity tests in which the fidelity of the low-fidelity trend models was systematically varied (e.g., from simplified empirical forms to more detailed physics-informed representations), showing the resulting impact on the learned nonlinear correction term and overall accuracy. These additions demonstrate that the correction recovers physically meaningful discrepancies.

Revision: yes
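A k-fold protocol with held-out test sets, combined with the low-fidelity-only versus corrected comparison described in the responses, might look like the sketch below. All names and data are hypothetical (`trend`, the synthetic measurements, the quadratic residual fit). The additive residual correction is a deliberate simplification of the nonlinear correction the paper describes, and nothing here reproduces the authors' actual protocol.

```python
import numpy as np

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle indices once and split them into k nearly equal held-out folds."""
    rng = np.random.default_rng(seed)
    return np.array_split(rng.permutation(n_samples), k)

def cross_validate(x, y, fit, predict, k=5, seed=0):
    """Return the relative L2 error on each held-out fold."""
    folds = k_fold_indices(len(x), k, seed)
    errors = []
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        model = fit(x[train_idx], y[train_idx])
        resid = predict(model, x[test_idx]) - y[test_idx]
        errors.append(np.linalg.norm(resid) / np.linalg.norm(y[test_idx]))
    return np.array(errors)

def trend(x):
    """Hypothetical low-fidelity trend model."""
    return 1.0 + 2.0 * x

# Synthetic 'high-fidelity' data: the trend plus a discrepancy it misses.
x = np.linspace(0.0, 1.0, 40)
y = trend(x) + 0.8 * x**2

# Baseline: low-fidelity only, so there is nothing to fit.
lf_errors = cross_validate(
    x, y, fit=lambda xt, yt: None, predict=lambda model, xq: trend(xq)
)

# Corrected: fit a quadratic to the residual y - trend(x) on the training folds.
def fit_residual(xt, yt):
    return np.polyfit(xt, yt - trend(xt), deg=2)

def predict_corrected(coeffs, xq):
    return trend(xq) + np.polyval(coeffs, xq)

mf_errors = cross_validate(x, y, fit_residual, predict_corrected)
```

Reporting `lf_errors` and `mf_errors` side by side, fold by fold, is one way to show how much of the accuracy comes from the correction rather than from the trend model itself.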

Circularity Check

No significant circularity detected in derivation chain

The paper describes a hierarchical multi-fidelity neural network (MuFiNNs) that combines external experimental high-fidelity data with independently constructed low-fidelity trend models encoding physical trends. Predictions of flame wrinkling and turbulent burning velocity arise from training this network on sparse measurements to learn corrections, rather than from any internal redefinition, fitted parameter renamed as output, or self-citation chain. The abstract and methodology explicitly ground the approach in external data sources and low-fidelity representations that are not derived from the target quantities, rendering the framework self-contained with no load-bearing reductions to its own inputs.
