Spherical Boltzmann machines: a solvable theory of learning and generation in energy-based models

Pith reviewed 2026-05-12 02:00 UTC · model grok-4.3

The pith

The spherical Boltzmann machine provides an exactly solvable model for the training dynamics and phase transitions in energy-based generative models.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

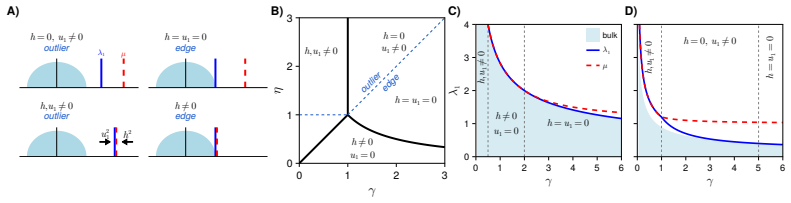

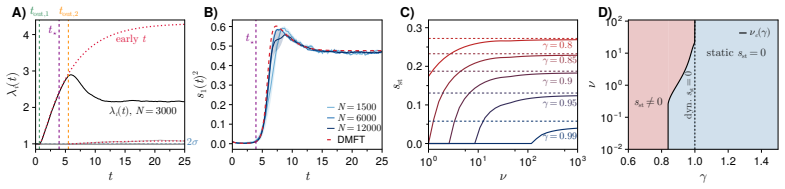

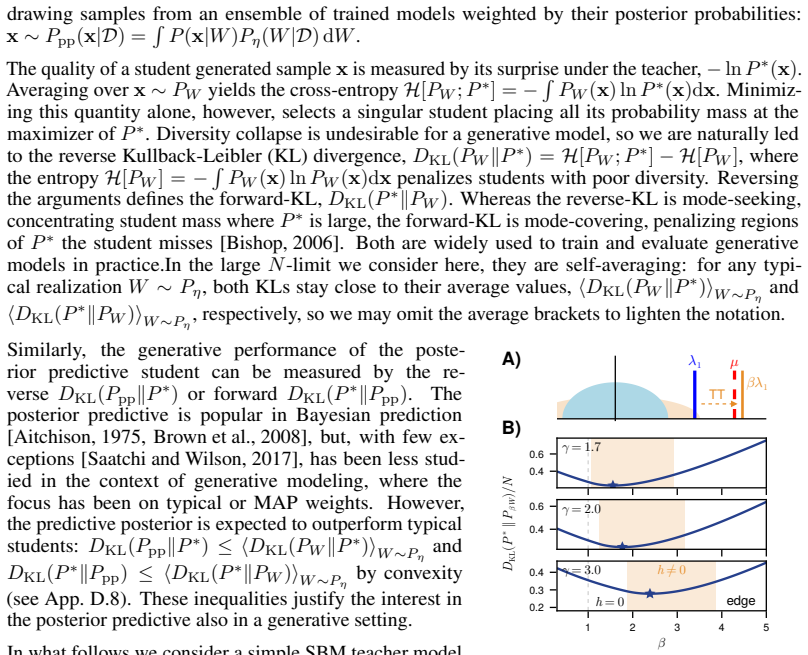

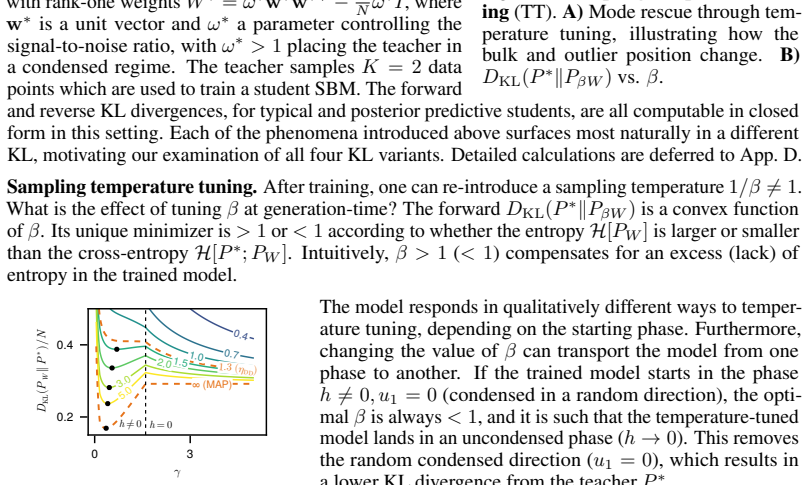

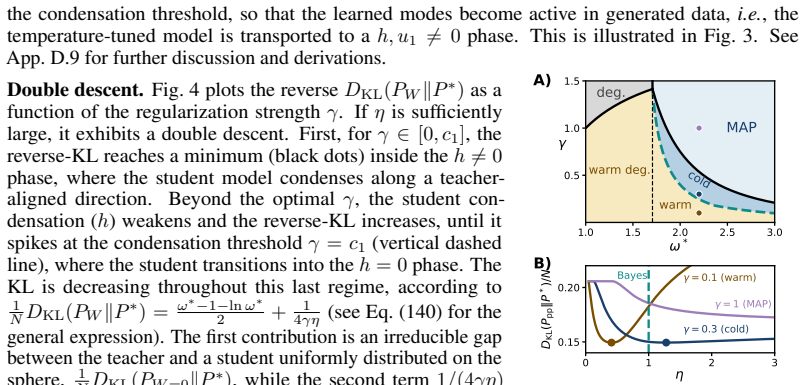

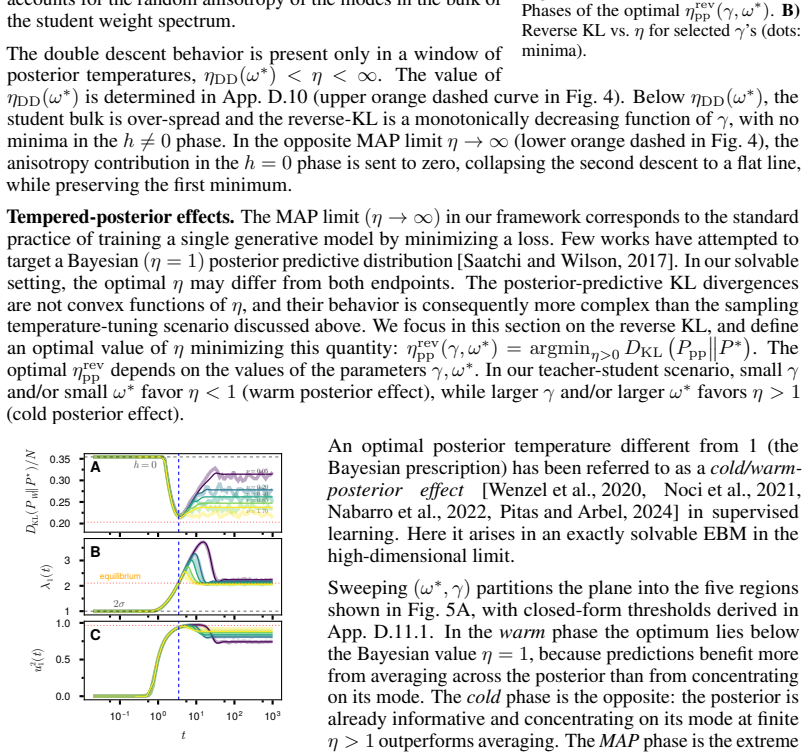

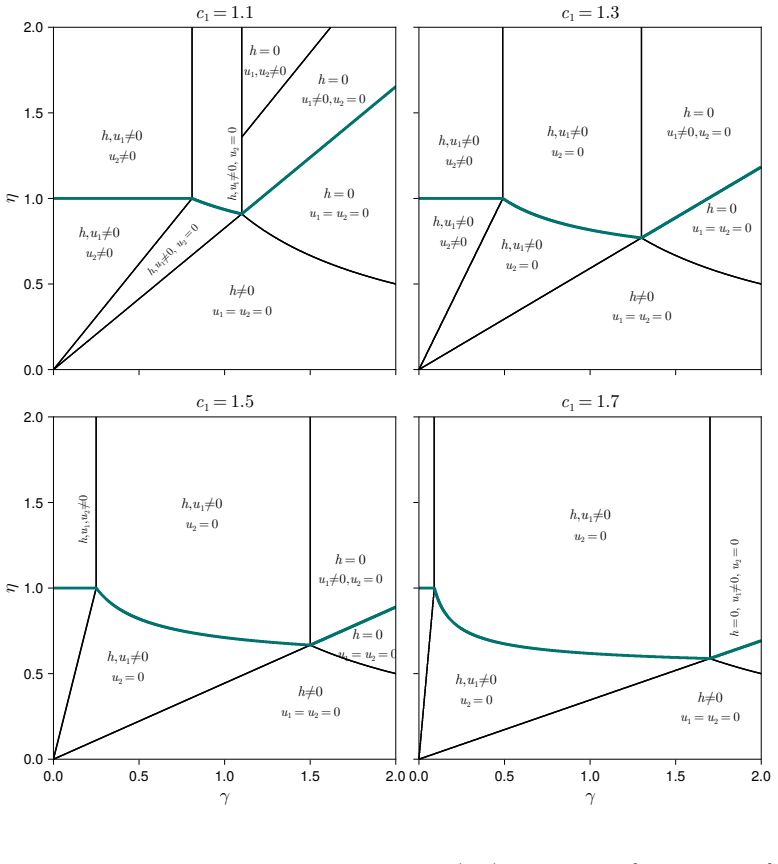

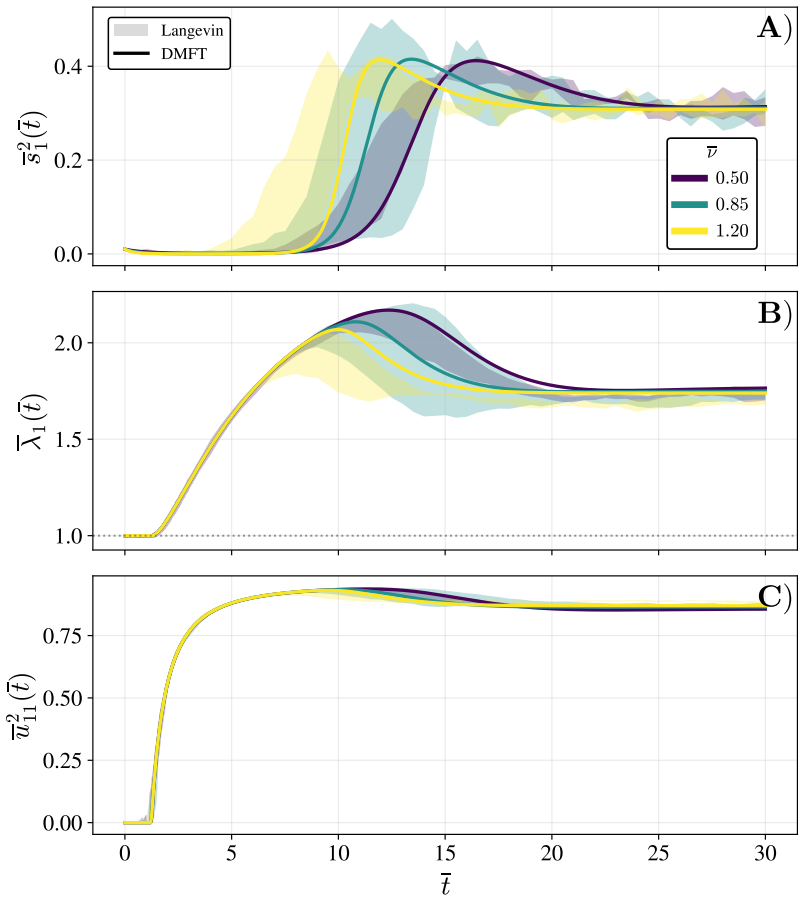

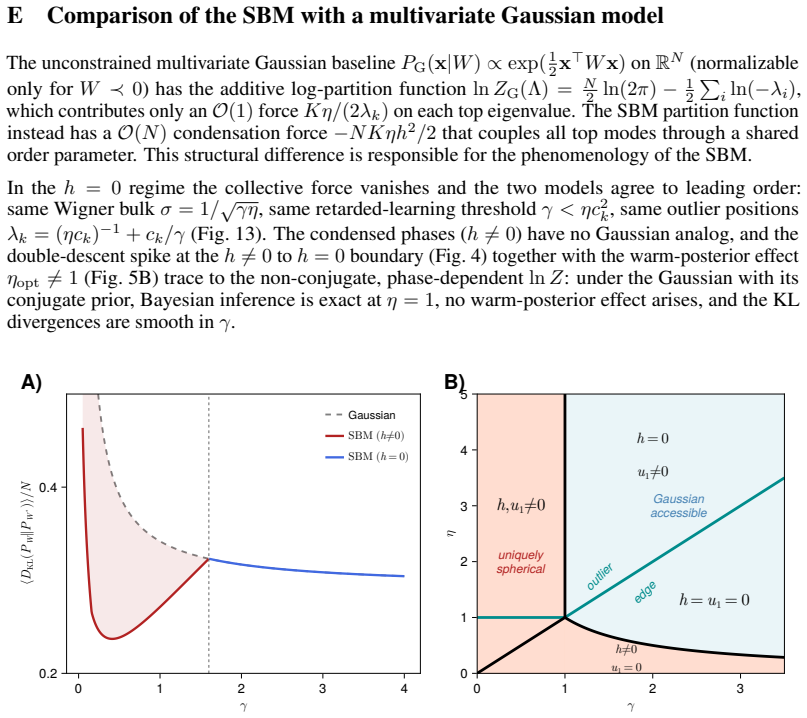

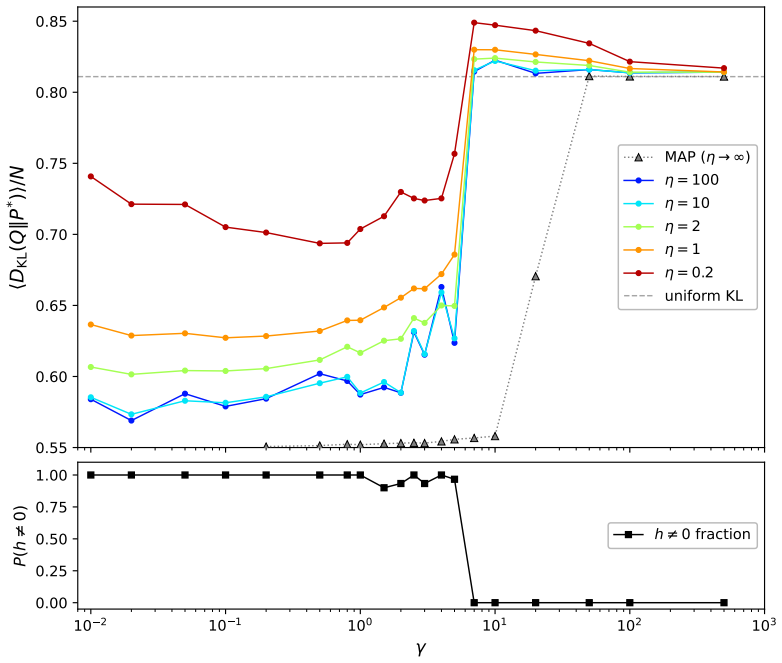

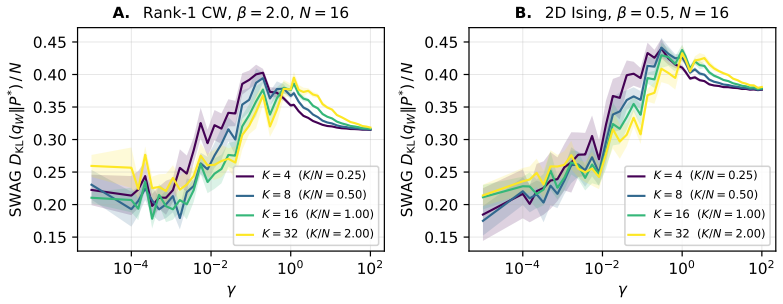

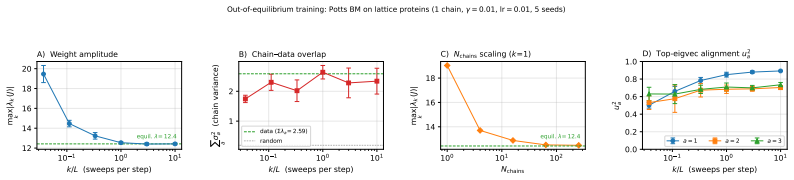

The spherical Boltzmann machine (SBM) allows exact solution of its training dynamics via random matrix and dynamical mean-field methods. The Bayesian evidence, acting as a partition function over parameters, reveals global properties of the trained model. Cascades of phase transitions arise from successive alignment and condensation of the top modes of the coupling matrix to the data, both during training and as hyperparameters change. These transitions connect to generative behaviors such as sampling temperature tuning, double descent with regularization, tempered posteriors, and out-of-equilibrium training biases.
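The alignment of a top mode with a data direction can be illustrated with a generic spiked random matrix. The sketch below is not the paper's model or its equations: it assumes a symmetric Gaussian noise matrix plus a planted rank-one direction `u` of strength `theta`, and all function names are hypothetical. In this textbook setting the squared overlap between the top eigenvector and `u` is known to approach `1 - 1/theta**2` for `theta > 1` and to vanish for `theta < 1` as the dimension grows.

```python
import numpy as np

def spiked_wigner(n, theta, rng):
    """Symmetric Gaussian noise (off-diagonal variance 1/n) plus a spike theta * u u^T."""
    g = rng.normal(size=(n, n))
    noise = (g + g.T) / np.sqrt(2 * n)
    u = rng.normal(size=n)
    u /= np.linalg.norm(u)  # planted unit direction (stand-in for a data mode)
    return noise + theta * np.outer(u, u), u

def top_mode_overlap(n, theta, seed=0):
    """Squared overlap between the top eigenvector and the planted direction."""
    rng = np.random.default_rng(seed)
    j, u = spiked_wigner(n, theta, rng)
    _, eigvecs = np.linalg.eigh(j)  # eigenvalues in ascending order
    top = eigvecs[:, -1]
    return float(np.dot(u, top) ** 2)

# Illustrative values only: one spike below and one above the threshold theta = 1.
weak = top_mode_overlap(400, 0.3)
strong = top_mode_overlap(400, 3.0)
print(f"overlap^2 below threshold: {weak:.3f}")
print(f"overlap^2 above threshold: {strong:.3f}")
```

A cascade of such transitions, one per mode, is the qualitative picture the core claim describes; the thresholds in the actual SBM depend on quantities this toy example does not model.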

What carries the argument

Random matrix theory and dynamical mean-field theory applied to the spherical Boltzmann machine. Together they yield exact equations for the training dynamics and a closed form for the Bayesian evidence, which expose the phase transitions driven by mode alignment.
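For orientation, the objects named here have standard textbook definitions, written below in assumed notation that may differ from the paper's: $J$ is the coupling matrix, $D = \{x^1, \dots, x^M\}$ the training data, $P(J)$ a prior, $\beta$ an inverse posterior temperature, and $T$ a sampling temperature.

```latex
% Standard definitions in assumed notation; not copied from the paper.
\begin{align}
  Z(D) &= \int \mathrm{d}J \; P(J) \prod_{\mu=1}^{M} P(x^{\mu} \mid J)
       && \text{(Bayesian evidence)} \\
  P_{\beta}(J \mid D) &\propto P(J) \Big[ \prod_{\mu=1}^{M} P(x^{\mu} \mid J) \Big]^{\beta}
       && \text{(tempered posterior)} \\
  P_{T}(x \mid J) &\propto \exp\!\big( -E_J(x) / T \big)
       && \text{(sampling at temperature } T\text{)}
\end{align}
```

The evidence integrates over parameters in the same way a partition function sums over configurations, which is the sense in which it "acts as a partition function in parameter space".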

Load-bearing premise

The analysis is carried out in the high-dimensional limit under a spherical constraint; only numerical evidence connects it to finite-dimensional, non-spherical models.

What would settle it

Observing no phase transitions, no double descent, or no tempered-posterior effects in a finite-dimensional, non-spherical energy-based model trained in the same way would falsify the generality claim.

Original abstract

Energy-based models (EBMs) are flexible generative architectures inspired by statistical physics, but their learning and generative properties remain poorly understood. Here, we analyze a solvable EBM in the high-dimensional limit: the spherical Boltzmann machine (SBM). Combining tools from random matrix theory and dynamical mean-field theory, we: solve exact equations describing the training dynamics of the SBM; compute the Bayesian evidence, which acts as a partition function in parameter space and encodes global properties of the trained model; and uncover cascades of phase transitions that occur both during training and as a function of hyperparameters, related to successive alignment and condensation of the top modes of the coupling matrix to the data. We connect these transitions to sampling-time generative phenomena in a teacher-student scenario, including: sampling temperature tuning, double descent as a function of regularization strength, tempered posterior effects, and out-of-equilibrium effects during training that induce biases in the trained model. We provide numerical evidence demonstrating that all these phenomena appear in standard generative architectures, beyond the SBM.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper analyzes the spherical Boltzmann machine (SBM) as a solvable high-dimensional energy-based model. Using random matrix theory and dynamical mean-field theory, it derives exact equations for the training dynamics, computes the Bayesian evidence as a partition function over parameters, and identifies cascades of phase transitions tied to successive alignment and condensation of the top modes of the coupling matrix. These transitions are connected to generative phenomena including sampling temperature effects, double descent under regularization, tempered posteriors, and training-induced biases, with numerical evidence that the same phenomena appear in standard (non-spherical, finite-dimensional) EBMs.

Significance. If the exact high-dimensional derivations hold and the numerical mappings are robust, the work supplies a rare solvable limit that explains several otherwise opaque behaviors in EBM training and sampling. The combination of RMT and DMFT to obtain closed equations for dynamics and evidence is a clear technical strength, and the identification of mode-condensation transitions offers a concrete mechanism for double-descent and out-of-equilibrium biases.

major comments (1)

- [Numerical experiments (section describing validation on standard architectures)] The central claim that the SBM phase transitions and generative phenomena explain behavior in practical EBMs rests on numerical evidence whose quantitative accuracy is not assessed. No finite-N scaling, deviation-from-sphericity metrics, or error bars on critical hyperparameter values are reported, so it is impossible to judge how faithfully the high-dimensional spherical predictions carry over to finite non-spherical models.
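A finite-N scaling check with error bars, of the kind this comment asks for, could look like the sketch below. It is a stand-in under stated assumptions rather than the paper's experiment: the observable is the top eigenvalue of a spiked Gaussian matrix, whose large-N limit `theta + 1/theta` (for `theta > 1`) is a known random-matrix result, and the function names, sizes, and run counts are hypothetical.

```python
import numpy as np

def top_eigenvalue(n, theta, rng):
    """Top eigenvalue of symmetric Gaussian noise plus a rank-one spike of strength theta."""
    g = rng.normal(size=(n, n))
    j = (g + g.T) / np.sqrt(2 * n)
    j[0, 0] += theta  # spike along the first basis vector (noise is rotation invariant)
    return float(np.linalg.eigvalsh(j)[-1])

def finite_size_scan(sizes, theta, n_runs=8, seed=0):
    """Mean and standard error of the observable over independent runs, per size."""
    rng = np.random.default_rng(seed)
    rows = []
    for n in sizes:
        samples = np.array([top_eigenvalue(n, theta, rng) for _ in range(n_runs)])
        sem = samples.std(ddof=1) / np.sqrt(n_runs)
        rows.append((n, float(samples.mean()), float(sem)))
    return rows

theta = 2.0
limit = theta + 1.0 / theta  # large-N prediction for theta > 1
scan = finite_size_scan([50, 100, 200, 400], theta)
for n, mean, sem in scan:
    print(f"N={n:4d}  top eigenvalue = {mean:.3f} +/- {sem:.3f}  (limit {limit:.3f})")
```

Plotting the gap to the limit against N, with the standard errors as bars, would show whether a finite model is converging toward the high-dimensional prediction or settling elsewhere.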

minor comments (1)

- [Theory sections] Notation for the spherical constraint and the coupling-matrix eigenvalues should be introduced once with a clear table or glossary, as the same symbols appear in both the RMT and DMFT sections.

Simulated Author's Rebuttal

We thank the referee for their careful reading and constructive feedback. We address the single major comment below and outline the revisions we will make to strengthen the numerical validation.

- Referee: The central claim that the SBM phase transitions and generative phenomena explain behavior in practical EBMs rests on numerical evidence whose quantitative accuracy is not assessed. No finite-N scaling, deviation-from-sphericity metrics, or error bars on critical hyperparameter values are reported, so it is impossible to judge how faithfully the high-dimensional spherical predictions carry over to finite non-spherical models.

  Authors: We agree that the current numerical section provides primarily qualitative demonstrations that the phenomena appear in standard architectures, without quantitative metrics of agreement or robustness. This limitation weakens the mapping claim. In the revised manuscript we will add: (i) error bars on all reported critical hyperparameter values, obtained from multiple independent runs; (ii) finite-N scaling plots for the non-spherical models to illustrate convergence toward the high-dimensional predictions; and (iii) explicit deviation-from-sphericity metrics (e.g., the Frobenius distance of the trained coupling matrix from its spherical projection) evaluated at the observed transition points. These additions will allow a clearer assessment of how faithfully the spherical limit carries over.

  revision: yes
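The deviation-from-sphericity metric mentioned in the rebuttal is not defined on this page. One plausible reading, sketched here purely as an assumption, treats the "spherical projection" as a rescaling of the coupling matrix to a fixed Frobenius norm `radius`; the function names and the normalization by `radius` are hypothetical choices.

```python
import numpy as np

def spherical_projection(j, radius):
    """Rescale j so its Frobenius norm equals radius (assumed convention)."""
    norm = np.linalg.norm(j)  # Frobenius norm for a 2-D array
    if norm == 0.0:
        raise ValueError("cannot project the zero matrix onto a sphere")
    return j * (radius / norm)

def sphericity_deviation(j, radius):
    """Frobenius distance between j and its projection, in units of radius."""
    return float(np.linalg.norm(j - spherical_projection(j, radius)) / radius)

# Toy check: the 4x4 identity has Frobenius norm 2.
on_sphere = sphericity_deviation(np.eye(4), radius=2.0)
off_sphere = sphericity_deviation(2.0 * np.eye(4), radius=2.0)
print(on_sphere, off_sphere)
```

Under this convention the metric reduces to the relative mismatch between the matrix norm and the sphere radius, so it is zero exactly when the trained matrix already satisfies the constraint.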

Circularity Check

No circularity: exact solutions derived from external RMT and DMFT frameworks

The paper solves exact training dynamics, Bayesian evidence, and phase transitions for the spherical Boltzmann machine by combining random matrix theory and dynamical mean-field theory in the high-dimensional spherical limit. These are independent external mathematical tools applied to the model, not reductions of the model's own fitted parameters, data, or self-citations. The subsequent connections to sampling phenomena and numerical checks on standard EBMs are presented as separate evidence rather than load-bearing derivations. No self-definitional steps, fitted inputs renamed as predictions, or ansatz smuggling via self-citation appear in the derivation chain. The analysis is self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: High-dimensional limit (N→∞) with spherical constraint on weights
