Recognition: no theorem link

Accelerating Zeroth-Order Spectral Optimization with Partial Orthogonalization from Power Iteration

Pith reviewed 2026-05-12 02:14 UTC · model grok-4.3

The pith

Partial orthogonalization from power iteration speeds up zeroth-order spectral optimization for LLM fine-tuning.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Full orthogonalization of zeroth-order gradient estimates harms performance because the estimates are too noisy; replacing it with partial orthogonalization obtained from a streaming power-iteration method applied inside a momentum-projected subspace recovers the spectral advantage and accelerates convergence.

What carries the argument

Partial orthogonalization, which uses power iteration instead of Newton-Schulz to strengthen only dominant spectral directions while restricting updates to a low-variance momentum subspace.

Load-bearing premise

That noisy zeroth-order gradient estimates make complete orthogonalization harmful while power iteration focused on dominant directions remains beneficial.

What would settle it

An experiment that applies full Newton-Schulz orthogonalization to the identical zeroth-order gradient estimates and still records faster or equal convergence speed would remove the stated reason for switching to power iteration.

Figures

read the original abstract

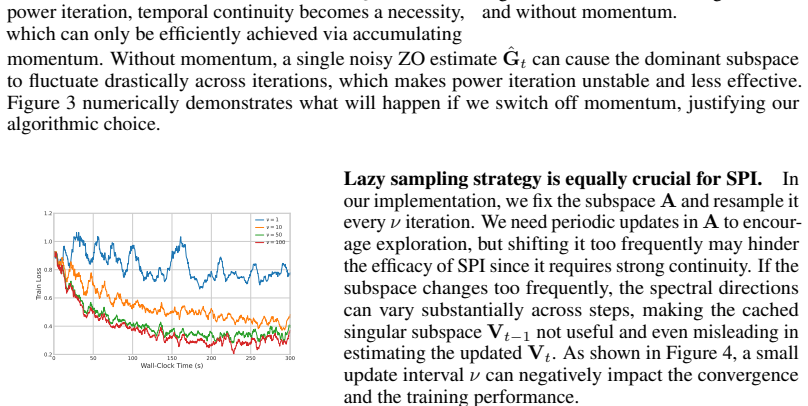

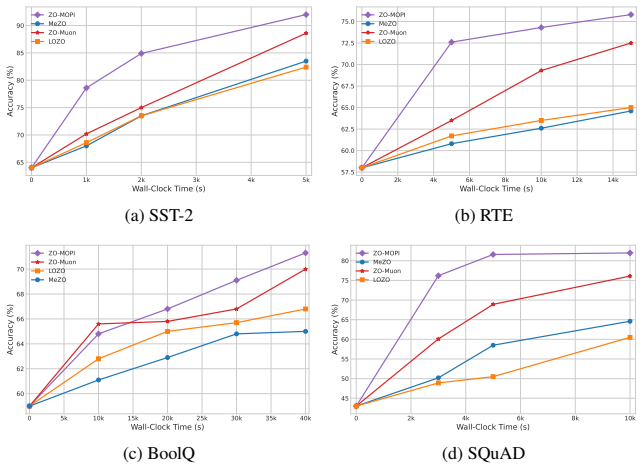

Zeroth-order (ZO) optimization has become increasingly popular and important in fine-tuning large language models (LLMs), especially on edge devices due to its ability to adjust the model to local data without the need for memory-intensive back-propagation. Recent works try to reduce ZO variance through low-dimensional subspace search, but subspace restriction alone leaves key optimization geometry under-exploited, motivating additional acceleration. In this work, we focus on the hidden layer training problem in which spectral optimizers like Muon outperform AdamW due to its ability to exploit weak spectral directions by orthogonalization. However, we have discovered that unlike in the first-order setting, full orthogonalization works poorly in the ZO setting since the gradient estimates are highly noisy and unreliable. To address this issue, we propose a key approach we call partial orthogonalization. To do so, we replace the iconic Newton-Schulz procedure in Muon with the faster, more concentrated power-iteration method so that it only amplifies dominant spectral directions. Furthermore, to improve the efficiency and generalization of the algorithm, we adopted a streaming variant of power-iteration that requires low variance in gradients, which was achieved through constraining our search inside a subspace obtained through the projection of momentum, echoing recent advances. Experiments on LLM fine-tuning show that our method can achieve from 1.5x to 4x the convergence speed of ZO-Muon, the current SOTA algorithm, across SuperGlue datasets in the OPT-13B model. Across different models, we also reach competitive final accuracies with less time in most cases compared with strong ZO baselines such as MeZO, LOZO and ZO-Muon. Code is available at https://github.com/MOFA-LAB/ZO-MOPI.git.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes partial orthogonalization for zeroth-order spectral optimization in LLM fine-tuning. It replaces the Newton-Schulz procedure from Muon with a power-iteration method to amplify only dominant spectral directions under noisy ZO gradients, combined with a streaming power-iteration variant and momentum-subspace projection for variance reduction. The central claim is that this yields 1.5x to 4x faster convergence than ZO-Muon on the OPT-13B model across SuperGlue datasets, with competitive final accuracies versus MeZO, LOZO, and ZO-Muon baselines. Code is provided at https://github.com/MOFA-LAB/ZO-MOPI.git.

Significance. If the reported speedups are reproducible and attributable to the partial-orthogonalization change, the work would offer a practical improvement to ZO methods for memory-efficient LLM adaptation by exploiting spectral geometry more robustly under noise. The open-sourced implementation is a clear strength supporting verification and extension.

major comments (3)

- The central empirical claim of 1.5x–4x acceleration over ZO-Muon rests on experiments whose setup is not detailed (no mention of run count, error bars, hyperparameter grids, or statistical significance tests). This directly affects assessment of whether the gains are reliable and generalizable.

- The motivation that full orthogonalization (Newton-Schulz) fails in the ZO regime because of unreliable gradient estimates is stated without supporting measurements, such as variance of the estimated spectral directions or side-by-side comparison of full versus partial orthogonalization under identical momentum-subspace constraints.

- No ablation isolating the power-iteration replacement from the momentum-subspace projection is reported. For example, there is no experiment comparing ZO-Muon augmented only with the subspace projection but retaining full Newton-Schulz, so the specific contribution of partial orthogonalization to the observed speedups cannot be verified.

minor comments (2)

- The description of the streaming power-iteration update and the exact form of the momentum-subspace projection would benefit from explicit pseudocode or additional equations to aid re-implementation.

- Figure captions and axis labels in the experimental plots should explicitly state the number of independent runs and whether shaded regions represent standard deviation or standard error.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. The comments identify key areas where additional details and evidence will strengthen the manuscript. We address each major comment below and will revise accordingly.

read point-by-point responses

-

Referee: The central empirical claim of 1.5x–4x acceleration over ZO-Muon rests on experiments whose setup is not detailed (no mention of run count, error bars, hyperparameter grids, or statistical significance tests). This directly affects assessment of whether the gains are reliable and generalizable.

Authors: We agree that the experimental protocol requires more detail for reproducibility and to support the reliability of the claims. In the revised manuscript we will expand Section 4 to explicitly state the number of independent runs (3 random seeds), report standard deviation error bars on all convergence plots, describe the hyperparameter grids and tuning procedure applied to each baseline and our method, and include statistical significance tests (paired t-tests on final accuracies) where relevant. The reported speedups reflect wall-clock time to target accuracy on SuperGLUE tasks with OPT-13B. revision: yes

-

Referee: The motivation that full orthogonalization (Newton-Schulz) fails in the ZO regime because of unreliable gradient estimates is stated without supporting measurements, such as variance of the estimated spectral directions or side-by-side comparison of full versus partial orthogonalization under identical momentum-subspace constraints.

Authors: We acknowledge that the manuscript presents the motivation without accompanying quantitative measurements. Our observation arose from early development runs showing unstable updates with full Newton-Schulz under ZO noise. We will add a new figure and accompanying text in the revision that reports the variance of estimated dominant singular vectors for full Newton-Schulz versus partial power iteration, both with and without the momentum-subspace projection, thereby providing direct evidence for the robustness advantage of partial orthogonalization. revision: yes

-

Referee: No ablation isolating the power-iteration replacement from the momentum-subspace projection is reported. For example, there is no experiment comparing ZO-Muon augmented only with the subspace projection but retaining full Newton-Schulz, so the specific contribution of partial orthogonalization to the observed speedups cannot be verified.

Authors: We agree that isolating the contribution of the partial-orthogonalization change is important. The current experiments compare the complete proposed algorithm against ZO-Muon. We will add an ablation study in the revised experimental section that includes ZO-Muon augmented solely with the momentum-subspace projection (retaining Newton-Schulz) alongside the full ZO-MOPI method. This will allow direct verification of the incremental benefit from replacing Newton-Schulz with power iteration. revision: yes

Circularity Check

No circularity in derivation; algorithmic proposal validated empirically

full rationale

The paper proposes a practical algorithmic change—replacing Newton-Schulz full orthogonalization in Muon with a streaming power-iteration partial orthogonalization combined with momentum-subspace projection—to address observed noise in zeroth-order gradient estimates. No equations are presented that reduce the method or its claimed speedups to self-definitions, fitted parameters renamed as predictions, or load-bearing self-citations. The central claims rest on empirical results across SuperGlue tasks and OPT-13B, which are independent of the derivation itself. The approach echoes prior subspace ideas but does not smuggle in ansatzes or uniqueness theorems from the authors' own prior work as unverified axioms. This is a standard self-contained algorithmic contribution.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Yiming Chen, Yuan Zhang, Liyuan Cao, Kun Yuan, and Zaiwen Wen. Enhancing zeroth-order fine-tuning for language models with low-rank structures.arXiv preprint arXiv:2410.07698,

-

[2]

Boolq: Exploring the surprising difficulty of natural yes/no questions

Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. Boolq: Exploring the surprising difficulty of natural yes/no questions. InProceedings of the 2019 conference of the north American chapter of the association for computational linguistics: Human language technologies, volume 1 (long and short papers), ...

work page 2019

-

[3]

Tanmay Gautam, Youngsuk Park, Hao Zhou, Parameswaran Raman, and Wooseok Ha. Variance- reduced zeroth-order methods for fine-tuning language models.arXiv preprint arXiv:2404.08080,

-

[4]

Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. The llama 3 herd of models.arXiv preprint arXiv:2407.21783,

work page internal anchor Pith review Pith/arXiv arXiv

-

[5]

Spectra: Rethinking optimizers for llms under spectral anisotropy.arXiv preprint arXiv:2602.11185,

Zhendong Huang, Hengjie Cao, Fang Dong, Ruijun Huang, Mengyi Chen, Yifeng Yang, Xin Zhang, Anrui Chen, Mingzhi Dong, Yujiang Wang, et al. Spectra: Rethinking optimizers for llms under spectral anisotropy.arXiv preprint arXiv:2602.11185,

-

[6]

Muon: An optimizer for hidden layers in neural networks, 2024.URL https://kellerjordan

Keller Jordan, Yuchen Jin, Vlado Boza, You Jiacheng, Franz Cesista, Laker Newhouse, and Jeremy Bernstein. Muon: An optimizer for hidden layers in neural networks, 2024.URL https://kellerjordan. github. io/posts/muon, 6(3):4,

work page 2024

-

[7]

Adam: A Method for Stochastic Optimization

Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization.arXiv preprint arXiv:1412.6980,

work page internal anchor Pith review Pith/arXiv arXiv

-

[8]

10 Yicheng Lang, Changsheng Wang, Yihua Zhang, Mingyi Hong, Zheng Zhang, Wotao Yin, and Sijia Liu. Powering up zeroth-order training via subspace gradient orthogonalization.arXiv preprint arXiv:2602.17155,

-

[9]

Yong Liu, Zirui Zhu, Chaoyu Gong, Minhao Cheng, Cho-Jui Hsieh, and Yang You. Sparse mezo: Less parameters for better performance in zeroth-order llm fine-tuning.arXiv preprint arXiv:2402.15751,

-

[10]

Egor Petrov, Grigoriy Evseev, Aleksey Antonov, Andrey Veprikov, Nikolay Bushkov, Stanislav Moiseev, and Aleksandr Beznosikov. Leveraging coordinate momentum in signsgd and muon: Memory-optimized zero-order.arXiv preprint arXiv:2506.04430,

-

[11]

Wic: the word-in-context dataset for evaluating context-sensitive meaning representations

Mohammad Taher Pilehvar and Jose Camacho-Collados. Wic: the word-in-context dataset for evaluating context-sensitive meaning representations. InProceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 1267–1273,

work page 2019

-

[12]

Squad: 100,000+ questions for machine comprehension of text

Pranav Rajpurkar, Jian Zhang, Konstantin Lopyrev, and Percy Liang. Squad: 100,000+ questions for machine comprehension of text. InProceedings of the 2016 conference on empirical methods in natural language processing, pages 2383–2392,

work page 2016

-

[13]

Multitask Prompted Training Enables Zero-Shot Task Generalization

Victor Sanh, Albert Webson, Colin Raffel, Stephen H Bach, Lintang Sutawika, Zaid Alyafeai, Antoine Chaffin, Arnaud Stiegler, Teven Le Scao, Arun Raja, et al. Multitask prompted training enables zero-shot task generalization.arXiv preprint arXiv:2110.08207,

work page internal anchor Pith review arXiv

-

[14]

Refining adaptive zeroth-order optimization at ease.arXiv preprint arXiv:2502.01014,

Yao Shu, Qixin Zhang, Kun He, and Zhongxiang Dai. Refining adaptive zeroth-order optimization at ease.arXiv preprint arXiv:2502.01014,

-

[15]

Recursive deep models for semantic compositionality over a sentiment treebank

Richard Socher, Alex Perelygin, Jean Wu, Jason Chuang, Christopher D Manning, Andrew Y Ng, and Christopher Potts. Recursive deep models for semantic compositionality over a sentiment treebank. InProceedings of the 2013 conference on empirical methods in natural language processing, pages 1631–1642,

work page 2013

-

[16]

Gemma 2: Improving Open Language Models at a Practical Size

Gemma Team, Morgane Riviere, Shreya Pathak, Pier Giuseppe Sessa, Cassidy Hardin, Surya Bhu- patiraju, Léonard Hussenot, Thomas Mesnard, Bobak Shahriari, Alexandre Ramé, et al. Gemma 2: Improving open language models at a practical size, 2024.URL https://arxiv. org/abs/2408.00118, 1(3),

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[17]

Soap: Improving and stabilizing shampoo using adam.arXiv preprint arXiv:2409.11321, 2024

11 Nikhil Vyas, Depen Morwani, Rosie Zhao, Mujin Kwun, Itai Shapira, David Brandfonbrener, Lucas Janson, and Sham Kakade. Soap: Improving and stabilizing shampoo using adam.arXiv preprint arXiv:2409.11321,

-

[18]

OPT: Open Pre-trained Transformer Language Models

Susan Zhang, Stephen Roller, Naman Goyal, Mikel Artetxe, Moya Chen, Shuohui Chen, Christopher Dewan, Mona Diab, Xian Li, Xi Victoria Lin, et al. Opt: Open pre-trained transformer language models.arXiv preprint arXiv:2205.01068,

work page internal anchor Pith review Pith/arXiv arXiv

-

[19]

Yihua Zhang, Pingzhi Li, Junyuan Hong, Jiaxiang Li, Yimeng Zhang, Wenqing Zheng, Pin-Yu Chen, Jason D Lee, Wotao Yin, Mingyi Hong, et al. Revisiting zeroth-order optimization for memory-efficient llm fine-tuning: A benchmark.arXiv preprint arXiv:2402.11592,

-

[20]

Yanjun Zhao, Sizhe Dang, Haishan Ye, Guang Dai, Yi Qian, and Ivor W Tsang. Second-order fine-tuning without pain for llms: A hessian informed zeroth-order optimizer.arXiv preprint arXiv:2402.15173,

-

[21]

12 A Analysis of Subspace Power Iteration In Section 4, for computation efficiency, we operate the streaming power iteration (SPI) in the subspace instead of the full space, and in this section, we try to prove that the projection-based SPI is equivalent to the full-space SPI. Let the SVD ofM∈R m×n be M=Udiag(σ)V ⊤,(13) and let U[:,1:k] and V[:,1:k] denot...

work page 2018

-

[22]

We compare our method with ZO baselines through fine-tuning OPT-13B on SST-2. Our method reaches the highest accuracy of 91.7%, with almost the same wall-clock time and slightly additional memory usage. These results show that our method achieves a better accuracy performance without the requirement of extra memory and computation overhead. D Hyperparamet...

work page 2024

-

[23]

to ensure the same total query budget with other baselines. For LoRA (Malladi et al., 2023), 17 0 100 200 300 400 500 Wall-Clock Time (s) 60 65 70 75 80 85 90Accuracy (%) k=8 k=16 k=32 k=64 0 100 200 300 400 500 Wall-Clock Time (s) 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1.0Train Loss k=8 k=16 k=32 k=64 (a) Accuracy vs. wall-clock time (b) Training loss vs. wall-...

work page 2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.