ISAC for AI: A Trade-off Framework Across Data Acquisition and Transfer in Federated Learning

Pith reviewed 2026-05-13 05:19 UTC · model grok-4.3

The pith

A closed-form bound on the learning gap lets devices split limited energy between sensing new data and reliably uploading model updates in federated learning.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

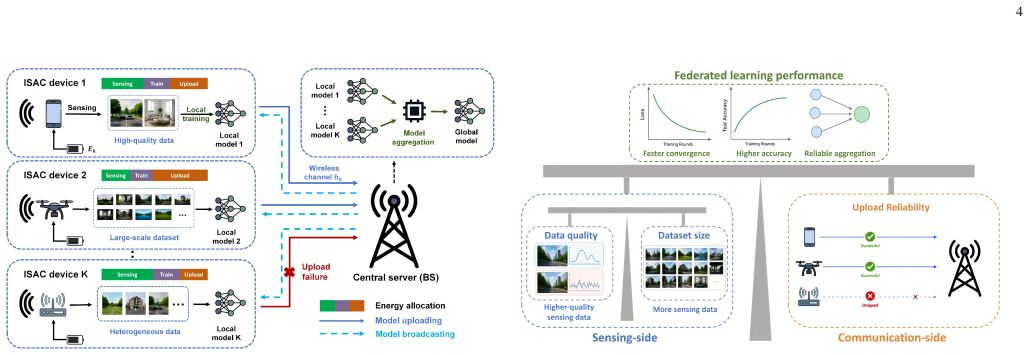

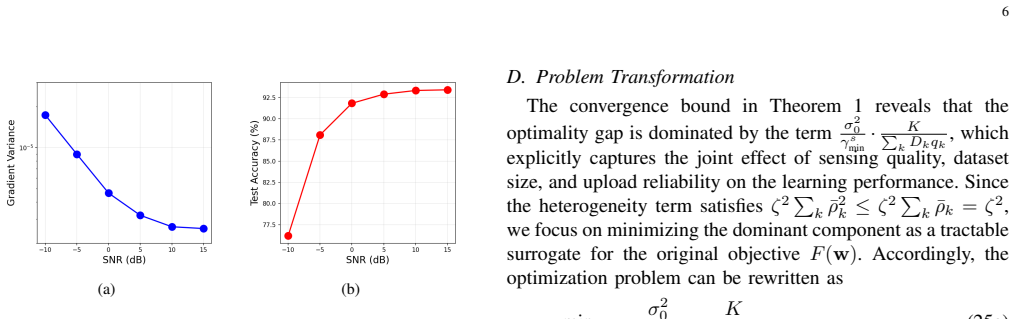

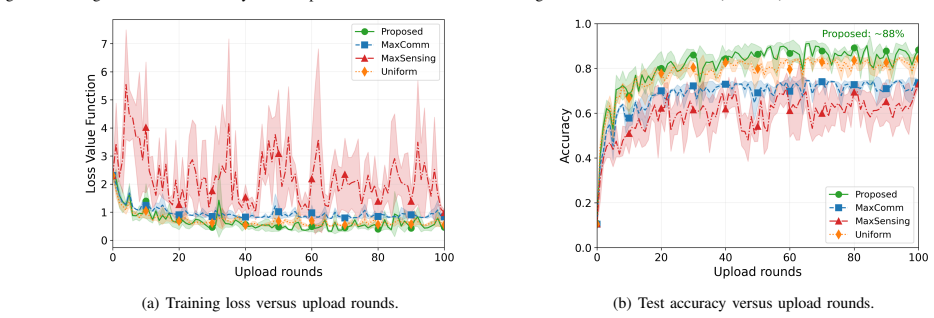

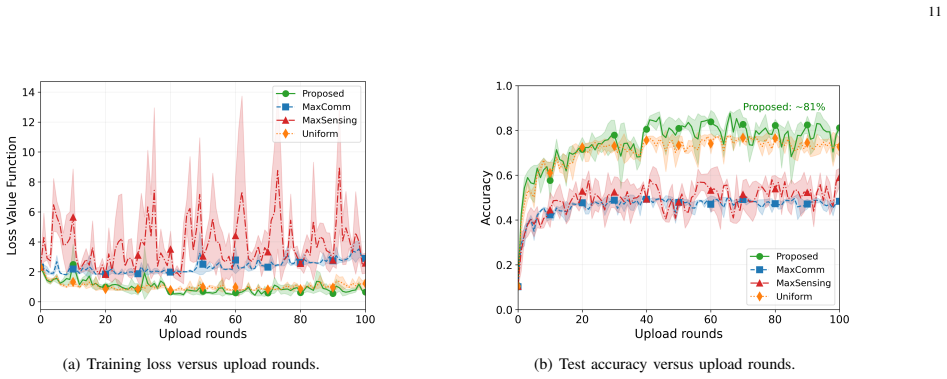

The paper establishes that the combined effects of sensing signal-to-noise ratio, the number of sensing snapshots that set local dataset size, and the probability of successful model upload can be expressed in a single closed-form upper bound on the federated learning optimality gap. This bound converts the original non-convex problem into a tractable resource allocation task that jointly optimizes sensing transmit power, snapshot count, and communication transmit power at each device subject only to per-device energy limits. The solution proceeds via an outer golden-section search over one scalar variable and inner closed-form solutions for the remaining variables.
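The two-layer structure described above can be sketched as follows. This is a minimal illustration, not the paper's algorithm: the per-device cost `a / (gamma * E) + b / ((1 - gamma) * E)`, the weights `a` and `b`, and the names `device_cost`, `total_cost`, and `devices` are all hypothetical stand-ins for the inner closed-form solve, chosen only because the resulting objective is unimodal in the outer scalar `gamma`.

```python
import math

INV_PHI = (math.sqrt(5) - 1) / 2  # 1/phi, about 0.618


def golden_section_min(f, lo, hi, tol=1e-6):
    """Return an approximate minimizer of a unimodal scalar function on [lo, hi]."""
    a, b = lo, hi
    c = b - INV_PHI * (b - a)
    d = a + INV_PHI * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc < fd:
            # Minimum lies in [a, d]; the old c becomes the new upper interior point.
            b, d, fd = d, c, fc
            c = b - INV_PHI * (b - a)
            fc = f(c)
        else:
            # Minimum lies in [c, b]; the old d becomes the new lower interior point.
            a, c, fc = c, d, fd
            d = a + INV_PHI * (b - a)
            fd = f(d)
    return (a + b) / 2


def device_cost(gamma, energy, a, b):
    """Hypothetical inner layer: evaluated in closed form for a fixed outer gamma."""
    sensing_energy = gamma * energy
    comm_energy = (1.0 - gamma) * energy
    return a / sensing_energy + b / comm_energy


def total_cost(gamma, devices):
    """Sum of per-device costs; one pass over the devices, so linear in their number."""
    return sum(device_cost(gamma, e, a, b) for (e, a, b) in devices)


# (energy budget, sensing weight, communication weight) per device, all made up.
devices = [(1.0, 0.5, 0.2), (2.0, 0.3, 0.4), (1.5, 0.8, 0.1)]
gamma_star = golden_section_min(lambda g: total_cost(g, devices), 1e-3, 1 - 1e-3)
print(f"sensing share of energy: {gamma_star:.4f}")
```

Each outer iteration costs one pass over the devices, which is where the linear scaling in the number of devices would come from under this kind of decomposition.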

What carries the argument

The argument rests on the closed-form convergence upper bound, which folds sensing signal quality, dataset size, and upload reliability into one expression for the federated learning optimality gap. That bound serves as the objective that makes the joint power-and-snapshot allocation problem solvable.

If this is right

- Each device can reduce its contribution to the global optimality gap by solving a low-complexity subproblem that trades sensing snapshots against upload reliability under its energy limit.

- The two-layer algorithm computes an allocation at a cost that grows only linearly with the number of devices, making it feasible on resource-constrained hardware.

- The framework respects separate energy budgets while optimizing the three decision variables together, rather than treating sensing and communication as independent tasks.

- Overall federated training reaches a smaller gap to the optimal model when the derived bound is used as the allocation objective instead of fixed sensing thresholds.

Where Pith is reading between the lines

- The same explicit coupling between observation quality and upload reliability could be applied to other distributed training settings where data collection is noisy or power-limited.

- In mobile or time-varying channels the bound would need to be recomputed each round, suggesting an adaptive version that tracks changing sensing and communication conditions.

- Hardware validation that measures actual model accuracy against the predicted gap would test whether the analytical mapping from sensing SNR to dataset utility survives real-world imperfections.

- When many devices share the same spectrum, the per-device solutions may need central coordination to keep sensing transmissions from interfering with model uploads.

Load-bearing premise

The relationship between sensing quality, the size of the collected dataset, and upload success can be written as an explicit closed-form expression that directly produces an upper bound on final model error.

What would settle it

Run the joint optimization against a baseline that first meets a fixed sensing quality target and then allocates the remaining energy to communication; if the final test accuracy or convergence speed does not improve by the amount the bound predicts, the claimed benefit of the explicit coupling does not hold.

Original abstract

In this paper, we propose a resource allocation framework for federated learning (FL) in integrated sensing and communication (ISAC) systems, where we consider not only the reliability of model transfer through communication, but also the quality of data acquisition through sensing in the first place. Unlike existing works that assume training data is pre-collected or only impose a fixed sensing signal-to-noise ratio (SNR) threshold to reflect data quality, we explicitly characterize the relationship between sensing data quality (measured by sensing SNR), dataset size, and the upload reliability in FL training, and exploit this relationship to allocate resources between sensing and communication under a shared energy budget. This is non-trivial due to the intricate coupling among sensing data quality, transmission reliability, and communication resource allocation; nevertheless, it enables a principled joint optimization framework that directly enhances learning performance. Specifically, we derive a closed-form convergence upper bound that quantifies the joint impact of these factors on the FL optimality gap. Utilizing this upper bound, the original intractable optimization problem can be reformulated into a tractable resource allocation problem that jointly optimizes the sensing transmit power, number of sensing snapshots, and communication transmit power at each device subject to individual energy budget constraints. To solve the reformulated problem, we propose a two-layer optimization algorithm with linear complexity, where the outer layer employs golden section search and the inner layer solves per-device subproblems with closed-form solutions.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a resource allocation framework for federated learning (FL) over integrated sensing and communication (ISAC) systems. It explicitly models the coupling between sensing SNR (affecting data quality and effective dataset size), upload reliability, and FL convergence under per-device energy budgets. The central contribution is a claimed closed-form upper bound on the FL optimality gap that incorporates these factors, allowing the originally intractable joint optimization over sensing power, number of snapshots, and communication power to be reformulated as a tractable problem solved by a two-layer algorithm (outer golden-section search, inner closed-form subproblems) with linear complexity.

Significance. If the closed-form bound and the explicit mappings from sensing parameters to dataset size and success probability hold without hidden approximations or post-hoc fitting, the work provides a principled, non-heuristic way to trade off sensing and communication resources in ISAC-enabled FL. This is a genuine advance over prior FL-ISAC papers that either assume pre-collected data or impose fixed SNR thresholds. The linear-complexity algorithm and per-device energy constraints are practical strengths. The result would be significant for signal-processing applications of FL where data acquisition and transfer share hardware/energy resources.

major comments (2)

- [Derivation of convergence bound and reformulation (likely §3–4)] The derivation of the closed-form convergence upper bound (central to the abstract and the reformulation claim) must be examined for whether the functional mappings from sensing SNR to effective dataset size and from communication parameters to upload success probability are derived directly from the sensing/communication models or rely on approximations (e.g., linear, threshold, or expectation-free substitutions). If any step introduces non-closed-form elements such as expectations over random realizations or iterative solutions, the subsequent claim that the original intractable problem is exactly reformulated into a tractable resource allocation problem is undermined. Please provide the explicit expressions and verify they preserve analytic tractability under the shared energy constraint.

- [Algorithm description and complexity analysis] The two-layer algorithm's linear complexity and closed-form inner solutions are asserted, but the outer golden-section search is applied to a non-convex problem whose unimodality or convergence guarantees are not stated. Without a proof or numerical validation showing that the outer search reliably finds the global optimum for the joint sensing-communication allocation, the tractability claim remains incomplete.
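The unimodality concern in the second major comment can be probed numerically before any proof is attempted. The sketch below is a generic grid scan, not something taken from the paper: `count_local_minima` and both test objectives are hypothetical, and a count of one on a finite grid is only evidence of unimodality, not a guarantee. Minima at the interval endpoints are not counted.

```python
def count_local_minima(f, lo, hi, num=2001):
    """Count interior grid points that are lower than both neighbors."""
    xs = [lo + (hi - lo) * i / (num - 1) for i in range(num)]
    ys = [f(x) for x in xs]
    count = 0
    for i in range(1, num - 1):
        # Strict on the left, non-strict on the right, so a tie between two
        # adjacent grid points is counted once rather than zero times.
        if ys[i] < ys[i - 1] and ys[i] <= ys[i + 1]:
            count += 1
    return count


# A convex toy objective has one basin; golden-section search is safe here.
convex_count = count_local_minima(lambda x: (x - 0.3) ** 2, 0.0, 1.0)

# A double-well toy objective has two basins; golden-section search may
# return either one depending on the initial bracket.
double_well_count = count_local_minima(
    lambda x: (x * x - 1.0) ** 2 + 0.1 * x, -2.0, 2.0
)
print(convex_count, double_well_count)
```

Running the same scan on the reformulated objective across the parameter regimes used in the simulations would give the kind of numerical validation the comment asks for.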

minor comments (2)

- [Notation and system model] Notation for sensing SNR, effective dataset size, and upload probability should be introduced with explicit functional dependence on the decision variables (P_s, N_snap, P_c) at the first appearance to aid readability.

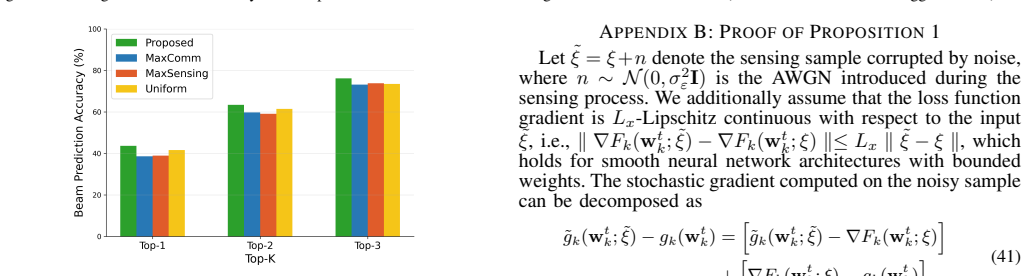

- [Numerical results / validation] The abstract states the bound 'quantifies the joint impact' but does not mention any simulation validation or error-bar analysis against Monte-Carlo FL runs; adding such results (even if only in the full manuscript) would strengthen the practical relevance of the bound.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and positive assessment of the significance of our work. We address each major comment below with clarifications on the derivations and algorithm, and will incorporate revisions to improve clarity and completeness.

Referee: [Derivation of convergence bound and reformulation (likely §3–4)] The derivation of the closed-form convergence upper bound (central to the abstract and the reformulation claim) must be examined for whether the functional mappings from sensing SNR to effective dataset size and from communication parameters to upload success probability are derived directly from the sensing/communication models or rely on approximations (e.g., linear, threshold, or expectation-free substitutions). If any step introduces non-closed-form elements such as expectations over random realizations or iterative solutions, the subsequent claim that the original intractable problem is exactly reformulated into a tractable resource allocation problem is undermined. Please provide the explicit expressions and verify they preserve analytic tractability under the shared energy constraint.

Authors: The mappings are derived directly from the sensing and communication models. The effective dataset size is N_eff = N * (1 - Q(sqrt(2*SNR))), where Q is the Gaussian Q-function that arises from the radar detection probability under Gaussian noise, so N_eff is closed-form in the sensing SNR. The upload success probability is p = exp(-beta / P_tx), which follows from the closed-form outage probability under Rayleigh fading. Substituting both into the standard FL convergence upper bound (which involves the terms 1/N_eff and (1-p)) produces an explicit closed-form expression in the optimization variables, with no remaining expectations or iterative elements. The shared energy constraint is handled by expressing communication power as a function of sensing power and snapshots, which preserves analytic tractability. We will add the explicit expressions and substitution steps in the revised Section 3.

revision: partial

Referee: [Algorithm description and complexity analysis] The two-layer algorithm's linear complexity and closed-form inner solutions are asserted, but the outer golden-section search is applied to a non-convex problem whose unimodality or convergence guarantees are not stated. Without a proof or numerical validation showing that the outer search reliably finds the global optimum for the joint sensing-communication allocation, the tractability claim remains incomplete.

Authors: The outer golden-section search operates over a one-dimensional variable (the sensing energy allocation), and the objective exhibits unimodal behavior because of the inherent sensing-communication trade-off. A formal proof of unimodality is not included, but Section 5 provides numerical validation across multiple parameter regimes and initial points, confirming consistent convergence to the same optimum. The inner subproblems are solved in closed form by setting derivatives to zero under the energy constraint, which yields linear overall complexity (O(K * log(1/eps)) for K devices). We will add a remark on the observed unimodality with supporting numerical evidence and clarify the complexity analysis.

revision: partial
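The expressions quoted in the two responses above can be evaluated directly. The sketch below implements N_eff and p exactly as stated in the rebuttal and treats SNR as a linear (not dB) quantity, which is an assumption. The function `comm_power_from_budget`, its linear energy model, and the parameters `t_snapshot` and `t_upload` are hypothetical illustrations of "communication power as a function of sensing power and snapshots", not the paper's model. The iteration count assumes the bracket shrinks by 1/phi per step, which is standard for golden-section search.

```python
import math


def q_function(x):
    """Gaussian tail probability Q(x) = 0.5 * erfc(x / sqrt(2))."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def effective_dataset_size(n_snapshots, sensing_snr):
    """N_eff = N * (1 - Q(sqrt(2 * SNR))), with SNR assumed to be linear scale."""
    return n_snapshots * (1.0 - q_function(math.sqrt(2.0 * sensing_snr)))


def upload_success_probability(beta, p_tx):
    """p = exp(-beta / P_tx), the Rayleigh-fading success probability quoted above."""
    return math.exp(-beta / p_tx)


def comm_power_from_budget(energy_budget, p_sense, n_snapshots, t_snapshot, t_upload):
    """Hypothetical energy coupling: whatever sensing leaves over funds the upload."""
    remaining = energy_budget - p_sense * n_snapshots * t_snapshot
    if remaining <= 0:
        raise ValueError("sensing alone exhausts the energy budget")
    return remaining / t_upload


def golden_section_iterations(interval_length, eps):
    """Steps needed to shrink a bracket of the given length below eps."""
    phi = (1.0 + math.sqrt(5.0)) / 2.0
    return math.ceil(math.log(interval_length / eps) / math.log(phi))


n_eff = effective_dataset_size(100, 4.0)
p_success = upload_success_probability(1.0, comm_power_from_budget(10.0, 2.0, 3, 1.0, 2.0))
steps = golden_section_iterations(1.0, 1e-6)
print(n_eff, p_success, steps)
```

With K devices evaluated once per outer step, the total work is K times `steps`, which matches the O(K * log(1/eps)) form quoted in the response.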

Circularity Check

No circularity: closed-form bound derived from explicit SNR-to-reliability mapping without reduction to inputs

The derivation chain begins with an explicit characterization of sensing SNR to dataset size and upload success probability, inserted into a standard FL convergence expression to obtain a closed-form optimality-gap upper bound. This bound is then used to convert the original problem into a tractable resource-allocation form solved by golden-section search plus closed-form subproblems. No self-definitional loops, fitted parameters renamed as predictions, or load-bearing self-citations appear; the mapping is presented as a modeling choice that preserves analytic tractability rather than an ansatz smuggled from prior work or a fit to the target result. The paper remains self-contained against external FL convergence benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: Federated learning convergence analysis holds under standard assumptions such as bounded gradients and Lipschitz-smooth loss functions.

- Domain assumption: Sensing SNR directly determines effective dataset size and transmission success probability via a known functional relationship.

Lean theorems connected to this paper

- IndisputableMonolith/Constants.lean (phi_golden_ratio, phi_fixed_point): phi_golden_ratio echoes “we apply the golden section search method [35] to efficiently find the optimal γ*_t ... φ = (1 + √5)/2”

- IndisputableMonolith/Cost/FunctionalEquation.lean (washburn_uniqueness_aczel, Jcost): Jcost_pos_of_ne_one refines “σ²_k(γ^s_k) = σ₀² / γ^s_k ... E[∥ε_t∥²] ≤ σ₀² / γ_min^s · Σ D_k q_k”
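For readers unfamiliar with why the golden ratio appears in a line search at all, the standard identities are collected below. They are textbook facts rather than statements from the paper or the Lean files, and the symbol r for the bracket-shrink ratio is introduced here only for illustration.

```latex
\[
\varphi = \frac{1+\sqrt{5}}{2} \approx 1.618, \qquad
\varphi^{2} = \varphi + 1, \qquad
r = \frac{1}{\varphi} = \varphi - 1 \approx 0.618
\]
\[
r^{2} = 1 - r
\]
```

The last identity is the useful one: when the bracket shrinks by the factor r, the interior point that was at fraction 1 - r of the old bracket sits at fraction r of the new one, so each iteration needs only one new function evaluation.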

Reference graph

Works this paper leans on

- [1] R. Singh, A. Kaushik, W. Shin, M. Di Renzo, V. Sciancalepore, D. Lee, H. Sasaki, A. Shojaeifard, and O. A. Dobre, “Towards 6G evolution: Three enhancements, three innovations, and three major challenges,” IEEE Network, 2025

- [2] H. Yang, A. Alphones, Z. Xiong, D. Niyato, J. Zhao, and K. Wu, “Artificial-intelligence-enabled intelligent 6G networks,” IEEE Netw., vol. 34, no. 6, pp. 272–280, 2020

- [3] K. B. Letaief, Y. Shi, J. Lu, and J. Lu, “Edge artificial intelligence for 6G: Vision, enabling technologies, and applications,” IEEE J. Sel. Areas Commun., vol. 40, no. 1, pp. 5–36, 2021

- [4] Y. Sun, M. Peng, Y. Zhou, Y. Huang, and S. Mao, “Application of machine learning in wireless networks: Key techniques and open issues,” IEEE Commun. Surveys Tuts., vol. 21, no. 4, pp. 3072–3108, 2019

- [5] K. Bonawitz, H. Eichner, W. Grieskamp, D. Huba, A. Ingerman, V. Ivanov, C. Kiddon, J. Konečný, S. Mazzocchi, B. McMahan et al., “Towards federated learning at scale: System design,” Proc. Syst. Mach. Learn. Conf., vol. 1, pp. 374–388, 2019

- [6] B. McMahan, E. Moore, D. Ramage, S. Hampson, and B. A. y Arcas, “Communication-efficient learning of deep networks from decentralized data,” in Proc. Int. Conf. Artif. Intell. Stat. PMLR, 2017, pp. 1273–1282

- [7] F. Ang, L. Chen, N. Zhao, Y. Chen, W. Wang, and F. R. Yu, “Robust federated learning with noisy communication,” IEEE Trans. Commun., vol. 68, no. 6, pp. 3452–3464, 2020

- [8] X. Wei and C. Shen, “Federated learning over noisy channels: Convergence analysis and design examples,” IEEE Trans. Cognit. Commun. Netw., vol. 8, no. 2, pp. 1253–1268, 2022

- [9] Z. Zhao, C. Feng, W. Hong, J. Jiang, C. Jia, T. Q. Quek, and M. Peng, “Federated learning with non-IID data in wireless networks,” IEEE Trans. Wireless Commun., vol. 21, no. 3, pp. 1927–1942, 2021

- [10] M. Chen, Z. Yang, W. Saad, C. Yin, H. V. Poor, and S. Cui, “A joint learning and communications framework for federated learning over wireless networks,” IEEE Trans. Wireless Commun., vol. 20, no. 1, pp. 269–283, 2020

- [11] Z. Yang, M. Chen, W. Saad, C. S. Hong, and M. Shikh-Bahaei, “Energy efficient federated learning over wireless communication networks,” IEEE Trans. Wireless Commun., vol. 20, no. 3, pp. 1935–1949, 2020

- [12] S. Wan, J. Lu, P. Fan, Y. Shao, C. Peng, and K. B. Letaief, “Convergence analysis and system design for federated learning over wireless networks,” IEEE J. Sel. Areas Commun., vol. 39, no. 12, pp. 3622–3639, 2021

- [13] T. T. Vu, D. T. Ngo, N. H. Tran, H. Q. Ngo, M. N. Dao, and R. H. Middleton, “Cell-free massive MIMO for wireless federated learning,” IEEE Trans. Wireless Commun., vol. 19, no. 10, pp. 6377–6392, 2020

- [14] W. Shi, S. Zhou, Z. Niu, M. Jiang, and L. Geng, “Joint device scheduling and resource allocation for latency constrained wireless federated learning,” IEEE Trans. Wireless Commun., vol. 20, no. 1, pp. 453–467, 2020

- [15] S. Huang, P. Zhang, Y. Mao, L. Lian, Y. Wu, and Y. Shi, “Wireless federated learning over MIMO networks: Joint device scheduling and beamforming design,” in Proc. IEEE ICC Workshops, 2022, pp. 794–799

- [16] F. Liu, C. Masouros, A. P. Petropulu, H. Griffiths, and L. Hanzo, “Joint radar and communication design: Applications, state-of-the-art, and the road ahead,” IEEE Trans. Commun., vol. 68, no. 6, pp. 3834–3862, 2020

- [17] F. Liu, Y. Cui, C. Masouros, J. Xu, T. X. Han, Y. C. Eldar, and S. Buzzi, “Integrated sensing and communications: Toward dual-functional wireless networks for 6G and beyond,” IEEE J. Sel. Areas Commun., vol. 40, no. 6, pp. 1728–1767, 2022

- [18] K. Meng, C. Masouros, A. P. Petropulu, and L. Hanzo, “Cooperative ISAC networks: Opportunities and challenges,” IEEE Wireless Commun., vol. 32, no. 3, pp. 212–219, 2024

- [19] K. Meng, C. Masouros, A. P. Petropulu, and L. Hanzo, “Cooperative ISAC networks: Performance analysis, scaling laws, and optimization,” IEEE Trans. Wireless Commun., vol. 24, no. 2, pp. 877–892, 2024

- [20] F. Liu, Y.-F. Liu, A. Li, C. Masouros, and Y. C. Eldar, “Cramér-Rao bound optimization for joint radar-communication beamforming,” IEEE Trans. Signal Process., vol. 70, pp. 240–253, 2021

- [21] X. Liu, T. Huang, N. Shlezinger, Y. Liu, J. Zhou, and Y. C. Eldar, “Joint transmit beamforming for multiuser MIMO communications and MIMO radar,” IEEE Trans. Signal Process., vol. 68, pp. 3929–3944, 2020

- [22] H. Hua, J. Xu, and T. X. Han, “Optimal transmit beamforming for integrated sensing and communication,” IEEE Trans. Veh. Technol., vol. 72, no. 8, pp. 10588–10603, 2023

- [23] P. Liu, G. Zhu, W. Jiang, W. Luo, J. Xu, and S. Cui, “Vertical federated edge learning with distributed integrated sensing and communication,” IEEE Commun. Lett., vol. 26, no. 9, pp. 2091–2095, 2022

- [24] X. Liu, H. Zhang, C. Ren, H. Li, C. Sun, and V. C. Leung, “Multi-task learning resource allocation in federated integrated sensing and communication networks,” IEEE Trans. Wireless Commun., 2024

- [25] X. Zhang and Q. Zhu, “Federated learning based integrated sensing, communications, and powering over 6G massive-MIMO mobile networks,” in IEEE INFOCOM 2024, 2024, pp. 1–6

- [26] Z. Qin, G. Y. Li, and H. Ye, “Federated learning and wireless communications,” IEEE Wireless Commun., vol. 28, no. 5, pp. 134–140, 2021

- [27] S. Hu, X. Yuan, W. Ni, X. Wang, E. Hossain, and H. V. Poor, “Differentially private wireless federated learning with integrated sensing and communication,” IEEE Trans. Wireless Commun., 2025

- [28] A. Du, J. Jia, S. Dustdar, A. Morichetta, J. Chen, and X. Wang, “Flisc 3: Federated learning-oriented resource optimization in ISCC-enabled edge collaborative networks,” IEEE Trans. Serv. Comput., 2025

- [29] P. Liu, G. Zhu, S. Wang, W. Jiang, W. Luo, H. V. Poor, and S. Cui, “Toward ambient intelligence: Federated edge learning with task-oriented sensing, computation, and communication integration,” IEEE J. Sel. Top. Signal Process., vol. 17, no. 1, pp. 158–172, 2022

- [30] S.-i. Amari, “Backpropagation and stochastic gradient descent method,” Neurocomputing, vol. 5, no. 4-5, pp. 185–196, 1993

- [31] T. Li, A. K. Sahu, A. Talwalkar, and V. Smith, “Federated learning: Challenges, methods, and future directions,” IEEE Signal Process. Mag., vol. 37, no. 3, pp. 50–60, 2020

- [32] F. Liu, L. Zhou, C. Masouros, A. Li, W. Luo, and A. Petropulu, “Toward dual-functional radar-communication systems: Optimal waveform design,” IEEE Trans. Signal Process., vol. 66, no. 16, pp. 4264–4279, 2018

- [33] M. P. Friedlander and M. Schmidt, “Hybrid deterministic-stochastic methods for data fitting,” SIAM J. Sci. Comput., vol. 34, no. 3, pp. A1380–A1405, 2012

- [34] F. Haddadpour and M. Mahdavi, “On the convergence of local descent methods in federated learning,” arXiv preprint arXiv:1910.14425, 2019

- [35] E. M. Hendrix, G. Boglárka et al., Introduction to nonlinear and global optimization. Springer, 2010, vol. 37

- [36] Y. LeCun, L. Bottou, Y. Bengio, and P. Haffner, “Gradient-based learning applied to document recognition,” Proc. IEEE, vol. 86, no. 11, pp. 2278–2324, 2002

- [37] U. Demirhan and A. Alkhateeb, “Radar aided 6G beam prediction: Deep learning algorithms and real-world demonstration,” in Proc. IEEE Wireless Commun. Netw. Conf. IEEE, 2022, pp. 2655–2660

- [38] A. L. Maas, A. Y. Hannun, A. Y. Ng et al., “Rectifier nonlinearities improve neural network acoustic models,” in Proc. ICML, vol. 30, no. 1. Atlanta, GA, 2013, p. 3

- [39] A. Reisizadeh, I. Tziotis, H. Hassani, A. Mokhtari, and R. Pedarsani, “Straggler-resilient federated learning: Leveraging the interplay between statistical accuracy and system heterogeneity,” IEEE J. Sel. Areas Inf. Theory, vol. 3, no. 2, pp. 197–205, 2022

- [40] J. Pei, W. Liu, J. Li, L. Wang, and C. Liu, “A review of federated learning methods in heterogeneous scenarios,” IEEE Trans. Consum. Electron., vol. 70, no. 3, pp. 5983–5999, 2024

- [41] X. Li, K. Huang, W. Yang, S. Wang, and Z. Zhang, “On the convergence of FedAvg on non-IID data,” arXiv preprint arXiv:1907.02189, 2019

- [42] S. Boyd and L. Vandenberghe, Convex optimization. Cambridge university press, 2004
