Spatial Power Estimation via Riemannian Covariance Matching

Pith reviewed 2026-05-13 05:12 UTC · model grok-4.3

The pith

Matching sample and model covariance matrices using the Jensen-Bregman LogDet divergence on their Riemannian manifold produces superior spatial power spectrum estimates compared to Euclidean approaches.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

SERCOM performs spatial power spectrum estimation by minimizing the Jensen-Bregman LogDet divergence between the sample covariance matrix and a parameterized model covariance matrix on the Riemannian manifold of Hermitian positive definite matrices. This divergence can be evaluated efficiently without eigen-decomposition. Theoretical comparison shows greater robustness to spectral distortions than Euclidean or other Riemannian distances, while experiments confirm lower estimation errors than existing methods under low SNR, limited snapshots, and correlated sources.

What carries the argument

The Jensen-Bregman LogDet divergence on the Riemannian manifold of Hermitian positive definite matrices, which supplies an efficient, geometry-respecting measure for covariance matching.
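A minimal NumPy sketch of how this divergence can be evaluated with Cholesky factors only, assuming the log-determinant form quoted in the paper, log|(R1+R2)/2| - ½ log|R1 R2|. The names `logdet_hpd`, `jbld`, and `random_hpd` are illustrative and do not come from the paper; the eigenvalue computation at the end is used purely as a cross-check.

```python
import numpy as np

def logdet_hpd(M):
    """log det of a Hermitian positive definite matrix via Cholesky."""
    L = np.linalg.cholesky(M)  # M = L L^H, diagonal of L is real and positive
    return 2.0 * np.sum(np.log(np.real(np.diag(L))))

def jbld(R1, R2):
    """Squared JBLD divergence: log det((R1+R2)/2) - 0.5 * log det(R1 R2)."""
    return logdet_hpd(0.5 * (R1 + R2)) - 0.5 * (logdet_hpd(R1) + logdet_hpd(R2))

def random_hpd(n, rng):
    """Hypothetical helper: a random HPD test matrix."""
    X = rng.standard_normal((n, 2 * n)) + 1j * rng.standard_normal((n, 2 * n))
    return X @ X.conj().T / (2 * n) + 0.1 * np.eye(n)

rng = np.random.default_rng(0)
R1, R2 = random_hpd(4, rng), random_hpd(4, rng)

d12 = jbld(R1, R2)
d21 = jbld(R2, R1)   # the divergence is symmetric in its arguments
d11 = jbld(R1, R1)   # and vanishes when both arguments coincide

# Cross-check only: the same value from the eigenvalues of R1^{-1} R2.
lam = np.linalg.eigvals(np.linalg.solve(R1, R2)).real
d_eig = np.sum(np.log((1.0 + lam) / (2.0 * np.sqrt(lam))))

print(d12, d21, d11, d_eig)
```

Three Cholesky factorizations replace the matrix logarithm or generalized eigenvalue problem that an affine-invariant distance would require, which is the efficiency argument the summary refers to.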

If this is right

- Direction-of-arrival estimates remain accurate even when signal-to-noise ratios drop.

- Power spectrum estimates improve when only a small number of snapshots are available.

- Performance holds up better when the sources being observed are mutually correlated.

- The method tolerates mismatches between the assumed and actual spectral shape of the signals.
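The tolerance to spectral mismatch can be illustrated numerically with per-eigenvalue penalty functions. The sketch below uses ψ_JBLD(λ) = log((1+λ)/(2√λ)) and ψ_AIRM(λ) = (log λ)² as quoted from the paper, where λ stands for an eigenvalue of the paper's matrix Q. The quadratic penalty (λ-1)² is only a hypothetical stand-in for a Euclidean-type criterion, since the paper's exact Euclidean expression is not reproduced on this page.

```python
import math

def psi_quadratic(lam):
    """Hypothetical stand-in for a Euclidean-type penalty (assumption)."""
    return (lam - 1.0) ** 2

def psi_airm(lam):
    """AIRM penalty as quoted from the paper's appendix."""
    return math.log(lam) ** 2

def psi_jbld(lam):
    """JBLD penalty as quoted from the paper."""
    return math.log((1.0 + lam) / (2.0 * math.sqrt(lam)))

# Tabulate the three penalties as one eigenvalue drifts away from 1.
print(f"{'lambda':>8} {'quadratic':>12} {'AIRM':>10} {'JBLD':>10}")
for lam in (1.0, 2.0, 10.0, 100.0, 1000.0):
    print(f"{lam:8.1f} {psi_quadratic(lam):12.3e} "
          f"{psi_airm(lam):10.3f} {psi_jbld(lam):10.3f}")
```

All three penalties are zero at λ = 1. For large λ the quadratic stand-in grows polynomially while the two log-based penalties grow much more slowly, so a single badly distorted eigenvalue contributes far less to the log-based criteria.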

Where Pith is reading between the lines

- The same manifold-based matching could be substituted into other covariance-driven tasks such as adaptive beamforming or source separation.

- Avoidance of eigen-decomposition may allow faster real-time updates on embedded hardware.

- The divergence choice might generalize to covariance estimation problems outside array processing.

Load-bearing premise

The performance gains observed in the simulated test cases will translate to real measured array data without being artifacts of the particular signal and noise models used in those simulations.

What would settle it

An experiment on measured array data at low SNR in which the mean squared error of power or DOA estimates from SERCOM is not smaller than the error from standard Euclidean covariance matching.

Original abstract

We propose a new method for spatial power spectrum estimation in array processing that leverages the Riemannian geometry of Hermitian positive definite (HPD) matrices. We show that conventional approaches minimize variants of the Euclidean distance between the sample covariance matrix and a model covariance matrix, without considering the fact that covariance matrices lie on the Riemannian manifold of HPD matrices. By exploiting this manifold, we present a Riemannian-aware covariance matching algorithm, termed SERCOM, using the Jensen-Bregman LogDet (JBLD) divergence, which, unlike other Riemannian distances, can be evaluated efficiently without eigen-decomposition. We theoretically compare the JBLD divergence to other Euclidean- and Riemannian-based distances, demonstrating robustness to spectral distortions. Experimental results demonstrate that SERCOM consistently outperforms existing methods in direction-of-arrival (DOA) and power estimation, particularly in challenging scenarios with low SNR, limited number of snapshots, and correlated sources.
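A rough sketch of JBLD covariance matching on an angular grid, under several assumptions that are not taken from the paper: a half-wavelength uniform linear array, a model R(p) = A diag(p) Aᴴ + σ²I with known noise variance σ², and projected gradient descent with backtracking as a stand-in optimizer. All function names are hypothetical, and this is not the authors' SERCOM implementation.

```python
import numpy as np

def steering_matrix(n_sensors, angles_deg, spacing=0.5):
    """ULA steering vectors, one column per grid angle (spacing in wavelengths)."""
    k = np.arange(n_sensors)[:, None]
    return np.exp(2j * np.pi * spacing * k * np.sin(np.deg2rad(angles_deg))[None, :])

def logdet_hpd(M):
    L = np.linalg.cholesky(M)
    return 2.0 * np.sum(np.log(np.real(np.diag(L))))

def model_cov(A, p, noise_var):
    """R(p) = A diag(p) A^H + noise_var * I."""
    return (A * p) @ A.conj().T + noise_var * np.eye(A.shape[0])

def jbld_cost(A, p, noise_var, R_hat):
    R = model_cov(A, p, noise_var)
    return logdet_hpd(0.5 * (R_hat + R)) - 0.5 * (logdet_hpd(R_hat) + logdet_hpd(R))

def jbld_grad(A, p, noise_var, R_hat):
    """d cost / d p_k = a_k^H (R_hat + R)^{-1} a_k - 0.5 * a_k^H R^{-1} a_k."""
    R = model_cov(A, p, noise_var)
    t1 = np.sum(A.conj() * np.linalg.solve(R_hat + R, A), axis=0).real
    t2 = np.sum(A.conj() * np.linalg.solve(R, A), axis=0).real
    return t1 - 0.5 * t2

# Toy scenario: 8 sensors, two unit-power sources at -10 and 20 degrees.
rng = np.random.default_rng(1)
grid = np.arange(-90.0, 91.0, 2.0)
A = steering_matrix(8, grid)
true_p = np.zeros(grid.size)
true_p[[40, 55]] = 1.0            # grid[40] = -10 deg, grid[55] = 20 deg
noise_var = 0.1
R_true = model_cov(A, true_p, noise_var)

# Sample covariance from 200 circular complex Gaussian snapshots.
n_snap = 200
Z = (rng.standard_normal((8, n_snap)) + 1j * rng.standard_normal((8, n_snap))) / np.sqrt(2)
X = np.linalg.cholesky(R_true) @ Z
R_hat = X @ X.conj().T / n_snap

# Projected gradient descent with backtracking; powers are kept non-negative.
p = np.full(grid.size, 0.01)
cost_start = cost = jbld_cost(A, p, noise_var, R_hat)
for _ in range(200):
    g = jbld_grad(A, p, noise_var, R_hat)
    step = 1.0
    while step > 1e-8:
        p_try = np.maximum(p - step * g, 0.0)
        c_try = jbld_cost(A, p_try, noise_var, R_hat)
        if c_try < cost:          # accept only steps that lower the cost
            p, cost = p_try, c_try
            break
        step *= 0.5
cost_end = cost

print("cost:", cost_start, "->", cost_end)
print("three largest grid powers at (deg):", grid[np.argsort(p)[-3:]])
```

The gradient needs only linear solves against R̂ + R and R, so each iteration avoids eigen-decomposition. The backtracking rule is a design choice made here to keep the cost monotone; a different optimizer or parameterization would change the details.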

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes SERCOM, a covariance-matching algorithm for spatial power spectrum estimation that operates on the Riemannian manifold of Hermitian positive definite matrices using the Jensen-Bregman LogDet (JBLD) divergence. It contrasts this approach with conventional Euclidean-distance methods, provides theoretical comparisons of JBLD to other distances to argue robustness against spectral distortions, and reports experimental results claiming consistent outperformance in DOA and power estimation under low SNR, limited snapshots, and correlated sources.

Significance. If the performance claims hold under broader conditions, the work supplies a computationally efficient Riemannian alternative to Euclidean covariance matching that avoids eigen-decomposition. The emphasis on manifold geometry and the specific choice of JBLD are technically motivated and could influence array-processing algorithms in challenging regimes. However, the significance is limited by the exclusive reliance on synthetic data whose generative assumptions are not shown to be representative of real arrays.

major comments (2)

- [§5 (Experimental Results)] All reported performance gains (DOA RMSE, power estimation error, robustness to correlation and low SNR) are obtained exclusively from synthetic Monte-Carlo trials generated under a fixed uniform-linear-array model, white Gaussian noise, and a specific snapshot count. No real-array recordings or cross-dataset validation are presented, so the central claim that SERCOM “consistently outperforms” existing methods rests on unverified simulation assumptions.

- [§3 (Theoretical Comparison)] The robustness argument for JBLD versus Euclidean and other Riemannian distances is derived under the same array manifold and noise model used in the simulations. No independent analytic bound (e.g., worst-case deviation under model mismatch) or counter-example under relaxed assumptions is supplied, leaving the “demonstrating robustness to spectral distortions” claim dependent on the simulation generative process.

minor comments (2)

- Notation for the model covariance matrix and the sample covariance matrix should be introduced once with consistent symbols (currently alternates between R and Ĉ in different sections).

- Figure captions for the DOA spectra and RMSE curves should explicitly state the number of Monte-Carlo trials and the exact SNR/snapshot values used in each panel.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review of our manuscript. We address each major comment point by point below, indicating the revisions we will incorporate.

- Referee: [§5 (Experimental Results)] All reported performance gains (DOA RMSE, power estimation error, robustness to correlation and low SNR) are obtained exclusively from synthetic Monte-Carlo trials generated under a fixed uniform-linear-array model, white Gaussian noise, and a specific snapshot count. No real-array recordings or cross-dataset validation are presented, so the central claim that SERCOM “consistently outperforms” existing methods rests on unverified simulation assumptions.

  Authors: We agree that the reported results rely exclusively on synthetic Monte-Carlo trials under a standard uniform linear array model with white Gaussian noise. This controlled setting is conventional in array signal processing to isolate the impact of SNR, snapshot count, and source correlation. We will revise §5 and the conclusions to include an explicit discussion of these modeling assumptions, their relation to practical scenarios, and the need for future real-array validation. The performance trends observed remain informative for the regimes studied, but we will moderate the language of the central claims to reflect the simulation-based nature of the evidence.

  Revision: partial

- Referee: [§3 (Theoretical Comparison)] The robustness argument for JBLD versus Euclidean and other Riemannian distances is derived under the same array manifold and noise model used in the simulations. No independent analytic bound (e.g., worst-case deviation under model mismatch) or counter-example under relaxed assumptions is supplied, leaving the “demonstrating robustness to spectral distortions” claim dependent on the simulation generative process.

  Authors: The analysis in §3 compares JBLD to Euclidean and other Riemannian distances under the standard array manifold and noise model to demonstrate its relative robustness to spectral distortions within that framework. We do not claim model-independent bounds. We will revise §3 to clarify the scope of the analysis, explicitly note its dependence on the assumed generative model, and add a remark on potential sensitivities to model mismatch. This will better delineate the conditions under which the robustness properties are shown.

  Revision: yes

Circularity Check

No circularity detected; derivation is a direct algorithmic construction from established manifold geometry

The paper presents SERCOM as a new covariance-matching procedure that replaces Euclidean distances with the JBLD divergence on the known Riemannian manifold of HPD matrices. The theoretical comparison of JBLD to Euclidean and other Riemannian distances is offered as an independent analytic exercise, and the performance claims rest on experimental results rather than any fitted parameter being relabeled as a prediction or any self-referential definition. No load-bearing self-citation, uniqueness theorem imported from the authors' prior work, or ansatz smuggled via citation appears in the derivation chain. The method is therefore self-contained against external benchmarks and does not reduce to its inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: covariance matrices lie on the Riemannian manifold of Hermitian positive definite matrices.

Lean theorems connected to this paper

-

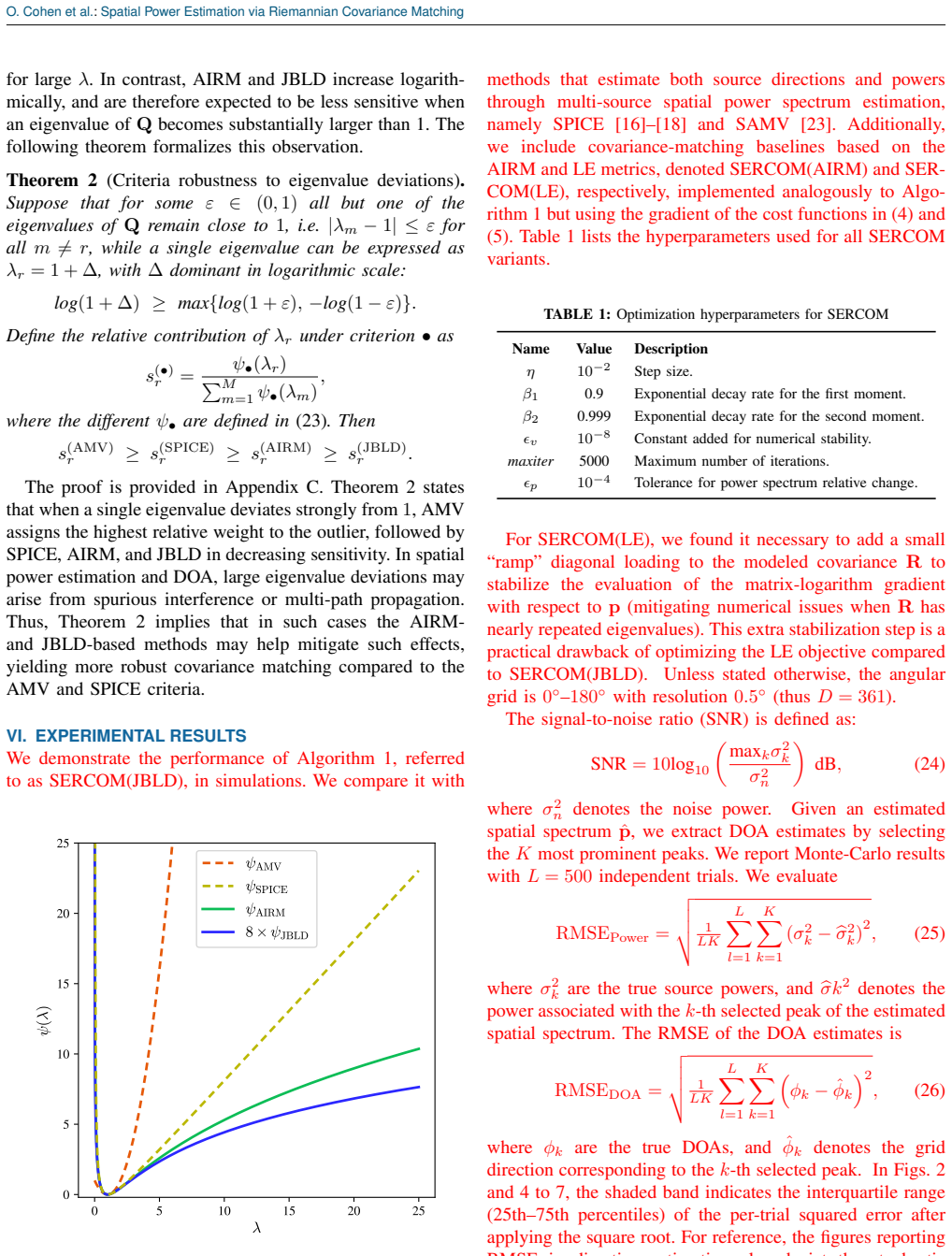

IndisputableMonolith/Cost/FunctionalEquation.lean, IndisputableMonolith/Foundation/AlphaCoordinateFixation.leanJ_uniquely_calibrated_via_higher_derivative, costAlphaLog_high_calibrated_iff echoesD²_JBLD(R1,R2) = log|(R1+R2)/2| - ½log|R1 R2| ... ψ_JBLD(λ) = log((1+λ)/(2√λ)) ... AIRM and JBLD increase logarithmically, and are therefore expected to be less sensitive when an eigenvalue of Q becomes substantially larger than 1 (Theorem 2)

- IndisputableMonolith/Foundation/BranchSelection.lean

  Theorem: branch_selection

  Relation: refines

  Paper passage: Theorem 1 (Asymptotic equivalence of criteria) ... all criteria become equivalent in a neighborhood of the shared minimizer R = R̂
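The two quoted passages can be connected by a short derivation. The block below is a sketch under one assumption not stated on this page: that Q denotes the whitened matrix R1^{-1/2} R2 R1^{-1/2}, with positive eigenvalues λ_i. It shows how the log-determinant form reduces to a sum of ψ_JBLD terms, and how ψ_JBLD and the AIRM penalty (log λ)² behave near λ = 1 and for large λ.

```latex
% Assumption: Q = R_1^{-1/2} R_2 R_1^{-1/2}, eigenvalues \lambda_1, \dots, \lambda_M > 0,
% so that |R_2| = |R_1|\,|Q| and R_1 + R_2 = R_1^{1/2} (I + Q) R_1^{1/2}.
\begin{align*}
D^2_{\mathrm{JBLD}}(R_1, R_2)
  &= \log\left|\tfrac{R_1 + R_2}{2}\right| - \tfrac{1}{2}\log\left|R_1 R_2\right| \\
  &= \log|R_1| + \log\left|\tfrac{I + Q}{2}\right|
     - \tfrac{1}{2}\log|R_1| - \tfrac{1}{2}\bigl(\log|R_1| + \log|Q|\bigr) \\
  &= \sum_{i=1}^{M} \Bigl[\log\tfrac{1 + \lambda_i}{2} - \tfrac{1}{2}\log\lambda_i\Bigr]
   = \sum_{i=1}^{M} \log\frac{1 + \lambda_i}{2\sqrt{\lambda_i}}
   = \sum_{i=1}^{M} \psi_{\mathrm{JBLD}}(\lambda_i).
\end{align*}

% Local behaviour around \lambda = 1, writing \lambda = 1 + \varepsilon:
\begin{align*}
\psi_{\mathrm{JBLD}}(1 + \varepsilon)
  &= \log\bigl(1 + \tfrac{\varepsilon}{2}\bigr) - \tfrac{1}{2}\log(1 + \varepsilon)
   = \tfrac{1}{8}\varepsilon^2 + O(\varepsilon^3), \\
\psi_{\mathrm{AIRM}}(1 + \varepsilon)
  &= \bigl(\log(1 + \varepsilon)\bigr)^2 = \varepsilon^2 + O(\varepsilon^3).
\end{align*}

% Behaviour for a large eigenvalue:
\begin{align*}
\psi_{\mathrm{JBLD}}(\lambda)
  &= \tfrac{1}{2}\log\lambda - \log 2 + \log\bigl(1 + \tfrac{1}{\lambda}\bigr)
   \approx \tfrac{1}{2}\log\lambda - \log 2 \quad (\lambda \gg 1).
\end{align*}
```

Near λ = 1 both penalties are quadratic and differ only by a constant factor, which is one plausible reading of the asymptotic equivalence in Theorem 1. Far from 1 the growth is logarithmic, in line with the Theorem 2 passage.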


Appendix excerpt

O. Cohen et al., “Spatial Power Estimation via Riemannian Covariance Matching,” Appendix A, Derivation of ψ functions. The AIRM criterion: we would like to show that ψ_AIRM(λ) = (log λ)². From D²_AIRM(R, R̂) = ‖log(Q)‖...
