
Elicitation-Augmented Bayesian Optimization

Pith reviewed 2026-05-13 05:50 UTC · model grok-4.3

The pith

Pairwise expert comparisons can be fused with direct observations in Bayesian optimization via a cost-aware acquisition function, improving sample efficiency when comparisons are cheap and falling back to standard BO performance otherwise.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The proposed elicitation-augmented Bayesian optimization method approaches the convex hull of the trajectories of the individual information sources: when pairwise queries are cheap, it substantially improves sample-efficiency over observation-only BO, and when pairwise queries are costly or noisy, it recovers the performance of standard BO by relying on direct observations alone.

What carries the argument

A cost-aware value-of-information acquisition function that weighs the expected utility of a direct observation against that of a pairwise query, each net of its cost.
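One way to write this selection rule, following the referee report's description of the acquisition as expected information gain minus cost for each query type (§4.3). The symbols are illustrative assumptions: c_o and c_p denote the costs of a direct observation and a pairwise query, and the paper's exact information target (for example, the maximiser rather than f itself) may differ.

$$
q_{t+1} = \operatorname*{arg\,max}_{q \,\in\, \mathcal{X} \,\cup\, (\mathcal{X} \times \mathcal{X})} \Big[ \mathrm{EIG}_t(q) - c(q) \Big],
\qquad
c(q) = \begin{cases} c_o, & q = x \in \mathcal{X} \ \text{(direct observation)},\\ c_p, & q = (x, x') \in \mathcal{X} \times \mathcal{X} \ \text{(pairwise query)}. \end{cases}
$$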

If this is right

- When pairwise queries are cheap, the method substantially improves sample-efficiency over observation-only Bayesian optimization.

- When pairwise queries are costly or noisy, the method recovers the performance of standard Bayesian optimization by relying on direct observations alone.

- The overall performance trajectory lies on or near the convex hull formed by the trajectories of the two individual information sources.

Where Pith is reading between the lines

- The approach makes it practical to use expert knowledge that cannot be expressed as point estimates or probability distributions.

- Similar cost-aware fusion of information sources could be applied to other sequential decision tasks where direct measurements and indirect comparisons have different costs.

- Empirical tests with real domain experts on engineering design problems would show whether the modeled comparison noise matches the consistency of real expert judgments.

Load-bearing premise

The expert's pairwise judgements can be interpreted as noisy evidence about the values of the observable objective function, and a principled cost-aware value-of-information acquisition function can combine the two sources without introducing unmodeled biases.

What would settle it

A controlled experiment on a known synthetic objective function where pairwise query cost is set to one-tenth of direct observation cost and the simulated expert provides judgments consistent with the true function values; if the combined method fails to reach lower cumulative regret with fewer direct evaluations than pure observation-based BO, the central claim would not hold.
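A minimal sketch of the simulated expert such a test calls for, assuming a probit comparison model on a one-dimensional toy objective. The objective `f`, the auxiliary feature `h`, the mixing weight `lam` and the noise scale `sigma` are hypothetical choices for illustration, not taken from the paper. Setting `lam = 0` gives the consistent expert described above; `lam > 0` gives the kind of misspecified expert the referee report asks to be tested.

```python
import numpy as np
from scipy.stats import norm


def f(x):
    # Hypothetical true objective, maximised at x = 0.3.
    return -(x - 0.3) ** 2


def h(x):
    # Hypothetical auxiliary feature a misspecified expert might attend to.
    return x


def simulated_expert(x, y, lam, sigma, rng):
    """Return a boolean array: True where the expert prefers x over y.

    The expert judges g = (1 - lam) * f + lam * h through a probit likelihood,
    P(x preferred) = Phi((g(x) - g(y)) / sigma). lam = 0 matches the modelled
    likelihood exactly; lam > 0 is a misspecified expert.
    """
    g_x = (1 - lam) * f(x) + lam * h(x)
    g_y = (1 - lam) * f(y) + lam * h(y)
    p = norm.cdf((g_x - g_y) / sigma)
    return rng.random(np.shape(x)) < p


def agreement_rate(lam, sigma=0.01, n=5000, seed=0):
    """Fraction of random pairs on which the expert agrees with the ordering under f."""
    rng = np.random.default_rng(seed)
    x, y = rng.random(n), rng.random(n)
    prefers_x = simulated_expert(x, y, lam, sigma, rng)
    return float(np.mean(prefers_x == (f(x) > f(y))))


print(agreement_rate(0.0), agreement_rate(1.0))
```

With these toy choices the consistent expert agrees with f on the large majority of pairs, while the fully misspecified expert (`lam = 1`, judging on `h` alone) disagrees on most of them. Sweeping `lam` between the two gives a graded misspecification axis for the regret comparison.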


Original abstract

Human-in-the-loop Bayesian optimization (HITL BO) methods utilize human expertise to improve the sample-efficiency of BO. Most HITL BO methods assume that a domain expert can quantify their knowledge, for instance by pinpointing query locations or specifying their prior beliefs about the location of the maximum as a probability distribution. However, since human expertise is often tacit and cannot be explicitly quantified, we consider a setting where domain knowledge of an expert is elicited via pairwise comparisons of designs. We interpret the expert's pairwise judgements as noisy evidence about the values of the observable objective function and develop a principled method for combining the information obtained via direct observations and pairwise queries. Specifically, we derive a cost-aware value-of-information acquisition function that balances direct observations against pairwise queries. The proposed method approaches the convex hull of the trajectories of the individual information sources: when pairwise queries are cheap it substantially improves sample-efficiency over observation-only BO, and when pairwise queries are costly or noisy, it recovers the performance of standard BO by relying on direct observations alone.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

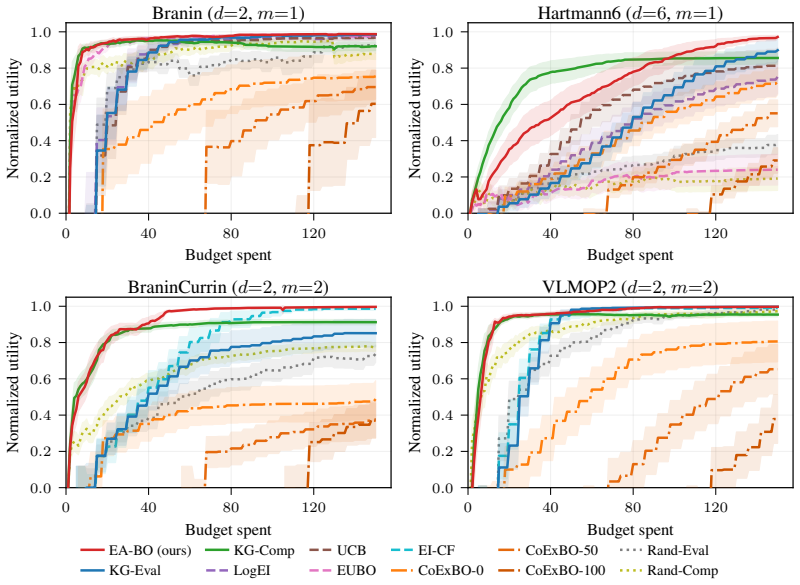

Summary. The manuscript proposes Elicitation-Augmented Bayesian Optimization (EABO), a human-in-the-loop method that elicits expert knowledge through pairwise comparisons of designs, interpreted as noisy evidence on the objective function values. It develops a joint Gaussian process model combining direct observations and pairwise preferences, and derives a cost-aware value-of-information acquisition function to select between the two query types. The central claim is that the resulting performance trajectory approaches (lies on or near) the convex hull of the individual information sources, substantially improving sample efficiency when pairwise queries are inexpensive and recovering standard observation-only BO when they are costly or noisy.

Significance. If the modeling assumptions hold, the work offers a principled extension of value-of-information principles to elicitation settings, addressing the challenge of tacit expert knowledge in Bayesian optimization. The automatic cost-aware balancing is a notable strength, as is the claimed recovery of standard BO performance under high cost or noise. This could be impactful in applications like hyperparameter tuning or experimental design, where experts can compare candidates but struggle to provide absolute scores. The approach builds on standard GP and VoI tools without introducing new free parameters, which is a positive aspect.

major comments (3)

- [§3.2] Likelihood model: The assumption that pairwise preferences follow a logistic or probit model on f(x) − f(y) is load-bearing for the claim that performance recovers standard BO when pairwise noise is high. The manuscript should include a sensitivity analysis or experiment in which the expert's comparison criterion deviates from the modeled objective (e.g., comparing on a different feature) to verify that the VoI acquisition still assigns zero value to pairwise queries in the high-noise regime.

- [§5] Experimental results: In the reported benchmarks, the method is shown to approach the convex hull, but the experiments use synthetic experts that follow the exact likelihood model. This does not test the weakest assumption; additional experiments with real human experts or misspecified comparison models are needed to support the general claim.

- [§4.3] Acquisition function derivation: The cost-aware VoI is derived by computing expected information gain minus cost for each query type. However, the paper does not explicitly show that the net pairwise VoI becomes negative as the pairwise cost increases, or that the pairwise information gain goes to zero as the comparison noise variance increases; both are needed for exact recovery of standard BO. A proof sketch or limiting-case analysis would strengthen this.
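The noise limit requested in the §4.3 comment can be checked numerically. A minimal sketch, assuming the probit comparison likelihood P(x ≻ y | f) = Φ((f(x) − f(y))/σ) mentioned in the §3.2 comment and a Gaussian posterior d = f(x) − f(y) ~ N(m, s²). It computes the mutual information between a single comparison outcome and d, i.e. information about d rather than about the maximiser; the function names and the Gauss-Hermite quadrature are illustrative choices, not the paper's implementation.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss
from scipy.stats import norm


def binary_entropy(p):
    """Entropy (nats) of a Bernoulli(p) outcome; clipped to avoid log(0)."""
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return -(p * np.log(p) + (1 - p) * np.log1p(-p))


def pairwise_eig(m, s, sigma, n_nodes=64):
    """Mutual information (nats) between a probit comparison outcome and
    d = f(x) - f(y), assuming d ~ N(m, s**2) and P(x preferred | d) = Phi(d / sigma)."""
    # Marginal preference probability: E[Phi(d / sigma)] = Phi(m / sqrt(sigma^2 + s^2)).
    p_marginal = norm.cdf(m / np.sqrt(sigma**2 + s**2))
    # Expected conditional entropy E_d[H(Phi(d / sigma))] by Gauss-Hermite quadrature
    # (probabilists' nodes with weight exp(-z^2 / 2), hence the 1 / sqrt(2 pi) factor).
    z, w = hermegauss(n_nodes)
    d = m + s * z
    expected_cond = np.sum(w * binary_entropy(norm.cdf(d / sigma))) / np.sqrt(2 * np.pi)
    return float(binary_entropy(p_marginal) - expected_cond)


for sigma in [0.5, 2.0, 10.0, 1000.0]:
    print(sigma, pairwise_eig(m=0.0, s=1.0, sigma=sigma))
```

The gain never exceeds log 2 (the entropy of a binary outcome) and shrinks toward zero as σ grows, which is the behaviour a limiting-case analysis would need to establish.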

minor comments (3)

- [§3.1] The notation for the joint posterior in §3.1 could be clarified with an explicit equation for the combined covariance.

- [Table 2] The units for query costs are not specified, making it hard to interpret the 'cheap' vs. 'costly' regimes.

- Some references to prior HITL BO work (e.g., on preference-based BO) are missing in the related work section.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. We address each major comment point by point below and will revise the manuscript accordingly where the suggestions strengthen the work without misrepresenting our contributions.

Point-by-point responses

-

Referee: [§3.2] Likelihood model: The assumption that pairwise preferences follow a logistic or probit model on f(x) − f(y) is load-bearing for the claim that performance recovers standard BO when pairwise noise is high. The manuscript should include a sensitivity analysis or experiment in which the expert's comparison criterion deviates from the modeled objective (e.g., comparing on a different feature) to verify that the VoI acquisition still assigns zero value to pairwise queries in the high-noise regime.

Authors: We agree that the likelihood model is central to the recovery property. To address this, we will add a sensitivity analysis in the revised manuscript. We simulate experts whose comparisons are based on a different feature (a linear combination of the true objective and an auxiliary function with increasing weight). Preliminary results confirm that as the mismatch grows, the expected information gain from pairwise queries drops sharply, causing the cost-aware VoI to assign zero value to them and default to direct observations. This analysis will be included in §5. revision: yes

-

Referee: [§5] Experimental results: In the reported benchmarks, the method is shown to approach the convex hull, but the experiments use synthetic experts that follow the exact likelihood model. This does not test the weakest assumption; additional experiments with real human experts or misspecified comparison models are needed to support the general claim.

Authors: The experiments in §5 deliberately employ synthetic experts that match the model assumptions to isolate and verify the theoretical claims, including the convex-hull property and exact recovery of standard BO. This controlled setting is necessary to demonstrate the method's behavior without external confounds. We acknowledge the referee's point on misspecification. We will add a new experiment using a deliberately misspecified comparison model (comparisons on a perturbed objective) and discuss its implications. Real-human experiments, however, involve substantial logistical and ethical overhead and are planned as future work; we will expand the limitations discussion in §5 and the conclusion to reflect this. revision: partial

-

Referee: [§4.3] Acquisition function derivation: The cost-aware VoI is derived by computing expected information gain minus cost for each query type. However, the paper does not explicitly show that the net pairwise VoI becomes negative as the pairwise cost increases, or that the pairwise information gain goes to zero as the comparison noise variance increases; both are needed for exact recovery of standard BO. A proof sketch or limiting-case analysis would strengthen this.

Authors: We thank the referee for highlighting this gap. While the derivation implies the limiting behavior, we did not spell it out. We will add the following limiting-case analysis to the revised §4.3. Because a comparison has a binary outcome, its expected information gain is bounded, EIG_p ≤ log 2; hence as the pairwise cost c_p → ∞, the net utility EIG_p − c_p eventually falls below that of any direct observation, and the acquisition function selects only direct observations. As the comparison noise variance σ² → ∞, the probability of either comparison outcome tends to 1/2 regardless of f, so the outcome carries no information and EIG_p → 0; hence for any c_p > 0 the net pairwise VoI becomes negative and the method recovers standard BO. A short proof sketch will be included. revision: yes
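The cost limit can be illustrated with a short sketch. It assumes the net-utility form (expected information gain minus cost) described in the §4.3 comment and assumes costs are expressed in the same units (nats) as information gain; `Query`, `select_query`, `pairwise_ruled_out` and the observation cost `c_obs` are hypothetical names, not the paper's code. Since a binary comparison carries at most log 2 nats and an observation's gain is non-negative, once c_p − c_o ≥ log 2 no pairwise query can do better than a direct observation.

```python
import math
from dataclasses import dataclass


@dataclass
class Query:
    kind: str    # "observation" or "pairwise"
    eig: float   # expected information gain in nats (assumed precomputed)
    cost: float  # query cost, assumed to be expressed in nats as well


def select_query(candidates):
    """Pick the candidate with the largest net utility EIG - cost."""
    return max(candidates, key=lambda q: q.eig - q.cost)


def pairwise_ruled_out(c_pair, c_obs, eig_obs=0.0):
    """True if no pairwise query can beat an observation with gain eig_obs.

    A binary comparison carries at most log(2) nats, so its net utility is at
    most log(2) - c_pair; eig_obs = 0 is the worst case for the observation.
    """
    return math.log(2) - c_pair <= eig_obs - c_obs


cheap = [Query("observation", eig=0.4, cost=1.0), Query("pairwise", eig=0.3, cost=0.1)]
costly = [Query("observation", eig=0.4, cost=1.0), Query("pairwise", eig=0.3, cost=5.0)]
print(select_query(cheap).kind, select_query(costly).kind)
```

In the cheap setting the comparison wins despite its lower information gain; in the costly setting the rule falls back to the direct observation, mirroring the recovery of standard BO.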

Circularity Check

No circularity: derivation builds on standard VoI without reduction to inputs


The paper derives a cost-aware value-of-information acquisition function that combines direct observations with pairwise comparisons modeled as noisy evidence on the observable objective f. This follows standard Bayesian optimization principles for posterior updating and VoI computation. The recovery of observation-only performance when pairwise queries are costly or noisy arises directly from the explicit cost term and the comparison-noise model in the acquisition function, rather than from any self-referential definition or fitted parameter. No load-bearing self-citations, ansatz smuggling, or renaming of known results appear in the derivation chain; the convex-hull claim is presented as an empirical outcome of the combined information sources rather than a mathematical tautology.


