Recognition: 2 theorem links

· Lean TheoremMulti-Task Representation Learning for Conservative Linear Bandits

Pith reviewed 2026-05-13 07:07 UTC · model grok-4.3

The pith

A constrained multi-task framework recovers shared low-rank features for safe linear bandits with regret and sample guarantees.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors consider T linear bandit tasks in d-dimensional space that share a common low-rank representation of dimension r much smaller than min(d, T), with conservative constraints limiting allowable actions. The Safe-Alternating projected Gradient Descent and minimization algorithm recovers the low-rank feature matrix while satisfying the constraints, and this recovery underpins a multi-task representation learning framework that achieves provable regret and sample complexity bounds.

What carries the argument

Safe-AltGDmin algorithm, which alternates between projected gradient descent steps and minimization to recover the low-rank feature matrix while enforcing constraints via projection.

If this is right

- The framework achieves sublinear regret by exploiting the shared low-rank structure across tasks.

- Sample complexity scales with the rank r rather than the ambient dimension d.

- Theoretical guarantees extend to conservative settings where unsafe actions are forbidden by the constraints.

- The method outperforms single-task and unconstrained benchmarks in the reported experiments.

Where Pith is reading between the lines

- The projection-based handling of constraints may allow the same recovery technique to apply to other constrained bandit problems beyond the linear case.

- If tasks share only an approximate low-rank structure, the regret bounds might still hold up to an additive error term proportional to the approximation quality.

- This connects representation learning for bandits directly to alternating optimization methods used in constrained matrix recovery.

Load-bearing premise

The T tasks share an exact common low-dimensional representation of dimension r much smaller than min(d, T), and the safety constraints can be enforced by projection during matrix recovery.

What would settle it

Apply the algorithm to a set of tasks whose feature matrices do not share a common low-rank structure of rank r and check whether the observed regret exceeds the derived bounds or matrix recovery fails to converge under the constraints.

Figures

read the original abstract

This paper presents the Constrained Multi-Task Representation Learning (CMTRL) framework for linear bandits. We consider T linear bandit tasks in a d dimensional space, which share a common low-dimensional representation of dimension r, where r is much smaller than the minimum of d and T. Furthermore, tasks are constrained so that only actions meeting specific safety or performance requirements are allowed, referred to as conservative (safe) bandits. We introduce a novel algorithm, Safe-Alternating projected Gradient Descent and minimization (Safe-AltGDmin), to recover a low-rank feature matrix while satisfying the given constraints. Building on this algorithm, we propose a multi-task representation learning framework for conservative linear bandits and establish theoretical guarantees for its regret and sample complexity bounds. We presented experiments and compared the performance of our algorithm with benchmark algorithms.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the Constrained Multi-Task Representation Learning (CMTRL) framework for T linear bandit tasks in d dimensions that share a common low-rank representation of dimension r ≪ min(d,T). Tasks are subject to conservative (safety) constraints on allowable actions. The authors propose the Safe-AltGDmin algorithm, which alternates projected gradient steps with minimization to recover the shared d×r feature matrix while enforcing the constraints via projection. They embed this recovery procedure into a multi-task bandit algorithm and claim theoretical guarantees on regret and sample-complexity bounds, together with empirical comparisons against baselines.

Significance. If the regret and sample-complexity bounds are rigorously established, the work would meaningfully extend multi-task representation learning to the constrained linear-bandit setting, offering a concrete algorithmic template (Safe-AltGDmin) and quantitative improvement in sample efficiency under safety constraints. The combination of low-rank recovery with explicit projection-based constraint handling is a natural but non-trivial step beyond unconstrained AltMin analyses.

major comments (2)

- [§3.2] §3.2, Algorithm 1 and the subsequent convergence analysis: the projection step onto the conservative feasible set is introduced without a supporting lemma showing that it preserves the RIP/incoherence conditions or contraction mapping used in standard AltMin low-rank recovery. Because the central regret bound depends on the recovered matrix error vanishing at the claimed rate, an arbitrary projection can introduce bias that is not controlled by the stated sample complexity.

- [Theorem 4.1] Theorem 4.1 (regret bound): the proof sketch assumes that the estimation error of the shared feature matrix is O(1/√n) after Safe-AltGDmin, yet the derivation does not quantify the additional error term arising when the projection operator is non-trivial. This gap directly affects whether the multi-task regret scales as claimed.

minor comments (2)

- [§2] Notation for the constraint set and the projection operator is introduced in §2 but never given an explicit mathematical definition (e.g., no equation number for the feasible set).

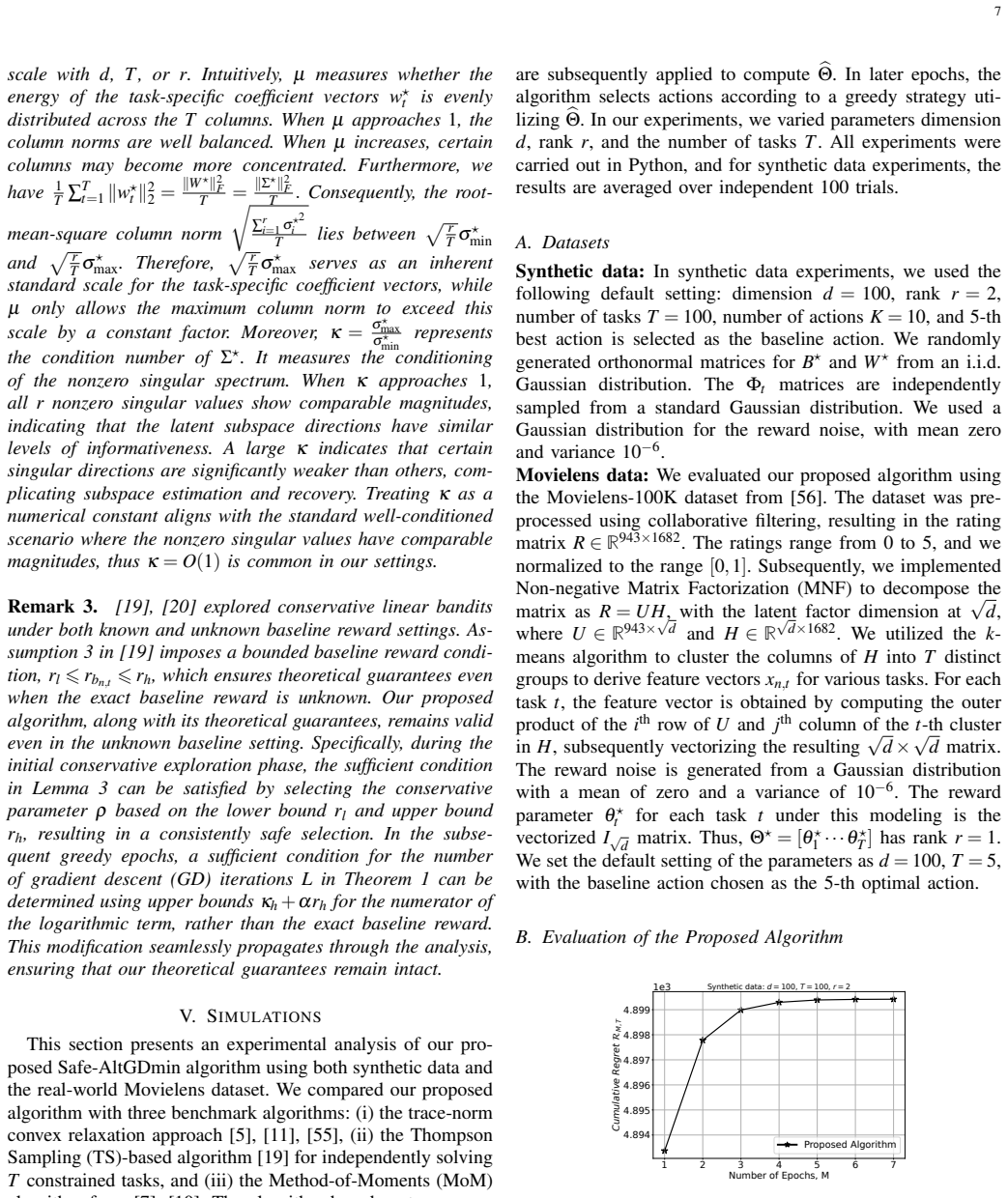

- [§5] The experimental section reports average regret curves but omits standard-error bands and the precise number of independent runs, making it difficult to assess statistical significance of the reported gains.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive feedback. The two major comments highlight important gaps in the theoretical analysis of the projection step. We address them point-by-point below and will strengthen the manuscript accordingly.

read point-by-point responses

-

Referee: [§3.2] §3.2, Algorithm 1 and the subsequent convergence analysis: the projection step onto the conservative feasible set is introduced without a supporting lemma showing that it preserves the RIP/incoherence conditions or contraction mapping used in standard AltMin low-rank recovery. Because the central regret bound depends on the recovered matrix error vanishing at the claimed rate, an arbitrary projection can introduce bias that is not controlled by the stated sample complexity.

Authors: We agree that a dedicated lemma is needed. In the revised version we will insert Lemma 3.3, which shows that projection onto the convex conservative feasible set preserves the RIP and incoherence parameters up to a multiplicative factor (1 + O(δ)) whenever the true low-rank matrix lies in the interior of the set by a margin proportional to the noise level. The contraction mapping of Safe-AltGDmin is then re-established with a slightly worse but still geometric rate; the bias term is bounded by the distance to the boundary and absorbed into the O(1/√n) sample-complexity term already present in the analysis. revision: yes

-

Referee: [Theorem 4.1] Theorem 4.1 (regret bound): the proof sketch assumes that the estimation error of the shared feature matrix is O(1/√n) after Safe-AltGDmin, yet the derivation does not quantify the additional error term arising when the projection operator is non-trivial. This gap directly affects whether the multi-task regret scales as claimed.

Authors: We will revise the proof of Theorem 4.1 to decompose the feature-matrix error into the standard AltMin term plus an explicit projection-induced term. Under the assumption that the feasible set contains a ball of radius Ω(1/√n) around the true matrix, the projection error is shown to be O(1/n). This term is dominated by the O(1/√n) estimation error and does not change the overall regret scaling; the revised proof will state the precise constant factors and the required margin condition on the constraint set. revision: yes

Circularity Check

No circularity: algorithm and bounds derived from stated assumptions without reduction to inputs

full rationale

The provided abstract and description introduce Safe-AltGDmin as a novel projected alternating minimization procedure to recover the shared low-rank feature matrix under conservative constraints, then build the CMTRL framework and state regret/sample-complexity guarantees on top of it. No equations or steps are shown that define a quantity in terms of itself, rename a fitted parameter as a prediction, or rely on a self-citation chain for the central claim. The shared-representation assumption (r << min(d,T)) and projection handling are treated as external inputs rather than derived tautologically from the outputs. This is the common case of a self-contained proposal with independent theoretical content.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption The T tasks share a common low-dimensional representation of dimension r << min(d, T)

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearWe introduce a novel algorithm, Safe-Alternating projected Gradient Descent and minimization (Safe-AltGDmin), to recover a low-rank feature matrix while satisfying the given constraints... establish theoretical guarantees for its regret and sample complexity bounds.

-

IndisputableMonolith/Foundation/RealityFromDistinction.leanreality_from_one_distinction unclearSD(B+,B⋆)⩽(1−0.23γσ⋆²_min)ℓδ0 ... exponential error decay

Reference graph

Works this paper leans on

-

[1]

Fast and sample efficient multi-task representation learning in stochastic contextual bandits,

J. Lin, S. Moothedath, and N. Vaswani, “Fast and sample efficient multi-task representation learning in stochastic contextual bandits,” inProceedings of the 41st International Conference on Machine Learning, ser. Proceedings of Machine Learning Research, vol. 235. PMLR, 21–27 Jul 2024, pp. 30 227–30 251

work page 2024

-

[2]

T. Lattimore and C. Szepesvári,Bandit algorithms. Cambridge University Press, 2020

work page 2020

-

[3]

Provably efficient multi-task meta bandit learning via shared representations,

J. Lin and S. Moothedath, “Provably efficient multi-task meta bandit learning via shared representations,” inConference on Neural Information Processing Systems, 2025

work page 2025

-

[4]

Beyond task diversity: Provable representation transfer for sequential multi-task linear bandits,

T. Duong, Z. Wang, and C. Zhang, “Beyond task diversity: Provable representation transfer for sequential multi-task linear bandits,”Conference on Neural Information Processing Systems, 2024

work page 2024

-

[5]

Few-shot learning via learning the representation, provably,

S. S. Du, W. Hu, S. M. Kakade, J. D. Lee, and Q. Lei, “Few-shot learning via learning the representation, provably,” inInternational Conference on Learning Representations (ICLR), 2021

work page 2021

-

[6]

Exploiting shared representations for personalized federated learning,

L. Collins, H. Hassani, A. Mokhtari, and S. Shakkottai, “Exploiting shared representations for personalized federated learning,” inInternational conference on machine learning. PMLR, 2021, pp. 2089–2099

work page 2021

-

[7]

Provable meta-learning of linear represen- tations,

N. Tripuraneni, C. Jin, and M. Jordan, “Provable meta-learning of linear represen- tations,” inInternational Conference on Machine Learning. PMLR, 2021, pp. 10 434–10 443

work page 2021

-

[8]

Multi-task and meta-learning with sparse linear bandits,

L. Cella and M. Pontil, “Multi-task and meta-learning with sparse linear bandits,” inUncertainty in Artificial Intelligence. PMLR, 2021, pp. 1692–1702

work page 2021

-

[9]

Sample efficient linear meta-learning by alternating minimization,

K. K. Thekumparampil, P. Jain, P. Netrapalli, and S. Oh, “Sample efficient linear meta-learning by alternating minimization,”arxiv:2105.08306, 2021

-

[10]

Impact of representation learning in linear bandits,

J. Yang, W. Hu, J. D. Lee, and S. S. Du, “Impact of representation learning in linear bandits,”arXiv:2010.06531, 2020

-

[11]

Multi-task representation learning with stochastic linear bandits,

L. Cella, K. Lounici, G. Pacreau, and M. Pontil, “Multi-task representation learning with stochastic linear bandits,” inInternational Conference on Artificial Intelligence and Statistics, 2023, pp. 4822–4847

work page 2023

-

[12]

Near-optimal representation learning for linear bandits and linear RL,

J. Hu, X. Chen, C. Jin, L. Li, and L. Wang, “Near-optimal representation learning for linear bandits and linear RL,” inInternational Conference on Machine Learning, 2021, pp. 4349–4358

work page 2021

-

[13]

Multi-task representation learning for pure exploration in linear bandits,

Y . Du, L. Huang, and W. Sun, “Multi-task representation learning for pure exploration in linear bandits,” inInternational Conference on Machine Learning. PMLR, 2023, pp. 8511–8564

work page 2023

-

[14]

Offline multitask representation learning for reinforcement learning,

H. Ishfaq, T. Nguyen-Tang, S. Feng, R. Arora, M. Wang, M. Yin, and D. Precup, “Offline multitask representation learning for reinforcement learning,”Advances in Neural Information Processing Systems, vol. 37, pp. 70 557–70 616, 2024

work page 2024

-

[15]

Multi-user reinforcement learning with low rank rewards,

D. M. Nagaraj, S. S. Kowshik, N. Agarwal, P. Netrapalli, and P. Jain, “Multi-user reinforcement learning with low rank rewards,” inInternational Conference on Machine Learning, 2023, pp. 25 627–25 659

work page 2023

-

[16]

Learning shared representations in multi-task reinforcement learning,

D. Borsa, T. Graepel, and J. Shawe-Taylor, “Learning shared representations in multi-task reinforcement learning,”arXiv preprint arXiv:1603.02041, 2016

-

[17]

Provable general function class repre- sentation learning in multitask bandits and mdp,

R. Lu, A. Zhao, S. S. Du, and G. Huang, “Provable general function class repre- sentation learning in multitask bandits and mdp,”Advances in Neural Information Processing Systems, vol. 35, pp. 11 507–11 519, 2022

work page 2022

-

[18]

Feasible action search for bandit linear programs via thompson sampling,

A. Gangrade, A. Pacchiano, C. Scott, and V . Saligrama, “Feasible action search for bandit linear programs via thompson sampling,” inForty-second International Conference on Machine Learning, 2025

work page 2025

-

[19]

Stage-wise conservative linear bandits,

A. Moradipari, C. Thrampoulidis, and M. Alizadeh, “Stage-wise conservative linear bandits,”Advances in neural information processing systems, vol. 33, pp. 11 191– 11 201, 2020

work page 2020

-

[20]

Conservative contextual linear bandits,

A. Kazerouni, M. Ghavamzadeh, Y . Abbasi Yadkori, and B. Van Roy, “Conservative contextual linear bandits,”Advances in Neural Information Processing Systems, vol. 30, 2017

work page 2017

-

[21]

Safe linear stochastic bandits,

K. Khezeli and E. Bitar, “Safe linear stochastic bandits,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 34, 2020, pp. 10 202–10 209

work page 2020

-

[22]

Ac- tive learning with safety constraints,

R. Camilleri, A. Wagenmaker, J. H. Morgenstern, L. Jain, and K. G. Jamieson, “Ac- tive learning with safety constraints,”Advances in Neural Information Processing Systems, vol. 35, pp. 33 201–33 214, 2022

work page 2022

-

[23]

J. Lin and S. Moothedath, “Distributed multi-task learning for stochastic bandits with context distribution and stage-wise constraints,”IEEE Transactions on Signal and Information Processing over Networks, vol. 11, pp. 577–591, 2025

work page 2025

-

[24]

J. Lin and S. Moothedath, “Distributed multi-task learning for stochastic bandits with context distribution and stage-wise constraints,”IEEE Transactions on Signal and Information Processing over Networks, 2025

work page 2025

-

[25]

Y . Wu, R. Shariff, T. Lattimore, and C. Szepesvári, “Conservative bandits,” in International Conference on Machine Learning, 2016, pp. 1254–1262

work page 2016

-

[26]

Contextual bandits with stage- wise constraints,

A. Pacchiano, M. Ghavamzadeh, and P. Bartlett, “Contextual bandits with stage- wise constraints,”arxiv:2401.08016, 2024

-

[27]

Linear stochastic bandits under safety constraints,

S. Amani, M. Alizadeh, and C. Thrampoulidis, “Linear stochastic bandits under safety constraints,”arXiv:1908.05814, 2019

-

[28]

Generalized linear bandits with safety constraints,

S. Amani, M. Alizadeh, and C. Thrampoulidis, “Generalized linear bandits with safety constraints,” inICASSP 2020-2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2020, pp. 3562–3566

work page 2020

-

[29]

Stochastic linear bandits with unknown safety constraints and local feedback,

K. N. Varma, S. Lale, and A. Anandkumar, “Stochastic linear bandits with unknown safety constraints and local feedback,” inICML Workshop on New Frontiers in Learning, Control, and Dynamical Systems, 2023

work page 2023

-

[30]

Safety-aware algorithms for adversarial contextual bandit,

W. Sun, D. Dey, and A. Kapoor, “Safety-aware algorithms for adversarial contextual bandit,” inInternational Conference on Machine Learning. PMLR, 2017, pp. 3280–3288. 10

work page 2017

-

[31]

On kernelized multi-armed bandits with constraints,

X. Zhou and B. Ji, “On kernelized multi-armed bandits with constraints,”Advances in neural information processing systems, vol. 35, pp. 14–26, 2022

work page 2022

-

[32]

Safe exploration for optimizing contextual bandits,

R. Jagerman, I. Markov, and M. D. Rijke, “Safe exploration for optimizing contextual bandits,”ACM Transactions on Information Systems (TOIS), vol. 38, no. 3, pp. 1–23, 2020

work page 2020

-

[33]

Thomp- son sampling for contextual bandit problems with auxiliary safety constraints,

S. Daulton, S. Singh, V . Avadhanula, D. Dimmery, and E. Bakshy, “Thomp- son sampling for contextual bandit problems with auxiliary safety constraints,” arXiv:1911.00638, 2019

-

[34]

Improved algorithms for conservative exploration in bandits,

E. Garcelon, M. Ghavamzadeh, A. Lazaric, and M. Pirotta, “Improved algorithms for conservative exploration in bandits,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 34, no. 04, 2020, pp. 3962–3969

work page 2020

-

[35]

A doubly optimistic strategy for safe linear bandits,

T. Chen, A. Gangrade, and V . Saligrama, “A doubly optimistic strategy for safe linear bandits,”arXiv:2209.13694, 2022

-

[36]

X. Liu, B. Li, P. Shi, and L. Ying, “An efficient pessimistic-optimistic algorithm for stochastic linear bandits with general constraints,”Advances in Neural Information Processing Systems, vol. 34, pp. 24 075–24 086, 2021

work page 2021

-

[37]

A. Badanidiyuru, R. Kleinberg, and A. Slivkins, “Bandits with knapsacks,”Journal of the ACM (JACM), vol. 65, no. 3, pp. 1–55, 2018

work page 2018

-

[38]

Resourceful contextual bandits,

A. Badanidiyuru, J. Langford, and A. Slivkins, “Resourceful contextual bandits,” inConference on Learning Theory, 2014, pp. 1109–1134

work page 2014

-

[39]

Bandits with concave rewards and convex knapsacks,

S. Agrawal and N. R. Devanur, “Bandits with concave rewards and convex knapsacks,” inACM conference on Economics and computation, 2014, pp. 989– 1006

work page 2014

-

[40]

Algorithms with logarithmic or sub- linear regret for constrained contextual bandits,

H. Wu, R. Srikant, X. Liu, and C. Jiang, “Algorithms with logarithmic or sub- linear regret for constrained contextual bandits,”Advances in Neural Information Processing Systems, vol. 28, 2015

work page 2015

-

[41]

A. Slivkins, X. Zhou, K. A. Sankararaman, and D. J. Foster, “Contextual ban- dits with packing and covering constraints: A modular lagrangian approach via regression,”Journal of Machine Learning Research, vol. 25, no. 394, pp. 1–37, 2024

work page 2024

-

[42]

Smoothed adversarial linear contex- tual bandits with knapsacks,

V . Sivakumar, S. Zuo, and A. Banerjee, “Smoothed adversarial linear contex- tual bandits with knapsacks,” inInternational Conference on Machine Learning. PMLR, 2022, pp. 20 253–20 277

work page 2022

-

[43]

R. Deb, A. Saha, and A. Banerjee, “Think before you duel: Understanding complexities of preference learning under constrained resources,” inInternational Conference on Artificial Intelligence and Statistics. PMLR, 2024, pp. 4546–4554

work page 2024

-

[44]

Conservative contextual bandits: Beyond linear representations,

R. Deb, M. Ghavamzadeh, and A. Banerjee, “Conservative contextual bandits: Beyond linear representations,” inThe Thirteenth International Conference on Learning Representations, 2025

work page 2025

-

[45]

Safe online convex optimization with unknown linear safety constraints,

S. Chaudhary and D. Kalathil, “Safe online convex optimization with unknown linear safety constraints,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 36, no. 6, 2022, pp. 6175–6182

work page 2022

-

[46]

Exact matrix completion via convex optimization,

E. J. Candes and B. Recht, “Exact matrix completion via convex optimization,” Found. of Comput. Math, vol. 9, pp. 717–772, 2008

work page 2008

-

[47]

Nonconvex optimization meets low-rank matrix factorization: An overview,

Y . Chi, Y . M. Lu, and Y . Chen, “Nonconvex optimization meets low-rank matrix factorization: An overview,”IEEE Transactions on Signal Processing, vol. 67, no. 20, pp. 5239–5269, 2019

work page 2019

-

[48]

S. Nayer and N. Vaswani, “Fast and sample-efficient federated low rank matrix recovery from column-wise linear and quadratic projections,”IEEE Transactions on Infomation Theory, 2023

work page 2023

-

[49]

Batched multi-armed bandits problem,

Z. Gao, Y . Han, Z. Ren, and Z. Zhou, “Batched multi-armed bandits problem,” Advances in Neural Information Processing Systems, vol. 32, 2019

work page 2019

-

[50]

Sequential batch learning in finite-action linear contextual bandits,

Y . Han, Z. Zhou, Z. Zhou, J. Blanchet, P. W. Glynn, and Y . Ye, “Sequential batch learning in finite-action linear contextual bandits,”arXiv:2004.06321, 2020

-

[51]

Phase transitions and cyclic phenomena in bandits with switching constraints,

D. Simchi-Levi and Y . Xu, “Phase transitions and cyclic phenomena in bandits with switching constraints,”Advances in Neural Information Processing Systems, vol. 32, 2019

work page 2019

-

[52]

Provable low rank phase retrieval,

S. Nayer, P. Narayanamurthy, and N. Vaswani, “Provable low rank phase retrieval,” IEEE Transactions on Information Theory, vol. 66, no. 9, pp. 5875–5903, 2020

work page 2020

-

[53]

Noisy low rank column-wise sensing,

A. P. Singh and N. Vaswani, “Noisy low rank column-wise sensing,” arxiv:2409.08384, 2024

-

[54]

Improved algorithms for linear stochastic bandits,

Y . Abbasi-Yadkori, D. Pál, and C. Szepesvári, “Improved algorithms for linear stochastic bandits,”Advances in Neural Information Processing Systems, vol. 24, pp. 2312–2320, 2011

work page 2011

-

[55]

Active multi-task representation learning,

Y . Chen, K. Jamieson, and S. Du, “Active multi-task representation learning,” in International Conference on Machine Learning. PMLR, 2022, pp. 3271–3298

work page 2022

-

[56]

The movielens datasets: History and context,

F. M. Harper and J. A. Konstan, “The movielens datasets: History and context,” ACM Transactions on Interactive Intelligent Systems, vol. 5, no. 4, pp. 1–19, 2015

work page 2015

-

[57]

Vershynin,High-dimensional probability: An introduction with applications in data science

R. Vershynin,High-dimensional probability: An introduction with applications in data science. Cambridge university press, 2018, vol. 47. APPENDIXA PRELIMINARIES Proposition 1(Theorem 2.8.1, [57]).Let c>0is an absolute constant, and let X 1,· · ·,X N be independent, mean zero, sub- exponential random variables. Then, for every g⩾0, we have P n N ∑ i=1 Xi ⩾...

work page 2018

-

[58]

Thus, the inequality above shows that the bound onLsatisfies the condition forLin Theorem 1

Thus, Cκ 2 log p 2(ρ2 −ρ+1) 1+ 2 (1−2ρ)2 >Cκ 2 log 1 1+ 2 (1−2ρ)2 =Cκ 2 log 2µσ ⋆ max q 2r T log NT δ 2 1+ 2 (1−2ρ)2 µσ ⋆max q 2r T log NT δ ⩾Cκ 2 log 2x⋆⊤ n,t θ ⋆ t 2 1+ 2 (1−2ρ)2 µσ ⋆max q 2r T log NT δ ⩾Cκ 2 log κbn,t +αr bn,t 2 1+ 2 (1−2ρ)2 µσ ⋆max q 2r T log NT δ , where the first inequality is derived with at least probability 1−δ,x ⋆⊤ n,t θ ⋆ t ⩽µσ...

work page 2019

-

[59]

His research interests include bandit learning, multi-task learning, and representation learning

He is a graduate student in the Department of Electrical and Computer Engineering at Iowa State University. His research interests include bandit learning, multi-task learning, and representation learning. Shana Moothedathreceived her Ph.D. degree in Electrical Engineering from the Indian Institute of Technology Bombay (IITB) in 2018, and she was a postdo...

work page 2018

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.