From EEG Cleaning to Decoding: The Role of Artifact Rejection in MI-based BCIs

Pith reviewed 2026-05-13 03:29 UTC · model grok-4.3

The pith

Automated EEG artifact rejection improves motor imagery BCI decoding most for low-SNR subjects and reduces performance gaps across users.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

FAAR computes compact artifact-sensitive features to derive an epoch-level Signal Quality Index, then adaptively selects rejection thresholds to remove contaminated epochs without prior knowledge of artifact types or manual tuning. Evaluation across 13 MI datasets shows rejection effects are strongly subject- and regime-dependent, with largest gains in low-baseline or low-SNR conditions; FAAR reduces inter-subject performance variability without aggressive data removal and maintains consistent behavior from offline curation through online filtering.

What carries the argument

Fast Automatic Artifact Rejection (FAAR), a lightweight pipeline that derives an adaptive Signal Quality Index from a compact set of artifact-sensitive features to identify and reject contaminated EEG epochs.
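The pipeline described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the five descriptor names come from the paper excerpt quoted further down this page, but the exact formulas, the frequency band, the robust z-score aggregation, and the chord-distance knee rule are all hypothetical stand-ins for FAAR's unpublished details. Here a higher score means a more suspicious epoch.

```python
import math
import statistics


def epoch_features(x, fs, band=(20.0, 40.0)):
    """Five descriptors for one single-channel epoch (band limits are an assumption)."""
    n = len(x)
    mean = sum(x) / n
    c = [v - mean for v in x]                      # mean-removed signal
    var = sum(v * v for v in c) / n
    rms = math.sqrt(var)                           # RMS amplitude
    max_grad = max(abs(b - a) for a, b in zip(x, x[1:]))  # largest sample-to-sample jump
    zcr = sum(1 for a, b in zip(c, c[1:]) if a * b < 0) / (n - 1)  # zero-crossing rate
    kurt = (sum(v ** 4 for v in c) / n) / (var * var) if var > 0 else 0.0
    mags = []                                      # naive DFT magnitudes inside the band
    for k in range(1, n // 2):
        if band[0] <= k * fs / n <= band[1]:
            w = 2 * math.pi * k / n
            re = sum(v * math.cos(w * t) for t, v in enumerate(c))
            im = sum(v * math.sin(w * t) for t, v in enumerate(c))
            mags.append(math.hypot(re, im) / n)
    band_mag = sum(mags) / len(mags) if mags else 0.0
    return [band_mag, rms, max_grad, zcr, kurt]


def artifact_scores(feature_rows):
    """Per-epoch score: largest robust z-score over features (assumed aggregation)."""
    scores = [0.0] * len(feature_rows)
    for col in zip(*feature_rows):
        med = statistics.median(col)
        mad = statistics.median(abs(v - med) for v in col)
        if mad == 0:
            continue                               # feature has no robust spread; skip it
        for i, v in enumerate(col):
            scores[i] = max(scores[i], abs(v - med) / (1.4826 * mad))
    return scores


def knee_threshold(scores):
    """Score at the point of the sorted curve lying farthest below its chord."""
    s = sorted(scores)
    n = len(s)
    if n < 3 or s[-1] == s[0]:
        return float("inf")                        # no usable knee: reject nothing
    best_i, best_gap = 0, -1.0
    for i, v in enumerate(s):
        gap = i / (n - 1) - (v - s[0]) / (s[-1] - s[0])
        if gap > best_gap:
            best_i, best_gap = i, gap
    return s[best_i]


def reject_epochs(feature_rows):
    """Indices of epochs whose score exceeds the adaptively chosen threshold."""
    scores = artifact_scores(feature_rows)
    thr = knee_threshold(scores)
    return [i for i, sc in enumerate(scores) if sc > thr]
```

In use, `epoch_features` would be called once per epoch (and per channel, with some channel aggregation that is not specified here), and the resulting rows passed to `reject_epochs`. The threshold is derived from the score distribution itself, which is the sense in which no manual tuning is needed in this sketch.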

If this is right

- Rejection policies should be chosen adaptively according to each subject's baseline performance and data SNR rather than applied uniformly.

- FAAR lowers inter-subject variability in MI-BCI accuracy, supporting more consistent reliability and helping address BCI illiteracy.

- The largest accuracy improvements occur in low-baseline or low-SNR regimes while high-quality data sees little change.

- The method preserves enough data for training while remaining fast enough for both offline and real-time BCI pipelines.

- FAAR produces rejection decisions that stay consistent from training-set curation through online filtering.
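The last point, consistency from offline curation to online filtering, amounts to fitting the rejection statistics once and then reusing them unchanged. The sketch below illustrates that idea only; the class name `FrozenRejector`, the median/MAD scaling, and the fixed `threshold` argument are hypothetical and are not taken from the paper.

```python
import statistics


class FrozenRejector:
    """Fit robust statistics on calibration epochs, then apply them unchanged online."""

    def __init__(self, calibration_rows, threshold):
        cols = list(zip(*calibration_rows))
        self.medians = [statistics.median(c) for c in cols]
        # 1.4826 * MAD approximates the standard deviation for Gaussian data
        self.scales = [
            1.4826 * statistics.median(abs(v - m) for v in c)
            for c, m in zip(cols, self.medians)
        ]
        self.threshold = threshold  # chosen offline, never re-estimated online

    def score(self, row):
        # Features with zero spread in calibration are ignored
        zs = [abs(v - m) / s for v, m, s in zip(row, self.medians, self.scales) if s > 0]
        return max(zs, default=0.0)

    def keep(self, row):
        return self.score(row) <= self.threshold
```

Because `keep` depends only on values frozen at construction, the same epoch receives the same decision whether it is screened during training-set curation or as it arrives in a live session.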

Where Pith is reading between the lines

- Standard MI-BCI pipelines could incorporate adaptive rejection as a default preprocessing step to improve usability for more users.

- Similar lightweight adaptive rejection might benefit EEG decoding tasks beyond motor imagery, such as P300 or SSVEP paradigms.

- Lowering inter-subject spread while keeping data removal modest could raise overall data efficiency when training subject-specific or transfer decoders.

- Patient-facing BCI applications may gain from such methods because they handle variable real-world signal quality without requiring expert oversight.

Load-bearing premise

The chosen artifact-sensitive features and resulting Signal Quality Index can correctly flag contaminated epochs across many different MI datasets and artifact varieties without any prior knowledge or manual threshold adjustment.

What would settle it

On a new MI dataset, FAAR fails to raise (or actively lowers) decoding accuracy for low-baseline subjects, or manual expert review finds that many of the rejected epochs were actually clean.
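Both conditions can be checked with simple bookkeeping once per-subject accuracies and expert labels are available. The following is a sketch under assumptions: the function name, the 0.6 accuracy cutoff used to define "low-baseline", and the input layout are hypothetical choices made for illustration.

```python
def settle_check(baseline_acc, faar_acc, rejected_is_clean, low_cutoff=0.6):
    """Summarise the two falsification criteria.

    baseline_acc, faar_acc: dicts mapping subject id -> accuracy in [0, 1]
    rejected_is_clean: list of booleans, one per rejected epoch, True if an
        expert judged the epoch clean
    low_cutoff: hypothetical accuracy cutoff defining low-baseline subjects
    """
    low = [s for s, a in baseline_acc.items() if a < low_cutoff]
    deltas = [faar_acc[s] - baseline_acc[s] for s in low]
    mean_delta = sum(deltas) / len(deltas) if deltas else float("nan")
    if rejected_is_clean:
        false_reject_rate = sum(rejected_is_clean) / len(rejected_is_clean)
    else:
        false_reject_rate = float("nan")
    return {
        "low_subjects": low,
        "mean_delta_low": mean_delta,
        "false_reject_rate": false_reject_rate,
    }
```

A non-positive `mean_delta_low` or a high `false_reject_rate` on held-out data would count against the claim; what counts as "high" is a judgment the source does not quantify.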

Figures

read the original abstract

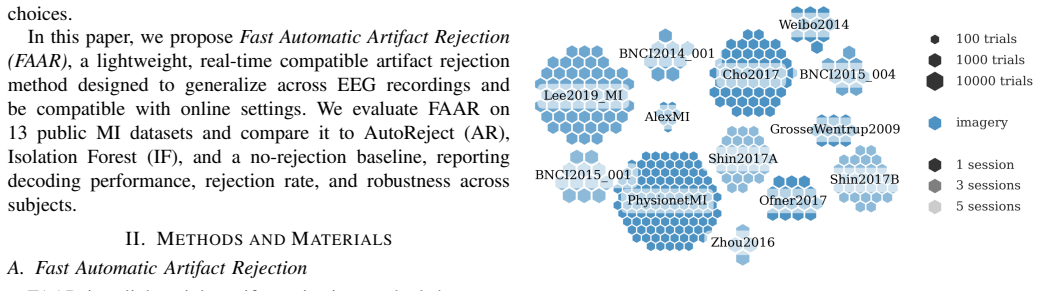

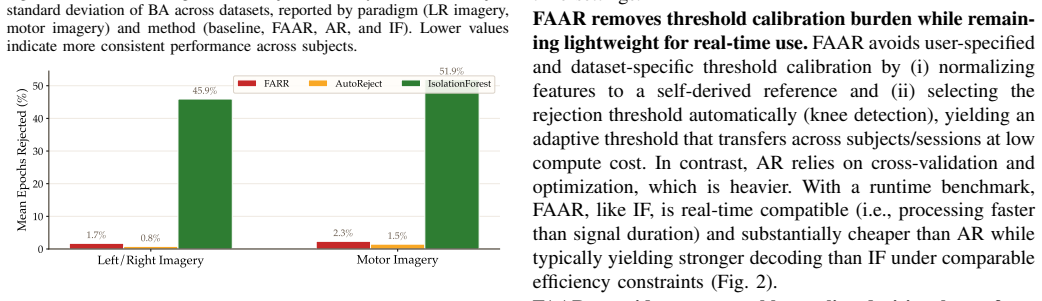

Motor imagery (MI) BCIs are sensitive to EEG artifacts, yet the practical impact of automated artifact rejection on downstream MI decoding performance remains unclear. While most work focuses on decoder design, the contribution of data curation, particularly automated rejection policies, has received comparatively less attention, despite its importance for robust ML pipelines. Here, we propose Fast Automatic Artifact Rejection (FAAR), a lightweight method that computes a compact set of artifact-sensitive features, derives an epoch-level Signal Quality Index, adaptively selects rejection thresholds, and automatically rejects contaminated epochs without requiring prior knowledge of artifact types or manual threshold tuning. We evaluate FAAR on 13 publicly available MI datasets and compare it to a no-rejection baseline, AutoReject, and Isolation Forest. We show rejection effects are strongly subject- and regime-dependent, with the largest gains in low-baseline/low-SNR conditions, so it should be used adaptively. FAAR reduces inter-subject performance variability, an important property for MI-BCI reliability and BCI-illiteracy, without aggressive data removal. Finally, FAAR's lightweight and fully automated thresholding yields consistent rejection behavior across offline curation, training, and online filtering, and supports real-time BCI constraints.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes Fast Automatic Artifact Rejection (FAAR), a lightweight automated method for EEG artifact rejection in motor imagery (MI) BCIs. It computes a compact set of artifact-sensitive features to derive an epoch-level Signal Quality Index (SQI), adaptively selects rejection thresholds without prior artifact knowledge or manual tuning, and evaluates the approach on 13 public MI datasets. The central claims are that rejection effects are strongly subject- and regime-dependent (largest gains in low-baseline/low-SNR conditions), that FAAR reduces inter-subject performance variability without aggressive data removal, and that it supports consistent offline-to-online use.

Significance. If the quantitative results, statistical tests, and mechanistic controls hold, the work would be significant for MI-BCI research. It shifts attention from decoder design alone to data curation, argues that adaptive rather than blanket rejection improves reliability, especially for low-SNR subjects and users affected by BCI illiteracy, and supplies a practical, real-time-compatible tool. The scale of the 13-dataset evaluation and the emphasis on downstream decoding performance (rather than isolated cleaning metrics) are strengths, provided they are properly documented.

major comments (3)

- [Evaluation (likely §4–5)] Evaluation section: the abstract and summary claim subject- and regime-dependent gains with largest improvements in low-baseline/low-SNR conditions and reduced inter-subject variability, yet no quantitative performance deltas, error bars, statistical tests (e.g., paired t-tests or ANOVA across subjects), or details on how the three baselines were configured are provided. This prevents verification of the central claims and of whether FAAR outperforms the baselines in a load-bearing way.

- [Method and Evaluation] Method and Evaluation sections: the claim that performance gains and variability reduction are attributable to FAAR’s artifact-specific features and adaptive SQI (rather than generic data culling or dataset-specific selection effects) requires an explicit control that rejects an identical fraction of epochs at random while preserving the same downstream decoder. Current comparisons to no-rejection, AutoReject, and Isolation Forest do not isolate the mechanism; without it the attribution to artifact rejection remains unproven.

- [Method] Method section: the assumption that the compact artifact-sensitive features and derived SQI reliably identify contaminated epochs across 13 diverse MI datasets and artifact types without any prior knowledge or manual tuning is load-bearing for the “fully automated” and “no prior knowledge” claims, yet no ablation on feature choice, cross-dataset generalization tests, or failure-case analysis is referenced.
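The statistics requested in the first major comment are straightforward to compute from per-subject accuracies. The sketch below shows one way to obtain the mean paired delta, the paired t statistic, and the inter-subject standard deviation before and after rejection. The function name and input layout are assumptions; the p-value is omitted because it needs a t distribution, for which a library routine such as `scipy.stats.ttest_rel` would normally be used.

```python
import math
import statistics


def paired_summary(baseline, treated):
    """Paired comparison of per-subject accuracies (same subject order in both lists)."""
    diffs = [t - b for b, t in zip(baseline, treated)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)          # sample SD of the paired differences
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    return {
        "mean_delta": mean_diff,
        "t_stat": t_stat,
        "dof": n - 1,
        # Inter-subject spread with and without rejection
        "sd_baseline": statistics.stdev(baseline),
        "sd_treated": statistics.stdev(treated),
    }
```

Comparing `sd_treated` with `sd_baseline` gives a direct, if crude, reading of the "reduced inter-subject variability" claim; a formal test of variance differences would be a separate step.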

minor comments (2)

- [Abstract/Introduction] Abstract and Introduction: the phrase “compact set of artifact-sensitive features” is used without naming the features or their dimensionality; a brief enumeration or reference to the exact feature list should appear in the abstract or early introduction for immediate clarity.

- [Results] The manuscript would benefit from an explicit statement of the exact fraction of epochs rejected by FAAR on average (and per regime) to allow readers to judge whether the “without aggressive data removal” claim is supported by the numbers.
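The rejected fraction asked for in the second minor comment could be reported per subject and per regime along the following lines. This is an illustrative sketch: the regime split by a 0.6 baseline-accuracy cutoff and all names are hypothetical, since the source does not define the regimes numerically.

```python
def rejection_fractions(n_epochs, n_rejected, baseline_acc, low_cutoff=0.6):
    """Return per-subject rejected fractions and their mean per regime.

    n_epochs, n_rejected, baseline_acc: dicts keyed by subject id
    low_cutoff: hypothetical accuracy cutoff separating low and high regimes
    """
    per_subject = {s: n_rejected[s] / n_epochs[s] for s in n_epochs}
    regimes = {"low": [], "high": []}
    for s, frac in per_subject.items():
        regimes["low" if baseline_acc[s] < low_cutoff else "high"].append(frac)
    summary = {
        name: (sum(v) / len(v) if v else float("nan"))
        for name, v in regimes.items()
    }
    return per_subject, summary
```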

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment point-by-point below. We will revise the manuscript to incorporate the requested quantitative reporting, mechanistic controls, and additional analyses.

- Referee: [Evaluation (likely §4–5)] Evaluation section: the abstract and summary claim subject- and regime-dependent gains with largest improvements in low-baseline/low-SNR conditions and reduced inter-subject variability, yet no quantitative performance deltas, error bars, statistical tests (e.g., paired t-tests or ANOVA across subjects), or details on how the three baselines were configured are provided. This prevents verification of the central claims and of whether FAAR outperforms the baselines in a load-bearing way.

  Authors: We agree that more explicit quantitative support is needed to verify the central claims. While the manuscript reports subject-level performance across the 13 datasets and notes regime-dependent patterns, we acknowledge that aggregated deltas, error bars, and formal statistical tests were not presented in sufficient detail. In the revised version we will add: mean performance deltas with standard deviations, error bars on all figures, paired t-tests and ANOVA results (with p-values) focused on low-SNR subjects, and full configuration details for AutoReject and Isolation Forest. These changes will allow direct assessment of whether FAAR produces load-bearing improvements and reduces inter-subject variability.

  Revision: yes

- Referee: [Method and Evaluation] Method and Evaluation sections: the claim that performance gains and variability reduction are attributable to FAAR’s artifact-specific features and adaptive SQI (rather than generic data culling or dataset-specific selection effects) requires an explicit control that rejects an identical fraction of epochs at random while preserving the same downstream decoder. Current comparisons to no-rejection, AutoReject, and Isolation Forest do not isolate the mechanism; without it the attribution to artifact rejection remains unproven.

  Authors: The referee correctly notes that isolating the contribution of the artifact-sensitive features and adaptive SQI requires a matched random-rejection control. Our existing baselines provide useful contrasts but do not directly test whether equivalent random culling yields similar gains. We will therefore add a new control experiment: for each subject and dataset, randomly reject the identical fraction of epochs that FAAR rejects and evaluate the same downstream decoder. Results will be reported alongside the existing comparisons to strengthen the mechanistic attribution.

  Revision: yes

- Referee: [Method] Method section: the assumption that the compact artifact-sensitive features and derived SQI reliably identify contaminated epochs across 13 diverse MI datasets and artifact types without any prior knowledge or manual tuning is load-bearing for the “fully automated” and “no prior knowledge” claims, yet no ablation on feature choice, cross-dataset generalization tests, or failure-case analysis is referenced.

  Authors: We agree that explicit validation of the feature set and SQI would reinforce the automation claims. Although the 13-dataset evaluation already demonstrates broad applicability, we will add in revision: (1) an ablation removing individual features or the SQI to quantify their individual contributions, (2) leave-one-dataset-out generalization tests, and (3) a failure-case analysis identifying subjects or artifact regimes where FAAR yields limited benefit, with discussion of possible causes. These additions will be placed in the Method and Evaluation sections.

  Revision: yes
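The matched random-rejection control discussed in the second exchange can be set up with very little code. The sketch below draws, for one subject, a random set of epochs of the same size as the set FAAR rejected, so that the same downstream decoder can be trained on both reduced sets. Function names and the seeding scheme are hypothetical; the decoder itself is out of scope here.

```python
import random


def matched_random_rejection(n_epochs, faar_rejected, seed=0):
    """Randomly pick as many epochs to reject as FAAR rejected for this subject."""
    rng = random.Random(seed)            # seeded for reproducible control draws
    k = len(set(faar_rejected))          # match the count of distinct rejected epochs
    return sorted(rng.sample(range(n_epochs), k))


def split_kept(n_epochs, rejected):
    """Indices of the epochs that remain after rejection."""
    rejected = set(rejected)
    return [i for i in range(n_epochs) if i not in rejected]
```

Repeating the draw over several seeds and averaging the decoder's accuracy would give a null distribution against which the FAAR result can be compared. If random culling of the same size yields similar gains, the improvement cannot be attributed to artifact-specific selection.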

Circularity Check

No significant circularity; empirical method and evaluation are self-contained.

The paper proposes and evaluates an empirical artifact-rejection pipeline (FAAR) that extracts features, computes an SQI, and applies adaptive thresholding on 13 MI datasets, with comparisons to no-rejection, AutoReject, and Isolation Forest baselines. No equations, first-principles derivations, fitted-parameter predictions, or load-bearing self-citations appear in the provided text or abstract. Performance claims (subject- and regime-dependent gains, reduced inter-subject variability) rest on experimental outcomes rather than reducing by construction to inputs, definitions, or prior author work. This matches the default case of an applied ML study whose central results are externally falsifiable via the reported dataset comparisons and do not invoke uniqueness theorems or ansatzes smuggled through citations.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  FAAR computes five complementary descriptors... Band-limited spectral magnitude... RMS amplitude... Maximum temporal gradient... Zero-crossing rate... Kurtosis... derives an epoch-level Signal Quality Index... adaptive SQI threshold... knee-detection algorithm

- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean · reality_from_one_distinction · unclear

  We evaluate FAAR on 13 publicly available MI datasets... compare it to a no-rejection baseline, AutoReject, and Isolation Forest

