
Neural posterior estimation for scalable and accurate inverse parameter inference in Li-ion batteries

Pith reviewed 2026-05-13 20:33 UTC · model grok-4.3

The pith

Neural posterior estimation calibrates Li-ion battery parameters as accurately as Bayesian calibration but in milliseconds rather than minutes.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Neural posterior estimation trains a neural network on many simulated voltage curves generated from the physics-based model so that, after training, it directly outputs the full posterior distribution over parameters for any new observed voltage trace. When tested against Bayesian calibration on the same experimental fast-charge dataset, NPE produces parameter estimates that match or exceed the accuracy of Bayesian calibration while cutting inference time from minutes to milliseconds. The method additionally supplies local sensitivity maps that link each parameter to particular regions of the voltage response, and the recovered parameters align with independent measurements of loss of lithium inventory and loss of cycl…
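For reference, the training objective behind this description can be written out. This is the standard NPE loss, not an equation reproduced from the paper; the Gaussian expansion assumes the family q_ϕ(θ|x) = N(μ(x), σ(x)I) quoted further down this page, reading σ(x)I as a diagonal covariance with per-parameter standard deviations σ_i(x) (our interpretation of the notation).

```latex
\mathcal{L}(\phi) = \mathbb{E}_{\theta \sim p(\theta),\, x \sim p(x \mid \theta)}\left[-\log q_\phi(\theta \mid x)\right],
\qquad
-\log q_\phi(\theta \mid x) = \sum_{i=1}^{d}\left[\frac{\left(\theta_i - \mu_i(x)\right)^2}{2\,\sigma_i(x)^2} + \log \sigma_i(x)\right] + \frac{d}{2}\log(2\pi)
```

The expectation is estimated by averaging over simulated pairs (θ, x). Minimizing it is equivalent to minimizing the expected Kullback-Leibler divergence KL(p(θ | x) ‖ q_ϕ(θ | x)) over simulated data, so a sufficiently flexible network approximates the posterior for every observation the simulator can produce at once; inference on a new trace is then a single evaluation of μ(x) and σ(x).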

What carries the argument

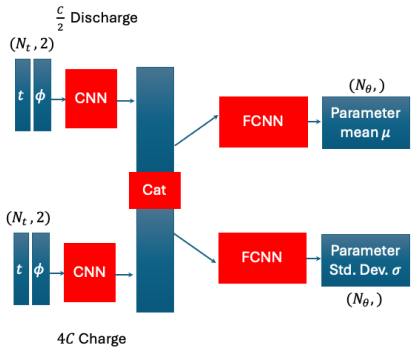

Neural posterior estimation (NPE), a simulation-based inference method that trains a neural network to map observed voltage data directly to the posterior distribution of model parameters.
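To make the amortization concrete, here is a minimal sketch of NPE under heavy simplifying assumptions: a hypothetical two-parameter toy curve (`simulate`) stands in for the physics-based model, the prior is uniform on [-1, 1]², and a linear map replaces the neural network, so q(θ | x) = N(Wx + b, diag(s²)). For this Gaussian family the maximum-likelihood fit of the mean is ordinary least squares and the fitted variance is the mean squared residual. None of the names below come from the paper or its BatFIT repository.

```python
import numpy as np

# Hypothetical toy "voltage curve": two parameters shape a curve sampled on T.
# It stands in for the physics-based battery model used in the paper.
T = np.linspace(0.0, 1.0, 20)


def simulate(theta, rng, noise=0.01):
    """Map parameters of shape (n, 2) to noisy curves of shape (n, len(T))."""
    curves = 3.5 + theta[:, :1] * T + theta[:, 1:2] * T**2
    return curves + noise * rng.standard_normal(curves.shape)


def train_npe(n_sims=5000, seed=0):
    """Offline stage: simulate (theta, x) pairs and fit q(theta | x).

    q(theta | x) = N(W x + b, diag(s**2)). Maximizing the average log q
    over the simulated pairs gives least squares for (W, b) and the
    per-parameter mean squared residual for s**2.
    """
    rng = np.random.default_rng(seed)
    theta = rng.uniform(-1.0, 1.0, size=(n_sims, 2))  # prior draws
    x = simulate(theta, rng)                           # simulated curves
    X = np.hstack([x, np.ones((n_sims, 1))])           # bias column gives b
    coef, *_ = np.linalg.lstsq(X, theta, rcond=None)   # shape (len(T) + 1, 2)
    s = np.sqrt(np.mean((theta - X @ coef) ** 2, axis=0))
    return coef, s


def posterior(coef, s, x_obs):
    """Online stage: one matrix product per observed curve, no simulator calls."""
    x_obs = np.atleast_2d(x_obs)
    X = np.hstack([x_obs, np.ones((x_obs.shape[0], 1))])
    return X @ coef, np.tile(s, (x_obs.shape[0], 1))


if __name__ == "__main__":
    coef, s = train_npe()
    x_obs = simulate(np.array([[0.3, -0.5]]), np.random.default_rng(1))
    mean, std = posterior(coef, s, x_obs)
    print("posterior mean:", mean[0], "posterior std:", std[0])
```

Calling `posterior` on a new curve costs one small matrix product, which is the sense in which NPE moves the expense into the offline `train_npe` stage. A realistic implementation would use a neural network (or a normalizing flow) for μ(x) and σ(x) so that the posterior width can vary with the observation.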

If this is right

- Parameter estimation becomes fast enough for real-time diagnostics during battery operation.

- The offline data-generation cost remains tractable even in high-dimensional cases with up to 27 estimated parameters.

- Local sensitivity information identifies which parts of the voltage curve constrain each parameter.

- Validation against physical degradation measurements confirms the estimates reflect actual cell state.
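One generic way to produce a local sensitivity map of the kind described in the third bullet is to differentiate the posterior mean with respect to each voltage sample. The sketch below applies central finite differences to any function `mean_fn`, a hypothetical stand-in for a trained NPE network's mean output; the paper's exact procedure is not described on this page and may differ.

```python
import numpy as np


def sensitivity_map(mean_fn, x, eps=1e-4):
    """Central-difference Jacobian d mu_j / d x_k of a posterior-mean function.

    mean_fn maps a 1-D voltage curve of length n to d posterior means.
    Returns an array of shape (d, n); large |entries| mark the voltage
    samples that most influence each parameter estimate around x.
    """
    x = np.asarray(x, dtype=float)
    d = np.asarray(mean_fn(x)).size
    jac = np.empty((d, x.size))
    for k in range(x.size):
        step = np.zeros_like(x)
        step[k] = eps
        jac[:, k] = (np.asarray(mean_fn(x + step)) - np.asarray(mean_fn(x - step))) / (2 * eps)
    return jac


def toy_mean(x):
    """Hypothetical posterior mean: parameter 0 reads the first half, parameter 1 the second."""
    half = x.size // 2
    return np.array([x[:half].mean(), 2.0 * x[half:].mean()])


if __name__ == "__main__":
    jac = sensitivity_map(toy_mean, np.linspace(3.0, 4.2, 10))
    print(np.round(jac, 3))
```

In the toy case the map shows that parameter 0 depends only on the first half of the curve and parameter 1 only on the second. For a trained network, plotting |jac| against the voltage-curve coordinate gives a rough analogue of per-parameter sensitivity maps.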

Where Pith is reading between the lines

- The same trained network could be reused across many cells or operating conditions once the initial simulation budget is spent.

- Combining NPE outputs with streaming sensor data could support continuous online updating of battery state estimates.

- The interpretability maps may help identify which measurements are most informative for future sensor design.

Load-bearing premise

The neural network trained only on simulated data from the physics-based model generalizes accurately to real experimental voltage curves without substantial distribution shift.

What would settle it

New experimental voltage cycles where the voltage prediction error from NPE-derived parameters exceeds that from Bayesian calibration, or where the inferred parameters fail to match independent measurements of lithium inventory loss.
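The first test above reduces to comparing per-cycle voltage errors. A minimal sketch, assuming you already have model-predicted voltage curves for each held-out cycle from NPE-derived and Bayesian-calibrated parameters (the array and function names are hypothetical, and the forward model is not shown):

```python
import numpy as np


def voltage_rmse(v_pred, v_meas):
    """Per-cycle root-mean-square voltage error; inputs shaped (n_cycles, n_points)."""
    v_pred = np.asarray(v_pred, dtype=float)
    v_meas = np.asarray(v_meas, dtype=float)
    return np.sqrt(np.mean((v_pred - v_meas) ** 2, axis=-1))


def cycles_where_npe_worse(v_npe, v_bc, v_meas):
    """Indices of cycles where NPE-derived parameters fit the measured voltage worse than Bayesian calibration."""
    return np.flatnonzero(voltage_rmse(v_npe, v_meas) > voltage_rmse(v_bc, v_meas))


if __name__ == "__main__":
    v_meas = np.zeros((2, 3))
    v_npe = np.array([[0.01, 0.01, 0.01], [0.0, 0.0, 0.0]])
    v_bc = np.array([[0.0, 0.0, 0.0], [0.02, 0.02, 0.02]])
    print(cycles_where_npe_worse(v_npe, v_bc, v_meas))
```

Since the abstract already notes that NPE can lead to higher voltage prediction errors, a single such cycle is not decisive; how often and by how much NPE is worse is what matters.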


Original abstract

Diagnosing the internal state of Li-ion batteries is critical for battery research, operation of real-world systems, and prognostic evaluation of remaining lifetime. By using physics-based models to perform probabilistic parameter estimation via Bayesian calibration, diagnostics can account for the uncertainty due to model fitness, data noise, and the observability of any given parameter. However, Bayesian calibration in Li-ion batteries using electrochemical data is computationally intensive even when using a fast surrogate in place of physics-based models, requiring many thousands of model evaluations. A fully amortized alternative is neural posterior estimation (NPE). NPE shifts the computational burden from the parameter estimation step to data generation and model training, reducing the parameter estimation time from minutes to milliseconds, enabling real-time applications. The present work shows that NPE calibrates parameters equally or more accurately than Bayesian calibration, and we demonstrate that the higher computational costs for data generation are tractable even in high-dimensional cases (ranging from 6 to 27 estimated parameters), but the NPE method can lead to higher voltage prediction errors. The NPE method also offers several interpretability advantages over Bayesian calibration, such as local parameter sensitivity to specific regions of the voltage curve. The NPE method is demonstrated using an experimental fast charge dataset, with parameter estimates validated against measurements of loss of lithium inventory and loss of active material. The implementation is made available in a companion repository (https://github.com/NatLabRockies/BatFIT).
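The cost contrast described in the abstract can be seen in a toy setting. The sketch below runs random-walk Metropolis, chosen only for brevity and not necessarily the sampler used in the paper, on a hypothetical two-parameter curve model (`toy_voltage`) with a Gaussian likelihood and a uniform prior on [-1, 1]², and counts forward-model evaluations. That count is paid again for every new observed curve, whereas an amortized NPE posterior is evaluated with a single network pass.

```python
import numpy as np

T = np.linspace(0.0, 1.0, 20)


def toy_voltage(theta):
    """Hypothetical 2-parameter stand-in for a physics-based battery model."""
    return 3.5 + theta[0] * T + theta[1] * T**2


def metropolis(v_obs, n_steps=10000, step=0.01, noise=0.01, seed=0):
    """Random-walk Metropolis sampling of p(theta | v_obs).

    Gaussian likelihood with std `noise`, uniform prior on [-1, 1]^2.
    Returns the chain and the number of forward-model evaluations.
    """
    rng = np.random.default_rng(seed)
    n_evals = 0

    def log_post(theta):
        nonlocal n_evals
        if np.any(np.abs(theta) > 1.0):
            return -np.inf  # outside the prior support: no model call needed
        n_evals += 1
        r = v_obs - toy_voltage(theta)
        return -0.5 * np.sum(r**2) / noise**2

    theta = np.zeros(2)
    lp = log_post(theta)
    chain = np.empty((n_steps, 2))
    for i in range(n_steps):
        prop = theta + step * rng.standard_normal(2)
        lp_prop = log_post(prop)
        if np.log(rng.uniform()) < lp_prop - lp:  # Metropolis acceptance test
            theta, lp = prop, lp_prop
        chain[i] = theta
    return chain, n_evals


if __name__ == "__main__":
    theta_true = np.array([0.3, -0.5])
    v_obs = toy_voltage(theta_true) + 0.01 * np.random.default_rng(1).standard_normal(T.size)
    chain, n_evals = metropolis(v_obs)
    print("posterior mean:", chain[len(chain) // 2:].mean(axis=0), "model evaluations:", n_evals)
```

The sampler makes about one model evaluation per step. In the paper's setting each evaluation is a physics-based (or surrogate) simulation, which is why per-curve calibration takes minutes.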

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces neural posterior estimation (NPE) as a fully amortized alternative to Bayesian calibration for inferring parameters in physics-based Li-ion battery models from voltage curves. It claims that NPE achieves equal or superior parameter accuracy, reduces inference time from minutes to milliseconds, provides interpretability advantages such as local sensitivity to voltage-curve regions, and is validated on experimental fast-charge data against independent loss-of-lithium-inventory and loss-of-active-material measurements, with open-source code provided.

Significance. If the central generalization claim holds, the work would enable scalable, real-time probabilistic diagnostics for high-dimensional battery models (6–27 parameters), shifting computational cost to offline training while preserving calibration quality; the explicit validation against independent degradation measurements and the open repository are notable strengths that could accelerate adoption in battery research and management systems.

major comments (2)

- [Abstract] The claim of equal or superior parameter calibration accuracy is immediately qualified by the statement that NPE 'can lead to higher voltage prediction errors'; without a side-by-side quantitative comparison of voltage reconstruction RMSE or posterior predictive coverage on the experimental dataset, the accuracy assertion remains under-supported.

- [Validation] The central generalization assumption (that NPE posteriors trained exclusively on physics-model simulations remain well-calibrated on real experimental fast-charge curves) is not accompanied by an explicit sim-to-real discrepancy metric, domain-adaptation diagnostic, or posterior predictive check on held-out real voltage segments. This leaves open the possibility that apparent parameter accuracy reflects model mismatch rather than true inference quality.
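The posterior predictive coverage the referee asks for can be computed directly from posterior samples. A minimal sketch, assuming posterior draws `theta_samples` (from NPE or MCMC) and a hypothetical forward model `simulate_fn` that returns the predicted voltage on a held-out segment; neither name comes from the paper:

```python
import numpy as np


def predictive_coverage(theta_samples, simulate_fn, v_heldout, level=0.9, noise=0.0, seed=0):
    """Fraction of held-out voltage points inside the central `level` predictive band.

    theta_samples: (S, d) posterior draws.
    simulate_fn:   maps one parameter vector to a curve aligned with v_heldout.
    noise:         measurement-noise std added to each predicted curve
                   (0 keeps parameter uncertainty only).
    For a well-calibrated posterior the result should be close to `level`.
    """
    rng = np.random.default_rng(seed)
    preds = np.array([simulate_fn(th) for th in theta_samples])
    preds = preds + noise * rng.standard_normal(preds.shape)
    lo, hi = np.quantile(preds, [(1 - level) / 2, (1 + level) / 2], axis=0)
    v = np.asarray(v_heldout, dtype=float)
    return float(np.mean((v >= lo) & (v <= hi)))


def flat_curve(theta):
    """Toy forward model: a constant 5-point curve at level theta[0]."""
    return np.full(5, theta[0])


if __name__ == "__main__":
    samples = np.linspace(0.0, 1.0, 101)[:, None]  # toy posterior draws
    print(predictive_coverage(samples, flat_curve, [0.5, 0.5, 2.0, -1.0, 0.5]))  # → 0.6
```

Reporting this number for both NPE and Bayesian-calibration posteriors on the same held-out segments gives the side-by-side comparison the comment calls for.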

minor comments (1)

- [Implementation] The companion repository link is provided; confirming that it contains the exact NPE architecture, training hyperparameters, and simulation data-generation scripts would strengthen reproducibility.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. We address each major comment point by point below and indicate the planned revisions.

Point-by-point responses

- Referee: [Abstract] The claim of equal or superior parameter calibration accuracy is immediately qualified by the statement that NPE 'can lead to higher voltage prediction errors'; without a side-by-side quantitative comparison of voltage reconstruction RMSE or posterior predictive coverage on the experimental dataset, the accuracy assertion remains under-supported.

  Authors: We agree that a direct quantitative comparison on the experimental dataset would strengthen the accuracy claim. In the revised manuscript we will add a table reporting voltage reconstruction RMSE and posterior predictive coverage metrics for both NPE and Bayesian calibration posteriors evaluated on the experimental fast-charge curves. Revision: yes.

- Referee: [Validation] The central generalization assumption (that NPE posteriors trained exclusively on physics-model simulations remain well-calibrated on real experimental fast-charge curves) is not accompanied by an explicit sim-to-real discrepancy metric, domain-adaptation diagnostic, or posterior predictive check on held-out real voltage segments. This leaves open the possibility that apparent parameter accuracy reflects model mismatch rather than true inference quality.

  Authors: The current validation relies on independent experimental measurements of loss of lithium inventory and loss of active material, which are obtained outside the voltage-curve fitting process and therefore provide a check against model mismatch. We acknowledge that additional diagnostics would further address the sim-to-real concern. In the revision we will include posterior predictive checks on held-out segments of the experimental voltage curves, together with a quantitative sim-to-real discrepancy metric based on residual distributions. Revision: yes.
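The authors do not say which residual-based discrepancy metric they will use. One simple option, offered here only as an illustration, is the one-dimensional Wasserstein-1 distance between residuals from fits to simulated held-out curves (`resid_sim`) and residuals from fits to real curves (`resid_real`); both arrays are hypothetical inputs, and the result is in volts. For empirical distributions it equals the integral of the absolute difference between the two empirical CDFs.

```python
import numpy as np


def wasserstein_1d(a, b):
    """Exact Wasserstein-1 distance between two 1-D empirical distributions.

    Computed as the integral of |F_a(v) - F_b(v)| dv, where F_a and F_b are
    the empirical CDFs; both are constant between consecutive pooled values.
    """
    a = np.sort(np.ravel(a))
    b = np.sort(np.ravel(b))
    pooled = np.sort(np.concatenate([a, b]))
    widths = np.diff(pooled)
    cdf_a = np.searchsorted(a, pooled[:-1], side="right") / a.size
    cdf_b = np.searchsorted(b, pooled[:-1], side="right") / b.size
    return float(np.sum(np.abs(cdf_a - cdf_b) * widths))


def sim_to_real_gap(resid_sim, resid_real):
    """Hypothetical discrepancy score: 0 when real residuals look like simulated ones."""
    return wasserstein_1d(resid_sim, resid_real)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    resid_sim = 0.005 * rng.standard_normal(1000)  # toy residuals on simulated curves
    resid_real = resid_sim + 0.003                  # toy real residuals with a 3 mV bias
    print(sim_to_real_gap(resid_sim, resid_real))  # ≈ 0.003
```

A value near zero suggests the real residuals are distributed like those on simulated data, while a value comparable to the residual scale itself points to systematic model mismatch. A two-sample test statistic would be an equally reasonable choice.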

Circularity Check

No circularity: NPE trained on independent simulations, validated externally


The paper applies standard neural posterior estimation (NPE) to infer Li-ion battery parameters from voltage curves. Training data are generated from the physics-based model via independent forward simulations; the resulting amortized posterior is then applied to experimental data. Parameter accuracy is checked against separate loss-of-lithium-inventory and loss-of-active-material measurements, not against quantities derived from the same fitted voltage segments. No self-definitional equations, fitted-inputs-renamed-as-predictions, or load-bearing self-citations appear in the derivation chain. The central claim therefore remains independent of its own outputs.

Axiom & Free-Parameter Ledger

free parameters (1)

- NPE network architecture and training hyperparameters

axioms (1)

- Domain assumption: the physics-based electrochemical model captures real battery behavior well enough to generate the training data.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (link status: unclear). Linked passage: "NPE shifts the computational burden... reducing the parameter estimation time from minutes to milliseconds... q_ϕ(θ|x) = N(μ(x), σ(x)I)"

- IndisputableMonolith/Foundation/RealityFromDistinction.lean: reality_from_one_distinction (link status: unclear). Linked passage: "We show with synthetic data that NPE is as accurate as Bayesian calibration... validated against LLI and LAM_PE"

