Workbench links weather embeddings to physical data for event retrieval

Researchers characterize known phenomena in labeled sets, then search large unlabeled archives for matching storms and other weather events.

Data Analysis, Statistics and Probability

Methods, software and hardware for physics data analysis: data processing and storage; measurement methodology; statistical and mathematical aspects such as parametrization and uncertainties.

FitED is a new software package offering interactive and automated nonlinear fitting of conventional peak shapes and custom analytical…

Emergent Self-Attention from Astrocyte-Gated Associative Memory Dynamics

Entropy-regularized replicator dynamics on gains lead to softmax routing at fixed points and better retrieval under interference.

By maximizing predictive mutual information between past and future latent windows, it yields coordinates aligned with angle and angular vel

Bayesian approach for uncertainty quantification of hybrid spectral unmixing in γ-ray spectrometry

Laplace approximation loses accuracy when spectral deformation constraints activate or background dominates, but MCMC does not.

Application of a Mixture of Experts-based Foundation Model to the GlueX DIRC Detector

A shared transformer backbone with expert routing performs simulation, identification and hit filtering of Cherenkov photons without task-by

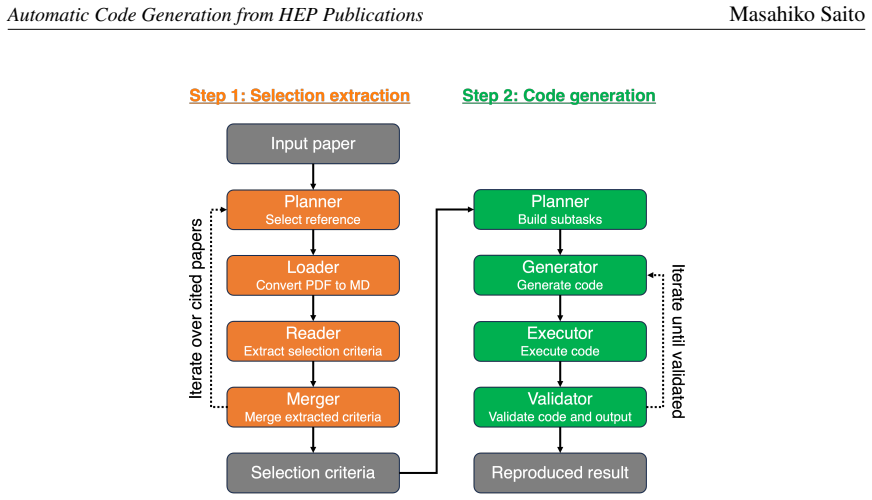

Development of an LLM-Based System for Automatic Code Generation from HEP Publications

A two-stage LLM system extracts structured analysis selections from HEP papers and references, then generates and validates executable code…

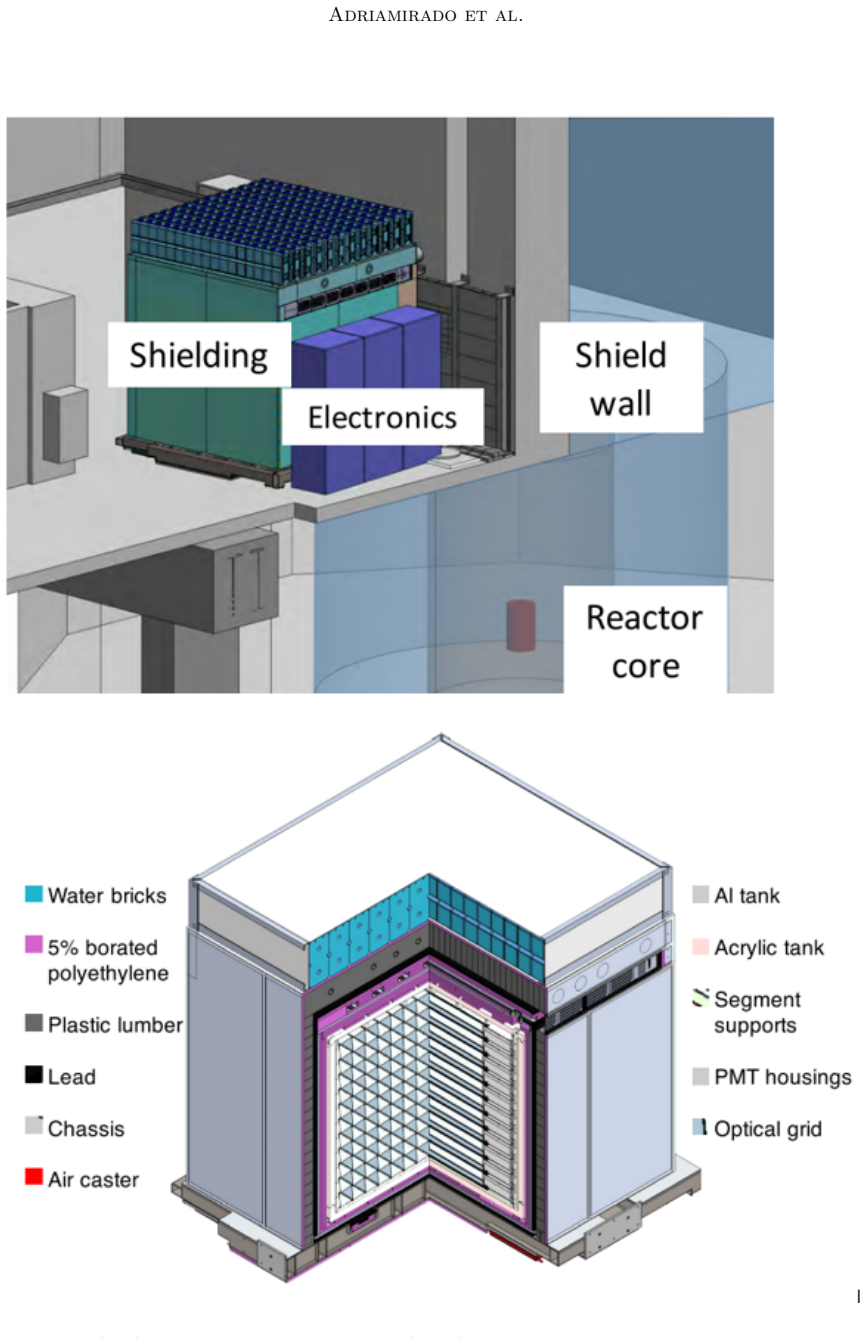

New Deep Learning Data Analysis Method for PROSPECT using GAPE: Genetic Algorithm Powered Evolution

Genetic evolution selects deep learning models that outperform traditional analysis on identical input data for cleaner reactor antineutrino

Fast and accurate noise removal by curve fitting using orthogonal polynomials

Recursive algorithms cut memory use and raise numerical precision by orders of magnitude for repeated local polynomial fits.

Matches Bayesian accuracy on experimental voltage data and enables real-time diagnostics for Li-ion cells

Asymptotic formulae for likelihood-based tests of new physics

They supply closed-form behaviour for likelihood statistics with systematics and use an Asimov dataset to find median experimental reach.

The information bottleneck method

Compressing X while maximizing information about Y yields self-consistent equations solvable by re-estimation.