SAIL: Scene-aware Adaptive Iterative Learning for Long-Tail Trajectory Prediction in Autonomous Vehicles

Pith reviewed 2026-05-10 19:26 UTC · model grok-4.3

The pith

SAIL improves long-tail trajectory prediction for autonomous vehicles by defining rare events along three dimensions (prediction error, collision risk, and state complexity) and then applying adaptive contrastive learning.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

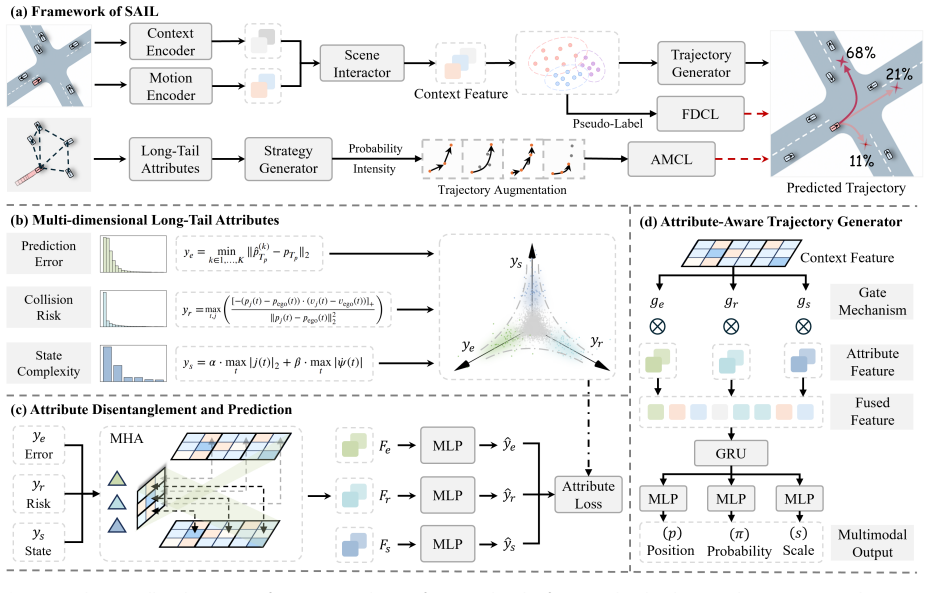

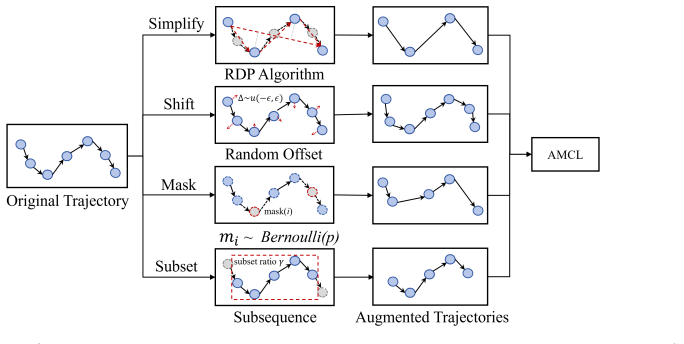

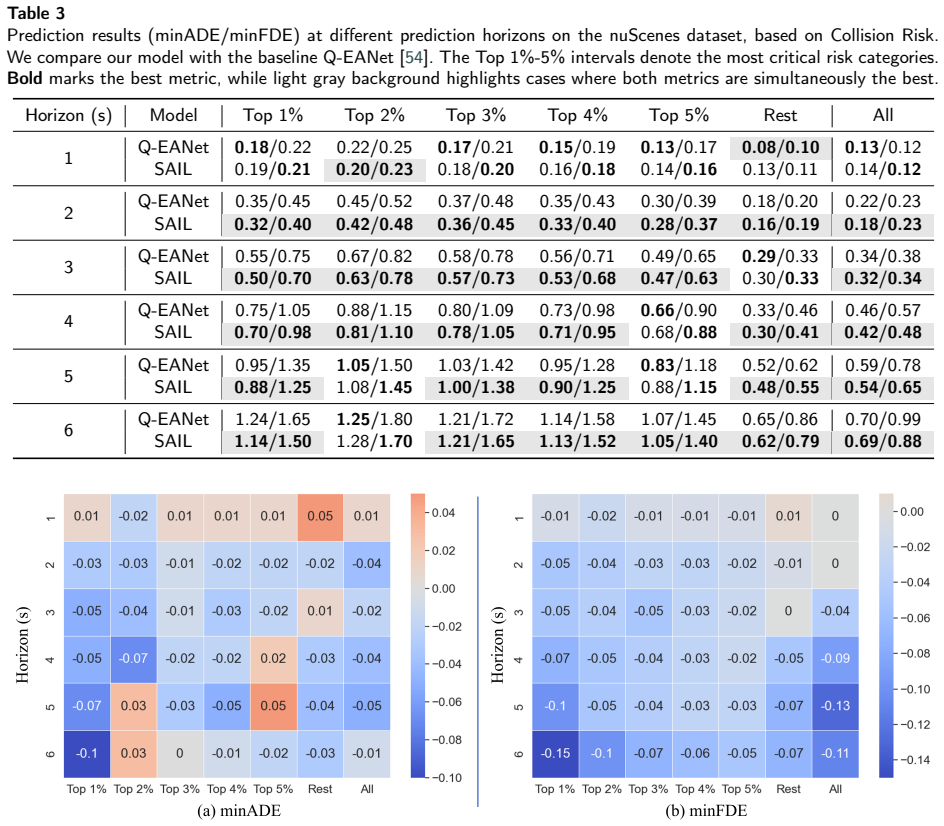

SAIL defines long-tail trajectories through three attribute dimensions of prediction error, collision risk, and state complexity, then integrates attribute-guided augmentation with an adaptive contrastive learning strategy that employs a continuous cosine momentum schedule, similarity-weighted hard-negative mining, dynamic pseudo-labeling based on evolving feature clustering, and a hard-positive focusing mechanism to improve learning on rare and challenging events.
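To make the "continuous cosine momentum schedule" concrete, here is a minimal sketch of one common form of such a schedule, paired with the momentum (exponential moving average) update used in momentum-contrast style training. The function names, the start and end values, and the exact cosine shape are assumptions for illustration; this summary does not give the paper's own formula.

```python
import math


def cosine_momentum(step, total_steps, m_start=0.99, m_end=0.999):
    """Momentum coefficient that rises smoothly from m_start to m_end.

    Assumed form (not taken from the paper): a half-cosine ramp over training.
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    # Clamp progress to [0, 1] so calls past the last step stay at m_end.
    progress = min(max(step / total_steps, 0.0), 1.0)
    return m_end - (m_end - m_start) * (math.cos(math.pi * progress) + 1.0) / 2.0


def ema_update(key_params, query_params, momentum):
    """Momentum update of key-encoder weights (plain lists of floats here)."""
    return [momentum * k + (1.0 - momentum) * q
            for k, q in zip(key_params, query_params)]


if __name__ == "__main__":
    for step in (0, 25, 50, 75, 100):
        print(step, round(cosine_momentum(step, 100), 5))
```

With a schedule of this shape, the key encoder tracks the query encoder closely early in training and changes more slowly later on, which is one plausible reading of "continuous" as opposed to a fixed or stepwise momentum.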

What carries the argument

The SAIL framework's attribute-guided augmentation combined with adaptive contrastive learning using cosine momentum scheduling, similarity-weighted mining, dynamic pseudo-labeling, and hard-positive focusing to target long-tail samples.
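One way to picture similarity-weighted hard-negative mining is an InfoNCE-style loss in which negatives that look more like the anchor receive larger weights. The sketch below is an assumption-laden illustration, not the paper's loss: the weighting rule (`exp(beta * similarity)`, normalized to mean 1), the parameter names `temperature` and `beta`, and the function name are all hypothetical.

```python
import math


def weighted_info_nce(pos_sim, neg_sims, temperature=0.1, beta=1.0):
    """InfoNCE loss for one anchor with similarity-weighted negatives.

    pos_sim:  similarity between the anchor and its positive.
    neg_sims: similarities between the anchor and each negative.
    beta:     assumed sharpness of the weighting; beta=0 gives plain InfoNCE.
    """
    if not neg_sims:
        raise ValueError("at least one negative is required")
    # Harder negatives (higher similarity) get larger raw weights.
    raw = [math.exp(beta * s) for s in neg_sims]
    mean_raw = sum(raw) / len(raw)
    # Normalize so the average weight is 1, keeping the loss scale comparable.
    weights = [r / mean_raw for r in raw]
    pos_term = math.exp(pos_sim / temperature)
    neg_term = sum(w * math.exp(s / temperature)
                   for w, s in zip(weights, neg_sims))
    return -math.log(pos_term / (pos_term + neg_term))


if __name__ == "__main__":
    sims = [0.5, 0.1, -0.2]
    print("uniform :", weighted_info_nce(0.8, sims, beta=0.0))
    print("weighted:", weighted_info_nce(0.8, sims, beta=5.0))
```

Because the weights grow with similarity and average to 1, the weighted loss is never smaller than the uniform one for the same inputs, so training pressure shifts toward the negatives that are hardest to separate.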

If this is right

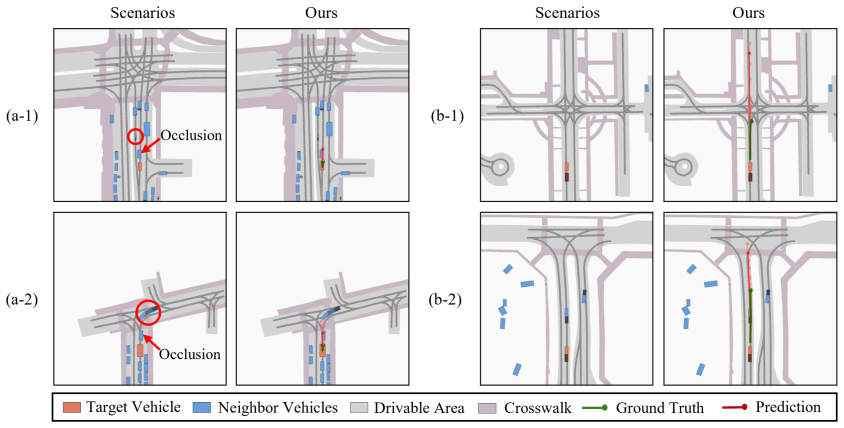

- Prediction error drops by up to 28.8 percent on the hardest long-tail samples while overall accuracy stays competitive.

- The method directly counters data imbalance and the tendency of standard training to ignore infrequent maneuvers.

- Reliable performance extends to diverse real-world mixed-autonomy traffic settings.

- The framework systematically identifies and forecasts a wider range of safety-critical events than prior approaches.

Where Pith is reading between the lines

- Similar attribute definitions could be applied to related tasks such as pedestrian trajectory forecasting to test transfer.

- The iterative clustering and pseudo-labeling steps might support continual online adaptation once a vehicle is deployed.

- Pairing the focused contrastive training with explicit uncertainty outputs could produce more conservative and therefore safer planning decisions.
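The iterative clustering and pseudo-labeling step mentioned in the list above can be sketched as a repeated nearest-centroid assignment over the current feature vectors. This is a generic k-means-style refresh written for illustration; the function names, the squared Euclidean distance, and the choice to leave empty clusters unchanged are assumptions, and the paper's own procedure may differ.

```python
def assign_pseudo_labels(features, centroids):
    """Label each feature vector with the index of its nearest centroid."""
    labels = []
    for f in features:
        dists = [sum((a - b) ** 2 for a, b in zip(f, c)) for c in centroids]
        labels.append(dists.index(min(dists)))
    return labels


def update_centroids(features, labels, centroids):
    """Recompute each centroid as the mean of its members.

    Clusters with no members keep their previous centroid (an assumption).
    """
    updated = []
    for k, old in enumerate(centroids):
        members = [f for f, lab in zip(features, labels) if lab == k]
        if not members:
            updated.append(list(old))
            continue
        updated.append([sum(m[d] for m in members) / len(members)
                        for d in range(len(old))])
    return updated


def refresh_pseudo_labels(features, centroids, iterations=5):
    """Alternate assignment and centroid updates, as features evolve."""
    labels = assign_pseudo_labels(features, centroids)
    for _ in range(iterations):
        centroids = update_centroids(features, labels, centroids)
        labels = assign_pseudo_labels(features, centroids)
    return labels, centroids


if __name__ == "__main__":
    feats = [[0.0, 0.0], [0.2, 0.0], [5.0, 5.0], [5.2, 5.0]]
    print(refresh_pseudo_labels(feats, [[1.0, 1.0], [4.0, 4.0]]))
```

In an online setting, `refresh_pseudo_labels` would be called again whenever the encoder has changed enough that the old cluster assignments are stale, starting from the previous centroids.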

Load-bearing premise

The three attribute dimensions adequately capture long-tail trajectories and the listed contrastive mechanisms improve learning on rare events without introducing bias or overfitting to augmented data.

What would settle it

An experiment on the same test splits that shows no reduction in error for the hardest 1 percent of samples, or that shows a clear drop in accuracy on common scenarios, would falsify the central effectiveness claim.
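The falsification test described above amounts to two numbers computed on a fixed split: the relative error reduction on the hardest fraction of samples and the same quantity over all samples. The sketch below shows that arithmetic on synthetic values; the function names, the dictionary keys, and the `difficulty` score are hypothetical, and the numbers in the usage example are made up rather than taken from the paper.

```python
def relative_reduction(baseline_error, model_error):
    """Percentage reduction of model_error relative to baseline_error."""
    if baseline_error <= 0:
        raise ValueError("baseline_error must be positive")
    return 100.0 * (baseline_error - model_error) / baseline_error


def mean(values):
    return sum(values) / len(values)


def evaluate_tail(difficulty, baseline_errors, model_errors, tail_fraction=0.01):
    """Compare two models on the hardest samples and on the full set.

    difficulty: per-sample score fixed before training (higher = harder).
    """
    n = len(difficulty)
    k = max(1, round(n * tail_fraction))
    # Indices of the k hardest samples under the fixed difficulty score.
    tail = sorted(range(n), key=lambda i: difficulty[i], reverse=True)[:k]
    return {
        "tail_size": k,
        "tail_reduction_pct": relative_reduction(
            mean([baseline_errors[i] for i in tail]),
            mean([model_errors[i] for i in tail])),
        "overall_reduction_pct": relative_reduction(
            mean(baseline_errors), mean(model_errors)),
    }


if __name__ == "__main__":
    # Synthetic data: 100 samples, only the hardest one differs between models.
    difficulty = [float(i) for i in range(100)]
    baseline = [1.0] * 99 + [10.0]
    model = [1.0] * 99 + [8.0]
    print(evaluate_tail(difficulty, baseline, model))
```

A tail reduction near zero, or a negative overall reduction, would correspond to the two failure outcomes named in the paragraph above.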

Original abstract

Autonomous vehicles (AVs) rely on accurate trajectory prediction for safe navigation in diverse traffic environments, yet existing models struggle with long-tail scenarios: rare but safety-critical events characterized by abrupt maneuvers, high collision risks, and complex interactions. These challenges stem from data imbalance, inadequate definitions of long-tail trajectories, and suboptimal learning strategies that prioritize common behaviors over infrequent ones. To address this, we propose SAIL, a novel framework that systematically tackles the long-tail problem by first defining and modeling trajectories across three key attribute dimensions: prediction error, collision risk, and state complexity. Our approach then synergizes an attribute-guided augmentation and feature extraction process with a highly adaptive contrastive learning strategy. This strategy employs a continuous cosine momentum schedule, similarity-weighted hard-negative mining, and a dynamic pseudo-labeling mechanism based on evolving feature clustering. Furthermore, it incorporates a focusing mechanism to intensify learning on hard-positive samples within each identified class. This comprehensive design enables SAIL to excel at identifying and forecasting diverse and challenging long-tail events. Extensive evaluations on the nuScenes and ETH/UCY datasets demonstrate SAIL's superior performance, achieving up to 28.8% reduction in prediction error on the hardest 1% of long-tail samples compared to state-of-the-art baselines, while maintaining competitive accuracy across all scenarios. This framework advances reliable AV trajectory prediction in real-world, mixed-autonomy settings.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces SAIL, a scene-aware adaptive iterative learning framework for long-tail trajectory prediction in autonomous vehicles. It first defines long-tail trajectories across three attribute dimensions (prediction error, collision risk, and state complexity), then combines attribute-guided augmentation and feature extraction with an adaptive contrastive learning strategy that uses a continuous cosine momentum schedule, similarity-weighted hard-negative mining, dynamic pseudo-labeling based on evolving feature clustering, and a hard-positive focusing mechanism. Evaluations on the nuScenes and ETH/UCY datasets claim up to 28.8% reduction in prediction error on the hardest 1% of long-tail samples relative to state-of-the-art baselines while maintaining competitive accuracy across all scenarios.

Significance. If the central performance claims hold under a fixed, model-independent definition of the long-tail subset, the work could meaningfully advance safety-critical AV prediction by providing a structured way to handle rare events through multi-attribute modeling and adaptive contrastive mechanisms. The explicit handling of data imbalance via augmentation and iterative learning, together with evaluations on standard benchmarks, represents a practical contribution to the field. The design choices (cosine momentum, dynamic pseudo-labeling, hard-positive focusing) are clearly motivated and could generalize to other imbalanced prediction tasks.

major comments (1)

- [Abstract] Abstract: The headline result of up to 28.8% reduction in prediction error on the hardest 1% of long-tail samples rests on the three-attribute definition of long-tail trajectories, which explicitly includes prediction error. The manuscript does not state whether this prediction-error attribute (used to select the 1% cutoff) is computed with a held-out baseline (e.g., constant-velocity predictor), a fixed external model, or the proposed SAIL model itself. If the latter or any correlated model is used, the test subset becomes model-dependent, so the reported gain may reflect differences in error profiles rather than improvement on a pre-defined long-tail regime. This is load-bearing for the central claim and requires explicit clarification plus, if needed, re-computation of the 1% subset with an independent baseline.

minor comments (1)

- [Abstract] Abstract: The specific state-of-the-art baselines, ablation studies, and whether error bars or statistical significance tests are reported are not mentioned; these details should be summarized to allow immediate assessment of the strength of the 28.8% figure.

Simulated Author's Rebuttal

We thank the referee for the careful review and for identifying this critical point about the independence of the long-tail subset definition. We address the comment below and will revise the manuscript to make the evaluation protocol fully transparent.

Point-by-point responses

Referee: [Abstract] Abstract: The headline result of up to 28.8% reduction in prediction error on the hardest 1% of long-tail samples rests on the three-attribute definition of long-tail trajectories, which explicitly includes prediction error. The manuscript does not state whether this prediction-error attribute (used to select the 1% cutoff) is computed with a held-out baseline (e.g., constant-velocity predictor), a fixed external model, or the proposed SAIL model itself. If the latter or any correlated model is used, the test subset becomes model-dependent, so the reported gain may reflect differences in error profiles rather than improvement on a pre-defined long-tail regime. This is load-bearing for the central claim and requires explicit clarification plus, if needed, re-computation of the 1% subset with an independent baseline.

Authors: We agree that the manuscript should have stated this explicitly and thank the referee for catching the omission. The three attributes used to identify long-tail trajectories (prediction error, collision risk, and state complexity) were computed from fixed, model-independent baselines before any training of SAIL: prediction error was obtained from a constant-velocity predictor evaluated on a held-out validation split, collision risk from standard kinematic models, and state complexity from trajectory statistics and interaction counts. Consequently, the hardest 1% subset is a pre-defined, model-independent regime. We will add this clarification to the abstract, Section 3 (Long-Tail Trajectory Definition), and the experimental setup. Because the subset was already constructed independently of SAIL, no re-computation is required. This revision will eliminate any ambiguity and strengthen the central claim.

Revision: yes
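As an illustration of the model-independent selection described in the rebuttal, the sketch below ranks samples by the average displacement error (ADE) of a constant-velocity forecast and keeps the hardest fraction. It is a toy reconstruction under stated assumptions: the data layout (lists of `(x, y)` tuples with uniform time steps), the function names, and the use of ADE alone as the ranking score are hypothetical and are not taken from the manuscript.

```python
import math


def constant_velocity_forecast(history, horizon):
    """Extrapolate the last observed displacement for `horizon` future steps."""
    if len(history) < 2:
        raise ValueError("need at least two observed positions")
    (x0, y0), (x1, y1) = history[-2], history[-1]
    vx, vy = x1 - x0, y1 - y0
    return [(x1 + vx * t, y1 + vy * t) for t in range(1, horizon + 1)]


def average_displacement_error(predicted, truth):
    """Mean Euclidean distance between predicted and true positions."""
    return sum(math.dist(p, g) for p, g in zip(predicted, truth)) / len(truth)


def hardest_indices(samples, fraction=0.01):
    """Indices of the samples a constant-velocity baseline predicts worst.

    samples: list of (history, future) pairs of (x, y) position lists.
    """
    scores = [
        average_displacement_error(
            constant_velocity_forecast(history, len(future)), future)
        for history, future in samples
    ]
    k = max(1, round(len(samples) * fraction))
    return sorted(range(len(samples)), key=lambda i: scores[i], reverse=True)[:k]


if __name__ == "__main__":
    straight = ([(0.0, 0.0), (1.0, 0.0)], [(2.0, 0.0), (3.0, 0.0)])
    sharp_turn = ([(0.0, 0.0), (1.0, 0.0)], [(1.0, 1.0), (1.0, 2.0)])
    print(hardest_indices([straight, sharp_turn], fraction=0.5))
```

Since the ranking depends only on the baseline and the ground truth, the selected subset stays the same regardless of which learned model is evaluated on it afterwards, which is the property the referee asked about.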

Circularity Check

No significant circularity: high-level empirical framework with no derivational reduction


The paper presents SAIL as a descriptive framework combining attribute-guided augmentation (prediction error, collision risk, state complexity) with adaptive contrastive learning mechanisms, evaluated empirically on nuScenes and ETH/UCY. No equations, closed-form derivations, or parameter-fitting steps appear that could reduce a claimed prediction or result to its inputs by construction. The 28.8% error reduction is reported as an observed outcome on dataset subsets, not a mathematical output derived from the model's own fitted quantities. While the long-tail definition incorporates prediction error as one attribute, this is a fixed modeling choice for sample selection rather than a self-referential loop in any derivation chain; the central claims remain independent empirical comparisons against baselines. This matches the absence of any load-bearing self-citation, ansatz smuggling, or uniqueness theorem in the text.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: Long-tail trajectories can be effectively modeled and augmented using the three attributes of prediction error, collision risk, and state complexity.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel (relation: unclear). Matched passage: "defining and modeling trajectories across three key attribute dimensions: prediction error, collision risk, and state complexity... adaptive contrastive learning strategy... continuous cosine momentum schedule, similarity-weighted hard-negative mining, and a dynamic pseudo-labeling mechanism"

- IndisputableMonolith/Foundation/RealityFromDistinction.lean, theorem reality_from_one_distinction (relation: unclear). Matched passage: "Extensive evaluations on the nuScenes and ETH/UCY datasets demonstrate SAIL's superior performance, achieving up to 28.8% reduction in prediction error on the hardest 1% of long-tail samples"

