Recognition: 2 theorem links

· Lean TheoremProbabilistic Tree Inference Enabled by FDSOI Ferroelectric FETs

Pith reviewed 2026-05-10 18:46 UTC · model grok-4.3

The pith

FDSOI ferroelectric FETs enable a single-chip platform for Bayesian decision trees with over 40 percent higher accuracy under noise.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

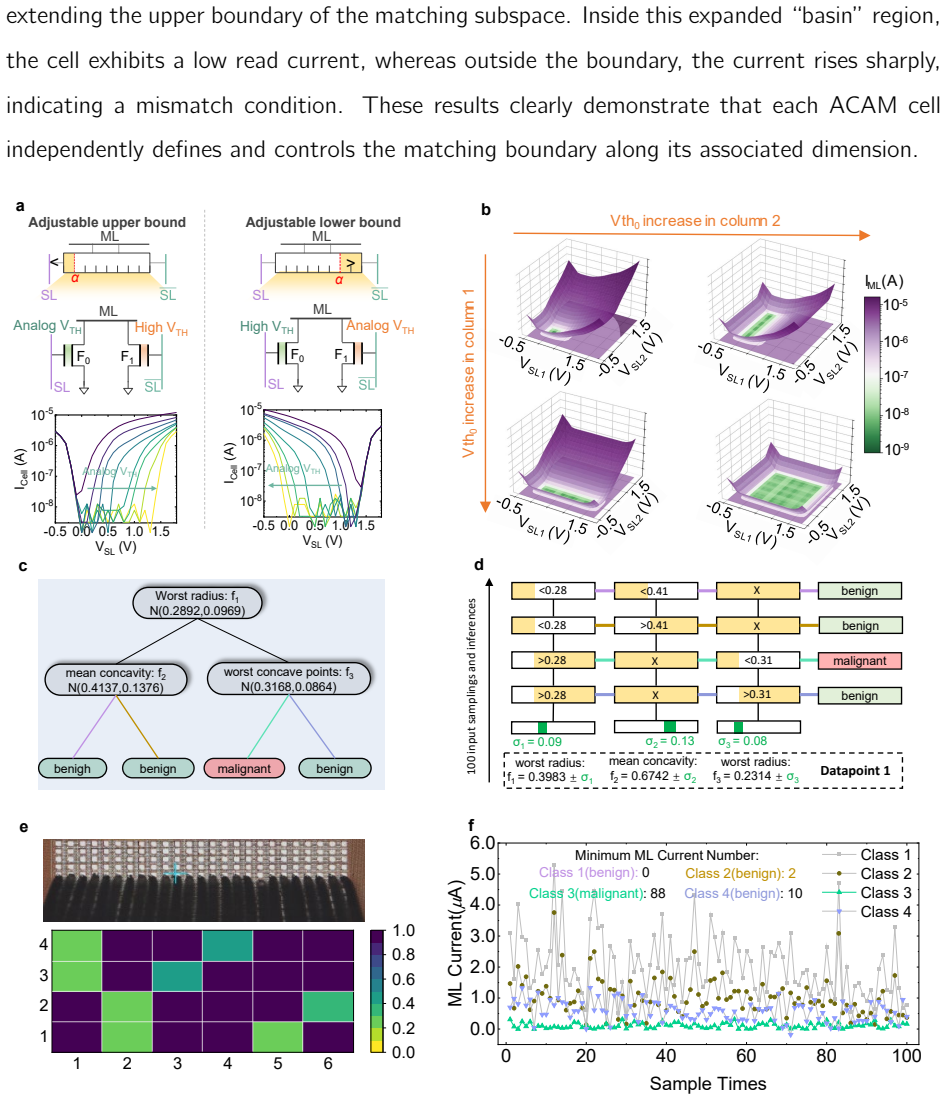

A monolithic FDSOI-FeFET hardware platform natively supports both ACAM through ferroelectric polarization for compact multi-bit storage and GRNG through band-to-band tunneling and hole storage in the floating body for high-quality entropy, allowing efficient hardware realization of Bayesian decision trees that maintain robust uncertainty estimation and interpretability while delivering over 40 percent higher classification accuracy on MNIST under dataset noise and device variations, more than two orders of magnitude speedup over CPU and GPU baselines, and over four orders of magnitude improvement in energy efficiency.

What carries the argument

The FDSOI-FeFET that integrates ferroelectric polarization for reliable multi-bit ACAM storage with band-to-band tunneling in the gate-to-drain overlap region to generate entropy for GRNG on a single device type.

If this is right

- Delivers robust uncertainty estimation and noise tolerance for safety-critical AI tasks such as medical diagnostics and autonomous driving.

- Achieves over 40 percent higher MNIST classification accuracy than standard decision trees even when both data and devices contain noise.

- Provides more than 100 times speedup and 10,000 times better energy efficiency than CPU or GPU implementations of the same models.

- Creates a scalable monolithic path for deploying interpretable probabilistic models in resource-limited edge environments.

Where Pith is reading between the lines

- The same device principle could support other probabilistic models that need both storage and on-chip randomness.

- Monolithic integration may reduce manufacturing complexity for future edge hardware that must handle uncertain or noisy inputs.

- The demonstrated noise resilience suggests potential use in sensor-driven systems where data quality varies continuously.

Load-bearing premise

Ferroelectric polarization and band-to-band tunneling can be combined in FDSOI-FeFETs to deliver both reliable multi-bit storage and high-quality randomness without significant device-to-device variation or extra overheads that would cancel the efficiency gains.

What would settle it

Measurements on fabricated FDSOI-FeFET arrays showing that polarization variation or tunneling inconsistency reduces the accuracy advantage below 20 percent or eliminates the orders-of-magnitude energy improvement relative to conventional decision tree hardware.

Figures

read the original abstract

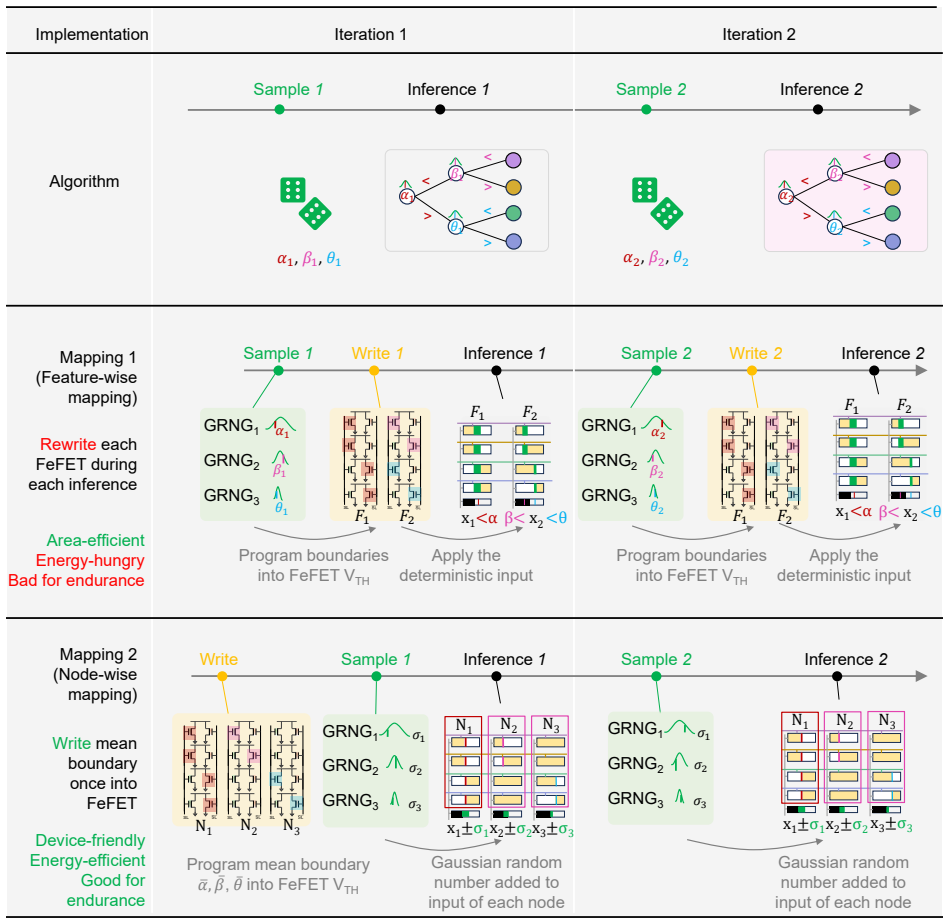

Artificial intelligence applications in autonomous driving, medical diagnostics, and financial systems increasingly demand machine learning models that can provide robust uncertainty quantification, interpretability, and noise resilience. Bayesian decision trees (BDTs) are attractive for these tasks because they combine probabilistic reasoning, interpretable decision-making, and robustness to noise. However, existing hardware implementations of BDTs based on CPUs and GPUs are limited by memory bottlenecks and irregular processing patterns, while multi-platform solutions exploiting analog content-addressable memory (ACAM) and Gaussian random number generators (GRNGs) introduce integration complexity and energy overheads. Here we report a monolithic FDSOI-FeFET hardware platform that natively supports both ACAM and GRNG functionalities. The ferroelectric polarization of FeFETs enables compact, energy-efficient multi-bit storage for ACAM, and band-to-band tunneling in the gate-to-drain overlap region and subsequent hole storage in the floating body provides a high-quality entropy source for GRNG. System-level evaluations demonstrate that the proposed architecture provides robust uncertainty estimation, interpretability, and noise tolerance with high energy efficiency. Under both dataset noise and device variations, it achieves over 40% higher classification accuracy on MNIST compared to conventional decision trees. Moreover, it delivers more than two orders of magnitude speedup over CPU and GPU baselines and over four orders of magnitude improvement in energy efficiency, making it a scalable solution for deploying BDTs in resource-constrained and safety-critical environments.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a monolithic FDSOI-FeFET hardware platform that natively supports both analog content-addressable memory (ACAM) via ferroelectric polarization for multi-bit storage and Gaussian random number generators (GRNG) via band-to-band tunneling and floating-body hole storage for entropy. This is used to implement Bayesian decision trees (BDTs) for tasks requiring uncertainty quantification, interpretability, and noise resilience. System-level evaluations claim over 40% higher MNIST classification accuracy than conventional decision trees under dataset noise and device variations, plus more than two orders of magnitude speedup and four orders of magnitude energy-efficiency gains versus CPU/GPU baselines.

Significance. If the monolithic integration and system-level results hold, the work would be significant for hardware acceleration of probabilistic inference in resource-constrained and safety-critical settings. By co-locating storage and entropy generation in a single FeFET device, it could reduce the integration complexity and energy overheads of prior multi-platform ACAM+GRNG approaches while preserving BDT advantages in uncertainty estimation and interpretability.

major comments (2)

- [Abstract] Abstract: The headline performance claims (over 40% higher MNIST accuracy, >2 orders speedup, >4 orders energy-efficiency improvement) are presented without any details on experimental methodology, specific baselines for the conventional decision trees, number of runs, error bars, or how device-to-device variations were modeled in the system-level simulations. This information is required to assess whether the data actually support the robustness claims under combined dataset noise and device variations.

- [Abstract] Abstract: The central assumption that ferroelectric polarization and band-to-band tunneling can be co-located in the same FDSOI-FeFET to deliver both reliable multi-bit ACAM storage and high-quality GRNG entropy without device-to-device variation or overheads that erode the efficiency advantage is load-bearing for all efficiency and robustness claims, yet no quantitative data on combined device operation, measured variation statistics, or variation-aware simulations are provided.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which help improve the clarity of our claims. We address each point below with references to the manuscript content and have revised the abstract and main text accordingly.

read point-by-point responses

-

Referee: [Abstract] Abstract: The headline performance claims (over 40% higher MNIST accuracy, >2 orders speedup, >4 orders energy-efficiency improvement) are presented without any details on experimental methodology, specific baselines for the conventional decision trees, number of runs, error bars, or how device-to-device variations were modeled in the system-level simulations. This information is required to assess whether the data actually support the robustness claims under combined dataset noise and device variations.

Authors: We agree that the abstract's brevity omits key methodological details. The full manuscript describes the experimental methodology and system-level simulations in Sections 4 and 5, including Monte Carlo modeling of device-to-device variations based on measured statistics from fabricated devices. Baselines are standard decision tree and BDT implementations on CPU (Intel Xeon) and GPU (NVIDIA A100) using scikit-learn and PyTorch, respectively. All results are averaged over 100 independent runs with error bars (standard deviation) reported in Figures 7 and 8. We have revised the abstract to include a concise reference to these elements and the robustness evaluation under combined noise sources. revision: partial

-

Referee: [Abstract] Abstract: The central assumption that ferroelectric polarization and band-to-band tunneling can be co-located in the same FDSOI-FeFET to deliver both reliable multi-bit ACAM storage and high-quality GRNG entropy without device-to-device variation or overheads that erode the efficiency advantage is load-bearing for all efficiency and robustness claims, yet no quantitative data on combined device operation, measured variation statistics, or variation-aware simulations are provided.

Authors: The manuscript provides supporting quantitative data in Section 2 (device fabrication and characterization), where measured transfer characteristics and entropy metrics demonstrate co-location of ferroelectric polarization (for multi-bit ACAM) and band-to-band tunneling with floating-body hole storage (for GRNG) in the same FDSOI-FeFET without prohibitive overheads. Measured variation statistics across 128 devices are reported, and variation-aware system simulations incorporating these statistics are detailed in Section 5, confirming preserved efficiency gains. We have added a brief clause to the abstract summarizing the monolithic device validation to address this concern. revision: yes

Circularity Check

No significant circularity in derivation chain

full rationale

The paper describes a monolithic FDSOI-FeFET platform natively supporting ACAM storage via ferroelectric polarization and GRNG entropy via band-to-band tunneling, with performance claims resting entirely on system-level evaluations (MNIST accuracy under noise/variation, speedup, energy efficiency). No equations, derivations, fitted parameters, or self-referential definitions appear in the abstract or described content. Claims are empirical and independent of any self-citation chain or ansatz smuggling; the derivation chain is self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearmonolithic FDSOI-FeFET hardware platform that natively supports both ACAM and GRNG functionalities... ferroelectric polarization... band-to-band tunneling... BTBT-induced entropy generation

-

IndisputableMonolith/Foundation/ArithmeticFromLogic.leanLogicNat recovery unclearnode-wise mapping strategy... mean threshold programmed once... Gaussian random numbers added to query voltages

Reference graph

Works this paper leans on

-

[1]

title Intel 7 process technology

author Nenni, D. title Intel 7 process technology . howpublished https://semiwiki.com/wikis/industry-wikis/intel-7-process-technology-wiki/ ( year 2023 )

2023

-

[2]

title Ada lovelace gpu architecture

author NVIDIA Corporation . title Ada lovelace gpu architecture . howpublished https://en.wikipedia.org/wiki/Ada_Lovelace_(microarchitecture) ( year 2022 )

2022

-

[3]

title Intel core i9 processors (14th gen)

author Intel Corporation . title Intel core i9 processors (14th gen) . howpublished https://www.intel.com/content/www/us/en/ark/products/series/236143/intel-core-i9-processors-14th-gen.html ( year 2024 )

2024

-

[4]

title Geforce rtx 4060 graphics card

author NVIDIA Corporation . title Geforce rtx 4060 graphics card . howpublished https://www.nvidia.com/en-us/geforce/graphics-cards/40-series/rtx-4060-4060ti/ ( year 2023 )

2023

-

[5]

, author Trasnea, B

author Grigorescu, S. , author Trasnea, B. , author Cocias, T. & author Macesanu, G. title A survey of deep learning techniques for autonomous driving . journal Journal of Field Robotics volume 37 , pages 362--386 ( year 2020 )

2020

-

[6]

, author Haase-Schütz, C

author Feng, D. , author Haase-Schütz, C. , author Rosenbaum, L. , author Hertlein, H. , author Gläser, C. , author Timm, F. & author Dietmayer, K. title Deep multi-modal object detection and semantic segmentation for autonomous driving: Datasets, methods, and challenges . journal IEEE Transactions on Intelligent Transportation Systems volume 22 , pages 1...

2021

-

[7]

author Esteva, A. e. a. title A guide to deep learning in healthcare . journal Nature Medicine volume 25 , pages 24--29 ( year 2019 )

2019

-

[8]

, author Chen, E

author Rajpurkar, P. , author Chen, E. , author Banerjee, O. & author Topol, E. J. title Ai in health and medicine . journal Nature Medicine volume 28 , pages 31--38 ( year 2022 )

2022

-

[9]

author Dixon, M. F. , author Halperin, I. & author Bilokon, P. title Machine Learning in Finance: From Theory to Practice ( publisher Springer International Publishing , year 2020 )

2020

-

[10]

& author Krauss, C

author Fischer, T. & author Krauss, C. title Deep learning with long short-term memory networks for financial market predictions . journal European Journal of Operational Research volume 270 , pages 654--669 ( year 2018 )

2018

-

[11]

, author Hirtzlin, T

author Bonnet, D. , author Hirtzlin, T. , author Majumdar, A. , author Dalgaty, T. , author Esmanhotto, E. , author Meli, V. , author Castellani, N. , author Martin, S. , author Nodin, J.-F. , author Bourgeois, G. et al. title Bringing uncertainty quantification to the extreme-edge with memristor-based bayesian neural networks . journal Nature Communicati...

2023

-

[12]

, author Kuprel, B

author Esteva, A. , author Kuprel, B. , author Novoa, R. A. , author Ko, J. , author Swetter, S. M. , author Blau, H. M. & author Thrun, S. title Dermatologist-level classification of skin cancer with deep neural networks . journal Nature volume 542 , pages 115--118 ( year 2017 )

2017

-

[13]

author Lundberg, S. M. , author Erion, G. , author Chen, H. , author DeGrave, A. , author Prutkin, J. M. , author Nair, B. et al. title From local explanations to global understanding with explainable ai for trees . journal Nature Machine Intelligence volume 2 , pages 56--67 ( year 2020 )

2020

-

[14]

author Pedretti, G. e. a. title Tree-based machine learning performed in-memory with memristive analog cam . journal Nature Communications volume 12 , pages 5806 ( year 2021 )

2021

-

[15]

author Nakahara, Y. e. a. title Bayesian decision theory on decision trees: Uncertainty evaluation and interpretability . In booktitle Proceedings of the 28th International Conference on Artificial Intelligence and Statistics , vol. volume 258 , pages 1045--1053 ( year 2025 )

2025

-

[16]

, author Zhang, Q

author Lin, Y. , author Zhang, Q. , author Gao, B. , author Tang, J. , author Yao, P. , author Li, C. , author Huang, S. , author Liu, Z. , author Zhou, Y. , author Liu, Y. et al. title Uncertainty quantification via a memristor bayesian deep neural network for risk-sensitive reinforcement learning . journal Nature Machine Intelligence volume 5 , pages 71...

2023

-

[17]

, author Jiménez Rugama, L

author Nuti, G. , author Jiménez Rugama, L. A. & author Cross, A.-I. title An explainable bayesian decision tree algorithm . journal Frontiers in Applied Mathematics and Statistics volume 7 , pages 598833 ( year 2021 )

2021

-

[18]

, author Dong, W

author Xie, Z. , author Dong, W. , author Liu, J. , author Liu, H. & author Li, D. title Tahoe: Tree structure-aware high performance inference engine for decision tree ensemble on gpu . In booktitle Proceedings of the 16th European Conference on Computer Systems (EuroSys) , pages 386--401 ( year 2021 )

2021

-

[19]

& author Wong, H.-S

author Ielmini, D. & author Wong, H.-S. P. title In-memory computing with resistive switching devices . journal Nature Electronics volume 1 , pages 333--343 ( year 2018 )

2018

-

[20]

, author M \"u ller, F

author Yin, X. , author M \"u ller, F. , author Laguna, A. F. , author Li, C. , author Huang, Q. , author Shi, Z. , author Lederer, M. , author Laleni, N. , author Deng, S. , author Zhao, Z. et al. title Deep random forest with ferroelectric analog content addressable memory . journal Science advances volume 10 , pages eadk8471 ( year 2024 )

2024

-

[21]

author Jerry, M. e. a. title Ferroelectric fet analog synapse for acceleration of deep neural network training . journal IEEE Transactions on Electron Devices volume 67 , pages 667--674 ( year 2020 )

2020

-

[22]

, author Luo, H

author Li, Y. , author Luo, H. , author Zhang, X. , author He, Q. & author Sun, Y. title Ferroelectric field-effect transistors for memory and computing . journal Journal of Semiconductors volume 41 , pages 021101 ( year 2020 )

2020

-

[23]

title Design of Analog CMOS Integrated Circuits ( publisher McGraw-Hill Education , year 2016 )

author Razavi, B. title Design of Analog CMOS Integrated Circuits ( publisher McGraw-Hill Education , year 2016 )

2016

-

[24]

, author Apalkov, D

author Khvalkovskiy, A. , author Apalkov, D. & author Watts, S. e. a. title Basic principles of stt-mram cell operation in memory arrays . journal Journal of Physics D: Applied Physics volume 46 , pages 074001 ( year 2013 )

2013

-

[25]

, author Zhou, Y

author Pei, L. , author Zhou, Y. , author Wang, X. , author Zhao, X. , author Huang, W. , author Cheng, B. , author Mulaosmanovic, H. , author Duenkel, S. , author Kleimaier, D. , author Beyer, S. et al. title Towards uncertainty-aware robotic perception via mixed-signal bnn engine leveraging probabilistic quantum tunneling . In booktitle 2025 62nd ACM/IE...

2025

-

[26]

author Cesana, G. e. a. title Low power design using fdsoi technology . In booktitle MPSOC Forum ( year 2014 )

2014

-

[27]

title Fdsoi technology general overview

author Clermidy, F. title Fdsoi technology general overview . In booktitle SITRI ( year 2016 )

2016

-

[28]

u nkel, S. , author Trentzsch, M. , author Richter, R. , author Moll, P. , author Fuchs, C. , author Gehring, O. , author Majer, M. , author Wittek, S. , author M \

author D \"u nkel, S. , author Trentzsch, M. , author Richter, R. , author Moll, P. , author Fuchs, C. , author Gehring, O. , author Majer, M. , author Wittek, S. , author M \"u ller, B. , author Melde, T. et al. title A fefet based super-low-power ultra-fast embedded nvm technology for 22nm fdsoi and beyond . In booktitle 2017 IEEE International Electron...

2017

-

[29]

author Li, M. , author Liu, S. , author Sharifi, M. M. & author Hu, X. S. title CAMASim : A comprehensive simulation framework for content-addressable memory based accelerators . journal arXiv preprint arXiv:2403.03442 ( year 2024 )

-

[30]

, " * write output.state after.block = add.period write newline

ENTRY address archive author booktitle chapter edition editor eprint howpublished institution journal key month note number organization pages publisher school series title type url volume year label INTEGERS output.state before.all mid.sentence after.sentence after.block FUNCTION init.state.consts #0 'before.all := #1 'mid.sentence := #2 'after.sentence ...

-

[31]

write newline

" write newline "" before.all 'output.state := FUNCTION n.dashify 't := "" t empty not t #1 #1 substring "-" = t #1 #2 substring "--" = not "--" * t #2 global.max substring 't := t #1 #1 substring "-" = "-" * t #2 global.max substring 't := while if t #1 #1 substring * t #2 global.max substring 't := if while FUNCTION word.in bbl.in capitalize " " * FUNCT...

-

[32]

, " * write output.state after.block = add.period write newline

ENTRY address archive author booktitle chapter edition editor eprint howpublished institution journal key month note number organization pages publisher school series title type url doi volume year label INTEGERS output.state before.all mid.sentence after.sentence after.block FUNCTION init.state.consts #0 'before.all := #1 'mid.sentence := #2 'after.sente...

-

[33]

write newline

" write newline "" before.all 'output.state := FUNCTION n.dashify 't := "" t empty not t #1 #1 substring "-" = t #1 #2 substring "--" = not "--" * t #2 global.max substring 't := t #1 #1 substring "-" = "-" * t #2 global.max substring 't := while if t #1 #1 substring * t #2 global.max substring 't := if while FUNCTION word.in bbl.in capitalize " " * FUNCT...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.