Ensemble size effects on conditional reliability estimates: slope attenuation bias and correction methods

Pith reviewed 2026-05-10 18:31 UTC · model grok-4.3

The pith

Finite ensemble sizes systematically attenuate slopes in conditional reliability diagnostics

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

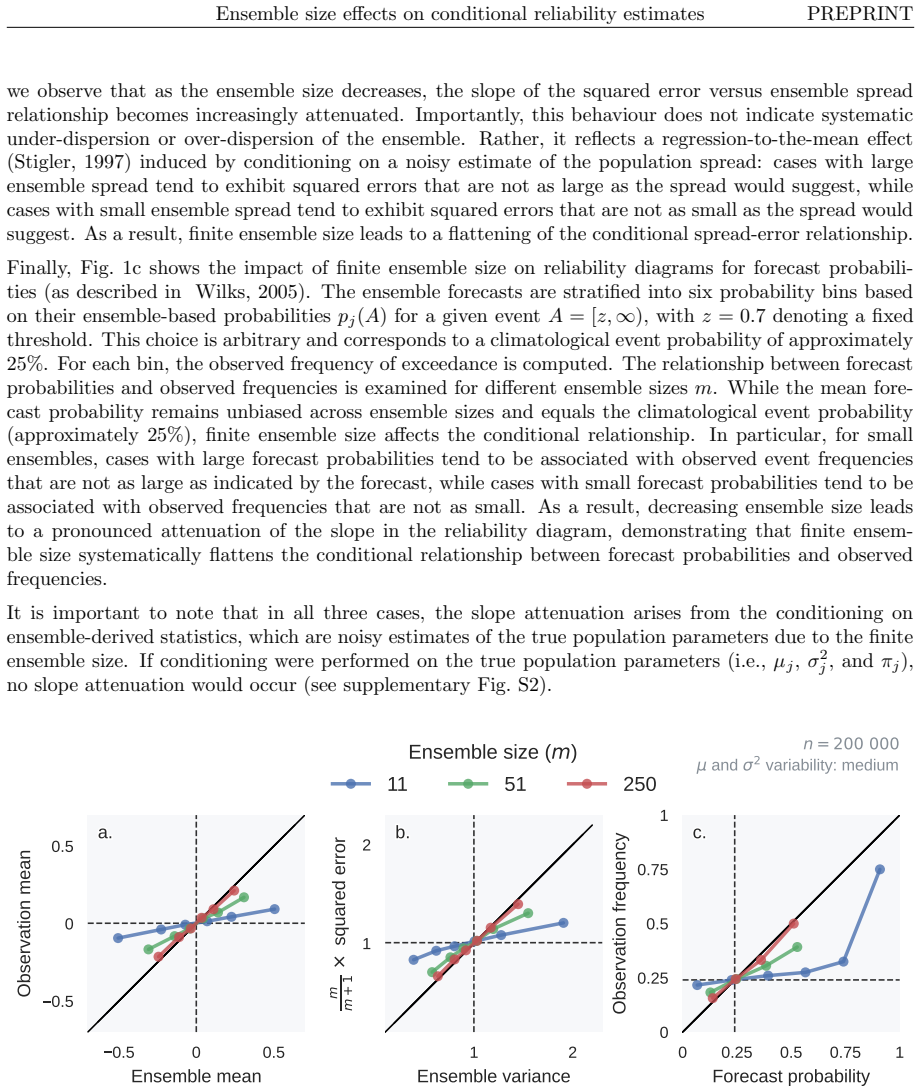

Conditional reliability diagnostics are systematically biased for finite ensemble sizes. We present a unified framework for slope attenuation caused by finite-ensemble sampling noise, which affects conditional diagnostics for ensemble means, spreads, and probabilities. Using synthetic forecasts that are perfectly reliable by construction, we isolate finite-ensemble effects. We derive analytical expressions for the expected attenuation and propose practical estimators computable directly from ensemble data. The framework is illustrated using 2-metre temperature sub-seasonal ensemble forecasts from ECMWF, where finite-ensemble slope attenuation substantially affects the spread-error relationship and tercile-based reliability diagrams.

What carries the argument

Unified framework for slope attenuation caused by finite-ensemble sampling noise, supplying analytical expressions for the bias and practical correction estimators

If this is right

- Attenuated conditional slopes must be corrected for ensemble size before interpreting them as evidence of forecast deficiencies

- The attenuation and its correction apply uniformly to diagnostics based on ensemble means, spreads, and event probabilities

- Practical estimators for the true slopes can be calculated directly from existing ensemble output without extra simulations

- In the ECMWF sub-seasonal temperature case, accounting for the effect substantially changes the apparent spread-error relationship and tercile reliability
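To make the third point concrete, here is a minimal sketch of how an attenuation factor for the ensemble-mean slope might be estimated from ensemble output alone. It uses the classical method-of-moments correction for regression dilution (sampling-noise variance of the ensemble mean taken as the average member variance divided by the ensemble size); this is an assumption for illustration and may differ from the estimators in the paper. The function names `attenuation_from_ensemble` and `ols_slope` and all synthetic parameters are hypothetical.

```python
import numpy as np

def attenuation_from_ensemble(members):
    """Estimate the ensemble-mean attenuation factor from ensemble data alone.

    members: array of shape (n_cases, n_members).
    Assumption: the sampling-noise variance of the ensemble mean is the
    case-averaged member variance divided by the ensemble size.
    """
    n_members = members.shape[1]
    ens_mean = members.mean(axis=1)
    noise_var = members.var(axis=1, ddof=1).mean() / n_members
    return 1.0 - noise_var / ens_mean.var(ddof=1)

def ols_slope(x, y):
    """Ordinary least-squares slope of y regressed on x."""
    return np.cov(x, y)[0, 1] / np.var(x, ddof=1)

# Synthetic forecasts that are perfectly reliable by construction:
# truth and members share the same signal and the same noise variance.
rng = np.random.default_rng(0)
n_cases, n_members = 50_000, 5
signal = rng.normal(0.0, 1.0, n_cases)
truth = signal + rng.normal(0.0, 1.0, n_cases)
members = signal[:, None] + rng.normal(0.0, 1.0, (n_cases, n_members))

raw_slope = ols_slope(members.mean(axis=1), truth)   # attenuated by sampling noise
lam = attenuation_from_ensemble(members)             # estimated attenuation factor
corrected_slope = raw_slope / lam                    # should be close to 1 here
print(raw_slope, lam, corrected_slope)
```

With unit signal and noise variances and five members, the raw slope should land near 1/(1 + 1/5) ≈ 0.83, and the corrected slope near 1, even though the forecasts are reliable by construction.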

Where Pith is reading between the lines

- The same correction approach could be tested on other variables and forecast horizons to see how much it alters reliability conclusions

- Subsampling from larger ensembles would provide a direct empirical check of the analytical bias predictions

- Real forecasts with both sampling noise and model biases might require extensions of the framework to handle their combined effects
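The subsampling check suggested above can be sketched for event probabilities. The example draws a 100-member synthetic ensemble, subsamples it to smaller sizes, and regresses the binary outcome on the forecast probability. The threshold, the ensemble sizes, and the helper name `reliability_slope` are illustrative assumptions, not values from the paper.

```python
import numpy as np

def reliability_slope(prob_forecast, outcome):
    """OLS slope of the binary outcome regressed on the forecast probability."""
    return np.cov(prob_forecast, outcome)[0, 1] / np.var(prob_forecast, ddof=1)

rng = np.random.default_rng(1)
n_cases, n_full, threshold = 40_000, 100, 0.0

# Perfectly reliable synthetic ensemble: truth is exchangeable with the members.
signal = rng.normal(0.0, 1.0, n_cases)
truth = signal + rng.normal(0.0, 1.0, n_cases)
full = signal[:, None] + rng.normal(0.0, 1.0, (n_cases, n_full))
outcome = (truth > threshold).astype(float)

slopes = {}
for m in (5, 20, 100):
    # Subsample m members without replacement from the full ensemble.
    cols = rng.choice(n_full, size=m, replace=False)
    prob = (full[:, cols] > threshold).mean(axis=1)   # fraction of members above threshold
    slopes[m] = reliability_slope(prob, outcome)

for m, s in slopes.items():
    print(m, round(s, 3))
```

In this setup the slope should rise toward 1 as the subsample grows, which is the signature of a sampling artifact rather than a forecast deficiency.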

Load-bearing premise

Synthetic forecasts can be constructed to be perfectly reliable so that only finite-ensemble sampling effects are isolated

What would settle it

Varying ensemble size in synthetic experiments and verifying that measured slope reductions match the analytical attenuation formulas for each size
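A hedged sketch of such an experiment for the spread-error relationship follows. The predicted attenuation used here is the textbook Gaussian sampling result (the variance of a sample variance is 2σ⁴/(M−1)); it is an assumption for this illustration and is not quoted from the paper, whose expressions may differ. The function name `spread_error_slope` and the uniform distribution of true variances are hypothetical.

```python
import numpy as np

def spread_error_slope(n_members, n_cases=200_000, seed=0):
    """Measured and predicted slope of squared error regressed on ensemble variance."""
    rng = np.random.default_rng(seed)
    true_var = rng.uniform(0.5, 1.5, n_cases)   # case-dependent true variance
    sd = np.sqrt(true_var)
    # Signal set to zero without loss of generality; truth and members are exchangeable.
    truth = sd * rng.standard_normal(n_cases)
    members = sd[:, None] * rng.standard_normal((n_cases, n_members))
    ens_var = members.var(axis=1, ddof=1)
    # Squared error of the ensemble mean, rescaled by M/(M+1) so that its
    # expectation equals the true variance for a reliable ensemble.
    sq_err = (truth - members.mean(axis=1)) ** 2 * n_members / (n_members + 1)
    measured = np.cov(ens_var, sq_err)[0, 1] / np.var(ens_var, ddof=1)
    # Assumed Gaussian prediction: Var(true) / (Var(true) + E[2 * true^2 / (M - 1)]).
    noise = 2.0 * np.mean(true_var ** 2) / (n_members - 1)
    predicted = true_var.var() / (true_var.var() + noise)
    return measured, predicted

results = {m: spread_error_slope(m) for m in (5, 10, 20)}
for m, (measured, predicted) in results.items():
    print(m, round(measured, 3), round(predicted, 3))
```

Under these assumptions the predicted slopes are about 0.13, 0.26, and 0.42 for 5, 10, and 20 members, so a perfectly reliable ensemble can show a spread-error slope far below 1.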

Original abstract

The goal of ensemble forecasting is to maximise sharpness subject to reliability. Marginal reliability means that, over all cases, the ensemble is statistically consistent with reality: the ensemble mean is unbiased, the expected ensemble variance equals the expected mean-squared error of the ensemble mean, and the variance of the ensemble members matches the variance of the truth. Equivalently, forecasts that assign probability $p$ to an event verify with relative frequency $p$. However, climatological consistency is not sufficient for users acting on individual forecasts. A natural extension is to assess reliability conditional on the forecast itself, by examining whether, on average, larger ensemble means imply larger observed values, larger spreads imply larger forecast errors, or higher probabilities imply higher event frequencies. This motivates conditional reliability diagnostics such as reliability diagrams and spread-error relationships. Here we show that conditional reliability diagnostics are systematically biased for finite ensemble sizes. We present a unified framework for slope attenuation caused by finite-ensemble sampling noise, which affects conditional diagnostics for ensemble means, spreads, and probabilities. Using synthetic forecasts that are perfectly reliable by construction, we isolate finite-ensemble effects. We derive analytical expressions for the expected attenuation and propose practical estimators computable directly from ensemble data. The framework is illustrated using 2-metre temperature sub-seasonal ensemble forecasts from ECMWF, where finite-ensemble slope attenuation substantially affects the spread-error relationship and tercile-based reliability diagrams. These results demonstrate that attenuated conditional slopes should not be interpreted as evidence of forecast deficiencies unless finite-ensemble effects are explicitly taken into account.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that conditional reliability diagnostics in ensemble forecasting—such as spread-error relationships and reliability diagrams for ensemble means, spreads, and probabilities—are systematically biased by finite ensemble sizes, manifesting as slope attenuation due to sampling noise. It develops a unified analytical framework deriving the expected attenuation from statistical properties of sampling, proposes practical estimators computable from ensemble data alone, validates the framework on synthetic forecasts constructed to be perfectly reliable, and demonstrates substantial effects and corrections on ECMWF sub-seasonal 2 m temperature forecasts for spread-error slopes and tercile reliability diagrams. The central message is that attenuated conditional slopes should not be interpreted as forecast deficiencies without accounting for ensemble-size effects.

Significance. If the derivations and corrections hold, this work is significant for ensemble verification practice: it provides a concrete, analytical basis to distinguish sampling artifacts from genuine conditional unreliability, potentially preventing misdiagnosis of ensemble systems. Strengths include the isolation of finite-ensemble effects via perfectly reliable synthetics, the unified treatment across multiple conditional diagnostics, and the proposal of practical estimators directly from data. These elements offer a falsifiable, parameter-light correction that can be tested on any ensemble dataset.

Major comments (2)

- [§4] §4 (ECMWF application): The attenuation correction derived from synthetics is applied to real 2 m temperature forecasts and shown to alter spread-error slopes and reliability diagrams, but the manuscript does not quantify or model the potential interaction between sampling noise and other real-world biases (e.g., mean bias or flow-dependent errors) whose covariance with ensemble sampling is not characterized. This interaction is load-bearing for the claim that the proposed estimators recover the true conditional slope on operational data.

- [§3] §3 (analytical framework): The expected attenuation expressions assume that the only source of conditional slope reduction is finite-ensemble sampling noise applied to perfectly reliable synthetics; the paper does not derive or bound the additional attenuation (or inflation) that would arise if the underlying forecast already contains conditional biases, leaving the robustness of the correction under realistic conditions untested.

Minor comments (2)

- [Abstract and §3] The abstract and introduction use 'parameter-free' for the analytical expressions, but the practical estimators involve choices (e.g., binning or regression method) whose sensitivity is not reported; clarify whether these are truly free of tunable parameters.

- [Figures in §4] Figure captions for the ECMWF results should explicitly state the ensemble size used and the number of cases, to allow readers to assess the magnitude of the reported attenuation relative to sampling uncertainty.

Simulated Author's Rebuttal

We thank the referee for their careful reading and constructive comments, which have helped us clarify the scope and limitations of our work. We address each major comment below and have revised the manuscript accordingly to strengthen the presentation of the framework's assumptions and its application to real data.

Point-by-point responses

- Referee: [§4] §4 (ECMWF application): The attenuation correction derived from synthetics is applied to real 2 m temperature forecasts and shown to alter spread-error slopes and reliability diagrams, but the manuscript does not quantify or model the potential interaction between sampling noise and other real-world biases (e.g., mean bias or flow-dependent errors) whose covariance with ensemble sampling is not characterized. This interaction is load-bearing for the claim that the proposed estimators recover the true conditional slope on operational data.

  Authors: We agree that the manuscript does not explicitly model or quantify interactions between finite-ensemble sampling noise and other real-world error sources such as mean biases or flow-dependent errors. The derivations and estimators are constructed under the assumption that the underlying forecast is reliable apart from sampling variability. In the revised version we have added a dedicated paragraph in §4 that acknowledges this limitation, clarifies that the correction isolates and removes only the ensemble-size-induced attenuation component, and states that any residual discrepancies after correction may reflect other conditional biases whose covariance with sampling is uncharacterized. This addition prevents over-interpretation of the corrected slopes as fully recovering the 'true' conditional relationship on operational data.

  Revision: partial

- Referee: [§3] §3 (analytical framework): The expected attenuation expressions assume that the only source of conditional slope reduction is finite-ensemble sampling noise applied to perfectly reliable synthetics; the paper does not derive or bound the additional attenuation (or inflation) that would arise if the underlying forecast already contains conditional biases, leaving the robustness of the correction under realistic conditions untested.

  Authors: The analytical expressions are derived specifically for the case of an underlying perfectly reliable forecast subject only to finite-ensemble sampling. A general derivation that bounds additional attenuation or inflation arising from arbitrary conditional biases would require parametrizing the form of those biases and is therefore outside the intended scope of the unified framework. The practical value of the estimators lies in removing the known sampling-induced component so that any remaining attenuation can be more confidently attributed to genuine conditional deficiencies. In the revision we have expanded the discussion at the end of §3 to state this rationale explicitly, added a cautionary note on applicability, and included a short illustrative example using synthetics that incorporate controlled conditional bias to demonstrate the combined effect.

  Revision: yes
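The combined effect described in this response can be illustrated with a small sketch. The conditional bias is introduced here as a hypothetical amplification factor `amp` on the forecast signal, so that the true conditional slope is 1/`amp`; this form of bias is an assumption chosen for simplicity and is not the example from the revised manuscript. The correction again uses the classical method-of-moments estimate of the sampling-noise variance.

```python
import numpy as np

rng = np.random.default_rng(2)
n_cases, n_members = 50_000, 5
amp = 1.5   # hypothetical: forecast signal is over-amplified relative to the truth

signal = rng.normal(0.0, 1.0, n_cases)
truth = signal + rng.normal(0.0, 1.0, n_cases)
members = amp * signal[:, None] + rng.normal(0.0, 1.0, (n_cases, n_members))

ens_mean = members.mean(axis=1)
raw_slope = np.cov(ens_mean, truth)[0, 1] / ens_mean.var(ddof=1)

# Sampling-noise variance of the ensemble mean, estimated from the members alone.
noise_var = members.var(axis=1, ddof=1).mean() / n_members
lam = 1.0 - noise_var / ens_mean.var(ddof=1)

# Removing the sampling component leaves the genuine conditional bias in place.
corrected_slope = raw_slope / lam
print(raw_slope, corrected_slope, 1.0 / amp)
```

In this setup the corrected slope should settle near 1/`amp` rather than 1: the correction removes the ensemble-size component and leaves the remaining deficit attributable to the imposed conditional bias.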

Circularity Check

No significant circularity; derivations are self-contained statistical analysis

Full rationale

The paper derives analytical expressions for expected slope attenuation directly from the statistical properties of sampling noise in finite ensembles. Synthetic forecasts are constructed to be perfectly reliable by design solely to isolate sampling effects, not to fit or define the target attenuation formulas. Practical estimators are then proposed as direct functions of observable ensemble statistics. No load-bearing steps reduce to self-citation chains, fitted parameters renamed as predictions, or ansatzes imported from prior author work. The central framework remains independent of the specific real-forecast application and is grounded in standard sampling theory.

Axiom & Free-Parameter Ledger

Axioms (1)

- Domain assumption: synthetic forecasts can be built to be perfectly reliable, so that only finite-ensemble sampling effects remain.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (unclear)

  Unclear: relation between the paper passage and the cited Recognition theorem.

  "We present a unified framework for slope attenuation caused by finite-ensemble sampling noise... derive analytical expressions for the expected attenuation and propose practical estimators computable directly from ensemble data."

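- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean: absolute_floor_iff_bare_distinguishability (unclear)

  Unclear: relation between the paper passage and the cited Recognition theorem.

  $\operatorname{slope}\left(E[y_j \mid \bar{x}_j],\, \bar{x}_j\right) = \dfrac{\operatorname{Var}_j(\mu_j)}{\operatorname{Var}_j(\mu_j) + \operatorname{Var}_j(\epsilon_{\mu,j})} = 1 - \dfrac{\operatorname{Var}_j(\epsilon_{\mu,j})}{\operatorname{Var}_j(\bar{x}_j)}$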
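One way to read the slope identity quoted above is as the classical regression-dilution result. The sketch below assumes a signal-plus-noise decomposition that is not spelled out in this excerpt: the ensemble mean equals the predictable signal $\mu_j$ plus a sampling error $\epsilon_{\mu,j}$, the truth equals the same signal plus an error $\eta_j$ (a symbol introduced here for illustration), and the two errors are uncorrelated with each other and with $\mu_j$.

```latex
\begin{aligned}
\bar{x}_j &= \mu_j + \epsilon_{\mu,j}, \qquad y_j = \mu_j + \eta_j, \\
\operatorname{Cov}_j(y_j, \bar{x}_j) &= \operatorname{Var}_j(\mu_j), \qquad
\operatorname{Var}_j(\bar{x}_j) = \operatorname{Var}_j(\mu_j) + \operatorname{Var}_j(\epsilon_{\mu,j}), \\
\operatorname{slope} &= \frac{\operatorname{Cov}_j(y_j, \bar{x}_j)}{\operatorname{Var}_j(\bar{x}_j)}
  = \frac{\operatorname{Var}_j(\mu_j)}{\operatorname{Var}_j(\mu_j) + \operatorname{Var}_j(\epsilon_{\mu,j})}
  = 1 - \frac{\operatorname{Var}_j(\epsilon_{\mu,j})}{\operatorname{Var}_j(\bar{x}_j)}.
\end{aligned}
```

As a worked example under these assumptions, take unit signal variance, unit member variance, and $M = 10$ members. Then $\operatorname{Var}_j(\epsilon_{\mu,j}) = 1/10$ and the expected slope is $1/1.1 \approx 0.91$, even for a perfectly reliable ensemble.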

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

