
Calibration of a neural network ocean closure for improved mean state and variability

Pith reviewed 2026-05-10 17:57 UTC · model grok-4.3

The pith

Calibrating a neural network parameterization of mesoscale eddies with Ensemble Kalman Inversion reduces errors in the mean state and variability of coarse-resolution idealized ocean models by approximately a factor of two.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

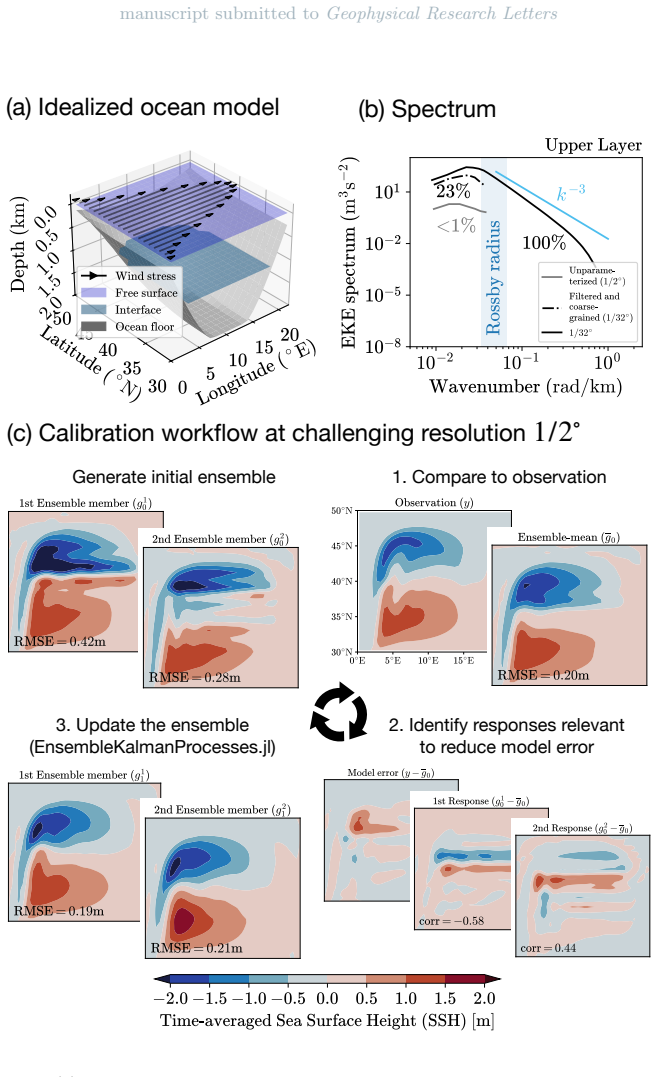

Optimizing the coefficients of a neural network closure for mesoscale eddies via Ensemble Kalman Inversion in coarse-resolution idealized ocean models yields a parameterization that reduces errors in time-averaged fluid interfaces and their variability by approximately a factor of two relative to the unparameterized case or an offline-trained network. The inversion is robust to noise in the target statistics caused by chaotic dynamics, and a calibration protocol is introduced that selects an initial condition to bypass integration to statistical equilibrium.
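To make "errors in time-averaged fluid interfaces and their variability" concrete, the sketch below scores a coarse run against a reference by the RMS error of the time-mean interface height and of its temporal standard deviation. This is an illustrative assumption, not the paper's stated metric: the function names `interface_errors` and `reduction_factor` are hypothetical, and the arrays are assumed to have shape `(time, layer, y, x)` with the reference already coarse-grained to the model grid.

```python
import numpy as np


def interface_errors(model_eta, ref_eta):
    """RMS errors of the time mean and of the temporal standard deviation.

    Hypothetical metric: both arrays are assumed to have shape
    (time, layer, y, x), with the reference coarse-grained to the model grid.
    """
    model_eta = np.asarray(model_eta, dtype=float)
    ref_eta = np.asarray(ref_eta, dtype=float)
    # Error in the time-averaged interface positions.
    mean_err = np.sqrt(np.mean((model_eta.mean(axis=0) - ref_eta.mean(axis=0)) ** 2))
    # Error in the variability, measured by the temporal standard deviation.
    std_err = np.sqrt(np.mean((model_eta.std(axis=0) - ref_eta.std(axis=0)) ** 2))
    return mean_err, std_err


def reduction_factor(baseline_err, calibrated_err):
    """How many times smaller the calibrated error is than the baseline error."""
    return baseline_err / calibrated_err
```

Under this reading, a "factor of two" improvement corresponds to `reduction_factor` returning about 2 when the baseline is the unparameterized or offline-trained run.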

What carries the argument

Ensemble Kalman Inversion used to calibrate the parameters of a neural network that approximates the net forcing from mesoscale eddies.
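The core EKI step can be sketched as below. This is the basic perturbed-observation form of Ensemble Kalman Inversion, written as an assumption for illustration; the paper may use a different variant (its quoted text mentions "ETKI"), and the function name `eki_update` is hypothetical. In the paper's setting, `g` would come from running the coarse model with each member's neural network parameters and computing time-averaged statistics; here a toy linear map stands in for that forward model.

```python
import numpy as np


def eki_update(theta, g, y, gamma, rng):
    """One perturbed-observation Ensemble Kalman Inversion step.

    theta : (J, p) ensemble of parameter vectors
    g     : (J, d) forward-model outputs G(theta_j), e.g. time-averaged statistics
    y     : (d,)   target statistics from the reference simulation
    gamma : (d, d) noise covariance of the targets
    """
    J = theta.shape[0]
    dtheta = theta - theta.mean(axis=0)
    dg = g - g.mean(axis=0)
    c_tg = dtheta.T @ dg / (J - 1)  # (p, d) parameter-output cross-covariance
    c_gg = dg.T @ dg / (J - 1)      # (d, d) output covariance
    # Each member sees an independently perturbed copy of the targets.
    noise = rng.multivariate_normal(np.zeros(y.shape[0]), gamma, size=J)
    innovation = (y + noise) - g    # (J, d)
    # Solve (C_gg + Gamma) X = innovation^T rather than forming an explicit inverse.
    x = np.linalg.solve(c_gg + gamma, innovation.T)  # (d, J)
    return theta + (c_tg @ x).T


if __name__ == "__main__":
    # Toy stand-in for the ocean model: a linear map G(theta) = A @ theta.
    rng = np.random.default_rng(0)
    A = np.array([[1.0, 0.5], [0.0, 2.0]])
    y = np.array([1.0, 2.0])  # the exact solution is A^{-1} y = [0.5, 1.0]
    gamma = 1e-4 * np.eye(2)
    theta = rng.normal(size=(50, 2))
    for _ in range(20):
        theta = eki_update(theta, theta @ A.T, y, gamma, rng)
    print(theta.mean(axis=0))  # should approach A^{-1} y
```

EKI needs only forward-model evaluations, no gradients, which is what makes it usable when each evaluation is a full model integration and the outputs are noisy time averages.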

If this is right

- The calibrated neural network improves both mean state and variability metrics in coarse models.

- Calibration via ensemble inversion tolerates the noise that chaotic ocean dynamics introduce into time-averaged target statistics, without special noise treatment.

- The bypass protocol reduces computational cost by avoiding long equilibrium runs.

- Results indicate a route to better global ocean models through systematic parameter optimization.

- Offline training alone is outperformed by this online calibration approach.

Where Pith is reading between the lines

- Similar calibration could be applied to other subgrid closures in atmosphere or climate models.

- Success in idealized domains suggests testing transferability to full global configurations with realistic forcing.

- Improved eddy representation may affect simulated ocean heat uptake and circulation patterns in climate projections.

- Future work could explore combining this with observational data targets instead of model-derived statistics.

Load-bearing premise

The neural network structure is flexible enough to capture the essential integrated effects of mesoscale eddies, and the statistics chosen from idealized models remain relevant targets when the parameterization is used in more complex global configurations.

What would settle it

Integrate the calibrated neural network parameterization into a global ocean model at coarse resolution and compare the resulting mean interfaces, variability, and other diagnostics against a high-resolution reference simulation or against observational datasets; if the factor-of-two error reduction does not appear, the claim does not hold.


Original abstract

Global ocean models exhibit biases in the mean state and variability, particularly at coarse resolution, where mesoscale eddies are unresolved. To address these biases, parameterization coefficients are typically tuned ad hoc. Here, we formulate parameter tuning as a calibration problem using Ensemble Kalman Inversion (EKI). We optimize parameters of a neural network parameterization of mesoscale eddies in two idealized ocean models at coarse resolution. The calibrated parameterization reduces errors in the time-averaged fluid interfaces and their variability by approximately a factor of two compared to the unparameterized model or the offline-trained parameterization. The EKI method is robust to noise in time-averaged statistics arising from chaotic ocean dynamics. Furthermore, we propose an efficient calibration protocol that bypasses integration to statistical equilibrium by carefully choosing an initial condition. These results demonstrate that systematic calibration can substantially improve coarse-resolution ocean simulations and provide a practical pathway for reducing biases in global ocean models.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper formulates the tuning of a neural network parameterization for mesoscale eddies as a calibration problem solved via Ensemble Kalman Inversion (EKI). In two idealized coarse-resolution ocean models, the calibrated NN reduces errors in time-averaged fluid interfaces and their variability by a factor of approximately two relative to the unparameterized model and an offline-trained NN. The method is reported to be robust to noise in the target statistics, and an efficient protocol is proposed that uses a carefully selected initial condition to avoid integrating to full statistical equilibrium.

Significance. If the central results hold under closer scrutiny, the work provides a systematic, data-driven route to improving coarse ocean models' mean state and variability without ad-hoc tuning. Notable strengths include the online EKI calibration against external reference statistics, explicit demonstration of robustness to chaotic noise, and the attempt at an efficient non-equilibrium protocol. These elements address a long-standing practical challenge in ocean parameterization and could scale to global configurations if the idealized-case gains generalize.

major comments (2)

- [Abstract and efficient calibration protocol] Abstract and the efficient calibration protocol section: the claim that a specific initial condition allows bypassing integration to statistical equilibrium while still yielding parameters that improve the model's intrinsic equilibrium behavior is load-bearing for the factor-of-two error reduction. In chaotic ocean dynamics, finite-window statistics from a chosen IC can differ from those of a long equilibrated run; if the EKI targets are not demonstrably equivalent (within noise) to true equilibrium statistics, the reported improvement may be protocol- and metric-dependent rather than a robust property of the calibrated closure.

- [Abstract] Abstract: the quantitative claim of 'approximately a factor of two' error reduction is presented without error bars, confidence intervals, or statistical significance tests on the time-averaged diagnostics. This omission makes it difficult to judge whether the improvement is distinguishable from sampling variability in the chaotic system or from the choice of the two specific idealized configurations.

minor comments (2)

- [Abstract] The abstract and methods would benefit from explicitly naming the two idealized ocean models and the precise target statistics (e.g., which fluid interfaces and variability measures) used for EKI calibration.

- [Methods] Notation for the neural network architecture, loss function, and EKI update equations should be introduced with a clear table or diagram to aid reproducibility.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback. We respond to each major comment below and will revise the manuscript accordingly to address the concerns raised.


- Referee: [Abstract and efficient calibration protocol] Abstract and the efficient calibration protocol section: the claim that a specific initial condition allows bypassing integration to statistical equilibrium while still yielding parameters that improve the model's intrinsic equilibrium behavior is load-bearing for the factor-of-two error reduction. In chaotic ocean dynamics, finite-window statistics from a chosen IC can differ from those of a long equilibrated run; if the EKI targets are not demonstrably equivalent (within noise) to true equilibrium statistics, the reported improvement may be protocol- and metric-dependent rather than a robust property of the calibrated closure.

Authors: We thank the referee for highlighting this important consideration. The initial condition was chosen following a brief spin-up period from a high-resolution reference simulation, ensuring that the short-time statistics align closely with equilibrium values within the inherent noise of the system. To strengthen this, we will include in the revised manuscript a direct comparison of the target statistics computed from the selected initial condition against those from a fully equilibrated long integration. This will demonstrate their equivalence within sampling variability and confirm that the calibrated parameters improve the intrinsic equilibrium behavior independently of the protocol.

Revision: yes.
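One way to carry out the comparison promised above is to ask whether the short-window mean of a target statistic agrees with the long-run mean to within a few combined standard errors. The sketch below estimates those standard errors with batch means, a standard correction for autocorrelated time series; the function names, the choice of 10 batches, and the threshold `k` are illustrative assumptions, not the authors' procedure.

```python
import numpy as np


def batch_means_se(x, n_batches=10):
    """Standard error of the time mean of an autocorrelated series via batch means."""
    x = np.asarray(x, dtype=float)
    usable = len(x) - len(x) % n_batches  # drop the remainder so batches are equal
    batches = x[:usable].reshape(n_batches, -1).mean(axis=1)
    return batches.std(ddof=1) / np.sqrt(n_batches)


def consistent_within_noise(short_series, long_series, k=2.0, n_batches=10):
    """True if the two time means agree to within k combined standard errors."""
    diff = abs(np.mean(short_series) - np.mean(long_series))
    se = np.hypot(batch_means_se(short_series, n_batches),
                  batch_means_se(long_series, n_batches))
    return diff <= k * se
```

Batches must be longer than the decorrelation time of the statistic for the standard error to be trustworthy, which is itself a constraint on how short the calibration window can be.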

- Referee: [Abstract] Abstract: the quantitative claim of 'approximately a factor of two' error reduction is presented without error bars, confidence intervals, or statistical significance tests on the time-averaged diagnostics. This omission makes it difficult to judge whether the improvement is distinguishable from sampling variability in the chaotic system or from the choice of the two specific idealized configurations.

Authors: We agree that quantifying the uncertainty is essential for robust interpretation. In the revised version, we will augment the abstract and the results section with error bars derived from ensemble variability or bootstrap methods on the time-averaged diagnostics. Additionally, we will report p-values or confidence intervals from statistical tests comparing the errors across the different model configurations to establish the significance of the factor-of-two reduction.

Revision: yes.
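A percentile bootstrap is one simple way to attach an interval to a "factor of two" claim. The sketch below resamples baseline and calibrated error samples independently and reports the ratio of mean errors with a confidence interval. It assumes the error samples are independent (for example, from separate ensemble members or well-separated time windows); for autocorrelated samples a block bootstrap would be more appropriate. The function name and defaults are hypothetical.

```python
import numpy as np


def bootstrap_ratio_ci(baseline_err, calibrated_err, n_boot=5000, alpha=0.05, seed=0):
    """Point estimate and percentile-bootstrap CI for mean(baseline) / mean(calibrated)."""
    rng = np.random.default_rng(seed)
    b = np.asarray(baseline_err, dtype=float)
    c = np.asarray(calibrated_err, dtype=float)
    # Resample each group with replacement, independently of the other.
    ib = rng.integers(0, len(b), size=(n_boot, len(b)))
    ic = rng.integers(0, len(c), size=(n_boot, len(c)))
    ratios = b[ib].mean(axis=1) / c[ic].mean(axis=1)
    lo, hi = np.quantile(ratios, [alpha / 2, 1 - alpha / 2])
    return b.mean() / c.mean(), lo, hi
```

If the lower end of the interval stays well above 1, the improvement is distinguishable from sampling variability under these assumptions; whether it is "approximately two" depends on where the interval sits.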

Circularity Check

No significant circularity: calibration targets external reference statistics


The paper formulates parameter tuning as an EKI optimization problem for a neural network mesoscale eddy parameterization. Targets are time-averaged fluid interfaces and variability drawn from higher-resolution or reference runs (external to the coarse model being calibrated). The reported factor-of-two error reduction is measured by comparing the calibrated coarse model against those same independent reference statistics, not by construction from the fitted parameters. The efficient protocol selects a particular initial condition to avoid long equilibration but still optimizes against the external targets; this does not reduce the improvement metric to a tautology. No self-definitional steps, fitted-input predictions, or load-bearing self-citations appear in the derivation chain. The central claim therefore retains independent content relative to its inputs.

Axiom & Free-Parameter Ledger

free parameters (1)

- neural network weights and biases

axioms (2)

- Domain assumption: a neural network parameterization can represent the net effect of unresolved mesoscale eddies.

- Domain assumption: Ensemble Kalman Inversion remains effective when observations are noisy time averages from chaotic dynamics.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear: "We use the recently developed data-driven parameterization of mesoscale eddies by Perezhogin et al. (2025), which modifies the horizontal momentum balance equation: $\partial_t \mathbf{u} = \cdots + \nabla \cdot \mathbf{T}$"

- IndisputableMonolith/Foundation/ArithmeticFromLogic.lean · LogicNat recovery · unclear: "We solve the optimization problem using Ensemble Kalman Inversion (ETKI) ... loss function $L(\theta) = \|y - g_j\|_2^2$ on time-averaged interfaces"

Reference graph

Works this paper leans on

[1] Bachman, S. D. (2024). An eigenvalue-based framework for constraining anisotropic eddy viscosity. Journal of Advances in Modeling Earth Systems, 16(8), e2024MS004375.

[2] Cesa, G., Lang, L., & Weiler, M. (2022). A program to build E(N)-equivariant steerable CNNs. In International Conference on Learning Representations (ICLR).

[3] Connolly, A., Cheng, Y., Walters, R., Wang, R., Yu, R., & Gentine, P. (2025). Deep learning turbulence closures generalize best with physics-based methods. Authorea Preprints. doi: https://doi.org/10.22541/essoar.173869578.80400701/v1

[4] Griffies, S. M., & Hallberg, R. W. (2000). Biharmonic friction with a Smagorinsky-like viscosity for use in large-scale eddy-permitting ocean models. Monthly Weather Review, 128(8), 2935–2946. doi: https://doi.org/10.1175/1520-0493(2000)128⟨2935:BFWASL⟩2.0.CO;2

[5] Griffies, S. M., Winton, M., Anderson, W. G., Benson, R., Delworth, T. L., Dufour, C. O., . . . others (2015). Impacts on ocean heat from transient mesoscale eddies in a hierarchy of climate models. Journal of Climate, 28(3), 952–977. doi: https://doi.org/10.1175/JCLI-D-14-00353.1

[6] Guan, Y., Subel, A., Chattopadhyay, A., & Hassanzadeh, P. (2022). Learning physics-constrained subgrid-scale closures in the small-data regime for stable and accurate LES. Physica D: Nonlinear Phenomena, 133568. doi: https://doi.org/10.1016/j.physd.2022.133568

[7] Pawar, S., San, O., Rasheed, A., & Vedula, P. (2023). Frame invariant neural network closures for Kraichnan turbulence. Physica A: Statistical Mechanics and its Applications, 609, 128327. doi: https://doi.org/10.1016/j.physa.2022.128327

[8] Perezhogin, P., Adcroft, A., & Zanna, L. (2025). Generalizable neural-network parameterization of mesoscale eddies in idealized and global ocean models. Geophysical Research Letters, 52(19), e2025GL117046. doi: https://doi.org/10.1029/2025GL117046

[9] Ross, A., Li, Z., Perezhogin, P., Fernandez-Granda, C., & Zanna, L. (2023). Benchmarking of machine learning ocean subgrid parameterizations in an idealized model. Journal of Advances in Modeling Earth Systems, 15(1), e2022MS003258. doi: https://doi.org/10.1029/2022MS003258

[10] Smith, R. D., & McWilliams, J. C. (2003). Anisotropic horizontal viscosity for ocean models. Ocean Modelling, 5(2), 129–156.

[11] Vallis, G. K. (2017). Atmospheric and oceanic fluid dynamics. Cambridge University Press. doi: https://doi.org/10.1017/9781107588417

[12] Weiler, M., & Cesa, G. (2019). General E(2)-Equivariant Steerable CNNs. In Conference on Neural Information Processing Systems (NeurIPS).

[13] Zanna, L., & Bolton, T. (2020). Data-driven equation discovery of ocean mesoscale closures. Geophysical Research Letters, 47(17), e2020GL088376. doi: https://doi.org/10.1029/2020GL088376
