Recognition: no theorem link

LungCURE: Benchmarking Multimodal Real-World Clinical Reasoning for Precision Lung Cancer Diagnosis and Treatment

Pith reviewed 2026-05-10 18:08 UTC · model grok-4.3

The pith

LungCURE supplies the first standardized benchmark of 1,000 real-world multimodal lung-cancer cases and pairs it with a multi-agent framework that reduces cascading errors in guideline-based decisions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

LungCURE is the first standardized multimodal benchmark built from 1,000 real-world, clinician-labeled lung-cancer cases collected across more than ten hospitals; LCAgent is a multi-agent framework that enforces guideline compliance by breaking the clinical pathway into separate agents that suppress cascading reasoning errors.

What carries the argument

LungCURE benchmark (1,000 multimodal cases) and LCAgent multi-agent decomposition that isolates staging, recommendation, and pathway steps to block error cascades.

If this is right

- Current large language models exhibit large capability gaps when forced to follow precise oncological guidelines on multimodal inputs.

- Decomposing the clinical pathway into separate agents measurably improves adherence to staging and treatment rules.

- A public benchmark of real hospital cases makes it possible to measure progress on end-to-end clinical decision support rather than isolated subtasks.

- The same decomposition strategy can be applied to any multi-stage medical workflow that requires sequential, guideline-constrained reasoning.

Where Pith is reading between the lines

- If LungCURE-style benchmarks become standard, hospitals could run routine audits of AI decision tools against the same cases used for training.

- The multi-agent pattern may transfer to other cancer types or chronic-disease pathways where errors accumulate across diagnostic and therapeutic stages.

- A practical next test would be to measure whether LCAgent-style agents reduce the rate of guideline violations in prospective, live clinical deployments.

Load-bearing premise

The 1,000 clinician-labeled cases collected from more than ten hospitals form an unbiased and consistently labeled sample of real-world lung-cancer presentations, and the multi-agent split reliably removes errors rather than creating new ones.

What would settle it

A replication study that finds no statistically significant accuracy gain when the same models run with versus without the LCAgent decomposition on a fresh set of cases drawn from the same hospitals.

Figures

read the original abstract

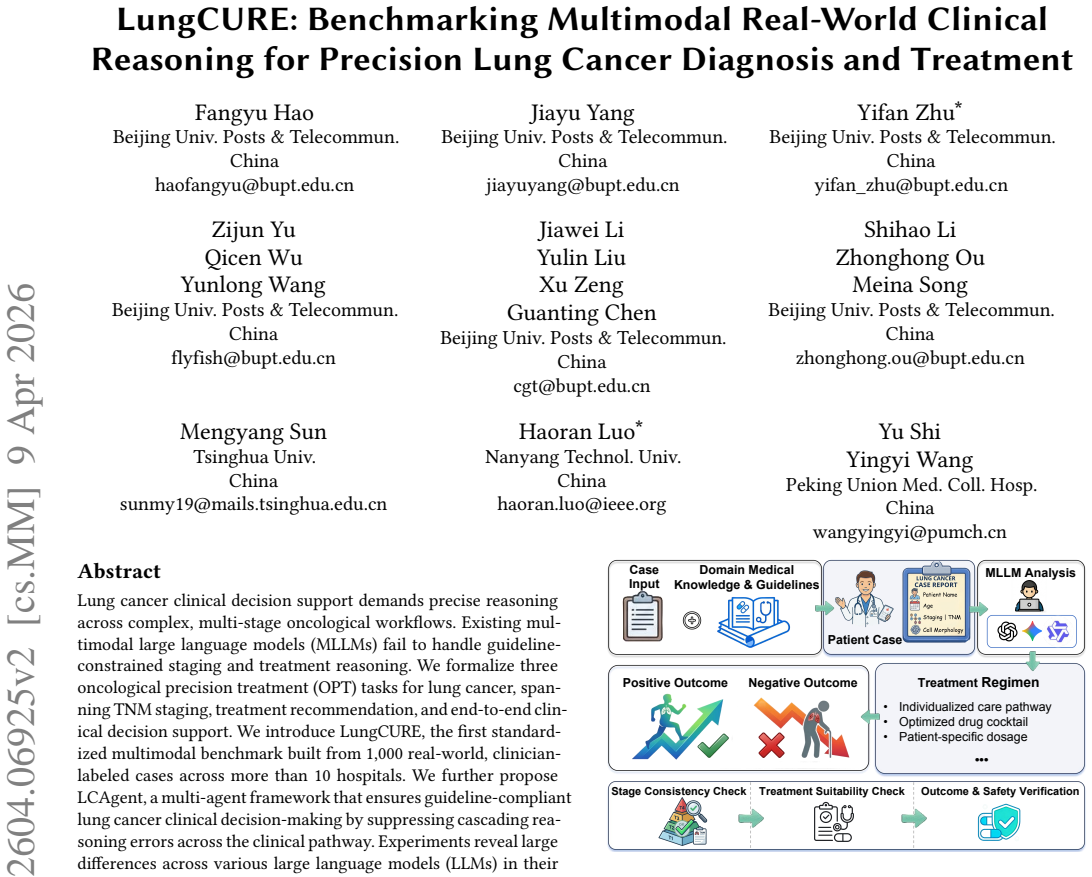

Lung cancer clinical decision support demands precise reasoning across complex, multi-stage oncological workflows. Existing multimodal large language models (MLLMs) fail to handle guideline-constrained staging and treatment reasoning. We formalize three oncological precision treatment (OPT) tasks for lung cancer, spanning TNM staging, treatment recommendation, and end-to-end clinical decision support. We introduce LungCURE, the first standardized multimodal benchmark built from 1,000 real-world, clinician-labeled cases across more than 10 hospitals. We further propose LCAgent, a multi-agent framework that ensures guideline-compliant lung cancer clinical decision-making by suppressing cascading reasoning errors across the clinical pathway. Experiments reveal large differences across various large language models (LLMs) in their capabilities for complex medical reasoning, when given precise treatment requirements. We further verify that LCAgent, as a simple yet effective plugin, enhances the reasoning performance of LLMs in real-world medical scenarios.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to introduce LungCURE, the first standardized multimodal benchmark built from 1,000 real-world clinician-labeled lung cancer cases across more than 10 hospitals, formalizing three oncological precision treatment (OPT) tasks: TNM staging, treatment recommendation, and end-to-end clinical decision support. It proposes LCAgent, a multi-agent framework that ensures guideline-compliant decision-making by suppressing cascading reasoning errors across the clinical pathway. Experiments are described as revealing large differences in LLMs' complex medical reasoning capabilities and verifying that LCAgent enhances LLM performance in real-world medical scenarios.

Significance. If the benchmark construction is rigorously documented with validation details and LCAgent is shown via quantitative results and ablations to improve reasoning reliability, the work could offer a valuable real-world testbed for multimodal clinical AI in oncology. The multi-hospital sourcing and focus on guideline-constrained workflows address an important gap, provided selection biases and error dynamics are properly characterized.

major comments (3)

- [Abstract] Abstract: The central claim that LungCURE constitutes a 'standardized' benchmark from '1,000 real-world, clinician-labeled cases across more than 10 hospitals' is load-bearing for all subsequent experimental interpretations, yet the abstract supplies no information on case selection criteria, inclusion/exclusion rules, sampling strategy for diversity/complexity, labeling protocol, number of labelers per case, or inter-rater agreement (e.g., Cohen's kappa). Without these, performance gaps between LLMs and gains attributed to LCAgent cannot be reliably interpreted.

- [Abstract] Abstract: The assertions of 'large differences across various LLMs' and that 'LCAgent enhances the reasoning performance' are presented without any quantitative metrics, specific accuracy numbers, error analysis, or ablation results. This absence prevents assessment of effect sizes, statistical significance, or whether the multi-agent decomposition reduces net errors rather than redistributing failure modes across TNM staging, treatment recommendation, and end-to-end tasks.

- [LCAgent description] LCAgent description (likely §3 or §4): The claim that the multi-agent framework 'suppresses cascading reasoning errors across the clinical pathway' lacks supporting evidence such as error-tracing experiments, single-agent vs. multi-agent comparisons, or analysis showing net error reduction rather than introduction of new coordination failures. This is central to the proposed plugin's value.

minor comments (1)

- [Abstract] Abstract: Consider adding one sentence summarizing the LLMs tested and the magnitude of reported gains to give readers immediate context for the 'large differences' claim.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment point by point below, clarifying where the manuscript already provides the requested information and outlining targeted revisions to improve clarity and self-containment.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central claim that LungCURE constitutes a 'standardized' benchmark from '1,000 real-world, clinician-labeled cases across more than 10 hospitals' is load-bearing for all subsequent experimental interpretations, yet the abstract supplies no information on case selection criteria, inclusion/exclusion rules, sampling strategy for diversity/complexity, labeling protocol, number of labelers per case, or inter-rater agreement (e.g., Cohen's kappa). Without these, performance gaps between LLMs and gains attributed to LCAgent cannot be reliably interpreted.

Authors: We agree that the abstract, constrained by length, omits these details. The full manuscript documents them rigorously in Section 2 (Benchmark Construction), covering case selection from over 10 hospitals, inclusion/exclusion criteria, sampling for diversity and complexity, multi-clinician labeling protocol, and inter-rater agreement. We will revise the abstract to include a concise summary of these elements so that the standardization claim is more self-contained. revision: yes

-

Referee: [Abstract] Abstract: The assertions of 'large differences across various LLMs' and that 'LCAgent enhances the reasoning performance' are presented without any quantitative metrics, specific accuracy numbers, error analysis, or ablation results. This absence prevents assessment of effect sizes, statistical significance, or whether the multi-agent decomposition reduces net errors rather than redistributing failure modes across TNM staging, treatment recommendation, and end-to-end tasks.

Authors: The abstract summarizes high-level findings while the detailed quantitative metrics, per-task accuracies, error analyses, statistical tests, and ablation studies (including single- vs. multi-agent comparisons and failure-mode breakdowns) appear in Sections 4 and 5. We will revise the abstract to incorporate representative quantitative highlights that convey effect sizes without exceeding length limits. revision: partial

-

Referee: [LCAgent description] LCAgent description (likely §3 or §4): The claim that the multi-agent framework 'suppresses cascading reasoning errors across the clinical pathway' lacks supporting evidence such as error-tracing experiments, single-agent vs. multi-agent comparisons, or analysis showing net error reduction rather than introduction of new coordination failures. This is central to the proposed plugin's value.

Authors: The manuscript supplies this evidence via dedicated experiments that trace errors along the clinical pathway, compare single-agent and multi-agent configurations, and quantify net error reduction while analyzing coordination overhead. We will revise the LCAgent description to more explicitly cross-reference and summarize these results. revision: yes

Circularity Check

No significant circularity; benchmark and framework are independently constructed from external data.

full rationale

The paper introduces LungCURE as a new benchmark built from 1,000 external real-world clinician-labeled cases across >10 hospitals and proposes LCAgent as a multi-agent plugin for guideline-compliant reasoning. No equations, fitted parameters, self-citations, uniqueness theorems, or ansatzes are present in the provided text. The central claims rest on the creation and empirical evaluation of these artifacts against the external cases rather than any reduction to self-referential inputs or prior author work by construction. The derivation chain is therefore self-contained.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Clinician-labeled real-world cases from multiple hospitals accurately capture the complexity and distribution of lung cancer clinical reasoning scenarios.

invented entities (1)

-

LCAgent

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Kenza Benkirane, Jackie Kay, and María Pérez-Ortiz. 2025. How Can We Diag- nose and Treat Bias in Large Language Models for Clinical Decision-Making?. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the As- sociation for Computational Linguistics: Human Language Technologies, NAACL

2025

-

[2]

Freddie Bray, Mathieu Laversanne, Hyuna Sung, et al. 2024. Global cancer sta- tistics 2022: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 countries. CA: A Cancer Journal for Clinicians 74, 3 (2024), 229–263

2024

-

[3]

Nicolas Captier, Marvin Lerousseau, Fanny Orlhac, et al. 2025. Integration of clinical, pathological, radiological, and transcriptomic data improves prediction for first-line immunotherapy outcome in metastatic non-small cell lung cancer. Nature Communications 16, 1 (2025), 614

2025

-

[4]

Junying Chen, Chi Gui, Ruyi Ouyang, et al. 2024. Towards Injecting Medical Visual Knowledge into Multimodal LLMs at Scale. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, EMNLP 2024 . 7346–7370

2024

-

[5]

Edward Choi, Mohammad Taha Bahadori, Jimeng Sun, Joshua Kulas, Andy Schuetz, and Walter F. Stewart. 2016. RETAIN: An Interpretable Predictive Model for Healthcare using Reverse Time Attention Mechanism. In Annual Con- ference on Neural Information Processing Systems, NeurIPS 2016 . 3504–3512

2016

-

[6]

Wenliang Dai, Junnan Li, Dongxu Li, et al. 2023. InstructBLIP: Towards General- purpose Vision-Language Models with Instruction Tuning. InAnnual Conference on Neural Information Processing Systems 2023, NeurIPS 2023 . 49250–49267

2023

-

[7]

Detterbeck, Gavitt A

Frank C. Detterbeck, Gavitt A. Woodard, Anna S. Bader, et al. 2024. The Proposed Ninth Edition TNM Classification of Lung Cancer.CHEST 166, 4 (2024), 882–895

2024

-

[8]

Zhiyun Duan, Xu Huang, Rong Lu, et al. 2025. Multi-center benchmarking of large language models for clinical decision support in lung cancer screening. Cell Reports Medicine 6, 12 (2025)

2025

- [9]

-

[10]

Joschka Haltaufderheide and Robert Ranisch. 2024. The Ethics of ChatGPT in Medicine and Healthcare: A Systematic Review on Large Language Models (LLMs). npj Digital Medicine 7, 1 (2024), 183

2024

-

[11]

Shuo Huang, Lujia Zhong, and Yonggang Shi. 2025. Multistage Alignment and Fusion for Multimodal Multiclass Alzheimer’s Disease Diagnosis. In In 28th In- ternational Conference on Medical Image Computing and Computer-Assisted Inter- vention, MICCAI 2025. 375–385

2025

-

[12]

Jonathan Kim, Anna Podlasek, Kie Shidara, Feng Liu, Ahmed Alaa, and Danilo Bernardo. 2025. Limitations of Large Language Models in Clinical Problem- Solving Arising from Inflexible Reasoning. Scientific reports 15, 1 (2025), 39426

2025

-

[13]

Kyungwon Kim, Yongmoon Lee, Doohyun Park, et al. 2024. LLM-guided Multi- modal Multiple Instance Learning for 5-year Overall Survival Prediction of Lung Cancer. In International Conference on Medical Image Computing and Computer- Assisted Intervention, MICCAI 2024 . 239–249

2024

-

[14]

Yubin Kim, Chanwoo Park, Hyewon Jeong, et al. 2024. MDAgents: An Adap- tive Collaboration of LLMs for Medical Decision-Making. In Advances in Neural Information Processing Systems, Vol. 37. Curran Associates, Inc., 79410–79452

2024

-

[15]

Jong Eun Lee, Ki-Seong Park, Yun-Hyeon Kim, et al. 2024. Lung Cancer Stag- ing Using Chest CT and FDG PET/CT Free-Text Reports: Comparison Among Three ChatGPT Large Language Models and Six Human Readers of Varying Ex- perience. American Journal of Roentgenology 223, 6 (2024), e2431696

2024

-

[16]

Chunyuan Li, Cliff Wong, Sheng Zhang, et al. 2023. LLaV A-Med: Training a Large Language-and-Vision Assistant for Biomedicine in One Day. In Annual Conference on Neural Information Processing Systems 2023, NeurIPS 2023

2023

-

[17]

Cheng-Yi Li, Kao-Jung Chang, Cheng-Fu Yang, et al. 2025. Towards a holistic framework for multimodal LLM in 3D brain CT radiology report generation. Nature Communications 16, 1 (2025), 2258

2025

-

[18]

Xiao Liang, Yanlei Zhang, Di Wang, et al. 2024. Divide and Conquer: Isolating Normal-Abnormal Attributes in Knowledge Graph-Enhanced Radiology Report Generation. In Proceedings of the 32nd ACM International Conference on Multime- dia, MM 2024 . 4967–4975

2024

-

[19]

Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. 2023. Visual Instruc- tion Tuning. In Annual Conference on Neural Information Processing Systems 2023, NeurIPS 2023,. 34892–34916

2023

- [20]

-

[21]

Pablo Meseguer, Rocío del Amor, and Valery Naranjo. 2025. Benchmarking Histopathology Foundation Models in a Multi-center Datase for Skin Cancer Subtyping. In 29th Annual Conference on Medical Image Understanding and Anal- ysis, MIUA 2025. 16–28

2025

-

[22]

Chuang Niu, Qing Lyu, Christopher D Carothers, Parisa Kaviani, Josh Tan, Pingkun Yan, Mannudeep K Kalra, Christopher T Whitlow, and Ge Wang. 2025. Medical multimodal multitask foundation model for lung cancer screening. Na- ture Communications 16, 1 (2025), 1523

2025

-

[23]

OpenAI. 2023. GPT-4 Technical Report. (2023). arXiv: 2303.08774

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[24]

Shrey Pandit, Jiawei Xu, Junyuan Hong, et al. 2025. MedHallu: A Comprehensive Benchmark for Detecting Medical Hallucinations in Large Language Models. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, EMNLP 2025. 2858–2873

2025

-

[25]

Robinson, et al

Martin Reck, Delvys Rodríguez-Abreu, Andrew G. Robinson, et al. 2021. Five- Year Outcomes With Pembrolizumab Versus Chemotherapy for Metastatic Non– Small-Cell Lung Cancer With PD-L1 Tumor Proportion Score ≥ 50%. Journal of Clinical Oncology 39, 21 (2021), 2339–2349

2021

-

[26]

Samuel Rutunda, Gwydion Williams, Kleber Kabanda, et al. 2026. Large language models for frontline healthcare support in low-resource settings. Nature Health 1, 2 (2026), 191–197

2026

-

[27]

Yuyang Sha, Hongxin Pan, Weiyu Meng, and Kefeng Li. 2025. Contrastive Knowledge-Guided Large Language Models for Medical Report Generation. In Medical Image Computing and Computer Assisted Intervention, MICCAI 2025. 111– 120

2025

-

[28]

Junjie Shi, Caozhi Shang, Zhaobin Sun, et al. 2024. PASSION: Towards Effective Incomplete Multi-Modal Medical Image Segmentation with Imbalanced Miss- ing Rates. In Proceedings of the 32nd ACM International Conference on Multime- dia,MM 2024. 456–465

2024

-

[29]

Gorman, Robert A

Ida Sim, Paul N. Gorman, Robert A. Greenes, et al. 2001. White Paper: Clinical Decision Support Systems for the Practice of Evidence-based Medicine. Journal of the American Medical Informatics Association 8, 6 (2001), 527–534

2001

-

[30]

Karan Singhal, Tao Tu, Juraj Gottweis, et al. 2025. Toward Expert-Level Medical Question Answering with Large Language Models. Nature Medicine 31, 3 (2025), 943–950

2025

-

[31]

Anindya Pradipta Susanto, David Lyell, Bambang Widyantoro, Shlomo Berkovsky, and Farah Magrabi. 2023. Effects of machine learning-based clini- cal decision support systems on decision-making, care delivery, and patient out- comes: a scoping review. Journal of the American Medical Informatics Association 30, 12 (2023), 2050–2063

2023

-

[32]

Reed T Sutton, David Pincock, Daniel C Baumgart, et al. 2020. An overview of clinical decision support systems: benefits, risks, and strategies for success. npj Digital Medicine 3, 1 (2020), 17

2020

-

[33]

Gemini Team. 2023. Gemini: A Family of Highly Capable Multimodal Models. (2023). arXiv: 2312.11805 https://arxiv.org/abs/2312.11805

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[34]

Qwen Team. 2025. Qwen3-VL Technical Report. (2025). arXiv: 2511.21631

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[35]

Dmitriy Umerenkov, Alexandr Nesterov, Vladimir Shaposhnikov, et al. 2025. AI Diagnostic Assistant (AIDA): A Predictive Model for Diagnoses from Health Records in Clinical Decision Support Systems. In Proceedings of the Thirty-Fourth International Joint Conference on Artificial Intelligence, IJCAI 2025, . 9880–9889

2025

-

[36]

Alyssa Unell, Noel C. F. Codella, Sam Preston, et al. 2025. CancerGUIDE: Can- cer Guideline Understanding via Internal Disagreement Estimation. (2025). arXiv:2509.07325 https://doi.org/10.48550/arXiv.2509.07325

-

[37]

Jialun Wu, Kai He, Rui Mao, Xuequn Shang, and Erik Cambria. 2025. Harness- ing the potential of multimodal EHR data: A comprehensive survey of clinical predictive modeling for intelligent healthcare. Information Fusion 123 (2025), 103283

2025

-

[38]

Jiageng Wu, Xian Wu, and Jie Yang. 2024. Guiding Clinical Reasoning with Large Language Models via Knowledge Seeds. In Proceedings of the Thirty-Third International Joint Conference on Artificial Intelligence, IJCAI 2024 . 7491–7499

2024

-

[39]

Peng Xia, Ze Chen, Juanxi Tian, et al. 2024. CARES: A Comprehensive Bench- mark of Trustworthiness in Medical Vision Language Models. In Annual Con- ference on Neural Information Processing Systems 2024, NeurIPS 2024 . 140334– 140365

2024

-

[40]

Peng Xia, Jinglu Wang, Yibo Peng, et al. 2026. MMedAgent-RL: Optimizing Multi-Agent Collaboration for Multimodal Medical Reasoning. In The Fourteenth International Conference on Learning Representations, ICLR 2026

2026

-

[41]

Peng Xia, Kangyu Zhu, Haoran Li, et al. 2025. MMed-RAG: Versatile Multimodal RAG System for Medical Vision Language Models. InThe Thirteenth International Conference on Learning Representations, ICLR 2025

2025

-

[42]

Cao Xiao, Edward Choi, and Jimeng Sun. 2018. Opportunities and challenges in developing deep learning models using electronic health records data: a sys- tematic review. Journal of the American Medical Informatics Association 25, 10 (2018), 1419–1428

2018

-

[43]

Hongyan Xu, Arcot Sowmya, Ian Katz, and Dadong Wang. 2025. UniMRG: Refin- ing Medical Semantic Understanding Across Modalities via LLM-Orchestrated Synergistic Evolution. In 28th International Conference on Medical Image Com- puting and Computer Assisted Intervention, MICCAI 2025 . 636–646

2025

-

[44]

Wenxuan Yang, Weimin Tan, Yuqi Sun, and Bo Yan. 2024. A Medical Data- Effective Learning Benchmark for Highly Efficient Pre-training of Foundation Models. In Proceedings of the 32nd ACM International Conference on Multimedia, MM 2024. 3499–3508

2024

-

[45]

Kangyu Zhu, Peng Xia, Yun Li, et al. 2025. MMedPO: Aligning Medical Vision- Language Models with Clinical-Aware Multimodal Preference Optimization. In Forty-second International Conference on Machine Learning, ICML 2025 . Submission Under Review, –, – Hao et al. Appendix A LungCURE Construction Details A.1 Dataset Construction Details Step 1: Data Collect...

2025

-

[46]

bilateral hilar and mediastinal nodes

Semantic Standardization and Feature Routing: We first em- ploy a document-extraction agent ℳextract to parse unstructured multi-modal reports ℛ (e.g., CT, PET/CT, pathology reports). To prevent spatial logic confusion, we introduce a Composite Anatom- ical Site Splitting algorithm. For instance, composite phrases like “bilateral hilar and mediastinal nod...

-

[47]

uncertain/suspicious

Independent Staging Agents: Three specialized LLM agents (ℳ𝑇 , ℳ𝑁 , and ℳ𝑀 ) process their respective feature sets concurrently. Each agent acts under rigorous Rule-Constrained Chain-of-Thought (RC-CoT) 𝜋𝑘 . For example, the T-Agent strictly executes an abso- lute maximum diameter extraction rule, while the M-Agent eval- uates distant metastasis via multi...

-

[48]

Deterministic Aggregation and Uncertainty Projection: Finally, the independent outputs are aggregated using a deterministic code execution node ΓAJCC(⋅). Rather than generating the final stage via LLM, this node utilizes a strict logic matrix derived from the AJCC manual to compute the comprehensive stage 𝑆final (e.g., IA1, IIIA): 𝑆final = Γ AJCC(𝑠𝑇 , 𝑠𝑁 ...

-

[49]

Early-Stage Post-Radical Resection

Structured Profiling & Algorithmic Triage: A specialized agent first extracts critical decision-making factors (e.g., Histology, PS Score, PD-L1 expression) from the patient record, standardizing them into a profile vector 𝑉profile. A deterministic routing script Φroute acts as a clinical triage system, mapping the combination of 𝑉profile and 𝑆final to a ...

-

[50]

Highly dense, localized clinical guide- lines and landmark trial literatures 𝒢𝑖𝑑 ⊂ 𝒢 (e.g., NCCN/CSCO protocols, KEYNOTE series) are retrieved and injected as hard con- straints

In-Context Guideline Injection and Recommendation: Based on the triage result𝒞𝑖𝑑 , the system dynamically activates a correspond- ing Expert Agent ℳexpert. Highly dense, localized clinical guide- lines and landmark trial literatures 𝒢𝑖𝑑 ⊂ 𝒢 (e.g., NCCN/CSCO protocols, KEYNOTE series) are retrieved and injected as hard con- straints. The final treatment re...

-

[51]

Whether T_reasoning identifies the decisive evidence for T staging

T_score Rules Evaluation Focus Whether T_stage exactly matches the ground truth. Whether T_reasoning identifies the decisive evidence for T staging. Whether the reasoning follows T-dimension workflow logic. Key Checks Prefer label-level clinical evidence over superficial repetition of exact measurements or SUV values. If multiple T criteria are ...

-

[52]

scores": {

N_score Rules Evaluation Focus Whether N_stage exactly matches the ground truth. Whether N_reasoning follows the logic of full nodal scanning and final landing on the highest confirmed N stage. Whether confirmed and uncertain nodal evidence are handled correctly. Key Checks The reasoning should reflect scanning for N1, N2, and N3 evidence rather t...

-

[53]

gt_medications

M_score Rules Evaluation Focus Whether M_stage exactly matches the ground truth. Whether M_reasoning follows the decision path for M0/M1a / M1b / M1c. Whether organ count, lesion count, and metastatic scope are handled correctly. Key Checks The reasoning should first identify M1a-related evidence, such as contralateral pulmonary lesions, pleural m...

-

[54]

Evidence consolidation: merge related findings from Diagnostic Impression and Main Description, while preserving uncertain, suspicious, and confirmed lesions

-

[55]

consider metastasis

Anatomical dispatch: assign each candidate lesion to either n_assessment_context or m_assessment_context. Inputs The normalized Stage I representation produced by the report extraction module, containing: - Main Description - Diagnostic Impression. Key Constraints Comprehensive Staging: Preserve all abnormalities (confirmed, suspicious, or indeterminate...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.