Open Preparation, Human Explanation, and Instructor Synthesis: A Human-Scale Methodology for AI-Rich Higher Education

Pith reviewed 2026-05-10 16:36 UTC · model grok-4.3

The pith

In AI-rich mathematics education, evidence of student understanding relocates from written work to live oral explanation and cumulative instructor observation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By shifting evidential trust away from written artifacts toward live human explanation and cumulative observation, combined with instructor synthesis after student attempts, a pedagogically coherent and operationally plausible methodology arises for maintaining trustworthy evidence of understanding in AI-rich undergraduate mathematics service courses.

What carries the argument

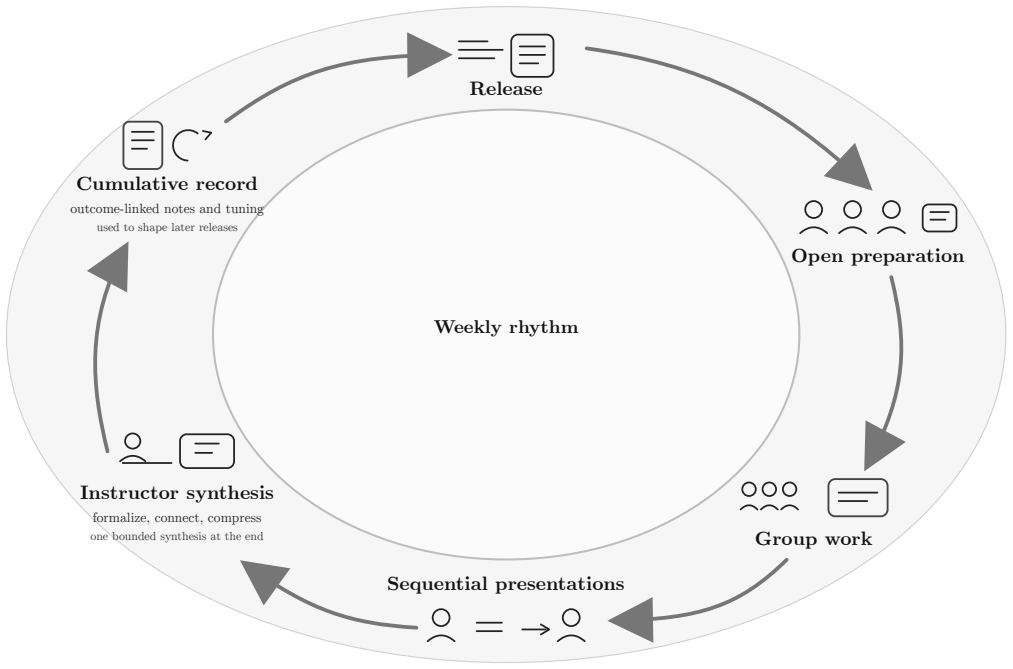

The cumulative oral-evidence model, in which weekly live sessions and ongoing observation replace traditional written submissions as the primary source of grade-relevant evidence.

If this is right

- Students prepare openly with digital assistance yet demonstrate understanding through real-time oral explanation and response to contingent questions.

- Instructors perform post-attempt synthesis to integrate observations against explicit course outcomes and maintain an evidence hierarchy.

- A middle-out stance permits bounded, retrieval-grounded AI assistance for students and teachers while preserving human-scale interactions.

- Task construction follows a realism framework that balances professional practice, disciplinary norms, and student experience.

- Implementation uses provided artifacts such as problem-type ecology, time estimates, and a five-grade oral rubric for small and medium cohorts.

Where Pith is reading between the lines

- The model could extend to other fields where written output is easily automated, by emphasizing live demonstration of reasoning.

- Regular oral interactions might foster active learning habits that persist beyond the course by making explanation a routine expectation.

- Instructor workload might shift from grading stacks of written work to real-time observation and brief synthesis notes.

- A pilot could test whether the approach scales by recording selected explanations for later review in larger classes.

Load-bearing premise

Live oral explanation combined with cumulative instructor observation will reliably and sufficiently evidence student understanding in place of written work, and the weekly synthesis process remains feasible without additional empirical validation of outcomes.

What would settle it

A semester-long implementation in which independent measures of conceptual understanding, such as unassisted oral interviews or concept inventories, are compared between sections using this methodology and traditional written-assessment sections, while instructor time logs track the feasibility of the synthesis step.

Original abstract

In AI-rich higher education, polished written mathematics has become easier to produce than trustworthy evidence of understanding. This article develops a human-scale methodology for service mathematics, with informatics as its main running case. Its central move is not prohibition of tools but relocation of evidential trust. Students prepare openly, often with digital assistance, but grade-relevant evidence shifts toward live explanation, contingent questioning, and cumulative observation against course outcomes. The design is guided by Realistic Mathematics Education, question-first task construction, short human-scale mathematical tasks, and instructor synthesis after student attempt. It contributes a weekly operational cycle, a realism framework distinguishing professional, disciplinary, and experiential realism, a middle-out white-box / black-box stance on tools, a bounded role for retrieval-grounded AI assistants for students and teachers, and a cumulative oral-evidence model for small and medium cohorts. The paper also provides concrete implementation artifacts: process figures, an ecology of problem types, time-budget estimates, an evidence hierarchy, and a five-grade oral rubric. This is a methodology paper rather than an effectiveness study. Its claim is that the proposed design is pedagogically coherent, operationally plausible for human-scale teaching settings, and responsive to current concerns about AI, oral evidencing, and active learning in undergraduate mathematics education.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a human-scale methodology for undergraduate service mathematics (with informatics as the running example) in AI-rich settings. Rather than prohibiting tools, it relocates evidential trust from polished written work to open preparation followed by live oral explanation, contingent questioning, and cumulative instructor observation. The design is grounded in Realistic Mathematics Education, question-first task construction, and short human-scale tasks. It supplies a weekly operational cycle, a three-way realism framework (professional/disciplinary/experiential), a middle-out white-box/black-box stance on tools, bounded retrieval-grounded AI use, and a cumulative oral-evidence model. Concrete artifacts include process figures, a problem-type ecology, time-budget estimates, an evidence hierarchy, and a five-grade oral rubric. The paper explicitly frames itself as a methodology proposal, not an effectiveness study, and claims only pedagogical coherence and operational plausibility for small-to-medium cohorts.

Significance. If the internal design holds, the work supplies instructors with immediately usable artifacts (rubric, time budgets, evidence hierarchy) that directly address timely concerns about AI-generated submissions, oral evidencing, and active learning. The explicit grounding in established RME principles and the provision of bounded AI roles give the proposal a practical character that could seed classroom trials or departmental pilots. Credit is due for the concrete, inspectable artifacts rather than abstract principles alone.

Major comments (1)

- [cumulative oral-evidence model and evidence hierarchy] The cumulative oral-evidence model (described in the section on instructor synthesis and the evidence hierarchy) asserts that live explanation plus cumulative observation can reliably substitute for written work. However, the text does not supply a concrete mechanism for scaling contingent questioning across medium cohorts while preserving the claimed human-scale feasibility; this assumption is load-bearing for the operational-plausibility claim.

Minor comments (2)

- [process figures] The process figures would benefit from explicit call-outs linking each step to the corresponding time-budget estimates and to the five-grade rubric.

- [ecology of problem types] The ecology of problem types is introduced but the mapping from each type to the three realism categories is left implicit; a short table would improve operational clarity.

Simulated Author's Rebuttal

We thank the referee for the positive assessment of the manuscript's practical artifacts and timely focus on AI-rich mathematics education. We address the single major comment below by agreeing that additional concrete detail on scaling is warranted and will revise accordingly to strengthen the operational-plausibility claim.

Point-by-point responses

Referee: The cumulative oral-evidence model (described in the section on instructor synthesis and the evidence hierarchy) asserts that live explanation plus cumulative observation can reliably substitute for written work. However, the text does not supply a concrete mechanism for scaling contingent questioning across medium cohorts while preserving the claimed human-scale feasibility; this assumption is load-bearing for the operational-plausibility claim.

Authors: We accept this observation. Although the manuscript supplies time-budget estimates (e.g., 2–3 minutes per student for oral components within a 50-student cohort) and an evidence hierarchy to guide prioritization, these elements stop short of an explicit, step-by-step scaling protocol for contingent questioning. In the revised version we will expand the 'Instructor Synthesis' section with a concrete mechanism: (1) pre-class triage of open-preparation artifacts against the three realism criteria to identify high-yield targets; (2) in-class use of a rotating question bank drawn from the problem-type ecology, limited to 4–6 targeted probes per session that cover the hierarchy without requiring exhaustive coverage of every student; and (3) post-class cumulative logging via a lightweight shared spreadsheet that records only whether each outcome has received at least one confirming observation across the week. This protocol is designed to keep total instructor effort below four hours per week for cohorts up to 60 while preserving the human-scale character. The addition will be presented as an operational elaboration rather than new empirical evidence, consistent with the paper’s methodology-proposal framing.

Revision: yes
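As a rough illustration of the logging step (3) and of the time-budget figures quoted in the rebuttal, the Python sketch below tracks which outcomes have received at least one confirming observation in a week and computes the oral time needed if every student were heard once. All names (`oral_minutes`, `WeeklyLog`, the outcome labels) are hypothetical; neither the paper nor the rebuttal specifies a data format, and a shared spreadsheet would serve the same purpose.

```python
from dataclasses import dataclass, field


def oral_minutes(cohort_size: int, minutes_per_student: float) -> float:
    """Total live oral time if every student is heard once (an upper bound)."""
    return cohort_size * minutes_per_student


@dataclass
class WeeklyLog:
    """Hypothetical stand-in for the 'lightweight shared spreadsheet'."""

    outcomes: list[str]
    # student -> outcomes for which that student gave a confirming explanation
    confirmed: dict[str, set[str]] = field(default_factory=dict)

    def record(self, student: str, outcome: str) -> None:
        """Log one confirming observation; reject outcomes not on the list."""
        if outcome not in self.outcomes:
            raise ValueError(f"unknown outcome: {outcome}")
        self.confirmed.setdefault(student, set()).add(outcome)

    def uncovered(self) -> list[str]:
        """Outcomes with no confirming observation from any student this week."""
        seen = set().union(*self.confirmed.values())
        return [o for o in self.outcomes if o not in seen]


if __name__ == "__main__":
    # Illustrative outcome labels, not taken from the paper.
    log = WeeklyLog(outcomes=["O1", "O2", "O3"])
    log.record("student_a", "O1")
    log.record("student_b", "O3")
    print("still unobserved:", log.uncovered())
    print("minutes at 2 per student:", oral_minutes(50, 2))
    print("minutes at 3 per student:", oral_minutes(50, 3))
```

With the quoted 2–3 minutes per student and a 50-student cohort, hearing every student once would take 100–150 minutes. Because the proposed protocol limits each session to 4–6 targeted probes rather than exhaustive coverage, that range is best read as an upper bound on live questioning time within the stated four-hour weekly budget.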

Circularity Check

No significant circularity in methodology proposal

Full rationale

The paper presents a design framework for AI-rich mathematics education rather than any derivation chain, equations, fitted parameters, or empirical predictions. Its claims rest on internal pedagogical coherence of the weekly cycle, realism distinctions, tool stance, and oral-evidence model, illustrated by concrete artifacts (process figures, problem ecology, time budgets, rubric). These are presented as self-contained proposals drawing on prior literature (e.g., Realistic Mathematics Education) without reducing any 'result' to its own inputs by construction, self-citation load-bearing, or renaming. No uniqueness theorems, ansatzes, or fitted-input predictions appear. The central claim is limited to plausibility for human-scale settings, which does not collapse into circularity.

Axiom & Free-Parameter Ledger

Axioms (2)

- Domain assumption: Realistic Mathematics Education principles are appropriate for guiding task construction in service mathematics.

- Domain assumption: Short human-scale mathematical tasks and live explanation provide reliable evidence of understanding.

