
New Deep Learning Data Analysis Method for PROSPECT using GAPE: Genetic Algorithm Powered Evolution

Pith reviewed 2026-05-10 16:46 UTC · model grok-4.3

The pith

A genetic algorithm evolves deep learning models that could raise PROSPECT's neutrino signal-to-background ratio by a factor of nearly 2.8.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

When benchmarked against conventional PROSPECT neutrino identification pathways using the same underlying information, the classifier offers the promise of improving the signal-to-background ratio by nearly 2.8 times. Performance biases uncovered during initial IBD classifier validation were primarily caused by differences in time-dependent response between background and signal training datasets. Biases were effectively mitigated through a data-period-specific training regimen, offering a pathway towards realizing an unbiased IBD signal classifier for future reactor neutrino datasets. The same GAPE procedure also produces models for energy and position estimation.

What carries the argument

GAPE (Genetic Algorithm Powered Evolution), an evolutionary search that optimizes both the architecture and the hyperparameters of deep neural networks for energy/position reconstruction and IBD classification tasks.
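The kind of search described above can be sketched in a few lines. This is a minimal, illustrative genetic algorithm and not the authors' implementation: the search space, the `toy_fitness` stand-in (which replaces "train the network and score it on validation data"), and every function name here are assumptions made for the example.

```python
import math
import random

# Hypothetical search space; the genes GAPE actually evolves are not listed in this summary.
SEARCH_SPACE = {
    "n_layers": [1, 2, 3, 4],
    "width": [16, 32, 64, 128],
    "learning_rate": [1e-4, 3e-4, 1e-3, 3e-3],
}

def random_genome(rng):
    """Draw one candidate configuration uniformly from the search space."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}

def toy_fitness(genome):
    """Stand-in for validation performance; higher is better, maximum is 0.

    The optimum (3 layers, width 64, learning rate 1e-3) is arbitrary.
    """
    return -(
        abs(genome["n_layers"] - 3)
        + abs(math.log2(genome["width"]) - 6)
        + abs(math.log10(genome["learning_rate"]) + 3)
    )

def crossover(parent_a, parent_b, rng):
    """Uniform crossover: each gene is copied from one of the two parents."""
    return {name: rng.choice((parent_a[name], parent_b[name])) for name in SEARCH_SPACE}

def mutate(genome, rng, rate=0.2):
    """Resample each gene from the search space with probability `rate`."""
    return {
        name: rng.choice(SEARCH_SPACE[name]) if rng.random() < rate else value
        for name, value in genome.items()
    }

def evolve(fitness, generations=20, pop_size=12, n_elite=4, seed=0):
    """Elitist genetic algorithm; returns the best genome and the per-generation best fitness."""
    rng = random.Random(seed)
    population = [random_genome(rng) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        history.append(fitness(ranked[0]))
        elite = ranked[:n_elite]  # survivors are carried over unchanged
        children = [
            mutate(crossover(rng.choice(elite), rng.choice(elite), rng), rng)
            for _ in range(pop_size - n_elite)
        ]
        population = elite + children
    return max(population, key=fitness), history

best_genome, best_history = evolve(toy_fitness)
```

Because the elite are copied unchanged into the next generation, the best fitness in `best_history` can never decrease. In a real application the fitness call would be the expensive step, since each evaluation means training a network.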

If this is right

- Higher-purity IBD samples become available for reactor neutrino oscillation and spectrum measurements without requiring new hardware.

- The GAPE procedure itself can be reused to optimize machine-learning models for other particle-physics reconstruction or classification problems.

- Data-period-specific training provides a practical route to stable performance in detectors whose response drifts over months or years.

- Improved energy and position estimates from GAPE models can feed into higher-level physics analyses that depend on accurate event kinematics.
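The idea behind data-period-specific training can be illustrated with a toy example. The sketch below is an assumption-laden illustration, not the paper's procedure: a one-dimensional threshold cut stands in for the neural network, the periods "A" and "B" are hypothetical, and the event values are invented so that period B has half the detector response of period A.

```python
from collections import defaultdict

def accuracy(events, cut):
    """Fraction of (x, is_signal) pairs classified correctly by 'signal if x >= cut'."""
    return sum((x >= cut) == is_signal for x, is_signal in events) / len(events)

def fit_threshold(events):
    """Toy 'training': pick the observed x value that maximizes accuracy as a cut."""
    candidates = sorted({x for x, _ in events})
    return max(candidates, key=lambda cut: accuracy(events, cut))

def fit_per_period(events):
    """Train one cut per data period; events are (period, x, is_signal) triples."""
    by_period = defaultdict(list)
    for period, x, is_signal in events:
        by_period[period].append((x, is_signal))
    return {period: fit_threshold(subset) for period, subset in by_period.items()}

# Invented toy data: period B has half the response of period A.
TOY_EVENTS = (
    [("A", x, False) for x in (1.0, 2.0, 3.0)]
    + [("A", x, True) for x in (5.0, 6.0, 7.0)]
    + [("B", x, False) for x in (0.5, 1.0, 1.5)]
    + [("B", x, True) for x in (2.5, 3.0, 3.5)]
)

# One cut trained on all periods at once versus one cut per period.
pooled = [(x, is_signal) for _, x, is_signal in TOY_EVENTS]
global_cut = fit_threshold(pooled)
global_accuracy = accuracy(pooled, global_cut)

period_cuts = fit_per_period(TOY_EVENTS)
period_accuracy = sum(
    (x >= period_cuts[period]) == is_signal for period, x, is_signal in TOY_EVENTS
) / len(TOY_EVENTS)
```

In this toy setup the single pooled cut misclassifies one background event from period A, while the per-period cuts separate every event. Real drift is continuous rather than a clean factor of two, so the gain in practice depends on how periods are defined.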

Where Pith is reading between the lines

- If GAPE scales to other long-running neutrino detectors, automated model evolution could replace much of the current manual tuning of background-rejection cuts.

- The method may generalize to any experiment where detector response changes slowly with time, offering a way to maintain classification performance across calendar periods.

- Running GAPE on purely simulated data with injected time variations would provide an independent check of robustness before deployment on real detector data.

Load-bearing premise

Period-specific retraining fully removes time-dependent response biases without introducing new selection effects or reducing overall efficiency, and the genetic search has not overfit to the particular training and validation splits used.

What would settle it

The central performance claim would be falsified if the final GAPE-selected classifier, applied to an independent data period whose detector response differs from that of every training period, yielded a signal-to-background improvement below a factor of two.

Figures

read the original abstract

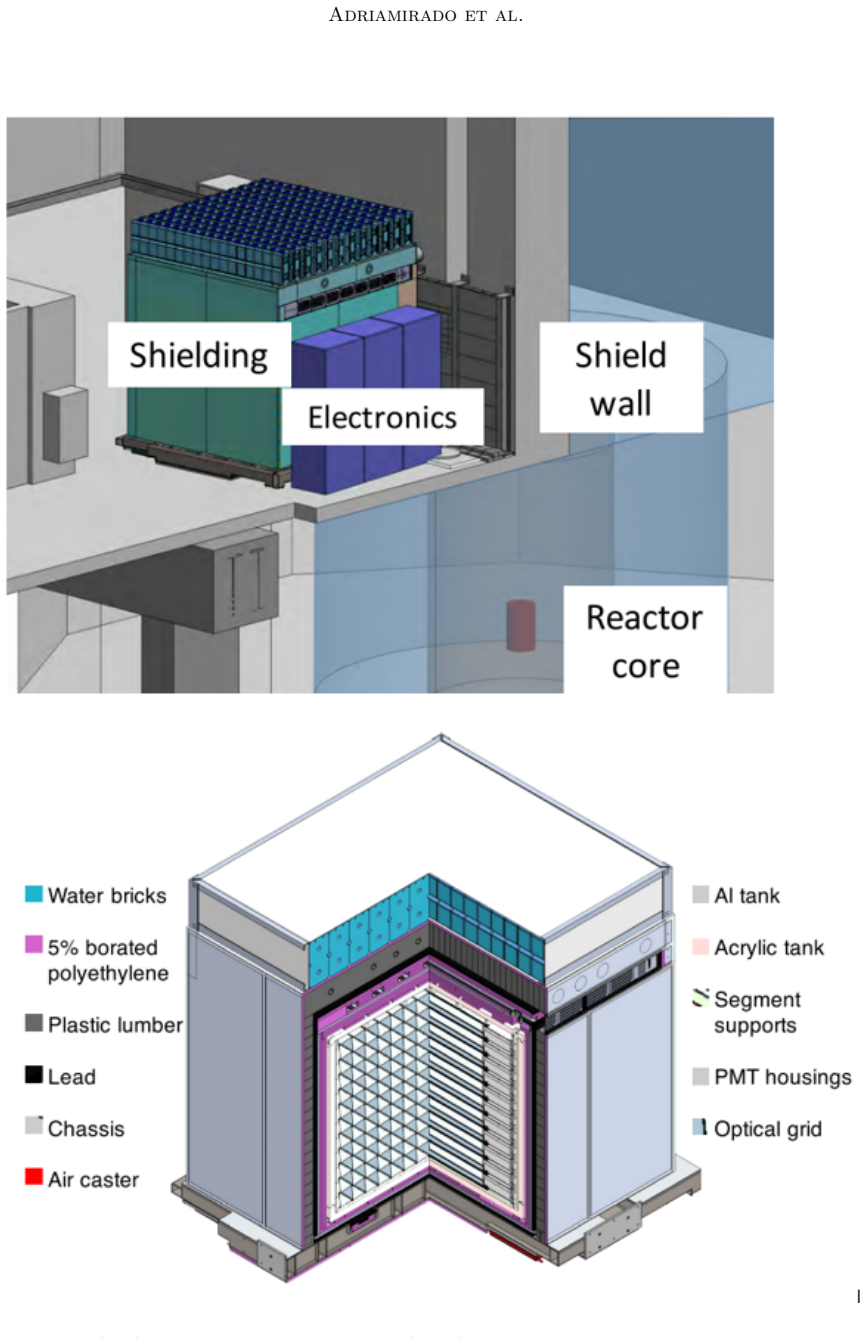

We propose a genetic algorithm powered evolution (GAPE) method to create deep learning solutions for energy and position estimation for reactor antineutrino interactions in the Precision Reactor Oscillation and Spectrum Experiment (PROSPECT) at the highly enriched High Flux Isotope Reactor (HFIR) at Oak Ridge National Laboratory. We also apply GAPE to create classification models to distinguish signatures of inverse beta decay (IBD) interactions of reactor antineutrinos from common background types. The GAPE method can also be adopted for optimization of other types of problems that utilize machine learning (ML) models for particle physics applications. When applied in the PROSPECT context, we find that the models selected by GAPE can, in some cases, outperform the traditional models previously used for PROSPECT data analysis. In particular, when benchmarked against conventional PROSPECT neutrino identification pathways using the same underlying information, the classifier offers the promise of improving the signal-to-background ratio by nearly 2.8 times. Performance biases uncovered during initial IBD classifier validation were primarily caused by differences in time-dependent response between background and signal training datasets. Biases were effectively mitigated through a data-period-specific training regimen, offering a pathway towards realizing an unbiased IBD signal classifier for future reactor neutrino datasets.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces a Genetic Algorithm Powered Evolution (GAPE) method to evolve deep learning architectures and hyperparameters for energy/position estimation and inverse beta decay (IBD) classification in the PROSPECT reactor antineutrino experiment. It reports that GAPE-selected classifiers, when benchmarked against conventional PROSPECT neutrino identification pathways using the same information, can improve the signal-to-background ratio by nearly 2.8 times, with time-dependent response biases mitigated via period-specific retraining.

Significance. If the reported performance gain is shown to be robust, GAPE could serve as a practical tool for automating ML model optimization in particle physics data analysis, particularly for experiments facing time-varying detector responses. The work directly addresses a real experimental challenge in PROSPECT and provides a pathway for unbiased IBD classification. However, the current presentation does not include sufficient methodological detail or quantitative validation to allow independent assessment of the 2.8x claim.

Major comments (2)

- [Abstract] The central claim of a nearly 2.8 times improvement in signal-to-background ratio is stated without error bars, without specification of the exact conventional baseline algorithms or their performance metrics, and without details on training/validation/test splits or whether the genetic search was isolated from the final benchmark set. This information is required to determine whether the reported gain reflects genuine generalization or optimization leakage.

- [Abstract] The bias mitigation procedure is described only at a high level as 'data-period-specific training.' No information is given on how periods were defined or selected, whether period boundaries were chosen after inspecting performance, or whether the final S/B comparison used a completely held-out dataset independent of the evolutionary fitness evaluations. These details are load-bearing for the claim that the classifier is unbiased.
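To make the request for error bars concrete, the sketch below computes a signal-to-background improvement factor together with a first-order statistical uncertainty. It assumes four independent Poisson counts, which is a simplification: selections applied to the same dataset are correlated, so a real analysis would need a more careful treatment. The function name and the example counts are hypothetical and are not taken from the paper.

```python
import math

def improvement_factor(s_new, b_new, s_old, b_old):
    """Return (ratio, sigma) for the S/B improvement of a new selection over an old one.

    ratio = (s_new / b_new) / (s_old / b_old)
    sigma uses first-order error propagation, assuming each count is an
    independent Poisson variable with variance equal to its value.
    """
    ratio = (s_new / b_new) / (s_old / b_old)
    relative_error = math.sqrt(1 / s_new + 1 / b_new + 1 / s_old + 1 / b_old)
    return ratio, ratio * relative_error

# Hypothetical counts, chosen only to illustrate the arithmetic.
ratio, sigma = improvement_factor(s_new=900, b_new=160, s_old=1000, b_old=500)
print(f"S/B improvement: {ratio:.2f} +/- {sigma:.2f}")
```

With counts of this size the uncertainty on the ratio is roughly ten percent, which shows why a quoted factor such as 2.8 is hard to interpret without the underlying event counts.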

Simulated Author's Rebuttal

We thank the referee for their careful and constructive review of our manuscript. The comments on the abstract correctly identify areas where additional clarity is needed to support the central claims. We have revised the abstract and expanded the methods section to include the requested details on error bars, baseline specifications, data splits, isolation of the genetic search, period definitions, and held-out validation. These changes allow independent assessment of the reported performance gains and the unbiased nature of the classifier. We believe the revisions address the concerns while preserving the paper's focus.

Point-by-point responses

- Referee: [Abstract] The central claim of a nearly 2.8 times improvement in signal-to-background ratio is stated without error bars, without specification of the exact conventional baseline algorithms or their performance metrics, and without details on training/validation/test splits or whether the genetic search was isolated from the final benchmark set. This information is required to determine whether the reported gain reflects genuine generalization or optimization leakage.

Authors: We agree that the original abstract omitted these supporting details. In the revised manuscript, the abstract has been updated to report the improvement with error bars, to name the exact conventional baselines (standard PROSPECT IBD selection cuts together with the ML classifiers from prior PROSPECT publications), to include their performance metrics, to state the training/validation/test split ratios, and to confirm that the genetic algorithm search was performed on a dedicated subset with the final benchmark executed on a completely held-out test set. This structure prevents optimization leakage and supports the claim of genuine generalization.

Revision: yes

- Referee: [Abstract] The bias mitigation procedure is described only at a high level as 'data-period-specific training.' No information is given on how periods were defined or selected, whether period boundaries were chosen after inspecting performance, or whether the final S/B comparison used a completely held-out dataset independent of the evolutionary fitness evaluations. These details are load-bearing for the claim that the classifier is unbiased.

Authors: The referee is correct that the abstract presented the bias mitigation at a high level. We have revised the abstract to specify that data periods were predefined from reactor operation logs and detector calibration records, with boundaries fixed a priori and independent of any performance inspection. Evolutionary fitness evaluations occurred within each period's training data, and the final signal-to-background comparison was performed on a test set withheld from all training, evolution, and period selection steps. A new methods subsection now provides the full period definitions and the held-out dataset protocol to substantiate the unbiased classification claim.

Revision: yes
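One way to enforce the kind of isolation discussed in this exchange is to assign each event to a split deterministically before any model is trained. The sketch below is a hypothetical protocol, not the one used in the paper: the period labels, event IDs, split fractions, and function names are all assumptions. It hashes the period and event ID so that assignments are reproducible and cannot be influenced by later performance inspection.

```python
import hashlib

def assign_split(period, event_id, val_frac=0.2, test_frac=0.2):
    """Map an event to 'train', 'validation', or 'test' using a stable hash."""
    digest = hashlib.sha256(f"{period}:{event_id}".encode()).digest()
    u = int.from_bytes(digest[:8], "big") / 2**64  # uniform-like value in [0, 1)
    if u < test_frac:
        return "test"
    if u < test_frac + val_frac:
        return "validation"
    return "train"

def split_events(events):
    """Partition (period, event_id) pairs into three disjoint lists."""
    splits = {"train": [], "validation": [], "test": []}
    for period, event_id in events:
        splits[assign_split(period, event_id)].append((period, event_id))
    return splits

# Hypothetical periods and event IDs.
events = [(period, i) for period in ("P1", "P2") for i in range(500)]
splits = split_events(events)
```

Under this scheme the genetic search would see only the `train` and `validation` lists, with fitness computed on `validation`, and the `test` list would be opened once for the final benchmark.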

Circularity Check

No significant circularity detected


The paper applies a genetic algorithm (GAPE) to evolve deep learning architectures and hyperparameters for IBD classification and energy/position estimation in PROSPECT data. The central claim of a ~2.8x signal-to-background improvement is presented as an empirical benchmark result against conventional PROSPECT pathways using the same underlying information, after applying period-specific retraining to address time-dependent biases. No equations, derivations, or self-referential definitions appear in the abstract or described content that would reduce the reported performance gain to a fitted parameter or input by construction. No load-bearing self-citations, uniqueness theorems, or ansatzes imported from prior author work are invoked to justify the result. The evaluation is framed as a direct comparison on the dataset after bias mitigation, which does not constitute circularity under the specified patterns.


