
Relaxing in Warped Spaces: Generalized Hierarchical and Modular Dynamical Neural Network

Pith reviewed 2026-05-10 15:55 UTC · model grok-4.3

The pith

A hierarchical neural network lets state variables relax in individually warped spaces, creating mappings between subspaces and a certainty/uncertainty relation between input and output trajectories.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Minimizing the energy function yields subspaces whose dynamics are governed by layered internetworks. Periodic inputs of differing frequencies then induce mapping relations across subspaces. In association mode, each internal state variable relaxes inside a warped space tailored to its own requirements; under constrained slow inputs, the output trajectories behave as if inverse mappings were applied hierarchically from the outer to the inner layers. Together, these results imply a certainty/uncertainty relation between input and output trajectories.

What carries the argument

Hierarchical subspaces linked by forward-backward internetworks, obtained directly from energy minimization over neurons of two distinct time constants, that enable both mapping formation and warped relaxation.
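As a rough illustration of how two time constants separate the dynamics under a shared energy, the sketch below Euler-integrates a gradient flow of the form tau_i * dx_i/dt = -dE/dx_i. The quadratic energy, the function name `relax`, and every parameter value are assumptions chosen for illustration; the abstract does not state the paper's energy function or its equations.

```python
import numpy as np

def relax(tau_fast=0.1, tau_slow=10.0, dt=0.01, steps=2000):
    """Euler-integrate tau_i * dx_i/dt = -dE/dx_i for a toy energy.

    Toy energy (an assumption, not the paper's):
        E(x) = 0.5 * sum_i (x_i - 1)^2
    Component 0 plays the role of a fast neuron, component 1 a slow one.
    """
    x = np.zeros(2)
    tau = np.array([tau_fast, tau_slow])
    target = np.ones(2)
    history = np.empty((steps, 2))
    for k in range(steps):
        grad = x - target            # dE/dx for the toy quadratic energy
        x = x + dt * (-grad / tau)   # each variable descends at its own rate
        history[k] = x
    return history

history = relax()
print("fast variable after t=20:", history[-1, 0])
print("slow variable after t=20:", history[-1, 1])
```

Both variables descend the same energy, yet the fast one has effectively settled while the slow one is still in transit. That is the kind of timescale separation the summary attributes to the two neuron types.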

If this is right

- Periodic inputs of different frequencies produce two-dimensional mapping relationships between adjacent subspaces.

- In unconstrained association mode each state variable relaxes inside its own warped space.

- In constrained association mode a slow periodic input produces a warped output trajectory equivalent to an inverse mapping applied across layers.

- The overall dynamics imply a certainty/uncertainty relation linking input-trajectory precision to output-trajectory warping.
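The constrained-mode claim, that a slow input yields an output "as if" an inverse mapping were applied, can be pictured with a one-variable toy. In the sketch below a state `x` relaxes on the energy E = 0.5 * (f(x) - y(t))^2, where `f` is a fixed, strictly increasing map standing in for a trained forward subnet and `y(t)` is a slow sinusoid. The map `tanh(2x)`, the time constant, and the input period are all hypothetical choices; this is not the paper's network.

```python
import numpy as np

def forward(x):
    # Hypothetical forward map (stand-in for a trained forward subnet).
    return np.tanh(2.0 * x)

def forward_grad(x):
    # Derivative of tanh(2x) with respect to x.
    return 2.0 / np.cosh(2.0 * x) ** 2

def track_inverse(period=200.0, tau=0.05, dt=0.01, amplitude=0.8):
    """Relax x on E = 0.5 * (forward(x) - y(t))^2 while y(t) varies slowly."""
    steps = int(period / dt)                 # one full input cycle
    t = np.arange(steps) * dt
    y = amplitude * np.sin(2.0 * np.pi * t / period)
    x = 0.0
    xs = np.empty(steps)
    for k in range(steps):
        err = forward(x) - y[k]
        # Gradient flow: tau * dx/dt = -dE/dx = -err * forward'(x)
        x -= (dt / tau) * err * forward_grad(x)
        xs[k] = x
    return t, y, xs

t, y, xs = track_inverse()
inverse = np.arctanh(y) / 2.0                # exact inverse of tanh(2x)
print("max deviation from inverse map:", np.max(np.abs(xs - inverse)))
```

Because the input changes much more slowly than the state relaxes, `xs` stays close to the inverse of `forward` applied to `y`. The output is therefore a warped (non-sinusoidal) version of the input, even though no inverse network is ever built.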

Where Pith is reading between the lines

- The same energy-minimization principle could be applied to design recurrent networks that automatically separate timescales without explicit multi-rate scheduling.

- The warped spaces may correspond to effective coordinate transformations that simplify optimization or inference tasks in hierarchical systems.

- Biological circuits with neurons of widely separated time constants might exploit analogous warped relaxation to achieve robust multi-scale computation.

- Testing the model with non-periodic inputs would reveal whether the certainty/uncertainty relation persists beyond the Lissajous regime.

Load-bearing premise

Minimizing the energy function alone, without added constraints or parameter tuning, is sufficient to generate the subspaces, internetworks, and warped relaxation dynamics described.

What would settle it

Simulate the network with two-frequency periodic inputs and check whether the resulting inter-subspace trajectories match the predicted Lissajous-like mappings without manual adjustment of connection strengths.
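A reference curve for such a check can be generated without any network at all. The sketch below builds the classic Lissajous construction from two cosines with an integer frequency ratio and zero phase offset, in which case the traced curve is a single-valued map: y = 2x^2 - 1 for a 1:2 ratio and y = 4x^3 - 3x for 1:3 (the Chebyshev polynomials). The function name and sampling choices are assumptions; this is only the target shape a simulation might be compared against, not the paper's simulation.

```python
import numpy as np

def lissajous_map(ratio=2, base_freq=1.0, n=1000):
    """Sample x = cos(w t), y = cos(ratio * w t) over one period of x."""
    t = np.linspace(0.0, 1.0 / base_freq, n, endpoint=False)
    theta = 2.0 * np.pi * base_freq * t
    x = np.cos(theta)            # input applied to one subspace
    y = np.cos(ratio * theta)    # input applied to the adjacent subspace
    return x, y

x, y = lissajous_map(ratio=2)
# With a 1:2 frequency ratio and zero phase offset, the pair (x, y)
# traces the parabola y = 2*x**2 - 1.
print(np.allclose(y, 2 * x**2 - 1))
```

Changing the frequency ratio or adding a phase offset changes the curve, which is how a set of periodic inputs could in principle specify different two-dimensional mappings.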

Figures

read the original abstract

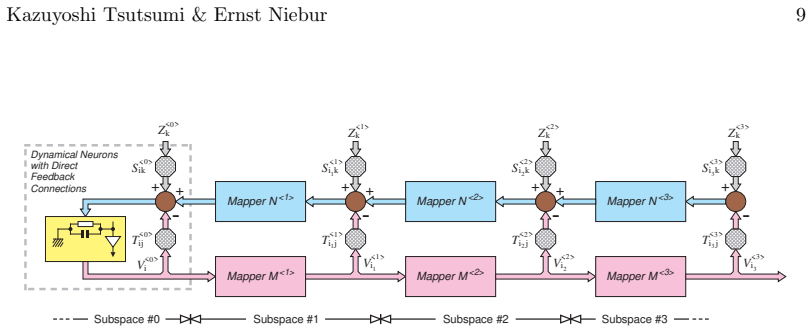

We propose a dynamical neural network model with a hierarchical and modular structure. The network architecture can be derived by minimizing an energy function that is originally designed based on two kinds of neurons with quite different time constants. It has multiple subspaces that are spanned by neural parameters employed in the energy function, and adjacent subspaces are related to each other with a layered internetwork. Each internetwork further consists of a pair of a forward subnet and a backward one, and signals flowing through these subnets determine total dynamics of the network. The model can operate in either a learning or an association mode. In the learning mode, when periodic signals equivalent to repetitive neuronal bursting are suitably applied to input ports in all subspaces, mapping relationships corresponding to those input signals are eventually formed in internetworks between subspaces. Various two-dimensional mapping relationships between subspaces can be shaped by employing an appropriate set of periodic input signals with different frequencies based on the same mechanism as a Lissajous curve. The model in the association mode provides an overall framework such that state variables inside the network individually relax in warped spaces, each of which has been designed as favorable for a (or some) state variable(s). The association mode is further classified into two modes: unconstrained and constrained. In the latter mode, for instance, when a sufficiently slow periodic trajectory is set as an input, a warped output trajectory appears in each subspace as if imaginary layered networks with the inverse mapping relationships to existing forward subnets were located hierarchically from outside to inside. These results suggest that a certainty/uncertainty relation exists between an input trajectory and an output trajectory.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a dynamical neural network with hierarchical modular structure derived by minimizing an energy function based on two neuron types with distinct time constants. The architecture consists of subspaces spanned by neural parameters, connected by layered forward/backward internetworks. In learning mode, periodic inputs (e.g., bursting-like signals with different frequencies) form mapping relationships between subspaces via a Lissajous-curve mechanism. In association mode (unconstrained or constrained), state variables relax individually in 'warped spaces' favorable to specific variables; constrained mode with slow periodic inputs produces warped output trajectories implying inverse mappings, suggesting a certainty/uncertainty relation between input and output trajectories.

Significance. If the energy minimization, derivations, and dynamical behaviors hold, the work could provide a principled framework for constructing hierarchical modular networks from first principles in neural modeling, linking energy-based architectures to emergent mapping formations and trajectory-dependent relaxations. This might offer insights into biological associative processes and dynamical hierarchies, with potential for explaining how distinct time constants lead to modular internetworks without ad-hoc wiring.

major comments (3)

- [Abstract] Abstract: The central claim that 'the network architecture can be derived by minimizing an energy function' is stated without providing the explicit functional form of the energy function, the two neuron types' definitions, the minimization procedure, or any resulting dynamical equations. This is load-bearing for all subsequent claims about subspaces, internetworks, and warped relaxations.

- [Abstract] Abstract (learning mode description): The assertion that mappings 'are eventually formed' and 'can be shaped by employing an appropriate set of periodic input signals... based on the same mechanism as a Lissajous curve' lacks any derivation, stability analysis, or simulation evidence showing how the subnet dynamics produce these mappings rather than presupposing them.

- [Abstract] Abstract (association mode): The description of state variables 'individually relax[ing] in warped spaces' and the 'certainty/uncertainty relation' between trajectories is presented without dynamical equations for the forward/backward subnets, any analysis of relaxation trajectories, or demonstration that these behaviors emerge from energy minimization rather than being built into the subspace definitions.

minor comments (2)

- [Abstract] The terms 'warped spaces' and 'subspaces spanned by neural parameters' are introduced without precise mathematical definitions or notation clarifying their relation to the energy function parameters.

- The abstract does not mention parameter values, simulation methods, or reproducibility details for the claimed behaviors; these would aid verification even if derivations are added.

Simulated Author's Rebuttal

We thank the referee for their thorough review and insightful comments on our manuscript. We address each major comment below, focusing on the abstract as noted. The full derivations, equations, and analyses are provided in the main text (Sections 2–5), but we agree the abstract can be strengthened with brief references to these elements for better clarity.


- Referee: [Abstract] Abstract: The central claim that 'the network architecture can be derived by minimizing an energy function' is stated without providing the explicit functional form of the energy function, the two neuron types' definitions, the minimization procedure, or any resulting dynamical equations. This is load-bearing for all subsequent claims about subspaces, internetworks, and warped relaxations.

  Authors: We agree that the abstract's brevity omits the explicit energy function and derivation steps. These are detailed in Section 2, where the energy function is defined using two neuron types with distinct time constants, and minimization yields the coupled dynamical equations that generate the hierarchical subspaces and internetworks. We will revise the abstract to concisely reference the energy function form and direct readers to Section 2 for the full minimization procedure and resulting equations.

  Revision: yes

- Referee: [Abstract] Abstract (learning mode description): The assertion that mappings 'are eventually formed' and 'can be shaped by employing an appropriate set of periodic input signals... based on the same mechanism as a Lissajous curve' lacks any derivation, stability analysis, or simulation evidence showing how the subnet dynamics produce these mappings rather than presupposing them.

  Authors: The mapping formation mechanism is derived from the forward/backward subnet dynamics under periodic inputs, as shown in Sections 3 and 4, with stability following from the energy minimization framework. Simulations (Figures 3–5) illustrate the Lissajous-curve-like mappings for varying frequencies. We will revise the abstract to note that these mappings emerge from the subnet dynamics and reference the supporting analyses and simulations in the main text.

  Revision: yes

- Referee: [Abstract] Abstract (association mode): The description of state variables 'individually relax[ing] in warped spaces' and the 'certainty/uncertainty relation' between trajectories is presented without dynamical equations for the forward/backward subnets, any analysis of relaxation trajectories, or demonstration that these behaviors emerge from energy minimization rather than being built into the subspace definitions.

  Authors: The warped-space relaxations and certainty/uncertainty relation are analyzed in Section 5 using the dynamical equations of the subnets, which arise directly from the original energy minimization. Trajectory analyses for constrained and unconstrained modes demonstrate the emergent inverse mappings and warped outputs. We will revise the abstract to indicate that these behaviors are derived from the subnet dynamics and refer to the detailed analysis in the main text.

  Revision: yes

Circularity Check

Because the warped spaces are pre-designed as favorable for the state variables, the relaxation claim is self-definitional.

specific steps

- Self-definitional [Abstract (association mode paragraph)]

  "The model in the association mode provides an overall framework such that state variables inside the network individually relax in warped spaces, each of which has been designed as favorable for a (or some) state variable(s)."

  The spaces are stated to have been 'designed as favorable' for the variables; therefore the assertion that the variables relax in those spaces is true by the design definition itself, not as an independent consequence of energy minimization or internetwork dynamics.

full rationale

The paper asserts that the hierarchical modular architecture 'can be derived by minimizing an energy function' on two neuron populations with distinct time constants, after which state variables 'individually relax in warped spaces' in association mode. However, the text explicitly states that each warped space 'has been designed as favorable for a (or some) state variable(s)', so the claimed relaxation behavior follows directly from that design choice rather than being shown to emerge from the minimization or subnet dynamics. No functional form of the energy, minimization procedure, or dynamical equations are supplied to demonstrate independent derivation. This constitutes self-definitional circularity on the central association-mode claim. The Lissajous mapping is referenced as a known mechanism but is not load-bearing for the circularity. The derivation chain therefore reduces partially to its own definitional inputs.

Axiom & Free-Parameter Ledger

free parameters (2)

- time constants of the two neuron types

- frequencies of periodic input signals

axioms (2)

- domain assumption: Minimizing the energy function produces a network with multiple subspaces connected by layered internetworks consisting of forward and backward subnets.

- domain assumption: Periodic signals equivalent to repetitive neuronal bursting applied to input ports form mapping relationships in the internetworks.

invented entities (2)

- warped spaces: no independent evidence

- subspaces spanned by neural parameters: no independent evidence

