Neuronal electricality founded in murburn-thermodynamic principles: 2. Comparisons, evidenced explanations, and predictions

Murburn model predicts conduction velocity and waveforms from oxygen, redox balance, and transport rates, extending to cardiac and photorece

Abstract

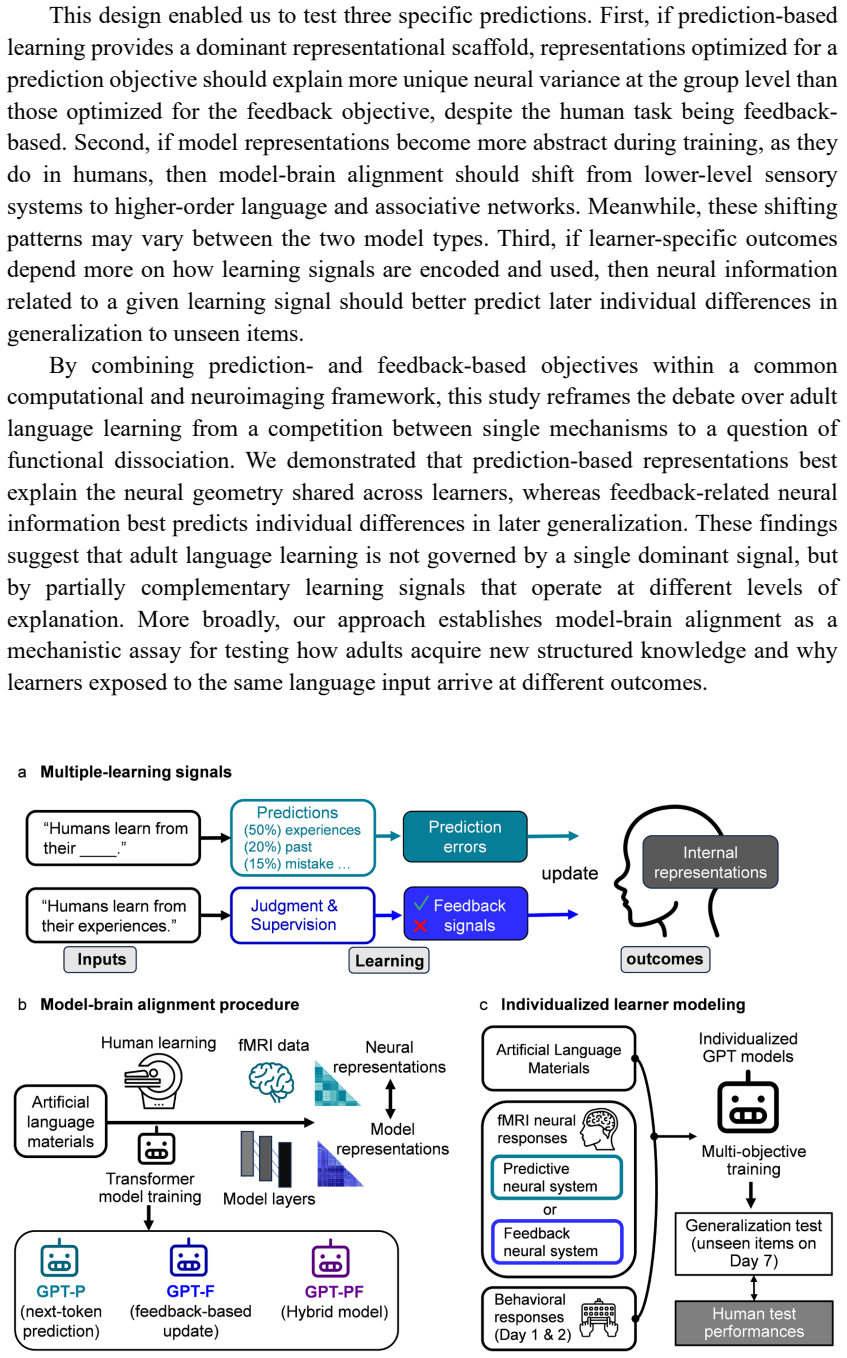

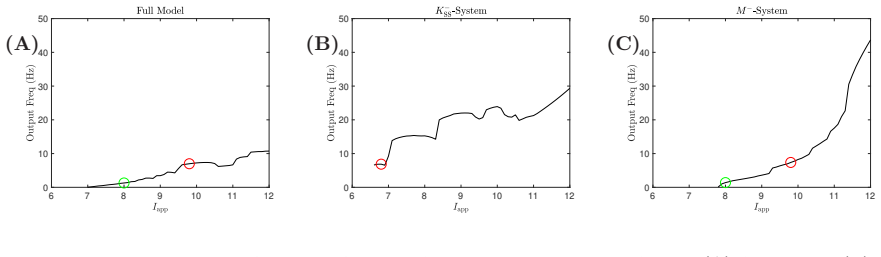

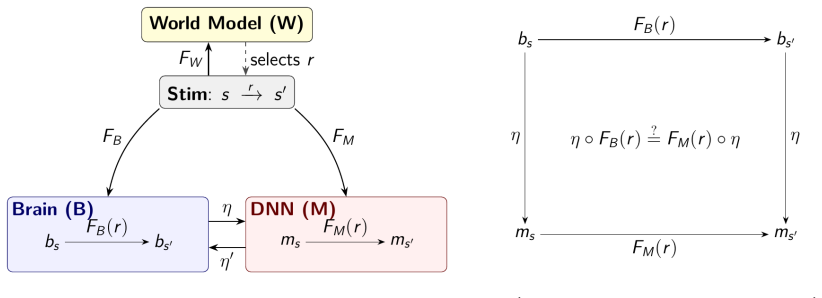

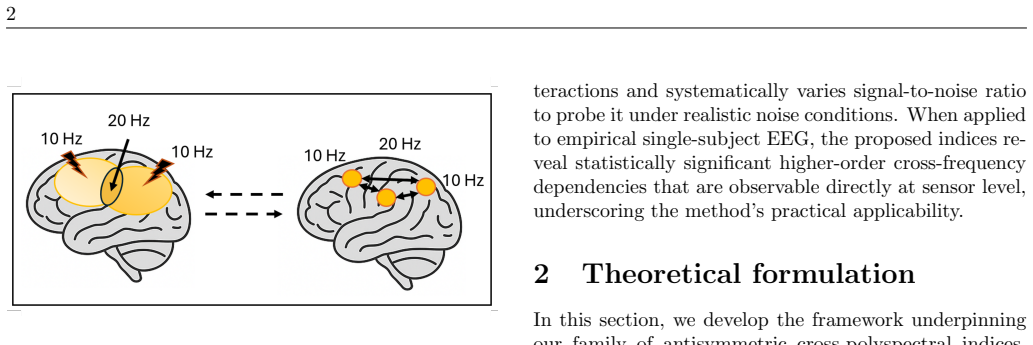

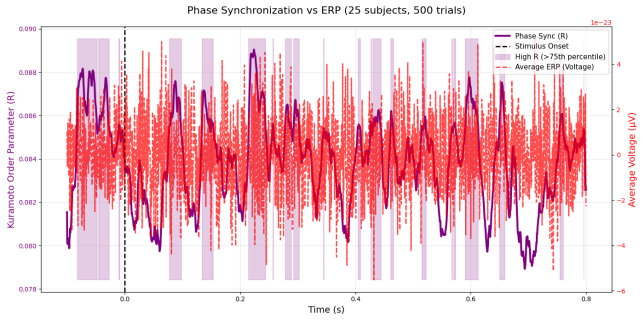

The analyses presented herein demonstrate that neuronal electrical activity can be consistently interpreted as a manifestation of murburn redox-mediated electronic dynamics rather than as a process fundamentally driven by transmembrane ionic flux. By integrating comparisons with established models, quantitative predictions, and diverse experimental observations, the murburn framework emerges as a unified and chemically grounded description of excitability. A key strength of the model lies in its predictive structure. Unlike phenomenological frameworks that rely on parameter fitting, the murburn formulation links measurable electrophysiological outputs, such as conduction velocity, waveform morphology, and threshold behavior, to physically interpretable variables, including redox kinetics, transport efficiency, and environmental conditions. This enables direct experimental validation through perturbations in oxygen availability, redox balance, solvent properties, ionic strength, and external fields. Importantly, the framework extends beyond neurons to a broader class of excitable systems, including cardiac tissue, photoreceptors, and artificial redox-active materials, suggesting that excitability is a general physicochemical phenomenon rooted in reaction-transport dynamics. While the present work establishes the mid-scale dynamics of neuronal electricality, further developments are required to connect quantum-level electron transfer processes with macroscopic electrophysiological signals such as EEG and EMG. These extensions, along with targeted experimental tests, will determine the ultimate scope and applicability of the murburn paradigm.
