
CapBench: A Multi-PDK Dataset for Machine-Learning-Based Post-Layout Capacitance Extraction

Pith reviewed 2026-05-10 16:13 UTC · model grok-4.3

The pith

CapBench releases a multi-PDK dataset of 61,855 layout windows with high-accuracy capacitance labels to train and benchmark machine learning extractors.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We present CapBench, a fully reproducible, multi-PDK dataset for capacitance extraction. The dataset is derived from open-source designs placed and routed in 14 independent OpenROAD runs across ASAP7, NanGate45, and Sky130HD. From these layouts we extract 61,855 3D windows across three size tiers. High-fidelity labels generated by RWCap match the industry-standard Raphael solver to 0.64 percent mean absolute error on total capacitance. Ten machine-learning architectures evaluated on the pre-processed windows show CNNs at 1.75 percent error and GNNs offering up to 41.4 times speedup at 10.2 percent error.
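The 0.64 percent and 1.75 percent figures read as means of per-window relative errors on total capacitance. The source does not spell out the formula, so the sketch below is one plausible reading rather than the paper's definition. The function name and sample values are hypothetical.

```python
from typing import Sequence


def mean_abs_rel_error_percent(predicted: Sequence[float],
                               reference: Sequence[float]) -> float:
    """Mean of |predicted - reference| / |reference|, expressed in percent."""
    if len(predicted) != len(reference) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for p, r in zip(predicted, reference):
        if r == 0.0:
            raise ValueError("reference capacitance must be non-zero")
        total += abs(p - r) / abs(r)
    return 100.0 * total / len(reference)


# Made-up total capacitances in fF, for illustration only.
reference_ff = [1.00, 2.00, 4.00]  # stand-in for the reference solver
candidate_ff = [1.01, 1.98, 4.00]  # stand-in for the solver or model under test
print(f"{mean_abs_rel_error_percent(candidate_ff, reference_ff):.2f}%")
```

The same function would apply to either comparison: label solver against reference solver, or model prediction against label.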

What carries the argument

The CapBench dataset of 3D windows extracted from placed-and-routed layouts, each converted into density maps, graph representations, and point clouds and labeled with total capacitance from the RWCap random-walk solver.
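To make the density-map representation concrete, here is a minimal sketch that rasterizes one window's metal rectangles into per-layer coverage grids. The rectangle tuple layout, the function name, and the grid resolution are assumptions for illustration; CapBench's actual file format and pre-processing may differ.

```python
from typing import List, Tuple

# Hypothetical rectangle format: (layer_index, x0, y0, x1, y1), window-local microns.
Rect = Tuple[int, float, float, float, float]


def density_maps(rects: List[Rect], window: float,
                 num_layers: int, grid: int) -> List[List[List[float]]]:
    """Return one grid x grid map per layer holding the covered-area fraction per cell.

    Assumes rectangles on the same layer do not overlap; otherwise a cell
    could exceed 1.0.
    """
    maps = [[[0.0] * grid for _ in range(grid)] for _ in range(num_layers)]
    cell = window / grid
    cell_area = cell * cell
    for layer, x0, y0, x1, y1 in rects:
        for row in range(grid):
            # Overlap between the rectangle and this row of cells along y.
            oy = min(y1, (row + 1) * cell) - max(y0, row * cell)
            if oy <= 0:
                continue
            for col in range(grid):
                # Overlap between the rectangle and this cell along x.
                ox = min(x1, (col + 1) * cell) - max(x0, col * cell)
                if ox > 0:
                    maps[layer][row][col] += ox * oy / cell_area
    return maps


# Two made-up wires in a 4 um window, rasterized on a 4 x 4 grid.
example = density_maps([(0, 0.0, 0.0, 2.0, 1.0), (1, 0.5, 0.5, 1.5, 1.5)],
                       window=4.0, num_layers=2, grid=4)
```

A stack of such maps is the kind of image-like tensor a CNN baseline would consume. Graph and point-cloud encodings would instead keep the individual conductors as nodes or points.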

If this is right

- Researchers can train and compare machine-learning models for capacitance extraction on a single, standardized, multi-technology collection of labeled windows.

- A measurable accuracy-speed trade-off exists between convolutional and graph architectures on this task.

- The three size tiers and three technology nodes support studies of transfer learning and scaling behavior.

- The 0.64 percent validation error of the RWCap labels against Raphael supplies a reliable target for machine-learning accuracy.
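A transfer-learning study across the three technology nodes could start from a leave-one-PDK-out split, sketched below. The window identifiers and the `(window_id, pdk)` pairing are hypothetical; the source does not describe how CapBench indexes its windows, and the paper's baselines are not stated to use this split.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def leave_one_pdk_out(windows: List[Tuple[str, str]]
                      ) -> List[Tuple[str, List[str], List[str]]]:
    """Return (held_out_pdk, train_ids, test_ids) for each PDK in turn."""
    by_pdk: Dict[str, List[str]] = defaultdict(list)
    for window_id, pdk in windows:
        by_pdk[pdk].append(window_id)

    splits = []
    for held_out in sorted(by_pdk):
        # Train on every window whose PDK differs from the held-out one.
        train = [w for pdk in sorted(by_pdk) if pdk != held_out
                 for w in by_pdk[pdk]]
        splits.append((held_out, train, list(by_pdk[held_out])))
    return splits


# Made-up index: four windows spread over the three PDKs named in the paper.
index = [("w0", "asap7"), ("w1", "nangate45"), ("w2", "sky130hd"), ("w3", "asap7")]
for held_out, train_ids, test_ids in leave_one_pdk_out(index):
    print(held_out, train_ids, test_ids)
```

Grouping by size tier instead of PDK would give the analogous split for a scaling study.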

Where Pith is reading between the lines

- Teams could embed the faster GNN predictors inside early-stage design loops to cut the number of full commercial extractions required.

- The dataset's open format invites extension to additional technology nodes or larger designs once more open-source layouts become available.

- Integration of these models into iterative placement-and-routing loops would let extraction feedback influence layout decisions at lower cost.

Load-bearing premise

The open-source designs and chosen 3D windows are representative of the capacitance extraction problems that arise in real commercial post-layout flows across technology nodes.

What would settle it

Models trained on CapBench, applied to a commercial proprietary design, produce total-capacitance predictions whose error clearly exceeds the 1.75 percent CNN baseline when compared against Raphael, the reference solver used to validate the dataset's RWCap labels.

Original abstract

We present CapBench, a fully reproducible, multi-PDK dataset for capacitance extraction. The dataset is derived from open-source designs, including single-core CPUs, systems-on-chip, and media accelerators. All designs are fully placed and routed using 14 independent OpenROAD flow runs spanning three technology nodes: ASAP7, NanGate45, and Sky130HD. From these layouts, we extract 61,855 3D windows across three size tiers to enable transfer learning and scalability studies. High-fidelity capacitance labels are generated using RWCap, a state-of-the-art random-walk solver, and validated against the industry-standard Raphael, achieving a mean absolute error of 0.64% for total capacitance. Each window is pre-processed into density maps, graph representations, and point clouds. We evaluate 10 machine learning architectures that illustrate dataset usage and serve as baselines, including convolutional neural networks (CNNs), point cloud transformers, and graph neural networks (GNNs). CNNs demonstrate the lowest errors (1.75%), while GNNs are up to 41.4x faster but exhibit larger errors (10.2%), illustrating a clear accuracy-speed trade-off. Code and dataset are available at https://github.com/THU-numbda/CapBench.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents CapBench, a multi-PDK dataset for ML-based post-layout capacitance extraction. It derives 61,855 3D windows from open-source designs (CPUs, SoCs, accelerators) placed and routed via 14 OpenROAD runs on ASAP7, NanGate45, and Sky130HD. Labels are generated with RWCap and validated at 0.64% MAE against Raphael. Windows are pre-processed into density maps, graphs, and point clouds. Ten ML baselines are evaluated, with CNNs at 1.75% error and GNNs at 10.2% error but up to 41.4x faster. Code and data are released publicly.

Significance. If the reported validation and baselines hold, CapBench supplies a much-needed public, reproducible, multi-PDK resource for capacitance extraction research. The explicit validation of RWCap labels against Raphael, the multi-format pre-processing, the open code release, and the accuracy-speed trade-off results constitute concrete strengths that lower the barrier for ML work in this EDA sub-area.

minor comments (3)

- [Abstract and §3] The statement that the dataset enables 'transfer learning and scalability studies' is not yet supported by any cross-PDK or cross-tier experiments in the reported baselines; adding at least one such experiment, or clarifying that this is future work, would strengthen the claim.

- [§4.2] The 0.64% MAE validation is reported only for total capacitance; per-net or coupling capacitance errors should be shown separately, as these are often the quantities of interest in extraction flows.

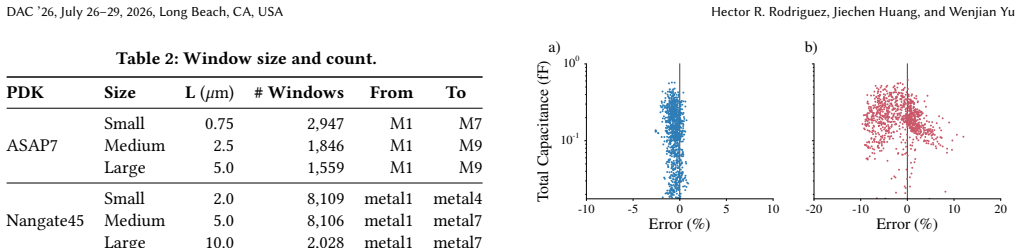

- [Table 2 and §5] The GNN speedup (41.4x) is given relative to an unspecified baseline; stating the exact reference runtime (e.g., RWCap on the same hardware) would make the comparison unambiguous.
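The comment on §4.2 can be illustrated with a small sketch showing how a low total-capacitance error can hide larger coupling errors. It assumes the common convention that a net's total capacitance is the sum of its couplings to every other conductor, ground included. The dictionaries, names, and values are made up for illustration and are not taken from the paper.

```python
from typing import Dict, Tuple


def relative_error_percent(predicted: float, reference: float) -> float:
    """Absolute relative error in percent."""
    return 100.0 * abs(predicted - reference) / abs(reference)


def total_and_coupling_errors(pred: Dict[str, float], ref: Dict[str, float]
                              ) -> Tuple[float, Dict[str, float]]:
    """Compare one net's couplings (neighbour name -> fF) against a reference.

    Total capacitance is taken as the sum of all entries, an assumed convention.
    """
    total_err = relative_error_percent(sum(pred.values()), sum(ref.values()))
    coupling_errs = {name: relative_error_percent(pred[name], ref[name])
                     for name in ref}
    return total_err, coupling_errs


# Made-up example: two coupling errors of opposite sign cancel in the total.
reference = {"gnd": 2.0, "net_a": 1.0, "net_b": 1.0}
predicted = {"gnd": 2.0, "net_a": 1.1, "net_b": 0.9}
total_err, coupling_errs = total_and_coupling_errors(predicted, reference)
print(f"total: {total_err:.2f}%")
for name, err in coupling_errs.items():
    print(f"{name}: {err:.2f}%")
```

In this toy case the total is nearly exact while both signal-net couplings are off by about 10 percent, which is why the referee asks for the two quantities to be reported separately.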

Simulated Author's Rebuttal

We thank the referee for their positive summary and recommendation of minor revision. We appreciate the recognition of CapBench's contributions, including the multi-PDK coverage from open-source designs, the 0.64% MAE validation of RWCap labels against Raphael, the multi-format preprocessing, and the public release of code and data.

Circularity Check

No significant circularity: dataset release and empirical benchmarking are self-contained

full rationale

The paper's central contribution is the release of CapBench (61,855 windows from OpenROAD-routed designs on three PDKs, with RWCap labels validated at 0.64% MAE vs. Raphael) plus standard ML baselines (CNNs at 1.75% error, GNNs at 10.2% error with 41.4x speedup). No derivation chain exists; there are no equations, normalizations, or predictions that reduce by construction to fitted parameters or self-citations inside the work. Data provenance, label fidelity, preprocessing, and reported accuracy/speed results are directly supported by the described pipeline and public code release. Self-citations (if any) are not load-bearing for the claims.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the RWCap random-walk solver produces sufficiently accurate capacitance labels for the chosen windows.

