PoisonCap gives CHERI strict use-after-free protection at zero overhead

PoisonCap: Efficient Hierarchical Temporal Safety for CHERI

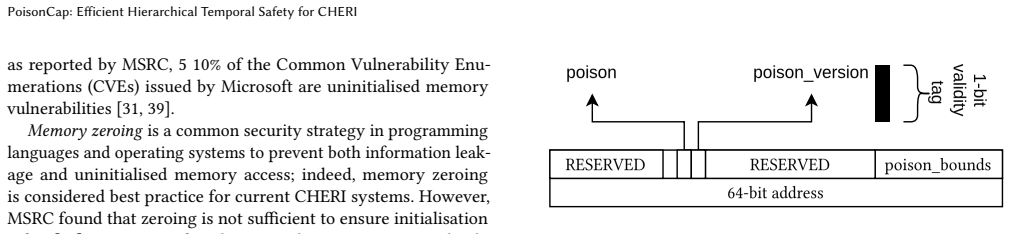

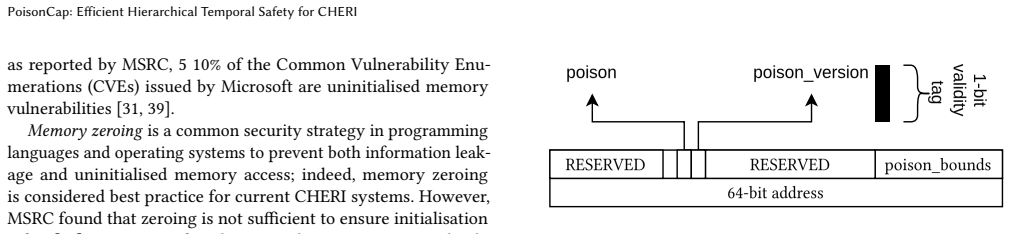

Poison capability format replaces shadow bitmaps, auto-zeros on reuse, and supports hierarchical delegation.

Hardware Architecture

Covers systems organization and hardware architecture. Roughly includes material in ACM Subject Classes C.0, C.1, and C.5.

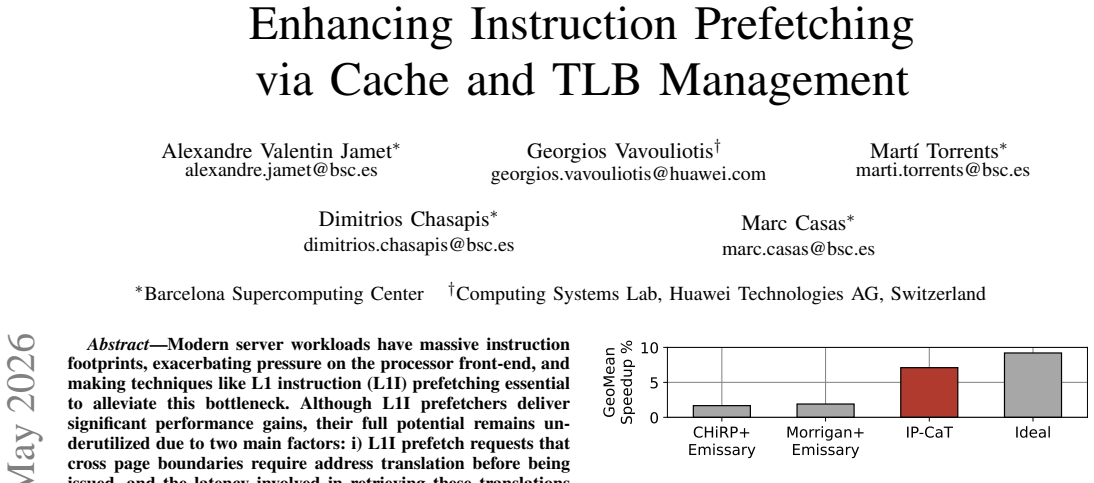

Enhancing Instruction Prefetching via Cache and TLB Management

Small translation buffer and trimodal replacement policy cut delays and evict unused code lines in large-footprint server workloads.

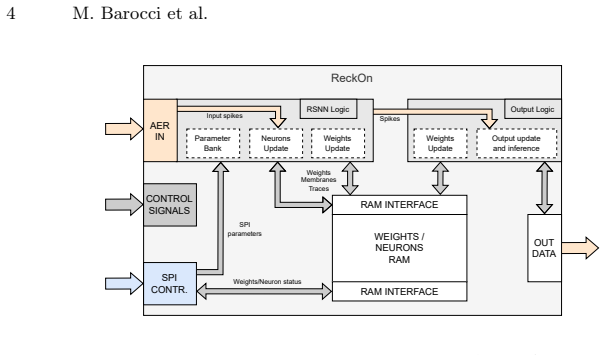

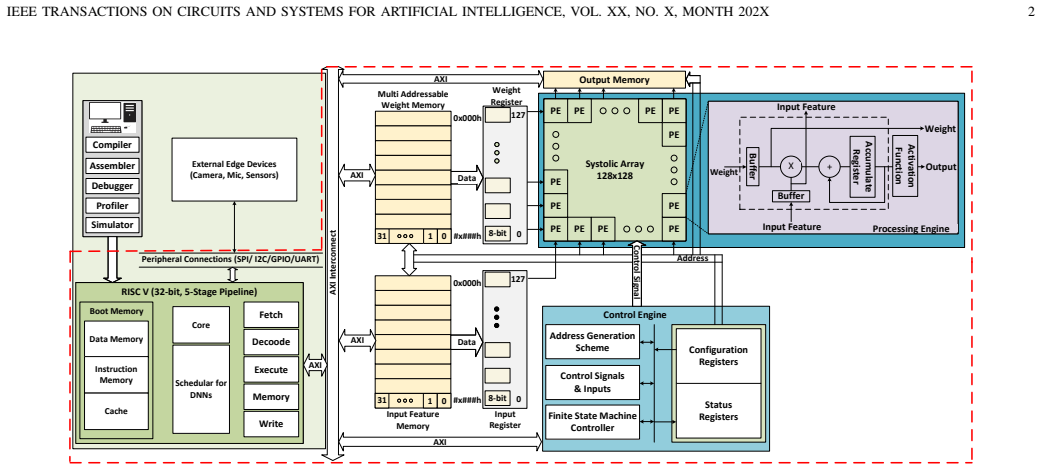

Heterogeneous integration of open-source ReckOn accelerator with RISC-V and ARM enables validation and online learning on Braille data.

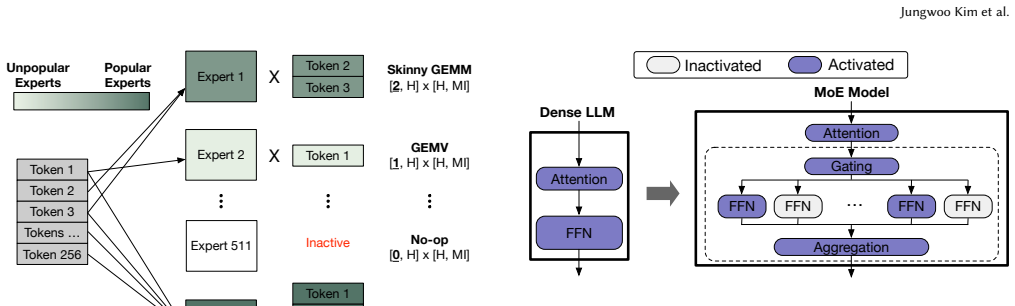

Sieve: Dynamic Expert-Aware PIM Acceleration for Evolving Mixture-of-Experts Models

Runtime token-distribution decisions reduce imbalance for modern models that activate few experts unevenly.

TLX: Hardware-Native, Evolvable MIMW GPU Compiler for Large-scale Production Environments

Preserves blocked programming while enabling customization for async hardware and cluster features in production systems.

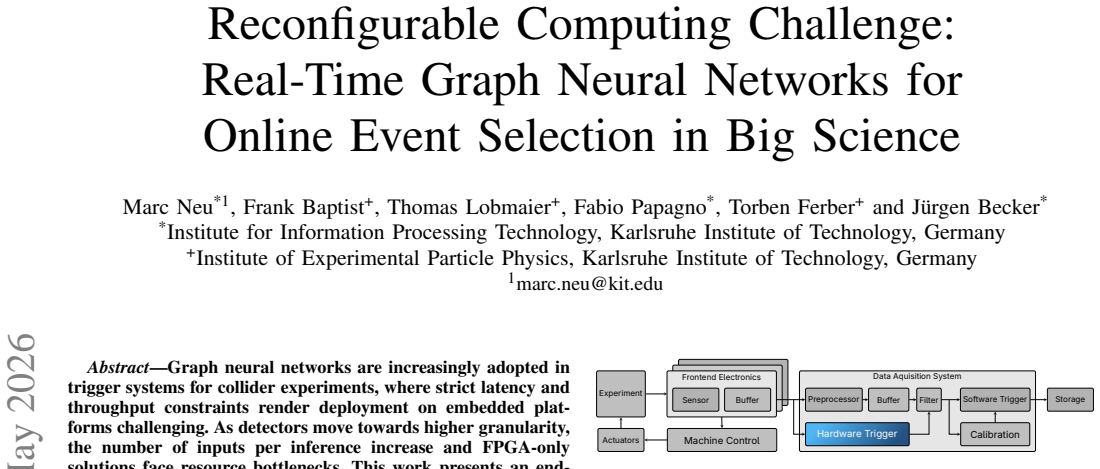

FPGA plus AI Engine tiles deliver 53 percent higher throughput than pure FPGA while using only 19 percent DSP resources for Belle II event s

ObfAx: Obfuscation and IP Piracy Detection in Approximate Circuits

A comparison method spots pirated approximate hardware even when attackers adjust function to match error rates and hardware costs.

Towards an End-To-End System for Real-Time Gesture Recognition from Surface Vibrations

An end-to-end pipeline with an 8722-parameter CNN reaches high accuracy, even on unseen users.

The framework converts parameter tuning to resource distribution and reuses past designs to reach 10-50 GHz targets without heavy fine-tuned

KV-RM: Regularizing KV-Cache Movement for Static-Graph LLM Serving

Variable-length requests no longer force over-reservation when transfers are coalesced beneath the fixed decoder.

Emerging 2D Materials for Beyond von Neumann Computing: A Perspective

Graphene transistors, memristors, and photonic structures must share one silicon wafer to close the memory-processor gap.

Outlier-free quantization and parallel speculative decoding yield 4.5-7x speedup over standard methods in a 55nm stacked design.

HyDRA: Deadline and Reuse-Aware Cacheability for Hardware Accelerators

LERN clustering predicts accelerator reuse at the shared cache, and HyDRA uses these predictions to decide which accesses bypass the cache, cutting deadline misses and raising throughput across varied…

A Reconfigurable Multiplier Architecture for Error-Resilient Applications in RISC-V Core

A mulscr register lets the processor switch accuracy levels at runtime, saving energy on error-tolerant tasks like matrix multiplication.

Single 32-bit Sub-Channel DDR5 DIMMs: Architecture, Performance Bounds, and Standardisation

The 32-bit × BL16 identity grounds the design and enables cheaper modules, yet roofline analysis shows clear penalties for bandwidth-bound tasks.
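
The 32-bit × BL16 identity is plain arithmetic: a 32-bit data path transferring for 16 beats moves 512 bits, one 64-byte cache line per burst. The sketch below checks that and applies the standard roofline bound, attainable = min(compute peak, bandwidth × arithmetic intensity), to show why halving the channel width hurts only bandwidth-bound work. The DDR5-4800 rate, the two-sub-channel comparison point, and the 200 GFLOP/s compute peak are illustrative assumptions, not figures from the paper.

```python
def burst_bytes(width_bits: int, burst_length: int) -> int:
    """Bytes moved by one burst on a data path of the given width."""
    return width_bits * burst_length // 8


def peak_bandwidth_gbs(transfers_per_s: float, width_bits: int) -> float:
    """Peak bandwidth in GB/s (10**9 bytes) for one channel."""
    return transfers_per_s * width_bits / 8 / 1e9


def roofline_gflops(peak_gflops: float, bw_gbs: float, intensity: float) -> float:
    """Attainable GFLOP/s = min(compute roof, bandwidth * FLOPs per byte)."""
    return min(peak_gflops, bw_gbs * intensity)


if __name__ == "__main__":
    print(burst_bytes(32, 16))                 # 64 bytes: one cache line
    one_sub = peak_bandwidth_gbs(4800e6, 32)   # assumed DDR5-4800, one 32-bit sub-channel
    two_sub = peak_bandwidth_gbs(4800e6, 64)   # assumed two 32-bit sub-channels
    for ai in (0.25, 100.0):                   # bandwidth-bound vs compute-bound kernel
        print(ai, roofline_gflops(200.0, one_sub, ai), roofline_gflops(200.0, two_sub, ai))
```

At low arithmetic intensity the attainable rate scales directly with channel bandwidth; at high intensity both configurations hit the same compute roof.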

MerkleTree pruning, boothing lookup, and adaptive posit format enable efficient inference in 28nm CMOS
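
For readers new to posits: an n-bit posit stores a sign, a variable-length regime (a run of identical bits giving k), up to es exponent bits e, and a fraction f, with value (−1)^s · 2^(k·2^es + e) · (1 + f). Negative posits are stored in two's complement. The decoder below is a minimal sketch of that standard encoding with es as a parameter (the 2022 Posit Standard fixes es = 2); it is not the paper's adaptive format, whose details the summary does not give.

```python
def decode_posit(bits: int, n: int = 8, es: int = 0) -> float:
    """Decode an n-bit posit with es exponent bits into a Python float."""
    mask = (1 << n) - 1
    bits &= mask
    if bits == 0:
        return 0.0
    if bits == 1 << (n - 1):
        return float("nan")                 # NaR ("not a real") mapped to NaN
    sign = bits >> (n - 1)
    if sign:
        bits = (-bits) & mask               # undo two's complement for negatives
    i = n - 2                               # first bit after the sign
    lead = (bits >> i) & 1
    run = 0
    while i >= 0 and ((bits >> i) & 1) == lead:
        run += 1                            # regime: run of identical bits
        i -= 1
    k = run - 1 if lead else -run
    i -= 1                                  # skip the regime terminator bit
    e = 0
    for _ in range(es):                     # up to es exponent bits, zero-padded
        e <<= 1
        if i >= 0:
            e |= (bits >> i) & 1
            i -= 1
    nfrac = max(i + 1, 0)                   # remaining bits are the fraction
    frac = bits & ((1 << nfrac) - 1)
    value = 2.0 ** (k * (1 << es) + e) * (1.0 + frac / (1 << nfrac))
    return -value if sign else value


print(decode_posit(0x40), decode_posit(0x50), decode_posit(0xC0))  # 1.0 1.5 -1.0
print(decode_posit(0x5000, n=16, es=2))                            # 4.0
```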

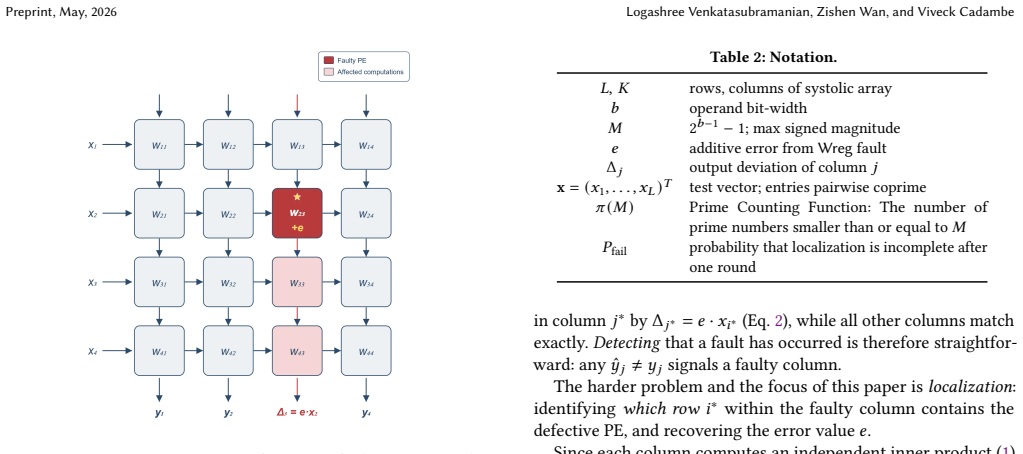

FLARE: One-Shot PE-Level Fault Localization in Systolic Arrays via Algebraic Test Vectors

Pairwise coprime inputs yield unique divisibility signatures that identify the source row with over 98 percent probability at under 1% GEMM-
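
A toy model of the divisibility idea, under loudly stated assumptions: each row of one systolic-array column is driven by a distinct input from a pairwise-coprime set (all greater than 1), and a fault perturbs a single PE's weight by an integer δ, so the output deviates by p_r·δ. Rows whose input divides the deviation are the suspects. The helpers `column_output` and `localize` are hypothetical and are not FLARE's algorithm. In this toy, coprimality means another row is implicated only when δ itself is divisible by that row's input, which is why localization is probabilistic rather than guaranteed.

```python
from itertools import combinations
from math import gcd


def pairwise_coprime(values):
    """True if every pair of values shares no common factor."""
    return all(gcd(a, b) == 1 for a, b in combinations(values, 2))


def column_output(inputs, weights, fault_row=None, delta=0):
    """Dot product one column computes; optionally perturb one PE's weight."""
    total = 0
    for row, (x, w) in enumerate(zip(inputs, weights)):
        if row == fault_row:
            w += delta  # hypothetical fault model: stored weight off by delta
        total += x * w
    return total


def localize(inputs, golden, observed):
    """Return the rows whose input divides the observed output deviation."""
    deviation = observed - golden
    if deviation == 0:
        return []
    return [row for row, p in enumerate(inputs) if deviation % p == 0]


inputs = [3, 5, 7, 11]  # pairwise coprime, all > 1
weights = [1, 2, 3, 4]
assert pairwise_coprime(inputs)
golden = column_output(inputs, weights)
print(localize(inputs, golden, column_output(inputs, weights, 2, 2)))  # [2]
print(localize(inputs, golden, column_output(inputs, weights, 2, 3)))  # [0, 2]: ambiguous
```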

AccelSync: Verifying Synchronization Coverage in Accelerator Pipeline Programs

It reduces cross-unit visibility to happens-before ordering and proves the check runs in quadratic time, surfacing hazards missed by testing
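
Happens-before ordering can be pictured as reachability in a graph whose edges are per-unit program order plus explicit synchronization (signal → wait). The sketch below uses hypothetical event names and treats a producer write and a consumer read as hazardous when the write does not reach the read. It is a generic illustration, not AccelSync's checker or its quadratic-time algorithm.

```python
from collections import defaultdict, deque


def happens_before(edges, src, dst):
    """True if dst is reachable from src along program-order/sync edges."""
    succ = defaultdict(list)
    for u, v in edges:
        succ[u].append(v)
    seen, queue = {src}, deque([src])
    while queue:
        u = queue.popleft()
        for v in succ[u]:
            if v == dst:
                return True
            if v not in seen:
                seen.add(v)
                queue.append(v)
    return False


# Hypothetical two-unit pipeline: a DMA unit fills a buffer, a matrix unit reads it.
program_order = [("dma.write_buf", "dma.signal"), ("mma.wait", "mma.read_buf")]
sync = [("dma.signal", "mma.wait")]

print(happens_before(program_order + sync, "dma.write_buf", "mma.read_buf"))  # True
print(happens_before(program_order, "dma.write_buf", "mma.read_buf"))         # False: hazard
```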

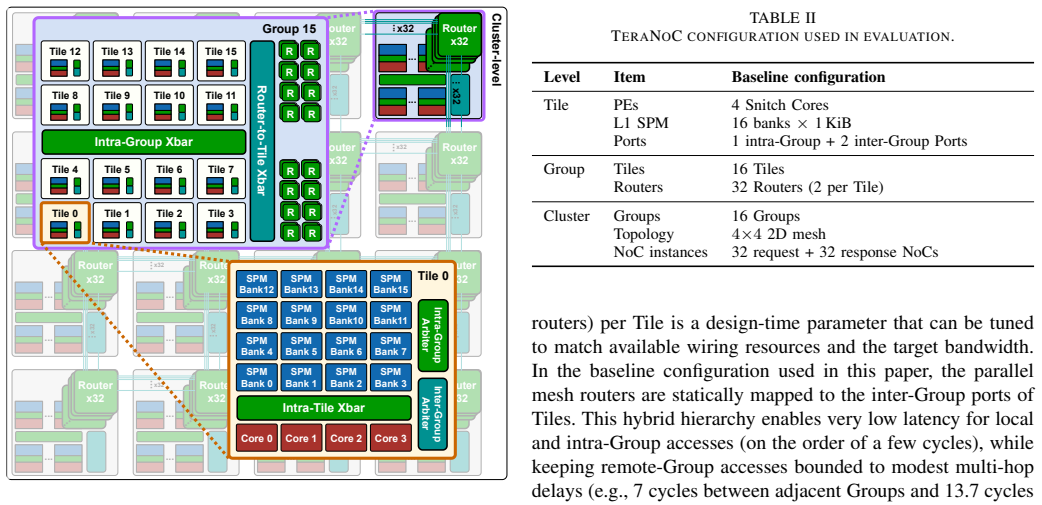

Accelerating Precise End-to-End Simulation: Latency-Sensitive Many-core System Modeling

It keeps accurate timing for shared scratchpad accesses by simplifying less critical hardware parts.

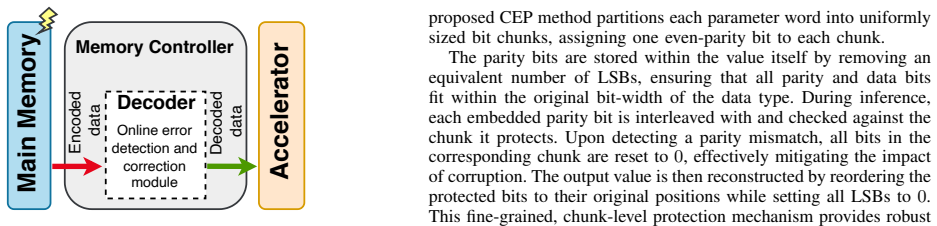

Effective and Memory-Efficient Alternatives to ECC for Reliable Large-Scale DNNs

MSET and CEP techniques harden critical bits in CNNs and ViTs, outperforming SECDED with lower area and faster decoding.

Dual-precision hardware reuse and structured pruning keep utilization high for real-time vision on small chips.

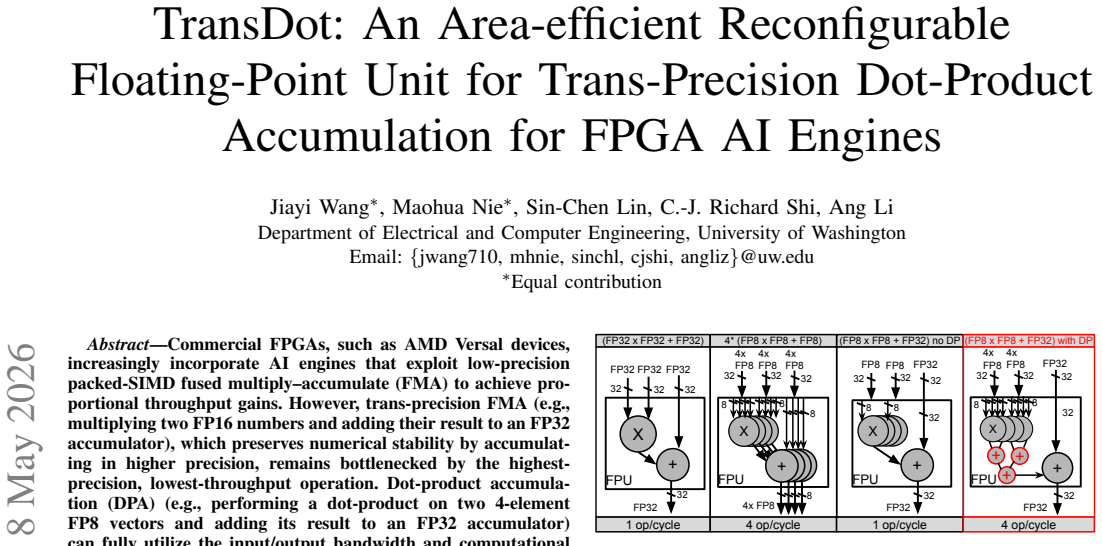

TransDot shares one datapath between SIMD FMA and FP32-accumulated DPA to raise efficiency in FPGA AI engines.

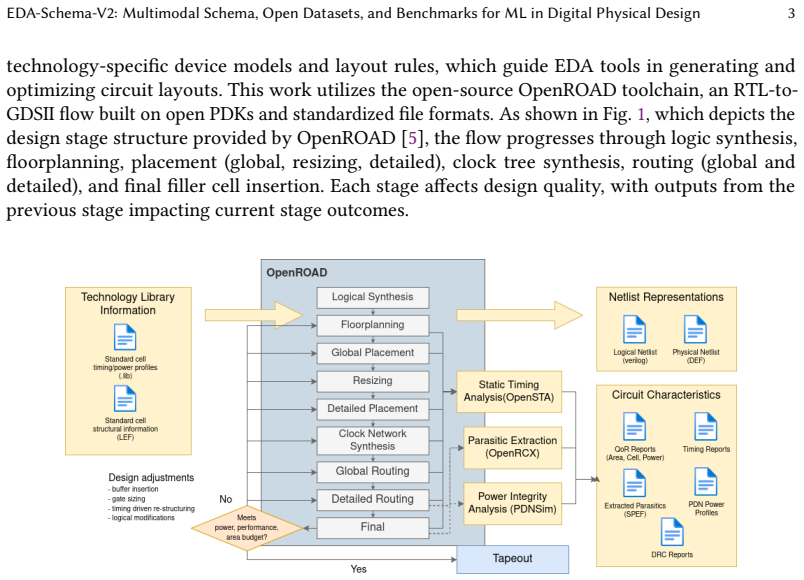

EDA-Schema-V2 structures 7776 design instances from synthesis to routing with 12 tasks and baselines for reproducible research.

Benchmark shows LLMs manage simple fixes but drop sharply when reasoning about violations or balancing power, speed, and area goals.

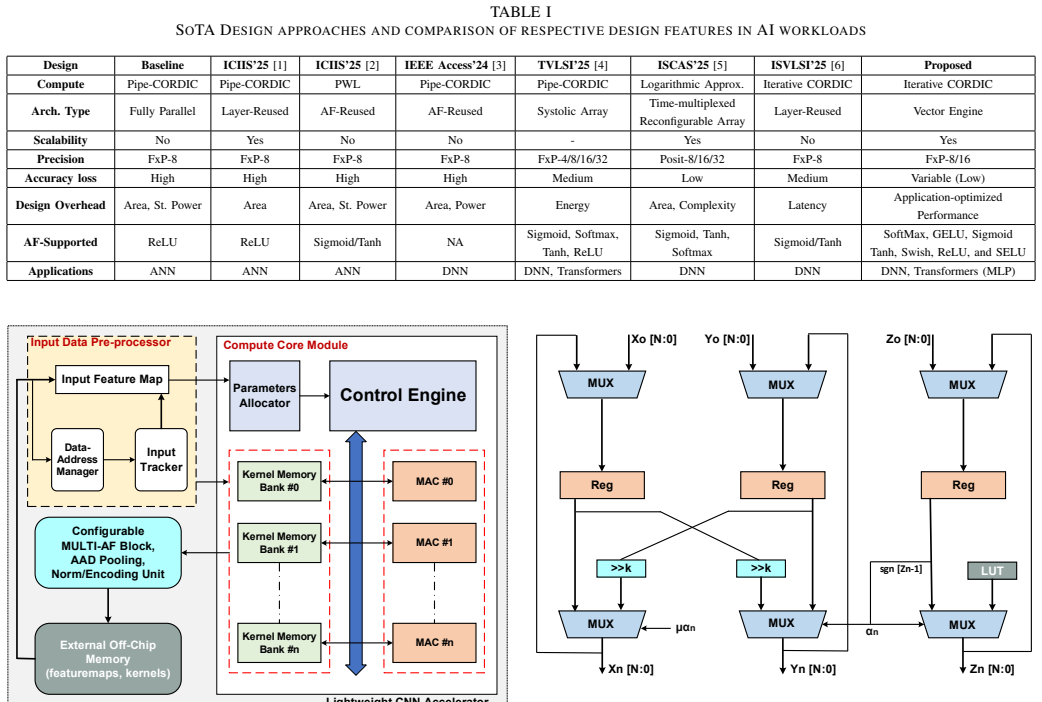

CARMEN: CORDIC-Accelerated Resource-Efficient Multi-Precision Inference Engine for Deep Learning

A single hardware unit switches between fast approximate and accurate modes at runtime to cut power and raise density in 28 nm silicon.
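
CORDIC computes rotations with only shifts, adds, and a small arctangent table. Running fewer iterations trades accuracy for latency, which is the kind of approximate/accurate knob this summary describes. Below is a minimal floating-point sketch of rotation-mode CORDIC (real hardware would use fixed-point shifts). The iteration counts are illustrative and are not CARMEN's actual modes.

```python
import math


def cordic_sin_cos(theta: float, iterations: int = 16):
    """Rotation-mode CORDIC; valid for |theta| <= ~1.74 rad. Returns (cos, sin)."""
    angles = [math.atan(2.0 ** -i) for i in range(iterations)]
    gain = 1.0
    for i in range(iterations):
        gain /= math.sqrt(1.0 + 2.0 ** (-2 * i))  # pre-compensate the CORDIC gain
    x, y, z = gain, 0.0, theta
    for i in range(iterations):
        d = 1.0 if z >= 0 else -1.0               # rotate toward the residual angle
        x, y = x - d * y * 2.0 ** -i, y + d * x * 2.0 ** -i
        z -= d * angles[i]
    return x, y


for n in (4, 16):  # "fast approximate" vs "accurate" iteration budgets
    c, s = cordic_sin_cos(0.5, n)
    print(n, abs(c - math.cos(0.5)), abs(s - math.sin(0.5)))
```

After n iterations the residual angle is bounded by atan(2^−(n−1)), so each extra iteration adds roughly one bit of accuracy.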

A SIMD unified bounded-posit design with log approximations achieves major hardware savings while keeping accuracy within 1.5 points on vehicle tasks.

PoTAcc: A Pipeline for End-to-End Acceleration of Power-of-Two Quantized DNNs

Custom shift accelerators with TFLite deliver 78 percent lower energy use versus CPU-only runs on constrained boards.
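
Power-of-two quantization replaces each weight with ±2^e, so each multiply becomes a bit shift. Below is a minimal sketch, assuming integer activations, round-to-nearest in the log2 domain, and an illustrative exponent range of [−7, 0]. PoTAcc's actual quantizer and its TFLite integration are not shown here.

```python
import math


def pot_quantize(w, min_exp=-7, max_exp=0):
    """Map a real weight to (sign, exponent) so that w ~ sign * 2**exponent."""
    if w == 0:
        return 0, 0
    sign = 1 if w > 0 else -1
    exp = round(math.log2(abs(w)))  # nearest power of two in the log domain
    return sign, max(min_exp, min(max_exp, exp))


def pot_multiply(x, sign, exp):
    """Multiply integer activation x by sign * 2**exp using only shifts."""
    if sign == 0:
        return 0
    shifted = x << exp if exp >= 0 else x >> -exp  # >> floors for negative x
    return sign * shifted


weights = [0.24, -0.5, 0.9, 0.0]
acts = [100, 40, 8, 5]
q = [pot_quantize(w) for w in weights]
print(q)                                                        # [(1, -2), (-1, -1), (1, 0), (0, 0)]
print(sum(pot_multiply(x, s, e) for x, (s, e) in zip(acts, q)))  # 13
```

The shift-only dot product (13) differs from the full-precision one (11.2) because each weight is snapped to the nearest power of two.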

XtraMAC: An Efficient MAC Architecture for Mixed-Precision LLM Inference on FPGA

Shared integer mantissa products enable constant-latency datatype switching and up to 51% lower resource use on Xilinx FPGAs.
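
Why a shared integer mantissa product makes sense: a floating-point multiply is an exponent add plus an integer multiply of mantissas, so one integer multiplier can serve several formats by changing only how many mantissa bits are fed in. Below is a minimal sketch using Python's `math.frexp`/`math.ldexp`, with truncation to `mant_bits` standing in for narrower formats. It illustrates the general principle, not XtraMAC's datapath.

```python
import math


def fp_mul_via_int(a: float, b: float, mant_bits: int = 24) -> float:
    """Multiply a*b through one integer mantissa product plus an exponent add."""
    ma, ea = math.frexp(a)           # a = ma * 2**ea, 0.5 <= |ma| < 1 (or ma == 0)
    mb, eb = math.frexp(b)
    ia = int(ma * (1 << mant_bits))  # integer mantissas, truncated to mant_bits
    ib = int(mb * (1 << mant_bits))
    product = ia * ib                # the shared integer multiply
    return math.ldexp(product, ea + eb - 2 * mant_bits)


print(fp_mul_via_int(1.5, 2.5))                # 3.75
print(fp_mul_via_int(-3.0, 5.0))               # -15.0
print(fp_mul_via_int(0.1, 0.3, mant_bits=4))   # coarse: only 4 mantissa bits kept
```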

Virtual connections avoid embedding and sparsification, letting simulations predict orders-of-magnitude faster ground-state approximations.

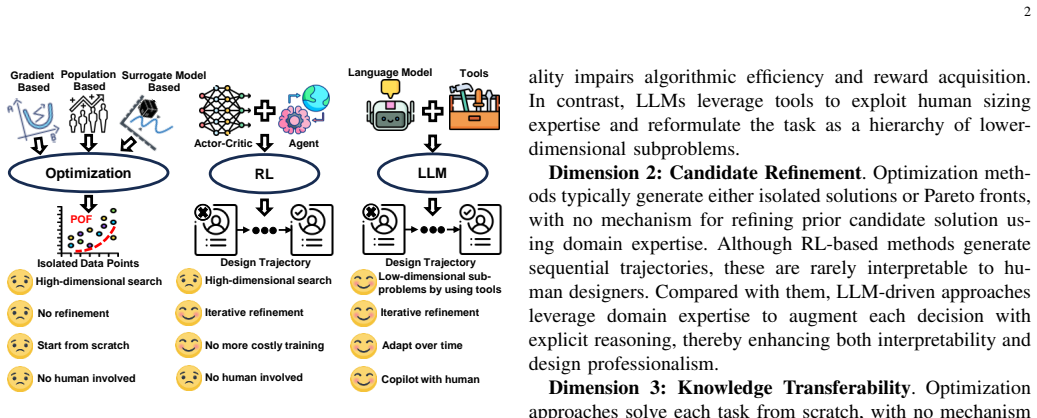

LLM-Driven Design Space Exploration of FPGA-based Accelerators

SECDA-DSE uses retrieval-augmented generation and chain-of-thought prompting to produce configurations that meet FPGA synthesis constraints.

Decoupling transmission from address allocation removes software delays and enables transparent overlap on multi-GPU systems.

TokenStack: A Heterogeneous HBM-PIM Architecture and Runtime for Efficient LLM Inference

Splitting stacks into dense and PIM layers plus local KV management raises serving capacity 1.7x and cuts energy 30-47% versus uniform PIM.

Towards Compute-Aware In-Switch Computing for LLMs Tensor-Parallelism on Multi-GPU Systems

Aligning switch operations with kernel memory semantics enables tighter compute-communication overlap than prior NVLS approaches.

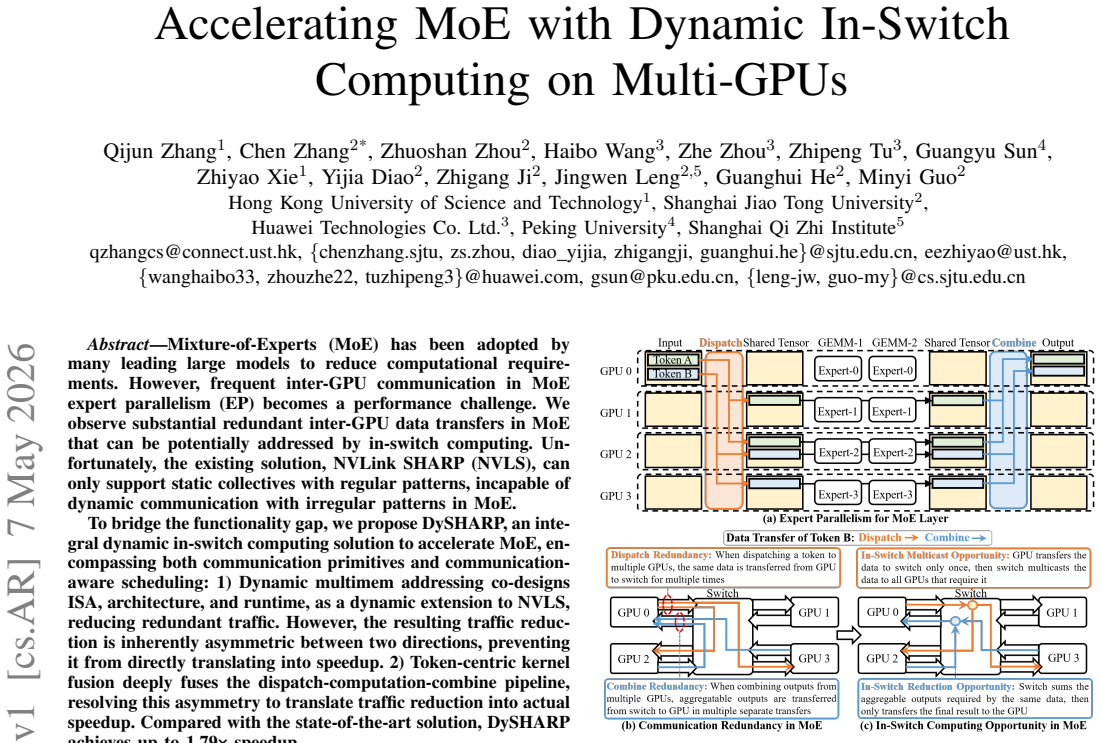

Accelerating MoE with Dynamic In-Switch Computing on Multi-GPUs

Dynamic multimem addressing plus token fusion cuts redundant GPU traffic in expert parallelism and converts savings into real gains.

Pipelined execution with direct data flow between elements reduces register accesses by 68 percent at no cost to performance.

Dynamic switching matches oracle performance in 52.65% of phases across 49 benchmarks.

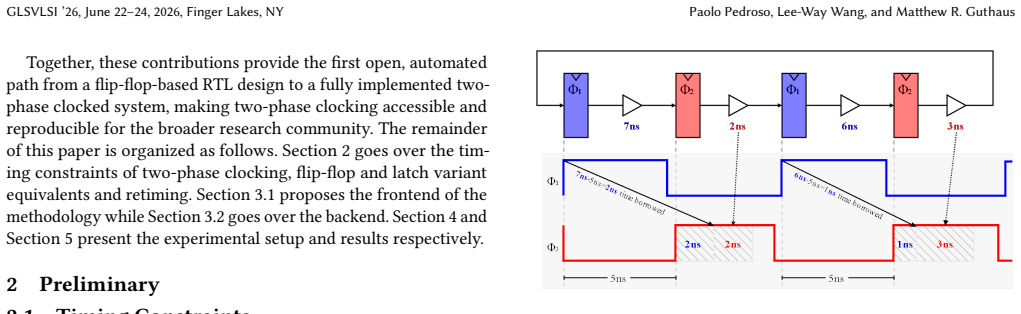

Through automated mapping and validation, the conversion delivers power savings and achieves timing closure where single-phase designs fail.

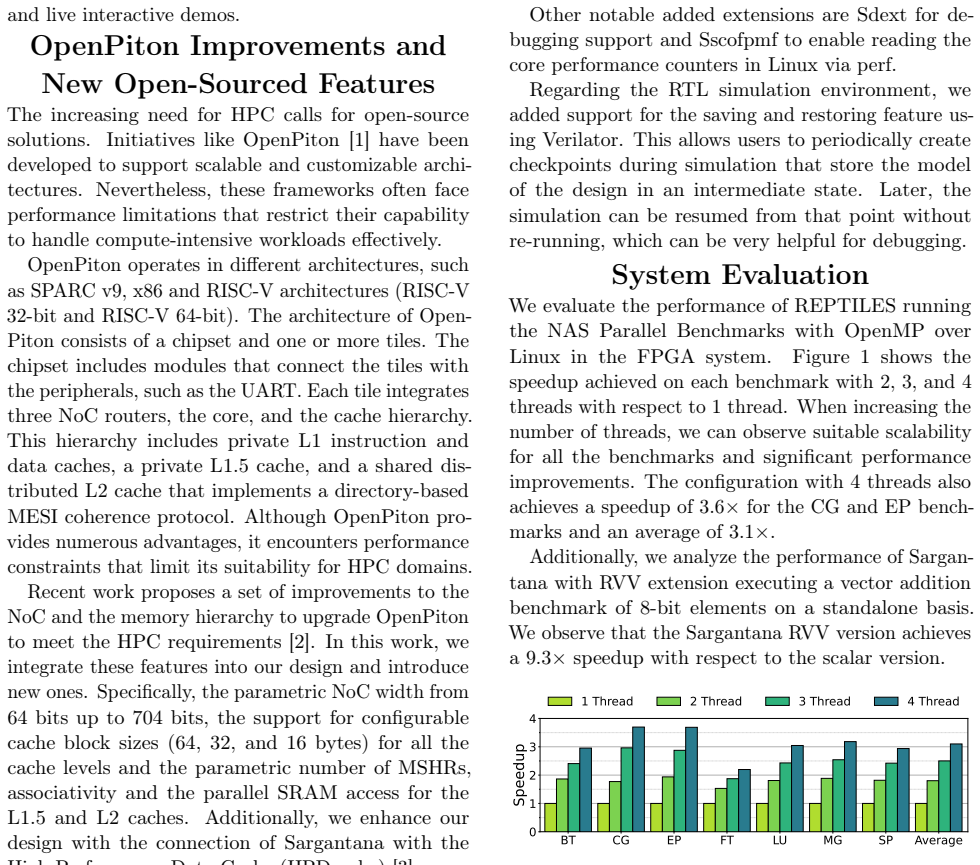

REPTILES: Repeated Tiles of Sargantana, a RISC-V multicore based on OpenPiton

Repeated core tiles with their memory hierarchy deliver scalable gains and boost vector-addition performance by 9.3x.

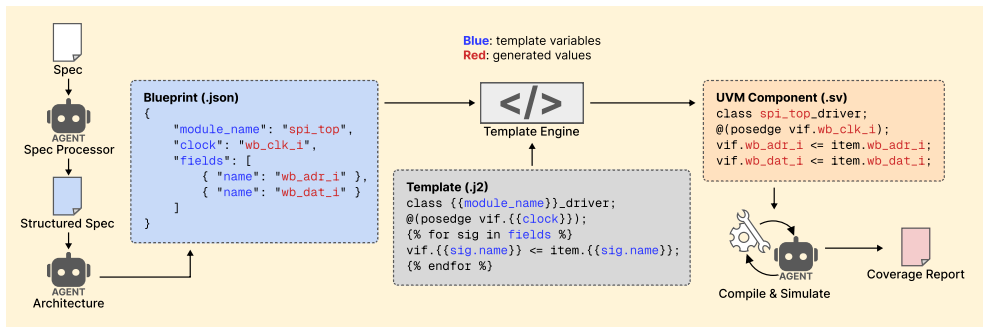

Design Conductor 2.0: An agent builds a TurboQuant inference accelerator in 80 hours

A multi-agent harness autonomously builds a 5129-unit LLM chip with a 240-cycle pipeline from a paper spec.

MCFlash: Bulk Bitwise Processing in 3D NAND with Dynamic Sensing and Multi-level Encoding

Standard commands plus dynamic voltage tuning keep errors below 0.015 percent even after 10,000 program-erase cycles.

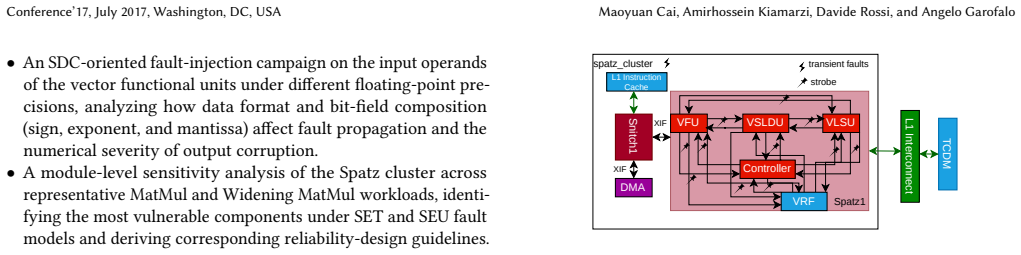

Over 86% of SET and 91% of SEU injections produce faulty outputs in matrix multiplies, with FP8 least affected and exponent bits most severe

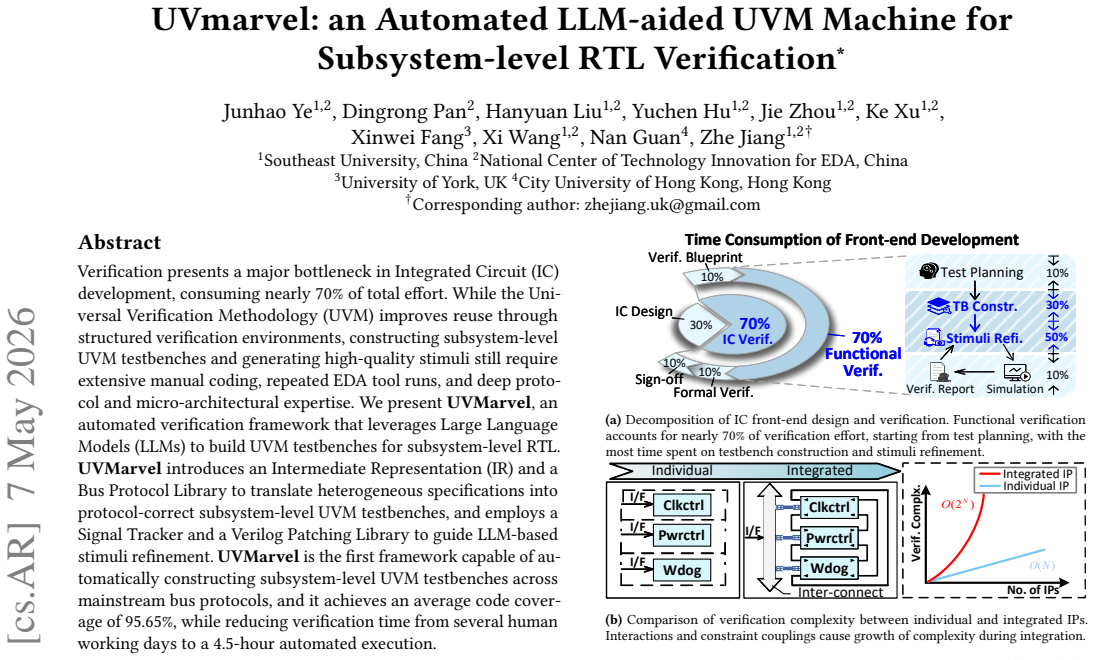

UVMarvel: an Automated LLM-aided UVM Machine for Subsystem-level RTL Verification

UVMarvel translates specs into protocol-correct environments via intermediate representation and trackers, replacing days of manual work.

Ultra Low-Power SDM-based Circuit-Switching for Networks-on-Chip

For chips with fixed inter-core traffic, dedicated wire circuits from a hybrid router lower power, area, and latency compared with packet-sw

RangeGuard: Efficient, Bounded Approximate Error Correction for Reliable DNNs

Numerical range metadata allows bounding errors from memory faults while preserving model accuracy with minimal redundancy.

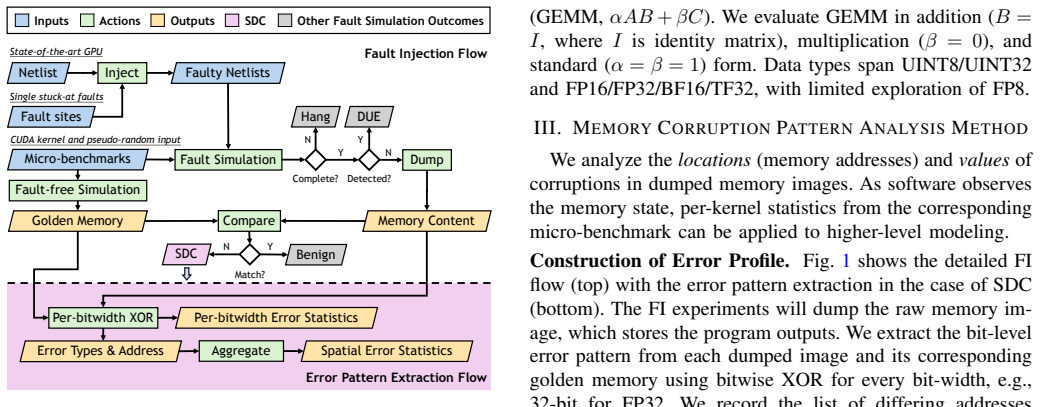

The Anatomy of Silent Data Corruption: GPU Error Pattern Study and Modeling Guidance

Millions of fault injections reveal multi-bit flips and periodic addresses dominate, calling for updated high-level error models.

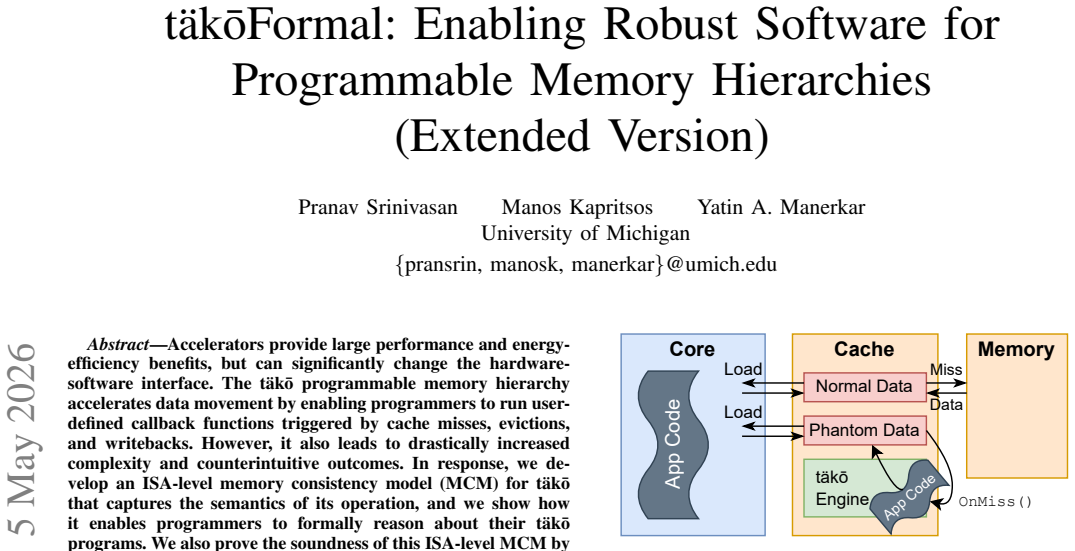

tākōFormal: Enabling Robust Software for Programmable Memory Hierarchies (Extended Version)

The consistency model for tākō specifies allowed executions under user-defined cache callbacks and proves soundness against a hardware model

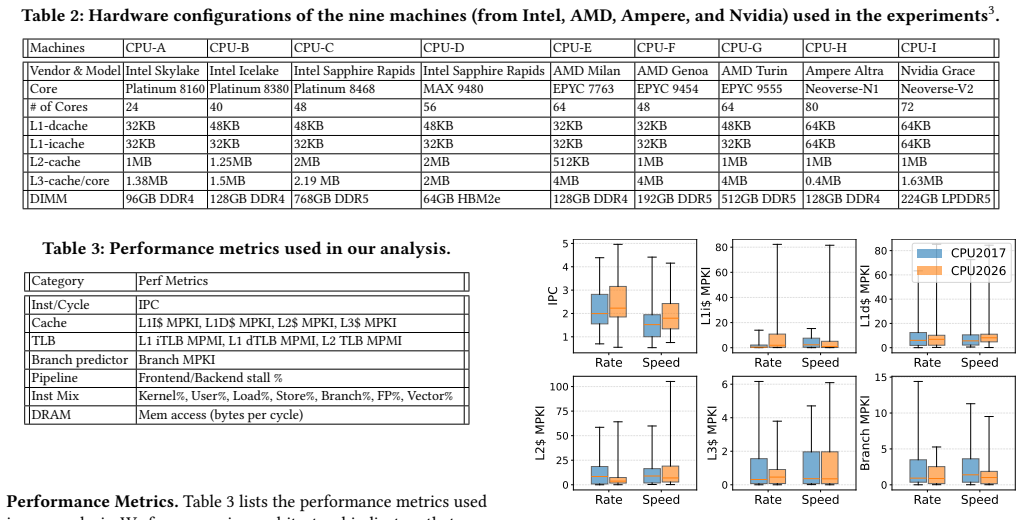

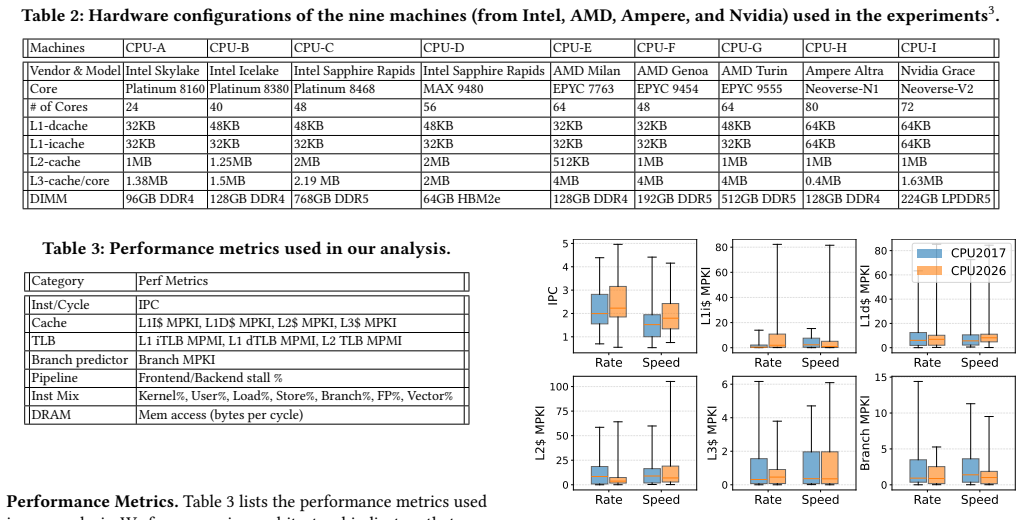

SPEC CPU2026: Characterization, Representativeness, and Cross-Suite Comparison

Clustering on recent Intel, AMD, Ampere, and Nvidia processors finds compact subsets of 4-5 workloads that retain 96.4-99.9% of full-suite metrics and match the full suite's instruction and cache pressure at far lower cost.

Design and Implementation of BNN-Based Object Detection on FPGA

A Verilog implementation of a YOLOv3-tiny variant with 0.74M parameters reaches 39.6% mAP50 on VOC at 0.098 GFLOPs, evaluated in full RTL simulation.
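
BNN hardware typically replaces multiply-accumulate with XNOR and popcount. With ±1 values packed as bits (1 for +1, 0 for −1), a length-n dot product equals n − 2·popcount(a XOR b). Below is a minimal sketch of that standard identity. The packing convention is an assumption, and the summary does not say how this design lays out its binary layers.

```python
def pack(values):
    """Pack a list of +1/-1 values into an int: bit i = 1 encodes +1."""
    bits = 0
    for i, v in enumerate(values):
        if v == 1:
            bits |= 1 << i
    return bits


def binary_dot(a_bits: int, b_bits: int, n: int) -> int:
    """Dot product of two packed +/-1 vectors: n - 2 * (number of mismatches)."""
    mismatches = bin((a_bits ^ b_bits) & ((1 << n) - 1)).count("1")
    return n - 2 * mismatches


a = [1, -1, 1, 1, -1, -1, 1, -1]
b = [1, 1, -1, 1, -1, 1, 1, 1]
print(binary_dot(pack(a), pack(b), len(a)), sum(x * y for x, y in zip(a, b)))  # 0 0
```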

Resource Utilization of Differentiable Logic Gate Networks Deployed on FPGAs

The approach lets deeper networks fit on hardware with lower power and faster speeds for on-device AI inference.

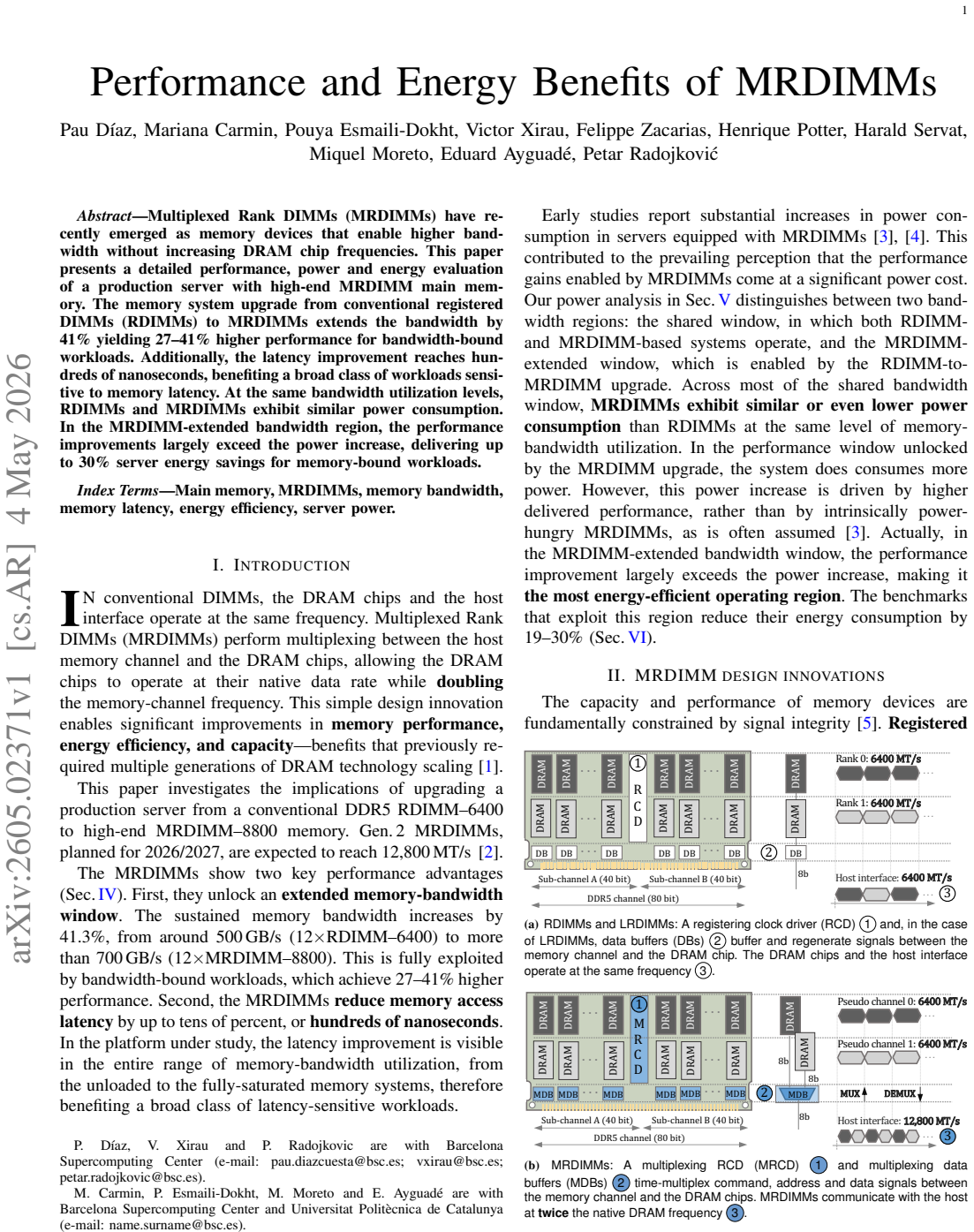

Performance and Energy Benefits of MRDIMMs

MRDIMMs speed up bandwidth-limited applications and lower energy use without requiring faster DRAM chips.

Cerberus: Cross-Layer ECC Co-Design for Robust and Efficient Memory Protection

Cerberus co-design lets controller redundancy serve on-die repair, link retry, and end-to-end recovery while lowering total overhead.

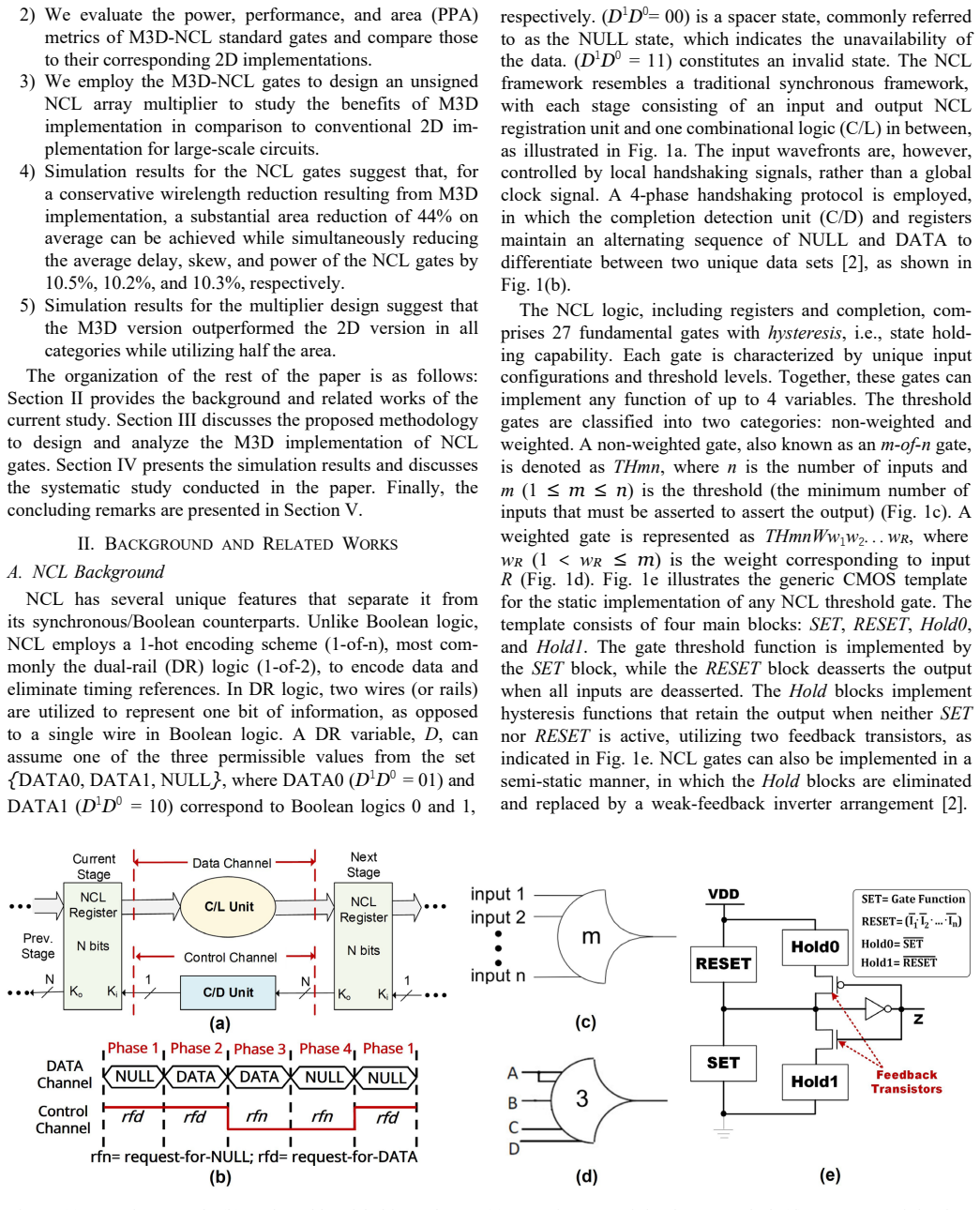

Monolithic 3D Integration for Null Convention Logic (NCL)-Based Asynchronous Circuits

Monolithic integration also trims delay by 31% and power by 17% in simulated asynchronous multipliers.

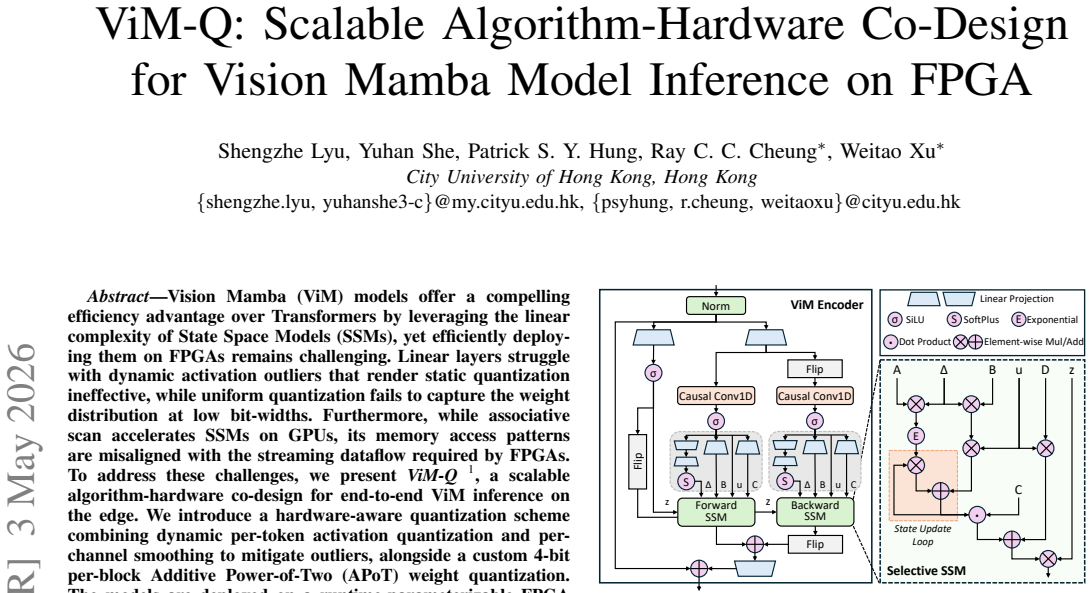

ViM-Q: Scalable Algorithm-Hardware Co-Design for Vision Mamba Model Inference on FPGA

ViM-Q delivers 4.96x speedup and 59.8x energy efficiency for Vision Mamba inference on FPGA versus a quantized GPU baseline using dynamic…

RV32IM gains speed at the cost of 41 percent lower per-MHz efficiency and uses far fewer resources than RV64 equivalents.

PipeRTL: Timing-Aware Pipeline Optimization at IR-Level for RTL Generation

Solving pipeline placement as a min-cost flow with a timing predictor at compiler IR level delivers better starting points for commercial sy

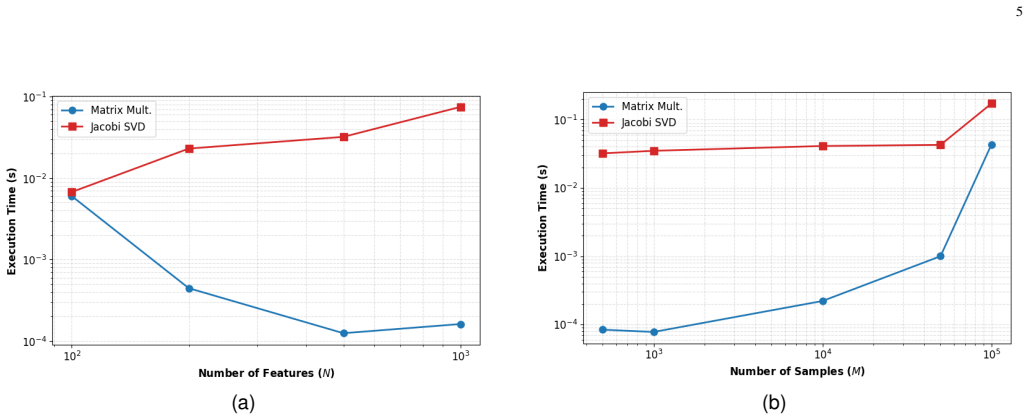

MANOJAVAM merges systolic matrix multiplication and parallel CORDIC SVD in one scalable design, slashing energy use on real datasets.

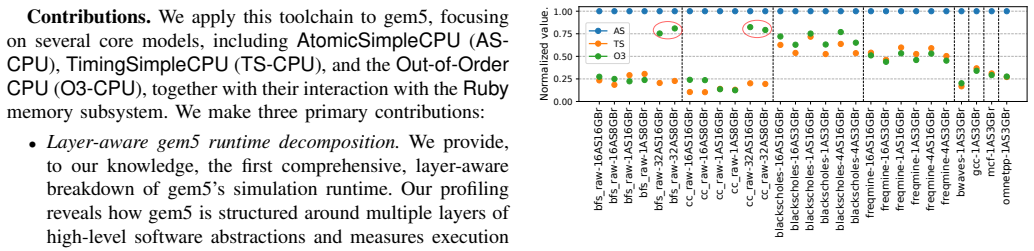

Understanding Simulated Architecture via gem5 Call-Stack Profiling

A separate profiler samples the simulator's stacks to spot inefficiencies in CPU models and coherence deadlocks that standard outputs miss.

AMSnet-q: Unsupervised Circuit Identification and Performance Labeling for AMS Circuits

AMSnet-q is an unsupervised pipeline that automates schematic-to-netlist conversion, topology-aware testbench creation, and…

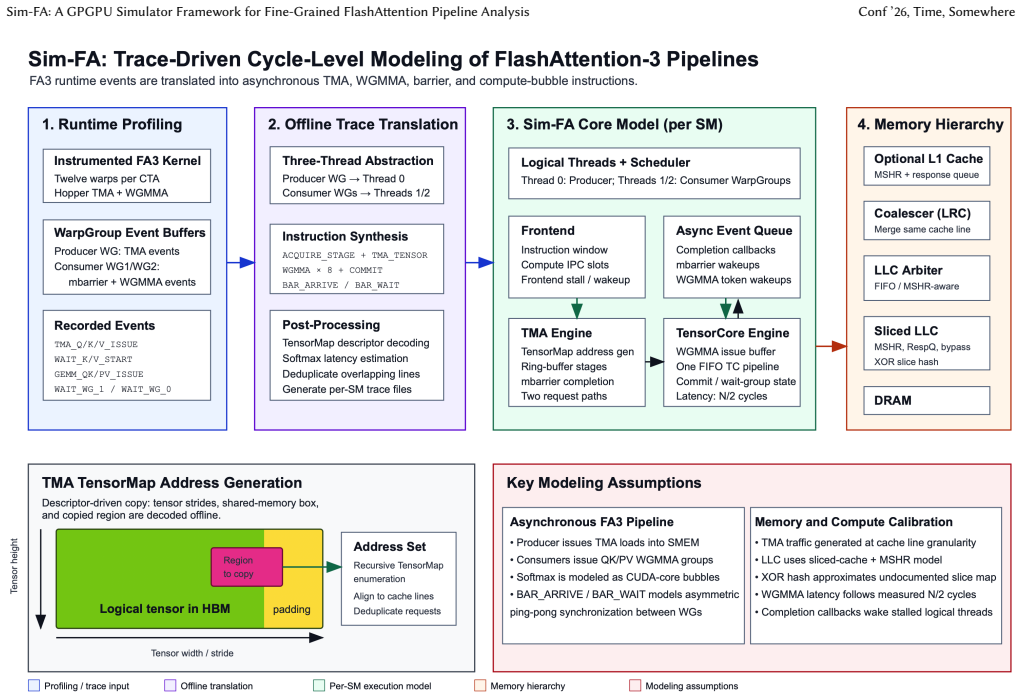

Sim-FA: A GPGPU Simulator Framework for Fine-Grained FlashAttention Pipeline Analysis

Kernel instrumentation plus cycle-accurate execution also reveals why analytical models misestimate DRAM traffic.

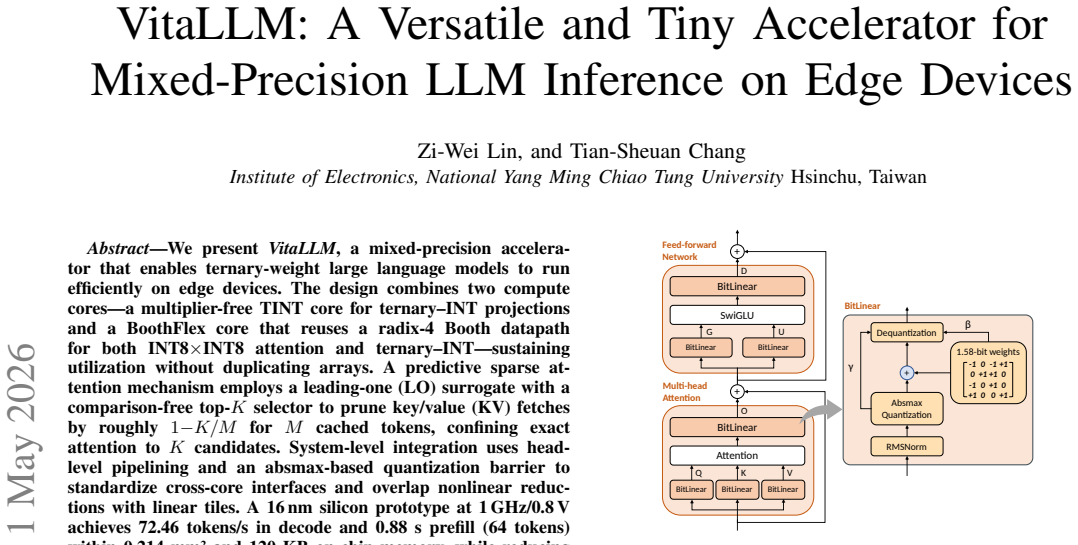

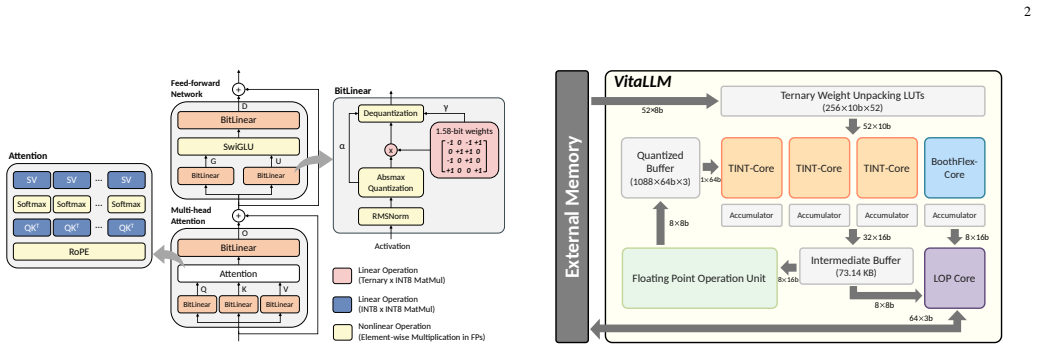

VitaLLM: A Versatile and Tiny Accelerator for Mixed-Precision LLM Inference on Edge Devices

VitaLLM fits dual cores and sparse KV pruning into 0.214 mm² with 120 KB of memory for edge inference of BitNet b1.58.

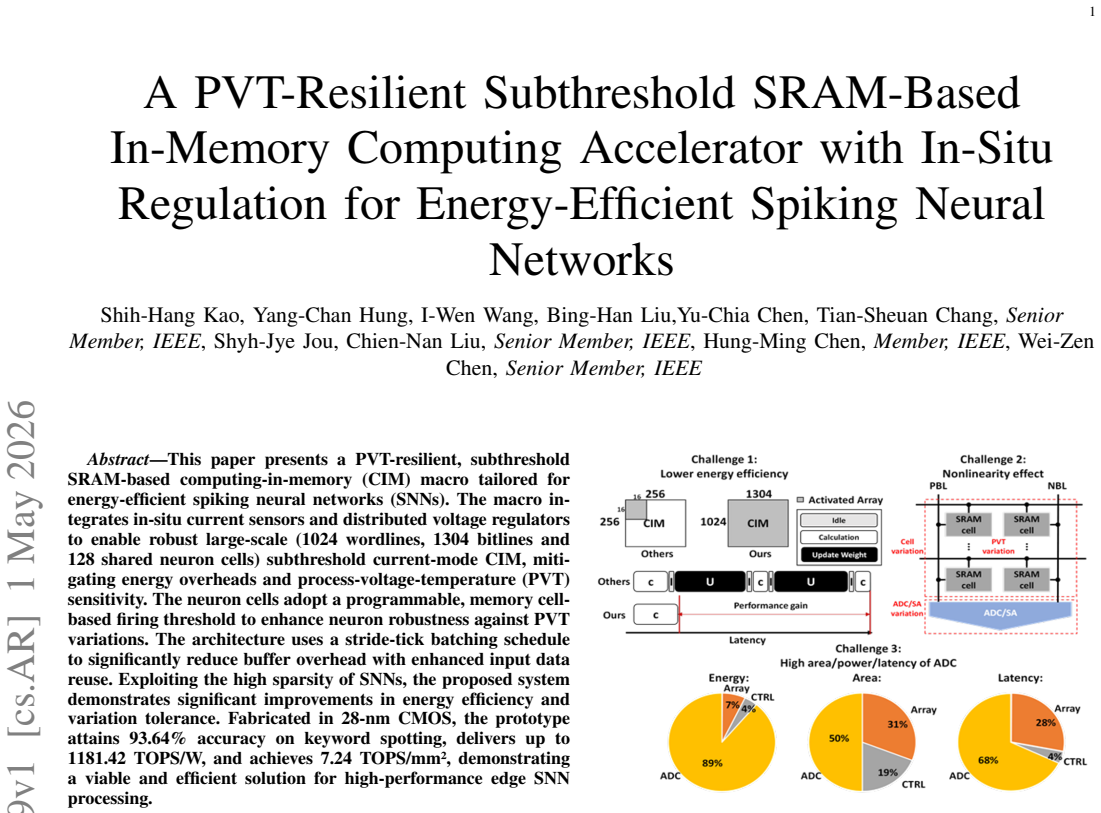

In-situ current sensors and regulators stabilize a large 28-nm array, delivering 93.64% keyword-spotting accuracy with 7.24 TOPS/mm².

DPU or GPU for Accelerating Neural Networks Inference -- Why not both? Split CNN Inference

Graph neural network picks the layer split so early stages run near the data source while later stages run on the GPU.

Bidirectional ring and dataflow architecture reaches 0.83 real-time factor on two devices while preserving reference statistics and showing

AME-PIM: Can Memory be Your Next Tensor Accelerator?

Mapping RISC-V AME instructions to HBM-PIM with outer-product dataflow lets accumulation stay inside memory.

RuC: HDL-Agnostic Rule Completion Benchmark Generation

RuC hides grammar-defined regions in real hardware designs and asks models to restore them, showing fill-in-the-middle prompting works best.

HAVEN: Hybrid Automated Verification ENgine for UVM Testbench Synthesis with LLMs

Predefined Jinja2 templates and a protocol DSL let LLMs plan rather than write code, yielding 100 percent compilation and 90 percent average

CuLifter: Lifting GPU Binaries to Typed IR

Constraint propagation from the unified register file restores the types that binary analysis needs for semantic correctness.

VitaLLM: A Versatile, Ultra-Compact Ternary LLM Accelerator with Dependency-Aware Scheduling

Dual-core strategy with cache prediction and latency hiding enables compact low-power decode on edge devices.

RCW-CIM: A Digital CIM-based LLM Accelerator with Read-Compute/Write

Weight-update hiding plus nonlinear fusion and column-stationary dataflow yield 4.2 ms prefill and 27 tokens per second for INT4 Llama2-7B.

Autoformalizing Memory Specifications with Agents

Natural language standards become models that generate assertions, stimulus, and coverage automatically.

Exploring the Efficiency of 3D-Stacked AI Chip Architecture for LLM Inference with Voxel

Voxel is a new end-to-end simulator showing that 3D-stacked AI chip efficiency for LLMs depends on the joint effects of compute paradigms…

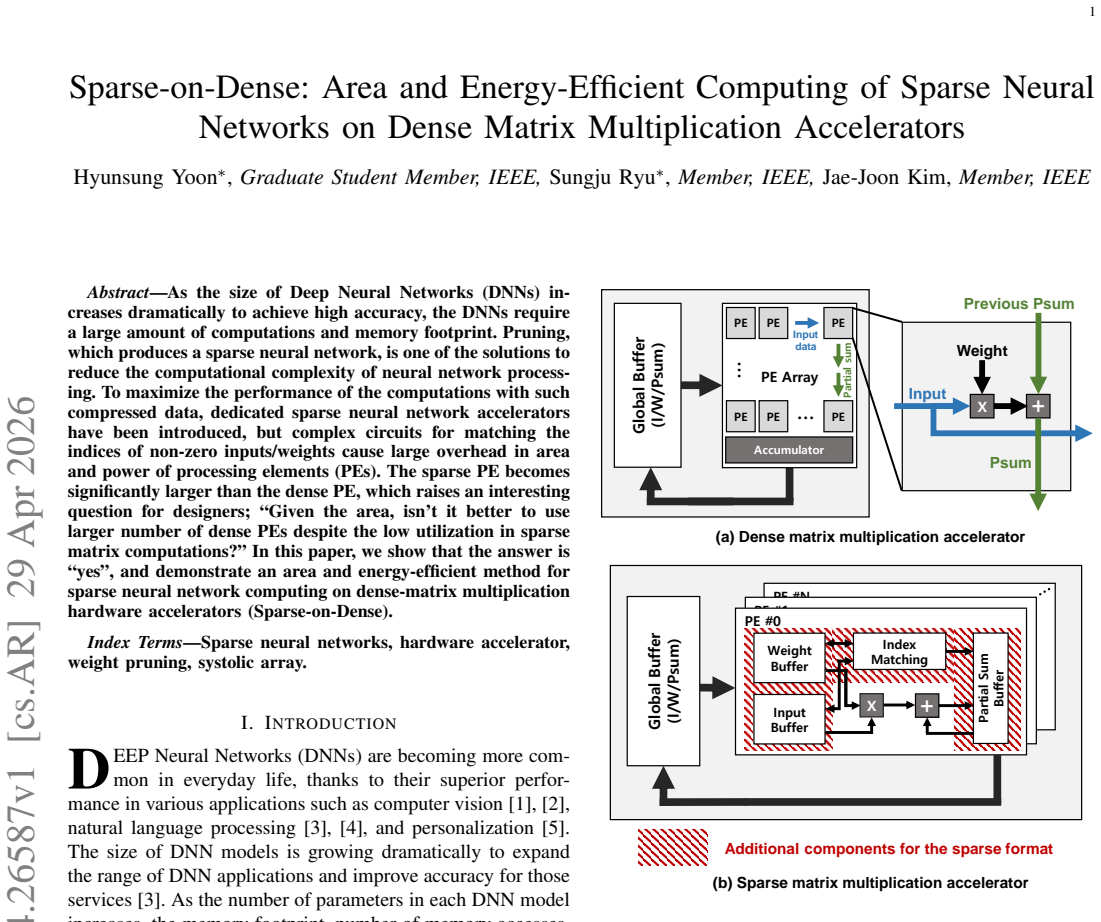

Allocating area to simple units instead of index-matching circuits improves throughput and energy despite lower utilization.

Verification and Validation (V&V)-in-the-Loop for RISC-V Design: The Holistic Vision of BZL

Pre-silicon methodology automates testing across RTL, system level and continuous integration to support European HPC designs.

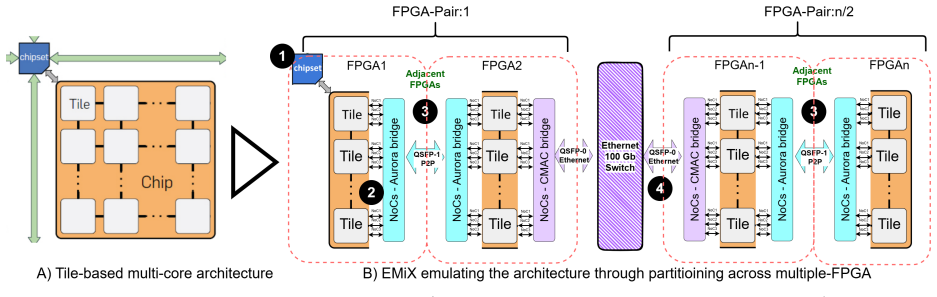

EMiX: Emulating Beyond Single-FPGA Limits

Partitioning plus interconnects let designs exceed single-FPGA limits while keeping RTL unchanged and booting Linux.

RKHS uses RAG-enhanced kernel templates and LLM iteration to synthesize list-scheduling heuristics that cut average schedule length by up…

AMMA: A Multi-Chiplet Memory-Centric Architecture for Low-Latency 1M Context Attention Serving

HBM-PNM cubes double bandwidth and add targeted microarchitecture to serve million-token contexts at far lower power than GPUs.

98% accuracy in 95 ms on minimal hardware enables real-time cardiac monitoring for space missions.
