DiLO: Decoupling Generative Priors and Neural Operators via Diffusion Latent Optimization for Inverse Problems

Pith reviewed 2026-05-10 15:54 UTC · model grok-4.3

The pith

DiLO converts stochastic diffusion sampling into deterministic latent optimization to keep neural operators on physical manifolds for inverse problems.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

DiLO transforms the stochastic sampling process into a deterministic latent trajectory, enabling stable backpropagation of measurement gradients to the initial latent state. By keeping the trajectory on the physical manifold, it ensures physically valid updates and improves reconstruction accuracy while providing theoretical guarantees for convergence.

What carries the argument

Diffusion Latent Optimization (DiLO), which replaces stochastic diffusion sampling with deterministic optimization over the initial latent variable to enforce evaluation of neural surrogates exclusively on fully denoised physical states.
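The idea can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and is not the paper's implementation: `denoise_step` is a toy scalar stand-in for one deterministic sampler step, `surrogate` is a toy linear stand-in for a neural operator, and the step size and iteration count are arbitrary. The point it shows is structural: the surrogate is called only on the fully denoised state `x0`, and the measurement gradient is carried back to the initial latent `z` by the chain rule through the deterministic trajectory.

```python
import math

# Toy stand-ins (assumptions, not the paper's models).
def denoise_step(x):
    """One deterministic 'sampler' step (hypothetical scalar toy)."""
    return 0.5 * x + 0.5 * math.tanh(x)

def denoise_step_grad(x):
    """Derivative of denoise_step with respect to its input."""
    return 0.5 + 0.5 * (1.0 - math.tanh(x) ** 2)

def surrogate(x0):
    """Toy linear forward operator, evaluated only on the final state x0."""
    return 2.0 * x0

SURROGATE_GRAD = 2.0  # d surrogate / d x0 for the linear toy operator

def decode(z, num_steps=4):
    """Run the deterministic trajectory; return x0 and d x0 / d z."""
    x, dx_dz = z, 1.0
    for _ in range(num_steps):
        dx_dz *= denoise_step_grad(x)  # chain rule through this step
        x = denoise_step(x)
    return x, dx_dz

def loss_and_grad(z, y):
    """Data-misfit loss on the denoised state and its gradient w.r.t. z."""
    x0, dx0_dz = decode(z)
    residual = surrogate(x0) - y
    loss = 0.5 * residual ** 2
    grad = residual * SURROGATE_GRAD * dx0_dz
    return loss, grad

def optimize_latent(y, z_init=-1.0, lr=0.05, iters=3000):
    """Plain gradient descent over the initial latent (illustrative choice)."""
    z = z_init
    for _ in range(iters):
        _, g = loss_and_grad(z, y)
        z -= lr * g
    return z

z_true = 1.5
y_obs = surrogate(decode(z_true)[0])  # noiseless synthetic measurement
z_hat = optimize_latent(y_obs)
```

In this scalar toy the decoded map is strictly increasing, so the latent that matches the measurement is unique and gradient descent recovers it. A real prior and operator would need automatic differentiation and carry no such guarantee from this sketch.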

If this is right

- Reconstruction accuracy improves because updates remain on the physical manifold at every step.

- Measurement gradients propagate stably back to the initial latent without out-of-distribution evaluations.

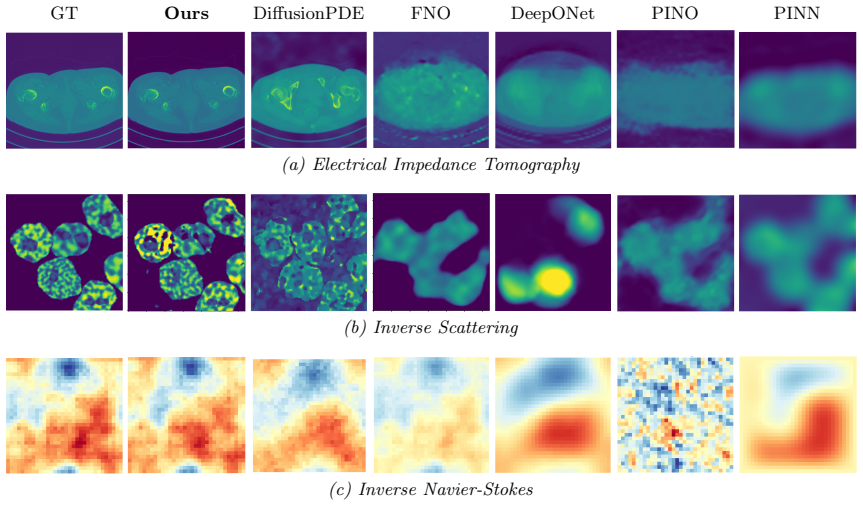

- The approach applies across Electrical Impedance Tomography, Inverse Scattering, and Inverse Navier-Stokes without retraining on paired datasets.

- Convergence of the latent trajectory is guaranteed under the stated theoretical conditions.

Where Pith is reading between the lines

- The same latent-optimization idea could be tested on other generative priors such as flow-based models for the same inverse problems.

- Running DiLO on experimental sensor data instead of synthetic measurements would check robustness outside simulated settings.

- Extending the manifold consistency idea to time-evolving or high-dimensional PDEs not included in the current experiments could reveal further limits.

Load-bearing premise

Neural operator surrogates produce unreliable results when evaluated on partially denoised, non-physical intermediate states during diffusion sampling.
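A toy analogy for this premise, assuming nothing about the paper's actual operators: a cubic Taylor polynomial plays the role of a surrogate that is accurate only on the range it was built for, and `sin` plays the role of the true forward map. The names `on_manifold_state` and `intermediate_state` are hypothetical labels for an in-range input and a far-from-range input.

```python
import math

def true_operator(x):
    """Stand-in for the exact forward map (assumption for illustration)."""
    return math.sin(x)

def surrogate(x):
    """Cubic Taylor polynomial of sin: accurate only for small |x|."""
    return x - x ** 3 / 6.0

def surrogate_error(x):
    """Absolute gap between surrogate and true operator at x."""
    return abs(surrogate(x) - true_operator(x))

on_manifold_state = 1.0   # inside the range where the surrogate is accurate
intermediate_state = 3.0  # far outside that range, like a noisy iterate

err_on = surrogate_error(on_manifold_state)
err_off = surrogate_error(intermediate_state)
```

The gap is small in range and large out of range. Whether trained neural operators degrade in a comparable way on partially denoised states is exactly what the premise asserts and the experiments would need to confirm.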

What would settle it

Demonstrating that a standard diffusion sampler with neural operators applied at every step achieves comparable accuracy and convergence on the same inverse problems without latent optimization.

Figures

read the original abstract

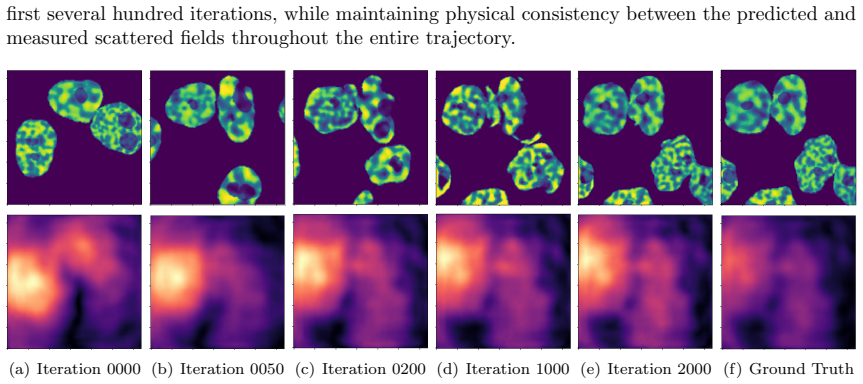

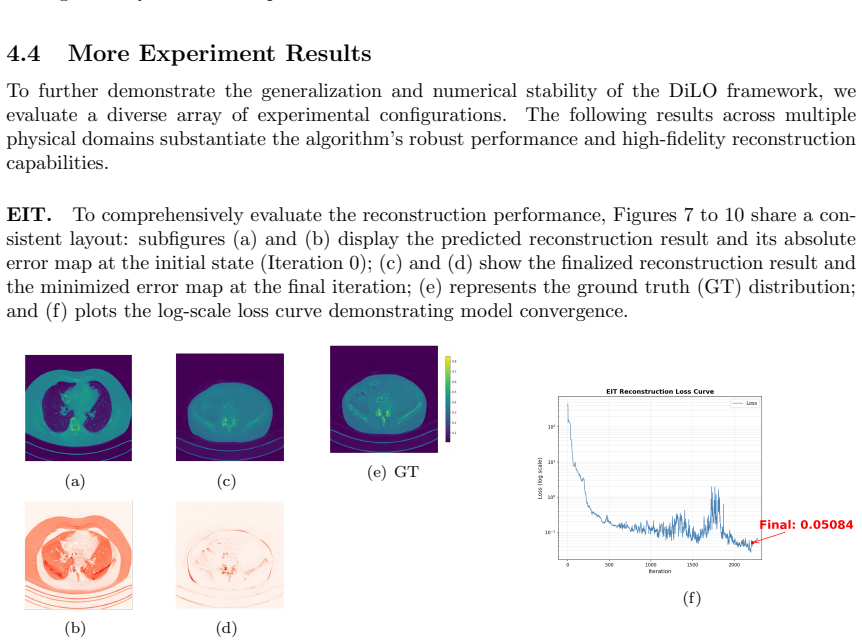

Diffusion models have emerged as powerful generative priors for solving PDE-constrained inverse problems. Compared to end-to-end approaches relying on massive paired datasets, explicitly decoupling the prior distribution of physical parameters from the forward physical model, a paradigm often formalized as Plug-and-Play (PnP) priors, offers enhanced flexibility and generalization. To accelerate inference within such decoupled frameworks, fast neural operators are employed as surrogate solvers. However, directly integrating them into standard diffusion sampling introduces a critical bottleneck: evaluating neural surrogates on partially denoised, non-physical intermediate states forces them into out-of-distribution (OOD) regimes. To eliminate this, the physical surrogate must be evaluated exclusively on the fully denoised parameter, a principle we formalize as the Manifold Consistency Requirement. To satisfy this requirement, we present Diffusion Latent Optimization (DiLO), which transforms the stochastic sampling process into a deterministic latent trajectory, enabling stable backpropagation of measurement gradients to the initial latent state. By keeping the trajectory on the physical manifold, it ensures physically valid updates and improves reconstruction accuracy. We provide theoretical guarantees for the convergence of this optimization trajectory. Extensive experiments across Electrical Impedance Tomography, Inverse Scattering, and Inverse Navier-Stokes problems demonstrate DiLO's accuracy, efficiency, and robustness to noise.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes Diffusion Latent Optimization (DiLO) to address a bottleneck when combining diffusion-based generative priors with neural operators for PDE-constrained inverse problems. It formalizes the Manifold Consistency Requirement (neural operators evaluated only on fully denoised physical parameters) and introduces a deterministic latent trajectory that replaces stochastic diffusion sampling. This enables stable backpropagation of measurement gradients to the initial latent state while preserving physical validity, with claimed theoretical convergence guarantees. Experiments on Electrical Impedance Tomography, Inverse Scattering, and Inverse Navier-Stokes demonstrate gains in accuracy, efficiency, and noise robustness compared to standard approaches.

Significance. If the central construction and convergence analysis hold, the work offers a principled decoupling of pre-trained generative priors from fast physical surrogates, avoiding OOD evaluation issues that plague direct integration. This could improve flexibility and generalization over end-to-end learned solvers while retaining the benefits of diffusion priors for ill-posed inverse problems. The explicit manifold constraint and theoretical guarantees distinguish it from heuristic PnP variants; reproducible code or parameter-free derivations would further strengthen its utility.

major comments (1)

- [§4] §4 (theoretical analysis): The convergence guarantee for the DiLO trajectory is stated to follow from keeping updates on the physical manifold, but the proof sketch does not explicitly bound the deviation introduced by the neural operator approximation or the discretization of the latent trajectory; a quantitative error term relating the surrogate accuracy to the final reconstruction error would be needed to support the claim that DiLO improves accuracy over standard sampling.

minor comments (2)

- [Abstract] Abstract: The phrase 'transforms the stochastic sampling process into a deterministic latent trajectory' is repeated; a single concise definition early in the abstract would improve readability.

- [Experiments] Experiments section: The noise-robustness plots would benefit from error bars over multiple random seeds and a direct comparison table against a vanilla PnP baseline using the same neural operator but without the latent optimization step.
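The error-bar suggestion could be implemented along the following lines. This is a hedged sketch: `run_trial` is a hypothetical placeholder that returns a synthetic number, not a reconstruction result, and the noise levels and seed count are arbitrary. Only the aggregation pattern (mean and sample standard deviation per noise level across seeds) is the point.

```python
import random
import statistics

def run_trial(seed, noise_level):
    """Hypothetical placeholder for one reconstruction run.

    Returns a synthetic 'relative error'; a real study would call the
    solver here. The values carry no meaning about DiLO.
    """
    rng = random.Random(seed)
    return noise_level * (1.0 + 0.1 * rng.gauss(0.0, 1.0))

def summarize(noise_level, seeds):
    """Mean and sample standard deviation of the error across seeds."""
    errors = [run_trial(s, noise_level) for s in seeds]
    return statistics.mean(errors), statistics.stdev(errors)

seeds = range(5)
summary = {nl: summarize(nl, seeds) for nl in (0.01, 0.05, 0.10)}
```

Running the same seeds for DiLO and for the vanilla PnP baseline would make the per-noise-level comparison paired, which tightens the comparison the referee asks for.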

Simulated Author's Rebuttal

We thank the referee for their positive summary, recognition of the work's potential significance, and recommendation for minor revision. We address the single major comment point-by-point below.

- Referee: [§4] §4 (theoretical analysis): The convergence guarantee for the DiLO trajectory is stated to follow from keeping updates on the physical manifold, but the proof sketch does not explicitly bound the deviation introduced by the neural operator approximation or the discretization of the latent trajectory; a quantitative error term relating the surrogate accuracy to the final reconstruction error would be needed to support the claim that DiLO improves accuracy over standard sampling.

  Authors: We appreciate the referee's careful reading and agree that strengthening the error analysis would improve the presentation. The current proof in §4 establishes convergence of the deterministic latent optimization to a stationary point on the physical manifold under the manifold consistency requirement, treating the forward model as exact. In the revised manuscript we will augment §4 with an explicit quantitative error bound. The total reconstruction error will be decomposed as the sum of (i) the optimization error along the latent trajectory (which vanishes with increasing steps under the stated assumptions) and (ii) the neural-operator approximation error, controlled by the surrogate's Lipschitz constant restricted to the manifold. This bound will be contrasted with the additional out-of-distribution error incurred by standard diffusion sampling, thereby providing theoretical support for the accuracy gains observed in the experiments. The added analysis remains fully consistent with the existing convergence result and does not alter any claims.

  Revision: yes
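The decomposition promised in the rebuttal has the shape of a triangle inequality. The following is a sketch under assumed notation, not the authors' stated bound: $x_K$ denotes the reconstruction after $K$ latent updates, $\hat{x}$ the stationary point reached when the surrogate replaces the forward model, and $x^\star$ the reconstruction the exact forward model would give. All three symbols are introduced here for illustration.

```latex
\| x_K - x^\star \|
  \;\le\;
  \underbrace{\| x_K - \hat{x} \|}_{\text{(i) optimization error}}
  \;+\;
  \underbrace{\| \hat{x} - x^\star \|}_{\text{(ii) surrogate-induced error}}
```

The inequality itself always holds. The substantive work lies in showing that term (i) vanishes as $K$ grows and that term (ii) is controlled by the surrogate's accuracy on the manifold, which is what the authors say the revised §4 will establish.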

Circularity Check

No significant circularity detected

The paper defines the Manifold Consistency Requirement as the principle that neural surrogates must be evaluated only on fully denoised physical parameters, then constructs DiLO as a deterministic latent trajectory optimization to satisfy it while enabling gradient backpropagation. This is a standard design choice for a new method, not a reduction of the claimed result to its own inputs by construction. No equations or steps are shown to equate a 'prediction' with a fitted parameter, no load-bearing self-citations reduce the central claim, and no ansatz or uniqueness theorem is smuggled in. The derivation remains self-contained with separate theoretical convergence guarantees claimed for the trajectory.

Axiom & Free-Parameter Ledger

axioms (1)

- [ad hoc to paper] Manifold Consistency Requirement: neural operators are evaluated only on fully denoised physical parameters

invented entities (1)

- Diffusion Latent Optimization (DiLO): no independent evidence

