Finding accurate eigenvalues and eigenvectors of positive semi-definite matrices given a subspace

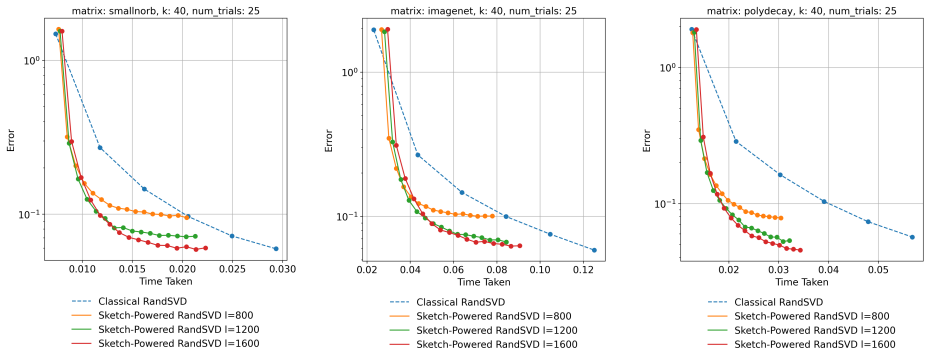

Given a subspace approximation, Nyström's method delivers more accurate eigenvalues than Rayleigh-Ritz at the same cost, with gains that can be arbitrarily large for fast-decaying spectra.

abstract


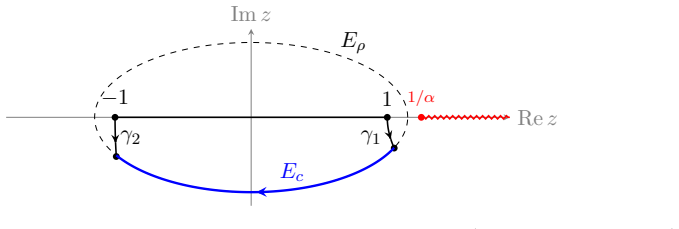

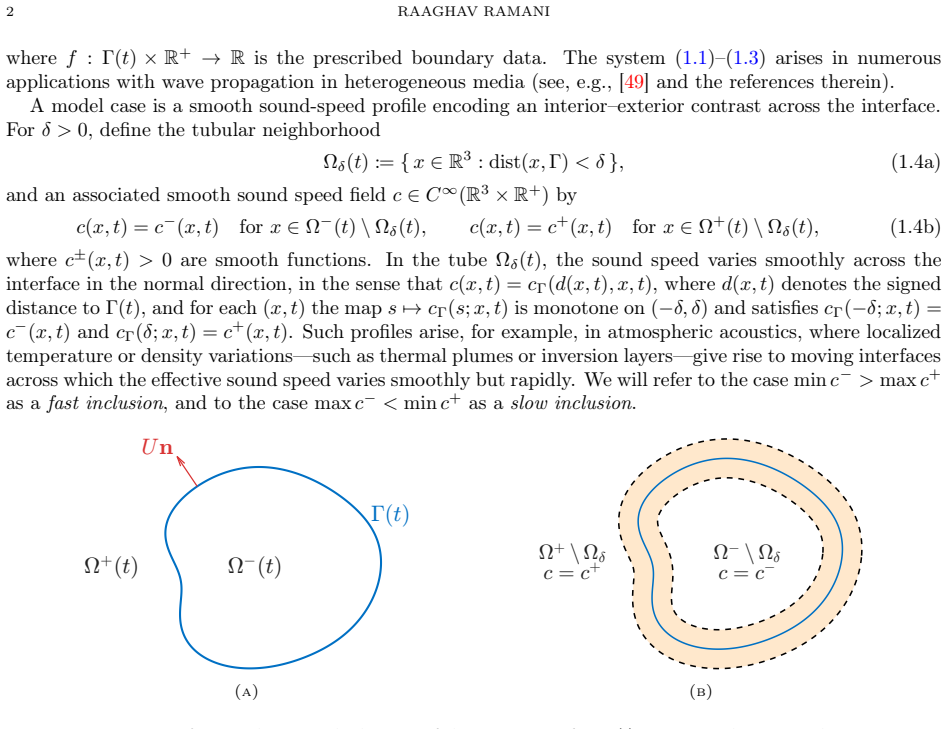

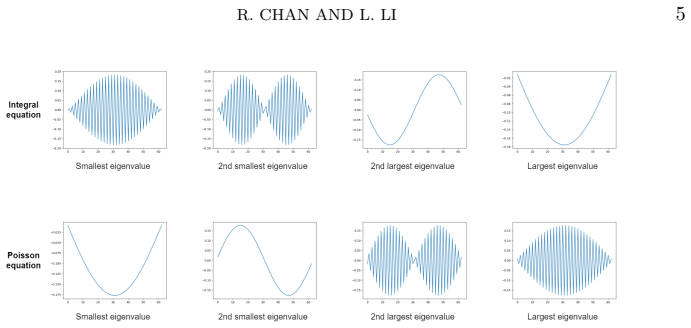

We revisit a classical problem in numerical linear algebra: given a $k$-dimensional subspace $\mathcal{Q}$ that approximates the leading eigenspace of an $n\times n$ positive semi-definite matrix $A$, the goal is to extract high-accuracy eigenvalues. The Rayleigh-Ritz (RR) method is the standard algorithm for this task and has been shown to be optimal in several ways when $A$ is symmetric but not necessarily positive semi-definite. In this paper, we show that when $A \succeq 0$, alternative methods can outperform RR at the same computational complexity: the main cost is computing $AQ$ (with $Q$ a basis matrix for $\mathcal{Q}$), plus an $O(nk^2)$ term. In particular, we advocate the use of Nyström's method, showing that its approximate eigenvalues are always more accurate than those of RR and that the improvement can be arbitrarily large. The difference is significant, especially when $A$ has a fast-decaying spectrum. A similar improvement is observed numerically when approximating the leading eigenvectors. In contrast, when the target eigenvalues are the trailing ones, the situation is reversed and the Nyström method performs poorly; we suggest a remedy for this situation.
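To make the comparison concrete, here is a minimal NumPy sketch rather than the paper's own code. It assumes $Q$ has orthonormal columns, takes the RR values as the eigenvalues of $Q^\top A Q$, and takes the Nyström values as the nonzero eigenvalues of $AQ\,(Q^\top A Q)^{-1}(AQ)^\top$. The test matrix (spectrum $2^{-i}$), the way the subspace is built (one multiplication of a random matrix by $A$), and the function name `rr_vs_nystrom` are illustrative assumptions.

```python
import numpy as np


def rr_vs_nystrom(n=100, k=10, seed=0):
    """Compare Rayleigh-Ritz and Nystrom eigenvalue estimates on a toy PSD matrix.

    Everything here (matrix, subspace, sizes) is a hypothetical test setup.
    """
    rng = np.random.default_rng(seed)

    # Toy PSD matrix with a fast-decaying spectrum: lam_i = 2^{-i}.
    lam = 2.0 ** -np.arange(n)
    U, _ = np.linalg.qr(rng.standard_normal((n, n)))
    A = (U * lam) @ U.T
    A = (A + A.T) / 2  # remove rounding asymmetry

    # Assumed subspace: orthonormal basis of A @ Omega for a random Omega.
    Q, _ = np.linalg.qr(A @ rng.standard_normal((n, k)))

    # Shared cost: one product A @ Q, then O(n k^2) work.
    Y = A @ Q
    B = Q.T @ Y
    B = (B + B.T) / 2

    # Rayleigh-Ritz: eigenvalues of Q^T A Q, sorted in descending order.
    rr = np.linalg.eigvalsh(B)[::-1]

    # Nystrom: with B = L L^T and F = Y L^{-T}, the approximation is F F^T,
    # so its nonzero eigenvalues are the squared singular values of F.
    L = np.linalg.cholesky(B)
    F = np.linalg.solve(L, Y.T).T
    nys = np.linalg.svd(F, compute_uv=False) ** 2

    return lam, rr, nys


if __name__ == "__main__":
    lam, rr, nys = rr_vs_nystrom()
    print("RR error:     ", lam[: len(rr)] - rr)
    print("Nystrom error:", lam[: len(nys)] - nys)
```

In exact arithmetic both sets of estimates lie below the true eigenvalues for $A \succeq 0$, and each Nyström value is at least as large as the corresponding RR value, so the Nyström errors printed above should never exceed the RR errors. The Cholesky step assumes $Q^\top A Q$ is numerically positive definite; a rank-deficient case would need a pseudoinverse or a small shift instead.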
