Recognition: unknown

Robust Reasoning and Learning with Brain-Inspired Representations under Hardware-Induced Nonlinearities

Pith reviewed 2026-05-10 14:56 UTC · model grok-4.3

The pith

A hardware-aware optimization for Hyperdimensional Computing compensates for nonlinear distortions in compute-in-memory hardware while preserving accuracy in classification and reasoning.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Formulating hypervector encoding as the minimization of the Frobenius norm between an ideal kernel and its hardware-constrained counterpart, together with joint end-to-end calibration of the representations, allows QuantHD to reach 84 percent accuracy under severe hardware-induced perturbations (a 48 percent gain over the naive baseline) and allows RelHD to retain its accuracy on the Cora graph dataset (a 5.4 times gain over naive RelHD) while preserving the symbolic properties needed for interpretable reasoning.

What carries the argument

The hardware-aware optimization framework that minimizes the Frobenius norm between ideal and hardware kernels and performs joint end-to-end calibration of hypervector representations.

If this is right

- QuantHD reaches 84 percent accuracy under severe hardware perturbations.

- This is a 48 percent improvement over the unoptimized QuantHD baseline.

- RelHD keeps its original accuracy on the Cora dataset for graph reasoning.

- It delivers a 5.4 times accuracy gain over naive RelHD in nonlinear settings.

- The calibrated representations support both classification and interpretable variable-binding on CIM hardware without losing HDC's robustness.

Where Pith is reading between the lines

- The same kernel-matching principle could be tested on other non-ideal arithmetic substrates beyond CIM arrays.

- If the symbolic properties survive, hybrid neural-symbolic pipelines might run directly on the same low-power hardware.

- Scaling the calibration to larger graphs or multi-task settings would test whether the overhead remains acceptable.

Load-bearing premise

Minimizing the Frobenius norm between ideal and hardware kernels plus joint end-to-end calibration of hypervectors will reliably compensate for non-ideal similarity computations while preserving HDC robustness and symbolic properties.

What would settle it

Measuring accuracy and reasoning fidelity after loading the calibrated hypervectors onto physical CIM hardware that exhibits the modeled nonlinear distortions and checking whether the 84 percent and 5.4 times gains are observed.

Figures

read the original abstract

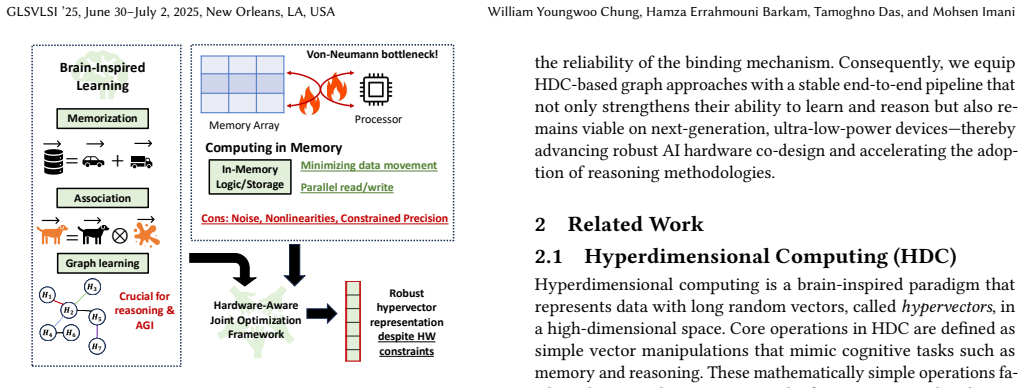

Traditional machine learning depends on high-precision arithmetic and near-ideal hardware assumptions, which is increasingly challenged by variability in aggressively scaled semiconductor devices. Compute-in-memory (CIM) architectures alleviate data-movement bottlenecks and improve energy efficiency yet introduce nonlinear distortions and reliability concerns. We address these issues with a hardware-aware optimization framework based on Hyperdimensional Computing (HDC), systematically compensating for non-ideal similarity computations in CIM. Our approach formulates encoding as an optimization problem, minimizing the Frobenius norm between an ideal kernel and its hardware-constrained counterpart, and employs a joint optimization strategy for end-to-end calibration of hypervector representations. Experimental results demonstrate that our method when applied to QuantHD achieves 84\% accuracy under severe hardware-induced perturbations, a 48\% increase over naive QuantHD under the same conditions. Additionally, our optimization is vital for graph-based HDC reliant on precise variable-binding for interpretable reasoning. Our framework preserves the accuracy of RelHD on the Cora dataset, achieving a 5.4$\times$ accuracy improvement over naive RelHD under nonlinear environments. By preserving HDC's robustness and symbolic properties, our solution enables scalable, energy-efficient intelligent systems capable of classification and reasoning on emerging CIM hardware.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces a hardware-aware optimization framework for Hyperdimensional Computing (HDC) to compensate for nonlinear distortions and reliability issues in Compute-in-Memory (CIM) architectures. Encoding is formulated as an optimization problem that minimizes the Frobenius norm between an ideal kernel and its hardware-constrained counterpart, combined with joint end-to-end calibration of hypervector representations. The authors report that the method applied to QuantHD achieves 84% accuracy under severe hardware-induced perturbations (a 48% increase over naive QuantHD) and that the optimization is essential for graph-based HDC, preserving accuracy of RelHD on the Cora dataset with a 5.4× improvement over naive RelHD under nonlinear conditions while retaining HDC robustness and symbolic properties for interpretable reasoning.

Significance. If the central claims are substantiated with rigorous validation, this framework could enable practical deployment of energy-efficient, brain-inspired HDC systems on imperfect CIM hardware for both classification and symbolic reasoning tasks. The kernel-alignment approach provides a principled adaptation mechanism that addresses a key barrier in scaling HDC beyond ideal hardware assumptions. Strengths include the explicit formulation of hardware compensation and the focus on preserving interpretability in graph-based applications, which aligns with needs in robust, low-power AI.

major comments (2)

- Abstract and optimization formulation: The central claim that the framework 'preserves' HDC robustness and symbolic properties (precise variable-binding for interpretable graph reasoning in RelHD) is load-bearing for the 5.4× accuracy improvement result, yet the objective only minimizes ||K_ideal - K_hw||_F with end-to-end hypervector calibration. This alignment of similarity scores imposes no explicit constraints on binding invertibility, orthogonality, or unbinding algebra, creating a risk that reported gains reflect classification accuracy without retained symbolic fidelity. A post-optimization metric or algebraic invariance check is required to support the preservation assertion.

- Experimental results (as described in abstract): The reported accuracy numbers (84% for QuantHD, 5.4× for RelHD on Cora) lack error bars, ablation studies on the Frobenius term versus calibration, or full experimental protocols including perturbation models and dataset splits. Without these, the numerical gains cannot be independently verified and may depend on post-hoc choices, undermining confidence in the hardware-robustness claims.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and constructive feedback on our manuscript. We address each major comment below and describe the revisions we will incorporate to strengthen the presentation and validation of our hardware-aware HDC optimization framework.

read point-by-point responses

-

Referee: Abstract and optimization formulation: The central claim that the framework 'preserves' HDC robustness and symbolic properties (precise variable-binding for interpretable graph reasoning in RelHD) is load-bearing for the 5.4× accuracy improvement result, yet the objective only minimizes ||K_ideal - K_hw||_F with end-to-end hypervector calibration. This alignment of similarity scores imposes no explicit constraints on binding invertibility, orthogonality, or unbinding algebra, creating a risk that reported gains reflect classification accuracy without retained symbolic fidelity. A post-optimization metric or algebraic invariance check is required to support the preservation assertion.

Authors: We appreciate the referee's emphasis on rigorously substantiating the preservation of symbolic properties. The Frobenius-norm kernel alignment directly targets the similarity structures that underpin HDC binding and unbinding operations, which in turn support the variable-binding algebra used in RelHD for graph reasoning. Nevertheless, we agree that an explicit post-optimization verification would eliminate any ambiguity. In the revised manuscript we will add a dedicated evaluation section that reports binding-invertibility accuracy, orthogonality preservation, and unbinding fidelity metrics on the Cora dataset both before and after optimization, under the same hardware nonlinearity models. revision: yes

-

Referee: Experimental results (as described in abstract): The reported accuracy numbers (84% for QuantHD, 5.4× for RelHD on Cora) lack error bars, ablation studies on the Frobenius term versus calibration, or full experimental protocols including perturbation models and dataset splits. Without these, the numerical gains cannot be independently verified and may depend on post-hoc choices, undermining confidence in the hardware-robustness claims.

Authors: We agree that additional experimental detail is necessary for reproducibility and confidence. The current manuscript contains the core protocols, but we will expand the experimental section to include: (i) error bars computed over at least five independent random seeds for all reported accuracies, (ii) ablation studies that isolate the contribution of the Frobenius-norm term from the joint end-to-end calibration, and (iii) complete specifications of the perturbation models (nonlinearity parameters and noise distributions) together with the exact train/validation/test splits employed for QuantHD and RelHD on Cora. revision: yes

Circularity Check

No significant circularity; optimization references external ideal kernel

full rationale

The paper formulates an optimization that minimizes the Frobenius norm between an ideal kernel and its hardware counterpart, then reports empirical accuracy gains on QuantHD and RelHD under perturbations. No derivation step reduces a claimed prediction or uniqueness result to a fitted parameter or self-citation by construction. The preservation of binding algebra is asserted as an outcome of joint calibration but is not derived from the objective itself in a self-referential loop; the abstract treats it as an empirical property verified on Cora. This is a standard hardware-aware training setup with external benchmarks, yielding a self-contained result.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Hussam Amrouch, Mohsen Imani, et al. 2022. Brain-Inspired Hyperdimensional Computing for Ultra-Efficient Edge AI. In2022 International Conference on Hard- ware/Software Codesign and System Synthesis (CODES+ISSS)

2022

-

[2]

Hamza Errahmouni Barkam, Sanggeon Yun, et al. 2023. Reliable hyperdimen- sional reasoning on unreliable emerging technologies. In2023 IEEE/ACM Inter- national Conference On Computer Aided Design (ICCAD). IEEE, 1–9

2023

-

[3]

Barkam, Sanggeon Yun, Paul R

Hamza E. Barkam, Sanggeon Yun, Paul R. Genssler, Che-Kai Liu, Zhuowen Zou, Hussam Amrouch, and Mohsen Imani. 2024. In-Memory Acceleration of Hy- perdimensional Genome Matching on Unreliable Emerging Technologies.IEEE Transactions on Circuits and Systems I: Regular Papers(2024)

2024

-

[4]

Jiahao Cai, Hamza E Barkam, et al. 2024. A Scalable 2T-1FeFET Based Content Addressable Memory Design for Energy Efficient Data Search.IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems(2024)

2024

-

[5]

H. Chen, A. Zakeri, F. Wen, H. E. Barkam, and M. Imani. 2023. HyperGRAF: Hyperdimensional Graph-Based Reasoning Acceleration on FPGA. InProceedings of the 33rd International Conference on Field-Programmable Logic and Applications (FPL). 1–8

2023

-

[6]

Ron Cole and Mark Fanty. 1991. ISOLET. UCI Machine Learning Repository

1991

-

[7]

Dalvi and V

N. Dalvi and V. Honavar. 2024. Hyperdimensional Representation Learning for Node Classification and Link Prediction. InProceedings of WSDM 2025

2024

-

[8]

Hamza Errahmouni Barkam, Tamoghno Das, et al . 2025. Bayesian-Informed Hyperdimensional Learning for Intelligent and Efficient Data Processing. In Proceedings of the 43rd IEEE/ACM International Conference on Computer-Aided Design (ICCAD ’24)

2025

-

[9]

Said Hamdioui, Lei Xie, et al. 2015. Memristor based computation-in-memory architecture for data-intensive applications. In2015 Design, Automation & Test in Europe Conference & Exhibition (DATE). IEEE

2015

-

[10]

Y. Han, J. Wang, and D. Wang. 2024. CiliaGraph: Enriched Graph Hyperdimen- sional Computing via Symmetric Aggregation. InarXiv preprint arXiv:2401.xxxxx

2024

-

[11]

Alejandro Hernández-Cano, Namiko Matsumoto, et al. 2021. Onlinehd: Robust, efficient, and single-pass online learning using hyperdimensional system. In2021 Design, Automation & Test in Europe Conference & Exhibition (DATE). IEEE

2021

-

[12]

Qingrong Huang, Zeyu Yang, Kai Ni, Mohsen Imani, Cheng Zhuo, and Xunzhao Yin. 2023. FeFET-based in-memory hyperdimensional encoding design.IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems42, 11 (2023), 3829–3839

2023

-

[13]

Mohsen Imani, Samuel Bosch, Sohum Datta, Sharadhi Ramakrishna, Sahand Salamat, Jan M Rabaey, and Tajana Rosing. 2019. Quanthd: A quantization framework for hyperdimensional computing.IEEE Transactions on Computer- Aided Design of Integrated Circuits and Systems39, 10 (2019), 2268–2278

2019

-

[14]

Mohsen Imani, Ali Zakeri, et al. 2022. Neural computation for robust and holo- graphic face detection. InProceedings of the 59th ACM/IEEE Design Automation Conference. 31–36

2022

-

[15]

Pentti Kanerva. 2009. Hyperdimensional computing: An introduction to com- puting in distributed representation with high-dimensional random vectors. Cognitive computation1, 2 (2009), 139–159

2009

-

[16]

Jaeyoung Kang, Minxuan Zhou, et al . 2022. RelHD: A Graph-based Learning on FeFET with Hyperdimensional Computing. In2022 IEEE 40th International Conference on Computer Design (ICCD). IEEE, 553–560

2022

-

[17]

Geethan Karunaratne, Manuel Le Gallo, Giovanni Cherubini, Luca Benini, Abbas Rahimi, and Abu Sebastian. 2020. In-memory hyperdimensional computing. Nature Electronics3, 6 (2020), 327–337

2020

-

[18]

Arman Kazemi, Mohammad Mehdi Sharifi, Zhuowen Zou, Michael Niemier, X Sharon Hu, and Mohsen Imani. 2021. Mimhd: Accurate and efficient hyperdi- mensional inference using multi-bit in-memory computing. In2021 IEEE/ACM International Symposium on Low Power Electronics and Design (ISLPED). IEEE

2021

-

[19]

Bing Li, Bonan Yan, and Hai Li. 2019. An overview of in-memory processing with emerging non-volatile memory for data-intensive applications. InProceedings of the 2019 Great Lakes Symposium on VLSI. 381–386

2019

-

[20]

Haomin Li, Fangxin Liu, Yichi Chen, and Li Jiang. 2023. HyperNode: An Efficient Node Classification Framework Using HyperDimensional Computing. In2023 IEEE/ACM International Conference on Computer Aided Design (ICCAD). IEEE, 1–9

2023

-

[21]

X. Liu, A. Kumar, and B. Brown. 2020. Hardware-Aware Hyperdimensional Com- puting for Compute-In-Memory Systems. InProceedings of the IEEE International Conference on Machine Learning (ICML). 150–160

2020

-

[22]

Francesco Locatello, Dirk Weissenborn, Thomas Unterthiner, Aravindh Mahen- dran, Georg Heigold, Jakob Uszkoreit, Alexey Dosovitskiy, and Thomas Kipf

-

[23]

Object-centric learning with slot attention.Advances in neural information processing systems33 (2020), 11525–11538

2020

-

[24]

Cédric Marchand, Ian O’Connor, Mayeul Cantan, Evelyn T Breyer, Stefan Sle- sazeck, and Thomas Mikolajick. 2021. FeFET based Logic-in-Memory: an overview. In2021 16th International Conference on Design & Technology of Inte- grated Systems in Nanoscale Era (DTIS). IEEE, 1–6

2021

-

[25]

Andrew Kachites McCallum, Kamal Nigam, Jason Rennie, and Kristie Seymore

-

[26]

Information Retrieval3, 2 (2000), 127–163

Automating the construction of internet portals with machine learning. Information Retrieval3, 2 (2000), 127–163

2000

-

[27]

Yang Ni, William Y Chung, Samuel Cho, Zhuowen Zou, and Mohsen Imani. 2024. Efficient exploration in edge-friendly hyperdimensional reinforcement learning. InProceedings of the Great Lakes Symposium on VLSI 2024. 111–118

2024

- [28]

-

[29]

Vivek Parmar, Franz Müller, et al. 2023. Demonstration of Differential Mode Ferroelectric Field-Effect Transistor Array-Based in-Memory Computing Macro for Realizing Multiprecision Mixed-Signal Artificial Intelligence Accelerator. Advanced Intelligent Systems5, 6 (2023), 2200389

2023

-

[30]

Tony Plate. 1995. Holographic Reduced Representations.IEEE transactions on neural networks / a publication of the IEEE Neural Networks Council6 (02 1995), 623–41

1995

-

[31]

Prathyush Poduval, Haleh Alimohamadi, et al. 2022. Graphd: Graph-based hy- perdimensional memorization for brain-like cognitive learning.Frontiers in Neuroscience16 (2022), 757125

2022

-

[32]

Poduval, H

P. Poduval, H. Alimohamadi, A. Zakeri, F. Imani, M. H. Najafi, T. Givargis, and M. Imani. 2022. GrapHD: Graph-based Hyperdimensional Memorization for Reasoning. InFrontiers in Neuroscience, Vol. 16. 757215

2022

-

[33]

Abbas Rahimi, Pentti Kanerva, and Jan M Rabaey. 2016. Robust and efficient hyperdimensional computing using brain-inspired representation.IEEE Transac- tions on Circuits and Systems I: Regular Papers63, 11 (2016), 1997–2007

2016

-

[34]

Muhammad Rashedul Haq Rashed, Sumit Kumar Jha, and Rickard Ewetz. 2021. Hybrid analog-digital in-memory computing. In2021 IEEE/ACM International Conference On Computer Aided Design (ICCAD). IEEE, 1–9

2021

-

[35]

Ali Shafiee, Anirban Nag, et al. 2016. ISAAC: A convolutional neural network accelerator with in-situ analog arithmetic in crossbars.ACM SIGARCH Computer Architecture News44, 3 (2016), 14–26

2016

-

[36]

Christian Shewmake, Domas Buracas, Hansen Lillemark, Jinho Shin, Erik Bekkers, Nina Miolane, and Bruno Olshausen. 2023. Visual Scene Representation with Hier- archical Equivariant Sparse Coding. Proceedings of Machine Learning Research- NeurIPS workshop

2023

-

[37]

Philip Wong, Simone Raoux, SangBum Kim, Jiale Liang, John P

H.-S. Philip Wong, Simone Raoux, SangBum Kim, Jiale Liang, John P. Reifenberg, Bipin Rajendran, Mehdi Asheghi, and Kenneth E. Goodson. 2010. Phase Change Memory.Proc. IEEE98, 12 (2010), 2201–2227. doi:10.1109/JPROC.2010.2070050

- [38]

-

[39]

Han Xiao, Kashif Rasul, and Roland Vollgraf. 2017. Fashion-mnist: a novel image dataset for benchmarking machine learning algorithms.arXiv preprint arXiv:1708.07747(2017)

work page internal anchor Pith review arXiv 2017

-

[40]

Zhicheng Xu, Che-Kai Liu, Chao Li, Ruibin Mao, Jianyi Yang, Thomas Kämpfe, Mohsen Imani, Can Li, Cheng Zhuo, and Xunzhao Yin. 2024. Ferex: A reconfig- urable design of multi-bit ferroelectric compute-in-memory for nearest neighbor search. In2024 Design, Automation & Test in Europe Conference & Exhibition (DATE). IEEE, 1–6

2024

-

[41]

Shimeng Yu. 2018. Neuro-inspired computing with emerging nonvolatile memo- rys.Proc. IEEE106, 2 (2018), 260–285

2018

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.