FRTSearch: Unified Detection and Parameter Inference of Fast Radio Transients using Instance Segmentation

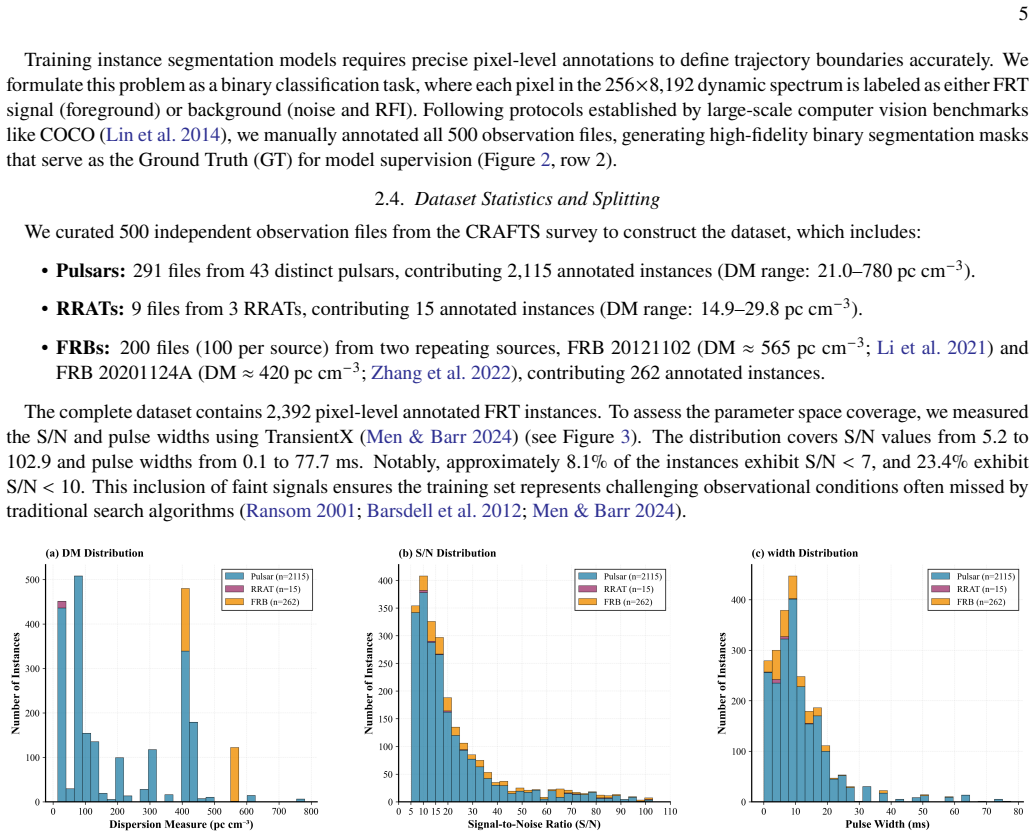

Pith reviewed 2026-05-10 14:37 UTC · model grok-4.3

The pith

A Mask R-CNN segments curved radio signal paths in dynamic spectra to detect fast radio transients and infer their dispersion measures directly from geometry.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

FRTSearch unifies detection and physical characterization by treating dispersive trajectories as universal morphological patterns governed by the cold plasma dispersion relation. A Mask R-CNN trained on the CRAFTS-FRT dataset performs precise trajectory segmentation in dynamic spectra, after which the IMPIC algorithm maps geometric coordinates directly to dispersion measure and time of arrival. On the FAST-FREX benchmark this produces 98 percent recall while cutting false positives by more than 99.9 percent relative to PRESTO and delivering up to 13.9 times faster processing; the same model detects all 19 tested FRBs from the ASKAP survey without retraining.
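For reference, the cold plasma dispersion relation invoked here is conventionally written as a frequency-dependent arrival delay. The expression below is the standard textbook form, not a formula quoted from the paper; the symbols $t_0$ (arrival time at the reference frequency) and $\nu_{\mathrm{ref}}$ are notation chosen for this note.

```latex
t(\nu) = t_0 + K_{\mathrm{DM}}\,\mathrm{DM}\left(\nu^{-2} - \nu_{\mathrm{ref}}^{-2}\right),
\qquad
K_{\mathrm{DM}} \approx 4.148808 \times 10^{3}\ \mathrm{s\,MHz^{2}\,pc^{-1}\,cm^{3}}
% Worked example with illustrative numbers (not from the paper):
% DM = 100 pc cm^-3, nu = 1000 MHz, nu_ref = 1500 MHz
% t - t_0 = 4148.808 * 100 * (1/1000^2 - 1/1500^2) s \approx 0.23 s
```

With $\nu$ in MHz and DM in pc cm$^{-3}$, the delay grows toward lower frequencies, which produces the curved sweep that the segmentation model is trained to trace.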

What carries the argument

Mask R-CNN instance segmentation of dispersive trajectories in time-frequency dynamic spectra, followed by the IMPIC algorithm that converts segmented geometric coordinates into dispersion measure and time of arrival via the cold plasma dispersion relation.
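The source does not describe how IMPIC works internally, so the following is only a minimal sketch of the general idea of mapping trajectory coordinates to DM and ToA. It assumes the segmented mask has already been reduced to (time, frequency) points, and it substitutes an ordinary least-squares fit for whatever IMPIC actually does. The function names and the synthetic test values are hypothetical.

```python
import numpy as np

# Standard dispersion constant in s MHz^2 pc^-1 cm^3.
K_DM = 4.148808e3


def dispersion_delay(dm, freq_mhz, ref_freq_mhz):
    """Cold-plasma arrival delay (s) at freq_mhz relative to ref_freq_mhz."""
    return K_DM * dm * (freq_mhz ** -2.0 - ref_freq_mhz ** -2.0)


def fit_dm_toa(times_s, freqs_mhz, ref_freq_mhz):
    """Least-squares fit of t = toa + K_DM * DM * (f^-2 - f_ref^-2).

    times_s, freqs_mhz: points along one segmented trajectory
    (hypothetical input, e.g. one mask centroid per frequency channel).
    Returns (dm, toa), where toa is the arrival time at ref_freq_mhz.
    """
    times_s = np.asarray(times_s, dtype=float)
    freqs_mhz = np.asarray(freqs_mhz, dtype=float)
    x = K_DM * (freqs_mhz ** -2.0 - ref_freq_mhz ** -2.0)
    design = np.column_stack([x, np.ones_like(x)])
    (dm, toa), *_ = np.linalg.lstsq(design, times_s, rcond=None)
    return float(dm), float(toa)


# Synthetic, noise-free trajectory with made-up parameters.
freqs = np.linspace(1000.0, 1500.0, 64)
true_dm, true_toa = 350.0, 0.25
times = true_toa + dispersion_delay(true_dm, freqs, ref_freq_mhz=1500.0)

dm_hat, toa_hat = fit_dm_toa(times, freqs, ref_freq_mhz=1500.0)
print(f"DM = {dm_hat:.3f} pc cm^-3, ToA = {toa_hat:.4f} s")
```

Because the relation is linear in DM and ToA once frequency is transformed to $\nu^{-2}$, a linear solve is enough for this sketch; real masks would also need outlier handling and a conversion from pixel indices to physical units.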

If this is right

- Detection recall reaches 98 percent on the FAST-FREX benchmark, competitive with exhaustive search methods.

- The number of false positives drops by more than 99.9 percent compared with PRESTO.

- Processing speed increases by up to 13.9 times.

- The trained model transfers directly to ASKAP survey data and recovers all tested events without retraining.
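To make the three headline quantities in the list above concrete, here is a small sketch of how recall, relative false-positive reduction, and speedup are usually computed. The counts and timings are placeholders chosen only to illustrate the arithmetic; they are not taken from the paper.

```python
def recall(true_positives, false_negatives):
    """Fraction of real events that were detected."""
    return true_positives / (true_positives + false_negatives)


def fp_reduction(fp_baseline, fp_method):
    """Relative drop in false-positive count versus a baseline."""
    return 1.0 - fp_method / fp_baseline


def speedup(time_baseline_s, time_method_s):
    """How many times faster the method runs than the baseline."""
    return time_baseline_s / time_method_s


# Placeholder numbers, for illustration only.
example_recall = recall(true_positives=49, false_negatives=1)
example_reduction = fp_reduction(fp_baseline=10_000, fp_method=5)
example_speedup = speedup(time_baseline_s=139.0, time_method_s=10.0)

print(f"recall = {example_recall:.3f}")
print(f"false-positive reduction = {example_reduction:.4%}")
print(f"speedup = {example_speedup:.1f}x")
```

Note that the reduction is relative to the baseline's false-positive count, so its meaning depends on how the baseline was configured.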

Where Pith is reading between the lines

- Combining detection and parameter inference in one step could shorten the overall analysis pipeline for large radio surveys.

- The same segmentation-plus-mapping structure might extend to other transient signals that produce predictable curved features in time-frequency data.

- Real-time operation on incoming data streams becomes feasible once the model runs at survey speeds.

Load-bearing premise

The shapes of dispersive trajectories remain similar enough across different sources, instruments, and observing conditions that a single model trained on one facility's data can segment them accurately enough for direct mapping to physical parameters without facility-specific adjustments.

What would settle it

A test set from an independent telescope where the model misses more than a few percent of confirmed fast radio bursts, or where the inferred dispersion measures deviate systematically from values obtained by conventional dedispersion methods, would show the claim does not hold.
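One way to check for the systematic deviation described above is to compare inferred and reference DMs event by event and ask whether the mean residual differs from zero by more than its standard error. The sketch below assumes paired DM values are available; the function name and the sample values are hypothetical.

```python
import numpy as np


def dm_bias_summary(dm_inferred, dm_reference):
    """Mean DM residual, its standard error, and their ratio (z)."""
    inferred = np.asarray(dm_inferred, dtype=float)
    reference = np.asarray(dm_reference, dtype=float)
    if inferred.shape != reference.shape or inferred.size < 2:
        raise ValueError("need two equal-length arrays with at least 2 events")
    residuals = inferred - reference
    mean = float(residuals.mean())
    sem = float(residuals.std(ddof=1) / np.sqrt(residuals.size))
    # z is undefined when every residual is identical.
    z = mean / sem if sem > 0 else float("nan")
    return {"mean": mean, "sem": sem, "z": z}


# Placeholder DMs in pc cm^-3, for illustration only.
reference = [100.0, 200.0, 300.0, 400.0]
inferred = [102.0, 201.0, 303.0, 402.0]
summary = dm_bias_summary(inferred, reference)
print(summary)
```

A large |z| on an independent test set would point to a systematic offset rather than random scatter; the threshold for "large" is a choice left to the analyst.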

Original abstract

The exponential growth of data from modern radio telescopes presents a significant challenge to traditional single-pulse search algorithms, which are computationally intensive and prone to high false-positive rates due to Radio Frequency Interference (RFI). In this work, we introduce FRTSearch, an end-to-end framework unifying the detection and physical characterization of Fast Radio Transients (FRTs). Leveraging the morphological universality of dispersive trajectories in time-frequency dynamic spectra, we reframe FRT detection as a pattern recognition problem governed by the cold plasma dispersion relation. To facilitate this, we constructed CRAFTS-FRT, a pixel-level annotated dataset derived from the Commensal Radio Astronomy FAST Survey (CRAFTS), comprising 2{,}392 instances across diverse source classes. This dataset enables the training of a Mask R-CNN model for precise trajectory segmentation. Coupled with our physics-driven IMPIC algorithm, the framework maps the geometric coordinates of segmented trajectories to directly infer the Dispersion Measure (DM) and Time of Arrival (ToA). Benchmarking on the FAST-FREX dataset shows that FRTSearch achieves a 98.0% recall, competitive with exhaustive search methods, while reducing false positives by over 99.9% compared to PRESTO and delivering a processing speedup of up to 13.9×. Furthermore, the framework demonstrates robust cross-facility generalization, detecting all 19 tested FRBs from the ASKAP survey without retraining. By shifting the paradigm from "search-then-identify" to "detect-and-infer," FRTSearch provides a scalable, high-precision solution for real-time discovery in the era of petabyte-scale radio astronomy.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces FRTSearch, an end-to-end framework that reframes fast radio transient (FRT) detection as an instance segmentation task on time-frequency dynamic spectra. A Mask R-CNN model is trained on the newly constructed CRAFTS-FRT dataset (2,392 pixel-level annotated instances from the CRAFTS survey) to segment dispersive trajectories; the physics-driven IMPIC algorithm then maps the segmented masks to dispersion measure (DM) and time of arrival (ToA) via the cold-plasma dispersion relation. On the FAST-FREX benchmark the method reports 98.0% recall, >99.9% false-positive reduction relative to PRESTO, and up to 13.9× speedup; it further claims robust cross-facility generalization by detecting all 19 tested ASKAP FRBs without retraining.

Significance. If the performance numbers and generalization claims are substantiated, the work would offer a practical route to real-time, low-FP processing of the petabyte-scale data volumes expected from next-generation radio surveys. The creation of a publicly usable pixel-annotated dataset and the explicit coupling of learned segmentation with an external physical mapping are concrete strengths that could be adopted by other transient-search pipelines.

major comments (3)

- [Benchmarking section (results on ASKAP)] Benchmarking / cross-facility results: The claim of 'robust cross-facility generalization' rests on successful detection of all 19 ASKAP FRBs without retraining. No quantitative segmentation metrics (IoU, precision-recall on masks), DM residuals, or ToA errors are reported for these events, only binary detection success. Because ASKAP and FAST differ in channel bandwidth, total bandwidth, integration time, and RFI environment, the morphological universality assumption remains untested at the level required to support the stronger claim.

- [Dataset construction (Methods)] Methods / dataset construction: The manuscript provides no detailed protocol for constructing CRAFTS-FRT (annotation guidelines, inter-annotator agreement, handling of RFI-contaminated or low-S/N events, train/validation/test splits). Without these, it is impossible to judge whether the reported 98% recall on FAST-FREX is robust or partly reflects annotation bias or test-set leakage.

- [Performance comparison (Results)] Results / baseline comparison: The >99.9% false-positive reduction versus PRESTO is a central performance claim, yet the exact PRESTO configuration (DM search range, S/N threshold, dedispersion parameters) and the precise definition of a false positive in the test set are not stated. This prevents direct reproduction and assessment of whether the reduction is load-bearing or configuration-dependent.

minor comments (2)

- [Abstract] Abstract: The number '2{,}392' uses an atypical thousands separator; conventional notation is 2,392 or 2392.

- [Introduction / Methods] Notation: The acronym 'IMPIC' is introduced without an explicit expansion on first use; a parenthetical definition would improve readability.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. The comments identify areas where additional transparency and quantitative detail will strengthen the manuscript. We address each major comment below and will revise the paper accordingly.

Referee: Benchmarking / cross-facility results: The claim of 'robust cross-facility generalization' rests on successful detection of all 19 ASKAP FRBs without retraining. No quantitative segmentation metrics (IoU, precision-recall on masks), DM residuals, or ToA errors are reported for these events, only binary detection success. Because ASKAP and FAST differ in channel bandwidth, total bandwidth, integration time, and RFI environment, the morphological universality assumption remains untested at the level required to support the stronger claim.

Authors: We agree that reporting only binary detection success for the 19 ASKAP FRBs provides limited quantitative support for the cross-facility claim. While the fact that every event was detected without retraining is consistent with the morphological universality of dispersive trajectories under the cold-plasma relation, we acknowledge that instrumental differences between ASKAP and FAST warrant more rigorous testing. In the revised Benchmarking section we will add IoU, mask-level precision-recall, DM residuals, and ToA errors for the ASKAP events (computed from the available ground-truth parameters) together with a brief discussion of the differing channelization, bandwidth, and RFI environments.

Revision: yes
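As an illustration of the mask-level metric promised in this response, the sketch below computes intersection over union (IoU) for a pair of binary masks. It is a generic definition, not the authors' evaluation code; the toy masks and the convention for two empty masks are assumptions made here.

```python
import numpy as np


def mask_iou(pred_mask, true_mask):
    """Intersection over union of two binary masks of equal shape."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    if pred.shape != true.shape:
        raise ValueError("masks must have the same shape")
    union = np.logical_or(pred, true).sum()
    if union == 0:
        return 1.0  # convention chosen here: two empty masks agree fully
    return float(np.logical_and(pred, true).sum() / union)


# Toy 4x6 masks: two 2x4 rectangles offset by one column.
pred = np.zeros((4, 6), dtype=bool)
pred[1:3, 1:5] = True
true = np.zeros((4, 6), dtype=bool)
true[1:3, 2:6] = True

iou = mask_iou(pred, true)  # 6 shared pixels out of 10 in the union
print(f"IoU = {iou:.2f}")
```

For thin dispersive trajectories, IoU can be sensitive to small offsets in mask width, so reporting it alongside DM and ToA residuals gives a fuller picture.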

Referee: Methods / dataset construction: The manuscript provides no detailed protocol for constructing CRAFTS-FRT (annotation guidelines, inter-annotator agreement, handling of RFI-contaminated or low-S/N events, train/validation/test splits). Without these, it is impossible to judge whether the reported 98% recall on FAST-FREX is robust or partly reflects annotation bias or test-set leakage.

Authors: We accept this criticism. The current Methods section is insufficiently detailed on dataset provenance. In the revised manuscript we will add a dedicated subsection describing the annotation guidelines, the number of annotators and inter-annotator agreement statistics (Cohen’s kappa), the criteria used to include or exclude RFI-contaminated and low-S/N events, and the exact train/validation/test split ratios and randomization procedure.

Revision: yes
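For readers unfamiliar with the agreement statistic named in this response, here is a minimal sketch of Cohen's kappa for two annotators labelling the same items (for example, pixels flagged as signal or background). The label arrays are toy values, and applying kappa at the pixel level is an assumption; the source does not say at what granularity agreement will be measured.

```python
import numpy as np


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    a = np.asarray(labels_a).ravel()
    b = np.asarray(labels_b).ravel()
    if a.shape != b.shape:
        raise ValueError("both annotators must label the same items")
    classes = np.union1d(a, b)
    p_observed = np.mean(a == b)
    # Agreement expected by chance from each annotator's label frequencies.
    p_expected = sum(np.mean(a == c) * np.mean(b == c) for c in classes)
    if p_expected == 1.0:
        return 1.0  # both annotators used a single identical label
    return float((p_observed - p_expected) / (1.0 - p_expected))


# Toy labels: 1 = signal pixel, 0 = background pixel.
annotator_a = [1, 1, 0, 0, 1, 0, 1, 0]
annotator_b = [1, 1, 0, 0, 1, 0, 0, 1]
kappa = cohens_kappa(annotator_a, annotator_b)
print(f"kappa = {kappa:.2f}")
```

In dynamic spectra, background pixels vastly outnumber signal pixels, so raw agreement can look high by chance alone; kappa corrects for that imbalance.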

Referee: Results / baseline comparison: The >99.9% false-positive reduction versus PRESTO is a central performance claim, yet the exact PRESTO configuration (DM search range, S/N threshold, dedispersion parameters) and the precise definition of a false positive in the test set are not stated. This prevents direct reproduction and assessment of whether the reduction is load-bearing or configuration-dependent.

Authors: We thank the referee for noting this reproducibility gap. In the revised Results section we will explicitly document the PRESTO configuration employed (DM search range, S/N threshold, dedispersion step size, and RFI excision settings) and provide the precise operational definition of a false positive used on the FAST-FREX test set, enabling direct replication of the comparison.

Revision: yes
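The paper's operational definition of a false positive is not given in the source, so the following only sketches one common choice: a candidate counts as a false positive if no known event lies within a time tolerance and a DM tolerance of it. The tolerances, the tuple layout, and the sample values are all hypothetical.

```python
def count_false_positives(candidates, truths, time_tol_s, dm_tol):
    """Count candidates that match no ground-truth event.

    candidates, truths: lists of (toa_s, dm) tuples.
    A candidate matches a truth when both |toa| and |dm| differences
    are within the given tolerances (an assumed matching rule).
    """
    false_positives = 0
    for toa, dm in candidates:
        matched = any(
            abs(toa - true_toa) <= time_tol_s and abs(dm - true_dm) <= dm_tol
            for true_toa, true_dm in truths
        )
        if not matched:
            false_positives += 1
    return false_positives


# Illustrative values only.
truths = [(1.20, 350.0), (7.85, 560.0)]
candidates = [
    (1.21, 352.0),  # close in time and DM: counted as a detection
    (3.40, 12.0),   # no nearby truth: false positive
    (7.84, 700.0),  # right time, wrong DM: false positive
]
n_fp = count_false_positives(candidates, truths, time_tol_s=0.05, dm_tol=10.0)
print(f"false positives = {n_fp}")
```

The count depends directly on the tolerances and on the baseline's S/N threshold, which is why the referee asks for both to be stated.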

Circularity Check

No significant circularity in the derivation chain.

The paper's chain consists of constructing a pixel-annotated dataset (CRAFTS-FRT), training a Mask R-CNN for trajectory segmentation, and applying the external cold-plasma dispersion relation through the IMPIC algorithm to convert segmented coordinates into DM and ToA values. Benchmark metrics (98% recall, FP reduction, speedup) are empirical results from testing on FAST-FREX and qualitative checks on ASKAP events; none of these steps reduce by construction to the training inputs or to a self-referential fit. The morphological-universality premise is an assumption, not a definitional loop, and no load-bearing self-citations, ansatzes, or renamed known results appear in the provided derivation.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: Dispersive trajectories of fast radio transients obey the cold plasma dispersion relation and exhibit sufficient morphological universality to be treated as a pattern-recognition task.

