Distributional Inverse Homogenization

Pith reviewed 2026-05-13 08:03 UTC · model grok-4.3

The pith

Large collections of bulk mechanical properties can be inverted to recover the statistical distribution of microstructure.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

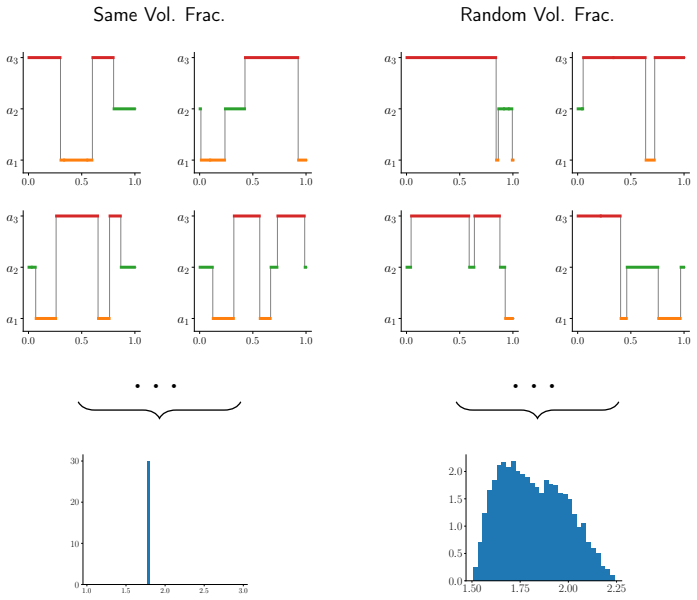

The homogenization map is invertible at the distributional level: given sufficiently many bulk measurements, the probability law on the microstructure can be recovered. This is demonstrated empirically for two-dimensional Voronoi constructions and underpinned theoretically in one dimension. A surrogate model is learned concurrently to accelerate repeated evaluations of the map.

What carries the argument

Distributional inverse homogenization: the statistical inversion procedure that matches the observed distribution of homogenized bulk responses to the unknown distribution over microstructures.
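The matching idea can be sketched in a deliberately small 1D toy setting. This is not the paper's implementation: the layered material with equal-width layers, the log-normal layer law with log-mean `theta` and fixed log-standard-deviation 0.5, the grid search, and all function names are assumptions made for illustration. The one standard ingredient is that the 1D homogenized coefficient of such a laminate is the harmonic mean of the layer coefficients.

```python
import numpy as np

def effective_modulus_1d(layers):
    """Harmonic mean over equal-width layers: the 1D homogenized coefficient."""
    return 1.0 / np.mean(1.0 / layers, axis=-1)

def sample_bulk(theta, n_samples, n_layers, rng):
    """Draw microstructures (hypothetical log-normal layer moduli) and homogenize them."""
    layers = np.exp(theta + 0.5 * rng.standard_normal((n_samples, n_layers)))
    return effective_modulus_1d(layers)

def wasserstein1_1d(x, y):
    """W1 between two equal-size empirical measures on the line (sorted coupling)."""
    return np.mean(np.abs(np.sort(x) - np.sort(y)))

def fit_theta(observed, grid, n_layers, seed=1):
    """Grid search for the theta whose simulated bulk law is closest to the data."""
    losses = []
    for theta in grid:
        # Reuse the same seed for every theta (common random numbers),
        # so the loss varies smoothly along the grid.
        rng = np.random.default_rng(seed)
        simulated = sample_bulk(theta, observed.size, n_layers, rng)
        losses.append(wasserstein1_1d(observed, simulated))
    return grid[int(np.argmin(losses))]

# Synthetic "measured" bulk properties generated with a known parameter.
rng = np.random.default_rng(0)
theta_true = 1.0
observed = sample_bulk(theta_true, 2000, 8, rng)

grid = np.linspace(0.0, 2.0, 41)
theta_hat = fit_theta(observed, grid, n_layers=8)
print(theta_hat)
```

Only the collection of bulk values enters `fit_theta`; no individual microstructure is ever observed, which is the sense in which the inversion is distributional.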

If this is right

- Noninvasive recovery of microstructural statistics becomes feasible using only repeated bulk tests.

- A surrogate model for the homogenization map is obtained as a byproduct and speeds up subsequent calculations.

- Natural spatial variability within a sample supplies independent realizations that enable the distributional inversion.

- The same framework applies to both periodic and stochastic homogenization settings.

Where Pith is reading between the lines

- The approach could be extended to three-dimensional microstructures and more complex constitutive behavior.

- It opens a route to uncertainty quantification on inferred microstructural distributions when combined with Bayesian methods.

- Optimal experimental design could select which bulk tests to perform to maximize information about the microstructure distribution.

- The learned surrogate might serve as a fast forward model inside larger-scale engineering simulations that propagate microstructural variability.

Load-bearing premise

That collections of bulk properties contain enough information for the homogenization map to be invertible at the level of probability distributions over microstructures.

What would settle it

A controlled experiment in which microstructures drawn from the inferred distribution produce bulk-property statistics that fail to match the measured collection.

Original abstract

For many materials, macroscopic mechanical behavior is determined by an intricate microstructure. Understanding the relation between these two scales helps scientists and engineers design better materials. The relation which maps microstructure to bulk mechanical properties can be understood via the well-established theory of homogenization. However, inverting the homogenization process to recover microstructural information from measured macroscopic properties is fraught with difficulties because of the averaging processes that underlie homogenization. Therefore, scientists and engineers usually need recourse to more invasive, often highly localized, investigations to learn about a microstructure. In this work, we develop a noninvasive methodology by which one can leverage large collections of measured bulk mechanical properties to learn information about the statistics of microstructure at a global level. We call this distributional inverse homogenization. We study this problem in one and two dimensions, considering both periodic and stochastic homogenization. We demonstrate the methodology in the context of 2D Voronoi constructions and underpin the observed empirical success with theory in 1D. We also show how the natural spatial variability of microstructure can be exploited to gather data that enables distributional inversion. And we concurrently learn a surrogate model, approximating the homogenization map, that accelerates the resulting computations in this setting. The work formulates a new class of inverse problems, bridging ideas from probability and homogenization to facilitate the learning of microstructural material variability from macroscopic measurements.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes 'distributional inverse homogenization' as a noninvasive method to infer microstructural statistics from distributions of bulk mechanical properties. It develops the approach for 1D periodic and stochastic homogenization with theoretical support, demonstrates it numerically for 2D Voronoi microstructures, exploits spatial variability for data collection, and learns a surrogate homogenization model.

Significance. If the central claims hold, the work bridges homogenization theory with probabilistic methods to enable learning of microstructural variability from macroscopic measurements, potentially reducing the need for invasive techniques. The 1D theoretical results under periodicity and ergodicity, together with the surrogate model for accelerating computations, represent clear strengths.

major comments (3)

- [Section 3] The 1D invertibility result is established under periodicity and ergodicity assumptions, but the manuscript provides no corresponding uniqueness or identifiability theorem for the stochastic 2D case, leaving the central claim that the distributional homogenization map is injective unsupported beyond the 1D setting.

- [Section 4] The numerical experiments on 2D Voronoi ensembles show empirical success in recovering statistics, yet no analysis rules out the possibility that distinct two-point or higher-order correlation functions could produce indistinguishable effective-modulus distributions, especially with finite sample sizes.

- [Section 4] The surrogate model is learned concurrently but without reported error bounds, convergence rates, or validation against the true homogenization operator in the distributional sense, which is load-bearing for the claimed computational acceleration.
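The identifiability worry in the second major comment can be illustrated in a much simpler setting than the paper's 2D Voronoi ensembles. The sketch below assumes a 1D laminate with equal-width layers, where the homogenized coefficient is the harmonic mean of the layer values; the two layouts and the helper `two_point` are hypothetical choices made for the example. Two arrangements with different two-point statistics give the same effective modulus, so a single bulk value cannot separate them.

```python
import numpy as np

def effective_modulus_1d(layers):
    """Harmonic mean over equal-width layers (1D homogenized coefficient)."""
    layers = np.asarray(layers, dtype=float)
    return 1.0 / np.mean(1.0 / layers)

def two_point(layers, lag):
    """Empirical two-point statistic: mean product of values `lag` layers apart."""
    layers = np.asarray(layers, dtype=float)
    return np.mean(layers[:-lag] * layers[lag:])

blocked = [1.0, 1.0, 4.0, 4.0]      # soft layers grouped together
alternating = [1.0, 4.0, 1.0, 4.0]  # same layers, interleaved

print(effective_modulus_1d(blocked), effective_modulus_1d(alternating))
print(two_point(blocked, 1), two_point(alternating, 1))
```

Whether an analogous degeneracy survives at the level of distributions of bulk properties in 2D is exactly the question the referee raises; this toy case does not answer it.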

minor comments (2)

- The abstract states the methodology is demonstrated in 2D Voronoi constructions but does not specify the range of volume fractions or contrast ratios tested, which would help assess generality.

- Notation for the microstructure distribution and the induced bulk-property distribution is introduced without an explicit table of symbols or consistent use across sections.

Simulated Author's Rebuttal

We thank the referee for the constructive comments and the recommendation for major revision. We provide point-by-point responses to the major comments below, indicating where revisions will be made to the manuscript.

- Referee: [Section 3] The 1D invertibility result is established under periodicity and ergodicity assumptions, but the manuscript provides no corresponding uniqueness or identifiability theorem for the stochastic 2D case, leaving the central claim that the distributional homogenization map is injective unsupported beyond the 1D setting.

  Authors: We agree that a general uniqueness theorem for the stochastic 2D case is not provided. The 1D result serves as theoretical underpinning, while the 2D results are demonstrated numerically. We will revise Section 3 to clarify the assumptions and scope, and add a discussion in the conclusions about extending the identifiability analysis to higher dimensions as future work.

  Revision: partial

- Referee: [Section 4] The numerical experiments on 2D Voronoi ensembles show empirical success in recovering statistics, yet no analysis rules out the possibility that distinct two-point or higher-order correlation functions could produce indistinguishable effective-modulus distributions, especially with finite sample sizes.

  Authors: This is a valid concern regarding potential non-uniqueness. Our experiments focus on Voronoi tessellations, which are common in materials science, and show successful recovery. We will add a paragraph in Section 4 discussing the limitations of finite samples and the possibility of non-identifiability for other microstructures, along with suggestions for future validation with diverse correlation structures.

  Revision: partial

- Referee: [Section 4] The surrogate model is learned concurrently but without reported error bounds, convergence rates, or validation against the true homogenization operator in the distributional sense, which is load-bearing for the claimed computational acceleration.

  Authors: We acknowledge the need for rigorous validation of the surrogate model. In the revised version, we will include quantitative error analysis, such as L2 error bounds on the effective modulus predictions, convergence rates with respect to training data size, and distributional comparisons (e.g., via Wasserstein distance) between the surrogate and true homogenization outputs. This will substantiate the acceleration claims.

  Revision: yes
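The kind of surrogate validation promised in the last response could look roughly like the following sketch. Everything concrete here is an assumption for illustration: the "true" map is the 1D harmonic-mean homogenization of an eight-layer laminate, the surrogate is an ordinary least-squares fit on three hand-picked features, and the layer values are uniform on [1, 3]. None of this is taken from the paper. The sketch reports a relative L2 error and a 1D Wasserstein-1 distance on held-out data for increasing training-set sizes.

```python
import numpy as np

def true_map(layers):
    """Reference homogenization map in the toy setting: row-wise harmonic mean."""
    return 1.0 / np.mean(1.0 / layers, axis=1)

def features(layers):
    """Hypothetical surrogate features: bias, arithmetic mean, mean log-modulus."""
    return np.column_stack([
        np.ones(len(layers)),
        layers.mean(axis=1),
        np.log(layers).mean(axis=1),
    ])

def fit_surrogate(layers, targets):
    """Least-squares surrogate; returns a callable predictor."""
    coef, *_ = np.linalg.lstsq(features(layers), targets, rcond=None)
    return lambda new_layers: features(new_layers) @ coef

def relative_l2_error(pred, truth):
    return np.linalg.norm(pred - truth) / np.linalg.norm(truth)

def wasserstein1_1d(x, y):
    """W1 between two equal-size empirical measures on the line."""
    return np.mean(np.abs(np.sort(x) - np.sort(y)))

def sample_layers(n, rng):
    return rng.uniform(1.0, 3.0, size=(n, 8))

rng = np.random.default_rng(0)
test_layers = sample_layers(5000, rng)
test_truth = true_map(test_layers)

for n_train in (50, 500, 5000):
    train_layers = sample_layers(n_train, rng)
    surrogate = fit_surrogate(train_layers, true_map(train_layers))
    pred = surrogate(test_layers)
    print(n_train,
          relative_l2_error(pred, test_truth),
          wasserstein1_1d(pred, test_truth))
```

The two metrics answer different questions. The L2 error is pointwise, while the Wasserstein distance compares only the output distributions, which is the quantity that enters a distributional inversion; since the sorted coupling is optimal on the line, the 1D Wasserstein-1 distance can never exceed the mean absolute pointwise error.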

Circularity Check

No circularity; derivation is self-contained with independent 1D proof

The paper establishes the core invertibility result via an explicit 1D proof under periodicity and ergodicity (Section 3) that does not rely on fitted parameters or self-citations for its validity. The 2D Voronoi results are presented as numerical demonstrations rather than derivations that reduce to inputs. No self-definitional steps, fitted inputs renamed as predictions, or load-bearing self-citations appear in the provided text. The methodology learns a surrogate for the homogenization map concurrently but treats this as an acceleration tool, not a circular justification of the distributional inversion itself.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: homogenization theory provides a map from microstructure to bulk properties.

Lean theorems connected to this paper

- `IndisputableMonolith/Cost/FunctionalEquation.lean`, `washburn_uniqueness_aczel` (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: homogenization map $F^\dagger : A \mapsto \chi \mapsto \bar{A}$ (Eq. 7); distributional objective $J_p(\theta) = D\big(P^N_A,\ \eta * (F^\dagger)_\# P_A(\theta)\big)$ using sliced-Wasserstein.

- `IndisputableMonolith/Foundation/ArithmeticFromLogic.lean`, LogicNat recovery (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: Dirichlet(γ,β) volume-fraction model and identifiability theorems 4.1–4.2.
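The first passage above names a sliced-Wasserstein discrepancy as the distance D in the distributional objective. As a rough illustration of what such a discrepancy computes, here is a minimal NumPy sketch of a Monte Carlo sliced p-Wasserstein distance between two equal-size sample sets. The function name, the number of projections, and the equal-sample-size restriction are assumptions of this sketch, and it makes no claim to reproduce the estimator or library routine used in the paper.

```python
import numpy as np

def sliced_wasserstein(x, y, n_projections=200, p=2, seed=0):
    """Monte Carlo sliced p-Wasserstein distance between samples x, y of shape (n, d).

    Both sample sets must have the same number of points, so that each
    1D optimal coupling reduces to matching sorted projections.
    """
    rng = np.random.default_rng(seed)
    # Random directions, normalized to lie on the unit sphere in R^d.
    directions = rng.standard_normal((n_projections, x.shape[1]))
    directions /= np.linalg.norm(directions, axis=1, keepdims=True)
    # Project onto each direction and sort along the sample axis.
    x_proj = np.sort(x @ directions.T, axis=0)
    y_proj = np.sort(y @ directions.T, axis=0)
    # Average the 1D p-th power costs over samples and directions.
    return np.mean(np.abs(x_proj - y_proj) ** p) ** (1.0 / p)

rng = np.random.default_rng(1)
samples = rng.standard_normal((500, 3))
shifted = samples + np.array([1.0, 0.0, 0.0])

print(sliced_wasserstein(samples, samples))
print(sliced_wasserstein(samples, shifted))
```

In an objective of the form quoted above, `x` would play the role of the measured bulk properties and `y` the (noise-perturbed) pushforward samples generated from a candidate parameter θ.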

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

