2B or Not 2B: A Tale of Three Algorithms for Streaming Covariance Estimation after Welford and Chan-Golub-LeVeque

Gram favors batch speed, Welford resists mean shifts, CGL enables merging, and conformal sets give valid entrywise intervals.

abstract

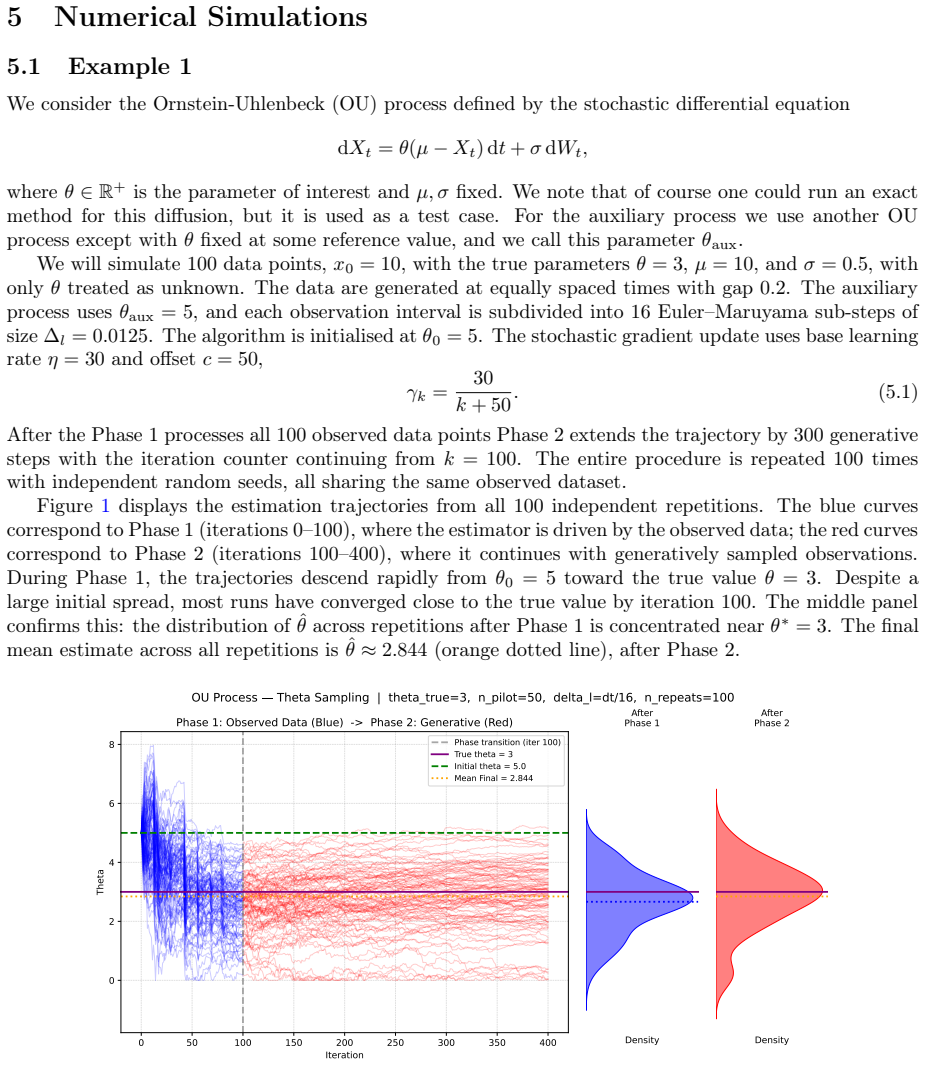

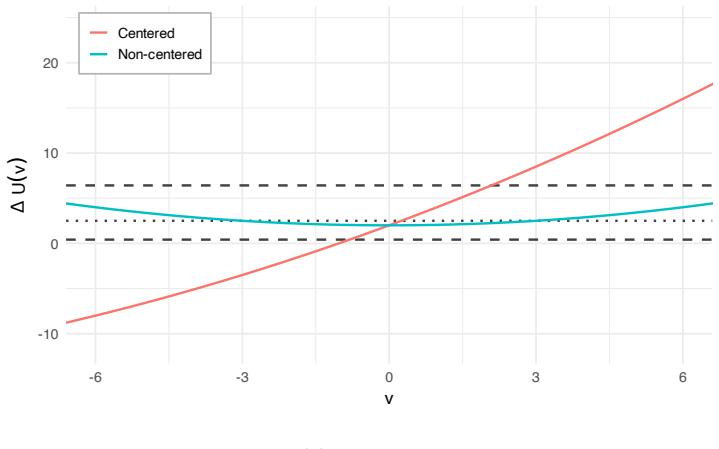

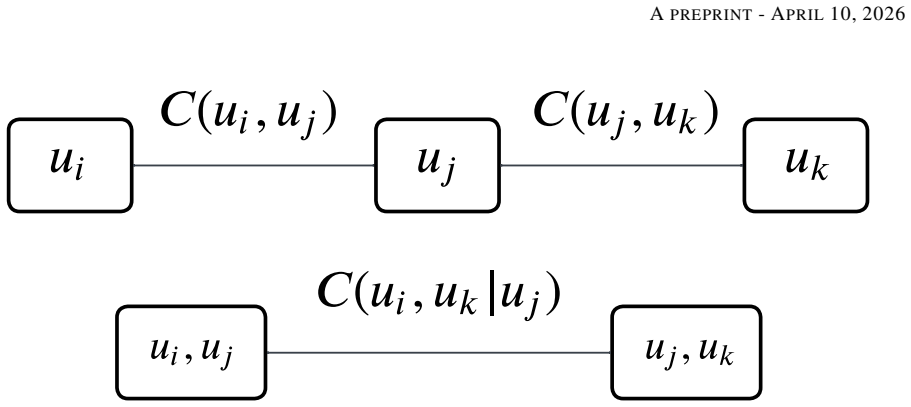

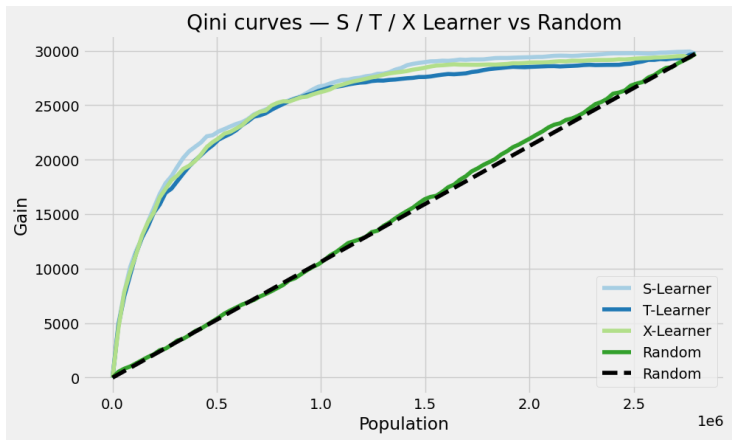

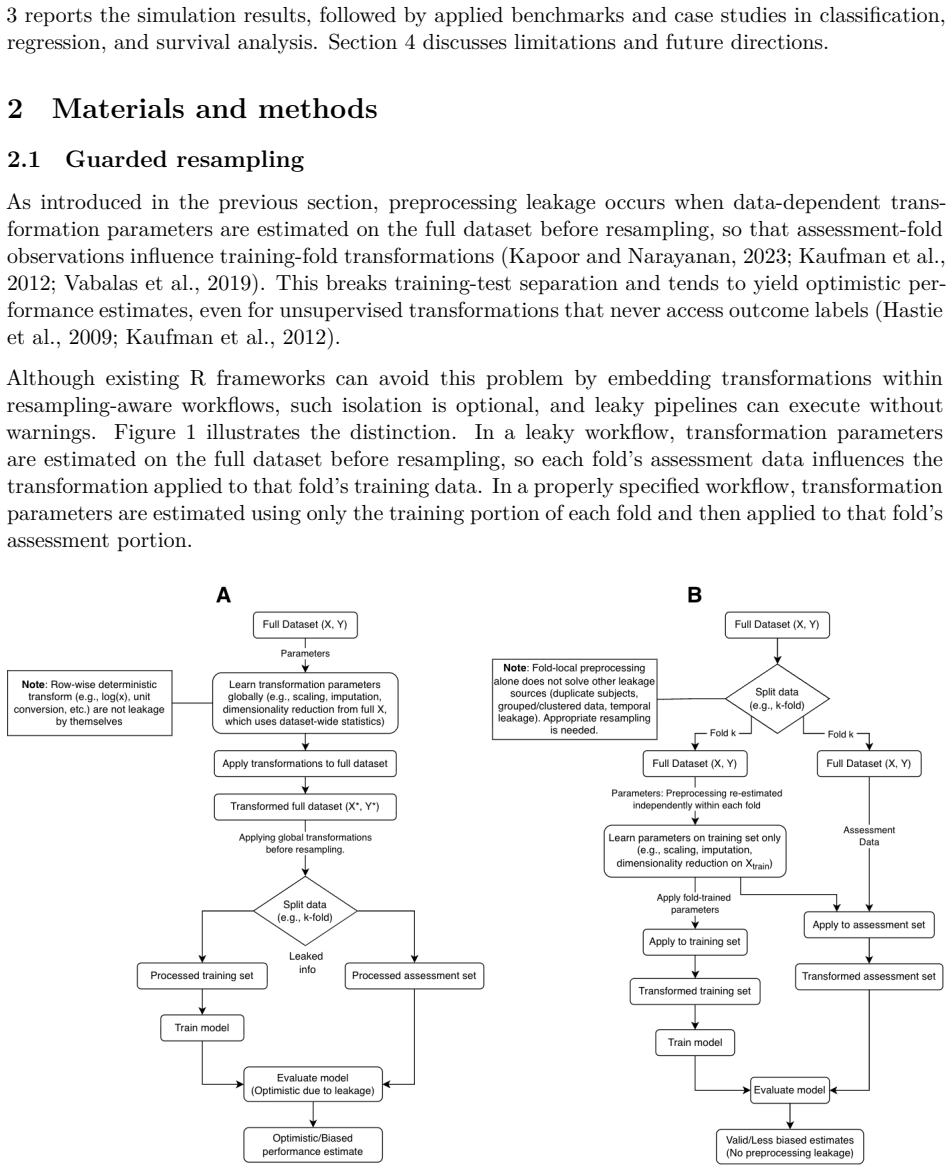

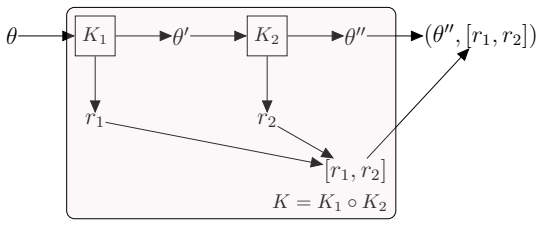

We place three algorithms for computing the unbiased sample covariance matrix in streaming and distributed settings on a common algebraic, numerical, and statistical foundation. The Gram algorithm, derived from the variance reformulation, maintains the running cross-product matrix $G_t = \sum_{i=1}^t x_i x_i^\top$ and the column-sum vector $s_t = \sum_{i=1}^t x_i$, yielding the unbiased covariance estimator $S_t = (t-1)^{-1}(G_t - t^{-1}s_t s_t^\top)$ in $O(p^2)$ time per update. The Welford algorithm propagates a running mean $m_t$ and outer-product corrections $M_t$, with updates $m_t = m_{t-1} + (x_t - m_{t-1})/t$ and $M_t = M_{t-1} + (x_t - m_{t-1})(x_t - m_t)^\top$, achieving the same asymptotic cost with improved numerical stability under large data shifts. The Chan-Golub-LeVeque algorithm supports block-parallel merging through the exact identity $M = M_A + M_B + \frac{n_A n_B}{n_A+n_B}(m_B - m_A)(m_B - m_A)^\top$, making it the natural choice for distributed and map-reduce architectures. All three algorithms produce the same estimator $S_t = M_t/(t-1)$ in exact arithmetic, although their finite-precision behavior differs markedly. Beyond runtime and numerical comparisons, we introduce a conformal prediction framework for streaming covariance estimation that yields finite-sample, distribution-free confidence sets $C_{t,jk}$ for each entry $S_{t,jk}$ of the covariance matrix at any step $t$ of the data stream. Experiments confirm that the Gram algorithm is fastest for batch computation, Welford is uniquely robust to catastrophic cancellation under large mean shifts, CGL is optimal for distributed settings, and conformal intervals achieve the nominal coverage level across all three algorithms.
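As a rough illustration of the three update rules stated in the abstract, the following pure-Python sketch implements the Gram estimator, the Welford recursion, and the Chan-Golub-LeVeque merge, then checks that they agree on a small data set. The function names (`gram_cov`, `welford_state`, `cgl_merge`, `cov_from_state`) and the toy data are illustrative assumptions, not taken from the paper, and the sketch assumes non-empty blocks with at least two observations in total.

```python
# Minimal sketch of the three covariance algorithms (names are hypothetical).

def gram_cov(xs):
    """Gram form: S = (G - s s^T / t) / (t - 1)."""
    t, p = len(xs), len(xs[0])
    G = [[0.0] * p for _ in range(p)]   # running cross-product matrix G_t
    s = [0.0] * p                       # running column-sum vector s_t
    for x in xs:
        for j in range(p):
            s[j] += x[j]
            for k in range(p):
                G[j][k] += x[j] * x[k]
    return [[(G[j][k] - s[j] * s[k] / t) / (t - 1) for k in range(p)]
            for j in range(p)]

def welford_state(xs):
    """Run the Welford updates over xs and return the state (n, m, M)."""
    p = len(xs[0])
    n, m = 0, [0.0] * p
    M = [[0.0] * p for _ in range(p)]
    for x in xs:
        n += 1
        d_old = [x[j] - m[j] for j in range(p)]       # x_t - m_{t-1}
        m = [m[j] + d_old[j] / n for j in range(p)]   # m_t
        d_new = [x[j] - m[j] for j in range(p)]       # x_t - m_t
        for j in range(p):
            for k in range(p):
                M[j][k] += d_old[j] * d_new[k]
    return n, m, M

def cgl_merge(a, b):
    """Merge two (n, m, M) states with the Chan-Golub-LeVeque identity."""
    (nA, mA, MA), (nB, mB, MB) = a, b
    n, p = nA + nB, len(mA)
    d = [mB[j] - mA[j] for j in range(p)]             # m_B - m_A
    m = [mA[j] + d[j] * nB / n for j in range(p)]     # pooled mean
    w = nA * nB / n
    M = [[MA[j][k] + MB[j][k] + w * d[j] * d[k] for k in range(p)]
         for j in range(p)]
    return n, m, M

def cov_from_state(state):
    """Unbiased estimator S = M / (n - 1)."""
    n, _, M = state
    return [[v / (n - 1) for v in row] for row in M]

# Toy data: five observations in p = 2 dimensions.
data = [[1.0, 2.0], [2.0, 1.0], [4.0, 3.0], [7.0, 9.0], [5.0, 5.0]]
S_gram = gram_cov(data)
S_welford = cov_from_state(welford_state(data))
S_cgl = cov_from_state(cgl_merge(welford_state(data[:2]), welford_state(data[2:])))
```

On well-scaled data like this the three results should agree to rounding error. One way to probe the finite-precision differences the abstract describes is to add a large constant offset to every observation and compare the outputs again.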