Recognition: unknown

Continual Learning for fMRI-Based Brain Disorder Diagnosis via Functional Connectivity Matrices Generative Replay

Pith reviewed 2026-05-10 12:21 UTC · model grok-4.3

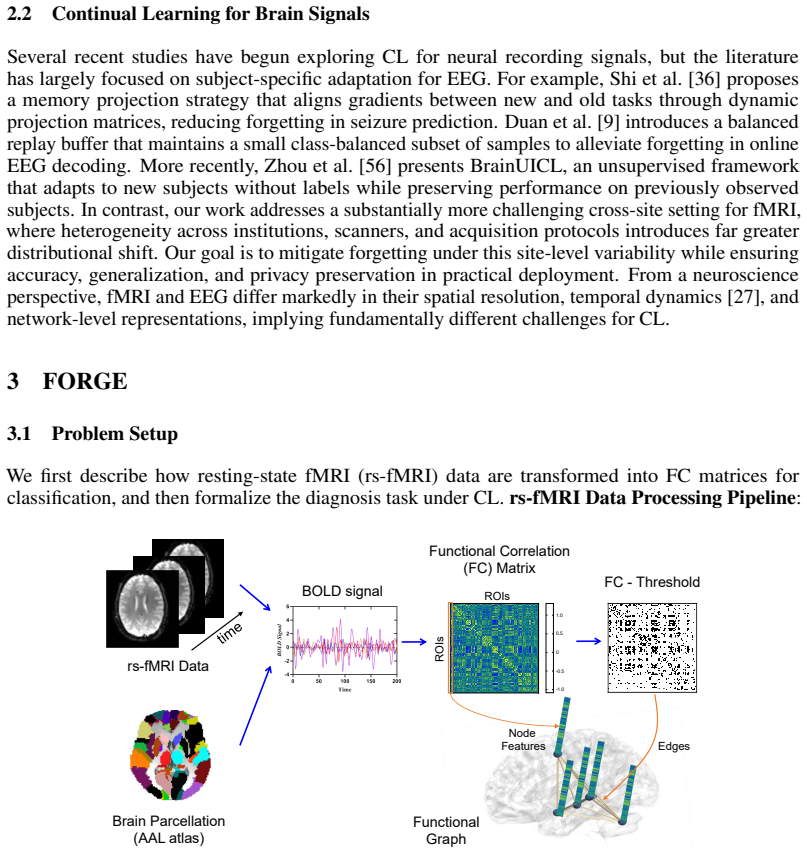

The pith

A structure-aware variational autoencoder enables continual learning for fMRI brain disorder diagnosis from sequentially arriving clinical sites.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors present the first continual learning framework tailored to fMRI-based diagnosis. A structure-aware variational autoencoder synthesizes realistic functional connectivity matrices for both patient and control groups from previous sites. These replayed samples are used with a multi-level knowledge distillation strategy that aligns both predictions and graph representations, plus a hierarchical contextual bandit that selects which samples to replay. Experiments across multi-site MDD, SZ, and ASD datasets show the generative model improves augmentation quality and the full framework substantially reduces catastrophic forgetting compared with existing methods.

What carries the argument

Structure-aware variational autoencoder that generates synthetic functional connectivity matrices, supported by multi-level knowledge distillation for prediction and graph alignment and a hierarchical contextual bandit for adaptive replay sampling.

If this is right

- The framework maintains higher diagnostic accuracy on prior sites than existing continual learning approaches when new sites are added sequentially.

- Synthetic matrices produced by the structure-aware autoencoder improve data augmentation for both patient and control groups.

- The hierarchical bandit reduces the number of replay samples needed while preserving performance gains.

- The method supports continual training across heterogeneous sites for multiple disorders without requiring simultaneous access to all data.

Where Pith is reading between the lines

- If the generated matrices preserve privacy-sensitive properties, the approach could allow institutions to share only model updates rather than raw scans.

- The same generative replay pattern might extend to other sequential neuroimaging tasks, such as longitudinal tracking within a single disorder.

- Performance on rare subtypes of each disorder would need separate testing, since the current experiments aggregate across typical cases.

Load-bearing premise

The synthetic functional connectivity matrices generated by the autoencoder must match the statistical and graph properties of real data from earlier sites closely enough that replay does not create harmful distribution shift.

What would settle it

Measure whether classification accuracy on data from the first site drops sharply after the model has been trained on data from a second site using the replay method, or whether graph metrics such as clustering coefficients and edge-weight distributions of the generated matrices deviate systematically from those of held-out real matrices.

Figures

read the original abstract

Functional magnetic resonance imaging (fMRI) is widely used for studying and diagnosing brain disorders, with functional connectivity (FC) matrices providing powerful representations of large-scale neural interactions. However, existing diagnostic models are trained either on a single site or under full multi-site access, making them unsuitable for real-world scenarios where clinical data arrive sequentially from different institutions. This results in limited generalization and severe catastrophic forgetting. This paper presents the first continual learning framework specifically designed for fMRI-based diagnosis across heterogeneous clinical sites. Our framework introduces a structure-aware variational autoencoder that synthesizes realistic FC matrices for both patient and control groups. Built on this generative backbone, we develop a multi-level knowledge distillation strategy that aligns predictions and graph representations between new-site data and replayed samples. To further enhance efficiency, we incorporate a hierarchical contextual bandit scheme for adaptive replay sampling. Experiments on multi-site datasets for major depressive disorder (MDD), schizophrenia (SZ), and autism spectrum disorder (ASD) show that the proposed generative model enhances data augmentation quality, and the overall continual learning framework substantially outperforms existing methods in mitigating catastrophic forgetting. Our code is available at https://github.com/4me808/FORGE.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to present the first continual learning framework for fMRI-based brain disorder diagnosis across heterogeneous clinical sites. It introduces a structure-aware variational autoencoder to synthesize realistic FC matrices for replay, a multi-level knowledge distillation strategy to align predictions and graph representations, and a hierarchical contextual bandit scheme for adaptive replay sampling. Experiments on multi-site datasets for MDD, SZ, and ASD show that the generative model enhances data augmentation quality and the overall framework substantially outperforms existing methods in mitigating catastrophic forgetting.

Significance. If the results hold, the framework could enable practical deployment of diagnostic models under sequential data arrival from different institutions, addressing a key limitation in clinical ML applications. The public code release at the GitHub link is a clear strength that supports reproducibility and extension by the community.

major comments (2)

- [Experiments] Experiments section: The abstract and results claim consistent outperformance and enhanced augmentation quality on three disorder datasets, but provide no details on full baseline implementations, statistical significance tests, ablation studies, or data split procedures. This leaves the support for the central claim of substantial forgetting mitigation at a moderate level.

- [Generative Model and Replay] Generative model and replay sections: The structure-aware VAE is presented as producing synthetic FC matrices whose statistical and graph properties (correlation structure, modularity, hub connectivity) match real prior-site data, yet no quantitative fidelity metrics (MMD, Wasserstein distances on eigenvalue spectra or graph features) are reported comparing synthetic vs. real samples. This assumption is load-bearing for the multi-level distillation and bandit replay to avoid harmful shift or artifact alignment.

minor comments (2)

- [Method] The hierarchical contextual bandit scheme description would benefit from pseudocode or an explicit diagram to clarify context parameters, exploration, and sampling efficiency.

- [Method] Notation for the multi-level distillation losses could be made more explicit with equation numbers to facilitate understanding of how predictions and graph representations are aligned.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and constructive comments. We have addressed each major point below with clarifications and revisions to strengthen the manuscript's experimental rigor and validation of the generative component.

read point-by-point responses

-

Referee: [Experiments] Experiments section: The abstract and results claim consistent outperformance and enhanced augmentation quality on three disorder datasets, but provide no details on full baseline implementations, statistical significance tests, ablation studies, or data split procedures. This leaves the support for the central claim of substantial forgetting mitigation at a moderate level.

Authors: We agree that additional experimental details are needed to fully support the claims. In the revised manuscript, we have expanded the Experiments section with: (i) complete specifications of all baseline implementations, including hyperparameters, architectures, and training protocols for methods such as EWC, SI, GEM, and standard replay; (ii) statistical significance testing via paired Wilcoxon signed-rank tests with reported p-values across all comparisons; (iii) comprehensive ablation studies isolating the contributions of the structure-aware VAE, multi-level distillation, and hierarchical bandit sampling; and (iv) explicit data split procedures, including sequential site-wise arrival order, per-site 70/30 train/test splits, and cross-site validation to ensure no data leakage. These additions provide stronger quantitative backing for the forgetting mitigation results. revision: yes

-

Referee: [Generative Model and Replay] Generative model and replay sections: The structure-aware VAE is presented as producing synthetic FC matrices whose statistical and graph properties (correlation structure, modularity, hub connectivity) match real prior-site data, yet no quantitative fidelity metrics (MMD, Wasserstein distances on eigenvalue spectra or graph features) are reported comparing synthetic vs. real samples. This assumption is load-bearing for the multi-level distillation and bandit replay to avoid harmful shift or artifact alignment.

Authors: We acknowledge the value of quantitative fidelity metrics to rigorously validate the generative replay. While the original manuscript demonstrated fidelity through qualitative visualizations of correlation structures and improved downstream diagnostic performance, we have added explicit quantitative comparisons in the revision. These include Maximum Mean Discrepancy (MMD) scores, Wasserstein distances computed on the eigenvalue spectra of the FC matrices, and direct comparisons of graph features (modularity indices, hub node degrees, and clustering coefficients) between real and synthetic samples from each prior site. The results confirm close distributional alignment, supporting that the replayed data do not introduce harmful shifts that would undermine the distillation or bandit components. revision: yes

Circularity Check

No significant circularity; claims rest on external empirical evaluation

full rationale

The paper's derivation chain consists of training a structure-aware VAE on site-specific FC data, using generated samples for replay, applying multi-level distillation, and evaluating diagnostic performance on held-out real multi-site datasets for MDD, SZ, and ASD. No load-bearing step reduces a claimed prediction or result to its own fitted inputs by construction, nor relies on self-citation chains or imported uniqueness theorems. The reported gains in mitigating catastrophic forgetting are measured against external baselines on actual patient data, making the framework self-contained against independent benchmarks.

Axiom & Free-Parameter Ledger

free parameters (3)

- VAE architecture and loss weights

- Distillation hyperparameters

- Bandit exploration and context parameters

axioms (2)

- domain assumption Functional connectivity matrices from fMRI capture diagnostically relevant large-scale neural interactions

- ad hoc to paper Synthetic FC matrices generated by the structure-aware VAE can serve as faithful replay buffers without introducing systematic bias

invented entities (3)

-

Structure-aware variational autoencoder

no independent evidence

-

Multi-level knowledge distillation strategy

no independent evidence

-

Hierarchical contextual bandit scheme

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Leveraging clinical data across healthcare institutions for continual learning of predictive risk models

Fatemeh Amrollahi, Supreeth P Shashikumar, Andre L Holder, and Shamim Nemati. Leveraging clinical data across healthcare institutions for continual learning of predictive risk models. Scientific reports, 12(1):8380, 2022

2022

-

[2]

Dark experience for general continual learning: a strong, simple baseline.Advances in neural information processing systems, 33:15920–15930, 2020

Pietro Buzzega, Matteo Boschini, Angelo Porrello, Davide Abati, and Simone Calderara. Dark experience for general continual learning: a strong, simple baseline.Advances in neural information processing systems, 33:15920–15930, 2020

2020

-

[3]

Antonio Carta, Andrea Cossu, Federico Errica, and Davide Bacciu. Catastrophic forgetting in deep graph networks: an introductory benchmark for graph classification.arXiv preprint arXiv:2103.11750, 2021

-

[4]

Efficient lifelong learning with a-GEM

Arslan Chaudhry, Marc’Aurelio Ranzato, Marcus Rohrbach, and Mohamed Elhoseiny. Efficient lifelong learning with a-GEM. InInternational Conference on Learning Representations, 2019

2019

-

[5]

On Tiny Episodic Memories in Continual Learning

Arslan Chaudhry, Marcus Rohrbach, Mohamed Elhoseiny, Thalaiyasingam Ajanthan, Puneet K Dokania, Philip HS Torr, and Marc’Aurelio Ranzato. On tiny episodic memories in continual learning.arXiv preprint arXiv:1902.10486, 2019

work page Pith review arXiv 1902

-

[6]

Psychosis biotypes: replication and validation from the b-snip consortium.Schizophrenia bulletin, 48(1):56–68, 2022

Brett A Clementz, David A Parker, Rebekah L Trotti, Jennifer E McDowell, Sarah K Keedy, Matcheri S Keshavan, Godfrey D Pearlson, Elliot S Gershon, Elena I Ivleva, Ling-Yu Huang, et al. Psychosis biotypes: replication and validation from the b-snip consortium.Schizophrenia bulletin, 48(1):56–68, 2022

2022

-

[7]

Continual learning with differential privacy

Pradnya Desai, Phung Lai, NhatHai Phan, and My T Thai. Continual learning with differential privacy. InInternational Conference on Neural Information Processing, pages 334–343. Springer, 2021

2021

-

[8]

The autism brain imaging data exchange: towards a large-scale evaluation of the intrinsic brain architecture in autism.Molecular psychiatry, 19(6):659–667, 2014

Adriana Di Martino, Chao-Gan Yan, Qingyang Li, Erin Denio, Francisco X Castellanos, Kaat Alaerts, Jeffrey S Anderson, Michal Assaf, Susan Y Bookheimer, Mirella Dapretto, et al. The autism brain imaging data exchange: towards a large-scale evaluation of the intrinsic brain architecture in autism.Molecular psychiatry, 19(6):659–667, 2014

2014

-

[9]

Online continual decoding of streaming eeg signal with a balanced and informative memory buffer.Neural Networks, 176:106338, 2024

Tiehang Duan, Zhenyi Wang, Fang Li, Gianfranco Doretto, Donald A Adjeroh, Yiyi Yin, and Cui Tao. Online continual decoding of streaming eeg signal with a balanced and informative memory buffer.Neural Networks, 176:106338, 2024

2024

-

[10]

A generalization of transformer networks to graphs.arXiv preprint arXiv:2012.09699, 2020a

Vijay Prakash Dwivedi and Xavier Bresson. A generalization of transformer networks to graphs. arXiv preprint arXiv:2012.09699, 2020

-

[11]

Func- tional connectivity signatures of major depressive disorder: machine learning analysis of two multicenter neuroimaging studies.Molecular Psychiatry, 28(7):3013–3022, 2023

Selene Gallo, Ahmed El-Gazzar, Paul Zhutovsky, Rajat M Thomas, Nooshin Javaheripour, Meng Li, Lucie Bartova, Deepti Bathula, Udo Dannlowski, Christopher Davey, et al. Func- tional connectivity signatures of major depressive disorder: machine learning analysis of two multicenter neuroimaging studies.Molecular Psychiatry, 28(7):3013–3022, 2023. 12

2023

-

[12]

Introduction to medical data privacy

Aris Gkoulalas-Divanis and Grigorios Loukides. Introduction to medical data privacy. In Medical data privacy handbook, pages 1–14. Springer, 2015

2015

-

[13]

Inductive representation learning on large graphs.Advances in neural information processing systems, 30, 2017

Will Hamilton, Zhitao Ying, and Jure Leskovec. Inductive representation learning on large graphs.Advances in neural information processing systems, 30, 2017

2017

-

[14]

Universal graph continual learning.arXiv preprint arXiv:2308.13982, 2023

Thanh Duc Hoang, Do Viet Tung, Duy-Hung Nguyen, Bao-Sinh Nguyen, Huy Hoang Nguyen, and Hung Le. Universal graph continual learning.arXiv preprint arXiv:2308.13982, 2023

-

[15]

Latent space approaches to social network analysis.Journal of the american Statistical association, 97(460):1090–1098, 2002

Peter D Hoff, Adrian E Raftery, and Mark S Handcock. Latent space approaches to social network analysis.Journal of the american Statistical association, 97(460):1090–1098, 2002

2002

-

[16]

Brainnetcnn: Convolutional neural networks for brain networks; towards predicting neurodevelopment.NeuroImage, 146:1038–1049, 2017

Jeremy Kawahara, Colin J Brown, Steven P Miller, Brian G Booth, Vann Chau, Ruth E Grunau, Jill G Zwicker, and Ghassan Hamarneh. Brainnetcnn: Convolutional neural networks for brain networks; towards predicting neurodevelopment.NeuroImage, 146:1038–1049, 2017

2017

-

[17]

Sddgr: Stable diffusion-based deep generative replay for class incremental object detection

Junsu Kim, Hoseong Cho, Jihyeon Kim, Yihalem Yimolal Tiruneh, and Seungryul Baek. Sddgr: Stable diffusion-based deep generative replay for class incremental object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 28772–28781, 2024

2024

-

[18]

Kipf et al.Variational Graph Auto- Encoders

Thomas N Kipf and Max Welling. Variational graph auto-encoders.arXiv preprint arXiv:1611.07308, 2016

-

[19]

Semi-Supervised Classification with Graph Convolutional Networks

Thomas N Kipf and Max Welling. Semi-supervised classification with graph convolutional networks.arXiv preprint arXiv:1609.02907, 2016

work page internal anchor Pith review arXiv 2016

-

[20]

Overcoming catastrophic forgetting in neural networks.Proceedings of the national academy of sciences, 114(13):3521–3526, 2017

James Kirkpatrick, Razvan Pascanu, Neil Rabinowitz, Joel Veness, Guillaume Desjardins, Andrei A Rusu, Kieran Milan, John Quan, Tiago Ramalho, Agnieszka Grabska-Barwinska, et al. Overcoming catastrophic forgetting in neural networks.Proceedings of the national academy of sciences, 114(13):3521–3526, 2017

2017

-

[21]

Begin: Extensive benchmark scenarios and an easy-to-use framework for graph continual learning

Jihoon Ko, Shinhwan Kang, Taehyung Kwon, Heechan Moon, and Kijung Shin. Begin: Extensive benchmark scenarios and an easy-to-use framework for graph continual learning. ACM Transactions on Intelligent Systems and Technology, 16(1):1–22, 2025

2025

-

[22]

Brainnetgan: Data augmentation of brain connectivity using generative adversarial network for dementia classification

Chao Li, Yiran Wei, Xi Chen, and Carola-Bibiane Schönlieb. Brainnetgan: Data augmentation of brain connectivity using generative adversarial network for dementia classification. In MICCAI Workshop on Deep Generative Models, pages 103–111. Springer, 2021

2021

-

[23]

Multi-site fmri analysis using privacy-preserving federated learning and domain adaptation: Abide results.Medical image analysis, 65:101765, 2020

Xiaoxiao Li, Yufeng Gu, Nicha Dvornek, Lawrence H Staib, Pamela Ventola, and James S Duncan. Multi-site fmri analysis using privacy-preserving federated learning and domain adaptation: Abide results.Medical image analysis, 65:101765, 2020

2020

-

[24]

Learning without forgetting.IEEE transactions on pattern analysis and machine intelligence, 40(12):2935–2947, 2017

Zhizhong Li and Derek Hoiem. Learning without forgetting.IEEE transactions on pattern analysis and machine intelligence, 40(12):2935–2947, 2017

2017

-

[25]

Overcoming catastrophic forgetting in graph neural networks

Huihui Liu, Yiding Yang, and Xinchao Wang. Overcoming catastrophic forgetting in graph neural networks. InProceedings of the AAAI conference on artificial intelligence, volume 35, pages 8653–8661, 2021

2021

-

[26]

Graph auto-encoding brain networks with applications to analyzing large-scale brain imaging datasets.Neuroimage, 245:118750, 2021

Meimei Liu, Zhengwu Zhang, and David B Dunson. Graph auto-encoding brain networks with applications to analyzing large-scale brain imaging datasets.Neuroimage, 245:118750, 2021

2021

-

[27]

What we can do and what we cannot do with fmri.Nature, 453(7197): 869–878, 2008

Nikos K Logothetis. What we can do and what we cannot do with fmri.Nature, 453(7197): 869–878, 2008

2008

-

[28]

Packnet: Adding multiple tasks to a single network by iterative pruning

Arun Mallya and Svetlana Lazebnik. Packnet: Adding multiple tasks to a single network by iterative pruning. InProceedings of the IEEE conference on Computer Vision and Pattern Recognition, pages 7765–7773, 2018

2018

-

[29]

Catastrophic interference in connectionist networks: The sequential learning problem

Michael McCloskey and Neal J Cohen. Catastrophic interference in connectionist networks: The sequential learning problem. InPsychology of learning and motivation, volume 24, pages 109–165. Elsevier, 1989. 13

1989

-

[30]

Continual lifelong learning with neural networks: A review.Neural networks, 113:54–71, 2019

German I Parisi, Ronald Kemker, Jose L Part, Christopher Kanan, and Stefan Wermter. Continual lifelong learning with neural networks: A review.Neural networks, 113:54–71, 2019

2019

-

[31]

Functional connectivity classification of autism identifies highly predictive brain features but falls short of biomarker standards.NeuroImage: Clinical, 7:359–366, 2015

Mark Plitt, Kelly Anne Barnes, and Alex Martin. Functional connectivity classification of autism identifies highly predictive brain features but falls short of biomarker standards.NeuroImage: Clinical, 7:359–366, 2015

2015

-

[32]

icarl: Incremental classifier and representation learning

Sylvestre-Alvise Rebuffi, Alexander Kolesnikov, Georg Sperl, and Christoph H Lampert. icarl: Incremental classifier and representation learning. InProceedings of the IEEE conference on Computer Vision and Pattern Recognition, pages 2001–2010, 2017

2001

-

[33]

Expe- rience replay for continual learning.Advances in neural information processing systems, 32, 2019

David Rolnick, Arun Ahuja, Jonathan Schwarz, Timothy Lillicrap, and Gregory Wayne. Expe- rience replay for continual learning.Advances in neural information processing systems, 32, 2019

2019

-

[34]

Andrei A Rusu, Neil C Rabinowitz, Guillaume Desjardins, Hubert Soyer, James Kirkpatrick, Koray Kavukcuoglu, Razvan Pascanu, and Raia Hadsell. Progressive neural networks.arXiv preprint arXiv:1606.04671, 2016

work page internal anchor Pith review arXiv 2016

-

[35]

Towards a new approach to reveal dynamical organization of the brain using topological data analysis.Nature communications, 9(1):1399, 2018

Manish Saggar, Olaf Sporns, Javier Gonzalez-Castillo, Peter A Bandettini, Gunnar Carlsson, Gary Glover, and Allan L Reiss. Towards a new approach to reveal dynamical organization of the brain using topological data analysis.Nature communications, 9(1):1399, 2018

2018

-

[36]

Continual learning for seizure prediction via memory projection strategy.Computers in Biology and Medicine, 181:109028, 2024

Yufei Shi, Shishi Tang, Yuxuan Li, Zhipeng He, Shengsheng Tang, Ruixuan Wang, Weishi Zheng, Ziyi Chen, and Yi Zhou. Continual learning for seizure prediction via memory projection strategy.Computers in Biology and Medicine, 181:109028, 2024

2024

-

[37]

Yiping Song, Juhua Zhang, Zhiliang Tian, Yuxin Yang, Minlie Huang, and Dongsheng Li. Llm-based privacy data augmentation guided by knowledge distillation with a distribution tutor for medical text classification.arXiv preprint arXiv:2402.16515, 2024

-

[38]

Graph-regularized manifold-aware conditional wasserstein gan for brain functional connectivity generation.Human Brain Mapping, 46(12):e70322, 2025

Yee-Fan Tan, Fuad Noman, Raphaël C-W Phan, Hernando Ombao, and Chee-Ming Ting. Graph-regularized manifold-aware conditional wasserstein gan for brain functional connectivity generation.Human Brain Mapping, 46(12):e70322, 2025

2025

-

[39]

Continual learning on graphs: A survey.arXiv preprint arXiv:2402.06330, 2024

Zonggui Tian, Du Zhang, and Hong-Ning Dai. Continual learning on graphs: A survey.arXiv preprint arXiv:2402.06330, 2024

-

[40]

Automated anatomical labeling of activations in spm using a macroscopic anatomical parcellation of the mni mri single-subject brain.Neuroimage, 15(1):273–289, 2002

Nathalie Tzourio-Mazoyer, Brigitte Landeau, Dimitri Papathanassiou, Fabrice Crivello, Octave Etard, Nicolas Delcroix, Bernard Mazoyer, and Marc Joliot. Automated anatomical labeling of activations in spm using a macroscopic anatomical parcellation of the mni mri single-subject brain.Neuroimage, 15(1):273–289, 2002

2002

-

[41]

Three scenarios for continual learning

Gido M Van de Ven and Andreas S Tolias. Three scenarios for continual learning.arXiv preprint arXiv:1904.07734, 2019

work page Pith review arXiv 1904

-

[42]

Petar Veliˇckovi´c, Guillem Cucurull, Arantxa Casanova, Adriana Romero, Pietro Lio, and Yoshua Bengio. Graph attention networks.arXiv preprint arXiv:1710.10903, 2017

work page internal anchor Pith review arXiv 2017

-

[43]

Dpabi: data processing & analysis for (resting-state) brain imaging.Neuroinformatics, 14(3):339–351, 2016

Chao-Gan Yan, Xin-Di Wang, Xi-Nian Zuo, and Yu-Feng Zang. Dpabi: data processing & analysis for (resting-state) brain imaging.Neuroinformatics, 14(3):339–351, 2016

2016

-

[44]

Reduced default mode network functional connectivity in patients with recurrent major depressive disorder.Proceedings of the National Academy of Sciences, 116(18):9078–9083, 2019

Chao-Gan Yan, Xiao Chen, Le Li, Francisco Xavier Castellanos, Tong-Jian Bai, Qi-Jing Bo, Jun Cao, Guan-Mao Chen, Ning-Xuan Chen, Wei Chen, et al. Reduced default mode network functional connectivity in patients with recurrent major depressive disorder.Proceedings of the National Academy of Sciences, 116(18):9078–9083, 2019

2019

-

[45]

Do transformers really perform badly for graph representation?Advances in neural information processing systems, 34:28877–28888, 2021

Chengxuan Ying, Tianle Cai, Shengjie Luo, Shuxin Zheng, Guolin Ke, Di He, Yanming Shen, and Tie-Yan Liu. Do transformers really perform badly for graph representation?Advances in neural information processing systems, 34:28877–28888, 2021

2021

-

[46]

Lifelong learning with dynamically expandable networks

Jaehong Yoon, Eunho Yang, Jeongtae Lee, and Sung Ju Hwang. Lifelong learning with dynamically expandable networks. InInternational Conference on Learning Representations, 2018. 14

2018

-

[47]

Data-free knowledge distillation via feature exchange and activation region constraint

Shikang Yu, Jiachen Chen, Hu Han, and Shuqiang Jiang. Data-free knowledge distillation via feature exchange and activation region constraint. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 24266–24275, 2023

2023

-

[48]

Continual learning through synaptic intelligence

Friedemann Zenke, Ben Poole, and Surya Ganguli. Continual learning through synaptic intelligence. InInternational conference on machine learning, pages 3987–3995. Pmlr, 2017

2017

-

[49]

Efficient and robust continual graph learning for graph classification in biology.IEEE Transactions on Signal and Information Processing over Networks, 2025

Ding Zhang, Jane Downer, Can Chen, and Ren Wang. Efficient and robust continual graph learning for graph classification in biology.IEEE Transactions on Signal and Information Processing over Networks, 2025

2025

-

[50]

Qifan Zhang, Yunhui Guo, and Yu Xiang. Continual distillation learning: Knowledge distillation in prompt-based continual learning.arXiv preprint arXiv:2407.13911, 2024

-

[51]

Hierarchical prototype networks for continual graph representation learning.IEEE Transactions on Pattern Analysis and Machine Intelligence, 45(4):4622–4636, 2022

Xikun Zhang, Dongjin Song, and Dacheng Tao. Hierarchical prototype networks for continual graph representation learning.IEEE Transactions on Pattern Analysis and Machine Intelligence, 45(4):4622–4636, 2022

2022

-

[52]

Topology-aware embedding memory for continual learning on expanding networks

Xikun Zhang, Dongjin Song, Yixin Chen, and Dacheng Tao. Topology-aware embedding memory for continual learning on expanding networks. InProceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pages 4326–4337, 2024

2024

-

[53]

A generative adaptive replay continual learning model for temporal knowledge graph reasoning

Zhiyu Zhang, Wei Chen, Youfang Lin, and Huaiyu Wan. A generative adaptive replay continual learning model for temporal knowledge graph reasoning. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 10964–10977, 2025

2025

-

[54]

Kaizhong Zheng, Shujian Yu, Baojuan Li, Robert Jenssen, and Badong Chen. Brainib: Inter- pretable brain network-based psychiatric diagnosis with graph information bottleneck.IEEE Transactions on Neural Networks and Learning Systems, 36(7):13066–13079, 2024

2024

-

[55]

Overcoming catastrophic forgetting in graph neural networks with experience replay

Fan Zhou and Chengtai Cao. Overcoming catastrophic forgetting in graph neural networks with experience replay. InProceedings of the AAAI conference on artificial intelligence, volume 35, pages 4714–4722, 2021

2021

-

[56]

Brainuicl: An unsupervised individual continual learning framework for eeg applications

Yangxuan Zhou, Sha Zhao, Jiquan Wang, Haiteng Jiang, Shijian Li, Tao Li, and Gang Pan. Brainuicl: An unsupervised individual continual learning framework for eeg applications. In The Thirteenth International Conference on Learning Representations, 2025. 15 Continual Learning for fMRI-Based Brain Disorder Diagnosis via Functional Connectivity Matrices Gene...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.