Recognition: unknown

Analysis of Multitasking Pareto Optimization for Monotone Submodular Problems

Pith reviewed 2026-05-10 09:52 UTC · model grok-4.3

The pith

Multitasking Pareto optimization solves multiple monotone submodular problems in one run when uniform costs keep Pareto fronts small.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Reformulating multiple problems that share a monotone submodular function f but differ in their knapsack constraints as one multi-objective optimization produces small Pareto fronts under uniform costs per constraint. This enables direct sharing of solutions in the population and yields lower expected runtime to (1-1/e)-approximations for all problems than running independent classical approaches.

What carries the argument

The multitasking formulation that merges multiple knapsack-constrained submodular problems into one Pareto optimization, exploiting uniform costs to maintain small fronts that support cross-task solution sharing.

If this is right

- One population can reach the target approximation for every problem without separate searches.

- Solutions discovered under one constraint transfer directly to others via sharing.

- Expected runtime to (1-1/e) approximation drops compared with independent classical runs.

- The method applies directly to maximum coverage and similar submodular problems under the uniform-cost condition.

Where Pith is reading between the lines

- When costs vary within constraints the fronts may enlarge and the runtime advantage could disappear.

- The uniform-cost sharing benefit might be tested on other submodular objectives such as sensor placement or influence maximization.

- Hybridizing the multitasking population with local search operators could extend the advantage to non-uniform cases.

Load-bearing premise

Elements inside each knapsack constraint must have identical costs, which is what keeps the Pareto fronts small enough for effective sharing.

What would settle it

An instance with uniform costs per constraint where the multitasking Pareto front grows linearly with the number of problems and the observed runtime exceeds that of separate independent runs.

Figures

read the original abstract

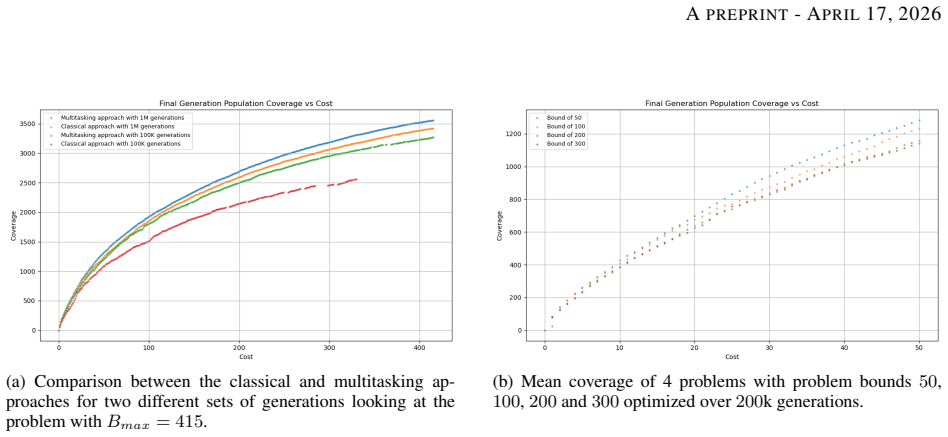

Pareto optimization via evolutionary multi-objective algorithms has been shown to efficiently solve constrained monotone submodular functions. Traditionally when solving multiple problems, the algorithm is run for each problem separately. We introduce multitasking formulations of these problems that are an effective way to solve multiple related problems with a single run. In our setting the given problems share a monotone submodular function $f$ but have different knapsack constraints. We examine the case where elements within a constraint have the same cost and show that our multitasking formulations result in small Pareto fronts. This allows the population to share solutions between all problems leading to significant improvements compared to running several classical approaches independently. Using rigorous runtime analysis, we analyze the expected time until the introduced multitasking approaches obtain a $(1-1/e)$-approximation for each of the given problems. Our experimental investigations for the maximum coverage problem give further insight into the dynamics behind how the approach works and doesn't work in practice for problems where elements within a constraint also have varied costs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces multitasking multi-objective evolutionary algorithm formulations for simultaneously solving multiple related monotone submodular maximization problems that share the same objective function f but differ in their knapsack constraints. Under the restriction that all elements within a given constraint have identical costs, the authors prove that the resulting Pareto fronts are small (polynomially bounded), enabling a single population to maintain and share high-quality solutions across tasks. They provide a rigorous runtime analysis establishing that the expected time to reach a (1-1/e)-approximation for every problem is asymptotically better than running independent single-objective or multi-objective algorithms. Experiments on the maximum coverage problem illustrate the dynamics and confirm that the approach fails to deliver gains when element costs within constraints are heterogeneous.

Significance. If the uniform-cost analysis holds, the work supplies a concrete example of how multi-objective Pareto optimization can exploit structural similarities across related constrained submodular instances to obtain both theoretical speed-ups and practical efficiency, extending prior single-problem results on (1-1/e) guarantees. The explicit characterization of when Pareto fronts remain small is a useful technical contribution, though the scope is limited by the cost-uniformity assumption.

major comments (2)

- [§4] §4 (Runtime Analysis) and the statement of Theorem 1: the proof that the Pareto front size is O(n^2) (or polynomially bounded) and therefore permits efficient sharing relies entirely on the uniform-cost assumption within each knapsack constraint. No general bound on front size is derived for heterogeneous costs, yet the central multitasking claim—that a single run reaches (1-1/e) for all tasks faster than independent runs—depends on this bounded-front property.

- [Experiments] Experimental section (maximum-coverage results): the paper reports that the multitasking approach “doesn’t work in practice” for varied-cost instances, but supplies neither measurements of realized Pareto-front cardinality nor population-coverage statistics that would confirm the hypothesized cause (front-size explosion). Without these diagnostics, it is unclear whether the observed failure is due to the missing general analysis or to other implementation factors.

minor comments (2)

- [§3] Notation for the vector of capacities and the multi-objective formulation could be introduced earlier and used consistently to improve readability of the multitasking definitions.

- [Abstract] The abstract and introduction should more explicitly qualify that all theoretical guarantees and the small-front claim apply only under uniform intra-constraint costs.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments. We address each major comment point by point below, providing clarifications on the scope of our theoretical results and committing to improvements in the experimental diagnostics.

read point-by-point responses

-

Referee: [§4] §4 (Runtime Analysis) and the statement of Theorem 1: the proof that the Pareto front size is O(n^2) (or polynomially bounded) and therefore permits efficient sharing relies entirely on the uniform-cost assumption within each knapsack constraint. No general bound on front size is derived for heterogeneous costs, yet the central multitasking claim—that a single run reaches (1-1/e) for all tasks faster than independent runs—depends on this bounded-front property.

Authors: We agree that the proof of the polynomially bounded Pareto front (O(n^2)) and the resulting runtime improvements for multitasking rely on the uniform-cost assumption within each knapsack constraint. This restriction is explicitly stated in the manuscript, including the abstract, introduction, and the statement of Theorem 1. The paper does not derive or claim a general bound for heterogeneous costs, nor does it assert that the multitasking speed-up holds without the uniform-cost condition. The central claim is therefore limited to the uniform-cost setting where solution sharing is feasible due to the small front size. The experiments are consistent with this by showing failure for heterogeneous costs. No revision is needed, as the scope and assumptions are already clearly delimited. revision: no

-

Referee: [Experiments] Experimental section (maximum-coverage results): the paper reports that the multitasking approach “doesn’t work in practice” for varied-cost instances, but supplies neither measurements of realized Pareto-front cardinality nor population-coverage statistics that would confirm the hypothesized cause (front-size explosion). Without these diagnostics, it is unclear whether the observed failure is due to the missing general analysis or to other implementation factors.

Authors: We acknowledge that the experimental section would benefit from explicit measurements to substantiate the hypothesized cause of failure in the heterogeneous-cost case. Although the manuscript discusses the dynamics and notes the lack of gains for varied costs, we did not report quantitative data on realized Pareto-front cardinalities or population-coverage statistics. In the revised manuscript we will add these diagnostics, including tables or plots of average Pareto front sizes over optimization steps for both uniform and heterogeneous instances in the maximum-coverage experiments. This will help confirm that front-size growth is the primary factor and distinguish it from other potential implementation issues. revision: yes

Circularity Check

No circularity: runtime analysis is independent mathematical proof under explicit uniform-cost assumption

full rationale

The paper's central claims rest on a rigorous expected-time analysis proving that multitasking MOEAs reach (1-1/e)-approximations for multiple monotone submodular problems when elements inside each knapsack constraint share identical cost. This produces polynomially bounded Pareto fronts, enabling solution sharing. The derivation proceeds from standard submodular maximization properties and multi-objective evolutionary algorithm runtime techniques; it does not reduce any claimed runtime bound to a fitted parameter, a self-defined quantity, or a prior self-citation whose validity depends on the present result. The uniform-cost restriction is stated explicitly as the setting for the analysis, and the paper separately reports that the approach fails to produce small fronts (and thus loses sharing benefits) for heterogeneous costs. No equation equates a prediction to its own input by construction, and the experimental section on maximum coverage is presented as supplementary insight rather than load-bearing evidence for the runtime theorems.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption The objective function f is monotone and submodular

- domain assumption Elements within each knapsack constraint have identical cost

Reference graph

Works this paper leans on

-

[1]

Towards evolutionary multitasking: A new paradigm

Yew-Soon Ong. Towards evolutionary multitasking: A new paradigm. InProceedings of the Sixth International Symposium on Information and Communication Technology, page 2. ACM, 2015

2015

-

[2]

Pareto optimization with small data by learning across common objective spaces.Scientific Reports, 13:7842, 2023

Chin Sheng Tan, Abhishek Gupta, Yew-Soon Ong, Mahardhika Pratama, Puay Siew Tan, and Siew Kei Lam. Pareto optimization with small data by learning across common objective spaces.Scientific Reports, 13:7842, 2023

2023

-

[3]

Evolutionary multitasking across single and multi-objective formulations for improved problem solving

Bingshui Da, Abhishek Gupta, Yew-Soon Ong, and Liang Feng. Evolutionary multitasking across single and multi-objective formulations for improved problem solving. InCEC, pages 1695–1701. IEEE, 2016

2016

-

[4]

Martinez, and Amir Hussain

Eneko Osaba, Javier Del Ser, Aritz D. Martinez, and Amir Hussain. Evolutionary multitask optimization: a methodological overview, challenges, and future research directions.Cogn. Comput., 14(3):927–954, 2022

2022

-

[5]

Submodular function maximization

Andreas Krause and Daniel Golovin. Submodular function maximization. InTractability, pages 71–104. Cam- bridge University Press, 2014

2014

-

[6]

Nemhauser and Laurence A

George L. Nemhauser and Laurence A. Wolsey. Best algorithms for approximating the maximum of a submod- ular set function.Math. Oper. Res., 3(3):177–188, 1978

1978

-

[7]

The budgeted maximum coverage problem.Inf

Samir Khuller, Anna Moss, and Joseph Naor. The budgeted maximum coverage problem.Inf. Process. Lett., 70(1):39–45, 1999

1999

-

[8]

Goemans and David P

Michel X. Goemans and David P. Williamson. Improved approximation algorithms for maximum cut and satis- fiability problems using semidefinite programming.J. ACM, 42(6):1115–1145, 1995

1995

-

[9]

Archive-based single-objective evolutionary algorithms for submodular optimization

Frank Neumann and Günter Rudolph. Archive-based single-objective evolutionary algorithms for submodular optimization. InPPSN (3), volume 15150 ofLecture Notes in Computer Science, pages 166–180. Springer, 2024

2024

-

[10]

Natural Computing Series

Benjamin Doerr and Frank Neumann, editors.Theory of Evolutionary Computation - Recent Developments in Discrete Optimization. Natural Computing Series. Springer, 2020

2020

-

[11]

Bioinspired computation in combinatorial optimization: algorithms and their computational com- plexity

Carsten Witt. Bioinspired computation in combinatorial optimization: algorithms and their computational com- plexity. InGECCO (Companion), pages 647–686. ACM, 2014

2014

-

[12]

An effective knowledge transfer approach for multi- objective multitasking optimization.IEEE Trans

Jiabin Lin, Hai-Lin Liu, Kay Chen Tan, and Fangqing Gu. An effective knowledge transfer approach for multi- objective multitasking optimization.IEEE Trans. Cybern., 51(6):3238–3248, 2021

2021

-

[13]

Coello Coello

Qiuzhen Lin, Zhongjian Wu, Lijia Ma, Maoguo Gong, Jianqiang Li, and Carlos A. Coello Coello. Multiobjective multitasking optimization with decomposition-based transfer selection.IEEE Trans. Cybern., 54(5):3146–3159, 2024

2024

-

[14]

Multi-objective evolutionary multitasking algorithm based on cross-task transfer solution matching strategy.ISA Transactions, 138:504–520, 2023

Hao Sun, Pengfei Chen, Ziyu Hu, and Lixin Wei. Multi-objective evolutionary multitasking algorithm based on cross-task transfer solution matching strategy.ISA Transactions, 138:504–520, 2023

2023

-

[15]

Multitasking multiobjective optimization based on transfer com- ponent analysis.Inf

Ziyu Hu, Yulin Li, Hao Sun, and Xuemin Ma. Multitasking multiobjective optimization based on transfer com- ponent analysis.Inf. Sci., 605:182–201, 2022

2022

-

[16]

On the use of matching algorithms to transfer solutions for the travelling salesperson problem

Liam Wigney, Aneta Neumann, Yew-Soon Ong, and Frank Neumann. On the use of matching algorithms to transfer solutions for the travelling salesperson problem. InGECCO, pages 845–853. ACM, 2025. 11 APREPRINT- APRIL17, 2026

2025

-

[17]

Evolutionary multitasking for the scenario-based travelling thief problem

Thilina Pathirage Don, Aneta Neumann, and Frank Neumann. Evolutionary multitasking for the scenario-based travelling thief problem. InGECCO, pages 809–817. ACM, 2025

2025

-

[18]

Evolutionary multitasking in permutation-based combinatorial optimization problems: Realization with tsp, qap, lop, and jsp

Yuan Yuan, Yew-Soon Ong, Abhishek Gupta, Puay Siew Tan, and Hua Xu. Evolutionary multitasking in permutation-based combinatorial optimization problems: Realization with tsp, qap, lop, and jsp. In2016 IEEE Region 10 Conference (TENCON), pages 3157–3164, 2016

2016

-

[19]

Scott and Kenneth A

Eric O. Scott and Kenneth A. De Jong. Varying difficulty of knowledge reuse in benchmarks for evolutionary knowledge transfer. InCEC, pages 1–8. IEEE, 2024

2024

-

[20]

Scott and Kenneth A

Eric O. Scott and Kenneth A. De Jong. First complexity results for evolutionary knowledge transfer. InFOGA, pages 140–151. ACM, 2023

2023

-

[21]

Runtime analysis of evolutionary multitasking for classical benchmark problems

Johannes Lengler, Aneta Neumann, and Frank Neumann. Runtime analysis of evolutionary multitasking for classical benchmark problems. InGECCO, pages 1613–1621. ACM, 2025

2025

-

[22]

Maximizing submodular functions under matroid constraints by evolu- tionary algorithms.Evol

Tobias Friedrich and Frank Neumann. Maximizing submodular functions under matroid constraints by evolu- tionary algorithms.Evol. Comput., 23(4):543–558, 2015

2015

-

[23]

Pareto optimization for subset selec- tion with dynamic cost constraints.Artif

Vahid Roostapour, Aneta Neumann, Frank Neumann, and Tobias Friedrich. Pareto optimization for subset selec- tion with dynamic cost constraints.Artif. Intell., 302:103597, 2022

2022

-

[24]

Optimising monotone chance-constrained submodular functions using evolutionary multi-objective algorithms.Theor

Aneta Neumann and Frank Neumann. Optimising monotone chance-constrained submodular functions using evolutionary multi-objective algorithms.Theor. Comput. Sci., 974:114112, 2023

2023

-

[25]

Sliding window bi-objective evolutionary algorithms for optimizing chance-constrained monotone submodular functions

Xiankun Yan, Aneta Neumann, and Frank Neumann. Sliding window bi-objective evolutionary algorithms for optimizing chance-constrained monotone submodular functions. InPPSN (1), volume 15148 ofLecture Notes in Computer Science, pages 20–35. Springer, 2024

2024

-

[26]

Maximizing submodular or monotone approximately submodular functions by multi-objective evolutionary algorithms.Artif

Chao Qian, Yang Yu, Ke Tang, Xin Yao, and Zhi-Hua Zhou. Maximizing submodular or monotone approximately submodular functions by multi-objective evolutionary algorithms.Artif. Intell., 275:279–294, 2019

2019

-

[27]

Maximizing submodular or monotone functions under partition matroid constraints by multi-objective evolutionary algorithms

Anh Viet Do and Frank Neumann. Maximizing submodular or monotone functions under partition matroid constraints by multi-objective evolutionary algorithms. InPPSN (2), volume 12270 ofLecture Notes in Computer Science, pages 588–603. Springer, 2020

2020

-

[28]

Crawford

Victoria G. Crawford. Faster guarantees of evolutionary algorithms for maximization of monotone submodular functions. InIJCAI, pages 1661–1667. ijcai.org, 2021

2021

-

[29]

Fast pareto optimization for subset selection with dynamic cost constraints

Chao Bian, Chao Qian, Frank Neumann, and Yang Yu. Fast pareto optimization for subset selection with dynamic cost constraints. InIJCAI, pages 2191–2197. ijcai, 2021

2021

-

[30]

Rossi and Nesreen K

Ryan A. Rossi and Nesreen K. Ahmed. The network data repository with interactive graph analytics and visual- ization. InAAAI, pages 4292–4293. AAAI Press, 2015. 12

2015

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.