Presenting Neural Networks via Coherent Functors

Pith reviewed 2026-05-10 08:58 UTC · model grok-4.3

The pith

Dense feed-forward neural networks over floats can be presented as coherent categories G whose Set-models are the networks, with inference as precomposition along a coherent functor from a span category.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Any dense feed-forward neural network architecture over the floating point numbers may be presented as a coherent category G whose Set-models are the networks of that architecture, with inference arising as the precomposition functor Coh(ι, Set) along a coherent functor ι : RSpan(a_0, a_n) → G.
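To make "inference as precomposition" concrete, here is a minimal sketch under strong simplifying assumptions. A model is reduced to an assignment of Python functions to named generating arrows, and ι is reduced to a map sending one hypothetical generator `infer` to a path of layers. The coherent structure, and the actual shape of RSpan(a_0, a_n), are not modelled; the names `Model`, `precompose`, `iota`, `layer1` and `layer2` are illustrative and do not come from the paper.

```python
from typing import Callable, Dict, List

# A "model" is simplified to: one Python function per generating arrow.
Model = Dict[str, Callable]


def compose_path(model: Model, path: List[str]) -> Callable:
    """Interpret a path of generators, applied left to right, in the model."""
    def run(x):
        for name in path:
            x = model[name](x)
        return x
    return run


def precompose(iota: Dict[str, List[str]], model: Model) -> Model:
    """Restrict a model of the target along iota (generator -> path in target)."""
    return {gen: compose_path(model, path) for gen, path in iota.items()}


def relu(v):
    return [max(0.0, t) for t in v]


def affine(w, b):
    """Dense affine map v -> W v + b on Python lists of floats."""
    return lambda v: [
        sum(wij * vj for wij, vj in zip(row, v)) + bi for row, bi in zip(w, b)
    ]


# Hypothetical 2 -> 2 -> 1 architecture; one choice of weights = one model.
network: Model = {
    "layer1": lambda v: relu(affine([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0])(v)),
    "layer2": affine([[2.0, 1.0]], [0.5]),
}

# Hypothetical iota: the single generator "infer" goes to the full layer path.
iota = {"infer": ["layer1", "layer2"]}

inference = precompose(iota, network)["infer"]
print(inference([3.0, 1.0]))  # [5.5]
```

Changing the weights changes the model but not `iota`, which matches the claim's reading of inference as a functor applied uniformly to every network of the architecture.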

Load-bearing premise

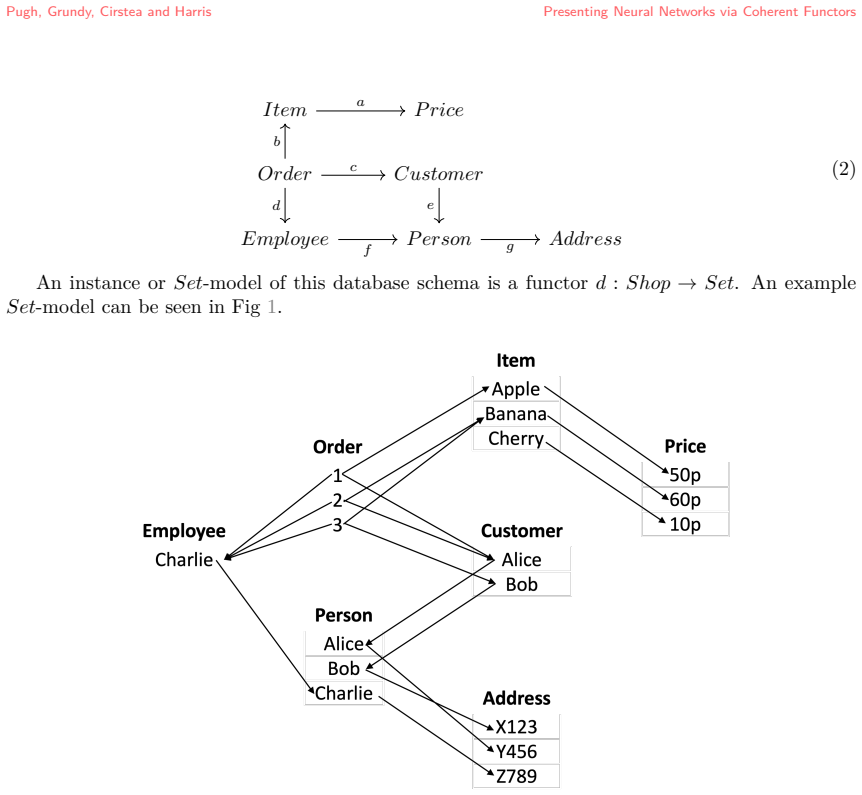

that machine learning models are a form of database and that databases are models of theories in coherent logic, allowing any dense feed-forward neural network to be encoded via a functorial database schema whose models coincide with network instances

Figures

read the original abstract

This paper develops a methodology for representing machine learning models as models of formal theories, grounded in the perspective that machine learning models are a form of database and that databases are models of theories in coherent logic. Two intermediate results support this approach: any functorial database schema has an associated $\kappa$-coherent theory whose models coincide with its instances, and data may be hard-coded into a coherent category such that any model of the resulting theory necessarily contains it. These tools are used to show that any dense feed-forward neural network architecture over the floating point numbers may be presented as a coherent category $G$ whose $Set$-models are the networks of that architecture, with inference arising as the precomposition functor $Coh(\iota, Set)$ along a coherent functor $\iota : RSpan(a_0, a_n) \rightarrow G$. This representation is extended to networks with weight and bias fixing and tying, encompassing sparse and convolutional architectures, via a 2-coequaliser construction in $Coh_\sim$. Taken together, these results recast neural network inference as an extension problem in the 2-category $Coh_\sim$ of coherent categories, supporting the interpretation of a network architecture as a formal hypothesis about the structure of data and of model training as a lifting of a dataset into a more constrained theory.
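The weight fixing and tying mentioned in the abstract can be illustrated at the level of plain sets, which is only a shadow of the 2-coequaliser in $Coh_\sim$ and not the construction itself. In the sketch below, each slot (i, j) of a dense weight matrix is either fixed to 0.0 or identified with a kernel entry, and the resulting dense layer agrees with a 1-D sliding-window layer. The function names and the slot-identification rule are assumptions made for illustration.

```python
def tied_dense_weights(kernel, n_in):
    """Build a dense matrix whose slots are tied to kernel entries or fixed to 0."""
    k = len(kernel)
    n_out = n_in - k + 1
    weights = []
    for i in range(n_out):
        row = []
        for j in range(n_in):
            d = j - i  # slots with the same offset d are identified (tied)
            row.append(kernel[d] if 0 <= d < k else 0.0)  # otherwise fixed to 0
        weights.append(row)
    return weights


def dense(w, x):
    """Plain dense layer without bias."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def sliding_window(kernel, x):
    """1-D 'valid' cross-correlation, the usual convolutional layer."""
    k = len(kernel)
    return [sum(kernel[d] * x[i + d] for d in range(k)) for i in range(len(x) - k + 1)]


kernel = [1.0, -2.0, 0.5]
x = [1.0, 2.0, 3.0, 4.0, 5.0]
w = tied_dense_weights(kernel, len(x))
print(dense(w, x))               # [-1.5, -2.0, -2.5]
print(sliding_window(kernel, x))  # [-1.5, -2.0, -2.5]
```

The 3 x 5 dense matrix has fifteen slots but only three free parameters, which is the sense in which a convolutional architecture is a dense one with extra identifications.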

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper develops a categorical logic framework for neural networks by viewing them as models of coherent theories. It establishes two intermediate results: (1) any functorial database schema has an associated κ-coherent theory whose Set-models are exactly its instances, and (2) data can be hard-coded into a coherent category so that models of the resulting theory necessarily contain it. These are applied to show that any dense feed-forward neural network architecture over floating-point numbers can be presented as a coherent category G whose Set-models are precisely the networks of that architecture, with inference realized as the precomposition functor Coh(ι, Set) along a coherent functor ι : RSpan(a_0, a_n) → G. The approach is extended to weight/bias fixing and tying (including sparse and convolutional cases) via a 2-coequalizer construction in the 2-category Coh_∼, recasting inference as an extension problem in that 2-category.
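For readers unfamiliar with the first intermediate result, the sketch below shows what an instance of a functorial database schema looks like in the usual reading: a schema is a graph with path equations, and an instance assigns a finite set to each node and a total function to each edge so that the equations hold. This reading and the Employee/Dept example are assumptions for illustration; the paper's exact definitions are not quoted on this page.

```python
def follow(maps, path, x):
    """Apply the functions along a path of edge names, left to right."""
    for edge in path:
        x = maps[edge][x]
    return x


def is_instance(schema, tables, maps) -> bool:
    """Check that (tables, maps) is a set-valued instance of the schema."""
    for edge, (src, dst) in schema["edges"].items():
        f = maps[edge]
        if set(f.keys()) != tables[src]:       # total, and defined only on src
            return False
        if not set(f.values()) <= tables[dst]:  # lands in the target table
            return False
    for start, left, right in schema["equations"]:
        for x in tables[start]:                 # path equations hold pointwise
            if follow(maps, left, x) != follow(maps, right, x):
                return False
    return True


# Hypothetical schema: every employee works in the same department as their manager.
schema = {
    "edges": {"worksIn": ("Employee", "Dept"), "manager": ("Employee", "Employee")},
    "equations": [("Employee", ["manager", "worksIn"], ["worksIn"])],
}
tables = {"Employee": {"e1", "e2", "e3"}, "Dept": {"d1", "d2"}}
good = {
    "worksIn": {"e1": "d1", "e2": "d1", "e3": "d2"},
    "manager": {"e1": "e1", "e2": "e1", "e3": "e3"},
}
# Same data, but e2 now reports to e3, who works in a different department.
bad = {"worksIn": good["worksIn"], "manager": {"e1": "e1", "e2": "e3", "e3": "e3"}}

print(is_instance(schema, tables, good))  # True
print(is_instance(schema, tables, bad))   # False
```

The intermediate result asserts that the assignments accepted by a check of this kind are exactly the Set-models of the associated κ-coherent theory.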

Significance. If the constructions are verified to produce exactly the intended models, the work supplies a rigorous bridge between machine-learning architectures and coherent logic/database theory. It also gives a formal interpretation of an architecture as a hypothesis about data structure and of training as a lifting into a more constrained theory. The explicit use of functorial schemas, hard-coding, and 2-categorical colimits is a concrete technical contribution that could support future formal verification or compositional reasoning about networks.

major comments (3)

- [main result for dense feed-forward networks] The claim that the Set-models of G coincide exactly with the networks of the architecture requires the coherent axioms to enforce the precise arithmetic of the forward pass (matrix multiplication, bias addition, and non-linear activations). Because coherent logic is restricted to positive-existential formulas, it is not immediate that the relational encoding of these operations (via the functorial schema and hard-coding) excludes extraneous models whose forward maps differ from the intended deterministic computation. A concrete verification for a minimal two-layer example, showing that every model satisfies the exact functional equations, is needed to substantiate the central claim.

- [extension via 2-coequalizer in Coh_∼] The 2-coequalizer construction for weight tying and sparse/convolutional architectures must preserve the exact correspondence between models and networks. The paper should verify that the 2-coequalizer neither introduces new models that violate the original forward-pass equations nor destroys coherence of the theory; otherwise the claim that inference remains precomposition along the induced functor fails.

- [intermediate result on functorial database schemas] The intermediate result that any functorial database schema has an associated κ-coherent theory whose models coincide with its instances is load-bearing for the whole development. The manuscript should supply an explicit statement of the κ-coherent axioms generated from the schema and a proof that every model of those axioms arises from an instance of the schema (and conversely).
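The kind of check requested in the first major comment can be sketched by brute force on a toy carrier. The example below encodes each layer of a hypothetical scalar two-layer network as a relation (a set of input/output pairs) over a four-element set of floats, tests that each relation is total and single-valued, and compares the relational composite with the direct forward pass. It is an illustration of the property at stake, not a proof about the coherent theory, and the carrier, weights and function names are all assumptions.

```python
def graph(f, domain):
    """Relational encoding of f on a finite domain: the set of (x, f(x)) pairs."""
    return {(x, f(x)) for x in domain}


def is_functional_and_total(rel, domain):
    """Every x in domain has exactly one output, and no pair starts outside it."""
    for x in domain:
        outputs = {y for (a, y) in rel if a == x}
        if len(outputs) != 1:
            return False
    return all(a in domain for (a, _) in rel)


def compose(r, s):
    """Relational composite: first r, then s."""
    return {(x, z) for (x, y) in r for (y2, z) in s if y == y2}


def layer1(x):
    return max(0.0, 2.0 * x + (-1.0))  # ReLU(w1 * x + b1)


def layer2(h):
    return -1.0 * h + 0.5               # w2 * h + b2


A0 = {-1.0, 0.0, 0.5, 2.0}              # toy input carrier
r1 = graph(layer1, A0)
A1 = {y for (_, y) in r1}               # hidden values actually reached
r2 = graph(layer2, A1)

composite = compose(r1, r2)
print(is_functional_and_total(r1, A0), is_functional_and_total(r2, A1))  # True True
print(composite == graph(lambda x: layer2(layer1(x)), A0))               # True

# An "extraneous" relation of the kind the axioms would have to rule out.
extraneous = r1 | {(0.0, 7.0)}
print(is_functional_and_total(extraneous, A0))                           # False
```

The open question raised by the referee is whether the paper's axioms force every Set-model to pass the analogue of `is_functional_and_total` and to agree with the intended arithmetic on the full float carrier.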

minor comments (2)

- Notation for the 2-category Coh_∼ and the 2-coequalizer construction should be introduced with a brief reminder of the 2-categorical structure (objects, 1-morphisms, 2-morphisms) to aid readers unfamiliar with 2-category theory.

- The abstract and introduction use the phrase “any dense feed-forward neural network architecture”; the manuscript should clarify whether this includes arbitrary activation functions or is restricted to those definable by coherent formulas (e.g., ReLU via its graph).
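As an illustration of "ReLU via its graph", assume the signature has an order relation $\le$ and a constant $0$ on the sort of floats (an assumption, not a statement about the paper's signature). The graph of ReLU is then cut out by a formula that uses only conjunction, disjunction and equality, and is therefore coherent:

$$
\mathrm{ReLU}(x, y) \;\dashv\vdash\; (x \le 0 \wedge y = 0) \vee (0 \le x \wedge y = x)
$$

An activation with no such closed description would need a different treatment, for example listing its graph explicitly, which is possible in principle because a fixed-width floating-point format has finitely many values. Whether the paper takes that route is the point the comment asks the authors to clarify.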

Axiom & Free-Parameter Ledger

axioms (1)

- [standard math] Standard axioms of category theory and coherent logic

invented entities (2)

- Coherent category G for a neural network architecture (no independent evidence)

- Coherent functor ι : RSpan(a_0, a_n) → G (no independent evidence)
