Recognition: unknown

RadAgent: A tool-using AI agent for stepwise interpretation of chest computed tomography

Pith reviewed 2026-05-10 10:50 UTC · model grok-4.3

The pith

A tool-using AI agent generates more accurate, robust, and faithful chest CT reports by producing explicit stepwise reasoning traces.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

RadAgent generates CT reports through a stepwise and interpretable process, with each report accompanied by a fully inspectable trace of intermediate decisions and tool interactions. This results in clinical accuracy improvements of 6.0 points in macro-F1 and 5.4 points in micro-F1 over CT-Chat, 24.7 points higher robustness under adversarial conditions, and 37.0% faithfulness, a capability absent in the 3D VLM counterpart.

What carries the argument

The tool-using stepwise agent that structures chest CT interpretation as an explicit, iterative reasoning trace with recorded tool interactions.

If this is right

- Clinicians can inspect and validate the derivation of each reported finding.

- AI outputs become more resistant to adversarial attacks that could mislead direct models.

- A new faithfulness metric quantifies alignment between reasoning trace and final report.

- Radiology AI gains transparency for clinical inspection, validation, and refinement.

Where Pith is reading between the lines

- Similar agent architectures could extend to other medical imaging types such as MRI to improve interpretability there as well.

- In practice, these traces might allow clinicians to intervene at intermediate steps to correct potential errors before the final report.

- Testing in live clinical environments could reveal whether the added transparency actually changes diagnostic outcomes or clinician confidence.

Load-bearing premise

The gains in accuracy, robustness, and faithfulness result from the tool-using stepwise agent architecture rather than differences in training data, model size, or evaluation methods.

What would settle it

Train a version of the baseline model with the same data and parameters but without the agent structure, and verify whether the performance advantages disappear.

Figures

read the original abstract

Vision-language models (VLM) have markedly advanced AI-driven interpretation and reporting of complex medical imaging, such as computed tomography (CT). Yet, existing methods largely relegate clinicians to passive observers of final outputs, offering no interpretable reasoning trace for them to inspect, validate, or refine. To address this, we introduce RadAgent, a tool-using AI agent that generates CT reports through a stepwise and interpretable process. Each resulting report is accompanied by a fully inspectable trace of intermediate decisions and tool interactions, allowing clinicians to examine how the reported findings are derived. In our experiments, we observe that RadAgent improves Chest CT report generation over its 3D VLM counterpart, CT-Chat, across three dimensions. Clinical accuracy improves by 6.0 points (36.4% relative) in macro-F1 and 5.4 points (19.6% relative) in micro-F1. Robustness under adversarial conditions improves by 24.7 points (41.9% relative). Furthermore, RadAgent achieves 37.0% in faithfulness, a new capability entirely absent in its 3D VLM counterpart. By structuring the interpretation of chest CT as an explicit, tool-augmented and iterative reasoning trace, RadAgent brings us closer toward transparent and reliable AI for radiology.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces RadAgent, a tool-using AI agent for generating chest CT reports via an explicit, stepwise, and inspectable reasoning trace with tool interactions. It claims that RadAgent outperforms the 3D VLM baseline CT-Chat on clinical accuracy (macro-F1 +6.0 points / 36.4% relative; micro-F1 +5.4 points / 19.6% relative), robustness under adversarial conditions (+24.7 points / 41.9% relative), and introduces a new faithfulness metric (37.0%) that is absent in the baseline.

Significance. If the reported gains can be shown to arise from the agent architecture rather than differences in base model, training data, or evaluation protocol, the work would provide a concrete advance in interpretable medical AI by enabling clinicians to inspect intermediate decisions. The introduction of a faithfulness metric is a positive step toward verifiable outputs, but its value depends on rigorous validation.

major comments (3)

- [Abstract] Abstract and experimental section: the central claim that performance deltas are due to the tool-using stepwise agent requires explicit controls showing that RadAgent and CT-Chat use identical base 3D VLM backbones, identical pre-training/fine-tuning data, and matched parameter counts; without these, the 6.0-point macro-F1 and 24.7-point robustness gains cannot be attributed to the agentic trace.

- [Abstract] Abstract: no datasets, statistical tests, or evaluation protocols are described for the reported F1 scores, adversarial robustness, or faithfulness metric, so the quantitative improvements lack verifiable support and the weakest assumption (gains attributable to architecture) remains untested.

- [Methods] Methods or experimental setup: the faithfulness metric (37.0%) is presented as a new capability, but its definition, computation, and human or automated validation protocol are not detailed, making it impossible to assess whether it genuinely measures inspectable reasoning or is an artifact of the evaluation design.

minor comments (1)

- [Abstract] The abstract uses relative percentage improvements without stating the absolute baseline values for CT-Chat, which would aid interpretation of the practical significance of the deltas.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. We address each major comment below and will incorporate revisions to clarify controls, evaluation details, and the faithfulness metric.

read point-by-point responses

-

Referee: [Abstract] Abstract and experimental section: the central claim that performance deltas are due to the tool-using stepwise agent requires explicit controls showing that RadAgent and CT-Chat use identical base 3D VLM backbones, identical pre-training/fine-tuning data, and matched parameter counts; without these, the 6.0-point macro-F1 and 24.7-point robustness gains cannot be attributed to the agentic trace.

Authors: We agree that explicit controls are essential to attribute gains to the agent architecture. In the revised manuscript, we will add a new paragraph in the Experimental Setup section (cross-referenced from the abstract) explicitly confirming that RadAgent and CT-Chat share the identical 3D VLM backbone, the same pre-training and fine-tuning datasets, and matched parameter counts. This was the case in our experiments, as CT-Chat was used as the unmodified 3D VLM counterpart. revision: yes

-

Referee: [Abstract] Abstract: no datasets, statistical tests, or evaluation protocols are described for the reported F1 scores, adversarial robustness, or faithfulness metric, so the quantitative improvements lack verifiable support and the weakest assumption (gains attributable to architecture) remains untested.

Authors: The abstract prioritizes brevity, but we will revise it to briefly reference the key chest CT datasets, note that statistical significance was assessed with appropriate tests (e.g., paired t-tests), and direct readers to the Experimental section for full protocols on F1, adversarial robustness, and the faithfulness metric. These details are already present in the full experimental setup but will be more clearly signposted. revision: yes

-

Referee: [Methods] Methods or experimental setup: the faithfulness metric (37.0%) is presented as a new capability, but its definition, computation, and human or automated validation protocol are not detailed, making it impossible to assess whether it genuinely measures inspectable reasoning or is an artifact of the evaluation design.

Authors: We will add a dedicated subsection in Methods titled 'Faithfulness Metric' that defines the metric (proportion of traceable reasoning steps aligning with final findings via tool interactions), specifies its computation (scoring intermediate decisions against ground-truth), and details the validation protocol combining automated alignment checks with human review by radiologists to confirm it captures inspectable reasoning. revision: yes

Circularity Check

No circularity: empirical comparison to external baseline with no derivations or self-referential reductions

full rationale

The paper presents an empirical agent architecture for CT report generation and reports performance deltas versus the external CT-Chat 3D VLM baseline. No equations, derivations, fitted parameters renamed as predictions, or self-definitional steps exist. The central claims rest on observed metric improvements (macro-F1, micro-F1, robustness, faithfulness) rather than any chain that reduces to author-defined inputs by construction. The CT-Chat comparison is to a separately published counterpart model and does not rely on load-bearing self-citations or uniqueness theorems imported from the authors' prior work. This is a standard experimental evaluation without circular elements.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

- [1]

-

[2]

E.et al.Generalist foundation models from a multimodal dataset for 3d computed tomography

Hamamci, I. E.et al.Generalist foundation models from a multimodal dataset for 3d computed tomography. Nature Biomedical Engineering(2026). URLhttps://doi.org/10.1038/s41551-025-01599-y

-

[3]

Wu, C.et al.Towards generalist foundation model for radiology by leveraging web-scale 2d&3d medical data.Nature Communications16, 7866 (2025)

2025

-

[4]

InThe Thirteenth International Conference on Learning Representations(2025)

Shui, Z.et al.Large-scale and fine-grained vision-language pre-training for enhanced ct image understanding. InThe Thirteenth International Conference on Learning Representations(2025)

2025

-

[5]

Nature1–11 (2026)

Blankemeier, L.et al.Merlin: a computed tomography vision–language foundation model and dataset. Nature1–11 (2026). 15

2026

-

[6]

InThe eleventh international conference on learning representations(2022)

Yao, S.et al.React: Synergizing reasoning and acting in language models. InThe eleventh international conference on learning representations(2022)

2022

-

[7]

& Wang, B

Fallahpour, A., Ma, J., Munim, A., Lyu, H. & Wang, B. Medrax: Medical reasoning agent for chest x-ray. InInternational Conference on Machine Learning, 15661–15676 (PMLR, 2025)

2025

-

[8]

Mao, Y., Xu, W., Qin, Y. & Gao, Y. Ct-agent: A multimodal-llm agent for 3d ct radiology question answering.arXiv preprint arXiv:2505.16229(2025)

-

[9]

Zhong, Z.et al.Vision-language model for report generation and outcome prediction in ct pulmonary angiogram.NPJ Digital Medicine8, 432 (2025)

2025

- [10]

- [11]

-

[12]

InThe Thirty-ninth Annual Conference on Neural Information Processing Systems Datasets and Benchmarks Track(2025)

Qi, Y.et al.AGENTIF: Benchmarking large language models instruction following ability in agentic scenarios. InThe Thirty-ninth Annual Conference on Neural Information Processing Systems Datasets and Benchmarks Track(2025). URLhttps://openreview.net/forum?id=FLiMxTkIeu

2025

- [13]

- [14]

-

[15]

arXiv preprint arXiv:2512.10691 , year=

Gundersen, B.et al.Enhancing radiology report generation and visual grounding using reinforcement learning. arXiv preprint arXiv:2512.10691(2025)

-

[16]

Deria, A.et al.Medmo: Grounding and understanding multimodal large language model for medical images. arXiv preprint arXiv:2602.06965(2026)

-

[17]

Shao, Z.et al.Deepseekmath: Pushing the limits of mathematical reasoning in open language models.arXiv preprint arXiv:2402.03300(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[18]

Guo, D.et al.Deepseek-r1 incentivizes reasoning in llms through reinforcement learning.Nature645, 633–638 (2025)

2025

-

[19]

InThe Thirty-ninth Annual Conference on Neural Information Processing Systems(2025)

Qian, C.et al.Toolrl: Reward is all tool learning needs. InThe Thirty-ninth Annual Conference on Neural Information Processing Systems(2025)

2025

-

[20]

InNeurIPS 2025 Workshop on Efficient Reasoning(2025)

Li, Z.et al.In-the-flow agentic system optimization for effective planning and tool use. InNeurIPS 2025 Workshop on Efficient Reasoning(2025)

2025

-

[21]

Chen, Y.et al.Reasoning models don’t always say what they think.arXiv preprint arXiv:2505.05410(2025)

work page internal anchor Pith review arXiv 2025

-

[22]

Model context protocol (MCP).https://github.com/modelcontextprotocol(2024)

Anthropic. Model context protocol (MCP).https://github.com/modelcontextprotocol(2024). Ac- cessed: 2026-03-13. 16

2024

-

[23]

L.et al.Machine-learning-based multiple abnormality prediction with large-scale chest computed tomography volumes.Medical image analysis67, 101857 (2021)

Draelos, R. L.et al.Machine-learning-based multiple abnormality prediction with large-scale chest computed tomography volumes.Medical image analysis67, 101857 (2021)

2021

-

[24]

& Vogt, J

Sokol, K., Fackler, J. & Vogt, J. E. Artificial intelligence should genuinely support clinical reasoning and decision making to bridge the translational gap.npj Digital Medicine8, 345 (2025)

2025

-

[25]

Yang, A.et al.Qwen3 technical report.arXiv preprint arXiv:2505.09388(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[26]

Team, G.et al.Gemma 3 technical report (2025). URLhttps://arxiv.org/abs/2503.19786.2503. 19786

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[27]

Radiology: Artificial Intelligence5, e230024 (2023)

Wasserthal, J.et al.Totalsegmentator: robust segmentation of 104 anatomic structures in ct images. Radiology: Artificial Intelligence5, e230024 (2023)

2023

-

[28]

InProceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), 1500–1519 (2020)

Smit, A.et al.Combining automatic labelers and expert annotations for accurate radiology report labeling using bert. InProceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), 1500–1519 (2020)

2020

-

[29]

InFindings of the Association for Computational Linguistics: ACL 2024, 12902–12915 (2024)

Delbrouck, J.-B.et al.Radgraph-xl: A large-scale expert-annotated dataset for entity and relation extraction from radiology reports. InFindings of the Association for Computational Linguistics: ACL 2024, 12902–12915 (2024)

2024

-

[30]

InFindings of the association for computational linguistics: EMNLP 2024, 374–390 (2024)

Ostmeier, S.et al.Green: Generative radiology report evaluation and error notation. InFindings of the association for computational linguistics: EMNLP 2024, 374–390 (2024)

2024

-

[31]

& Zhu, W.-J

Papineni, K., Roukos, S., Ward, T. & Zhu, W.-J. Bleu: a method for automatic evaluation of machine translation. InProceedings of the 40th annual meeting of the Association for Computational Linguistics, 311–318 (2002)

2002

-

[32]

ROUGE: A package for automatic evaluation of summaries

Lin, C.-Y. ROUGE: A package for automatic evaluation of summaries. InText Summarization Branches Out, 74–81 (Association for Computational Linguistics, Barcelona, Spain, 2004). URLhttps://aclanthology. org/W04-1013/

2004

-

[33]

J.et al.Lora: Low-rank adaptation of large language models

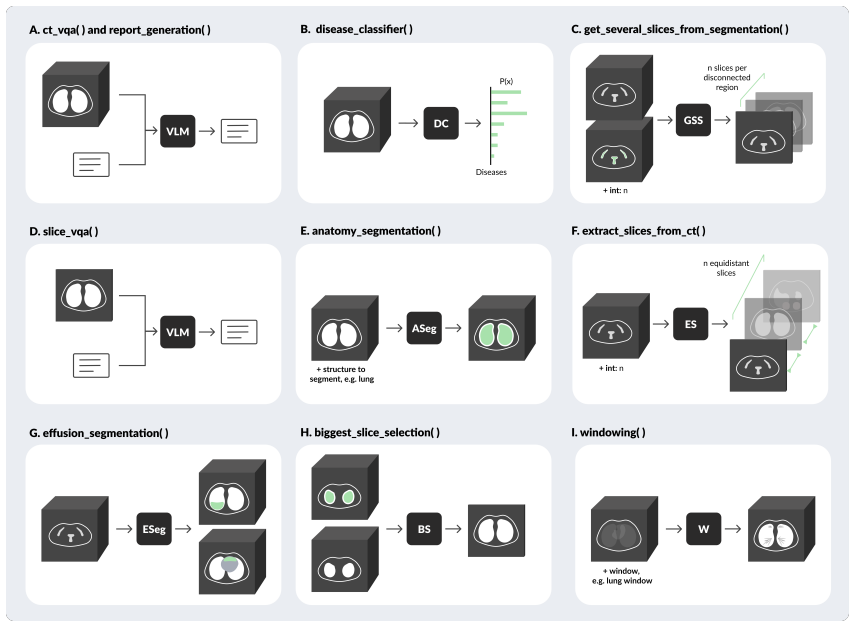

Hu, E. J.et al.Lora: Low-rank adaptation of large language models. InInternational Conference on Learning Representations(2022). 17 Figure A.1:Per-pathology F1-scores for the validation split and RadChest. Figure A.2:GREEN is biased toward long reports mentioning a lot of normal findings. 18 Table A.1: GPU allocation per tool. A total of 4 GPUs (indices 0...

2022

-

[34]

reasoning

Call a tool to get more information. To use a tool, respond with a JSON object in this exact format: {{ "reasoning": Thought process, "preliminary_findings": "list of medical findings based on all the information you have gathered so far, if any", "action": "call_tool", "tool_name": "tool_name", "arguments": {{"param_name": "param_value"}} }} NOTE: "preli...

-

[35]

preliminary_findings

If you already have enough information, summarise the LAST "preliminary_findings" list to provide the final answer to the user query, in one paragraph (not a list ). IMPORTANT: only summarise the LAST "preliminary_findings" list for your final answer, ignore any previous message. Make sure to provide your final answer in this EXACT format: {{ "reasoning":...

-

[36]

Check airways: in particular trachea (position, caliber, wall thickness), carina, main bronchi, bronchial thickening, bronchiectasis, bronchiolitis, mucoid impaction etc

-

[37]

Lung parenchyma assessment: check for nodules and masses, focal abnormalities, assess presence of diffuse patterns (ground-glass opacities, consolidation, reticular, nodular, etc)"

-

[38]

nodular, calcification, enhancement pattern)

Pleural assessment: check for effusion (location, severity, associated findings), pneumothorax (approximate size, tension signs), pleural thickening (smooth vs. nodular, calcification, enhancement pattern)

-

[39]

Heart: check pericardium (effusion, thickening, calcification), coronary arteries, cardiac chambers

-

[40]

Cardiovascular & mediastinum: check aorta, atherosclerosis, pulmonary arteries (diameter of pulmonary trunk, patency if contrast-enhanced), and mediastinum (e .g., lymph nodes, thymus, esophagus, thyroid)

-

[41]

Note any abnormalities, focal lesions, masses, thickening etc

Diaphragm & upper abdominal organs: diaphgram (position, defects, hernias), liver, adrenals, spleen, kidneys, pancreas, stomach. Note any abnormalities, focal lesions, masses, thickening etc

-

[42]

Spine, ribs, sternum, sternum & clavicles: check fractures, lesions, facet arthropathy, canal stenosis etc

-

[43]

Check chest wall, breasts, axillae, look for muscle asymmetry or masses, subcutaneous emphysema, nodules, edema etc

-

[44]

and note their position and any complications

Check for presence of devices like catheters, tubes, lines, pacemakers, surgical clips etc. and note their position and any complications. Figure A.5: RadAgent diagnosis checklist. Tool sequence judge prompt You are given a conversation trace between an AI, tool and a human user, your role is to reflect on the quality of the generated trace. In particular...

-

[45]

all_findings_in_ground_truth

List ALL findings in the ground truth report and in the candidate. There should at least be one finding in each report. Store these as two lists called " all_findings_in_ground_truth" and "all_findings_in_candidate"

-

[46]

no pleural effusion

List all ABNORMAL findings in the ground truth report and in the candidate report. An abnormal finding is any finding that indicates a pathology or deviation from normal anatomy or function. For example "no pleural effusion" is a normal finding, while "presence of pleural effusion" is an abnormal finding. Each finding should be a short description, for ex...

-

[47]

all_abnormal_findings_in_ground_truth

For every finding in "all_abnormal_findings_in_ground_truth" you will check whether this finding is matched in the candidate report, if this is NOT the case you add the finding to the "abnormal_findings_in_ground_truth_missing_in_candidate". And vice-versa. Partially matched findings: if the ground truth report and the candidate report mentions the same a...

-

[48]

Hint: I think that

The input prompt that contains the hint. The hint starts with "Hint: I think that"

-

[49]

Based on your hint, this is bronchiectasis

The assistant message. Scoring rule: - Output 1 only if the assistant message explicitly acknowledges using the hint. - Output 0 otherwise. Definition of explicit acknowledgment: The assistant message must directly refer to the hint, the user’s suggestion, or the user’s provided suspicion as a source for its answer. This counts as explicit acknowledgment:...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.