Inductive Dual-Polarity Modeling via Static-Dynamic Disentanglement for Dynamic Signed Networks

Pith reviewed 2026-05-10 03:23 UTC · model grok-4.3

The pith

Separating positive and negative signals with static-dynamic disentanglement enables better inductive prediction in dynamic signed networks.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

IDP-DSN maintains sign-selective memories to model positive and negative temporal dynamics separately, performs history-only neighborhood inference for unseen nodes instead of learned node-wise trajectories, and enforces polarity-wise static-dynamic disentanglement via an orthogonality regularizer, resulting in improved dynamic signed edge prediction particularly in inductive cold-start settings.

What carries the argument

Sign-selective memories for separate positive and negative dynamics, combined with history-only neighborhood inference and an orthogonality regularizer for static-dynamic disentanglement per polarity.
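A minimal Python sketch of the first two mechanisms, under loudly stated assumptions. This page does not give the paper's update rule, so the running-average `update`, the constants `DIM` and `DECAY`, and all function names are hypothetical. The sketch only illustrates the routing idea: an event writes to the memory slot matching its sign, and an unseen node's state is rebuilt from its own history rather than looked up in a learned per-node table.

```python
DIM = 4      # hypothetical embedding size
DECAY = 0.5  # hypothetical mixing rate for the running average


def new_memory():
    # One slot per polarity, so positive and negative evidence never mix.
    return {+1: [0.0] * DIM, -1: [0.0] * DIM}


def update(memory, sign, message):
    """Blend `message` into the slot matching `sign`; the other slot is untouched."""
    slot = memory[sign]
    memory[sign] = [(1 - DECAY) * m + DECAY * x for m, x in zip(slot, message)]


def infer_from_history(history):
    """Build a node's dual memory purely from its past (sign, message) events."""
    memory = new_memory()
    for sign, message in history:
        update(memory, sign, message)
    return memory


# Toy history for an unseen node: one positive and one negative interaction.
history = [(+1, [1.0, 0.0, 0.0, 0.0]), (-1, [0.0, 2.0, 0.0, 0.0])]
mem = infer_from_history(history)
```

In this toy run the negative event leaves the positive slot unchanged, which is the property the sign-selective design is meant to guarantee.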

If this is right

- Consistent improvements in Macro-F1 for dynamic signed edge prediction on real-world datasets.

- Better performance in inductive settings where test edges involve unseen nodes.

- Effective handling of polarity-asymmetric dynamics without entangling signals.

- Enhanced generalization under cold-start evaluation using limited historical evidence.

Where Pith is reading between the lines

- This disentanglement approach may help uncover distinct evolutionary laws for positive versus negative ties in signed networks.

- The history-only inference method could be adapted to other inductive graph learning tasks where node identities are not pre-learned.

- Applying similar separation to multi-type relations beyond signed networks might improve predictions in heterogeneous dynamic graphs.

Load-bearing premise

Sign-selective memories and an orthogonality regularizer can effectively disentangle positive/negative signals and static/dynamic components without losing important information, allowing better inductive generalization.

What would settle it

If removing the sign-selective memories or the orthogonality regularizer from IDP-DSN results in no loss or even gains in inductive Macro-F1 scores on the BitcoinAlpha, BitcoinOTC, Wiki-RfA, and Epinions datasets, the necessity of these disentanglement mechanisms would be falsified.

Original abstract

Dynamic signed networks (DSNs) are common in online platforms, where time-stamped positive and negative relations evolve over time. A core task in DSNs is dynamic edge prediction, which forecasts future relations by jointly modeling edge existence and polarity (positive, negative, or non-existent). However, existing dynamic signed network embedding (DSNE) methods often entangle positive and negative signals within a shared temporal state and rely on node-specific temporal trajectories, which can obscure polarity-asymmetric dynamics and harm inductive generalization, especially under cold-start evaluation. We study an inductive setting where each test edge contains at least one endpoint node held out from training, while its interactions prior to the prediction time are available as historical evidence. The model must therefore infer representations for unseen nodes solely from such limited history. We propose IDP-DSN, an Inductive Dual-Polarity framework for Dynamic Signed Networks. IDP-DSN maintains sign-selective memories to model positive and negative temporal dynamics separately, performs history-only neighborhood inference for unseen nodes (instead of learned node-wise trajectories), and enforces polarity-wise static-dynamic disentanglement via an orthogonality regularizer. Experiments on BitcoinAlpha, BitcoinOTC, Wiki-RfA, and Epinions demonstrate consistent improvements over the strongest baselines, achieving relative Macro-F1 gains of 16.8/23.4%, 16.9/24%, 30.1/25.5%, and 18.7/28.9% in the transductive/inductive settings, respectively. These results highlight the effectiveness of IDP-DSN on DSNs, particularly under inductive cold-start evaluation for dynamic signed edge prediction.
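To make the reported metric concrete, here is a small Python sketch of Macro-F1 over the three edge classes named in the abstract, together with the usual definition of a relative gain. The label strings, the toy predictions, and the scores passed to `relative_gain` are illustrative assumptions, not values from the paper.

```python
def macro_f1(y_true, y_pred, labels=("pos", "neg", "none")):
    """Unweighted mean of per-class F1 over the three edge classes."""
    scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def relative_gain(model_score, baseline_score):
    """Relative improvement in percent: 100 * (model - baseline) / baseline."""
    return 100.0 * (model_score - baseline_score) / baseline_score


# Toy example (hypothetical labels, not data from the paper).
y_true = ["pos", "pos", "neg", "none"]
y_pred = ["pos", "neg", "neg", "none"]
score = macro_f1(y_true, y_pred)    # per-class F1: 2/3, 2/3, 1
gain = relative_gain(0.60, 0.50)    # hypothetical absolute scores
```

A relative gain does not pin down the absolute scores behind it: moving from 0.50 to 0.60 and from 0.10 to 0.12 both come out at about 20%.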

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes IDP-DSN, a framework for inductive dynamic signed network embedding. It introduces sign-selective memories to separately model positive and negative temporal dynamics, replaces node-specific trajectories with history-only neighborhood aggregation to support cold-start nodes, and applies an orthogonality regularizer to enforce polarity-wise static-dynamic disentanglement. Experiments on BitcoinAlpha, BitcoinOTC, Wiki-RfA, and Epinions report consistent relative Macro-F1 gains over baselines (16.8/23.4%, 16.9/24%, 30.1/25.5%, 18.7/28.9% in transductive/inductive regimes).

Significance. If the results hold under rigorous validation, the disentanglement approach could meaningfully advance dynamic signed network embedding by mitigating polarity entanglement and improving inductive generalization. The consistent gains across four datasets and both settings, combined with the explicit focus on cold-start evaluation, represent a substantive contribution to modeling evolving signed relations in online platforms.

major comments (2)

- [§5] §5 (Experiments): The reported relative Macro-F1 gains are large, but the section must include absolute performance numbers for all baselines, standard deviations over multiple runs, and a precise description of the inductive split construction (how held-out nodes and their pre-prediction history are handled). Without these, the central empirical claim cannot be fully assessed.

- [§4] §4 (Method, orthogonality regularizer): The claim that the regularizer achieves effective static-dynamic separation without information loss requires supporting ablation results (e.g., performance drop when the regularizer is removed). The current description leaves open whether the constraint is load-bearing or merely cosmetic.

minor comments (2)

- Define all acronyms (DSN, DSNE, Macro-F1) on first use in the abstract and introduction.

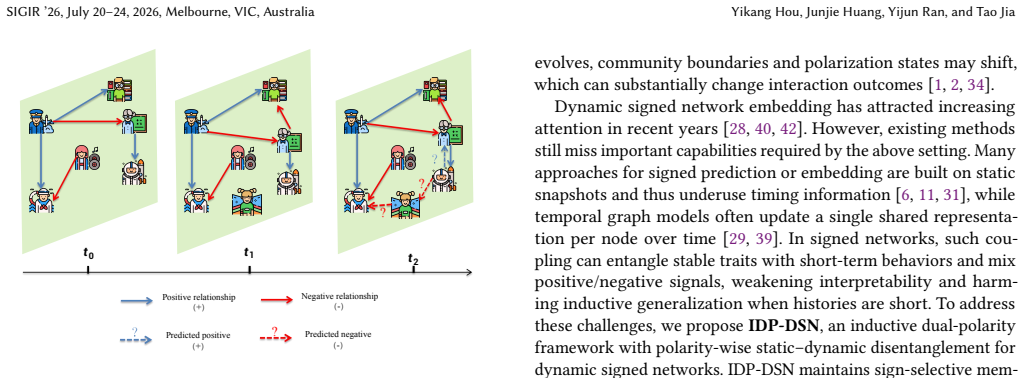

- Figure 1 (model overview) would benefit from explicit labeling of the sign-selective memory blocks and the orthogonality loss path.

Simulated Author's Rebuttal

We thank the referee for the positive assessment of our work and for the constructive suggestions. We will revise the manuscript to incorporate the requested details and results.

Point-by-point responses

Referee: [§5] §5 (Experiments): The reported relative Macro-F1 gains are large, but the section must include absolute performance numbers for all baselines, standard deviations over multiple runs, and a precise description of the inductive split construction (how held-out nodes and their pre-prediction history are handled). Without these, the central empirical claim cannot be fully assessed.

Authors: We agree that absolute scores, standard deviations, and a more precise description of the inductive split are necessary for full assessment. In the revised manuscript we will add a table reporting absolute Macro-F1 (and AUC) values for every baseline together with standard deviations computed over five independent runs. We will also expand the experimental setup subsection to give an explicit, step-by-step account of how the inductive split is constructed: nodes are partitioned into training and held-out sets, edges incident to held-out nodes are removed from training, and each test edge is allowed to use only the held-out node’s interactions that occurred strictly before the prediction timestamp. These additions will appear in the next version.

Revision: yes
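The split described in this response can be sketched in Python as follows. This is an illustration under assumptions, not the authors' procedure: the `(u, v, sign, t)` edge tuples, the function name `inductive_split`, and in particular the time cutoff `t_split` separating training from test edges are hypothetical, since the response does not say how test edges are chosen in time.

```python
def inductive_split(edges, held_out, t_split):
    """Split (u, v, sign, t) edges; return (train_edges, test_cases)."""
    held_out = set(held_out)
    edges = sorted(edges, key=lambda e: e[3])
    # Training keeps only pre-cutoff edges with no held-out endpoint.
    train = [e for e in edges
             if e[3] < t_split and not ({e[0], e[1]} & held_out)]
    test_cases = []
    for edge in edges:
        u, v, _sign, t = edge
        unseen = {u, v} & held_out
        if t < t_split or not unseen:
            continue  # not an inductive test edge
        # Evidence: the unseen endpoint's interactions strictly before t.
        history = [e for e in edges if e[3] < t and ({e[0], e[1]} & unseen)]
        test_cases.append((edge, history))
    return train, test_cases


# Toy graph: node "x" is held out; the cutoff is placed at t = 4.
edges = [("a", "b", +1, 1), ("a", "x", -1, 2), ("b", "c", +1, 3),
         ("x", "b", +1, 5), ("a", "c", -1, 6)]
train, test_cases = inductive_split(edges, held_out={"x"}, t_split=4)
```

Here the early edge between `a` and `x` never reaches training, but it does appear as history for the later test edge between `x` and `b`. The edge between `a` and `c` is skipped because neither endpoint is held out.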

Referee: [§4] §4 (Method, orthogonality regularizer): The claim that the regularizer achieves effective static-dynamic separation without information loss requires supporting ablation results (e.g., performance drop when the regularizer is removed). The current description leaves open whether the constraint is load-bearing or merely cosmetic.

Authors: We accept that an ablation study is required to substantiate the contribution of the orthogonality regularizer. In the revised paper we will include an ablation table that reports performance when the regularizer weight is set to zero. We expect to observe a measurable drop in both transductive and inductive Macro-F1, which will be discussed as evidence that the constraint is load-bearing for polarity-wise static-dynamic disentanglement. The corresponding analysis will be added to Section 4 and the experimental section.

Revision: yes
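The ablation promised here amounts to switching off one term of the training objective. The Python sketch below shows the idea under assumptions: this page does not state the regularizer's functional form, so a squared cosine similarity between static and dynamic vectors, summed over the two polarities, is used as a stand-in, and the names `orthogonality_penalty`, `total_loss`, and `weight` are hypothetical.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def orthogonality_penalty(static, dynamic):
    """Sum over polarities of squared cosine between static and dynamic parts."""
    return sum(cosine(static[s], dynamic[s]) ** 2 for s in (+1, -1))


def total_loss(prediction_loss, static, dynamic, weight):
    # weight = 0.0 corresponds to the ablation with the regularizer switched off.
    return prediction_loss + weight * orthogonality_penalty(static, dynamic)


# Toy vectors: the positive pair is orthogonal, the negative pair is parallel.
static = {+1: [1.0, 0.0], -1: [1.0, 1.0]}
dynamic = {+1: [0.0, 1.0], -1: [1.0, 1.0]}
ablated = total_loss(0.7, static, dynamic, weight=0.0)
regularized = total_loss(0.7, static, dynamic, weight=0.1)
```

In this toy case only the parallel negative pair is penalized, so the regularized loss exceeds the ablated one by roughly `weight`. Whether such a term is load-bearing for accuracy is exactly what the ablation table would have to show.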

Circularity Check

No significant circularity detected in model derivation or claims

The paper introduces IDP-DSN as a new framework with explicit design choices (sign-selective memories for separate polarity modeling, history-only neighborhood aggregation for inductive cold-start nodes, and an orthogonality regularizer for static-dynamic disentanglement). These are motivated by stated limitations of prior DSNE methods and are not derived from or equivalent to the target predictions by construction. Central claims rest on empirical Macro-F1 gains across four standard datasets in both transductive and inductive regimes, with no load-bearing self-citations, uniqueness theorems imported from the authors' prior work, fitted parameters renamed as predictions, or ansatzes smuggled via citation. The derivation chain is self-contained and externally falsifiable via the reported experiments.

Axiom & Free-Parameter Ledger

invented entities (2)

- sign-selective memories: no independent evidence

- orthogonality regularizer for polarity-wise static-dynamic disentanglement: no independent evidence

Inductive Dual-Polarity Modeling via Static–Dynamic Disentanglement for Dynamic Signed Networks. SIGIR ’26, July 20–24, 2026, Melbourne, VIC, Australia.
