Data (in)equities in data science: Dissecting systemic and systematic biases in pulse oximetry

Pith reviewed 2026-05-10 03:33 UTC · model grok-4.3

The pith

A single measurement error in pulse oximetry produces distinct violations of data equity, prediction equity, and decision equity.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

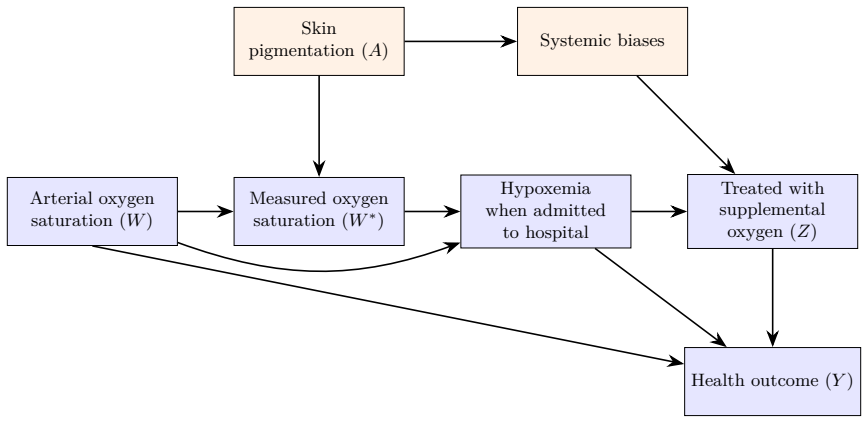

Using pulse oximetry as an oracle case, the authors trace how one upstream violation of information bias at the measurement stage compounds into treatment disparities, fairness violations, and adverse health outcomes. The reverse direction starts from an observed disparity and uses the data equity structure to identify its origins systematically. The result is that data equity, prediction equity, and decision equity emerge as separate requirements, each carrying distinct evaluation needs and policy implications.
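The forward trace described above can be made concrete with a toy simulation. The sketch below is not the authors' model. It assumes two hypothetical groups, "A" and "B", with identical true saturation distributions, an additive upward shift `bias_b` in group B's readings, and a rule that treats whenever the reading falls below `threshold`. Every number and name here is an illustrative assumption.

```python
import random


def simulate(n=20000, bias_b=2.0, threshold=92.0, seed=0):
    """Return, per group, the share of truly hypoxemic patients left untreated.

    Hypothetical setup: group "B" readings are shifted upward by bias_b
    percentage points; group "A" readings are unbiased.
    """
    rng = random.Random(seed)
    hypoxemic = {"A": 0, "B": 0}  # true saturation below the threshold
    missed = {"A": 0, "B": 0}     # hypoxemic but not treated
    for _ in range(n):
        group = rng.choice(["A", "B"])
        true_sat = rng.gauss(94.0, 3.0)      # same distribution in both groups
        noise = rng.gauss(0.0, 1.0)          # ordinary device noise
        reading = true_sat + noise + (bias_b if group == "B" else 0.0)
        treated = reading < threshold        # decision uses the reading only
        if true_sat < threshold:
            hypoxemic[group] += 1
            if not treated:
                missed[group] += 1
    return {g: missed[g] / hypoxemic[g] for g in missed}


rates = simulate()
print(rates)
```

Calling `simulate(bias_b=0.0)` should bring the two rates close together, which isolates the measurement shift as the only source of the gap in this toy setting.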

What carries the argument

The oracle tracing method that follows bias propagation from measurement error through the analytic pipeline to outcome disparities.

If this is right

- Correcting only prediction fairness leaves upstream measurement biases untouched and can still produce unequal health outcomes.

- Policies focused on final treatment decisions cannot fully offset errors that originated at data collection.

- Different statistical tools and thresholds are needed to assess equity at each stage rather than a single fairness metric.

- Statisticians can map specific bias pathways but require domain knowledge from clinicians to interpret real-world impacts.

- Interdisciplinary teams must coordinate because no single discipline owns all three equity layers.
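One way to see why a single fairness metric is not enough is to compute a separate gap at each stage of a toy pipeline. The sketch below uses one possible operationalization, which is an assumption and not the paper's definitions: the data-level gap is the difference in mean signed measurement error, the prediction-level gap is the difference in false-negative rate of a device-based hypoxemia flag, and the decision-level gap is the difference in untreated hypoxemia per patient, with treatment assumed to follow the flag exactly.

```python
import random


def stage_gaps(n=40000, bias_b=2.0, threshold=92.0, seed=1):
    """Compute group B minus group A gaps at three pipeline stages (toy data)."""
    rng = random.Random(seed)
    stats = {g: {"n": 0, "err": 0.0, "hyp": 0, "missed": 0} for g in "AB"}
    for _ in range(n):
        g = rng.choice("AB")
        true_sat = rng.gauss(94.0, 3.0)
        reading = true_sat + rng.gauss(0.0, 1.0) + (bias_b if g == "B" else 0.0)
        flagged = reading < threshold        # prediction stage: hypoxemia flag
        s = stats[g]
        s["n"] += 1
        s["err"] += reading - true_sat       # data stage: signed error
        if true_sat < threshold:
            s["hyp"] += 1
            s["missed"] += int(not flagged)  # treatment assumed to follow the flag

    def per_group(g):
        s = stats[g]
        return (
            s["err"] / s["n"],       # mean signed measurement error
            s["missed"] / s["hyp"],  # false-negative rate among the hypoxemic
            s["missed"] / s["n"],    # untreated hypoxemia per patient
        )

    a, b = per_group("A"), per_group("B")
    return {
        "data_gap": b[0] - a[0],
        "prediction_gap": b[1] - a[1],
        "decision_gap": b[2] - a[2],
    }


gaps = stage_gaps()
print(gaps)
```

The three gaps sit on different scales (percentage points of saturation, a conditional error rate, and a population rate), so a threshold that is sensible for one is not meaningful for the others.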

Where Pith is reading between the lines

- The same tracing method could be applied to other medical sensors or diagnostic tools known to differ across demographic groups.

- Standardized bias-audit templates might be developed for common data pipelines in healthcare AI.

- Real deployments would likely need hybrid oracle-plus-empirical checks when full data on confounders is unavailable.

Load-bearing premise

That the documented pulse oximetry measurement error can be treated as a clean representative case whose effects can be followed without interference from other unmeasured factors.

What would settle it

Empirical data showing that racial disparities in pulse oximetry outcomes persist even after correcting for the known measurement error, or that the disparities do not separate into distinct data-level, prediction-level, and decision-level components.
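The first test above can be rehearsed on synthetic data before it is tried on real records. In the sketch below, readings for a hypothetical group "B" carry a known shift `bias_b`, and an optional second source of disparity is switched on: flagged patients in group B are treated only 80% of the time. Both the second source and its size are invented for illustration. The question is whether the gap in missed treatment survives once the known shift is subtracted.

```python
import random


def missed_gap(correct_bias, extra_source, n=40000, bias_b=2.0,
               threshold=92.0, seed=2):
    """Gap (B minus A) in missed treatment among truly hypoxemic patients."""
    rng = random.Random(seed)
    hyp = {"A": 0, "B": 0}
    missed = {"A": 0, "B": 0}
    for _ in range(n):
        g = rng.choice("AB")
        true_sat = rng.gauss(94.0, 3.0)
        reading = true_sat + rng.gauss(0.0, 1.0) + (bias_b if g == "B" else 0.0)
        if correct_bias and g == "B":
            reading -= bias_b                # subtract the known shift
        treated = reading < threshold
        if extra_source and g == "B" and treated:
            treated = rng.random() < 0.8     # hypothetical decision-stage source
        if true_sat < threshold:
            hyp[g] += 1
            missed[g] += int(not treated)
    return missed["B"] / hyp["B"] - missed["A"] / hyp["A"]


print("uncorrected, single source:", round(missed_gap(False, False), 3))
print("corrected, single source:  ", round(missed_gap(True, False), 3))
print("corrected, two sources:    ", round(missed_gap(True, True), 3))
```

A residual gap after correction, as in the third scenario, would be the synthetic analogue of the evidence described above: the measurement error alone does not account for the disparity.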

Original abstract

Data equity is an emerging framework for responsible data science. However, its core concepts, including fairness, representativeness, and information bias, remain largely abstract and general, lacking the mathematical specificity needed for practical implementation. In this paper, we demonstrate how statisticians can operationalize data equity by translating its tenets into precise, testable formulations tailored to a given problem. Using the well-documented case of differential measurement error across racial groups in pulse oximetry, we first adopt an oracle approach, tracing how a single upstream violation of information bias compounds through the analytic pipeline into treatment disparities, fairness violations, and adverse health outcomes. We then demonstrate the inverse: starting from an observed outcome disparity, the data equity framework provides a principled structure for systematically identifying its statistical sources. Our exposition reveals that data equity, prediction equity, and decision equity are distinct requirements with distinct evaluation and policy needs--a nuance that highlights both the unique role of statisticians in the era of artificial intelligence as well as the necessity of interdisciplinary collaboration.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript uses the well-documented racial differential in pulse-oximetry measurement error as an oracle case to trace how an upstream information-bias violation propagates forward through an analytic pipeline to produce treatment disparities and adverse outcomes, and then demonstrates the inverse exercise of starting from an observed outcome disparity and systematically attributing it to statistical sources. The central claim is that data equity, prediction equity, and decision equity are distinct constructs that impose separate evaluation criteria and policy requirements.

Significance. If the claimed separability can be made rigorous, the work supplies a concrete, domain-specific illustration of how statisticians can translate abstract equity notions into operational, traceable quantities. This strengthens the case for statistical expertise in fairness auditing and underscores the value of interdisciplinary collaboration when measurement error interacts with downstream decisions.

Major comments (3)

- [oracle approach / forward tracing] The oracle-tracing argument (abstract and the forward-propagation section) treats the documented pulse-oximetry error as a clean, isolated upstream violation whose effects compound without unmodeled confounders (device drift, differential missingness, comorbidity correlations, or selection into oximetry). This assumption is load-bearing for the separability of the three equity types; without sensitivity bounds or real-data verification, the attribution of downstream disparities to the initial data-equity violation is non-unique.

- [abstract and methods] No explicit error-propagation formulas, identification assumptions, or quantitative bounds appear in the exposition. The abstract promises “precise, testable formulations,” yet the tracing remains qualitative; this gap prevents readers from reproducing or falsifying the claimed distinctions between data, prediction, and decision equity.

- [inverse demonstration] The inverse exercise (starting from an observed disparity) lacks a formal identification strategy or sensitivity analysis that would rule out alternative statistical sources. Without such structure, the demonstration that the data-equity framework “provides a principled structure” for source attribution remains illustrative rather than demonstrative.
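To illustrate the kind of explicit error-propagation statement the comments above ask for, here is a minimal sketch under an additive Gaussian error model. The model and all symbols are assumptions introduced for illustration and are not taken from the manuscript: $X$ is true saturation, $W$ the device reading, $G$ the group, $\delta_g$ the group-specific shift, $\sigma$ the noise standard deviation, $\tau$ the treatment threshold, and $f_g$, $F_g$ the density and distribution function of $X$ in group $g$.

```latex
% Illustrative additive-error model (an assumption, not the authors' formulation)
W = X + \delta_G + \varepsilon, \qquad
\varepsilon \sim \mathcal{N}(0, \sigma^2), \quad \varepsilon \perp (X, G)

% Threshold decision rule: treat when the reading falls below \tau
D = \mathbf{1}\{W < \tau\}

% Probability that a patient with true saturation x in group g goes untreated
\Pr(D = 0 \mid X = x,\, G = g) = \Phi\!\left(\frac{x + \delta_g - \tau}{\sigma}\right)

% Missed-treatment rate among truly hypoxemic patients (X < \tau) in group g
m_g = \frac{1}{F_g(\tau)} \int_{-\infty}^{\tau}
      \Phi\!\left(\frac{x + \delta_g - \tau}{\sigma}\right) f_g(x)\, dx
```

Under these assumptions, if $f_A = f_B$ and $\delta_B > \delta_A$, the integrand is pointwise larger for group B, so $m_B > m_A$. Any difference between $f_A$ and $f_B$ or in $\sigma$ across groups enters the same integral, which is where the non-uniqueness raised in the first comment would show up.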

Minor comments (2)

- [discussion] Notation for the three equity types is introduced informally; a compact table or diagram defining each construct, its evaluation metric, and its policy lever would improve clarity.

- [introduction] The manuscript cites the pulse-oximetry literature but does not reference recent statistical work on differential measurement error (e.g., in fairness auditing or transportability). Adding two or three targeted citations would situate the contribution more precisely.

Simulated Author's Rebuttal

We thank the referee for their constructive comments on our manuscript. The feedback highlights key areas where we can clarify and strengthen our arguments regarding the operationalization of data equity concepts. Below, we provide point-by-point responses to the major comments.

- Referee: The oracle-tracing argument (abstract and the forward-propagation section) treats the documented pulse-oximetry error as a clean, isolated upstream violation whose effects compound without unmodeled confounders (device drift, differential missingness, comorbidity correlations, or selection into oximetry). This assumption is load-bearing for the separability of the three equity types; without sensitivity bounds or real-data verification, the attribution of downstream disparities to the initial data-equity violation is non-unique.

Authors: We agree that the oracle-tracing approach relies on treating the pulse-oximetry error as an isolated violation for illustrative purposes. This is explicitly framed as such in the manuscript to trace the propagation without confounding factors, thereby highlighting the distinct roles of data equity, prediction equity, and decision equity. The separability is demonstrated conceptually under these conditions. However, we recognize the value of addressing potential confounders. In the revised manuscript, we will add a discussion of unmodeled factors such as device drift and comorbidity correlations, along with qualitative sensitivity considerations, to better support the attribution claims.

Revision: partial

- Referee: No explicit error-propagation formulas, identification assumptions, or quantitative bounds appear in the exposition. The abstract promises “precise, testable formulations,” yet the tracing remains qualitative; this gap prevents readers from reproducing or falsifying the claimed distinctions between data, prediction, and decision equity.

Authors: The abstract refers to “precise, testable formulations” in the sense that the definitions of data equity (information bias violation), prediction equity (fairness in model outputs), and decision equity (equitable downstream decisions) provide clear criteria that can be tested with appropriate data and methods. The tracing in the paper is intentionally conceptual to establish the framework, rather than providing a specific numerical propagation model. To address this, we will include explicit identification assumptions and a high-level error-propagation outline in the methods section of the revision, making the distinctions more amenable to quantitative verification.

Revision: yes

- Referee: The inverse exercise (starting from an observed disparity) lacks a formal identification strategy or sensitivity analysis that would rule out alternative statistical sources. Without such structure, the demonstration that the data-equity framework “provides a principled structure” for source attribution remains illustrative rather than demonstrative.

Authors: The inverse exercise is presented as a demonstration of how the data equity framework can structure the attribution process, starting from outcome disparities and working backwards through the pipeline. We acknowledge that it lacks a fully formal identification strategy in the current version. In the revision, we will incorporate a structured identification approach, including key assumptions and a note on the role of sensitivity analysis to rule out alternative sources, thereby making the demonstration more rigorous while maintaining its illustrative nature.

Revision: partial

- The request for real-data verification of the attribution in the oracle approach cannot be fully met within the scope of this conceptual manuscript, as it relies on the established literature rather than new empirical analysis.
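Short of real-data verification, the sensitivity considerations discussed in this exchange can at least be tabulated under stated assumptions. The sketch below assumes an additive Gaussian error model that is not taken from the manuscript: true saturation is normal with mean `mu` and standard deviation `s`, the reading adds a shift `delta` plus noise with standard deviation `sigma`, and treatment is given when the reading falls below `tau`. It integrates numerically to get the missed-treatment rate among truly hypoxemic patients, then sweeps the assumed shift. All parameter values are hypothetical.

```python
from statistics import NormalDist


def missed_rate(delta, mu=94.0, s=3.0, sigma=1.0, tau=92.0, steps=4000):
    """Share of truly hypoxemic patients (true saturation < tau) left untreated.

    Midpoint-rule integration of P(reading >= tau | x) over x < tau,
    weighted by the assumed density of true saturation.
    """
    true_dist = NormalDist(mu, s)
    std_normal = NormalDist()
    lower = mu - 8 * s                       # far enough into the left tail
    width = (tau - lower) / steps
    total = 0.0
    for i in range(steps):
        x = lower + (i + 0.5) * width
        p_untreated = std_normal.cdf((x + delta - tau) / sigma)
        total += p_untreated * true_dist.pdf(x) * width
    return total / true_dist.cdf(tau)


reference = missed_rate(0.0)
for delta in (0.0, 1.0, 2.0, 3.0):
    gap = missed_rate(delta) - reference
    print(f"assumed shift {delta:.1f} -> gap in missed-treatment rate {gap:.3f}")
```

Repeating the sweep with different `mu`, `s`, or `sigma` per group would show how much an unmodeled difference in the underlying population or in device noise could shift the attribution, which is the kind of bound the referee asks for.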

Circularity Check

No circularity; derivation traces externally documented bias forward and backward without self-referential reductions.

full rationale

The paper begins from the well-documented differential pulse-oximetry measurement error across racial groups and employs an oracle approach to trace compounding effects into distinct equity types. No equations, fitted parameters, or self-citations are shown to define the claimed distinctions (data equity, prediction equity, decision equity) in terms of the authors' own inputs. The exposition remains self-contained by relying on external empirical facts rather than renaming known results, smuggling ansatzes, or forcing predictions via internal fits. This yields a normal non-finding of circularity.

Axiom & Free-Parameter Ledger

Axioms (1)

- Domain assumption: Differential measurement error in pulse oximetry across racial groups exists and can be treated as an upstream violation whose downstream effects are traceable.
