ConvVitMamba: Efficient Multiscale Convolution, Transformer, and Mamba-Based Sequence modelling for Hyperspectral Image Classification

Pith reviewed 2026-05-10 04:31 UTC · model grok-4.3

The pith

ConvVitMamba combines multiscale convolution, Vision Transformer tokenization, and Mamba sequence mixing to outperform separate CNN, Transformer, and Mamba models on hyperspectral image classification while balancing accuracy, size, and runtime.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The paper establishes that the ConvVitMamba architecture, formed by stacking a multiscale convolutional feature extractor, a Vision Transformer-based tokenization and encoding stage, and a Mamba-inspired gated sequence mixing module after PCA preprocessing, produces higher classification accuracy than standalone CNN, Vision Transformer, and Mamba approaches across the Houston, Pingan, Qingyun, and Tangdaowan hyperspectral datasets while preserving a favorable trade-off between accuracy, model size, and inference speed.

What carries the argument

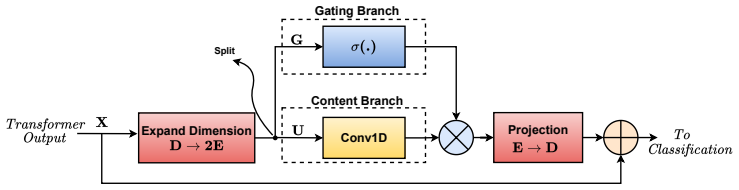

The ConvVitMamba hybrid stack: multiscale convolution captures local patterns, Vision Transformer encoding models long-range dependencies, and Mamba gated mixing refines the sequence in a content-aware way without quadratic attention.
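To make "gated mixing without quadratic attention" concrete, here is a minimal sketch of a gated, linear-time recurrence over a token sequence. It is an illustration only: the name `gated_sequence_mix`, the sigmoid gate, and the scalar gate parameters are assumptions introduced here, not the paper's module, whose exact state update is not given on this page.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def gated_sequence_mix(tokens, gate_weight=1.0, gate_bias=0.0):
    """Mix a sequence of token vectors with a content-dependent gate.

    tokens: list of equal-length lists of floats (one vector per token).
    For each channel, the gate g = sigmoid(gate_weight * x + gate_bias)
    decides how much of the running state to keep:
        h_t = g * h_{t-1} + (1 - g) * x_t
    The sequence is visited once, so the cost is O(L * D) for L tokens of
    width D, compared with O(L^2 * D) for full self-attention.
    """
    if not tokens:
        return []
    state = [0.0] * len(tokens[0])
    outputs = []
    for x in tokens:
        new_state = []
        for h_prev, x_c in zip(state, x):
            g = sigmoid(gate_weight * x_c + gate_bias)  # gate depends on the input
            new_state.append(g * h_prev + (1.0 - g) * x_c)
        state = new_state
        outputs.append(list(state))
    return outputs
```

Because the gate is computed from each token, the amount of history carried forward varies with content, which is the sense of "content-aware" used above. A real Mamba-style layer would use learned projections and a richer state; this sketch only shows the scaling argument.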

If this is right

- The hybrid model records higher overall accuracy than CNN, Vision Transformer, and Mamba baselines on four standard hyperspectral benchmarks.

- Ablation results show that removing any one component reduces performance, confirming the stages are complementary rather than redundant.

- The architecture maintains smaller model size and faster inference than the strongest competing methods while improving accuracy.

- PCA preprocessing enables the efficiency gains without preventing the model from reaching state-of-the-art accuracy on the evaluated scenes.

Where Pith is reading between the lines

- The same staged design could be tested on other high-dimensional imagery such as multispectral satellite data or medical spectral scans where both local detail and global context matter.

- Real-time UAV deployment becomes more plausible once the inference speed advantage is verified on embedded hardware.

- A direct comparison without PCA on full-band inputs would clarify how much spectral information the preprocessing step actually discards.

- Extending the Mamba module to handle longer spatial sequences could reveal whether the efficiency scaling advantage persists at higher resolutions.

Load-bearing premise

The three components deliver complementary benefits that hold on hyperspectral data beyond the four tested datasets and that PCA preprocessing removes redundancy without discarding information needed for accurate classification.
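The PCA half of this premise can be stated compactly. The notation below ($N$ pixels, $B$ bands, $k$ retained components) is introduced here for illustration and is textbook PCA, not taken from the paper; the retained-component count the authors used is not reported on this page.

```latex
\begin{aligned}
\bar{X} &= X - \mathbf{1}\mu^{\top}, \qquad X \in \mathbb{R}^{N \times B},\quad \mu = \tfrac{1}{N} X^{\top}\mathbf{1} \\
C &= \tfrac{1}{N-1}\,\bar{X}^{\top}\bar{X} = W \Lambda W^{\top}, \qquad \Lambda = \operatorname{diag}(\lambda_1 \ge \dots \ge \lambda_B \ge 0) \\
Z &= \bar{X} W_k, \qquad W_k = [\,w_1, \dots, w_k\,] \in \mathbb{R}^{B \times k} \\
\rho(k) &= \frac{\sum_{i=1}^{k} \lambda_i}{\sum_{i=1}^{B} \lambda_i}
\end{aligned}
```

A value of $\rho(k)$ close to 1 means little variance is discarded, but variance is not the same thing as class-discriminative information. That gap is why the premise needs empirical support, for example a sensitivity study over $k$.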

What would settle it

An experiment on a fifth hyperspectral dataset in which a pure CNN, pure Vision Transformer, or pure Mamba model matches or exceeds ConvVitMamba in both overall accuracy and inference efficiency would falsify the claim of consistent superiority.

Original abstract

Hyperspectral image (HSI) classification remains challenging due to high spectral dimensionality, redundancy, and limited labeled data. Although convolutional neural networks (CNNs) and Vision Transformers (ViTs) achieve strong performance by exploiting spectral-spatial information and long-range dependencies, they often incur high computational cost and large model size, limiting practical use. To address these limitations, a unified hybrid framework, termed ConvVitMamba, is proposed for efficient HSI classification. The architecture integrates three components: a multiscale convolutional feature extractor to capture local spectral, spatial, and joint patterns; a Vision Transformer-based tokenization and encoding stage to model global contextual relationships; and a lightweight Mamba-inspired gated sequence mixing module for efficient content-aware refinement without quadratic self-attention. Principal Component Analysis (PCA) is used as preprocessing to reduce redundancy and improve efficiency. Experiments on four benchmark datasets, including Houston and three UAV-borne QUH datasets (Pingan, Qingyun, and Tangdaowan), demonstrate that ConvVitMamba consistently outperforms CNN-, Transformer-, and Mamba-based methods while maintaining a favorable balance between accuracy, model size, and inference efficiency. Ablation studies confirm the complementary contributions of all components. The results indicate that the proposed framework provides an effective and efficient solution for HSI classification in diverse scenarios. The source code is publicly available at https://github.com/mqalkhatib/ConvVitMamba.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes ConvVitMamba, a hybrid architecture for hyperspectral image (HSI) classification that combines a multiscale convolutional feature extractor, Vision Transformer-based tokenization and encoding for global context, and a lightweight Mamba-inspired gated sequence mixing module. PCA is applied as preprocessing to reduce spectral redundancy. Experiments on the Houston dataset and three QUH UAV-borne datasets (Pingan, Qingyun, Tangdaowan) claim consistent outperformance over CNN, Transformer, and Mamba baselines in accuracy while achieving favorable model size and inference efficiency; ablation studies are included to show complementary component contributions, and the source code is released publicly.

Significance. If the results hold under rigorous validation, the work would contribute a practical hybrid model that balances local spectral-spatial modeling, long-range dependencies, and efficient sequence processing for HSI tasks, addressing computational limitations of pure ViT or CNN approaches. The public code release and ablation studies are clear strengths that support reproducibility and help isolate design choices.

major comments (3)

- [Experiments] Experiments section (results tables): the reported accuracy improvements lack error bars, standard deviations from multiple runs, or statistical significance tests (e.g., McNemar or paired t-tests), which is load-bearing for the central claim of 'consistent outperformance' across four datasets.

- [Method] Preprocessing and architecture description: PCA dimensionality reduction is applied upfront with no sensitivity analysis, retained-component count, or comparison to alternatives (e.g., band selection or autoencoders), leaving open whether performance gains derive primarily from the ConvVitMamba components or from the lossy preprocessing step that the skeptic note flags.

- [Experiments] Dataset and generalization discussion: all quantitative results are confined to Houston plus the three specific QUH UAV scenes; no additional public HSI benchmarks (e.g., Indian Pines, Salinas, or Pavia) are evaluated, weakening the assertion that benefits generalize to 'diverse scenarios'.
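The McNemar test named in the first major comment compares two classifiers on the same test samples using only the cases where they disagree. A minimal sketch of the exact (binomial) version follows; the function name and the boolean input format are hypothetical choices made here, and nothing in it comes from the paper's code.

```python
from math import comb


def mcnemar_exact(correct_a, correct_b):
    """Two-sided exact McNemar test on paired per-sample correctness.

    correct_a, correct_b: sequences of booleans, one entry per test sample,
    saying whether model A / model B classified that sample correctly.
    Returns (b, c, p_value), where
      b = samples A got right and B got wrong,
      c = samples A got wrong and B got right.
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both models must be scored on the same samples")
    b = sum(1 for a, o in zip(correct_a, correct_b) if a and not o)
    c = sum(1 for a, o in zip(correct_a, correct_b) if o and not a)
    n = b + c
    if n == 0:
        return b, c, 1.0  # no discordant pairs: no evidence of a difference
    k = min(b, c)
    # Under the null hypothesis each discordant pair is a fair coin flip,
    # so the smaller count follows Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return b, c, min(1.0, 2.0 * tail)
```

Samples that both models classify correctly, or both misclassify, do not enter the statistic. A large accuracy gap built on few discordant pairs can therefore still fail to reach significance.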

minor comments (2)

- [Figures] Figure captions and legends could more explicitly label the color scales and class mappings in the classification maps to aid visual interpretation.

- [Method] Notation for the gated sequence mixing module (e.g., the exact form of the Mamba-inspired state update) would benefit from a compact equation in the main text rather than only in supplementary material.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment point by point below, indicating the changes we will make to strengthen the manuscript.

Point-by-point responses

- Referee: [Experiments] Experiments section (results tables): the reported accuracy improvements lack error bars, standard deviations from multiple runs, or statistical significance tests (e.g., McNemar or paired t-tests), which is load-bearing for the central claim of 'consistent outperformance' across four datasets.

  Authors: We agree that including statistical measures will strengthen the claims of consistent outperformance. In the revised manuscript, we will rerun all experiments over 5 independent trials with different random seeds and report mean accuracy along with standard deviations in the results tables. We will also add McNemar tests comparing ConvVitMamba against each baseline to establish statistical significance of the improvements. These additions will appear in the Experiments section.

  Revision: yes

- Referee: [Method] Preprocessing and architecture description: PCA dimensionality reduction is applied upfront with no sensitivity analysis, retained-component count, or comparison to alternatives (e.g., band selection or autoencoders), leaving open whether performance gains derive primarily from the ConvVitMamba components or from the lossy preprocessing step that the skeptic note flags.

  Authors: We acknowledge the value of a more thorough PCA analysis. In the revision we will add a sensitivity study varying the number of retained principal components and report its impact on classification accuracy. We will also include a short comparison to band selection in the discussion. The existing ablation studies already isolate the contributions of the multiscale convolution, transformer, and Mamba modules after PCA; we will emphasize this point to clarify that the performance gains stem primarily from the hybrid architecture rather than preprocessing alone.

  Revision: partial

- Referee: [Experiments] Dataset and generalization discussion: all quantitative results are confined to Houston plus the three specific QUH UAV scenes; no additional public HSI benchmarks (e.g., Indian Pines, Salinas, or Pavia) are evaluated, weakening the assertion that benefits generalize to 'diverse scenarios'.

  Authors: The Houston dataset is a standard benchmark, while the three QUH UAV datasets introduce distinct high-resolution aerial scenes with varying land-cover types, thereby providing diversity in sensor platform and spatial resolution. To further support the generalization claim, we will expand the discussion section to explicitly address dataset diversity and, space permitting, add results on one additional public benchmark (e.g., Indian Pines) either in the main text or supplementary material. The publicly released code will enable straightforward evaluation on other datasets by the community.

  Revision: partial
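The multi-seed reporting promised in the first response comes down to simple bookkeeping, sketched below. `summarize_runs` and `format_result` are hypothetical helpers written for this page, not part of the released code, and no accuracy values from the paper are assumed.

```python
from statistics import mean, stdev


def summarize_runs(accuracies):
    """Return (mean, sample standard deviation) of per-run accuracies.

    accuracies: one overall-accuracy value per independent run (per seed).
    The sample standard deviation (n - 1 denominator) is used, the usual
    choice when a handful of runs estimates run-to-run variability.
    """
    if len(accuracies) < 2:
        raise ValueError("need at least two runs to estimate a standard deviation")
    return mean(accuracies), stdev(accuracies)


def format_result(accuracies, digits=2):
    """Format per-run accuracies as 'mean ± std' for a results table."""
    m, s = summarize_runs(accuracies)
    return f"{m:.{digits}f} ± {s:.{digits}f}"
```

With only five runs the standard deviation is itself a noisy estimate, so overlapping "mean ± std" intervals between two methods should be read together with a paired test rather than on their own.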

Circularity Check

No circularity: empirical architecture evaluated on benchmarks

The paper is a standard empirical ML architecture proposal. It defines a hybrid model with multiscale conv extractor, ViT tokenization/encoding, and Mamba-inspired gated mixing, applies PCA preprocessing, and reports accuracy, size, and efficiency results on four public HSI datasets plus ablations. No mathematical derivation, first-principles prediction, or fitted parameter is presented as an independent result; all claims rest on direct experimental measurement against baselines. No self-citation is used to ground a uniqueness theorem or to substitute for external validation. The derivation chain is therefore self-contained and non-circular.

Axiom & Free-Parameter Ledger

free parameters (1)

- Model architecture dimensions and training hyperparameters

axioms (2)

- Domain assumption: The four benchmark datasets are representative for evaluating generalization in HSI classification.

- Domain assumption: PCA preprocessing reduces spectral redundancy while preserving classification-relevant information.
