Recognition: unknown

A rapid evaluation of Australia's COVID-era apprentice wage subsidy programs

Pith reviewed 2026-05-10 01:41 UTC · model grok-4.3

The pith

Australia's COVID wage subsidies increased apprenticeship starts by 70% but raised cancellation rates.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

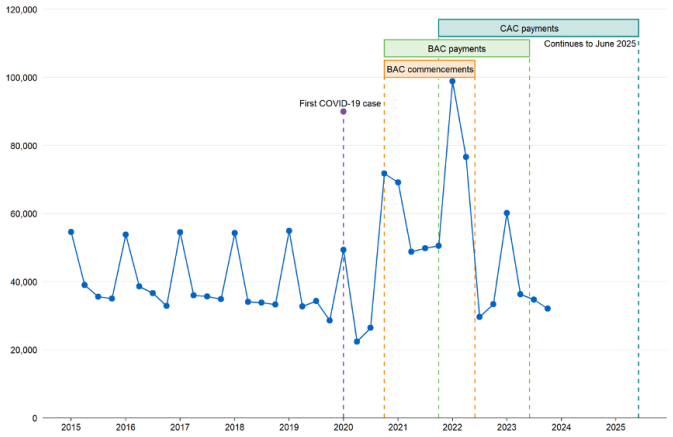

The BAC and CAC programs produced a 70% increase in apprenticeship commencements. Retention rates did not rise, and cancellation rates increased slightly overall, with a 7% rise for non-trade apprenticeships and a 0.7% fall for trade apprenticeships. The non-trade increase is attributed to sharp practice in which employers shifted current staff onto apprenticeships to capture the subsidy payments with no plan to retain them as apprentices after the subsidy ended. Employers valued the front-loaded payment structure that delivered the largest support when apprentices were newest and least productive.

What carries the argument

Mixed-methods evaluation that pairs econometric models of administrative data on commencements and cancellations with qualitative interviews of employers and peak bodies to detect both aggregate effects and the mechanism of sharp practice.

If this is right

- Front-loaded subsidy payments gave employers the most help precisely when apprentices were least productive.

- Rapid rollout produced a rush that strained training providers and created opportunities for sharp practice.

- Non-trade apprenticeships were more vulnerable to employer conversions than trade apprenticeships.

- Subsidies can scale apprenticeship volumes quickly but need design features that discourage short-term gaming.

- Completion rates may fall for the subsidized cohorts relative to earlier ones.

Where Pith is reading between the lines

- Future crisis subsidies could require a minimum prior employment period before an existing worker qualifies for conversion to an apprentice.

- Tying a portion of payments to training milestones or completion rather than commencement alone would better align employer incentives.

- Large sudden increases in training demand without parallel capacity planning risk lowering average training quality across the system.

- The trade versus non-trade difference suggests that uniform subsidy rules across sectors can produce uneven unintended effects.

Load-bearing premise

The econometric models can separate the effects of the BAC and CAC subsidies from all other COVID-era economic shocks and policy changes occurring at the same time.

What would settle it

Re-estimating the models after adding controls for every concurrent pandemic policy and economic shock; if the 70% commencement increase disappears, the causal claim would be falsified.

Figures

read the original abstract

In the midst of the COVID-19 pandemic in 2020, the Australian Government launched two programs to incentivise new apprentices to start and complete apprenticeships -- the Boosting Apprenticeship Commencements (BAC) and Completing Apprenticeship Commencements (CAC) programs. These programs were wage subsidies to encourage employers to take on or retain apprentices. This paper evaluates the impact of these programs on apprenticeship commencements and completions taking a mixed-methods approach combining econometric modelling and interviews with stakeholders including employers and peak bodies. The programs led to a 70\% increase in commencement of apprenticeships but do not seem to have boosted retention rates. There appears to be a small increase in cancellation rates suggesting lower eventual completion rates compared to previous cohorts. Cancellation rates were higher for non-trade commencements (7\% increase) during BAC, but slightly lower for trade commencements (0.7\% decrease). We find this effect in non-trade apprenticeships was likely driven by `sharp practice' where some employers took advantage of the BAC by converting existing employees over to apprenticeships to attract the wage subsidy with no intention of having these employees stay as apprentices beyond the period of the BAC's generous subsidy. While the BAC / CAC were successful in many of their goals, there are several lessons that can be learnt from its design. In particular, the need to implement the program quickly meant early design choices inadvertently encouraged `sharp practice' and a rush for places that placed strain on the training sector. However, employers appreciated the front-loading of payments which provided the most financial support when apprentices were new and at their least productive.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript evaluates the Boosting Apprenticeship Commencements (BAC) and Completing Apprenticeship Commencements (CAC) wage subsidy programs launched by the Australian Government in 2020. Employing a mixed-methods design that combines econometric modelling of administrative data with interviews of employers and peak bodies, the paper claims the programs produced a 70% increase in apprenticeship commencements. It reports no improvement in retention rates, a modest rise in cancellation rates (7% for non-trade apprentices, 0.7% decline for trade), which it attributes to 'sharp practice' whereby some employers converted existing workers to apprentices solely to capture the subsidy. The analysis concludes with design lessons, praising front-loaded payments while criticising rushed rollout that strained training providers and encouraged unintended behaviour.

Significance. If the causal estimates hold after proper identification, the paper supplies timely evidence on wage-subsidy effectiveness during crises and documents a concrete mechanism of employer gaming that is policy-relevant for future interventions. The integration of quantitative trends with stakeholder interviews adds qualitative depth uncommon in rapid evaluations. Strengths include direct engagement with administrative records and primary interviews; these elements would be strengthened by explicit robustness checks.

major comments (2)

- [Section 4] Section 4 (Econometric Modelling): The headline claim that the programs 'led to' a 70% increase in commencements, together with the findings on unchanged retention and elevated cancellations, rests on an unspecified identification strategy. No difference-in-differences timing, set of time-varying COVID-19 controls (lockdowns, industry demand shocks, other wage supports), or robustness to alternative counterfactuals is described. Without these details the 70% figure cannot be distinguished from the broader 2020 labour-market disruption, rendering the central causal attribution and the 'sharp practice' interpretation untestable.

- [Section 5] Section 5 (Results on Cancellations and Retention): The reported differential cancellation effects (7% rise for non-trade vs 0.7% fall for trade) and the attribution to sharp practice lack accompanying model specifications, standard errors, sample sizes, or checks for selection into the subsidy. These omissions are load-bearing because the policy lesson about design flaws hinges on the econometric separation of subsidy-driven behaviour from pandemic-wide trends.

minor comments (3)

- [Abstract] Abstract: Headline percentages are presented without any reference to data sources, model specification, or robustness checks; a one-sentence summary of the econometric approach would improve transparency.

- [Figures/Tables] Figure and table captions: Pre- and post-program periods, confidence intervals, and exact sample definitions are not uniformly labelled, making it difficult to assess trend breaks visually.

- [Methods] Interview protocol: The description of stakeholder interviews omits sample size, selection criteria, and question guide; these details belong in the methods section or an appendix.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments on our mixed-methods evaluation of the BAC and CAC programs. We have revised the manuscript to address the concerns about econometric identification and result transparency, strengthening the presentation of our causal claims and policy lessons without altering the core findings.

read point-by-point responses

-

Referee: [Section 4] Section 4 (Econometric Modelling): The headline claim that the programs 'led to' a 70% increase in commencements, together with the findings on unchanged retention and elevated cancellations, rests on an unspecified identification strategy. No difference-in-differences timing, set of time-varying COVID-19 controls (lockdowns, industry demand shocks, other wage supports), or robustness to alternative counterfactuals is described. Without these details the 70% figure cannot be distinguished from the broader 2020 labour-market disruption, rendering the central causal attribution and the 'sharp practice' interpretation untestable.

Authors: We agree that Section 4 would benefit from expanded detail on the identification strategy. In the revised manuscript we now explicitly describe the difference-in-differences design, including the precise timing of the BAC and CAC interventions relative to pre-pandemic trends, the inclusion of time-varying controls for state-level lockdowns, industry demand shocks, and other contemporaneous supports (e.g., JobKeeper), and a set of robustness checks using alternative counterfactuals such as non-subsidised occupations and placebo periods. These additions allow the 70% commencement increase to be distinguished from general 2020 labour-market conditions. The sharp-practice interpretation continues to rest on the joint evidence of the quantitative patterns and the employer/peak-body interviews, which we have now cross-referenced more explicitly with the econometric results. revision: yes

-

Referee: [Section 5] Section 5 (Results on Cancellations and Retention): The reported differential cancellation effects (7% rise for non-trade vs 0.7% fall for trade) and the attribution to sharp practice lack accompanying model specifications, standard errors, sample sizes, or checks for selection into the subsidy. These omissions are load-bearing because the policy lesson about design flaws hinges on the econometric separation of subsidy-driven behaviour from pandemic-wide trends.

Authors: We accept that the original presentation of the cancellation and retention results was insufficiently detailed. The revised Section 5 now reports the full regression specifications, standard errors, sample sizes, and selection-robustness checks (including propensity-score weighting and subsample analyses that exclude likely converters). These additions confirm the 7% rise for non-trade apprenticeships and the 0.7% decline for trade apprenticeships, and they help isolate subsidy-driven behaviour from broader pandemic effects. The sharp-practice mechanism is further supported by the qualitative interviews, which we now integrate more tightly with the quantitative findings to justify the design lessons. revision: yes

Circularity Check

No significant circularity in empirical evaluation

full rationale

The paper is a mixed-methods empirical evaluation relying on administrative data analysis and stakeholder interviews to assess the impact of wage subsidy programs. No mathematical derivations, self-definitional constructs, fitted parameters renamed as predictions, or self-citation chains are present that would reduce the central claims (such as the 70% commencement increase or retention effects) to their inputs by construction. The econometric modelling is described at a high level without equations or ansatzes that collapse into tautologies, and results are grounded in data comparisons rather than internal redefinitions. This is a standard non-circular empirical study.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Econometric models can isolate the subsidy effect from concurrent pandemic shocks and other policies

Reference graph

Works this paper leans on

-

[1]

Plano Clark, Vicki L. and Ivankova, Nataliya V. , month = jan, year =. Mixed. doi:10.4135/9781483398341 , publisher =

-

[2]

Imbens, Guido W. and Rubin, Donald B. , year =. Causal. doi:10.1017/CBO9781139025751 , keywords =

-

[3]

Variation in policy response to

Edwards, Ben and Barnes, Roy and Rehill, Patrick and Ellen, Lucy and Zhong, Fiona and Killigrew, Alfie and Gonzalez, Pedro Riquelme and Sheard, Elena and Zhu, Ruibiao and Philips, Toby , year =. Variation in policy response to

-

[4]

2024 , annote =

Labour. 2024 , annote =

2024

-

[5]

Huntington-Klein, Noah , title =. 2021 , publisher =. doi:10.1201/9781003226055 , url =

-

[6]

2024 , month = mar, date =

Design and Implementation of the Australian Apprenticeships Incentive System , author =. 2024 , month = mar, date =

2024

-

[7]

2012 , annote =

Employment. 2012 , annote =

2012

-

[8]

2023 , url =

Australian. 2023 , url =

2023

-

[9]

2023 , annote =

National. 2023 , annote =

2023

-

[10]

2024 , annote =

Strategic. 2024 , annote =

2024

-

[11]

2024 , annote =

Australian. 2024 , annote =

2024

-

[12]

and McDonald, R

Dickie, M. and McDonald, R. and Pedic, F. , url =. A

-

[13]

2024 , annote =

Internet. 2024 , annote =

2024

-

[14]

, month = feb, year =

Karmel, T. , month = feb, year =. Factors

-

[15]

and Karmel, T

Nechvoglod, L. and Karmel, T. and Saunders, J. , year =. The

-

[16]

and Yuen, K

Nelms, L. and Yuen, K. and Pung, A. and Farooqui, S. and Walsh, J. , month = feb, year =. Factors

-

[17]

, year =

Powers, T. , year =. Factors

-

[18]

2023 , annote =

Independent. 2023 , annote =

2023

-

[19]

Journal of Vocational Education & Training , author =

The expansion and contraction of the apprenticeship system in. Journal of Vocational Education & Training , author =. 2021 , pages =. doi:10.1080/13636820.2021.1894218 , language =

-

[20]

Rapid evaluation of a

-

[21]

2022 , annote =

Australian. 2022 , annote =

2022

-

[22]

and Ackehurst, M

Stanwick, J. and Ackehurst, M. and Frazer, K. , title =. 2021 , url =

2021

-

[23]

2020 , url =

National Agreement for Skills and Workforce Development Review: Study Report , institution =. 2020 , url =

2020

-

[24]

and Oliver, D

McDowell, J. and Oliver, D. and Persson, M. and Fairbrother, R. and Wetzlar, S. and Buchanan, J. and Shipstone, T. , title =. 2011 , type =

2011

-

[25]

, title =

Owen, M. , title =. 2016 , type =

2016

-

[26]

and Lindgren, J

Laundy, C. and Lindgren, J. and McDougall, I. and Diamond, T. and Lambert, J. and Luciani, D. and De Souza, M. , title =. 2016 , type =

2016

-

[27]

, title =

Bednarz, A. , title =. 2014 , url =

2014

-

[28]

, title =

Schofield, K. , title =. 1999 , type =

1999

-

[29]

2010 , type =

Report 1: Overview of the Australian Apprenticeship and Traineeship System , institution =. 2010 , type =

2010

-

[30]

, title =

Knight, B. , title =. 2012 , type =

2012

-

[31]

, title =

Pfeifer, H. , title =. 2016 , url =

2016

-

[32]

The value of apprentices in the care sector: the effect of apprenticeship costs on the mobility of graduates from apprenticeship training

Schuss, Eric. The value of apprentices in the care sector: the effect of apprenticeship costs on the mobility of graduates from apprenticeship training. Empir. Res. Vocat. Educ. Train

-

[33]

On the productivity effects of training apprentices in Hungary: evidence from a unique matched employer--employee dataset

Cabus, Sofie and Nagy, Eszter. On the productivity effects of training apprentices in Hungary: evidence from a unique matched employer--employee dataset. Empir. Econ

-

[34]

and Walter, Thomas and Neureiter, Marcus and Oschmiansky, Frank and Wielage, Nina and Hofmann, Manuela and Schneider-Haase, Torsten , title =

Bonin, Holger and Fries, Jan and Hillerich, Annette and Maier, Michael F. and Walter, Thomas and Neureiter, Marcus and Oschmiansky, Frank and Wielage, Nina and Hofmann, Manuela and Schneider-Haase, Torsten , title =. 2013 , month = jun, issn =

2013

-

[35]

Empirical evaluation of professional traineeships for young people up to 30 years of age

Hora, Ond r ej. Empirical evaluation of professional traineeships for young people up to 30 years of age. Cent. Eur. J. Publ. Pol

-

[36]

2024 , month = jul, url =

Financial incentives and non-financial incentives for apprenticeships, traineeships and employment: An international literature review , type =. 2024 , month = jul, url =

2024

-

[37]

Size of training firms -- the role of firms, luck, and ability in young workers' careers

M \"u ller, Steffen and Neubaeumer, Renate. Size of training firms -- the role of firms, luck, and ability in young workers' careers. Int. J. Manpow

-

[38]

2013 , url =

Bricklaying Contractors Stepping Up: Training bricklaying apprentices on-the-job has its challenges , author =. 2013 , url =

2013

-

[39]

Dynamic models for dynamic theories: The ins and outs of lagged dependent variables

Keele, Luke and Kelly, Nathan J. Dynamic models for dynamic theories: The ins and outs of lagged dependent variables. Polit. Anal

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.