Daydreaming algorithm for Biased Patterns

Pith reviewed 2026-05-10 01:37 UTC · model grok-4.3

The pith

Centered Daydreaming for biased patterns creates larger basins of attraction than the centered pseudo-inverse rule

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

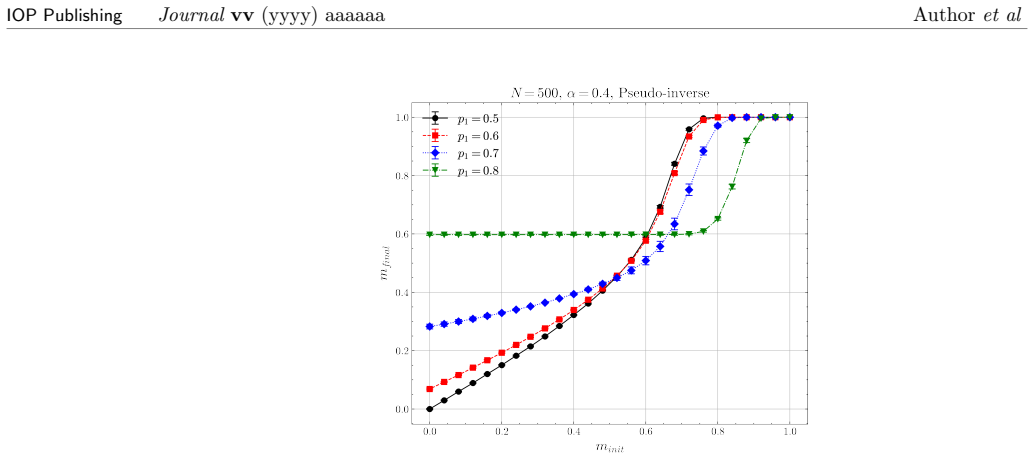

We reformulate Daydreaming for biased patterns by starting from the underlying rationale of the pseudo-inverse rule. Specifically, we introduce the retrieval dynamics and an energy function based on the centered representation, and we derive a corresponding update rule for centered Daydreaming. We compare the centered pseudo-inverse rule with centered Daydreaming for biased patterns and examine the retrieval maps and eigenvalue distributions of the coupling matrices. Our results confirm that centered Daydreaming yields a larger basin of attraction than the centered pseudo-inverse rule. Moreover, although both approaches aim to stabilize the stored patterns as fixed points, our results show a […]

What carries the argument

The centered Daydreaming update rule derived by applying the pseudo-inverse rationale to the centered representation of the Hopfield model for biased patterns.
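For orientation, the pseudo-inverse rationale referred to here can be written out as follows. The first two displays are the standard (uncentered) pseudo-inverse rule and its defining property; the last display is only an assumed centered analogue, with the symbols $a$, $\eta^\mu$, $\tilde C$ and $\tilde J$ introduced for illustration. The paper's own definitions may differ.

```latex
% Standard pseudo-inverse rule for P linearly independent patterns
% \xi^\mu \in \{-1,+1\}^N
C_{\mu\nu} = \frac{1}{N}\sum_{i=1}^{N} \xi_i^\mu \xi_i^\nu ,
\qquad
J_{ij} = \frac{1}{N}\sum_{\mu,\nu=1}^{P} \xi_i^\mu \,(C^{-1})_{\mu\nu}\, \xi_j^\nu .

% Rationale: every stored pattern is an eigenvector of J with eigenvalue 1
\sum_{j=1}^{N} J_{ij}\,\xi_j^\rho
  = \sum_{\mu,\nu=1}^{P} \xi_i^\mu \,(C^{-1})_{\mu\nu}\, C_{\nu\rho}
  = \xi_i^\rho .

% Assumed centered analogue (illustrative): subtract the mean activity a
\eta_i^\mu = \xi_i^\mu - a ,
\qquad
\tilde C_{\mu\nu} = \frac{1}{N}\sum_{i=1}^{N} \eta_i^\mu \eta_i^\nu ,
\qquad
\tilde J_{ij} = \frac{1}{N}\sum_{\mu,\nu=1}^{P} \eta_i^\mu \,(\tilde C^{-1})_{\mu\nu}\, \eta_j^\nu ,
\qquad
\sum_{j=1}^{N} \tilde J_{ij}\,\eta_j^\rho = \eta_i^\rho .
```

Under this reading, both the centered pseudo-inverse rule and centered Daydreaming would aim at the same fixed-point condition on the centered patterns, and differ in how the rest of the coupling matrix is shaped.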

Load-bearing premise

The reformulation assumes that the underlying rationale of the pseudo-inverse rule, when applied to the centered representation, directly produces an effective Daydreaming update rule that improves basins without introducing new instabilities for biased patterns.

What would settle it

A direct numerical comparison of basin sizes for a set of biased patterns that finds no enlargement or added instabilities under the centered Daydreaming rule would falsify the central result.
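A minimal sketch of how such a basin comparison could be set up is given below. It flips a chosen number of spins in a stored pattern, runs zero-temperature dynamics, and records the final overlap. The learning rule used here is a centered Hebbian (covariance) rule acting as a stand-in, since the page does not reproduce the paper's centered pseudo-inverse or centered Daydreaming formulas; the function names, the synchronous update, and the form of the local field are all assumptions made for illustration.

```python
import random

def sign(x):
    """Sign function with ties broken toward +1."""
    return 1 if x >= 0 else -1

def covariance_couplings(patterns, a):
    """Stand-in rule (centered Hebbian), NOT the paper's rule; zero diagonal."""
    n = len(patterns[0])
    J = [[0.0] * n for _ in range(n)]
    for xi in patterns:
        eta = [x - a for x in xi]  # centered pattern
        for i in range(n):
            for j in range(n):
                if i != j:
                    J[i][j] += eta[i] * eta[j] / n
    return J

def retrieve(J, state, a, max_sweeps=50):
    """Synchronous zero-temperature dynamics with an assumed centered field.

    Synchronous updates can cycle, so the loop is bounded by max_sweeps.
    """
    s = list(state)
    for _ in range(max_sweeps):
        centered = [x - a for x in s]
        new = [sign(sum(w * c for w, c in zip(row, centered))) for row in J]
        if new == s:
            break
        s = new
    return s

def overlap(u, v):
    """Normalized overlap between two spin configurations."""
    return sum(x * y for x, y in zip(u, v)) / len(u)

def retrieval_curve(J, pattern, a, flip_counts, trials, rng):
    """Mean final overlap with `pattern` after flipping k random spins."""
    n = len(pattern)
    curve = []
    for k in flip_counts:
        total = 0.0
        for _ in range(trials):
            s = list(pattern)
            for idx in rng.sample(range(n), k):
                s[idx] = -s[idx]
            total += overlap(retrieve(J, s, a), pattern)
        curve.append(total / trials)
    return curve

# Toy usage: one biased pattern (8 of 10 spins are +1), hypothetical values.
xi = [1] * 8 + [-1] * 2
a = sum(xi) / len(xi)
J = covariance_couplings([xi], a)
print(retrieval_curve(J, xi, a, flip_counts=[0, 1, 2], trials=20, rng=random.Random(0)))
```

Swapping `covariance_couplings` for each of the two rules under comparison, while keeping the patterns, the corruption levels, and the random seed fixed, would give curves whose decay with the number of flipped spins is a direct proxy for basin size.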

Original abstract

The Daydreaming algorithm was proposed as a learning rule that simultaneously reinforces stored patterns and suppresses spurious attractors to improve the storage capacity of the Hopfield model. Its effectiveness has been reported for both uncorrelated and correlated data. However, the existing formulation has mainly assumed unbiased patterns, and the formulation for biased patterns has not yet been sufficiently established. Biased patterns are known to be much more problematic for models of associative memories. In this study, we reformulate Daydreaming for biased patterns by starting from the underlying rationale of the pseudo-inverse rule. Specifically, we introduce the retrieval dynamics and an energy function based on the centered representation, and we derive a corresponding update rule for centered Daydreaming. We compare the centered pseudo-inverse rule with centered Daydreaming for biased patterns and examine the retrieval maps and eigenvalue distributions of the coupling matrices. Our results confirm that centered Daydreaming yields a larger basin of attraction than the centered pseudo-inverse rule. Moreover, as in previous studies, although both approaches aim to stabilize the stored patterns as fixed points, our results suggest that they shape the energy landscape through different mechanisms.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript reformulates the Daydreaming algorithm for biased patterns in Hopfield associative memories. It starts from the rationale of the centered pseudo-inverse rule, introduces retrieval dynamics and an energy function in the centered representation, derives the corresponding centered Daydreaming update rule, and compares the two approaches for biased patterns via retrieval maps and eigenvalue distributions of the coupling matrices. The central claim is that centered Daydreaming produces larger basins of attraction than the centered pseudo-inverse rule while shaping the energy landscape through distinct mechanisms, without introducing new instabilities.

Significance. If the numerical comparisons hold, the work extends Daydreaming dynamics to biased patterns, which are known to be more difficult for associative memory models than unbiased ones. The explicit derivation from the pseudo-inverse rationale and the reported improvement in basin size provide a concrete extension that could aid handling of correlated data in neural network memory models. The suggestion of different landscape-shaping mechanisms is a useful distinction from prior studies.

major comments (2)

- [Numerical results] Numerical results section: the claims that centered Daydreaming yields larger basins rest on retrieval maps and eigenvalue spectra, but the manuscript provides insufficient detail on simulation parameters (e.g., pattern dimension N, bias level p, number of realizations, or error bars), making it difficult to assess statistical robustness or reproducibility of the reported improvement over the centered pseudo-inverse rule.

- [Derivation] Derivation of the update rule: while the reformulation begins from the pseudo-inverse rationale applied to the centered representation, the step-by-step mapping to the Daydreaming dynamics (including how the energy function is constructed and why it avoids new instabilities for biased patterns) would benefit from explicit intermediate equations to allow independent verification of the absence of circularity or hidden assumptions.
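On the first major comment, the kind of reporting being asked for can be sketched as below: each point of a retrieval map is summarized by its mean over independent realizations together with a standard error. The function names and the dictionary layout are hypothetical, and no values from the paper are used.

```python
import math
import statistics

def mean_and_sem(samples):
    """Return (mean, standard error of the mean) over per-realization values."""
    if len(samples) < 2:
        raise ValueError("need at least two realizations for an error bar")
    mean = statistics.fmean(samples)
    sem = statistics.stdev(samples) / math.sqrt(len(samples))
    return mean, sem

def summarize_retrieval_map(results):
    """Map each setting to (mean, sem).

    `results` is assumed to map a setting, for example a tuple of
    (N, p, initial overlap), to a list of final overlaps, one per realization.
    """
    return {setting: mean_and_sem(values) for setting, values in results.items()}
```

Reporting the settings as explicit keys (N, p, and the number of realizations via the list length) alongside the error bars would address the reproducibility concern directly.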

minor comments (2)

- [Abstract] The abstract states that results 'confirm' larger basins but does not specify the range of bias values or network sizes tested; adding this would improve clarity.

- [Methods] Notation for the centered representation and the derived update rule should be introduced with a clear table or list of symbols to aid readers unfamiliar with the prior pseudo-inverse work.

Simulated Author's Rebuttal

We thank the referee for the positive summary and for the constructive comments that help improve the clarity and reproducibility of the manuscript. We address each major comment below.

- Referee: [Numerical results] Numerical results section: the claims that centered Daydreaming yields larger basins rest on retrieval maps and eigenvalue spectra, but the manuscript provides insufficient detail on simulation parameters (e.g., pattern dimension N, bias level p, number of realizations, or error bars), making it difficult to assess statistical robustness or reproducibility of the reported improvement over the centered pseudo-inverse rule.

  Authors: We agree that the original manuscript did not provide sufficient detail on the numerical parameters. In the revised version we now explicitly state the pattern dimension N, the bias level p, the number of independent realizations, and we have added error bars to the retrieval maps and eigenvalue spectra. These additions allow readers to assess the statistical robustness and reproducibility of the reported improvement in basin size.

  Revision: yes

- Referee: [Derivation] Derivation of the update rule: while the reformulation begins from the pseudo-inverse rationale applied to the centered representation, the step-by-step mapping to the Daydreaming dynamics (including how the energy function is constructed and why it avoids new instabilities for biased patterns) would benefit from explicit intermediate equations to allow independent verification of the absence of circularity or hidden assumptions.

  Authors: The original derivation begins from the centered pseudo-inverse rationale, introduces the retrieval dynamics and energy function in the centered representation, and arrives at the Daydreaming update rule. To facilitate independent verification, we have inserted the requested intermediate equations in the revised manuscript. These equations make the construction of the energy function and the mapping to the update rule fully explicit, confirming the absence of circular reasoning or hidden assumptions and that no new instabilities arise for biased patterns.

  Revision: yes

Circularity Check

No significant circularity; derivation is an explicit reformulation with independent empirical validation

The paper derives the centered Daydreaming update rule by applying the known rationale of the centered pseudo-inverse rule to the centered representation and energy function for biased patterns. This is a direct mathematical extension, not a self-definition or fitted-input renaming. The central claims (larger basins of attraction, distinct landscape-shaping mechanisms) are established via explicit comparisons of retrieval maps and eigenvalue spectra of the coupling matrices, which constitute independent numerical evidence rather than a reduction to the derivation inputs. No self-citation is load-bearing for the uniqueness or validity of the result, and no step equates a prediction to its own fitted parameters or prior ansatz by construction. The chain remains self-contained.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: a Hopfield network stores patterns as fixed-point attractors in an energy landscape.

- Domain assumption: centering removes bias by subtracting the mean pattern value.
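The centering assumption can be illustrated with a short sketch. It assumes spins in {-1, +1} with P(spin = +1) = p, so that the mean activity is a = 2p - 1; this convention, the function names, and the parameter values are assumptions for illustration and may not match the paper's. For independent biased patterns the raw overlap is expected to sit near a**2 rather than 0, which is one way to see why bias is troublesome for associative memories, while the centered overlap is expected to sit near 0.

```python
import random

def sample_biased_pattern(n, p, rng):
    """Draw n spins in {-1, +1} with P(spin = +1) = p (assumed convention)."""
    return [1 if rng.random() < p else -1 for _ in range(n)]

def center(pattern, a):
    """Subtract the mean activity a from every spin."""
    return [x - a for x in pattern]

def overlap(u, v):
    """Normalized inner product of two configurations."""
    return sum(x * y for x, y in zip(u, v)) / len(u)

p = 0.8                      # hypothetical bias level
a = 2 * p - 1                # mean of a {-1, +1} spin with P(+1) = p
rng = random.Random(0)
xi1 = sample_biased_pattern(2000, p, rng)
xi2 = sample_biased_pattern(2000, p, rng)

raw = overlap(xi1, xi2)                              # expected near a**2
centered = overlap(center(xi1, a), center(xi2, a))   # expected near 0
print(f"raw overlap {raw:.3f}, centered overlap {centered:.3f}")
```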

