Recognition: unknown

Improvements to the post-processing of weather forecasts using machine learning and feature selection

Pith reviewed 2026-05-10 01:16 UTC · model grok-4.3

The pith

LightGBM-based models with feature selection achieve lower RMSE than neural network baselines and JMA's MSMG in weather forecast post-processing.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

In the experimental setting of this study, LightGBM-based models achieved lower RMSE than the specific neural-network baselines tested, including a reproduced CNN baseline, and also generally achieved lower RMSE than both the raw MSM forecasts and the JMA post-processing product, MSM Guidance (MSMG), across many locations and forecast lead times. For precipitation, Tweedie-based loss functions and event-weighted training strategies improved event-oriented performance relative to the original LightGBM model, especially at higher rainfall thresholds, although the gains were site dependent and overall performance remained slightly below MSMG.

What carries the argument

LightGBM gradient boosting with correlation analysis for selecting input features from surrounding MSM grid points, combined with Tweedie loss for precipitation.

If this is right

- Feature selection reduces the dimensionality of inputs from surrounding points while maintaining or improving model performance.

- LightGBM offers a computationally efficient alternative to neural networks for this post-processing task.

- Tweedie loss and event weighting enhance the model's ability to predict significant precipitation events.

- The approach shows promise for operational use in diverse geographical settings like plains, mountains, and islands.

- Improvements are observed for multiple variables including temperature and wind speed in addition to precipitation.

Where Pith is reading between the lines

- Applying the same feature selection and model choice to other national weather services' models could yield similar gains.

- Future work might explore combining these ML post-processors with ensemble forecasts for uncertainty quantification.

- The site-dependent results indicate that models may need retraining or adaptation for new locations.

- These methods could potentially be extended to longer lead times or other forecast variables not tested here.

Load-bearing premise

That the advantages of LightGBM and feature selection will hold outside the 18 specific locations and the particular dataset period examined in the study.

What would settle it

Evaluating the LightGBM models on data from additional locations or a different time period and finding higher RMSE than MSMG at most sites would challenge the central claim.

Figures

read the original abstract

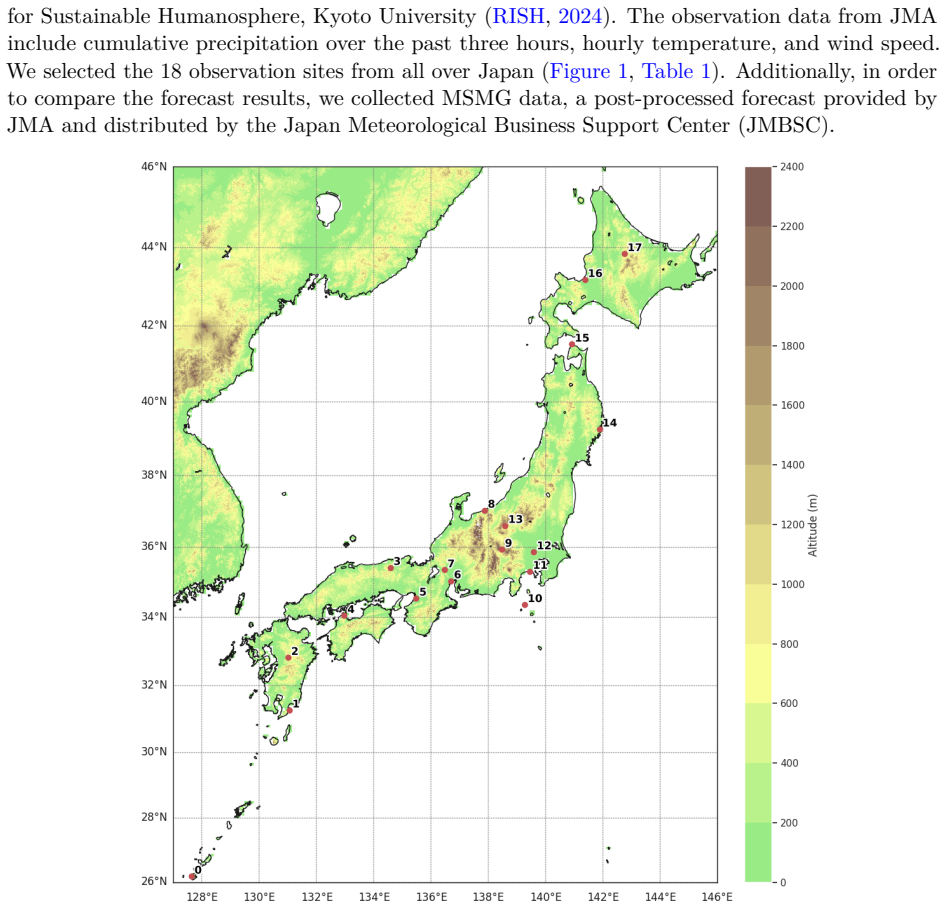

This study aims to develop and improve machine learning-based post-processing models for precipitation, temperature, and wind speed predictions using the Mesoscale Model (MSM) dataset provided by the Japan Meteorological Agency (JMA) for 18 locations across Japan, including plains, mountainous regions, and islands. By incorporating meteorological variables from grid points surrounding the target locations as input features and applying feature selection based on correlation analysis, we found that, in our experimental setting, the LightGBM-based models achieved lower RMSE than the specific neural-network baselines tested in this study, including a reproduced CNN baseline, and also generally achieved lower RMSE than both the raw MSM forecasts and the JMA post-processing product, MSM Guidance (MSMG), across many locations and forecast lead times. Because precipitation has a highly skewed distribution with many zero cases, we additionally examined Tweedie-based loss functions and event-weighted training strategies for precipitation forecasting. These improved event-oriented performance relative to the original LightGBM model, especially at higher rainfall thresholds, although the gains were site dependent and overall performance remained slightly below MSMG.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. This paper develops machine learning post-processing models for JMA MSM forecasts of precipitation, temperature, and wind speed at 18 Japanese locations. Using correlation-based feature selection on surrounding grid-point variables, it reports that LightGBM models achieve lower RMSE than raw MSM, the MSMG operational product, and a reproduced CNN baseline across many sites and lead times; for precipitation, Tweedie loss and event-weighted training further improve performance at higher thresholds, though gains remain site-dependent and overall results are still slightly below MSMG.

Significance. If the empirical gains hold under broader testing, the work would demonstrate a practical, low-compute alternative to neural networks for operational post-processing, with feature selection providing dimensionality reduction and Tweedie weighting addressing the zero-inflated nature of precipitation. The direct comparison to an existing JMA product (MSMG) adds operational relevance.

major comments (3)

- [Results] Results section: RMSE comparisons to baselines (raw MSM, MSMG, reproduced CNN) are presented without error bars, confidence intervals, or statistical significance tests (e.g., paired t-tests or Diebold-Mariano tests), so it is unclear whether reported improvements exceed sampling variability.

- [Experimental setup] Experimental setup and evaluation: All results use a single fixed train/test split on one MSM dataset period at 18 specific sites; no temporal hold-out (future years), spatial cross-validation, or leave-one-region-out analysis is reported, directly limiting the generalization claim given the noted site-dependence of precipitation gains.

- [Precipitation forecasting] Precipitation subsection: While Tweedie loss and event weighting improve event-oriented metrics at higher thresholds, the text states that overall performance remains slightly below MSMG and that gains are site-dependent; this undercuts the headline claim of general improvement and requires quantification of how many of the 18 sites actually benefit.

minor comments (2)

- [Abstract and Methods] Abstract and methods: Hyperparameter search details, exact feature-selection thresholds, and full ablation results (e.g., LightGBM with vs. without correlation selection) are not provided, hindering reproducibility.

- [Results] Figures and tables: Several result tables would benefit from explicit indication of which lead times and variables show statistically or practically meaningful gains versus the baselines.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive review of our manuscript. We have addressed each of the major comments below and will incorporate the necessary revisions to strengthen the presentation of our results and clarify the limitations.

read point-by-point responses

-

Referee: [Results] Results section: RMSE comparisons to baselines (raw MSM, MSMG, reproduced CNN) are presented without error bars, confidence intervals, or statistical significance tests (e.g., paired t-tests or Diebold-Mariano tests), so it is unclear whether reported improvements exceed sampling variability.

Authors: We agree that including measures of statistical significance would improve the robustness of our claims. In the revised manuscript, we will add bootstrap-derived confidence intervals to the RMSE comparisons and conduct paired statistical tests (such as the Diebold-Mariano test for forecast accuracy) to determine whether the observed improvements are statistically significant. revision: yes

-

Referee: [Experimental setup] Experimental setup and evaluation: All results use a single fixed train/test split on one MSM dataset period at 18 specific sites; no temporal hold-out (future years), spatial cross-validation, or leave-one-region-out analysis is reported, directly limiting the generalization claim given the noted site-dependence of precipitation gains.

Authors: The use of a single split was chosen to reflect a realistic operational scenario with the available data. However, we recognize this limits strong generalization claims. In the revision, we will include a leave-one-site-out cross-validation analysis across the 18 locations to better assess spatial robustness. We will also add an explicit discussion of the limitations regarding temporal generalization, noting that extending to future years would require additional data. revision: partial

-

Referee: [Precipitation forecasting] Precipitation subsection: While Tweedie loss and event weighting improve event-oriented metrics at higher thresholds, the text states that overall performance remains slightly below MSMG and that gains are site-dependent; this undercuts the headline claim of general improvement and requires quantification of how many of the 18 sites actually benefit.

Authors: We will revise the precipitation results section to provide a clear quantification of the number of sites where the proposed models outperform MSMG in RMSE. This will include a breakdown by variable and lead time, helping to contextualize the 'many locations' claim and the site-dependent nature of the improvements for precipitation. revision: yes

Circularity Check

No circularity: empirical ML comparisons rest on external baselines and held-out evaluation

full rationale

The paper reports RMSE results from training LightGBM models (with correlation-based feature selection and optional Tweedie loss) on MSM data at 18 fixed Japanese sites and evaluating against raw MSM forecasts, the JMA MSMG product, and a reproduced CNN baseline. No equations, uniqueness theorems, or self-citations are invoked to derive performance claims; the reported improvements are direct numerical comparisons on the chosen train/test split. Feature selection and loss weighting are standard preprocessing choices whose effects are measured on independent test data rather than being tautological. The derivation chain is therefore self-contained against the external benchmarks and does not reduce to its own fitted inputs.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Optuna: A next-generation hyperparameter optimization framework

Takuya Akiba, Shotaro Sano, Toshihiko Yanase, Takeru Ohta, and Masanori Koyama. Optuna: A next-generation hyperparameter optimization framework. In Proceedings of the 25th ACM SIGKDD international conference on knowledge discovery & data mining, pages 2623--2631, 2019

2019

-

[2]

The quiet revolution of numerical weather prediction

Peter Bauer, Alan Thorpe, and Gilbert Brunet. The quiet revolution of numerical weather prediction. Nature, 525 0 (7567): 0 47--55, 2015

2015

-

[3]

Attribute selection based on correlation analysis

Jatin Bedi and Durga Toshniwal. Attribute selection based on correlation analysis. In Advances in big data and cloud computing, pages 51--61. Springer, 2018

2018

-

[4]

A survey on feature selection methods

Girish Chandrashekar and Ferat Sahin. A survey on feature selection methods. Computers & electrical engineering, 40 0 (1): 0 16--28, 2014

2014

-

[5]

Dunn and Gordon K

Peter K. Dunn and Gordon K. Smyth. Series evaluation of tweedie exponential dispersion model densities. Statistics and Computing, 15 0 (4): 0 267--280, 2005

2005

-

[6]

Why do tree-based models still outperform deep learning on typical tabular data? Advances in neural information processing systems, 35: 0 507--520, 2022

L \'e o Grinsztajn, Edouard Oyallon, and Ga \"e l Varoquaux. Why do tree-based models still outperform deep learning on typical tabular data? Advances in neural information processing systems, 35: 0 507--520, 2022

2022

-

[7]

Operational machine learning post-processing of short-range temperature, humidity, wind speed and gust forecasts

Leila Hieta and Mikko Partio. Operational machine learning post-processing of short-range temperature, humidity, wind speed and gust forecasts. Meteorological Applications, 32: 0 e70074, 2025

2025

-

[8]

Interpolation of mountain weather forecasts by machine learning

Kazuma Iwase and Tomoyuki Takenawa. Interpolation of mountain weather forecasts by machine learning. Journal of Information Processing, 32: 0 873--880, 2024

2024

-

[9]

Outline of the operational numerical weather prediction at the japan meteorological agency

JMA. Outline of the operational numerical weather prediction at the japan meteorological agency. https://www.jma.go.jp/jma/jma-eng/jma-center/nwp/outline2024-nwp/index.htm, 2024. (Accessed: 22 September 2024)

2024

-

[10]

The Theory of Dispersion Models

Bent J rgensen. The Theory of Dispersion Models. Chapman & Hall, 1997

1997

-

[11]

Lightgbm: A highly efficient gradient boosting decision tree

Guolin Ke, Qi Meng, Thomas Finley, Taifeng Wang, Wei Chen, Weidong Ma, Qiwei Ye, and Tie-Yan Liu. Lightgbm: A highly efficient gradient boosting decision tree. Advances in neural information processing systems, 30, 2017

2017

-

[12]

Adam: A Method for Stochastic Optimization

Diederik P Kingma. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014

work page internal anchor Pith review Pith/arXiv arXiv 2014

-

[13]

Statistical post-processing for gridded temperature prediction using encoder--decoder-based deep convolutional neural networks

Atsushi Kudo. Statistical post-processing for gridded temperature prediction using encoder--decoder-based deep convolutional neural networks. Journal of the Meteorological Society of Japan. Ser. II, 100 0 (1): 0 219--232, 2022

2022

-

[14]

Lightgbm parameters

LightGBM Contributors . Lightgbm parameters. https://lightgbm.readthedocs.io/en/latest/Parameters.html, 2026. Accessed: 2026-03-18

2026

-

[15]

Deep-learning post-processing of short-term station precipitation based on nwp forecasts

Qi Liu, Xiao Lou, Zhongwei Yan, Yajie Qi, Yuchao Jin, Shuang Yu, Xiaoliang Yang, Deming Zhao, and Jiangjiang Xia. Deep-learning post-processing of short-term station precipitation based on nwp forecasts. Atmospheric Research, 295: 0 107032, 2023

2023

-

[16]

optuna.integration.lightgbm.lightgbmtuner; optuna 2.0.0 documentation

OPTUNA. optuna.integration.lightgbm.lightgbmtuner; optuna 2.0.0 documentation. https://optuna.readthedocs.io/en/v2.0.0/reference/generated/optuna.integration.lightgbm.LightGBMTuner.html, 2024. (Accessed: 5 December 2024)

2024

-

[17]

Prediction skill of extended range 2-m maximum air temperature probabilistic forecasts using machine learning post-processing methods

Ting Peng, Xiefei Zhi, Yan Ji, Luying Ji, and Ye Tian. Prediction skill of extended range 2-m maximum air temperature probabilistic forecasts using machine learning post-processing methods. Atmosphere, 11 0 (8): 0 823, 2020

2020

-

[18]

Welcome to rish www data server

RISH. Welcome to rish www data server. http://database.rish.kyoto-u.ac.jp/index-e.html, 2024. (Accessed: 22 September 2024)

2024

-

[19]

Postprocessing of nwp precipitation forecasts using deep learning

Adrian Rojas-Campos, Martin Wittenbrink, Pascal Nieters, Erik J Schaffernicht, Jan D Keller, and Gordon Pipa. Postprocessing of nwp precipitation forecasts using deep learning. Weather and Forecasting, 38 0 (3): 0 487--497, 2023

2023

-

[20]

Mutual information between discrete and continuous data sets

Brian C Ross. Mutual information between discrete and continuous data sets. PloS one, 9 0 (2): 0 e87357, 2014

2014

-

[21]

Multivariable neural network to postprocess short-term, hub-height wind forecasts

Andr \'e s A Salazar, Yuzhang Che, Jiafeng Zheng, and Feng Xiao. Multivariable neural network to postprocess short-term, hub-height wind forecasts. Energy Science & Engineering, 10 0 (7): 0 2561--2575, 2022

2022

-

[22]

Tabular data: Deep learning is not all you need

Ravid Shwartz-Ziv and Amitai Armon. Tabular data: Deep learning is not all you need. Information Fusion, 81: 0 84--90, 2022

2022

-

[23]

Numerical forecast correction of temperature and wind using a single-station single-time spatial lightgbm method

Rongnian Tang, Yuke Ning, Chuang Li, Wen Feng, Youlong Chen, and Xiaofeng Xie. Numerical forecast correction of temperature and wind using a single-station single-time spatial lightgbm method. Sensors, 22 0 (1): 0 193, 2021

2021

-

[24]

Tensorflow

TensorFlow. Tensorflow. https://www.tensorflow.org/, 2024. (Accessed: 8 December 2024)

2024

-

[25]

Improving open weather prediction data accuracy using machine learning techniques

Evangelos Tsipis, Konstantina Banti, Malamati Louta, and Nikos Dimokas. Improving open weather prediction data accuracy using machine learning techniques. In 2023 14th International Conference on Information, Intelligence, Systems & Applications (IISA), pages 1--8. IEEE, 2023

2023

-

[26]

Wind speed forecast based on post-processing of numerical weather predictions using a gradient boosting decision tree algorithm

Wenqing Xu, Like Ning, and Yong Luo. Wind speed forecast based on post-processing of numerical weather predictions using a gradient boosting decision tree algorithm. Atmosphere, 11 0 (7): 0 738, 2020

2020

-

[27]

A bias correction method for precipitation through recognizing mesoscale precipitation systems corresponding to weather conditions

Takao Yoshikane and Kei Yoshimura. A bias correction method for precipitation through recognizing mesoscale precipitation systems corresponding to weather conditions. PLoS Water, 1 0 (5): 0 e0000016, 2022

2022

-

[28]

Machine learning for precipitation forecasts postprocessing: Multimodel comparison and experimental investigation

Yuhang Zhang and Aizhong Ye. Machine learning for precipitation forecasts postprocessing: Multimodel comparison and experimental investigation. Journal of Hydrometeorology, 22 0 (11): 0 3065--3085, 2021

2021

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.