Recognition: unknown

Component-Based Out-of-Distribution Detection

Pith reviewed 2026-05-09 22:50 UTC · model grok-4.3

The pith

Decomposing images into functional components detects out-of-distribution samples by spotting local shifts and cross-component inconsistencies without training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

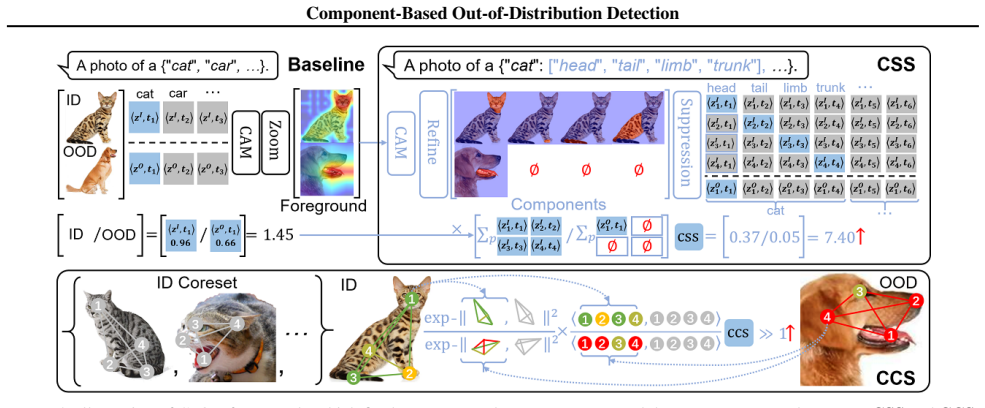

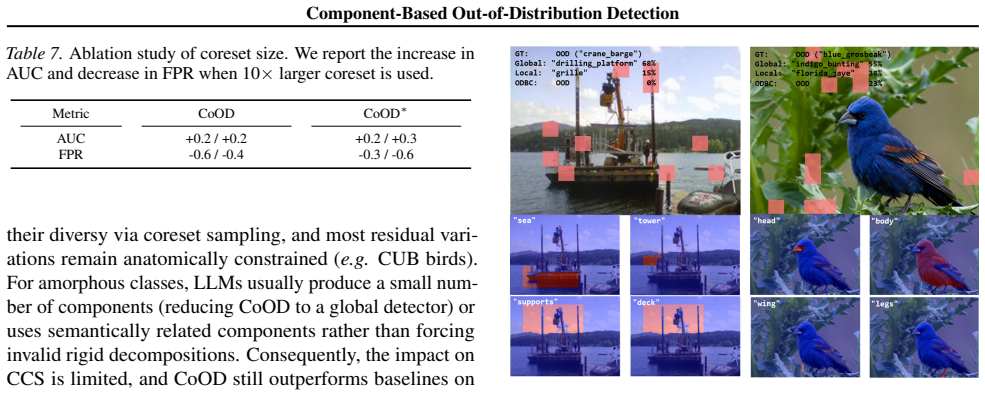

The central claim is that decomposing inputs into functional components allows computation of a Component Shift Score to detect local appearance shifts and a Compositional Consistency Score to identify cross-component inconsistencies, yielding consistent empirical gains on both coarse- and fine-grained OOD detection tasks.

What carries the argument

The Component-Based OOD Detection (CoOD) framework that decomposes inputs into functional components to derive the Component Shift Score (CSS) for local shifts and Compositional Consistency Score (CCS) for compositional inconsistencies.

If this is right

- CoOD improves detection performance on coarse-grained OOD tasks compared with global and patch-based baselines.

- CoOD improves detection performance on fine-grained OOD tasks compared with global and patch-based baselines.

- CoOD identifies compositional OODs composed of valid in-distribution components more effectively than prior approaches.

- The training-free nature allows direct application without retraining or additional optimization.

Where Pith is reading between the lines

- If component extraction remains stable on new domains, the same decomposition principle could be tested on video or 3D data where functional parts are similarly isolable.

- The explicit CSS and CCS scores may yield more localized explanations for flagged samples than global embedding methods.

- Combining CoOD scores with existing global detectors could be evaluated as a simple ensemble to capture both local and holistic signals.

Load-bearing premise

Functional components can be extracted reliably from inputs in a training-free manner and the resulting scores will generalize without new instabilities.

What would settle it

If CoOD fails to show AUROC gains over baselines on standard coarse-grained and fine-grained OOD benchmarks such as CIFAR or ImageNet variants, the claim of consistent improvements would not hold.

Figures

read the original abstract

Out-of-Distribution (OOD) detection requires sensitivity to subtle shifts without overreacting to natural In-Distribution (ID) diversity. However, from the viewpoint of detection granularity, global representation inevitably suppress local OOD cues, while patch-based methods are unstable due to entangled spurious-correlation and noise. And neither them is effective in detecting compositional OODs composed of valid ID components. Inspired by recognition-by-components theory, we present a training-free Component-Based OOD Detection (CoOD) framework that addresses the existing limitations by decomposing inputs into functional components. To instantiate CoOD, we derive Component Shift Score (CSS) to detect local appearance shifts, and Compositional Consistency Score (CCS) to identify cross-component compositional inconsistencies. Empirically, CoOD achieves consistent improvements on both coarse- and fine-grained OOD detection.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a training-free Component-Based OOD Detection (CoOD) framework inspired by recognition-by-components theory. Inputs are decomposed into functional components to derive a Component Shift Score (CSS) that detects local appearance shifts and a Compositional Consistency Score (CCS) that identifies cross-component inconsistencies. The central empirical claim is that CoOD yields consistent improvements over global and patch-based baselines on both coarse-grained and fine-grained OOD detection tasks.

Significance. If the empirical gains are reproducible and the training-free decomposition proves robust, the approach could meaningfully advance OOD detection by addressing the suppression of local cues in global representations and the instability of patch methods, especially for compositional OOD cases. The absence of training is a practical strength, but the work's value hinges on whether the component extraction step generalizes without introducing new instabilities.

major comments (3)

- [§3] §3 (Method): The training-free extraction of functional components is the load-bearing precondition for both CSS and CCS. The manuscript provides no formal analysis, ablation, or sensitivity study showing how extraction errors propagate into the two scores on fine-grained or compositional OOD inputs; any mismatch between the extractor's inductive bias and the target domain directly undermines the claimed separation of OOD from ID diversity.

- [§4] §4 (Experiments): The abstract and results sections assert 'consistent improvements' on coarse- and fine-grained OOD detection, yet supply no quantitative tables with exact AUROC/FPR95 deltas, baseline implementations, dataset splits, or statistical significance tests. Without these, it is impossible to verify whether the gains exceed what could arise from dataset-specific tuning or from the particular choice of component extractor.

- [§3.2] §3.2 (CSS/CCS definitions): The two scores are presented as addressing distinct failure modes (local shift vs. compositional inconsistency), but the manuscript does not demonstrate that they are non-redundant or that their combination is necessary; an ablation removing one score would be required to establish that both are load-bearing for the overall performance claim.

minor comments (3)

- [Abstract] Abstract: The claim of empirical gains is stated without any numerical values, dataset names, or baseline references, which reduces the reader's ability to gauge the scope of the contribution before reading the full text.

- [§3] Notation: The exact mathematical formulations of CSS and CCS (including how components are aggregated and normalized) should be presented as numbered equations early in §3 to eliminate ambiguity.

- [Figures] Figures: Visualization of extracted components on both ID and OOD examples would help readers assess the stability of the training-free decomposition step.

Simulated Author's Rebuttal

We thank the referee for their thorough review and valuable suggestions. We address each of the major comments in detail below, outlining our responses and the revisions we plan to incorporate.

read point-by-point responses

-

Referee: [§3] §3 (Method): The training-free extraction of functional components is the load-bearing precondition for both CSS and CCS. The manuscript provides no formal analysis, ablation, or sensitivity study showing how extraction errors propagate into the two scores on fine-grained or compositional OOD inputs; any mismatch between the extractor's inductive bias and the target domain directly undermines the claimed separation of OOD from ID diversity.

Authors: We acknowledge that a formal analysis of error propagation from component extraction would strengthen the method section. Although our experiments demonstrate robust performance across multiple datasets and OOD types, suggesting that the scores are not overly sensitive to minor extraction inaccuracies, we agree that this aspect requires explicit examination. In the revised manuscript, we will add a sensitivity study that introduces controlled errors in component extraction (e.g., via boundary perturbations or alternative extractors) and measures the impact on CSS and CCS for both ID and OOD samples, including fine-grained and compositional cases. revision: yes

-

Referee: [§4] §4 (Experiments): The abstract and results sections assert 'consistent improvements' on coarse- and fine-grained OOD detection, yet supply no quantitative tables with exact AUROC/FPR95 deltas, baseline implementations, dataset splits, or statistical significance tests. Without these, it is impossible to verify whether the gains exceed what could arise from dataset-specific tuning or from the particular choice of component extractor.

Authors: We apologize if the quantitative details were not sufficiently prominent in the initial submission. The manuscript does include experimental results comparing CoOD to global and patch-based baselines on standard OOD benchmarks. To address this concern, we will expand the experimental section with detailed tables reporting exact AUROC and FPR95 values, including deltas relative to baselines, full specifications of dataset splits, baseline implementations (with references to original papers and our re-implementations), and statistical significance (e.g., mean and standard deviation over 5 random seeds with t-test p-values). revision: yes

-

Referee: [§3.2] §3.2 (CSS/CCS definitions): The two scores are presented as addressing distinct failure modes (local shift vs. compositional inconsistency), but the manuscript does not demonstrate that they are non-redundant or that their combination is necessary; an ablation removing one score would be required to establish that both are load-bearing for the overall performance claim.

Authors: We agree that an ablation study is necessary to validate the contribution of each score. In the revised version, we will include results where CSS and CCS are used individually as well as in combination, across the evaluated datasets. This will demonstrate that the scores capture complementary aspects of OOD detection and that their combination yields the reported improvements, particularly for compositional OOD cases. revision: yes

Circularity Check

No circularity in derivation; CoOD scores defined independently of OOD labels via training-free decomposition.

full rationale

The provided abstract and context describe a training-free framework that decomposes inputs into functional components to derive CSS (local appearance shift) and CCS (cross-component consistency) scores, motivated by recognition-by-components theory. No equations, fitted parameters, self-citations, or uniqueness theorems are referenced that would reduce the claimed OOD detection improvements to the inputs by construction. The central claims are empirical performance gains on coarse- and fine-grained OOD tasks, which remain externally falsifiable and do not rely on self-definitional loops or renamed known results. The derivation chain is therefore self-contained.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Recognition-by-components theory can be directly applied to improve OOD detection in images

invented entities (2)

-

Component Shift Score (CSS)

no independent evidence

-

Compositional Consistency Score (CCS)

no independent evidence

Reference graph

Works this paper leans on

-

[1]

2023 , pages=

Diversity-Measurable Anomaly Detection , author =. 2023 , pages=

2023

-

[2]

Delta Energy: Optimizing Energy Change During Vision-Language Alignment Improves both OOD Detection and OOD Generalization , author=

-

[3]

Learning Confidence for Out -of-Distribution Detection in Neural Networks,

Terrance DeVries and Graham W. Taylor , title =. arXiv preprint arXiv:1802.04865 , year =

-

[4]

Reed and Honglak Lee and Dragomir Anguelov and Christian Szegedy and Dumitru Erhan and Andrew Rabinovich , title =

Scott E. Reed and Honglak Lee and Dragomir Anguelov and Christian Szegedy and Dumitru Erhan and Andrew Rabinovich , title =

-

[5]

Aritra Ghosh and Himanshu Kumar and P. S. Sastry , title =

-

[6]

On the Impact of Spurious Correlation for Out-of-distribution Detection , author=

-

[7]

Mengyuan Chen and Junyu Gao and Changsheng Xu , title =

-

[8]

COOkeD: Ensemble-Based

Galadrielle Humblot. COOkeD: Ensemble-Based

-

[9]

Jinchi Huang and Lie Qu and Rongfei Jia and Binqiang Zhao , title =

-

[10]

Tsang and Masashi Sugiyama , title =

Bo Han and Quanming Yao and Xingrui Yu and Gang Niu and Miao Xu and Weihua Hu and Ivor W. Tsang and Masashi Sugiyama , title =

-

[11]

2024 , url=

Diversity Modeling for Semantic Shift Detection , author=. 2024 , url=

2024

-

[12]

Jie Ren and Stanislav Fort and Jeremiah Z. Liu and Abhijit Guha Roy and Shreyas Padhy and Balaji Lakshminarayanan , title =. arXiv preprint arXiv:2106.09022 , year =

-

[13]

Jiachen Liang and Ruibing Hou and Minyang Hu and Hong Chang and Shiguang Shan and Xilin Chen , title =

-

[14]

Fayi Le and Wenwu He and Chentao Cao and Dong Liang and Zhuo-Xu Cui , title =

-

[15]

National Academy of Sciences , year=

Prevalence of neural collapse during the terminal phase of deep learning training , author=. National Academy of Sciences , year=

-

[16]

2022 , volume =

Out-of-Distribution Detection with Deep Nearest Neighbors , author =. 2022 , volume =

2022

-

[17]

Kimin Lee and Kibok Lee and Honglak Lee and Jinwoo Shin , title =

-

[18]

Vikash Sehwag and Mung Chiang and Prateek Mittal , title =

-

[19]

Srikant , title =

Shiyu Liang and Yixuan Li and R. Srikant , title =

-

[20]

2022 , volume =

Scaling Out-of-Distribution Detection for Real-World Settings , author =. 2022 , volume =

2022

-

[21]

ReAct: Out-of-distribution Detection With Rectified Activations , volume =

Sun, Yiyou and Guo, Chuan and Li, Yixuan , booktitle = NIPS, pages =. ReAct: Out-of-distribution Detection With Rectified Activations , volume =

-

[22]

Haoqi Wang and Zhizhong Li and Litong Feng and Wayne Zhang , title =

-

[23]

Detecting Out-of-Distribution Examples with

Sastry, Chandramouli Shama and Oore, Sageev , booktitle = ICML, pages =. Detecting Out-of-Distribution Examples with. 2020 , volume =

2020

-

[24]

On the Importance of Gradients for Detecting Distributional Shifts in the Wild , volume =

Huang, Rui and Geng, Andrew and Li, Yixuan , booktitle = NIPS, pages =. On the Importance of Gradients for Detecting Distributional Shifts in the Wild , volume =

-

[25]

Dan Hendrycks and Kevin Gimpel , title =

-

[26]

Owens and Yixuan Li , title =

Weitang Liu and Xiaoyun Wang and John D. Owens and Yixuan Li , title =

-

[27]

Junnan Li and Richard Socher and Steven C. H. Hoi , title =

-

[28]

Recycling: Semi-Supervised Learning With Noisy Labels in Deep Neural Networks , journal =

Kyeongbo Kong and Junggi Lee and Youngchul Kwak and Minsung Kang and Seong Gyun Kim and Woo. Recycling: Semi-Supervised Learning With Noisy Labels in Deep Neural Networks , journal =

-

[29]

Zhaowei Zhu and Zihao Dong and Yang Liu , title =

-

[30]

Yufei Gu and Xiaoqing Zheng and Tomaso Aste , title =

-

[31]

MentorNet: Learning Data-Driven Curriculum for Very Deep Neural Networks on Corrupted Labels , booktitle = ICML, volume =

Lu Jiang and Zhengyuan Zhou and Thomas Leung and Li. MentorNet: Learning Data-Driven Curriculum for Very Deep Neural Networks on Corrupted Labels , booktitle = ICML, volume =

-

[32]

A Noisy Elephant in the Room: Is Your out-of-Distribution Detector Robust to Label Noise? , booktitle = CVPR, pages =

Galadrielle Humblot. A Noisy Elephant in the Room: Is Your out-of-Distribution Detector Robust to Label Noise? , booktitle = CVPR, pages =

-

[33]

Elenberg and Kilian Q

Geoff Pleiss and Tianyi Zhang and Ethan R. Elenberg and Kilian Q. Weinberger , title =

-

[34]

O'Connor and Kevin McGuinness , title =

Eric Arazo and Diego Ortego and Paul Albert and Noel E. O'Connor and Kevin McGuinness , title =

-

[35]

Jinjing Hu and Wenrui Liu and Hong Chang and Bingpeng Ma and Shiguang Shan and Xilin Chen , title =

-

[36]

Proceedings of

Vitaly Feldman , title =. Proceedings of

-

[37]

2024 , url=

Is Scale All You Need For Anomaly Detection? , author=. 2024 , url=

2024

-

[38]

Lei Feng and Senlin Shu and Zhuoyi Lin and Fengmao Lv and Li Li and Bo An , title =

-

[39]

Sheng Liu and Zhihui Zhu and Qing Qu and Chong You , title =

-

[40]

Sabuncu , title =

Zhilu Zhang and Mert R. Sabuncu , title =

-

[41]

Decoupled Weight Decay Regularization

Ilya Loshchilov and Frank Hutter , title =. arXiv preprint arXiv:1711.05101 , year =

work page internal anchor Pith review Pith/arXiv arXiv

-

[42]

Ilya Loshchilov and Frank Hutter , title =

-

[43]

2022 , pages =

Deng, Hanqiu and Li, Xingyu , title =. 2022 , pages =

2022

-

[44]

Breckon , title =

Samet Akcay and Amir Atapour Abarghouei and Toby P. Breckon , title =

-

[45]

Rosanne Liu and Joel Lehman and Piero Molino and Felipe Petroski Such and Eric Frank and Alex Sergeev and Jason Yosinski , title =

-

[46]

Unsupervised Anomaly Detection with Generative Adversarial Networks to Guide Marker Discovery , booktitle =

Thomas Schlegl and Philipp Seeb. Unsupervised Anomaly Detection with Generative Adversarial Networks to Guide Marker Discovery , booktitle =

-

[47]

Kingma and Max Welling , title =

Diederik P. Kingma and Max Welling , title =

-

[48]

arXiv preprint arXiv:2110.11334 , year=

Jingkang Yang and Kaiyang Zhou and Yixuan Li and Ziwei Liu , title =. arXiv preprint arXiv:2110.11334 , year =

-

[49]

Preetum Nakkiran and Gal Kaplun and Yamini Bansal and Tristan Yang and Boaz Barak and Ilya Sutskever , title =

-

[50]

Mohammad Pezeshki and Amartya Mitra and Yoshua Bengio and Guillaume Lajoie , title =

-

[51]

Edelman and Tristan Yang and Boaz Barak and Haofeng Zhang , title =

Dimitris Kalimeris and Gal Kaplun and Preetum Nakkiran and Benjamin L. Edelman and Tristan Yang and Boaz Barak and Haofeng Zhang , title =

-

[52]

Attention is All you Need , volume =

Vaswani, Ashish and Shazeer, Noam and Parmar, Niki and Uszkoreit, Jakob and Jones, Llion and Gomez, Aidan N and Kaiser, ukasz and Polosukhin, Illia , booktitle = NIPS, editor =. Attention is All you Need , volume =

-

[53]

Nature , volume=

Mastering the game of Go with deep neural networks and tree search , author=. Nature , volume=

-

[54]

Nature , volume=

Highly accurate protein structure prediction with AlphaFold , author=. Nature , volume=

-

[55]

Shancong Mou and Xiaoyi Gu and Meng Cao and Haoping Bai and Ping Huang and Jiulong Shan and Jianjun Shi , title =

-

[56]

2018 , journal =

On the Resistance of Nearest Neighbor to Random Noisy Labels , author=. 2018 , journal =

2018

-

[57]

Better Mixing via Deep Representations , booktitle = ICML, volume =

Yoshua Bengio and Gr. Better Mixing via Deep Representations , booktitle = ICML, volume =

-

[58]

Vincent Dumoulin and Jonathon Shlens and Manjunath Kudlur , title =

-

[59]

Tero Karras and Samuli Laine and Timo Aila , title =

-

[60]

Tero Karras and Samuli Laine and Miika Aittala and Janne Hellsten and Jaakko Lehtinen and Timo Aila , title =

-

[61]

Pramuditha Perera and Ramesh Nallapati and Bing Xiang , title =

-

[62]

Fashion-MNIST: a Novel Image Dataset for Benchmarking Machine Learning Algorithms

Han Xiao and Kashif Rasul and Roland Vollgraf , title =. arXiv preprint arXiv:1708.07747 , year =

work page internal anchor Pith review arXiv

-

[63]

and Hinton, G

Krizhevsky, A. and Hinton, G. , title =. Computer Science Department, University of Toronto, Tech. Rep , year =

-

[64]

Griffin Floto and Stefan Kremer and Mihai Nica , title =

-

[65]

Neural Discrete Representation Learning , booktitle = NIPS, pages =

A. Neural Discrete Representation Learning , booktitle = NIPS, pages =

-

[66]

Fei Ye and Chaoqin Huang and Jinkun Cao and Maosen Li and Ya Zhang and Cewu Lu , title =

-

[67]

Vijay Mahadevan and Weixin Li and Viral Bhalodia and Nuno Vasconcelos , title =

-

[68]

Yao Zhu and Yuefeng Chen and Chuanlong Xie and Xiaodan Li and Rong Zhang and Hui Xue and Xiang Tian and Bolun Zheng and Yaowu Chen , title =

-

[69]

Zhaowei Cai and Nuno Vasconcelos , Title =

-

[70]

Kaiming He and Xiangyu Zhang and Shaoqing Ren and Jian Sun , title =

-

[71]

Nalisnick and Akihiro Matsukawa and Yee Whye Teh and Dilan G

Eric T. Nalisnick and Akihiro Matsukawa and Yee Whye Teh and Dilan G. Do Deep Generative Models Know What They Don't Know? , booktitle = ICLR, year =

-

[72]

Matthias Hein and Maksym Andriushchenko and Julian Bitterwolf , title =

-

[73]

Dietterich , title =

Dan Hendrycks and Mantas Mazeika and Thomas G. Dietterich , title =

-

[74]

Jihoon Tack and Sangwoo Mo and Jongheon Jeong and Jinwoo Shin , title =

-

[75]

Northcutt and Lu Jiang and Isaac L

Curtis G. Northcutt and Lu Jiang and Isaac L. Chuang , title =. Journal of Artificial Intelligence Research , volume =

-

[76]

Wenhao Wang and Muhammad Ahmad Kaleem and Adam Dziedzic and Michael Backes and Nicolas Papernot and Franziska Boenisch , title =

-

[77]

National Academy of Sciences , volume =

Mikhail Belkin and Daniel Hsu and Siyuan Ma and Soumik Mandal , title =. National Academy of Sciences , volume =

-

[78]

Neural Networks and the Bias/Variance Dilemma , journal =

Stuart Geman and Elie Bienenstock and Ren. Neural Networks and the Bias/Variance Dilemma , journal =

-

[79]

Kimin Lee and Honglak Lee and Kibok Lee and Jinwoo Shin , title =

-

[80]

Learning and Evaluating Representations for Deep One-Class Classification , booktitle = ICLR, year =

Kihyuk Sohn and Chun. Learning and Evaluating Representations for Deep One-Class Classification , booktitle = ICLR, year =

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.