Looking Into the Past: Eye Movements Characterize Elements of Autobiographical Recall in Interviews with Holocaust Survivors

Pith reviewed 2026-05-08 12:45 UTC · model grok-4.3

The pith

Eye movements preceding sentence onset predict the temporal context of autobiographical recall in Holocaust survivor interviews.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Using video from semi-naturalistic interviews with 806 Holocaust survivors, the authors observe that eye gaze patterns, especially vertical movements, differ significantly across temporal contexts of recall. Intra-subject sequence models trained on gaze features predict the temporal context of sentences, with eye movements entirely preceding sentence onset proving sufficient for accurate prediction. This supports the bidirectional link between eye movements and memory retrieval in affective and remote autobiographical contexts.

What carries the argument

Intra-subject sequence models using segments of gaze features to predict temporal context of autobiographical sentences.
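The idea of restricting the model input to gaze that entirely precedes sentence onset can be illustrated with a minimal sketch. The function name, the 2.0 s window, and the toy two-column gaze track are assumptions made for illustration; the summary above does not state the window length or the feature set the authors used.

```python
from bisect import bisect_left


def pre_onset_segment(timestamps, features, onset, window=2.0):
    """Return the feature rows whose timestamp t satisfies onset - window <= t < onset.

    timestamps must be sorted in ascending order and aligned with features.
    The 2.0 s default window is an illustrative assumption, not a value from the paper.
    """
    start = bisect_left(timestamps, onset - window)
    stop = bisect_left(timestamps, onset)  # the first frame at or after onset is excluded
    return features[start:stop]


# Toy gaze track: 10 frames, 0.5 s apart; each row is (horizontal, vertical) gaze angle.
timestamps = [i * 0.5 for i in range(10)]
features = [(0.1 * i, -0.05 * i) for i in range(10)]

# Hypothetical sentence onset at t = 3.0 s.
segment = pre_onset_segment(timestamps, features, onset=3.0)
print(len(segment), "frames, all strictly before onset")
```

Using a half-open interval that stops before the onset frame is what keeps any gaze recorded during speech out of the input, which is the property the sufficiency claim depends on.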

If this is right

- Gaze patterns can characterize temporal aspects of traumatic memory recall in naturalistic settings.

- Eye movements before verbalization carry information about the memory being retrieved.

- Pre-speech gaze alone enables prediction models without speech content.

- The findings extend lab-based eye-memory links to highly emotional remote recall.

Where Pith is reading between the lines

- Eye tracking during recall sessions could reveal non-verbal markers of memory construction in trauma contexts.

- Similar pre-onset gaze signatures might appear in other emotional autobiographical interview settings.

- The approach suggests potential for analyzing memory processes without relying on verbal reports.

Load-bearing premise

Eye gaze data can be accurately extracted and annotated from the interview videos without major errors or biases, and the temporal contexts of the sentences are correctly and independently labeled.

What would settle it

A follow-up study with precise eye-tracking in similar interviews where pre-onset gaze features fail to predict temporal context above chance level.
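One common way to check whether predictions exceed chance level is a label-permutation test. The sketch below is an assumption about how such a check could be run, not the procedure used in the paper; the labels "past" and "present" and all helper names are hypothetical.

```python
import random


def accuracy(y_true, y_pred):
    """Fraction of positions where the prediction matches the label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_p_value(y_true, y_pred, n_perm=2000, seed=0):
    """Estimate how often shuffled labels match the predictions at least as well.

    Returns (observed accuracy, one-sided p-value with the +1 correction).
    """
    rng = random.Random(seed)
    observed = accuracy(y_true, y_pred)
    shuffled = list(y_true)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if accuracy(shuffled, y_pred) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)


# Toy example: 40 sentences with hypothetical temporal-context labels.
y_true = ["past", "present"] * 20
y_pred = list(y_true)
for i in range(4):  # introduce four errors
    y_pred[i] = "present" if y_true[i] == "past" else "past"

obs_acc, p_good = permutation_p_value(y_true, y_pred)

# A constant predictor scores the same under every shuffle, so its p-value is 1.
const_acc, p_const = permutation_p_value(y_true, ["past"] * 40)
print(obs_acc, p_good, const_acc, p_const)
```

In the falsification scenario described above, pre-onset gaze models would behave like the constant predictor: their accuracy would not stand out from the shuffled-label distribution.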

Original abstract

Eye movement and memory retrieval are deeply and bidirectionally intertwined, however existing literature is generally confined to controlled lab settings. We investigate the relationship between eye gaze and memory recall in free-form autobiographical recall, which comprises both autonoetic consciousness -- the ability to mentally place oneself in the past or future -- and various affective states. Using a large video corpus of semi-naturalistic interviews with Holocaust survivors (N = 806), we examine eye movements with respect to episodic, semantic, affective, and temporal dimensions of traumatic and highly emotional autobiographical recall. We observe gaze patterns vary significantly across certain temporal contexts, most prominently in vertical eye movements. We additionally train intra-subject sequence models to predict temporal context of sentences from segments of gaze features, and find that eye movements entirely preceding sentence onset are sufficient for prediction. Our results corroborate prior findings in literature linking eye movements to memory in controlled and semi-structured settings, reinforcing the role of eye gaze in retrieving and constructing memories, especially in highly emotional and remote memory recall.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript analyzes eye movements in a large corpus (N=806) of semi-naturalistic video interviews with Holocaust survivors during autobiographical recall. It reports statistically significant differences in gaze patterns (especially vertical movements) across episodic, semantic, affective, and temporal dimensions of recall. It further claims that intra-subject sequence models can predict the temporal context of sentences using only gaze-feature segments extracted entirely before sentence onset.

Significance. If the core results hold after methodological clarification, the work usefully extends controlled-lab findings on oculomotor-memory links to emotionally charged, remote autobiographical recall in a high-stakes population. The large sample and the pre-onset prediction result are strengths that could inform non-invasive memory-assessment approaches; the paper also supplies a reproducible modeling pipeline on a publicly relevant corpus.

major comments (2)

- [Methods] Methods (gaze extraction and labeling): The central prediction claim—that segments of gaze features preceding sentence onset suffice to classify temporal context—rests on automated extraction of gaze from interview video without dedicated eye-tracking hardware. No validation metrics (e.g., agreement with manual annotation, error rates under head motion or emotional expression) or exclusion criteria for low-quality tracking are provided. Systematic bias in the computer-vision pipeline could therefore drive both the reported gaze differences and the model accuracy.

- [Results] Results (prediction experiments): The sequence-model results are presented without baseline comparisons that isolate potential artifacts (e.g., head-pose or facial-landmark features that may correlate with sentence content). It is therefore unclear whether the reported predictive sufficiency is carried by genuine pre-retrieval oculomotor signals or by correlated measurement noise.

minor comments (2)

- [Abstract] The abstract states that models are trained 'intra-subject' but does not specify how many sentences per subject were available or how temporal-context labels were independently assigned; a brief clarification would improve reproducibility.

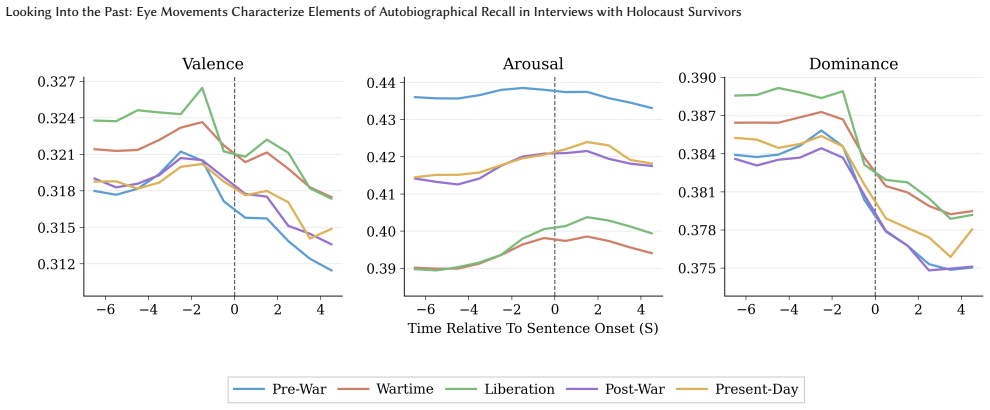

- [Figures] Figure captions and axis labels for the gaze-pattern plots should explicitly state the time window relative to sentence onset and the exact statistical test used for the reported significance.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback, which has prompted us to strengthen the methodological transparency and experimental controls in the manuscript. We address each major comment below.

Point-by-point responses

Referee: [Methods] Methods (gaze extraction and labeling): The central prediction claim—that segments of gaze features preceding sentence onset suffice to classify temporal context—rests on automated extraction of gaze from interview video without dedicated eye-tracking hardware. No validation metrics (e.g., agreement with manual annotation, error rates under head motion or emotional expression) or exclusion criteria for low-quality tracking are provided. Systematic bias in the computer-vision pipeline could therefore drive both the reported gaze differences and the model accuracy.

Authors: We agree that explicit validation details are essential for interpreting the automated gaze estimates. The features were obtained via a standard facial-landmark-based gaze estimation pipeline applied to the video corpus. In the revised manuscript we have added a dedicated Methods subsection that (i) specifies the exact algorithm and version used, (ii) cites the published validation metrics of that pipeline (angular error under head motion and expression variation), (iii) states the frame-level exclusion criteria we applied (detection confidence < 0.75 or head-pose rotation > 30°), and (iv) reports a sensitivity check confirming that the primary statistical and predictive results remain significant when restricted to high-confidence segments. While a new large-scale manual annotation of the 806 interviews was not feasible, these additions directly address the risk of systematic bias.

Revision: yes
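The frame-level exclusion rule quoted in the response (detection confidence < 0.75 or head-pose rotation > 30°) could be applied as in the sketch below. The `Frame` fields and the choice to test each rotation axis separately are assumptions; the response does not say how rotation is measured or which toolkit fields carry these values.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    """One video frame of tracker output (hypothetical field names)."""
    confidence: float  # face/gaze detection confidence in [0, 1]
    yaw_deg: float     # head rotation about the vertical axis
    pitch_deg: float   # head rotation about the lateral axis
    roll_deg: float    # head rotation about the viewing axis


MIN_CONFIDENCE = 0.75    # threshold stated in the response
MAX_ROTATION_DEG = 30.0  # threshold stated in the response


def keep_frame(frame):
    """Keep a frame unless confidence < 0.75 or any rotation exceeds 30 degrees.

    Checking each axis separately is an assumption made for this sketch.
    """
    if frame.confidence < MIN_CONFIDENCE:
        return False
    largest = max(abs(frame.yaw_deg), abs(frame.pitch_deg), abs(frame.roll_deg))
    return largest <= MAX_ROTATION_DEG


frames = [
    Frame(0.95, 5.0, -3.0, 1.0),    # kept
    Frame(0.60, 2.0, 0.0, 0.0),     # dropped: low confidence
    Frame(0.90, 35.0, 0.0, 0.0),    # dropped: yaw beyond 30 degrees
    Frame(0.75, -30.0, 10.0, 0.0),  # kept: both values sit exactly on the thresholds
]
kept = [f for f in frames if keep_frame(f)]
print(len(kept), "of", len(frames), "frames kept")
```

The sensitivity check mentioned in point (iv) would then amount to rerunning the statistics and models on `kept` only and comparing against the unfiltered results.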

Referee: [Results] Results (prediction experiments): The sequence-model results are presented without baseline comparisons that isolate potential artifacts (e.g., head-pose or facial-landmark features that may correlate with sentence content). It is therefore unclear whether the reported predictive sufficiency is carried by genuine pre-retrieval oculomotor signals or by correlated measurement noise.

Authors: We concur that baseline controls are required to isolate genuine oculomotor contributions. The revised Results section now includes two additional intra-subject sequence-model experiments: one using only head-pose angles and another using non-gaze facial-landmark coordinates (excluding explicit gaze vectors). Both baselines achieve substantially lower accuracy than the full gaze-feature models (quantitative values and statistical comparisons will be reported). These controls, together with the intra-subject design, support the conclusion that the pre-onset predictive signal is carried by gaze rather than by correlated measurement artifacts. A brief discussion of remaining confounds has also been added.

Revision: yes

Circularity Check

No circularity: standard supervised sequence modeling on extracted gaze features

Full rationale

The paper trains intra-subject sequence models to predict sentence temporal context from preceding gaze-feature segments. This is a conventional supervised learning pipeline (features observed before onset, labels assigned independently) with no equations, fitted parameters, or self-citations that reduce the reported sufficiency result to a definitional identity or tautology. The abstract and described method contain no self-definitional loops, fitted-input-as-prediction artifacts, or load-bearing self-citations; the claim remains externally falsifiable via replication on new video data.

Axiom & Free-Parameter Ledger

free parameters (1)

- sequence model hyperparameters

axioms (1)

- domain assumption: Eye movements can be accurately extracted from interview video without substantial error.
